At OCP Summit 2022, Microsoft showed what is, for the rest of the world, a next-generation server. We were told that the server on display was an AMD EPYC “Genoa” generation server. It was part of the Microsoft Hydra demonstration on the show floor.

Microsoft Shows AMD Genoa 1P Server Powered by Hydra at OCP Summit 2022

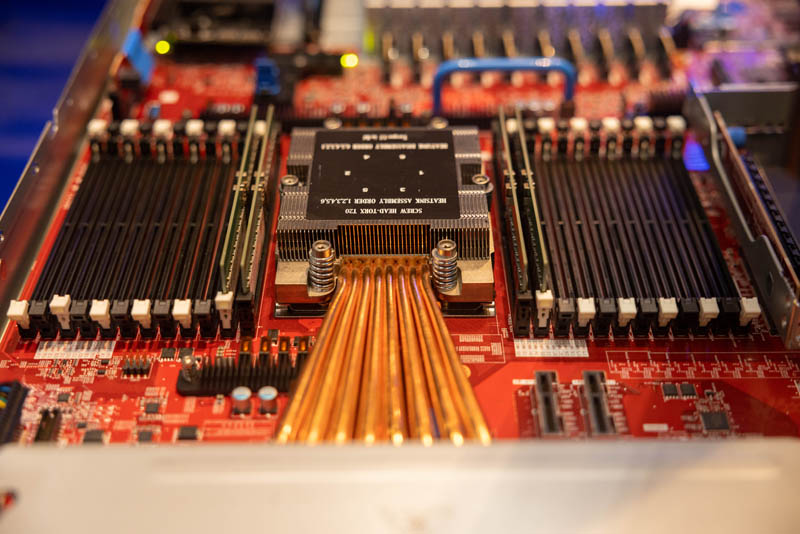

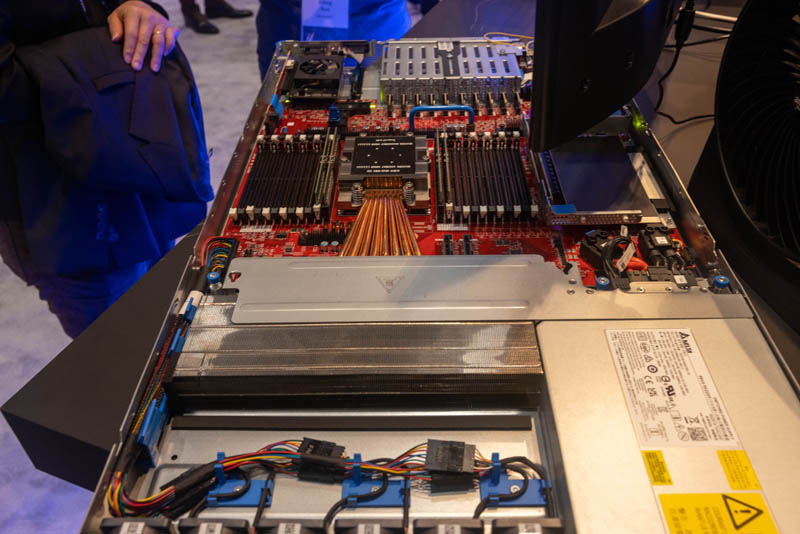

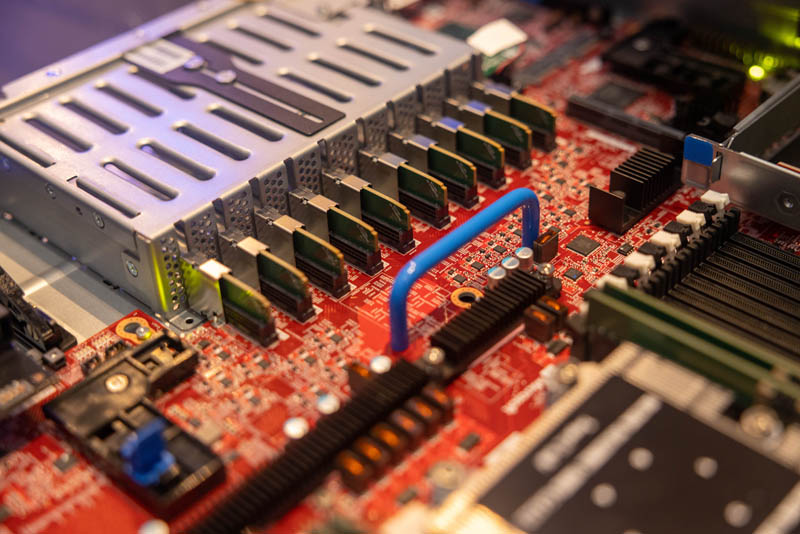

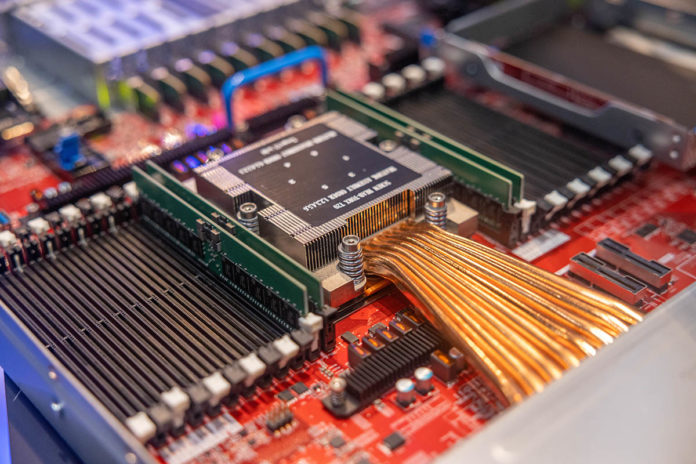

At OCP Summit 2022, while walking through the Microsoft booth, something immediately caught my eye: Microsoft was showing AMD Genoa. One can see the new socket and the 24x DIMM slots, albeit with only two populated.

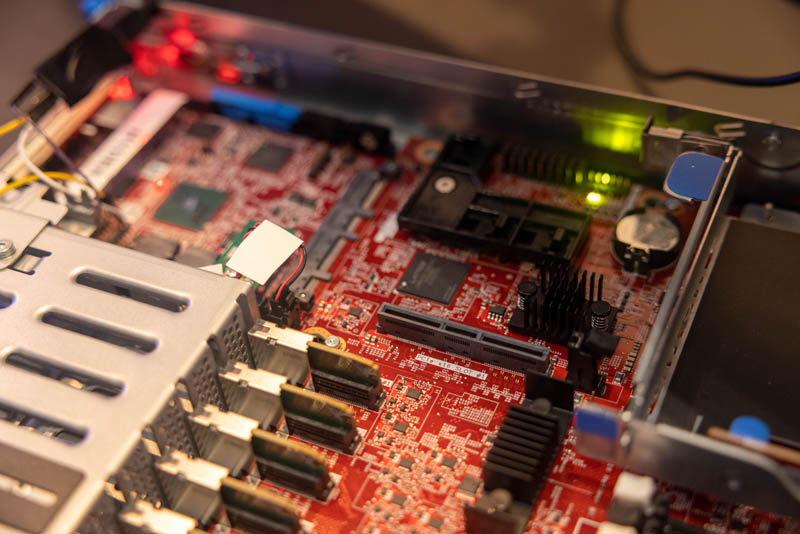

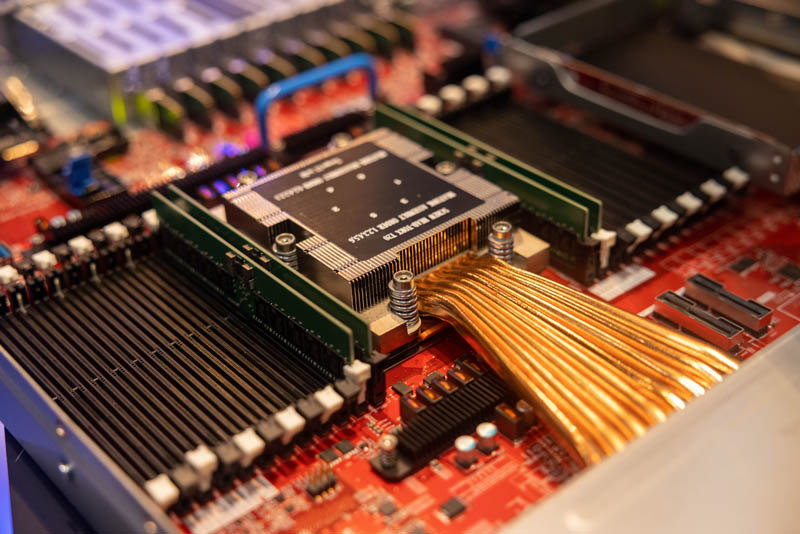

Something that may be immediately noticeable is the cooling solution. This is a 1U platform, so there is limited height. Because we are under embargo, we cannot disclose the name of the socket or any specs beyond the DDR5 and PCIe Gen5 support that AMD has already discussed for its upcoming Genoa platform.

What we can do, however, is show the absolutely massive heat-pipe and heatsink setup.

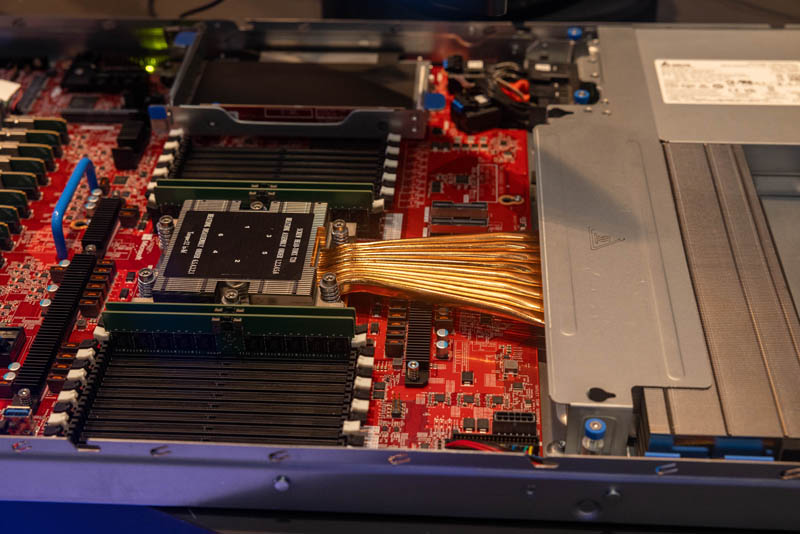

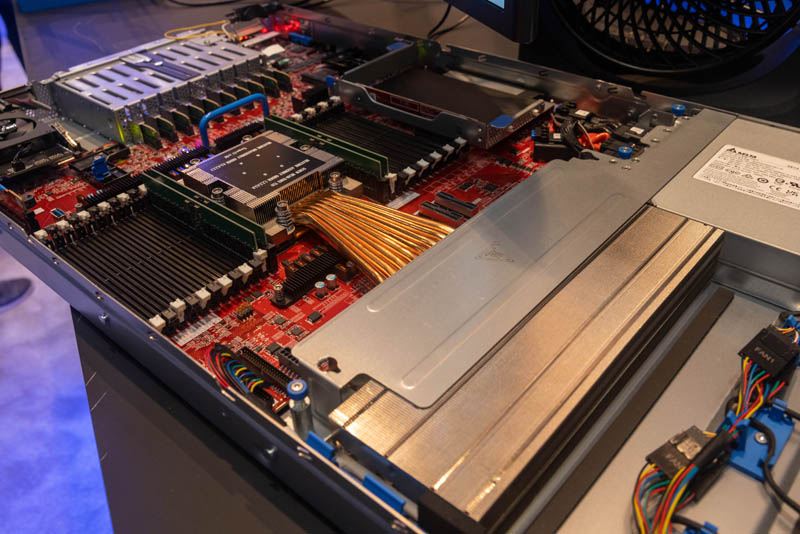

The CPU has a small heatsink over it, but then there are eight copper heat pipes that lead to a much larger heatsink that sits alongside the power supplies.

This is a very Microsoft server design with 10x E1.S EDSFF slots in the middle. On the side we can see a small Gigabyte GPU that Microsoft added to this server just to demonstrate Hydra.

There are also a number of slots and cabled PCIe connectors on the motherboard.

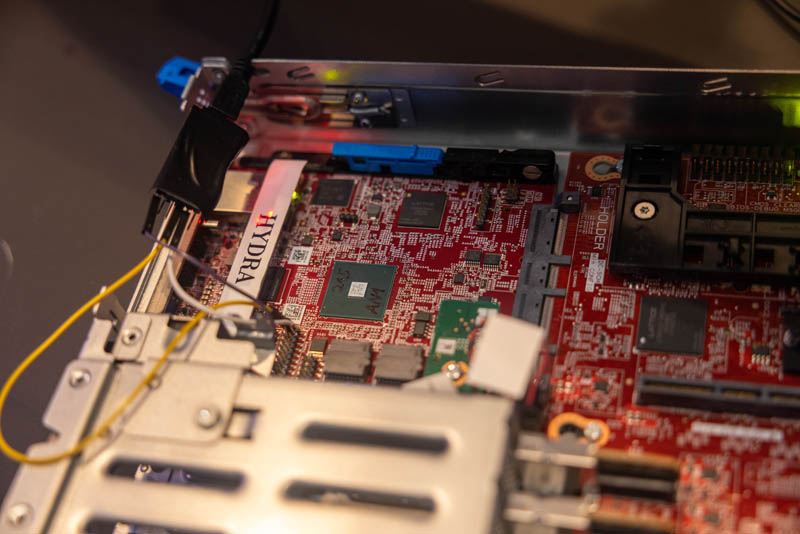

The real star of this, aside from Genoa, is the Hydra card. This is a BMC on a card, jointly developed with Nuvoton. One can see that it plugs into the motherboard via an OCP NIC 3.0 connector. You can learn more about OCP NIC 3.0 form factors in our guide.

The card will run Microsoft’s silicon root of trust and more. Part of the goal of the newer OCP servers is to be more modular so parts can be replaced or upgraded more easily.

Final Words

Microsoft has been a major supporter of AMD EPYC and a key customer. We expect that as Genoa launches later this quarter we will hear more from Microsoft about its deployment. Still, judging by the cooling solution the company is deploying, we are far from the days of 125W TDP CPUs. Seeing 24x DIMM slots connected to a single CPU is also awesome since that helps to drive higher memory density.

We will have more on the AMD EPYC Genoa platform when we can show you the official platforms in detail with performance results later this quarter. It is coming, just wait.

Patrick, how long is that machine? If it fits in a 19″ rack then it looks to be about 60″ long?

@emerth – OCP racks are 21.22″ wide, not 19″.

@emerth – Source: https://www.opencompute.org/wiki/Open_Rack/SpecsAndDesigns – Open Rack Specification 2.2

> “2.0 Mechanical Requirements

> The standard allows for 2 different depths of IT Gear: ~800mm (the original depth from revision V1.1), and a new modular shallow depth of ~660mm. The original IT Gear depth targeted a rack depth of 1048mm (though any depth is acceptable). The modular shallow derivation of the Open Rack features a shallow base rack depth with options for a modular front extension for cable management and security provisions. The desired rack depth for the shallow variant is 762mm [30.0in] from front to back. The rear retention portion of the rack will remain common with the Open Rack v1.2 standard. External Width and Height are not specified, as they can vary depending on application.”

@rob that’s even worse … then it’s 67″ long, but OCP racks are 42″ deep.
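The length estimates in this thread come down to two small calculations: rescaling a photo-based estimate when the assumed rack width changes, and converting the millimetre depths quoted from the Open Rack specification into inches. Below is a minimal Python sketch of both. The function names are hypothetical, and the inputs are only the figures quoted in the comments above, not measurements of the actual chassis.

```python
MM_PER_INCH = 25.4

def rescale_length(length_in, assumed_width_in, actual_width_in):
    """Rescale a length estimated from a photo when the assumed width was wrong."""
    return length_in * actual_width_in / assumed_width_in

def mm_to_in(mm):
    """Convert millimetres to inches."""
    return mm / MM_PER_INCH

# The first estimate (60 in) assumed a 19 in rack; the reply gives 21.22 in.
print(f"Rescaled estimate: {rescale_length(60, 19, 21.22):.1f} in")

# Depths as quoted in the thread from Open Rack Specification 2.2.
SPEC_DEPTHS_MM = {
    "IT gear, original depth": 800,
    "IT gear, shallow depth": 660,
    "Rack, original target": 1048,
    "Rack, shallow variant": 762,
}
for name, mm in SPEC_DEPTHS_MM.items():
    print(f"{name}: {mm} mm = {mm_to_in(mm):.1f} in")
```

On these figures, the quoted IT gear depth of roughly 800 mm works out to about 31.5 inches, which is far shorter than either photo-based estimate.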

The review stated, “seeing 24x DIMM slots connected to a single CPU is also awesome since that helps to drive higher memory density.” From my point of view, memory bandwidth is even more important on these high-core-count AMD processors.

For example, fairly modest single-threaded numerical codes can easily end up with a 60 percent slowdown due to contention for memory bandwidth when all cores on a current 64-core CPU are busy.

24 DIMMs per socket means:

1.5 TB DRAM with cheap 64 GB RDIMMs

3 TB with relatively affordable 128 GB LR or 3DS DIMMs ($750 each for DDR4, no prices for DDR5 yet)

6 TB with expensive 256 GB 3DS DIMMs

Two-socket Genoa could really take some market share from 4-socket Xeon for SAP HANA systems.
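The capacity figures above are simply slot count times DIMM size. Here is a minimal Python sketch of that arithmetic, using 1 TB = 1024 GB. The `capacity_tb` helper is hypothetical, and the two-socket numbers assume each socket also gets 24 fully populated slots, which the article does not confirm.

```python
DIMM_SLOTS_PER_SOCKET = 24  # slot count reported in the article for the 1P server

def capacity_tb(dimm_gb, slots=DIMM_SLOTS_PER_SOCKET, sockets=1):
    """Total DRAM in TB (1 TB = 1024 GB) with every slot populated."""
    return dimm_gb * slots * sockets / 1024

for dimm_gb in (64, 128, 256):
    one_socket = capacity_tb(dimm_gb)
    # Assumption: a two-socket board keeps 24 slots per socket.
    two_socket = capacity_tb(dimm_gb, sockets=2)
    print(f"{dimm_gb} GB DIMMs: {one_socket:.1f} TB per socket, "
          f"{two_socket:.1f} TB across two sockets")
```

Under that assumption, a two-socket system with 256 GB DIMMs would reach 12 TB.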

Wait, I thought that AMD already officially mentioned the name of the socket (SP5) in their own presentations before. Why is that a secret?

SP5 coolers have been on the market for months too. You can literally just buy them. (Examples: Dynatron J10, Lori 2U-K22)

Is using the OCP NIC 3 slot purely about it being the obvious interoperable module with external access for the management NIC; or is it up to something more than a typical BMC that would make the bandwidth the slot offers relevant?

Even if it’s only a 2C+ that’s 8x PCIe links, which is a lot by BMC standards. It doesn’t look like it has the SFP cages or the heatsinks to suggest some DPU-level behavior; but you sure don’t need that much speed to keep an eye on SMBus, offer a lowest-common-denominator GPU for remote console work, and check bootloader signatures; so I’m curious if it has more going on than normal.

There are 4 DIMMs in every photo in this article!

The OCP NIC slot is about standardizing on connectors.

The part nobody discusses is that, realistically, it is so the motherboards can be standardized while management is added according to BMC vendor and region. The entire world will not use ASPEED BMCs in the future.

“The real star of this, aside from Genoa, is the Hydra card. This is a card that is a BMC on a card jointly developed with Nuvoton. One can see that it plugs into the motherboard via an OCP NIC 3.0 connector.”

It is an OCP NIC 3.0 connector, but not for a NIC. It’s called the DC-SCM form factor.