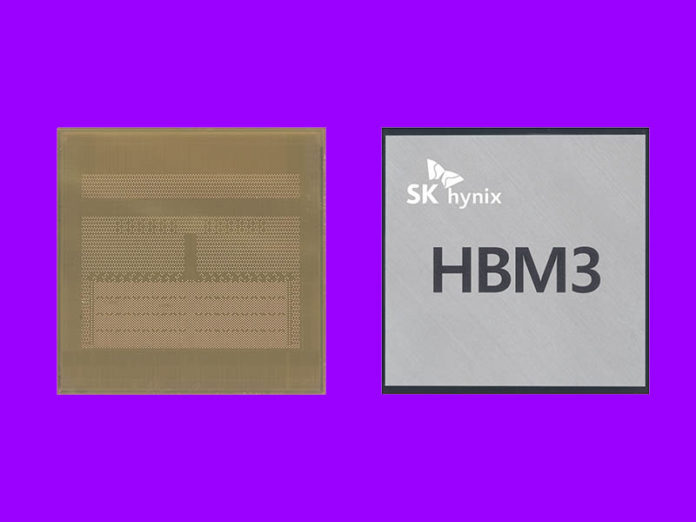

A few months ago, we covered SK hynix announcing its ultra-fast HBM3. Now we have the official JEDEC specification published for the next generation of high-speed memory. HBM3 will likely see use next to high-speed accelerators and potentially next-generation CPUs. As a result, it is not going to impact the market in the next month or two, but there are some big upgrades coming.

JEDEC Publishes JESD238 HBM3 Spec

Over its evolution, HBM has become not only faster and more feature-rich, but also more costly. That is typically why we see it on higher-end processors: its cost is significantly higher than that of standard memory. With the new JESD238 HBM3 spec, we get more performance and new features as well.

Here are some of the highlights of HBM3 directly from JEDEC:

- Extending the proven architecture of HBM2 towards even higher bandwidth, doubling the per-pin data rate of HBM2 generation and defining data rates of up to 6.4 Gb/s, equivalent to 819 GB/s per device

- Doubling the number of independent channels from 8 (HBM2) to 16; with two pseudo channels per channel, HBM3 virtually supports 32 channels

- Supporting 4-high, 8-high and 12-high TSV stacks with provision for a future extension to a 16-high TSV stack

- Enabling a wide range of densities based on 8Gb to 32Gb per memory layer, spanning device densities from 4GB (8Gb 4-high) to 64GB (32Gb 16-high); first generation HBM3 devices are expected to be based on a 16Gb memory layer

- Addressing the market need for high platform-level RAS (reliability, availability, serviceability), HBM3 introduces strong, symbol-based ECC on-die, as well as real-time error reporting and transparency

- Improved energy efficiency by using low-swing (0.4V) signaling on the host interface and a lower (1.1V) operating voltage

(Source: JEDEC)
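For readers who want to check the headline numbers, here is a rough sketch of the arithmetic behind them. It assumes a 1024-bit-wide interface per HBM3 device, which is not stated in the highlights above, and the function names are ours, purely for illustration.

```python
# Assumption: each HBM3 device exposes a 1024-bit data interface.
# This width is not given in the JEDEC highlights quoted above.
INTERFACE_WIDTH_BITS = 1024


def device_bandwidth_gbytes(pin_rate_gbps: float,
                            width_bits: int = INTERFACE_WIDTH_BITS) -> float:
    """Peak bandwidth of one device in GB/s: per-pin rate times pins, bits to bytes."""
    return pin_rate_gbps * width_bits / 8


def stack_capacity_gbytes(layer_density_gbit: int, stack_height: int) -> float:
    """Capacity of one stack in GB: per-layer density (Gb) times layers, bits to bytes."""
    return layer_density_gbit * stack_height / 8


if __name__ == "__main__":
    print(device_bandwidth_gbytes(6.4))   # 6.4 Gb/s per pin
    print(stack_capacity_gbytes(8, 4))    # 8Gb layers, 4-high
    print(stack_capacity_gbytes(32, 16))  # 32Gb layers, 16-high
```

Under that assumption, 6.4 Gb/s per pin works out to roughly 819 GB/s per device, and the 8Gb 4-high and 32Gb 16-high configurations land on the 4GB and 64GB endpoints JEDEC quotes.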

More channels, more stacks, and faster data rates mean we will see higher capacities and more performance. One of the key challenges for HBM is to ensure that as capacity scales, performance per GB scales as well. The new RAS features are also important because the systems that HBM-equipped devices are placed into tend to be more expensive as well.
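To put the performance-per-GB point in numbers, the sketch below divides the 819.2 GB/s peak figure by the capacity of a few stack configurations drawn from the ranges JEDEC lists. It assumes every configuration reaches the top data rate, which real devices may not, and the list of configurations is our own illustrative pick, not a product roadmap.

```python
# Peak per-device bandwidth implied by the JEDEC highlights (6.4 Gb/s per pin).
# Assumption: every configuration below runs at this top rate.
PEAK_BANDWIDTH_GBYTES_PER_S = 819.2

# (layer density in Gb, stack height): illustrative picks from the spec's ranges.
CONFIGS = [(8, 4), (16, 8), (16, 12), (32, 16)]


def bandwidth_per_gbyte(layer_density_gbit: int, stack_height: int,
                        peak: float = PEAK_BANDWIDTH_GBYTES_PER_S) -> float:
    """Peak bandwidth (GB/s) available per GB of stack capacity."""
    capacity_gbytes = layer_density_gbit * stack_height / 8
    return peak / capacity_gbytes


if __name__ == "__main__":
    for density, height in CONFIGS:
        capacity = density * height / 8
        ratio = bandwidth_per_gbyte(density, height)
        print(f"{density}Gb x {height}-high: {capacity:.0f} GB, "
              f"{ratio:.1f} GB/s per GB")
```

With a fixed per-device bandwidth, the ratio falls as stacks get taller and denser, which is why per-pin speed has to keep rising alongside capacity.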

Final Words

We are seeing more classes of devices adopt HBM. Intel Xeon Sapphire Rapids will support HBM, but likely only for CPU-only HPC clusters, given the pricing involved. Still, as CPUs get larger, they need higher-performance memory to feed more compute resources, since memory bandwidth tends to be a common bottleneck.

For those who want to get into more detail, with registration, one can download the new memory standard here.