This is one of those parts that is both very interesting and not interesting at all. Today we have an Intel X710-DA2 OCP NIC 3.0. This is a dual SFP+ 10GbE card, which is not exactly groundbreaking in 2020. Indeed, most of the industry has transitioned to 25GbE, and using what is usually a PCIe x16 slot to deliver dual 10GbE is not overly efficient. Still, the intrigue comes from this being an OCP NIC 3.0 part. Any form factor needs a range of NICs to go with it. In our review, we are going to see what this one offers.

Intel X710-DA2 OCP NIC 3.0 Dual 10GbE NIC Overview

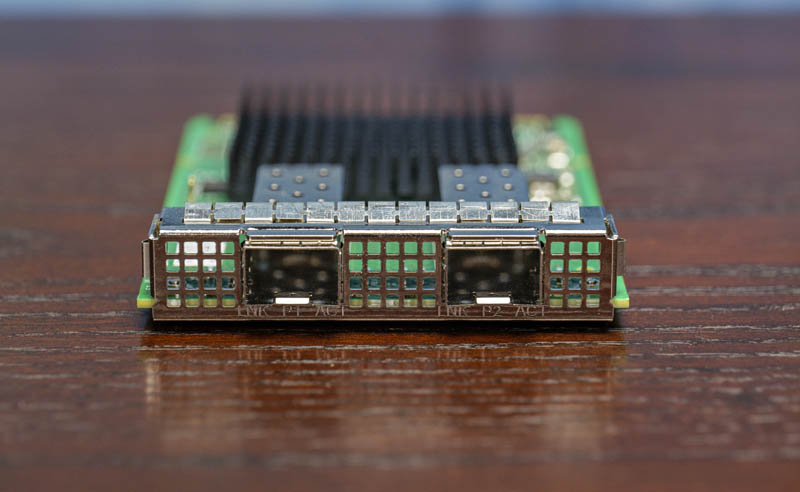

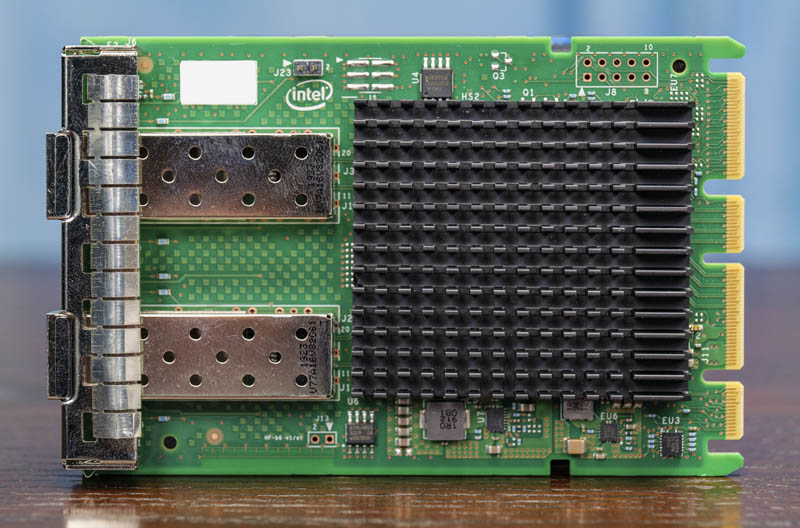

There are a number of changes in the OCP NIC 3.0 form factor that are extremely important to the server sector. The first example is the I/O connector edge of the NIC. Here we can see two SFP+ 10GbE ports. There is another small but important detail. The OCP NIC 3.0 form factor uses a new orientation, which means the I/O faceplate assembly is built into the NIC. With OCP NIC 2.0 form factors, the server vendors had to design this faceplate themselves.

If you have ever assembled a server where you had a NIC and the wrong faceplate, you know it leads to some difficult decisions. See the very bottom right for an example of this on STH, partially obscured by cables.

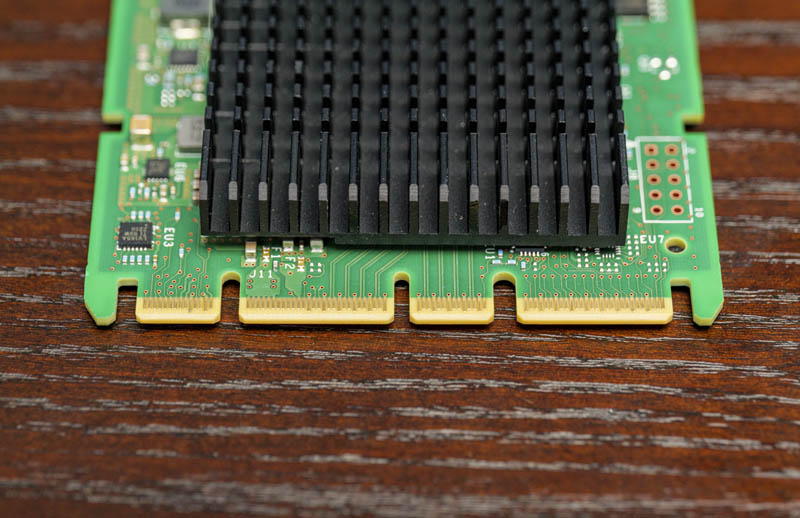

The other major change with this version is that the NIC is designed to be easily swappable. The connector sits in line with the direction the NIC slides out of the rear of the chassis, which makes installation much easier. The connector is built for PCIe Gen4 speeds and x16 links, but as a dual 10GbE card this is a PCIe Gen3 device. Practically, that has an impact since this card is now occupying a PCIe Gen4 x16 motherboard slot for what can easily be accomplished with PCIe Gen3 x4.
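To put rough numbers on that claim, here is a minimal sketch of the link arithmetic. It assumes the standard 8 GT/s (Gen3) and 16 GT/s (Gen4) per-lane rates with 128b/130b encoding, and it ignores PCIe packet overhead, so real-world figures will be somewhat lower. The function and variable names are our own, for illustration only.

```python
# Usable PCIe bandwidth per direction, accounting for line encoding only.
# Gen3 and Gen4 both use 128b/130b encoding; TLP/DLLP protocol overhead
# is ignored here (an assumption), so achievable throughput is lower.
GT_PER_LANE = {"gen3": 8.0, "gen4": 16.0}  # gigatransfers per second per lane
ENCODING_EFFICIENCY = 128 / 130


def pcie_gbps(generation: str, lanes: int) -> float:
    """Approximate one-direction link bandwidth in Gbps."""
    return GT_PER_LANE[generation] * ENCODING_EFFICIENCY * lanes


nic_gbps = 2 * 10  # dual 10GbE, per direction

gen3_x4 = pcie_gbps("gen3", 4)
gen4_x16 = pcie_gbps("gen4", 16)

print(f"Gen3 x4:  {gen3_x4:.1f} Gbps")
print(f"Gen4 x16: {gen4_x16:.1f} Gbps")
print(f"NIC share of a Gen4 x16 slot: {nic_gbps / gen4_x16:.1%}")
```

Under these assumptions, a Gen3 x4 link already exceeds the 20Gbps the two ports can move in each direction, while the same traffic is a small fraction of what a Gen4 x16 slot could carry.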

The card we are looking at is the Intel X710DA2OCPV3. Server vendors re-brand this part. As an example, Dell says this is a W35V7 / Dell Part 540-BCOU. There is also an Intel X710DA4OCPV3 part, which is the quad-port version of this card. We previously looked at an Intel X710-DA4 NIC if you want to see more about that solution in a traditional PCIe form factor. There is a notable difference. Technically this card is using the X710-BM2 controller (X710 2-port) while the DA4 version is using an XL710-BM1, essentially an XL710 40Gbps single-port solution split into four 10GbE ports.

Especially as we move to the PCIe Gen4 server generation in 2021, we are going to see the OCP NIC 3.0 slot become ubiquitous. The slot can handle high-performance dual 100GbE networking, or 10x what this card can achieve. Still, this is what Intel is selling as “basic connectivity” in this space. Some deployments will have servers with OCP NIC 3.0 slots and will not need 25GbE or faster speeds.

Intel X710 NIC in Linux

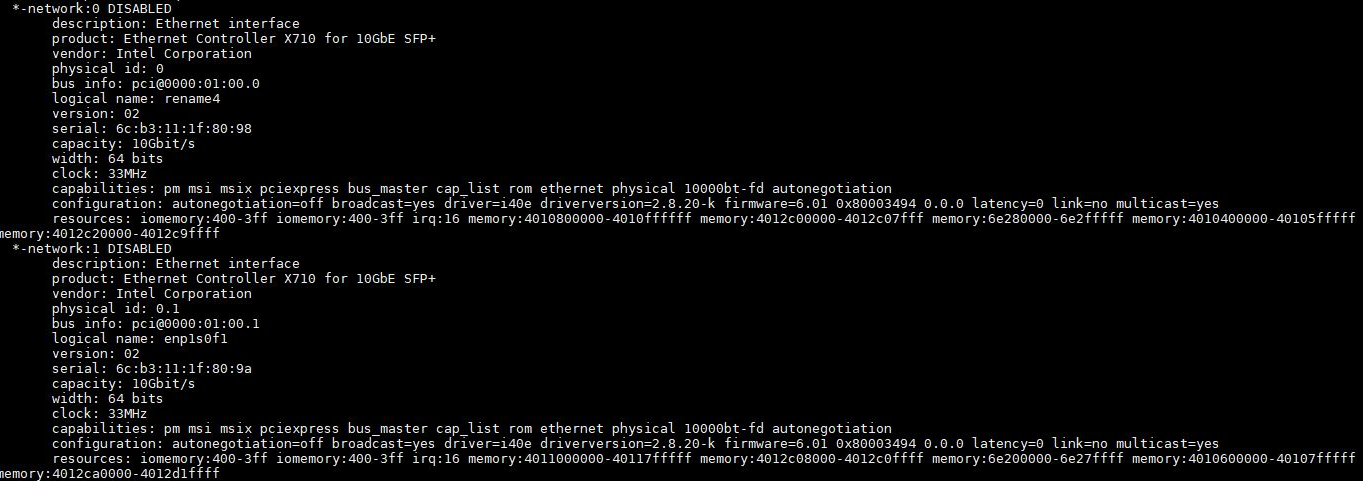

We wanted to take a few moments to show our readers what these NICs look like installed. Here is a look at the lshw output:

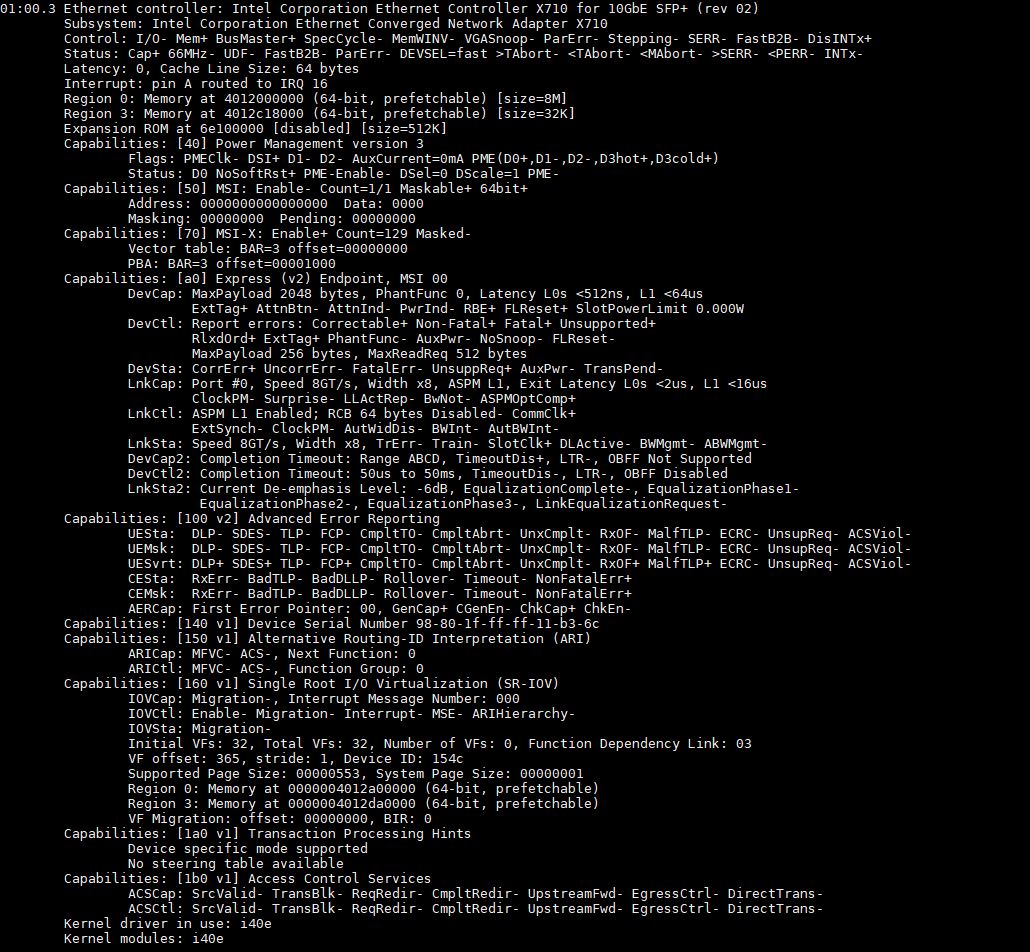

Here is the lspci -vvv output for an X710 NIC:

We can see the NICs are using the Intel i40e driver, which is Intel’s mainstream Fortville driver shared with the XL710 series and even the X722 PCH networking options found in Xeon Scalable platforms and the embedded NICs in the Xeon D-2100 series. We can also see SR-IOV support.
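If you want to check the same two details programmatically rather than reading through lspci output, a minimal sketch along these lines should work on Linux. It reads the standard sysfs entries for a network interface (the `driver` symlink plus `sriov_totalvfs` and `sriov_numvfs`). The `nic_info` helper and the `eth0` interface name are our own placeholders, not something from the Intel tooling.

```python
import os


def nic_info(iface: str, sysfs_root: str = "/sys/class/net") -> dict:
    """Return the bound kernel driver and SR-IOV VF counts for one interface.

    Values are None when a sysfs entry is missing, for example on a
    device that does not expose SR-IOV.
    """
    device = os.path.join(sysfs_root, iface, "device")

    def read_int(name):
        try:
            with open(os.path.join(device, name)) as f:
                return int(f.read().strip())
        except (OSError, ValueError):
            return None

    driver = None
    driver_link = os.path.join(device, "driver")
    if os.path.islink(driver_link):
        # The symlink target ends in the driver name, e.g. ".../drivers/i40e".
        driver = os.path.basename(os.readlink(driver_link))

    return {
        "driver": driver,
        "sriov_totalvfs": read_int("sriov_totalvfs"),
        "sriov_numvfs": read_int("sriov_numvfs"),
    }


if __name__ == "__main__":
    # "eth0" is a placeholder; substitute the X710 port name on your system.
    print(nic_info("eth0"))
```

On a system with one of these cards we would expect the driver field to read i40e, matching the lspci output. The `sysfs_root` parameter is only there so the function can be pointed at a test directory.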

We are showing Linux here, but this NIC is supported in every major OS.

Intel X710-DA2 OCP NIC 3.0 Dual 10GbE NIC Performance and Power

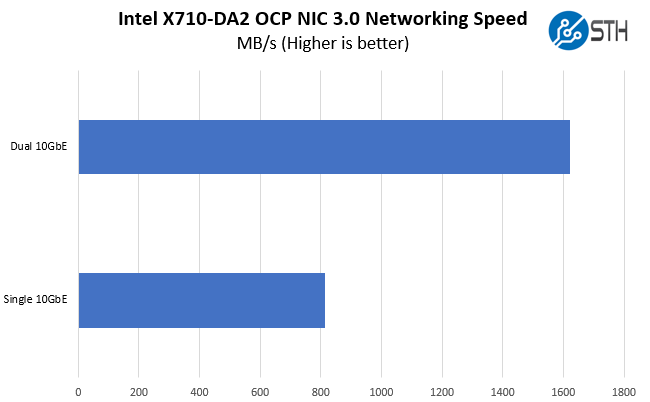

We tested the X710 OCP NIC using two ports on a 1m breakout QSFP+ DAC to our 32-port Arista 40GbE data center switch. We then varied the port speed and connection type and tested transfer speeds from our network storage.

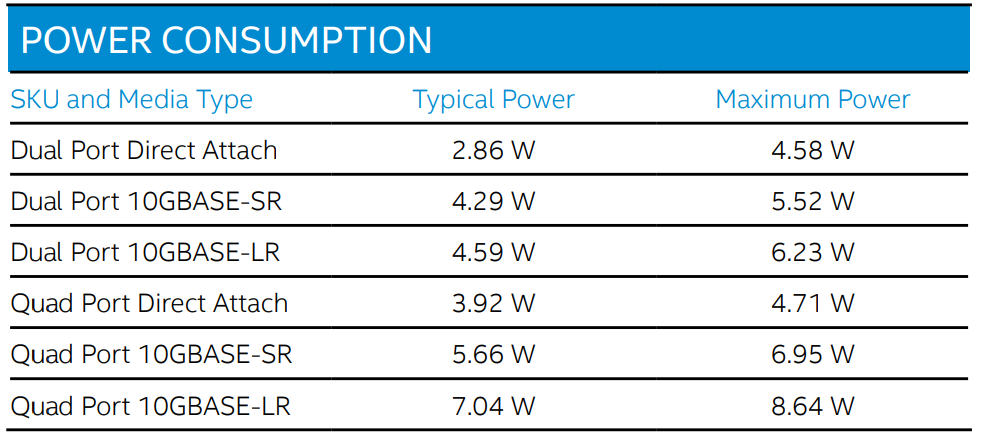
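For context on what a single 10GbE port can deliver at best, here is a rough sketch of the theoretical TCP payload ceiling. It assumes standard Ethernet framing, IPv4, no VLAN tag, and no TCP options. It does not model the storage or file-sharing protocol overhead in our test, so treat it as an upper bound rather than a prediction of the results above. The helper name is our own.

```python
# Per-frame overhead on the wire for standard Ethernet, in bytes.
PREAMBLE_SFD = 8
ETH_HEADER = 14
FCS = 4
INTERFRAME_GAP = 12
IPV4_HEADER = 20
TCP_HEADER = 20  # assumes no TCP options such as timestamps


def tcp_goodput_mb_s(link_gbps: float, mtu: int = 1500) -> float:
    """Upper bound on TCP payload throughput in MB/s (1 MB = 10**6 bytes)."""
    payload = mtu - IPV4_HEADER - TCP_HEADER
    wire_bytes = mtu + PREAMBLE_SFD + ETH_HEADER + FCS + INTERFRAME_GAP
    line_rate_mb_s = link_gbps * 1000 / 8
    return line_rate_mb_s * payload / wire_bytes


print(f"10GbE, MTU 1500: {tcp_goodput_mb_s(10):.0f} MB/s")
print(f"10GbE, MTU 9000: {tcp_goodput_mb_s(10, mtu=9000):.0f} MB/s")
```

Real transfers from network storage land below this ceiling because the storage backend, the file-sharing protocol, and the host all add their own overhead.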

In terms of power consumption, this is where the Fortville NIC series shines. It is specifically designed to be a low-power option. Here is the official spec table with different attach levels for the dual and quad-port OCP NICs from Intel.

On the 10Gbps side, this is less of a concern than it is at 40GbE and dual 40GbE speeds, but the Fortville NIC does not have the same level of offloading as the newer Columbiaville (Intel Ethernet 800 series) and other NICs such as the Mellanox solutions. For example, RoCE v2 support, which Mellanox has been a major proponent of, is found in the Ethernet 800 series but not in these Fortville NICs. The 10GbE NICs here are low enough in speed that the impact on CPU performance does not necessarily require the heavy offloads we see in higher-end NICs. This is certainly more of a basic or foundational NIC.

Final Words

From a performance perspective, the Intel X710-DA2 OCP NIC 3.0 performs just as we would expect. We have been using Intel’s X700 series NICs for over six years now (see Intel Fortville Lower Power Consumption and Heat as an example), so the driver support is very good. There was a Fortville re-spin to fix an early bug, but cards in this OCP NIC 3.0 form factor were all built many years after that occurred. It is something we do not worry about these days.

This card actually brought to light one negative aspect of the OCP NIC 3.0 form factor: the slot is often designed for PCIe Gen4 x16, yet this card only brings 20Gbps of networking capacity. It is important to have a range of NICs at different price points for these slots, as we have with traditional PCIe slots. While many are focused on the higher-end 200Gbps, SmartNIC, and DPU solutions, there will be a market for cards like this dual 10GbE unit. A downside of the specialized form factor is that it routes lanes even if they are unnecessary for the card. At some point, trade-offs have to be made, and one can argue that if you are using an inexpensive dual 10GbE NIC you are most likely not using every lane in a system anyway, so this is moot. It is simply a dynamic we wanted to call out since we, like many, tend to focus on the new capabilities rather than the impact on the legacy market.

Overall, this is neither the best-performing nor the most feature-rich NIC that we will see in an OCP NIC 3.0 slot. Still, there is a strong market for legacy parts that deliver low power and low cost, so the appeal makes sense. If you cannot tell, we are extremely excited by the OCP NIC 3.0 form factor at STH.
