We have not done one of these for some time, but they are always fun. Since it is a holiday week, we are going to take a look inside a Celestica Seastone DX010 100GbE switch. This is a 32x 100GbE, 3.2Tbps-generation switch that has very little public information available. As a result, we wanted to simply show what is inside instead of doing a formal review.

A Video Version

We have a video with some more footage from the switch. Here is the video for this:

The video covers much of the same content, but video lets us show more angles. Plus, you can simply listen to it. This has “nothing” to do with the fact that we are just a few subscribers short of hitting 40,000 subscribers on the STH YouTube channel by the end of the year. (Subscribe here) Four weeks ago, we were hoping to hit that goal in March 2021, but we are now making a push to get there two and a half months early.

Celestica Seastone DX010 32x 100GbE Switch Overview

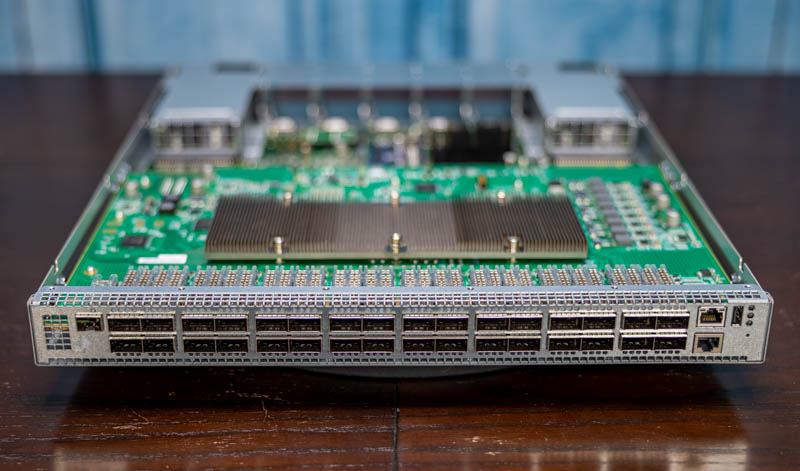

On the front of the switch, we have 32x QSFP28 ports. These are designed for 100GbE networking. For those thinking about cabling, these QSFP28 ports will accept both optics and DACs. Ours did not require coded DACs, but since it was purchased second-hand, what we see may not be representative of every unit. QSFP28 is the 100Gbps generation. For 40GbE we used QSFP+, and for the next-generation 200GbE we use QSFP56.

On the right, we have two RJ45 ports. One is designed for out-of-band management duties. The other is a serial console port. There is also a USB port, which can be handy for storage if one needs to load an OS or back up configurations. Sometimes these are used for firmware updates, but it is fairly difficult to find firmware updates from Celestica since the company’s website does not even return any search results for this model.

On the other side of the unit, we have an SFP cage that we never used.

Moving to the rear of the unit, we have many fans.

The two outermost units are 800W Delta power supplies. These are on opposite sides to facilitate airflow and to make short cable runs for A+B power sides of racks.

In the middle, we have an array of five hot-swappable fan carriers with Nidec fans. These keep the system cool and are fairly easy to replace. The latches are made from relatively easily removable metal but there are more heavy-duty stops for the cage. Overall, this is a functional design, but it is not the most intuitive 1U fan design we have ever used.

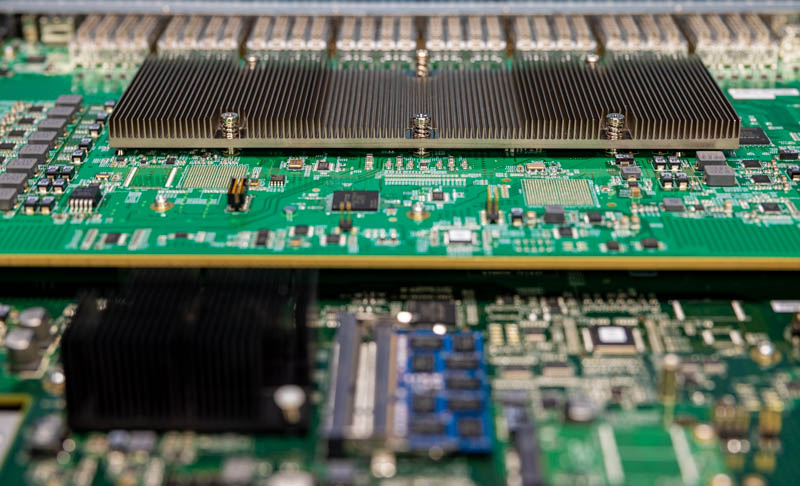

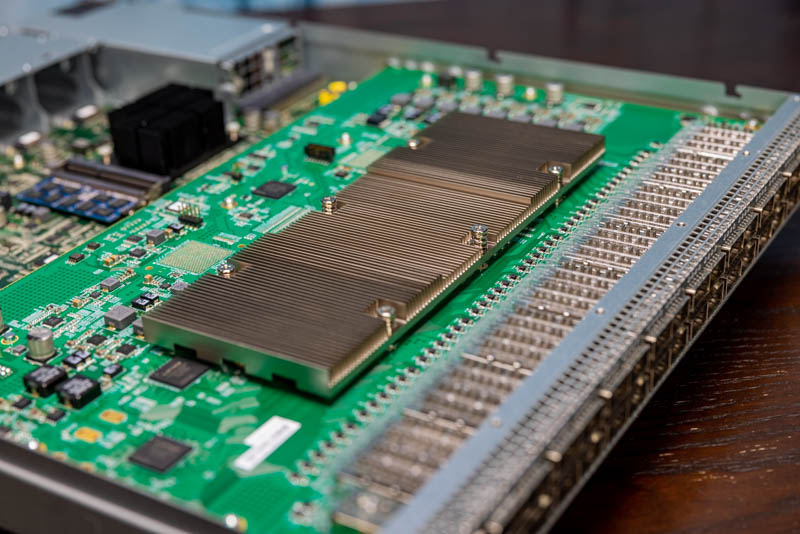

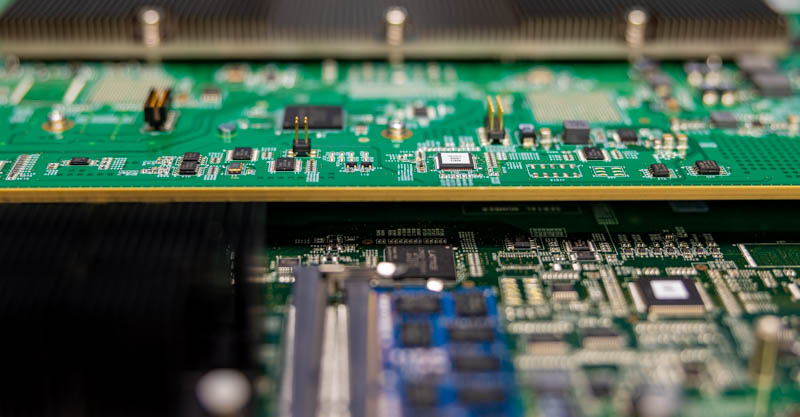

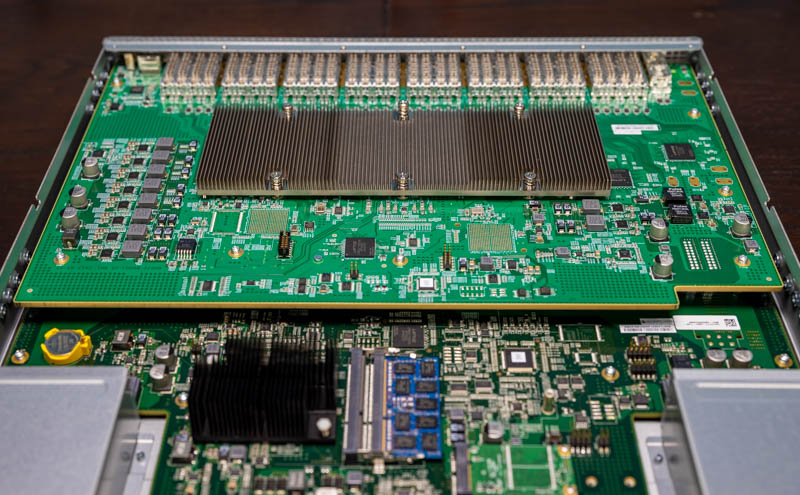

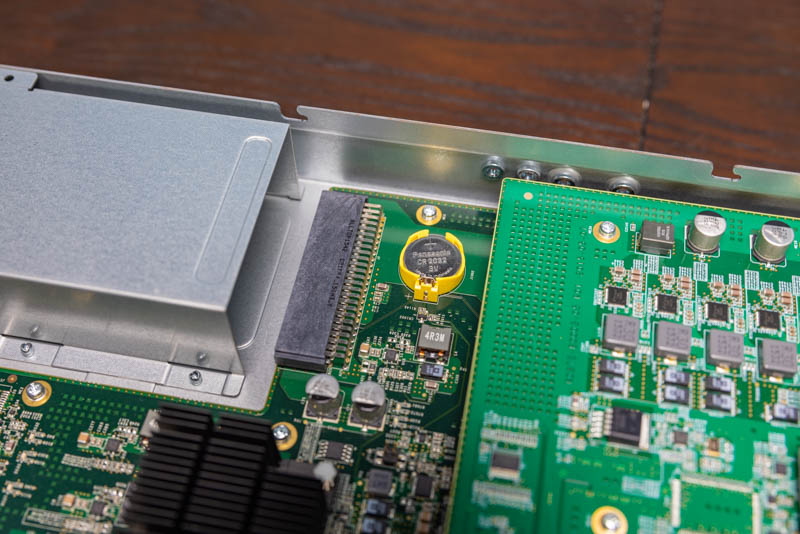

Removing the cover is not a user-friendly step. On even a $5,000 server we would expect a tool-less cover removal. On this switch, we are using a large array of screws to open the unit. Inside, we see a fairly simple layout. There are two main pieces of PCB.

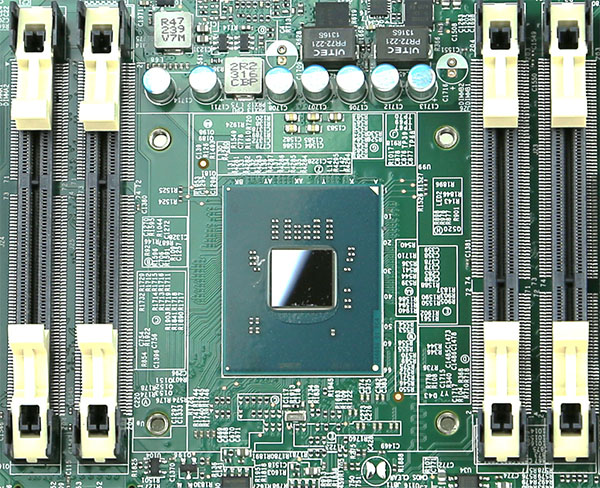

The upper PCB is designed for the main switching function. One may first assume the large heatsink covers multiple ICs. Instead, it is simply there to cool the Broadcom Tomahawk chip. This is the Broadcom BCM56960, part of the StrataXGS family. In terms of switch chip generation, this is a 3.2Tbps generation part. The industry is rapidly moving to 12.8Tbps / 25.6Tbps switching these days. Still, for a 32x 100GbE model, this makes sense.

The Broadcom BCM56960 has integrated low-power 25GHz SerDes and can handle 32x 100GbE, 64x 50GbE, or 128x 25GbE configurations. That means one can break out a QSFP28 port to 4x SFP28, for example, and connect a large number of devices while keeping other ports as 100GbE uplinks. The switch is a Layer 3 switch, and we get support for RoCE/RoCEv2, OpenFlow 1.3+, VXLAN, NVGRE, MPLS, and more.
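The three port configurations above all come from the same pool of SerDes lanes. Here is a minimal Python sketch of that arithmetic, assuming each lane carries a nominal 25Gbps and that the chip exposes 128 lanes (32 QSFP28 ports at four lanes each). The names `LANE_GBPS`, `TOTAL_LANES`, and `port_count` are ours for illustration and are not from any Broadcom or SONiC tooling.

```python
# Assumption: 128 SerDes lanes at a nominal 25Gbps each
# (32 QSFP28 ports x 4 lanes per port).
LANE_GBPS = 25
TOTAL_LANES = 128


def port_count(port_speed_gbps: int) -> int:
    """Return how many ports of the given speed the lane pool can form."""
    lanes_per_port, remainder = divmod(port_speed_gbps, LANE_GBPS)
    if lanes_per_port == 0 or remainder != 0:
        raise ValueError("port speed must be a positive multiple of the lane speed")
    if TOTAL_LANES % lanes_per_port != 0:
        raise ValueError("lanes do not divide evenly into ports of this speed")
    return TOTAL_LANES // lanes_per_port


for speed in (100, 50, 25):
    ports = port_count(speed)
    # Every configuration adds up to the same 3.2Tbps of front-panel bandwidth.
    print(f"{ports}x {speed}GbE = {ports * speed / 1000} Tbps")
```

Whichever breakout is chosen, the total stays at 3.2Tbps. Only the number of ports and the speed of each one changes.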

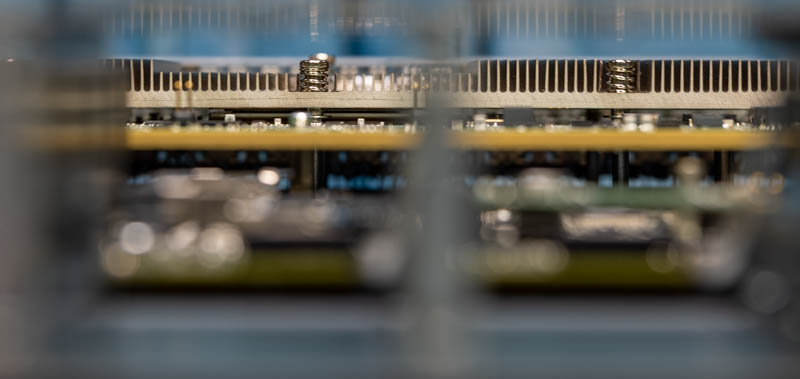

The PCB this switch chip is on is very thick. If you are accustomed to most server or consumer motherboards, this is several times thicker than what you are used to. The reason is simple: it has to carry 3.2Tbps of traffic from the switch chip to the QSFP28 connectors on the front of the switch.

The bottom PCB is simpler. One can tell it is a different thickness and a different color. There is a good reason for this. The bottom PCB is really more of what we consider a control PCB. On here we have the management complex along with power and cooling.

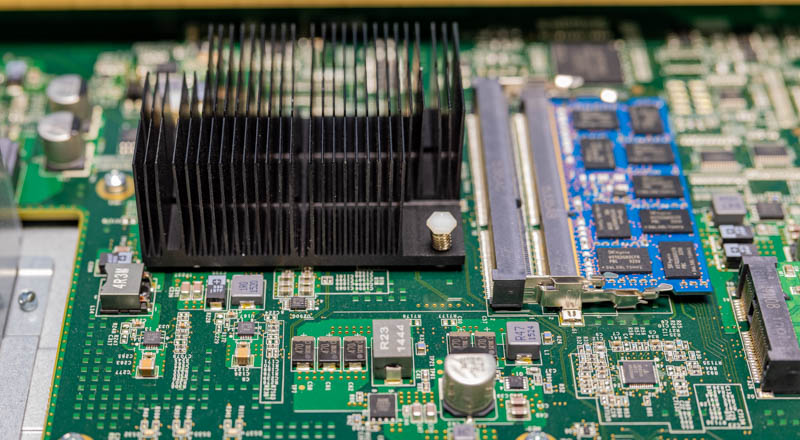

On the management complex, we get a Rangeley (Atom C2000 series) CPU under the black heatsink. As an x86 processor, we can load ONL or SONiC on this ONIE switch. Most of the STH readers who have done so are using SONiC at this point. For those who are unaware, SONiC is a network OS championed by Microsoft Azure. Since then other cloud providers as well as companies like Dell have jumped on the SONiC bandwagon. If you want to see SONiC installation, we have a guide using a Quanta switch that you can read about in Get started with 40GbE SDN with Microsoft Azure SONiC for under $1K. Since then, as one can imagine, the project has matured, but this is a switch designed for open networking.

One serious concern here is that this switch was built in 2016. As a result, we have to worry about the Intel Atom C2000 Series Bug (AVR54). There is usually a build date on the exterior of the switch. We suggest asking about that if you are purchasing these second-hand. We saw the Intel Atom C2000 C0 Stepping Fixing the AVR54 Bug in mid-2017. The impact can be quite large. We have had the Intel Atom C2000 AVR54 Bug Strike STH. As a result of this bug, your switch can simply fail to reboot one day.

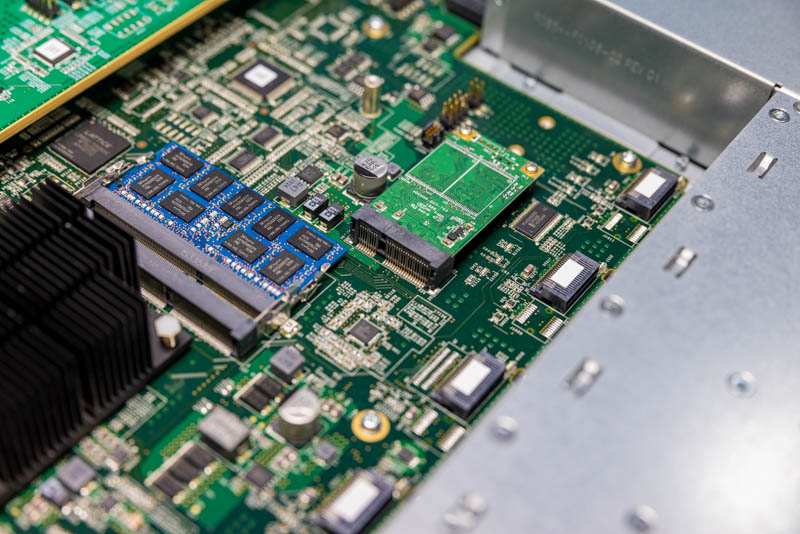

For memory, we see a DDR3 SODIMM. Our unit has a 4GB SK hynix module, but one can see an easy upgrade path to two SODIMMs and higher capacity. For most switches, 4GB is plenty, and 8GB certainly so. There is nothing overly special about this module.

For storage, we get an mSATA drive. We have tried upgrading these on other switches, and it often does not end well. Our advice is that while the RAM is easy to upgrade, leave this one alone.

Although much of this system is custom, there is even a standard CR2032 battery held within a bright yellow slot. We are not entirely sure why there is a yellow battery holder in an otherwise bland chassis.

Key Lessons Learned

This is an older switch, but there are a few items we wanted to point out here.

- First, the switch is a 32x 100GbE switch that for most lab usage will utilize under 200W of power. While the PSUs are 800W each, they are unlikely to run at that wattage given modern DACs and optics.

- Second, the Atom C2000 bug can be catastrophic (we have encountered it multiple times at STH now, but still on under 10% of our population). We would not pay $9,000-10,000 for a switch like this today with an Atom C2000. Luckily, these switches are usually in the $1,000-1,500 range. That makes them, on a price per Gbps basis, a lower-cost option than even many used 40GbE switches and much lower than even low-end 1GbE/10GbE switches. The discount is built in for the C2000 bug as well as the fact that you are going to need to install and use SONiC with these. Our best advice is to understand the AVR54 risk, and then if you do want to deploy this, at minimum use two for redundancy with a cold spare.

- Third, while this may sound scary, some sellers will offer a warranty. Having a switch like this for learning 100GbE SONiC as well as using features such as RoCEv2 for fast networking/ storage can be worth it. $1000-1500 to get access to this level of learning on this level of performance hardware is quite reasonable.
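To put the price per Gbps point from the second item into numbers, here is a minimal Python sketch. It uses only the dollar figures quoted above ($1000-1500 used, $9000-10000 as the price we would not pay today) and the 32x 100GbE port count. The helper `price_per_gbps` is our own illustrative name, and readers can plug in prices for any other switch they want to compare.

```python
def price_per_gbps(price_usd: float, ports: int, port_speed_gbps: int) -> float:
    """Price divided by total front-panel bandwidth in Gbps."""
    return price_usd / (ports * port_speed_gbps)


# Figures taken from the article: 32x 100GbE = 3200Gbps of front-panel bandwidth.
used_low = price_per_gbps(1000, ports=32, port_speed_gbps=100)
used_high = price_per_gbps(1500, ports=32, port_speed_gbps=100)
old_low = price_per_gbps(9000, ports=32, port_speed_gbps=100)
old_high = price_per_gbps(10000, ports=32, port_speed_gbps=100)

print(f"Used price:  ${used_low:.4f} to ${used_high:.4f} per Gbps")
print(f"$9000-10000: ${old_low:.4f} to ${old_high:.4f} per Gbps")
```

This only counts front-panel bandwidth. It does not account for optics, power, or the cold spare we suggest above.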

Final Words

Overall, this is just a fun little piece for the holiday week to show off the switch. We meant to do this earlier, but this year presented a number of challenges. For our readers that are concerned about AVR54, one can look for newer/ reworked switches, or realistically look for Xeon D or Pentium D1508 based switches with the Broadcom Tomahawk and SONiC support.

I’ve got a C2550 Supermicro and it’s fine years later, I appreciate it’s a risk but my feeling is that machines which would go should have already gone? If it’s still working then surely it’s fine?

So, Patrick, how do your page views on the HTML version compare to page view on Youtube?

emerth – generally I look at YouTube views as a single-digit percentage of what the main site will do page view wise.

If anyone is curious, you can run setpci -s 00:00.0 8.w to determine the chip stepping.

If the command returns 0003, you have a C0 stepping CPU which is good.

Note that some manufacturers might have the board fix but you will have to confirm with them.
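For anyone scripting the check from the comment above across several machines, here is a minimal Python sketch that interprets the `setpci` output. It relies on the comment's claim that `0003` indicates a C0 stepping and on the fact that offset 0x08 in PCI configuration space holds the revision ID in the low byte of the word. The function name `is_c0_stepping` is hypothetical, and any other revision value is simply treated as "not confirmed C0" rather than mapped to a specific stepping.

```python
def is_c0_stepping(setpci_output: str) -> bool:
    """Interpret the output of `setpci -s 00:00.0 8.w`.

    Assumption (from the comment above): a value of 0003 means C0 stepping.
    The low byte of the word at offset 0x08 is the PCI revision ID.
    """
    value = int(setpci_output.strip(), 16)
    revision_id = value & 0xFF  # low byte of the 16-bit word
    return revision_id == 0x03


# Example with sample strings rather than a live setpci call.
for sample in ("0003", "0002"):
    status = "C0 stepping" if is_c0_stepping(sample) else "not confirmed C0"
    print(f"{sample}: {status}")
```

As the comment notes, a board-level fix from the manufacturer will not show up in this value, so a non-C0 result is not proof that a unit is affected.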

We use(d) a LOT of C2550 CPUs in Synology, Supermicro and other devices for many years (many are still in service today), all from the „Intel bug inside“ era and we had no issues whatsoever. Zero.

I feel STH should refrain from crying FUD about this. Typical American behavior to dramatise and exaggerate. The world is so much larger than STH..

Dave, the reason why the Intel bug is quite so, ‘dramatic,’ as you put it, is that the Atom CPUs are marketed by Intel SPECIFICALLY as long-life/embedded/mil-grade components. A bug that is basically a potential time bomb is a non-starter and has caused significant (with reason!) industry concern about this Intel product line and their QC/acceptance process.

You are correct in that most of these CPUs would have failed already if in use full time and are probably fine in perpetuity, at this juncture, but the uncertainty is there, which is the problem.

Dave, with a sample size of 2 coworkers owning syno’s with the Intel Atom CPUs: both had them fail.

I can’t even begin to tell you the number of Cisco routers we had blow up from the bug. STH is not blowing it out of proportion, those things are 100% time bombs.

Is it even possible to replace the CPU by removing it and putting in a newer, safer version? I know it may take specialized equipment, but I’m willing to find a workshop willing to do the swap, if it’s possible at all.

Hedgehog is testing and supporting SONiC on the DX010. We have built a SONiC factory so you can order your community edition SONiC with the features you need, and none of the bloat that you don’t need. Please let us know if you want help with SONiC on Celestica.