Dual NVIDIA Titan RTX NVLink Rendering Related Benchmarks

Next, we wanted to get a sense of the rendering performance of using two NVIDIA Titan RTX GPUs with NVLink.

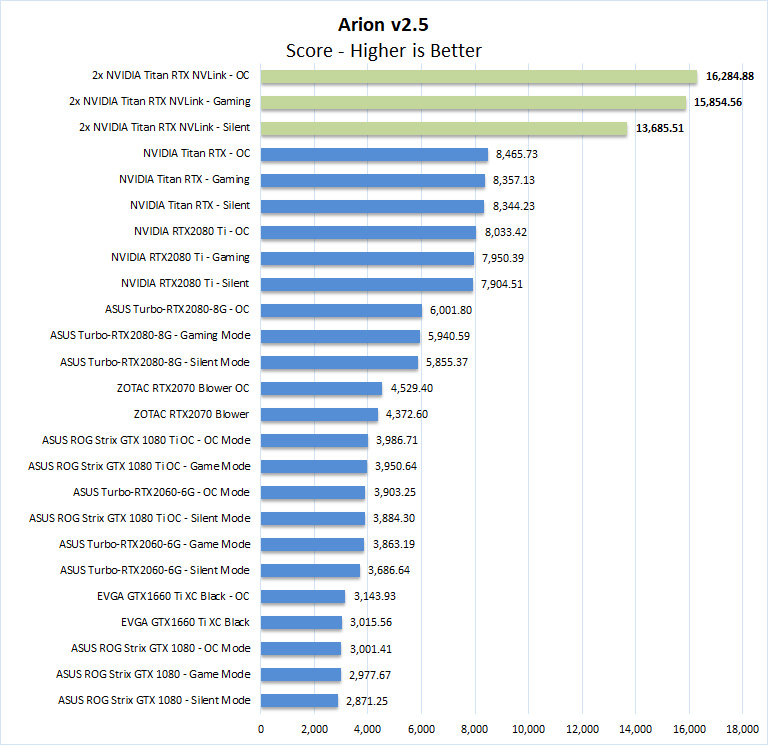

Arion v2.5

Arion Benchmark is a standalone render benchmark based on the commercially available Arion render software from RandomControl. The benchmark is GPU-accelerated using NVIDIA CUDA. However, it’s unique in that it can run on both NVIDIA GPUs and CPUs.

Download the Arion Benchmark from here. First-time users will have to register to download the benchmark.

As we will see in this section, using dual NVIDIA Titan RTX GPUs with NVLink has a massive impact on the rendering applications that we tested. Arion results effectively double, showing excellent scaling.

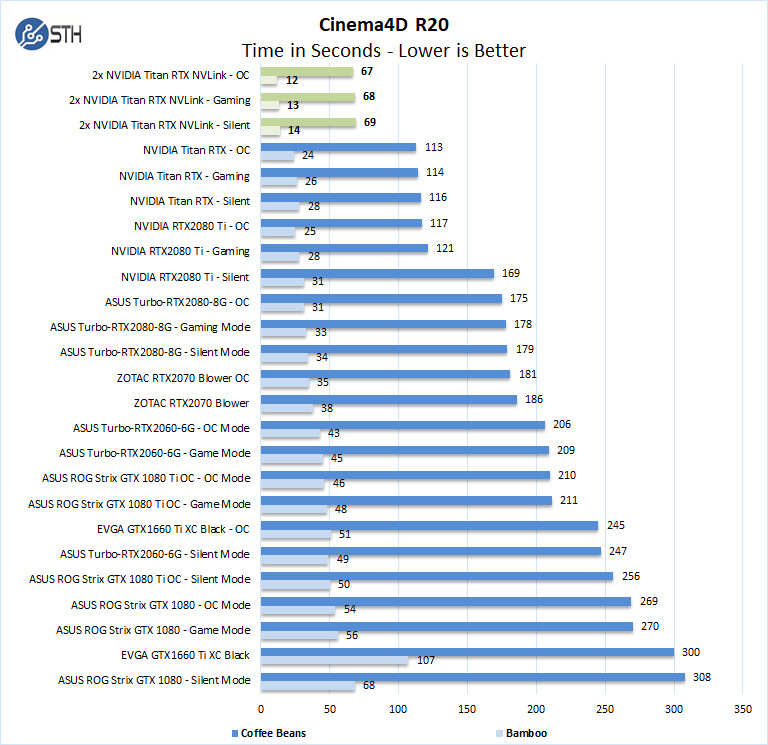

MAXON Cinema4D 3D

ProRender is an OpenCL-based GPU renderer that is available in MAXON’s Cinema4D 3D animation software. A fully functional 42-day trial version is available for download from the MAXON website here. Note: Even after expiration, the trial can still be used to measure render times.

In Cinema4D R20, the dual NVIDIA Titan RTX GPUs with NVLink cut our render times in half over the single GPU configuration. This shows excellent scaling.

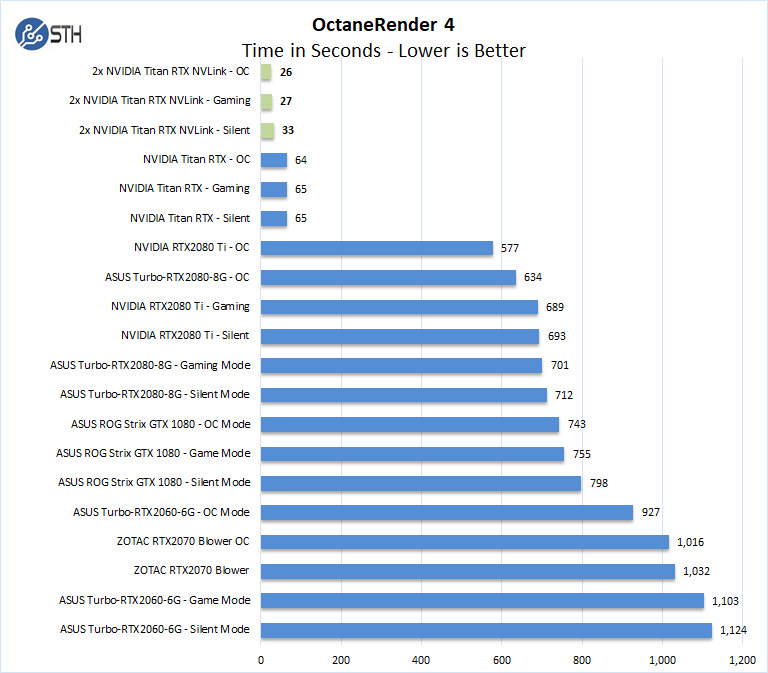

OctaneRender 4

OctaneRender from OTOY is an unbiased GPU renderer using the CUDA API. The latest release, OctaneRender 4, introduces support for out-of-core geometry. Octane is available as a standalone rendering application, and a demo version is available for download from the OTOY website here.

If you want to go fast here, the dual NVIDIA Titan RTX GPUs with NVLink setup simply blows away the other configurations we have tested with OctaneRender.

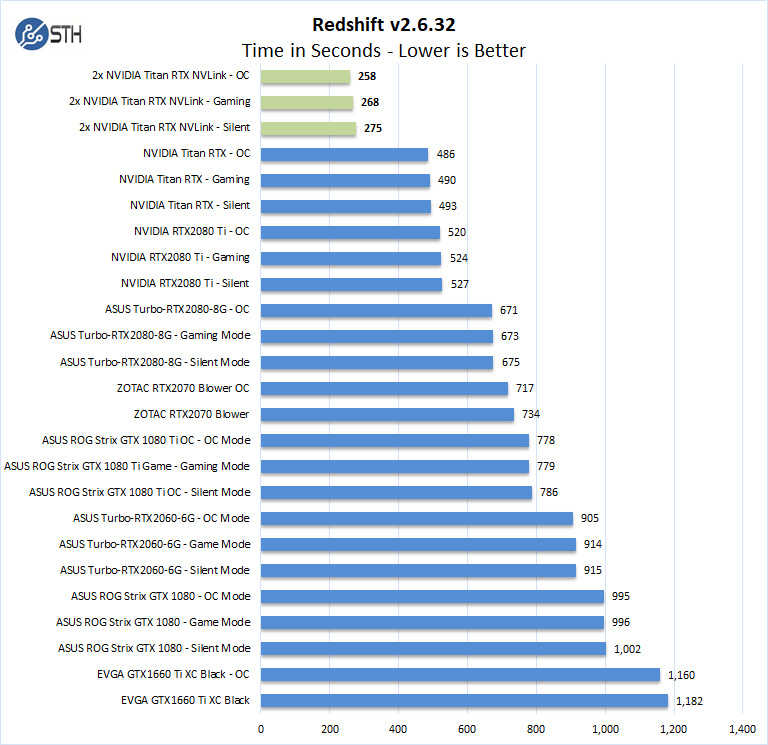

Redshift v2.6.32

Redshift is a GPU-accelerated, production-quality renderer. A demo version of this benchmark can be found here.

Performance of the dual NVIDIA Titan RTX with NVLink setup is at the top of our results. Redshift is a very demanding benchmark, and these GPUs scale very well. We did not quite see half the rendering time of the single Titan RTX solution, but the dual configuration is not far off.
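To put "not quite half the rendering time" into numbers, scaling can be expressed as a speedup and an efficiency. The following is a minimal sketch only: the function name `scaling` and the render times are hypothetical illustrations, not measurements from this review.

```python
def scaling(single_gpu_seconds: float, multi_gpu_seconds: float, gpus: int = 2):
    """Return (speedup, efficiency) for a multi-GPU render time.

    speedup    = single-GPU time / multi-GPU time
    efficiency = speedup / number of GPUs (1.0 means perfect scaling)
    """
    if single_gpu_seconds <= 0 or multi_gpu_seconds <= 0:
        raise ValueError("render times must be positive")
    if gpus < 1:
        raise ValueError("gpus must be at least 1")
    speedup = single_gpu_seconds / multi_gpu_seconds
    efficiency = speedup / gpus
    return speedup, efficiency


# Hypothetical render times in seconds (assumed values, not review data).
speedup, efficiency = scaling(100.0, 55.0)
print(f"speedup {speedup:.2f}x, efficiency {efficiency:.0%}")
```

With these assumed times, a render that drops from 100 seconds to 55 seconds is a 1.82x speedup, or about 91% scaling efficiency. A render time cut exactly in half would be 2.00x and 100%.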

On our rendering benchmarks, one thing is clear: if you work against deadlines and need faster render times to get your job done and iterate faster, the dual NVIDIA Titan RTX setup performs extremely well in this domain.

Next, we will have 3DMark results before moving on to power consumption, thermals, and our final thoughts.

Incredible! The tandem operates at 10x the performance of the best K5200! This is a must-have for every computer laboratory that wishes to be up to date, allowing team members or students to render in minutes what would take hours or days! I hear Dr. Cray saying, “Yes, more speed!”

This test would make more sense if the benchmarks were also run with 2 Titan RTX but WITHOUT NVLink connected. Then you’d understand better whether your app is actually getting any benefit from it. NVLink can degrade performance in applications that are not tuned to take advantage of it (meaning 2 GPUs will be better than 2+NVLink in some situations).

I’m kind of missing the: NAMD Performance.

STH is freaking awesome. Great review William. You guys have got a great dataset building here

Great review yes – thanks !

2x 2080 Ti would be nice for a comparison. Benchmarks not constrained by memory size would show similar performance to 2x Titan at half the cost.

It would also be interesting to see CPU usage for some of the benchmarks. I have seen GPUs being held back by single threaded Python performance for some ML workloads on occasion. Have you checked for CPU bottlenecks during testing? This is a potential explanation for some benchmarks not scaling as expected.

Literally any AMD GPU loses even compared to the slowest RTX card in 90% of tests… In int32 and int64 they don’t even deserve to be on the chart.

@Lucusta

Yep, the Radeon VII really shines in this test. The $700 Radeon VII is only 10% faster than the $4,000 Quadro RTX 6000 in programs like DaVinci Resolve. It’s a horrible card.

@Misha

A useless comparison, a pro card vs. a non-pro card in a generic GPGPU program (no viewport, so why don’t you say RTX 2080?)… The new Radeon VII is comparable to the $1,000 single-slot Quadro RTX 4000 (Puget review)… In compute the Radeon VII wins; in viewport / SPECviewperf it loses…

@Lucusta

MI50 ~ Radeon VII and there is also a MI60.

Radeon VII (15 fps) beats the Quadro RTX 8000 (10 fps) with 8K in Resolve by 50% when doing NR (the Quadro RTX 4000 does 8 fps).

Most if not all benchmarking programs for CPU and GPU are more or less useless, test real programs.

That’s how Puget does it, and Tom’s Hardware is also pretty good at testing with real programs.

Benchmark programs are for gamers or just being the highest on the internet in some kind of benchmark.

You critique that many benchmarks did not show the power of NVLink and pooled memory when using the two cards in tandem. But why did you not choose benchmarks that do, and even more important, why did you not set up your TensorFlow and PyTorch test bench to actually showcase the difference between running with NVLink and without?

It’s a disappointing review in my opinion because you set out a premise and did not even test it, hence the test was quite useless.

Here is my suggestion: set up a deep learning training and inference test bench that displays actual GPU memory usage, the difference in performance with and without NVLink bridges, and performance when two cards are used in parallel (equally distributed workloads) vs. allocating a specific workload within the same model to one GPU and another workload in the same model to the other GPU by utilizing pooled memory.

This is a very lazy review in that you just ran a few canned benchmark suites over different GPUs, hence the test results are equally boring. It’s a fine review for rendering folks but it’s a very disappointing review for deep learning people.

I think you can do better than that. PyTorch and TensorFlow have some very simple ways to allocate workloads to specific GPUs. It’s not that hard and does not require a PhD.

Hey William, I’m trying to set up the same system but my second GPU doesn’t show up when it’s using the 4-slot bridge. Did you configure the BIOS to allow for the multiple GPUs in a manner that’s not ‘recommended’ by the manual?

I’m planning a new workstation build and was hoping someone could confirm that two RTX cards (e.g. 2 Titan RTX) connected via NVLink can pool memory on a Windows 10 machine running PyTorch code? That is to say, that with two Titan RTX cards I could train a model that required >24GB (but <48GB, obviously), as opposed to loading the same model onto multiple cards and training in parallel? I seem to find a lot of conflicting information out there. Some indicate that two RTX cards with NVLink can pool memory, some say that only Quadro cards can, or that only Linux systems can, etc.

I am interested in building a similar rig for deep learning research. I appreciate the review. Given that cooling is so important for these setups, can you publish the case and cooling setup as well for this system?

I only looked at the deep learning section – Resnet-50 results are meaningless. It seems like you just duplicated the same task on each GPU, then added the images/sec. No wonder you get exactly 2x speedup going from a single card to two cards… The whole point of NVLink is to split a single task across two GPUs! If you do this correctly you will see that you can never reach double the performance because there’s communication overhead between cards. I recommend reporting 3 numbers (img/s): for a single card, for splitting the load over two cards without NVLink, and for splitting the load with NVLink.
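The overhead argument above can be illustrated with a toy throughput model. This is only a sketch under stated assumptions: the function `images_per_second`, the batch size, and every timing value are hypothetical, and the model assumes the batch splits evenly across GPUs and that synchronization adds a fixed cost per step.

```python
def images_per_second(batch_images: int, compute_seconds: float,
                      gpus: int = 1, sync_seconds: float = 0.0) -> float:
    """Throughput when ONE batch is split evenly across `gpus`.

    compute_seconds: time for a single GPU to process the whole batch.
    sync_seconds:    assumed per-step cost of exchanging results between
                     GPUs; ignored when only one GPU is used.
    """
    if gpus < 1 or compute_seconds <= 0 or sync_seconds < 0:
        raise ValueError("invalid arguments")
    overhead = sync_seconds if gpus > 1 else 0.0
    step_seconds = compute_seconds / gpus + overhead
    return batch_images / step_seconds


# Hypothetical values, chosen only to show the shape of the result.
single = images_per_second(256, 1.0)
# The larger sync cost stands in for a slower interconnect (assumption).
dual_slow_link = images_per_second(256, 1.0, gpus=2, sync_seconds=0.10)
# The smaller sync cost stands in for a faster interconnect (assumption).
dual_fast_link = images_per_second(256, 1.0, gpus=2, sync_seconds=0.02)

for label, value in [("single", single),
                     ("dual, slow link", dual_slow_link),
                     ("dual, fast link", dual_fast_link)]:
    print(f"{label}: {value:.0f} img/s ({value / single:.2f}x)")
```

In this model any nonzero synchronization cost keeps the dual-GPU figure below twice the single-GPU figure, which is why reporting the three numbers separately is more informative than summing two independent runs.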