Recently we had the opportunity to test the Dell PowerEdge R930 server. This machine is simply awesome. At the heart of the 4U server are four Intel Xeon E7 V4 processors. With a total of 96 cores and 192 threads, this represents the top of the line for Intel’s Broadwell generation of processors. Beyond the high core count and correspondingly large (12TB) RAM capacity, the Dell PowerEdge R930 is a spectacular platform for expansion. It has a total of 8x 2.5″ NVMe bays, 16x SAS bays, dual 10Gbase-T Ethernet and a plethora of expansion slots. The quad Intel Xeon E7 platform is clearly in the realm of overkill for many applications, but it is still awesome to see what the machine can do.

Test Configuration

Our test configuration had decidedly high-end processors. The Intel Xeon E7-8890 V4’s were the highest core count CPUs found in any Broadwell series Intel product. At an MSRP of over $7000 each, this is not a low-end configuration:

- Server Platform: Dell PowerEdge R930

- CPUs: 4x Intel Xeon E7-8890 V4 (24 cores/ 48 threads each)

- RAM: 512GB DDR4 RDIMMs (32 * 16GB)

- NVMe SSDs: 8x 400GB Dell Express Flash (Samsung) NVMe

- SAS SSD: 2x HGST 400GB SAS3 SSDs

- Network Cards Tested: Mellanox ConnectX-3 Pro EN (dual 40GbE), Mellanox ConnectX-3 VPI (FDR Infiniband and 40GbE), Intel X710-DA4, Chelsio T580-CR, Intel QuickAssist 8950 accelerator and Netgate CPIC-8955 QuickAssist Accelerator

- Power Supplies: 2x Dell 750W 80 Plus Platinum

- Operating Systems: Windows Server 2012 R2, Ubuntu Server 14.04 LTS, 16.04 LTS, CentOS 7.2

As you may have seen, we filled the PCIe slots up with networking gear just to put a bit more load on the system than it would otherwise see. Even with a massive number of expansion cards (some not on Dell’s HCL), the system performed solidly. The Dell PowerEdge R930 turned out to be more flexible than we expected.

Meet the Dell PowerEdge R930

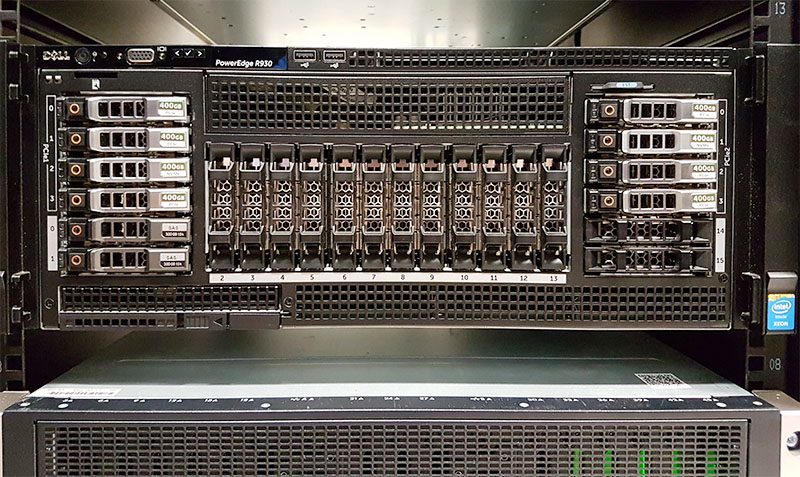

The Dell PowerEdge R930 is a reliable server. Fully configured, our 4U unit weighed over 100lbs, and there was plenty of room for that figure to go up. The box it came in had a built-in pallet, which helps when moving the server around the data center (assuming your facility allows this).

Racking the Dell PowerEdge R930 is easy. We tried both as a two-person team with the chassis holds as well as a single-person team (with no server lift). With a team of two, it is simple to install. An individual can safely install the server; however, we recommend having a second individual to help with the process. Once racked, the Dell PowerEdge R930 certainly looks impressive.

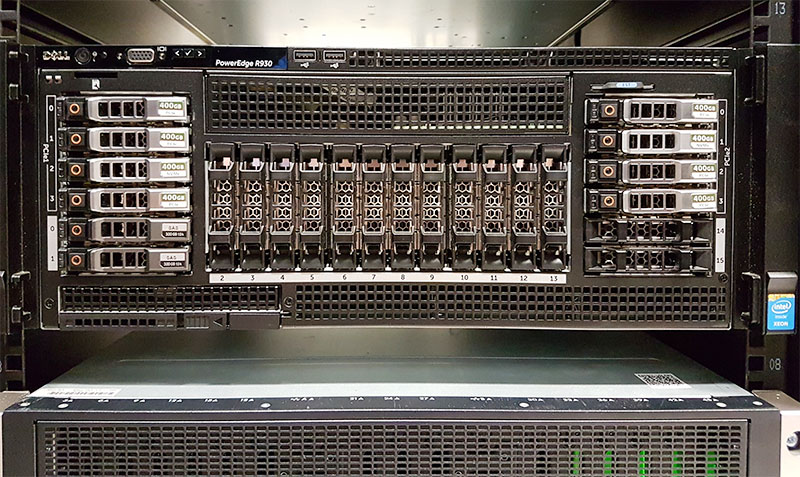

Along the front of the chassis, you can see front I/O so the server can be managed from a cold aisle. There is also an impressive storage array on the front of the chassis: a total of eight PCIe SSD slots (four on the left and four on the right) plus sixteen 2.5″ drive bays for SAS/ SATA drives. Dell does offer non-PCIe/ NVMe front I/O options as well. A few weeks before publishing this review we advised a small company looking at buying a pair of R930’s to “Get the 8x NVMe option. It is phenomenal.” That recommendation stands for those looking at the Dell PowerEdge R930 platform. We were able to get over 18GB/s of bandwidth from this array out of the box in Windows Server 2012 R2.

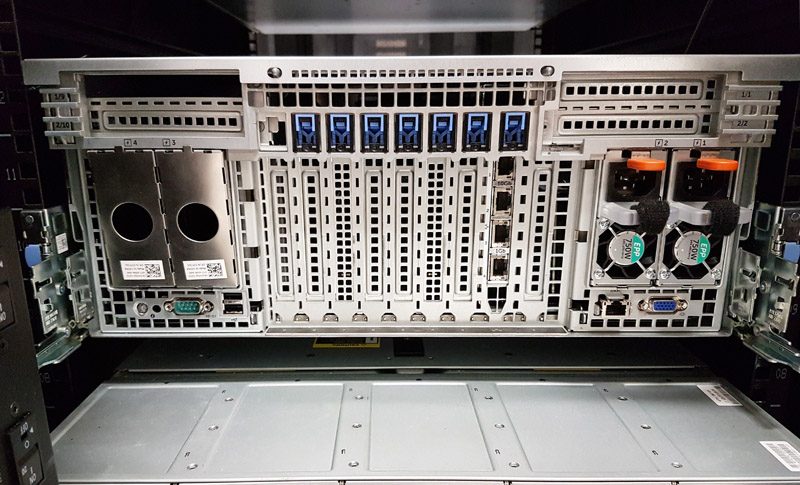

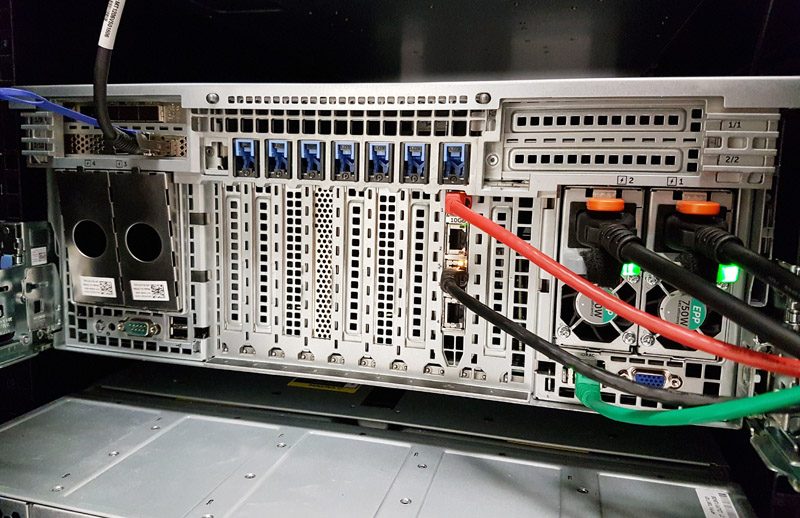

Moving to the rear of the chassis you can see a few configuration options for our Dell test system. First, there are only two 750W 80 Plus Platinum level power supplies in the server. We often had this system run above 1kW, so we certainly needed both. Our recommendation is to equip the unit with four power supplies.

Along the bottom of the chassis, there is standard I/O for management including the iDRAC port and physical VGA, USB and serial console ports. Dominating the rear of the server is an array of PCIe expansion slots that give the system massive I/O potential.

During our first boot, we needed to ensure we could get the machine onto our lab’s 10Gbase-T and 40GbE networks. Dell has an excellent networking option that gives dual 10Gbase-T ports and dual 1GbE ports in a single PCIe expansion slot. Unlike some competitive quad socket Intel Xeon E7 V4 offerings, the Dell PowerEdge R930 has well-placed rear I/O panels that make cabling significantly easier.

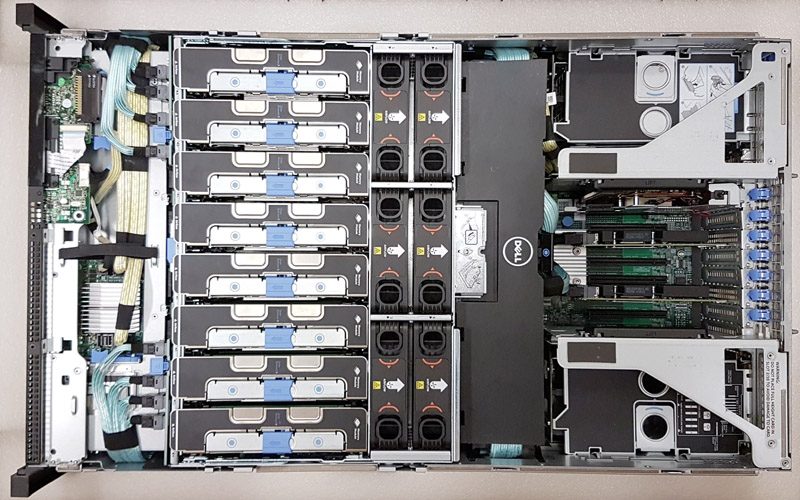

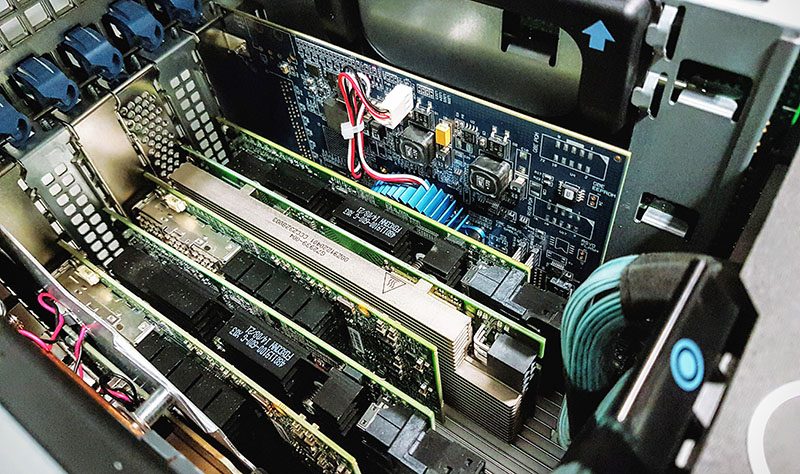

Inside the chassis, one can see a packed box of cables, cooling, RAM, CPUs and I/O expansion card slots. One of our lab techs saw this view and commented: “Wow that looks beautiful.” Dell has an excellent mechanical design, and the result of opening the chassis is that there are various sectors lined up that are all easy to service.

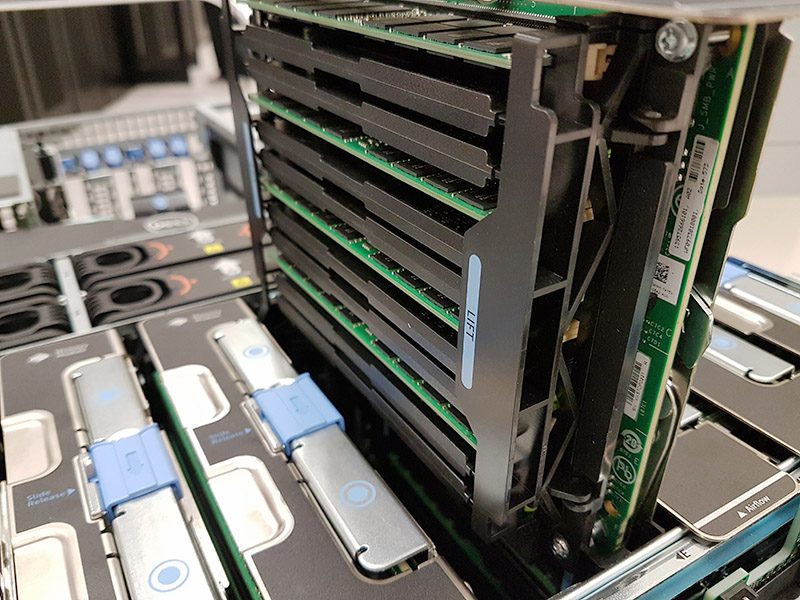

The first sector is the storage array. It provides NVMe and SAS connections to the front storage bays and houses the drives themselves. Next, across the entire chassis, is an array of DIMM risers. The Intel Xeon E7 V4 series can take up to 3TB worth of RAM per CPU. Each CPU has two memory risers that house RAM and allow for relatively easy servicing. One can activate the latching mechanism and pull the memory card riser out. In under four minutes, we installed 8x DIMMs on a riser and had the system starting its boot process again.

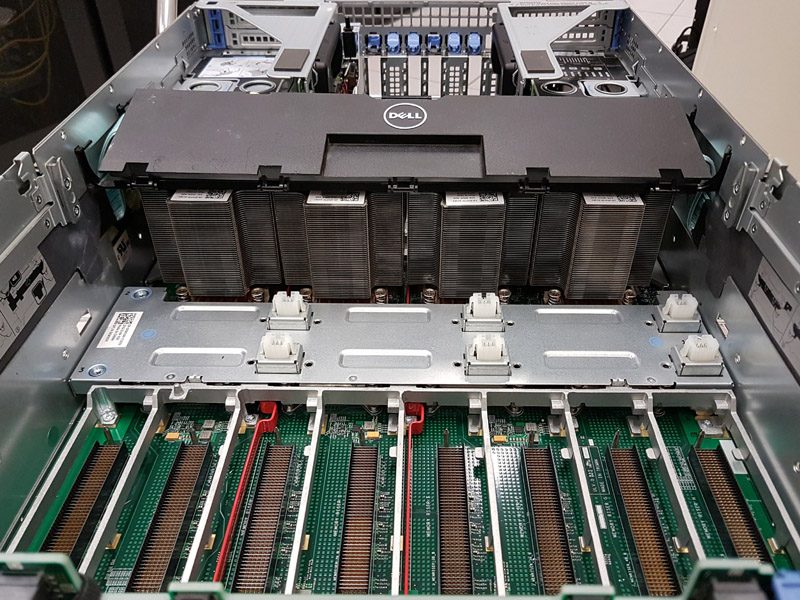

Here is a view of the system with the memory risers and hot-swap fans removed. You can more clearly see the high pin count RAM riser connectors as well as the hot-swap fan connectors.

That giant wall of aluminum under the Dell logo is a set of tower coolers for the CPUs that connect to the thermal interface base plates via heat pipes. We call this the R930 “Heatsink Wall” as Dell engineers made these four sets of fin stacks serviceable while maintaining a relatively close spacing between the heatsinks.

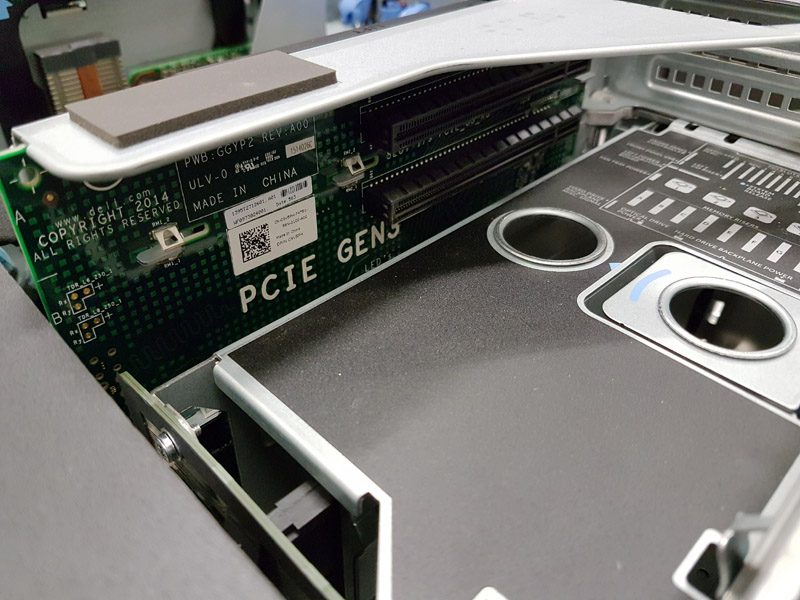

Past the R930 Heatsink Wall, we get to the I/O expansion sector. Dell has not just vertical slots but also has a riser system to add horizontal slots above both sets of power supplies. Here is what that looks like with networking cards installed:

The risers place cards just above the PSU cages and hold up to two cards each.

Pulling one of these risers out, one can see the actual PCB is more complicated than a simple set of traces to the PCIe slots. Overall this riser setup makes installing up to four horizontal expansion cards in the R930 a breeze.

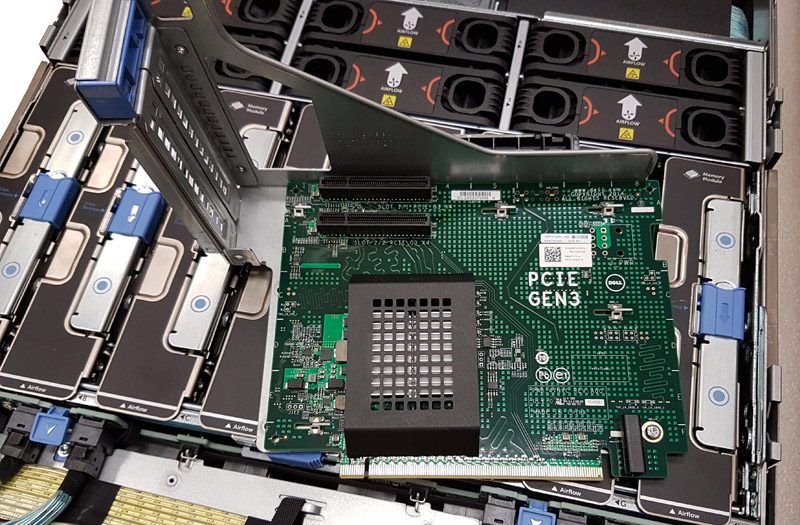

The primary expansion slots are configured as an array of 7x PCIe 3.0 x16 slots. Here is what we called our 80Gbps IPsec VPN configuration where we had two Intel QuickAssist cards, six 40GbE ports, and six 10GbE ports all configured. Installation of the cards was incredibly easy.

As an interesting note on performance, using the array of 8x NVMe drives and the pictured NICs and QuickAssist adapters we were able to push around 9GB/s of video streams over an IPsec VPN through the R930 with very low CPU utilization thanks to the QuickAssist cards. In a standard dual Intel Xeon E5 V4 server, there are only 80 x PCIe 3.0 lanes available. In a quad Intel Xeon E7 V4 system there are 128 PCIe lanes which can help when you need a plethora of I/O.
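The lane comparison above can be turned into a rough bandwidth budget. The following is a minimal sketch, not something from our test scripts: the per-CPU lane counts are simply the quoted totals divided by the socket count, and the per-lane figure is the theoretical PCIe 3.0 rate (8 GT/s with 128b/130b encoding), so real-world throughput will be lower.

```python
# Rough PCIe 3.0 lane budget for the two platforms compared in the text.
# Assumption: theoretical one-direction bandwidth, ignoring protocol overhead.
PCIE3_GT_PER_S = 8.0      # PCIe 3.0 signalling rate per lane (GT/s)
ENCODING = 128 / 130      # 128b/130b line encoding efficiency


def lane_gbytes_per_s():
    """Theoretical bandwidth of one PCIe 3.0 lane in GB/s (one direction)."""
    return PCIE3_GT_PER_S * ENCODING / 8


def platform_lanes(sockets, lanes_per_cpu):
    """Total CPU-attached PCIe lanes for a multi-socket platform."""
    return sockets * lanes_per_cpu


dual_e5 = platform_lanes(sockets=2, lanes_per_cpu=40)   # 80 lanes
quad_e7 = platform_lanes(sockets=4, lanes_per_cpu=32)   # 128 lanes

for name, lanes in (("dual Xeon E5 V4", dual_e5), ("quad Xeon E7 V4", quad_e7)):
    total = lanes * lane_gbytes_per_s()
    print(f"{name}: {lanes} lanes, ~{total:.0f} GB/s theoretical")
```

The point of the sketch is only that the quad-socket platform has 48 more lanes to spread across NICs, accelerators and NVMe drives.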

We do want to make a particular note here. Although there are seven vertical PCIe slots in this configuration, three of them are otherwise occupied. Two are taken by the PCIe adapters that are used to send 32 lanes to the front 8x PCIe/ NVMe drive bays. A dual 1GbE and dual 10GbE card occupies the third. That leaves four available in our configuration. When combined with the four horizontal slots we still have eight PCIe slots available for additional I/O.

While the hardware may look impressive, we wanted to see how it performed.

Dell PowerEdge R930 Performance

We were able to run our standard benchmarks on the Dell PowerEdge R930 as well as something new for STH. We have benchmarks of LS-Dyna crash simulations (run via ANSYS Workbench). This is an area that we are now running new systems through, including the Knights Landing systems we have in the lab, to build a better data set. We did want to show some initial results in this review. To keep the size of this review more manageable, we are going to link you to a sample Linux-Bench output for the Dell PowerEdge R930. You can find that result here with over 50 different benchmark data points. If you have an Ubuntu 14.04 LTS LiveCD, you can run a direct comparison to the Dell PowerEdge R930 following the four steps in the Linux-Bench how-to.

LSTC/Ansys LS-Dyna HPC Workload – Crash Testing

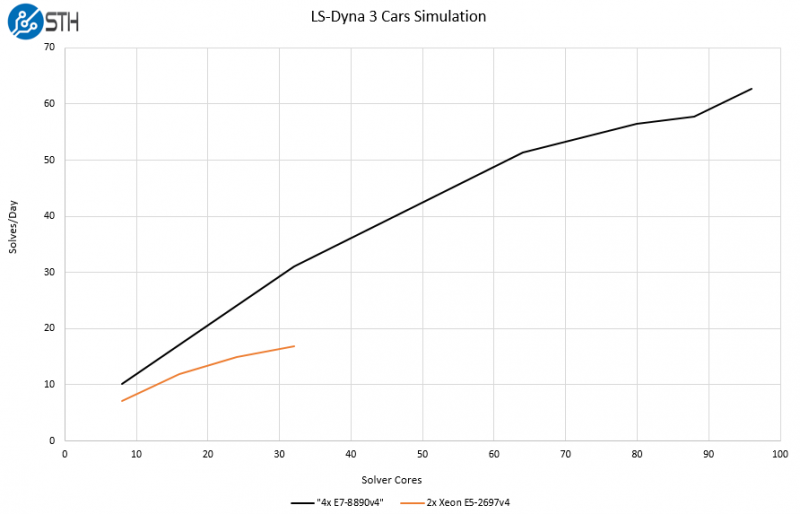

LS-Dyna is a general purpose structural and fluid dynamics simulation program. One of the key use cases of the software is for virtual crash testing of automobiles. We are going to have a follow-up piece where we compare this system to a cluster of a dozen single socket machines over Infiniband as well as dual Intel Xeon E5-2600 V4 servers. For now, we only wanted to show how the Dell PowerEdge R930 can out-scale smaller systems on two well-known workloads: 3 Vehicle Collision and Neon Refined Revised. Here is a 2-second video clip roughly approximating what is going on:

As one may imagine, using a Dell PowerEdge R930 to simulate multiple car collisions is significantly less expensive than doing so with real automobiles. Here is a comparison of the three vehicle collision workload run on a modern dual Xeon E5-2697 V4 system and the Dell PowerEdge R930 with quad Xeon E7-8890 V4’s:

The key takeaway here is that the larger pool of cores offers close to 3.7x the performance of the dual Intel Xeon E5-2697 V4 system.

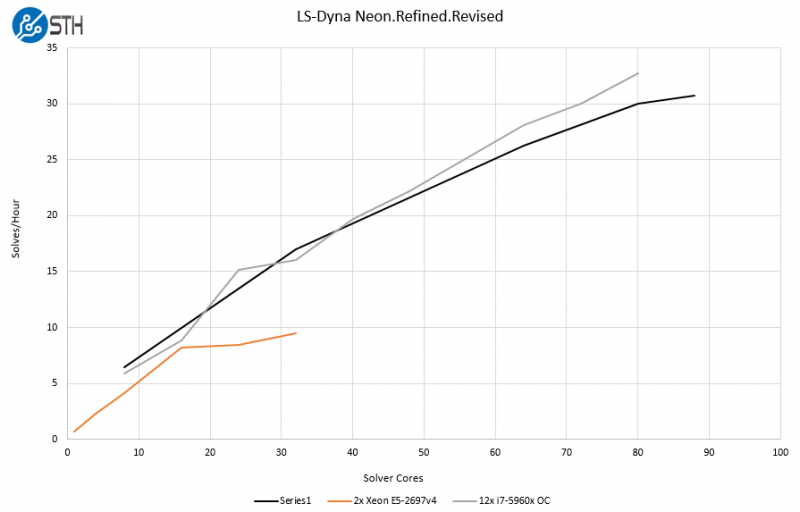

We also ran the LS-Dyna Neon Refined Revised workload on the systems and added in a tuned cluster of 10 overclocked Intel Core i7-5960X systems connected using QDR Infiniband. While this may seem strange at first, it is a typical setup for companies with similar workloads due to licensing costs. Having higher clock speeds and fewer cores is desirable to optimize on software costs. Note the actual cluster is “12x i7-5960X OC” but we were only using 10 of the 12 nodes.

Regarding performance, one can see that we are achieving peak performance at 88 solver cores on the quad Intel Xeon E7. What is notable though is that the Dell PowerEdge R930 is staying very competitive with the overclocked cluster. To achieve this level of performance, the cluster requires 10x 140W TDP CPUs that are then overclocked to use even more power. The cluster also requires 10x Infiniband network controllers, Infiniband switch ports, QSFP DACs, and an entire rack worth of space. Compare this to the Dell PowerEdge R930, which has around 95% of the performance of the whole cluster at well under half the power and one-tenth of the rack space. When comparing the result to the dual Intel Xeon E5-2697 V4 system we see greater than 2.9x the number of solves per hour with the Dell PowerEdge R930.

We are working on adding more systems to this list including the new Intel Xeon Phi x200 series and other Xeon E5 and E7 configurations. We also have several other benchmarks (e.g. Fluent) that we already have run on various systems. Expect more results soon on STH.

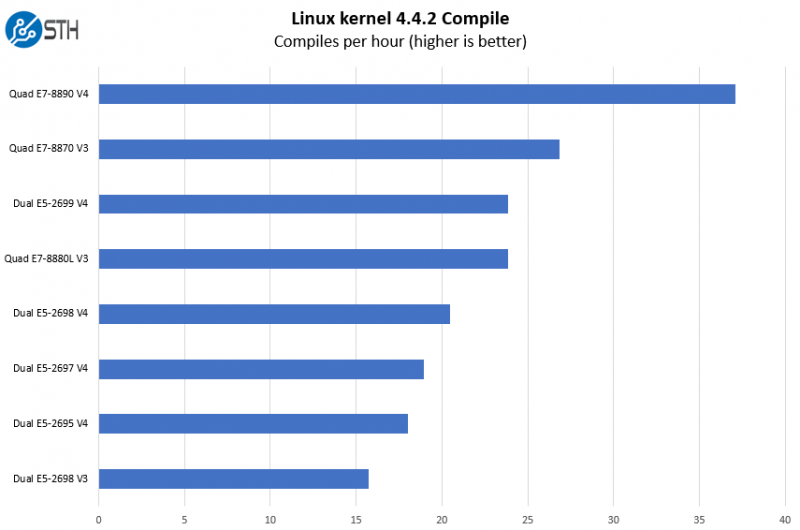

Python Linux 4.4.2 Kernel Compile Benchmark

This is one of the most requested benchmarks for STH over the past few years. We (finally) have a Linux kernel compile benchmark script that is consistent. Expect to see this functionality migrate into Linux-Bench soon (we are just awaiting the parser work on it). The task is simple: we take a standard configuration file and the Linux 4.4.2 kernel from kernel.org, then run make with every thread in the system. We are expressing results in terms of compiles per hour to make the results easier to read.
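For readers who want to try something similar, here is a minimal sketch of the idea in Python. It is not the STH benchmark script: the function names and the example path are hypothetical, and it assumes a kernel source tree that already has its `.config` in place.

```python
import os
import subprocess
import time


def compiles_per_hour(seconds_per_compile):
    """Convert one build's wall-clock time into a compiles-per-hour rate."""
    if seconds_per_compile <= 0:
        raise ValueError("build time must be positive")
    return 3600.0 / seconds_per_compile


def time_kernel_build(src_dir, jobs=None):
    """Time `make -j<jobs>` in an already-configured kernel tree (seconds)."""
    # Default to one make job per logical CPU (thread) in the system.
    jobs = jobs or os.cpu_count() or 1
    # Start from a clean tree so every run does the same amount of work.
    subprocess.run(["make", "clean"], cwd=src_dir, check=True,
                   stdout=subprocess.DEVNULL)
    start = time.perf_counter()
    subprocess.run(["make", f"-j{jobs}"], cwd=src_dir, check=True,
                   stdout=subprocess.DEVNULL)
    return time.perf_counter() - start


# Example usage (hypothetical path, not executed here):
#   elapsed = time_kernel_build("/usr/src/linux-4.4.2")
#   print(f"{compiles_per_hour(elapsed):.1f} compiles per hour")
```

Reporting a rate rather than raw seconds means bigger is better, which keeps the chart direction consistent with most other benchmarks.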

As you can see, the Dell PowerEdge R930 with quad Intel Xeon E7-8890 V4 processors is by far the fastest production system we have tested to date.

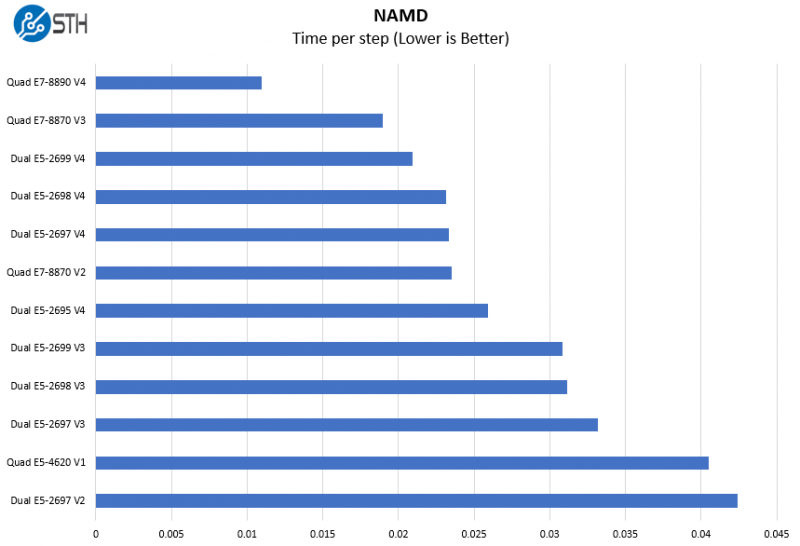

NAMD Performance

NAMD is a molecular modeling benchmark developed by the Theoretical and Computational Biophysics Group at the Beckman Institute for Advanced Science and Technology at the University of Illinois at Urbana-Champaign. More information on the benchmark can be found here.

As you can see, having 96 cores and 192 threads in a very well threaded benchmark works wonders for the R930.

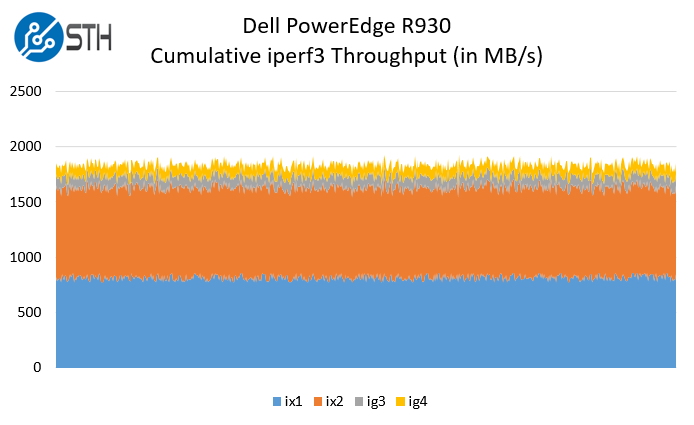

Networking

The two 10Gbase-T ports and two 1Gbase-T ports performed as expected using iperf3:

The Dell PowerEdge R930 can easily utilize more than the 22Gbps of combined networking bandwidth in this configuration. We highly suggest getting higher-speed networking for this machine so that you can feed it with enough network data. With enough clients and proper NICs, this is a system easily capable of pushing over 200Gbps of network traffic.
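If you want to total up multi-port results yourself, the sketch below shows one way to do it. It assumes each port was tested with a TCP `iperf3 -J` run (JSON output) and that the receiver-side summary is the number of interest. The helper names are our own and this is not the tooling used for the figures in this review.

```python
import json

# Line-rate ceiling of the ports in this configuration:
# 2x 10Gbase-T plus 2x 1Gbase-T.
LINE_RATE_GBPS = 2 * 10 + 2 * 1   # 22 Gbps


def received_gbps(iperf3_json_text):
    """Receiver-side throughput in Gbps from one `iperf3 -J` TCP report."""
    report = json.loads(iperf3_json_text)
    return report["end"]["sum_received"]["bits_per_second"] / 1e9


def combined_gbps(report_texts):
    """Sum receiver-side throughput across several per-port reports."""
    return sum(received_gbps(text) for text in report_texts)


def fraction_of_line_rate(report_texts):
    """Combined throughput as a fraction of the 22 Gbps port ceiling."""
    return combined_gbps(report_texts) / LINE_RATE_GBPS
```

Feeding the four per-port JSON reports into `fraction_of_line_rate` gives a quick check on how close the ports are running to their combined ceiling.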

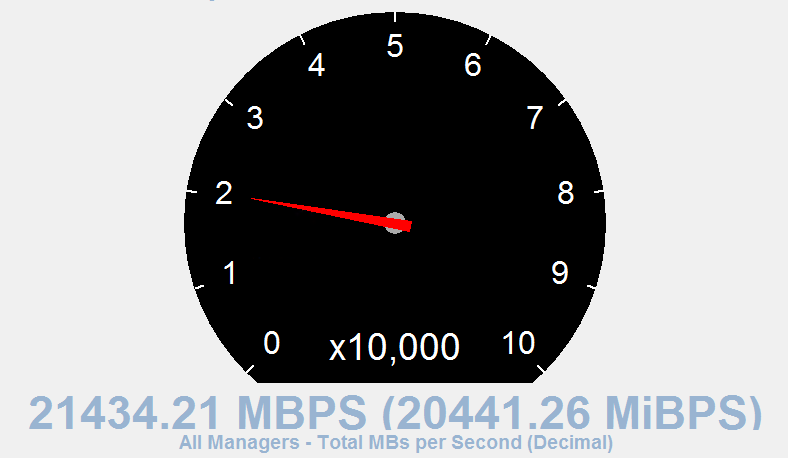

Storage Performance

To test the storage performance of the Dell PowerEdge R930 system we primarily focused on the 8x 400GB Dell-Samsung NVMe SSDs in the system. We wanted to test read workloads as the vast majority of enterprise workloads are heavily read focused.

When we fired up IOMeter and ran our standard 4K random read IOPS on the NVMe array, we hit just over 3 million IOPS out of the system. That is a fabulous figure for local storage.

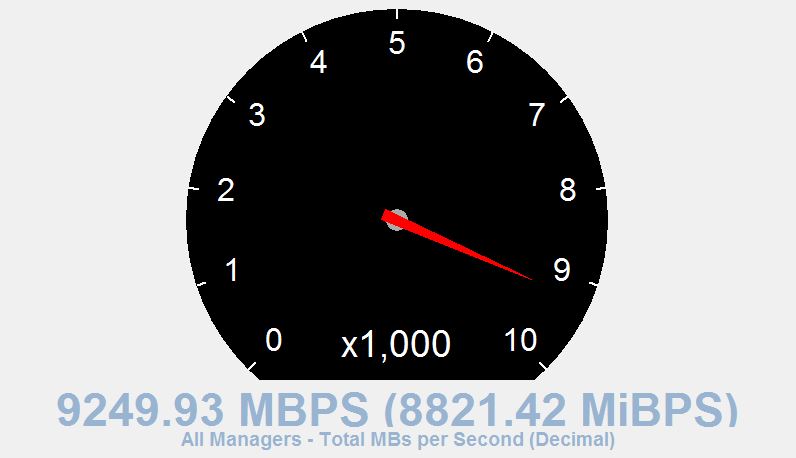

At the same time, we wanted to snag a hero shot of the read performance. You can see that the system was pushing around 21GB/s in sequential reads.

To put these local storage numbers into perspective, they are roughly double the IOPS and throughput of an entire 24x 1TB SATA SSD system using three SAS3 HBAs for connectivity. Having this level of local storage performance using just eight drives is impressive.
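A bit of back-of-the-envelope arithmetic helps put the per-drive numbers in context. This sketch uses only the rounded figures quoted above plus the 32 PCIe 3.0 lanes feeding the front bays; the lane ceiling is a theoretical value (8 GT/s with 128b/130b encoding), so treat it as an upper bound rather than a measurement.

```python
DRIVES = 8
IOPS_4K = 3_000_000        # "just over 3 million" 4K random read IOPS
BLOCK_BYTES = 4 * 1024     # 4K transfer size
SEQ_READ_GBS = 21.0        # "around 21GB/s" sequential read
FRONT_BAY_LANES = 32       # PCIe 3.0 lanes routed to the NVMe bays

# Bandwidth implied by the random read result (decimal GB/s).
random_read_gbs = IOPS_4K * BLOCK_BYTES / 1e9

# Per-drive share of each result.
iops_per_drive = IOPS_4K / DRIVES
seq_per_drive_gbs = SEQ_READ_GBS / DRIVES

# Theoretical ceiling of the lanes feeding the front bays.
front_bay_ceiling_gbs = FRONT_BAY_LANES * 8 * (128 / 130) / 8

print(f"4K random read: ~{random_read_gbs:.1f} GB/s, "
      f"{iops_per_drive:,.0f} IOPS per drive")
print(f"Sequential read: ~{seq_per_drive_gbs:.2f} GB/s per drive, "
      f"{SEQ_READ_GBS / front_bay_ceiling_gbs:.0%} of the lane ceiling")
```

In other words, the sequential result uses roughly two-thirds of what the 32 lanes could theoretically carry.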

Since we were working with Intel and Netgate on the first independent third-party Intel QuickAssist testing on another Intel Xeon E7 system we had in the lab, we wanted to try a similar setup where we had many remote workers connected via IPsec tunnels to the system and sending video. This would be a setup where you had one of these machines processing video data and sending it to remote servers for further processing and analytics. It is very akin to a setup we helped an 8K 3D video surveillance company with for their workflow. We did require three different PCIe 3.0 x8 40GbE adapters and two QuickAssist cards in the R930 to balance the load.

We were limited by the two high-end Intel QuickAssist cards as they are only meant for around 5GB/s maximum (each.) With the QuickAssist adapters and the NVMe array, we were able to do this at extremely low CPU utilization leaving the large system to do more data processing.
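Since this review mixes GB/s (storage-style) and Gbps (network-style) units, a quick conversion shows how the QuickAssist limit lines up with the “80Gbps” label we gave the configuration. The figures are the rounded ones quoted in this review, so the result is approximate.

```python
def gbytes_to_gbits(gbytes_per_s):
    """Convert GB/s to Gbps (8 bits per byte)."""
    return gbytes_per_s * 8


QAT_CARDS = 2
QAT_GBS_EACH = 5.0     # "around 5GB/s maximum (each)"
ACHIEVED_GBS = 9.0     # "around 9GB/s of video streams"

ceiling_gbps = gbytes_to_gbits(QAT_CARDS * QAT_GBS_EACH)   # 80 Gbps
achieved_gbps = gbytes_to_gbits(ACHIEVED_GBS)              # 72 Gbps
utilisation = achieved_gbps / ceiling_gbps                 # 0.9

print(f"QuickAssist ceiling: {ceiling_gbps:.0f} Gbps, "
      f"achieved: {achieved_gbps:.0f} Gbps ({utilisation:.0%})")
```

So the roughly 9GB/s we measured is about 90% of what the two accelerator cards are rated for.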

Power Consumption

We used our calibrated Schneider Electric APC PDUs to measure the power consumption of the server. In the STH data center lab, we utilize a 208V circuit which is common in North American data centers.

- Idle Power Consumption: 410W

- Maximum Power Consumption: 1356W

If you think of this as (at least) equivalent to two dual Intel Xeon E5-2600 V4 servers, then these figures are very impressive. The Dell PowerEdge T630 we reviewed hits over 630W under similar loads. This machine has more than double the storage, networking, and CPU capacity of the T630 we reviewed, so it is a considerably more power efficient platform. We were running fairly close to the dual 750W power supply maximum output.
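To make the headroom concrete, here is a small sketch using the measured figures above. The N+N redundancy model in `psus_for_n_plus_n` is our assumption about how one might size the supplies, not a Dell sizing tool, and it ignores PSU efficiency curves.

```python
import math

IDLE_W = 410
MAX_W = 1356
PSU_W = 750
PSUS_INSTALLED = 2

delta_w = MAX_W - IDLE_W                     # idle-to-load swing
capacity_w = PSU_W * PSUS_INSTALLED          # combined rated output
load_fraction = MAX_W / capacity_w           # share of rated output at max

# Could the remaining supply carry the peak load if one PSU failed?
survives_one_psu_failure = MAX_W <= PSU_W * (PSUS_INSTALLED - 1)


def psus_for_n_plus_n(load_w, psu_w):
    """PSUs needed for N+N redundancy: enough to carry the load, doubled."""
    return 2 * math.ceil(load_w / psu_w)


print(f"Idle-to-load delta: {delta_w} W")
print(f"Peak load is {load_fraction:.0%} of the installed {capacity_w} W")
print(f"Survives a single PSU failure at peak: {survives_one_psu_failure}")
print(f"PSUs for N+N redundancy: {psus_for_n_plus_n(MAX_W, PSU_W)}")
```

Under that assumption the numbers line up with our recommendation to equip the unit with four power supplies.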

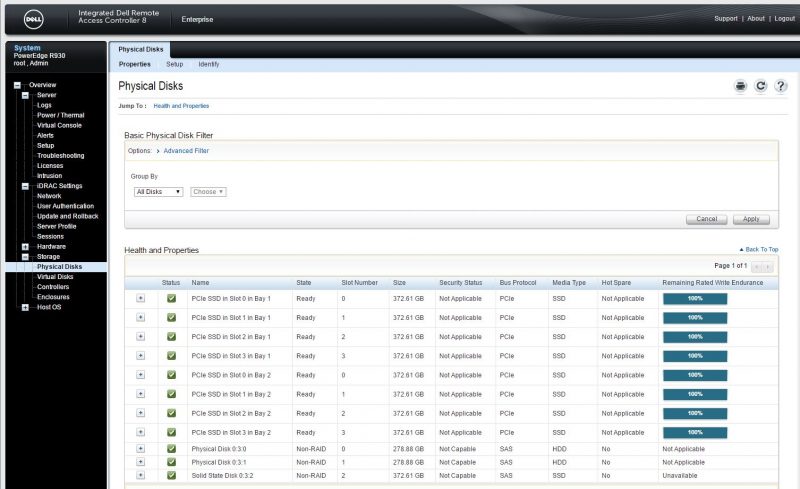

Remote Management

The Dell PowerEdge line includes iDRAC remote management capabilities. Instead of simply providing a basic functional way to access the server remotely, the Dell iDRAC solution provides an expanded set of features. We had a total of 35 features we wanted to specifically show using the iDRAC 8 interface. We recently did a Dell iDRAC 8 overview which will provide a more thorough look at iDRAC remote management capabilities. In that overview, we delve into what may be the industry’s best HTML5 KVM-over-IP solution and some of the other unique management features Dell offers.

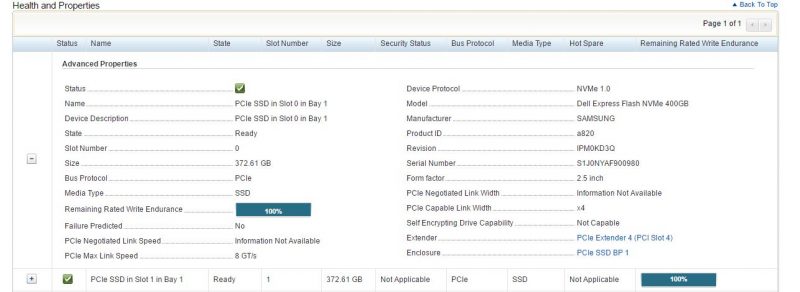

Since the Dell PowerEdge R930 has onboard NVMe, we wanted to check if the remote monitoring on the PCIe side was similar to Dell’s excellent PERC RAID card and hard drive health information. Directly from the iDRAC web GUI we were able to see each of the eight PCIe SSDs along with useful information such as status, capacity, slot, and remaining write endurance. This was extremely helpful when we briefly upgraded the NVMe SSDs to larger 2TB units we had on hand.

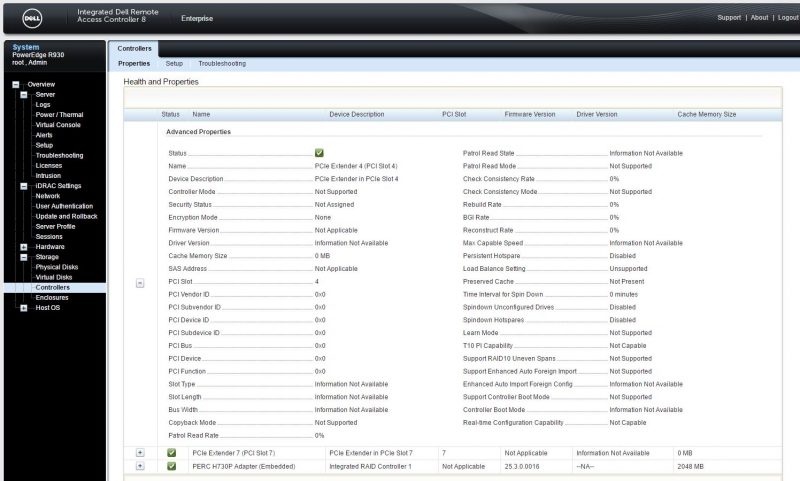

We were somewhat surprised to see that Dell is integrating information on the two PCIe extender cards we saw in the R930’s vertical slots to the iDRAC GUI. There is certainly less information on this than the embedded PERC H730P SAS RAID adapter, but that makes sense. The PCIe extenders have the primary purpose of taking motherboard PCIe lanes and extending them to the front of the chassis.

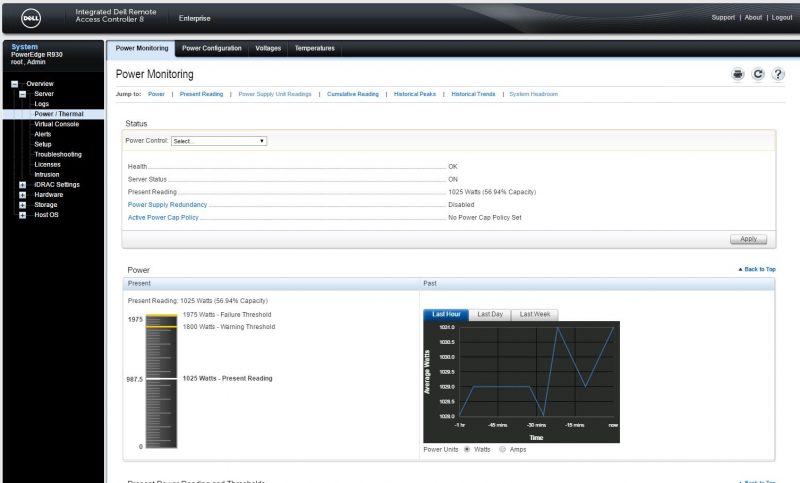

Also important in a system like this is power consumption. With so many CPUs, RAM chips, drives and cards our test system saw idle to load power consumption deltas of around 1kW. Dell’s power monitoring is excellent and gives readings close enough to our calibrated power meters to be extremely useful.

The Dell iDRAC 8 management is excellent. We are excited to see future versions as new platforms are launched as iDRAC is clearly an industry leader in the space.

Final Words

Working on the Intel Xeon E7 V4 platforms is always fun, and the Dell PowerEdge R930 is excellent. There is certainly a case to be made for using a few larger machines rather than a multitude of smaller devices. There are two primary considerations we found during our testing. First, software licensing can either make or break the value proposition of this machine. Software licensed on a per-machine basis makes the TCO of these platforms look superb. Other software has premium licensing pricing for four-socket and high core count servers. Second, if you are looking for a server to run Oracle EBS or SAP HANA or a similar program on, the Dell PowerEdge R930 has been designed to be a workhorse machine with little downtime, ideal for scale-up applications. Likewise, if you are trying to push the next level of virtualization efficiency, the Dell PowerEdge R930 is an excellent choice since you have larger pools of CPU and RAM with which to oversubscribe VMs and can avoid hitting the external network as often.

At the end of the day, the Dell PowerEdge R930 is a premium product and working with the hardware and software on the machine it feels the part. In 2016 we reviewed dozens of servers, and this was by far our favorite single-node server to service. The sheer amount of I/O, RAM and CPU cores one can fit into the box is incredible. The excellent design makes the entire unit feel robust and very comfortable to work with. Our review Dell PowerEdge R930 was hammered with different workloads over several months and never had a stability hiccup. We expected the Dell PowerEdge R930 to run stable and were happy to confirm our expectation was met despite running a mixture of workloads even including HPC applications.

Do you think this server makes sense compared to just buying 2 x R730XD’s?

Generally, we have seen folks we advise purchase more than one of these and are looking to consolidate more like 2.5:1 or 3:1 versus smaller machines. Usually, they get this by making more effective use of local NVMe and being able to make effective use of more memory for VMs. We also see software licensing costs weigh heavily on the decision to use a larger server versus a higher number of smaller servers.

Good points. Thanks

“This is the highest core count Intel Xeon E7 V4 system out there”

Actually, Supermicro has the SuperServer 7088B-TR4FT, which is an 8-way E7 v4 system, supporting up to 192 cores, 24 TB of RAM, 16 U.2 NVMe ports and 15 PCIe slots. https://www.supermicro.com/products/system/7U/7088/SYS-7088B-TR4FT.cfm

It looks like a blade chassis, but is actually a single machine.

I’d be very interested in how long it takes from turning the machine on to getting to the boot loader.