Our Dell EMC PowerEdge R340 review will show why this is a premium 1U Intel Xeon E-2100 server. Previously, we reviewed the PowerEdge R240, which Dell positions as a lower-cost option. With two servers in the entry single-socket 1U segment, the company can share some components with the lower-cost platform while still delivering a more feature-rich server in the segment. In this review, we are going to show how Dell accomplished this task and how the result is a great server.

Dell EMC PowerEdge R340 Hardware Overview

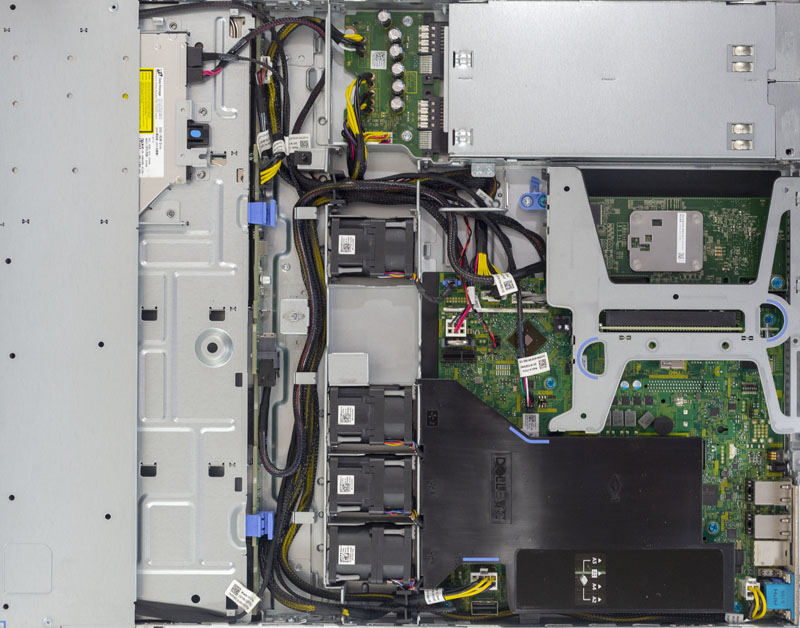

The Dell EMC PowerEdge R340 is a 1U short-depth server. Our review unit came equipped with four hot-swap 3.5″ bays, plus a slim optical drive. There is another version of the chassis available with eight 2.5″ hot-swap bays for those looking for more storage flexibility.

Looking at the rear of the chassis, there are legacy VGA and serial ports along with two USB 3.0 ports, a dedicated iDRAC management NIC, and dual 1GbE ports. Here we have the PowerEdge R340 above the PowerEdge R240.

In the middle are the rear I/O openings for the PCIe expansion slots, just like on the PowerEdge R240. Where the PowerEdge R340 stands out is its option for redundant power supplies. Our review unit came with dual 550W 80 PLUS Platinum rated power supplies.

Inside the chassis, we have a standard 1U server layout. One can see storage in the front, a fan partition in the middle, and the motherboard and PSUs in the rear of the system.

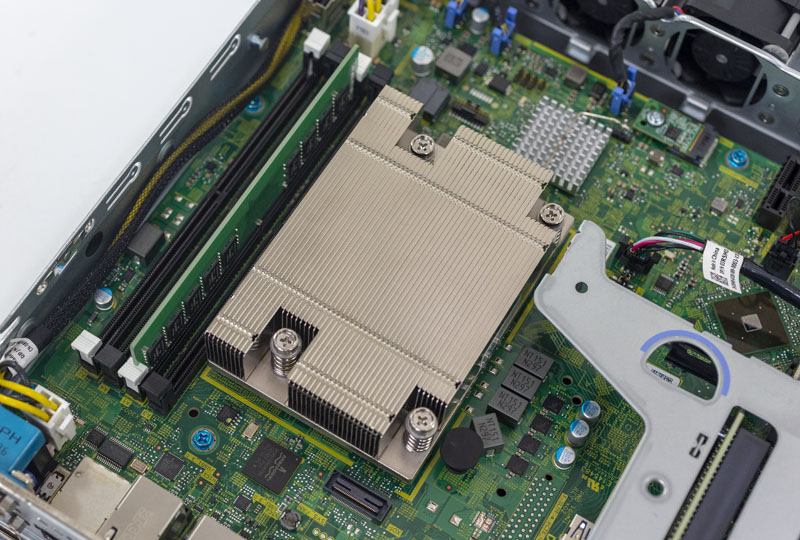

Providing compute for the Dell EMC PowerEdge R340 is an Intel Xeon E-2100 series CPU along with four DIMM slots. The server officially supports up to 64GB of ECC memory today using 4x 16GB DDR4 ECC UDIMMs. As 32GB ECC UDIMMs come to market, Intel says that the Xeon E-2100 platform will support them, so we expect the PowerEdge R340 to hit 128GB (4x 32GB) in 2019.

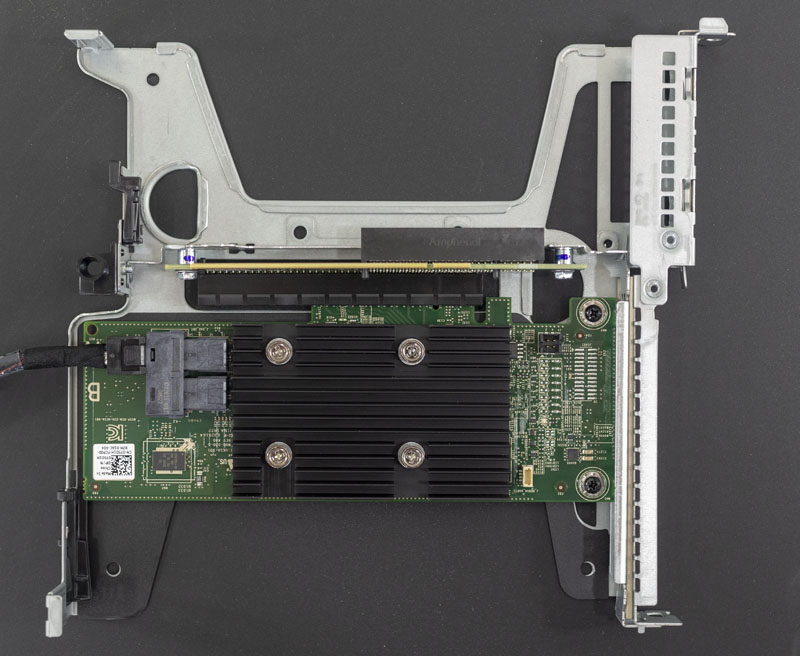

The motherboard itself is different from what we found on the PowerEdge R240. There is the same PCIe riser slot; however, in this case, there is another slot for a PERC storage adapter. On our chassis, this storage slot did not have access to rear panel I/O, so it is focused on providing SAS3/ SATA connectivity to the front hot-swap bays. In the PowerEdge R240, the PERC card takes the valuable PCIe riser slot, which makes the PowerEdge R340 more expandable.

Here is a picture of the riser for the PowerEdge R340/ R240. This is what a PERC card looks like installed in that riser.

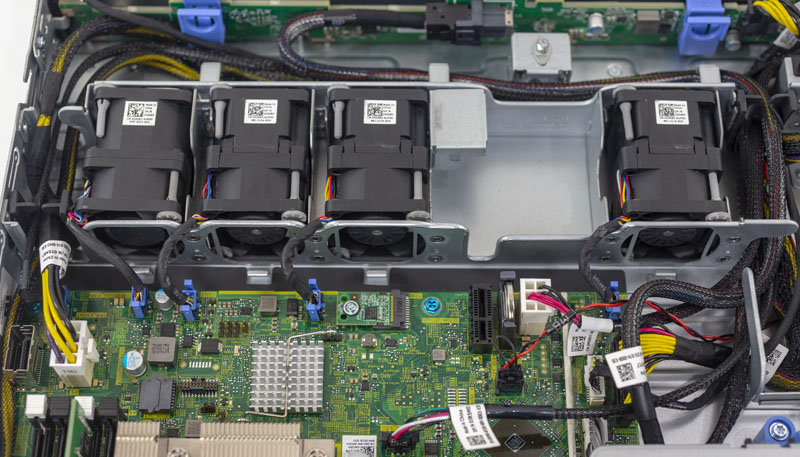

The fan wall is another area of differentiation. The Dell EMC PowerEdge R340 uses higher-quality dual fan units instead of the lower-cost single fan units we saw in the PowerEdge R240. These fans use a special connector that Dell developed as an improvement over the standard 4-pin PWM fan connector, which makes replacement much faster than on white box servers.

In this photo, you can also see our test system is hooked up to the PERC RAID card, but there is an option to connect the 4x 3.5″ bays via the SFF-8087 header. Dell also includes a TPM header for its various TPM options.

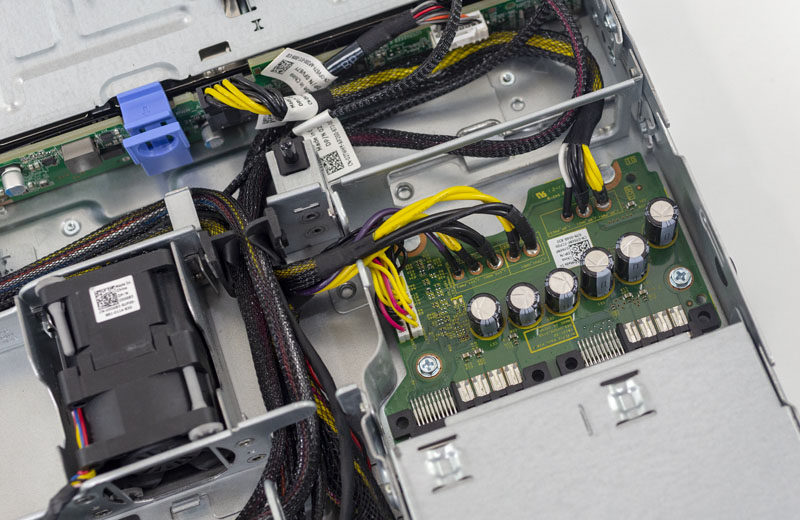

Earlier in this section, we showed the PowerEdge R340 redundant power supply solution. Internally, this is the PCB that handles input from both power supplies. It is a cost-optimized version of what we would see in higher-end PowerEdge servers. At the same time, it is a feature upgrade over the PowerEdge R240’s design.

Another small feature of the PowerEdge R340 is the PCB backplane for the hot-swap drive bays. Dell’s solution uses clips to secure the PCB, which is a nice upgrade over the typical white box server that uses screws to mount the backplane.

Overall, the Dell EMC PowerEdge R340 hardware package is well designed and easy to service. The company made some trade-offs versus a higher-end PowerEdge such as the Dell EMC PowerEdge R640, but they make sense in this market segment.

Next, we are going to take a look at the PowerEdge R340 management before moving to the system topology and then our performance and power testing results.

We’ve got maybe a dozen of these since we’ve traditionally been an R220 and R230 hosting shop. We’ve been using the R340 alongside the R240s for the better storage options. They’ve worked well, but you’re right on iDRAC. The reason we’d consider going Supermicro is to be able to give our clients iKVM at a lower price point.

Has Dell improved the integration abilities of the iDRAC? Do they have an API that can be used by scripts or other tools for automation or mass changes? After about 10 servers, I really stop caring how human-friendly the interface is and just want to script any changes or pull data programmatically.

At one point, screen scraping was the method of choice for getting monitoring data out of iDRACs. Read-only access was fine, but since it’s a full device, I would like to have read-write capabilities as well.

Ryan Quinn, Dell is really good at this now.

Ryan, the API you most likely want for this purpose is Redfish (a modern, RESTful HTTP protocol for all sorts of data center control), which is supported by almost every large-scale manufacturer of server-class equipment now. Anything that has shipped over the past 2-3 years probably has it already, including Supermicro, Dell, HPE, etc. It has also been adopted by the Open Compute Project as its default for baseboard management controller systems control. More at https://redfish.dmtf.org, and for tools go to https://github.com/dmtf (skip the private repositories, which are for developing the pre-release specs, and go for the public tools such as redfishtool or the libraries, or just explore the mockups at https://redfish.dmtf.org/redfish/v1 to get a feel for what you can do). The latest version even includes OpenAPI support for writing your own software based on the API.

Quick question: what’s the PCIe x1 slot near the TPM for?

I would also like to know what the PCIe x1 slot near the TPM is. You can also see a cutaway in the chassis above the PCIe x1 slot toward the HDD backplane. Also, there seems to be a typo on page 2: ‘Dell EMC PowerEdge R240 Test Configuration’ should be R340, no?

@SADTech @Eli

That slot is for the Dell iDSDM (Dual SD Module) which is a redundant SD card module for booting hypervisors or emergency/recovery boot images.

Disclaimer: I work for Dell

“In this photo, you can also see our test system is hooked up to the PERC RAID card, but there is an option to connect the 4x 3.5″ bays via the SFF-8087 header. Dell also includes a TPM header for its various TPM options.”

Looking at the pictures, it looks more like an SFF-8643 connector?

Hey All,

Long-time lurker; first-time question. Would it be possible to fit a smaller graphics card in the PCIe riser?

Most likely yes, if you are thinking of something like an L4/ T4.