If we were to talk about some of the most hyped technology in recent memory, HPE The Machine may just be near the top of the list. HPE is touting “The Machine” as a next-generation memory-centric architecture. At Supercomputing 2017 in Denver, CO, we had the chance to see some of the hardware behind the cool stock photo and learn more about how it works.

HPE The Machine at SC17

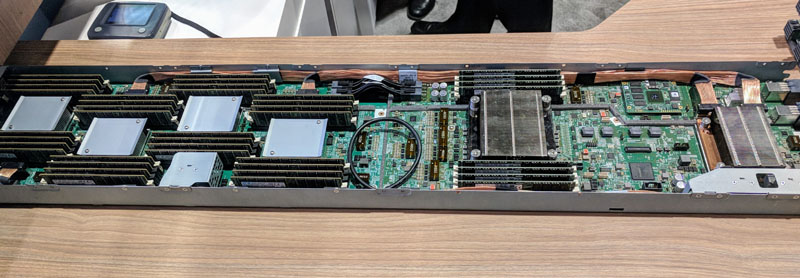

This is the compute and RAM sled of HPE The Machine shown off at SC17. For perspective, twice during interviews at the show, a (non-HPE) interviewee showed us a picture of themselves holding the sled. In the server world, HPE has a powerful marketing message with The Machine.

The four heatsinks to the left are essentially RAM controllers. The middle right heatsink covers a Cavium ThunderX2 32 core, 128 thread model with its octo-channel DDR4 memory. The heatsink to the far right covers an FPGA. One of the most intriguing aspects of The Machine is that the Cavium ThunderX2 CPU does not directly access the memory to the left of it. Instead, it goes through the FPGA/switch fabric to access that memory. If you are thinking “is that not terrible for latency?” we are assured that it is. The idea is that a CPU can address any memory in the system through the fabric, whether or not that memory sits on the same PCB.
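
To make the access path easier to picture, here is a toy model in Python. It is only a sketch based on what we saw at the booth, not HPE's actual design: the per-sled capacity, the flat address split, and every component name in the path (`fpga_bridge`, `switch`, `photonic_link`, `remote_switch`) are our own assumptions for illustration. It shows a CPU resolving a global address to a sled and offset, then listing the components a request would pass through.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical capacity per sled, chosen only for illustration.
SLED_BYTES = 1 << 40


@dataclass(frozen=True)
class FabricAddress:
    sled: int    # which sled holds the memory
    offset: int  # byte offset within that sled's memory


def resolve(global_addr: int) -> FabricAddress:
    """Split a flat global address into (sled, offset)."""
    return FabricAddress(global_addr // SLED_BYTES, global_addr % SLED_BYTES)


def access_path(cpu_sled: int, global_addr: int) -> List[str]:
    """List the components a request crosses in this toy model.

    Even memory on the CPU's own sled is reached through the FPGA and
    the switch; memory on another sled adds an optical hop (assumed).
    """
    target = resolve(global_addr)
    path = ["cpu", "fpga_bridge", "switch"]
    if target.sled != cpu_sled:
        path += ["photonic_link", "remote_switch"]
    path.append(f"memory_controller@sled{target.sled}")
    return path


if __name__ == "__main__":
    print(access_path(0, 4096))            # memory on the same sled
    print(access_path(0, SLED_BYTES + 8))  # memory on another sled
```

In this model the CPU uses the same addressing scheme for both cases. Only the number of hops changes, which is where the latency cost comes from.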

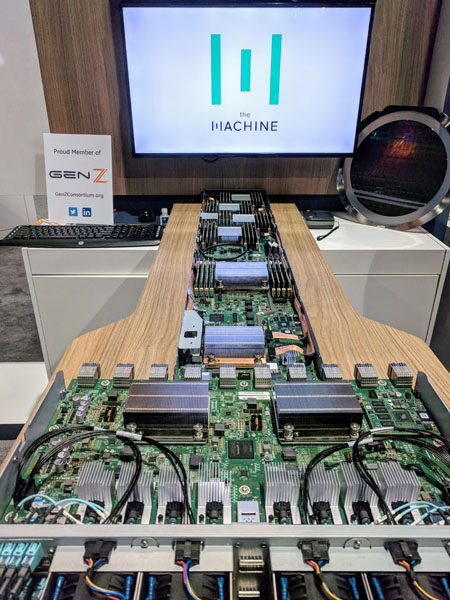

Here is a better view of the backplane that the compute nodes connect to. If you are getting the sense that these things are massive, then you are right. The row of eight heatsinks near the bottom of the assembly covers the photonics that connect to other components via light. The two big heatsinks cover the switches.

The HPE representative assured us that this is extraordinarily complex, and that the goal is to make it easy to plug different CPU architectures into the system.

Final Words

Whether this is the future of computing, or of in-memory computing, in ten years, only time will tell. Certainly, the brand recognition among the SC17 attendees was high. Furthermore, this is a working system being shown off at trade shows, which is a big deal. Moving data through FPGAs and switches to access memory brings latency and addressability hits and will require software changes, which is why HPE is so keen to sign up developers for the new platform.