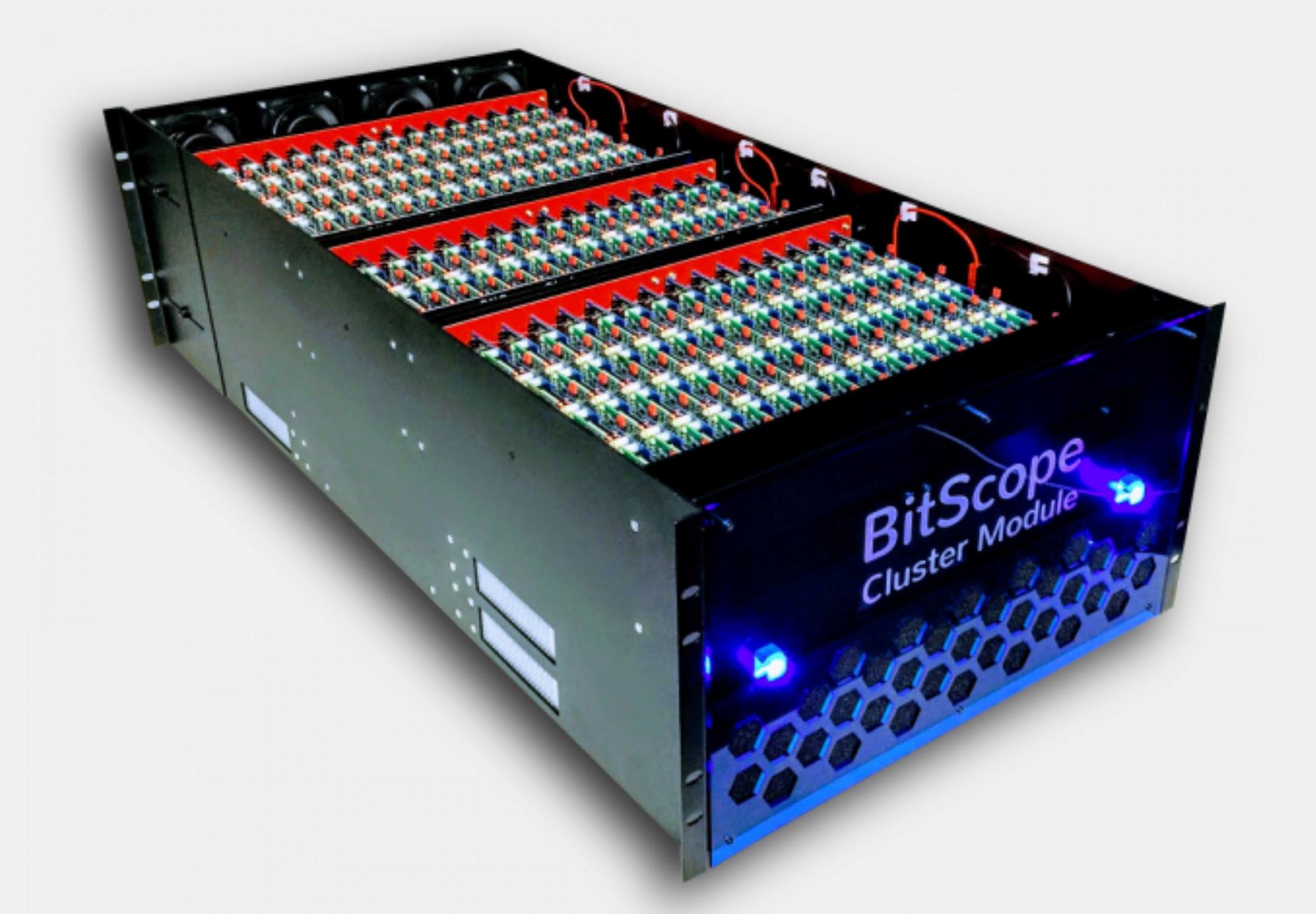

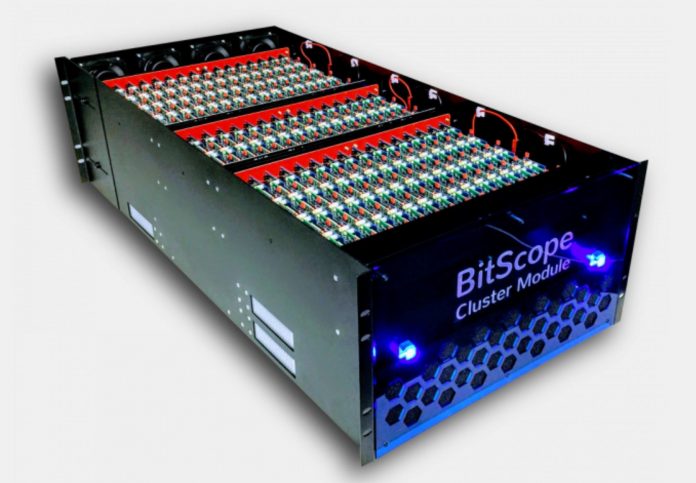

The Raspberry Pi 3 has been wildly successful. It is a $35 board with a quad-core 64-bit ARMv8 CPU and 1GB of RAM. While clusters of 4 to 10 Raspberry Pi 3 boards have been popular for some time, BitScope took this to another level. The BitScope Cluster Module fits 144 Raspberry Pi 3 boards (plus six spares) along with networking, power, and cooling in a 6U chassis. That means a 6kW 42U rack can hold over 1,000 nodes. The solution was designed for Los Alamos National Laboratory.
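As a rough check on the density claim, the arithmetic can be sketched in a few lines of Python. The function name and parameters are illustrative only, and the sketch assumes the entire 42U rack is filled with 6U chassis and that the 6kW figure is the total rack budget (so the per-node number includes networking and cooling overhead, not just the Pi itself).

```python
def rack_capacity(rack_units=42, chassis_units=6,
                  nodes_per_chassis=144, rack_power_w=6000):
    """Estimate chassis count, node count, and power budget per node.

    Assumes the rack holds only cluster chassis (no separate switches or PDUs
    taking up rack units) and that rack_power_w is the whole rack's budget.
    """
    chassis_per_rack = rack_units // chassis_units      # whole chassis only
    nodes = chassis_per_rack * nodes_per_chassis        # active nodes, spares excluded
    watts_per_node = rack_power_w / nodes               # budget, not measured draw
    return chassis_per_rack, nodes, watts_per_node


chassis, nodes, watts = rack_capacity()
print(f"{chassis} chassis, {nodes} nodes, about {watts:.2f} W budget per node")
```

With the figures quoted above, seven chassis fit in the rack, which is where the "over 1,000 nodes" number comes from.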

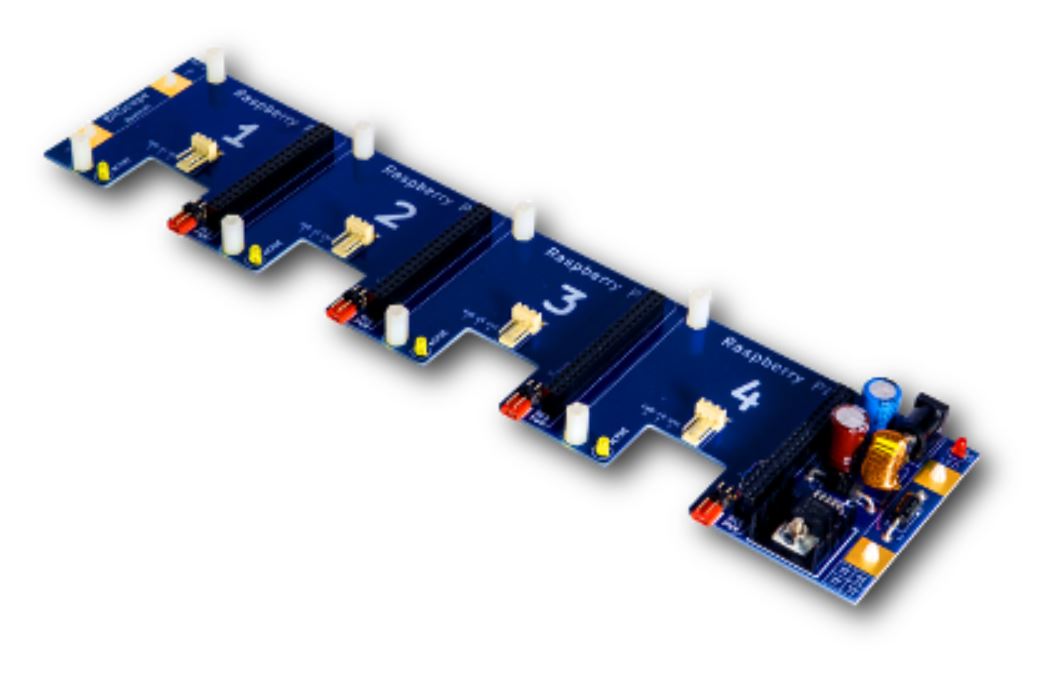

The clusters start with Raspberry Pi 3 boards (or other models) that plug into BitScope’s Quattro Pi boards. Each Quattro Pi handles power delivery to its four Raspberry Pi nodes. If you are building a small solution yourself, you will likely use the single power input on the board. If you are building a larger solution, you will likely use the plate connectors at either end of the Quattro Pi. In any Raspberry Pi cluster, cabling can easily become a pain, so solving this for 1,000-node-per-rack designs is important.

We are told that the BitScope Quattro Pi is $55-65 and can be purchased on its own if you want to build something similar.
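For a sense of what the boards alone might cost, here is a minimal sketch that combines the quoted Quattro Pi price range with a per-Pi price. The function name is hypothetical, and `pi_price` defaults to an assumed $35 list price for the Raspberry Pi 3; adjust it to whatever you actually pay. Networking, cooling, chassis, power supplies, and storage are not included.

```python
def board_cost_range(nodes, pi_price=35.0,
                     quattro_low=55.0, quattro_high=65.0,
                     nodes_per_quattro=4):
    """Return a (low, high) estimate in dollars for Pi boards plus Quattro Pi carriers.

    pi_price is an assumption, not a quoted figure; the Quattro Pi range is the
    $55-65 mentioned above. Everything other than the boards is excluded.
    """
    quattros = -(-nodes // nodes_per_quattro)   # ceiling division: carriers needed
    pis_total = nodes * pi_price
    low = pis_total + quattros * quattro_low
    high = pis_total + quattros * quattro_high
    return low, high


low, high = board_cost_range(144)
print(f"144 nodes: roughly ${low:,.0f} to ${high:,.0f} in boards")
```

Passing a different `nodes` value (for example, a four-node desktop build) gives a quick comparison against conventional hardware.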

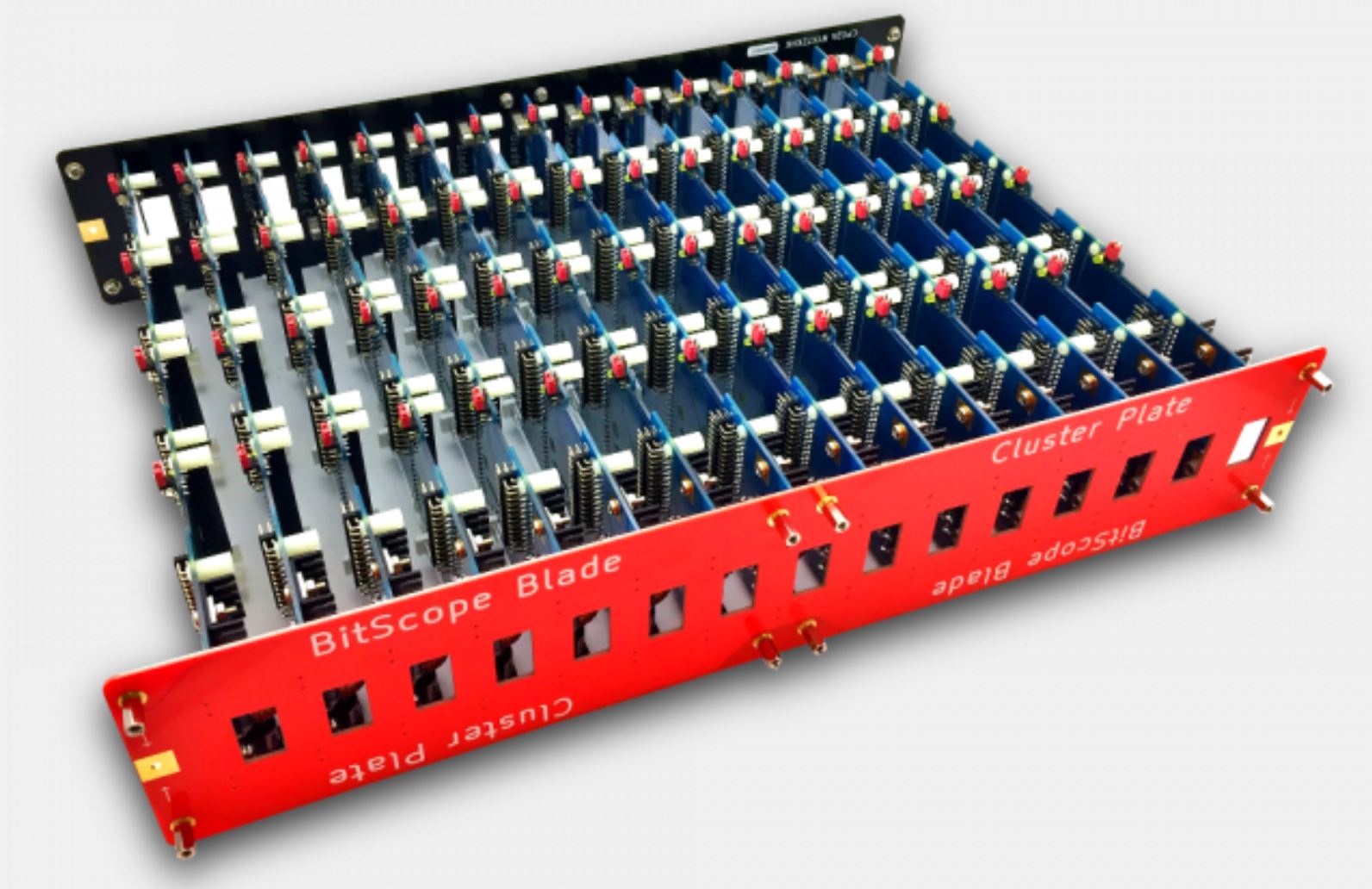

BitScope uses the Quattro Pi with power delivered via plates, which also provide structural support. 15 Quattro Pi boards connected via the Cluster Plate give you the BitScope Blade.

From here, one can add networking, cooling, and a chassis to create a 144-node cluster (excluding the six spares).

There are some applications (e.g. mobile games) where this type of architecture may be a viable hosting option. We have seen a few companies wire up boards such as the ODROID platforms via PoE in racks to create high-node-count dedicated instance hosting. Four low-clock-speed ARM cores and 1GB of RAM are not going to be a compute powerhouse. On the other hand, the solution is extremely low power, and at SC17 the Los Alamos team is using these Raspberry Pi clusters for cluster software development. The cost, power, and space requirements are much lower than with traditional cluster building blocks. We are told that this makes it viable to host a 1,000+ node development cluster.

Edit: we saw these live on the SC17 floor. They will also accept ODROID-C2 modules.

You can learn more about the solution here.

