We are at Hot Chips 2017 this week covering one of the more technical conferences in the space. AMD recently released its Vega10 architecture and discussed it in its GPU presentation at the conference. While much of the discussion around AMD Vega has focused on graphics performance, AMD presented additional information on features such as SR-IOV and compute support.

AMD Vega10 Virtualization and Compute

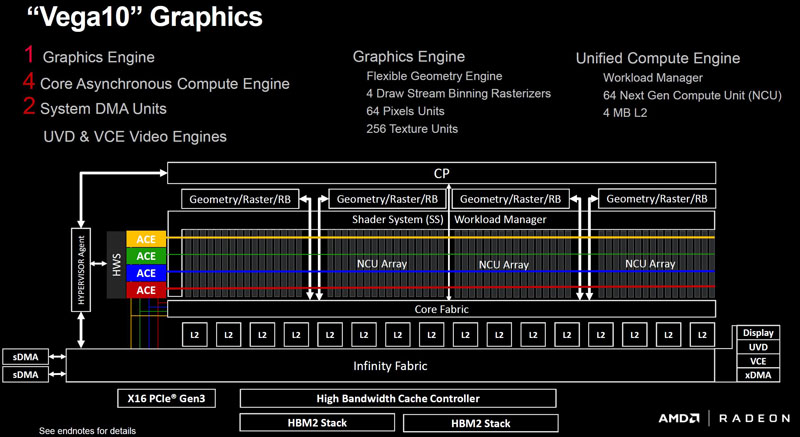

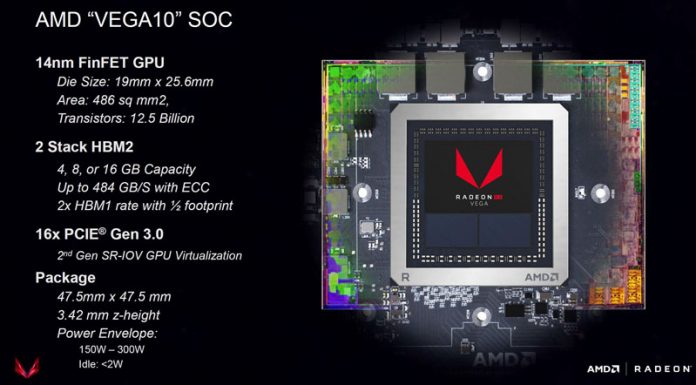

Here is the AMD Vega10 graphics overview. Suffice it to say, this is a significant step forward from Fiji.

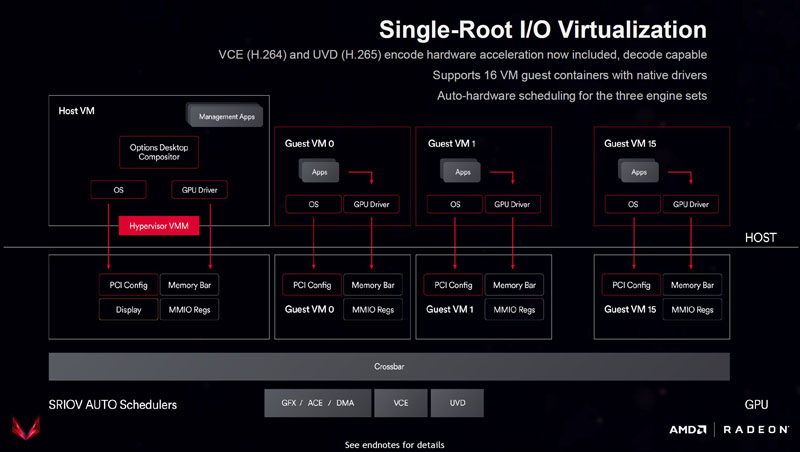

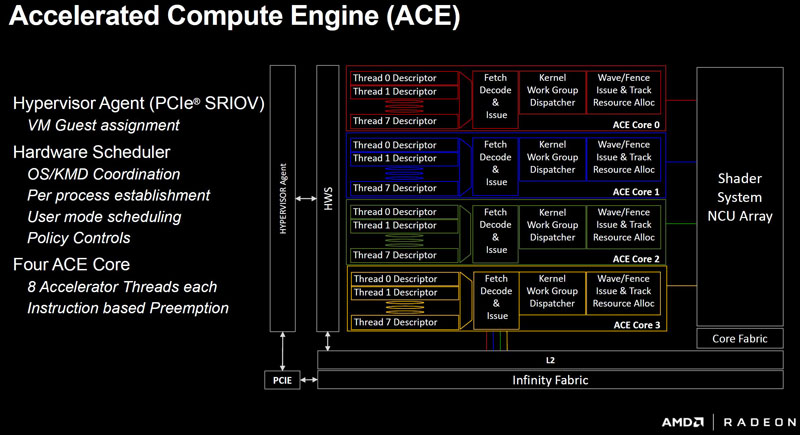

We wanted to focus on the SR-IOV virtualization features of the AMD Vega10 platform. AMD exposes the GPU as an SR-IOV device that can be shared by up to 16 VMs.

The key here is that AMD’s engine partitions compute and memory resources among VMs. It is also important to note that AMD does not have an expensive software licensing model like NVIDIA GRID. This market has seen several releases ahead of VMworld 2017, including the recently released NVIDIA Tesla P6.
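On a Linux host, SR-IOV virtual functions are typically requested through the kernel's generic PCI sysfs interface (the `sriov_totalvfs` and `sriov_numvfs` files). The sketch below assumes that interface; whether a given Vega10 card exposes its virtual functions this way depends on AMD's host driver, and the PCI address in the docstring is hypothetical. The helper name `enable_vfs` is likewise our own, not part of any AMD tool.

```python
from pathlib import Path


def enable_vfs(device_dir: str, count: int) -> int:
    """Request `count` SR-IOV virtual functions on one PCI physical function.

    device_dir is the sysfs directory of the physical function, for example the
    hypothetical /sys/bus/pci/devices/0000:03:00.0 (root is required on real hardware).
    """
    dev = Path(device_dir)
    # The device advertises how many virtual functions it can expose at most.
    total = int((dev / "sriov_totalvfs").read_text().strip())
    if not 0 <= count <= total:
        raise ValueError(f"requested {count} VFs, device supports at most {total}")

    numvfs = dev / "sriov_numvfs"
    current = int(numvfs.read_text().strip())
    if current == count:
        return count  # nothing to change

    # The kernel refuses to change a non-zero VF count in place, so reset to 0 first.
    if current != 0:
        numvfs.write_text("0")
    if count != 0:
        numvfs.write_text(str(count))
    return count
```

For example, `enable_vfs("/sys/bus/pci/devices/0000:03:00.0", 16)` (hypothetical address, run as root) would request the 16-VM maximum AMD quotes, after which each virtual function can be passed through to a guest. The reset-to-zero step mirrors the kernel returning an error when VFs are already enabled and a different count is written.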

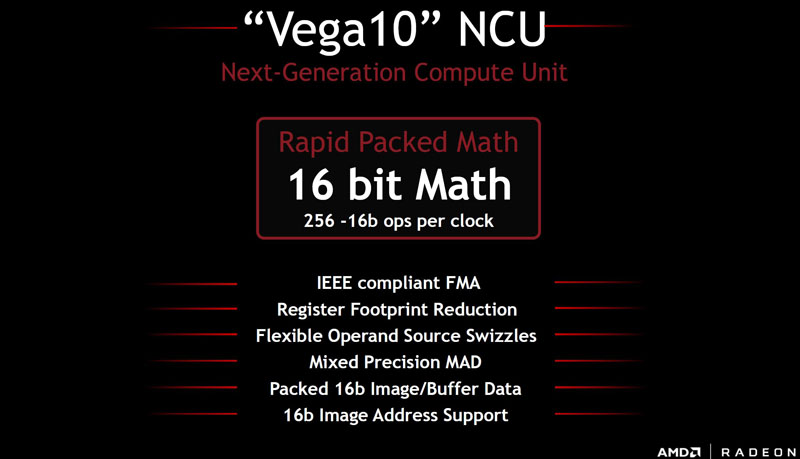

Beyond the virtualization features, Vega10 extends compute capabilities beyond what Fiji offered. AMD is also pushing its view of compute segmentation beyond what NVIDIA has been doing, offering scaling to 16-bit math on its Vega10 GPUs. This is an area where NVIDIA's GTX 1080 Ti, for example, does not perform as well.
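Vega10's 16-bit support (which AMD markets as Rapid Packed Math) works by packing two FP16 values into each 32-bit register lane so that one instruction operates on both. The sketch below models that idea in Python: `struct`'s `e` format packs two half-precision values into one 32-bit word, and a simple peak-throughput formula shows why packing doubles the FP16 rate. The 4096 stream processors correspond to a full Vega10 (64 compute units x 64 lanes); the 1.5 GHz clock is an assumed round number rather than a specific product's spec, and the helper names are hypothetical.

```python
import struct


def pack_fp16_pair(lo: float, hi: float) -> int:
    """Pack two IEEE 754 half-precision values into one 32-bit word (lo in bits 0-15)."""
    (word,) = struct.unpack("<I", struct.pack("<ee", lo, hi))
    return word


def unpack_fp16_pair(word: int) -> tuple:
    """Split a 32-bit word back into its two half-precision values (lo, hi)."""
    return struct.unpack("<ee", struct.pack("<I", word))


def peak_tflops(stream_processors: int, clock_ghz: float, values_per_lane: int = 1) -> float:
    """Theoretical peak throughput in TFLOPS.

    Assumes one fused multiply-add (counted as 2 FLOPs) per lane per clock;
    values_per_lane=2 models two packed FP16 operands per 32-bit lane.
    """
    return stream_processors * 2 * clock_ghz * values_per_lane / 1000.0
```

Under those assumptions, `peak_tflops(4096, 1.5)` gives about 12.3 TFLOPS of FP32 and `peak_tflops(4096, 1.5, values_per_lane=2)` about 24.6 TFLOPS of FP16. Sustained throughput in practice depends on clocks, memory bandwidth, and whether a workload can use packed 16-bit operations at all.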

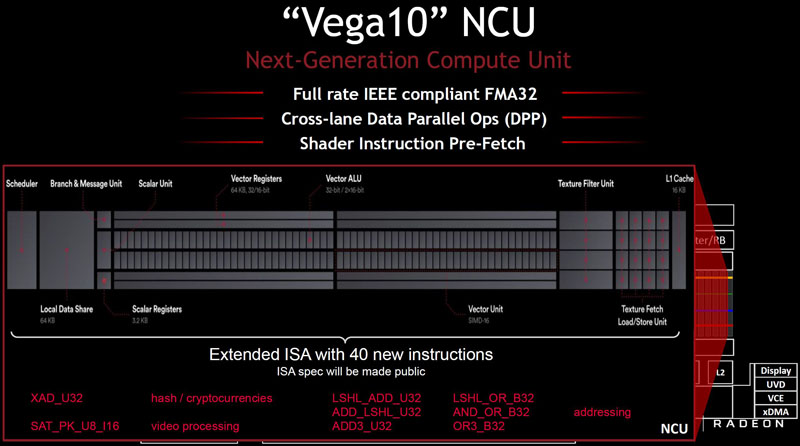

One of the more interesting NCU slides AMD presented at Hot Chips 2017 was this one:

Both in the talk and at the bottom of the slide, there is a nod to cryptocurrencies and hashing. It is clear AMD has accepted that its GPUs do well in this segment and is starting to embrace it formally in its marketing materials.

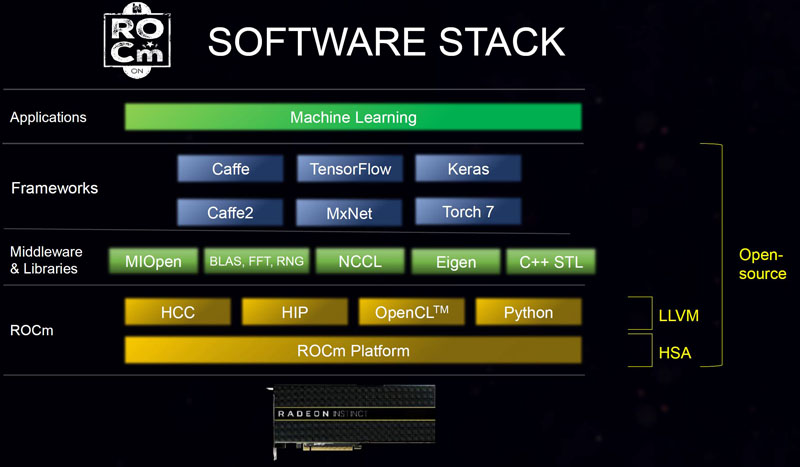

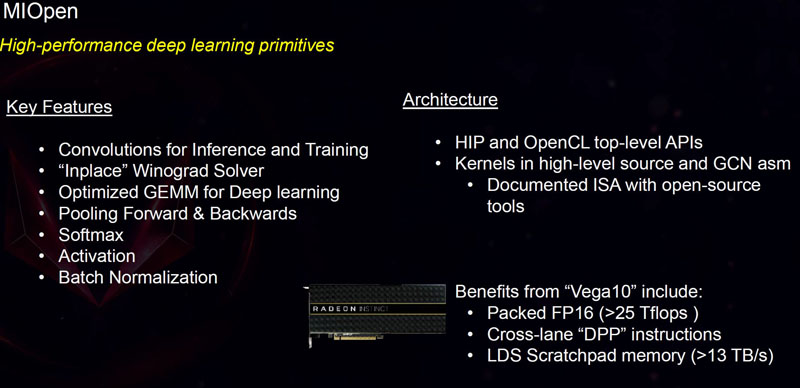

At the same time, both NVIDIA and Intel were pushing more than raw compute performance, discussing neural-network-specific enhancements in this generation. AMD is still developing its story, and that starts with 16-bit math support and its ROCm software stack.

We have covered ROCm and MIOpen before, but essentially these are AMD’s efforts to make AMD GPUs accessible to the deep learning community.

AMD faces an uphill battle in this space, but solid software support is a foundational element that AMD needs to get right.

Final Words

On a day when NVIDIA disclosed more about its now-shipping Tesla V100 and Intel discussed Xeon Phi Knights Mill for deep learning, AMD was relatively quiet on this front. While AMD did discuss ROCm and increased compute capacity, both NVIDIA and Intel are showing specific vector compute acceleration technologies. At the same time, AMD specifically mentioned cryptocurrency mining during the talk. Perhaps the most exciting discussion was around SR-IOV for virtualization. For those using GPUs for applications such as VDI, AMD has an interesting approach with a significantly friendlier licensing model than NVIDIA GRID.
