AMD Radeon Vega Frontier Edition Compute Related Benchmarks

The 16GB of HBM2 is a standout feature that makes the GPU well suited to workloads with large problem sizes. We wanted to look at its compute performance to see where it falls in our growing GPU compute performance database.

The next GPUs in our cycle are going to be the NVIDIA RTX and NVIDIA Tesla cards, but we wanted to get some of these numbers out before we discuss those.

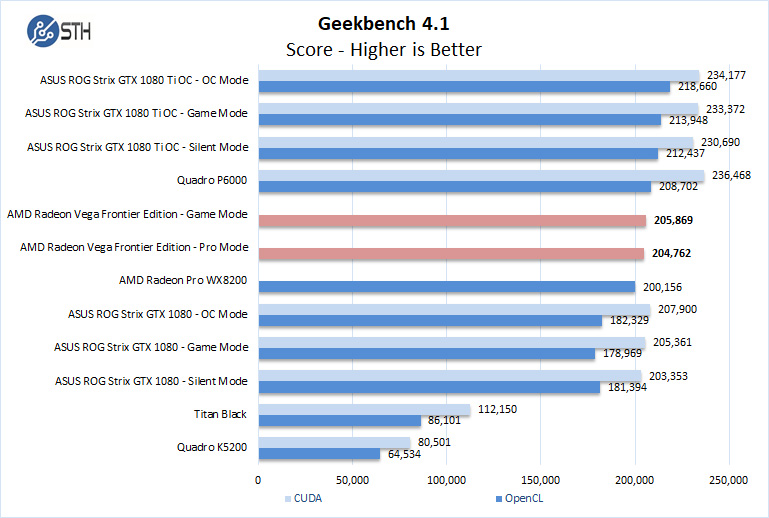

Geekbench 4

Geekbench 4 measures the compute performance of your GPU using workloads that range from image processing to computer vision to number crunching.

The Vega FE has no CUDA score, since CUDA runs only on NVIDIA GPUs, but it does post a good OpenCL score that is slightly higher than the AMD Radeon Pro WX 8200.

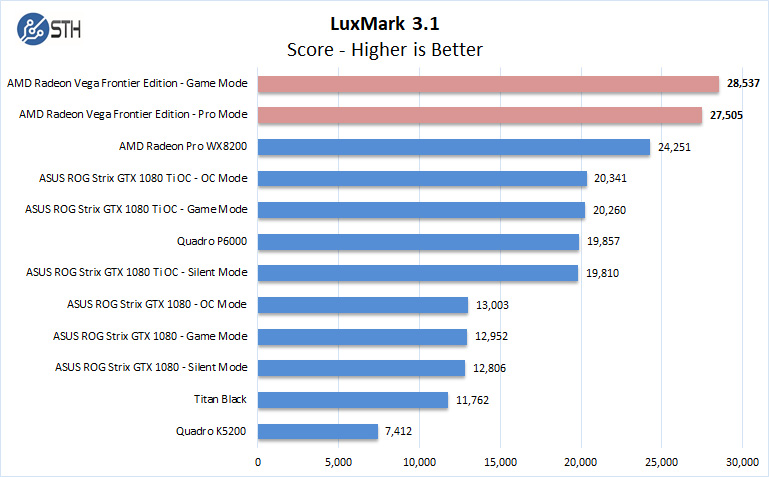

LuxMark

LuxMark is an OpenCL benchmark tool based on LuxRender.

The Vega FE takes the lead here with a considerable performance jump over the Radeon Pro WX 8200, easily posting the highest OpenCL-based LuxMark score in our comparison.

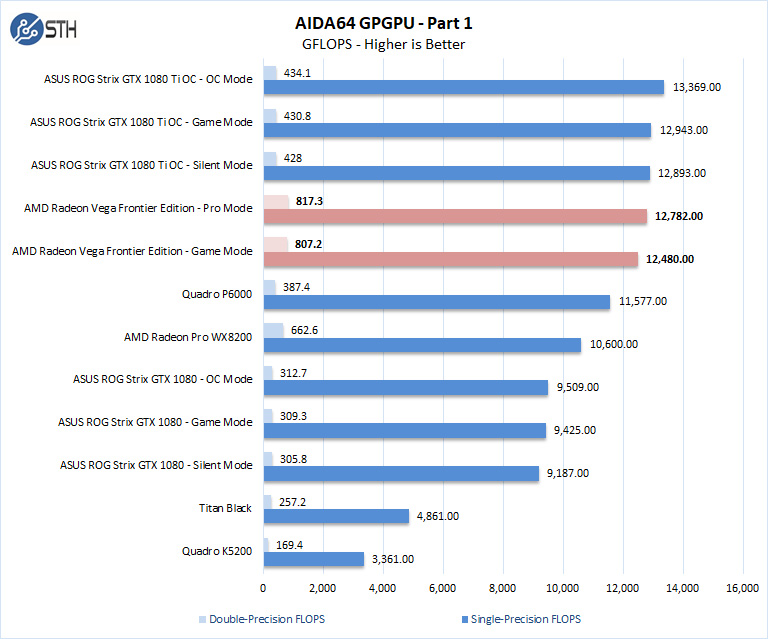

AIDA64 GPGPU

These benchmarks are designed to measure GPGPU computing performance via different OpenCL workloads.

Single-Precision FLOPS: Measures the classic MAD (Multiply-Addition) performance of the GPU, otherwise known as FLOPS (Floating-Point Operations Per Second), with single-precision (32-bit, “float”) floating-point data.

Double-Precision FLOPS: Measures the classic MAD (Multiply-Addition) performance of the GPU, otherwise known as FLOPS (Floating-Point Operations Per Second), with double-precision (64-bit, “double”) floating-point data.
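As a rough illustration of how a MAD-based FLOPS figure relates to hardware specifications, here is a minimal sketch that estimates theoretical peak throughput by counting each multiply-add as two floating-point operations. The function name `peak_gflops` is hypothetical. The inputs (4096 stream processors, a 1600 MHz peak clock, and a 1/16 double-precision rate) are assumptions taken from public spec sheets, not values measured in this review, and this is not how AIDA64 itself computes its results.

```python
def peak_gflops(shader_count: int, clock_mhz: float, rate: float = 1.0) -> float:
    """Theoretical peak GFLOPS, assuming one MAD per shader per clock.

    A MAD (multiply-add) is counted as 2 floating-point operations.
    `rate` scales the result for data types that run slower than FP32
    (for example, an assumed 1/16 rate for FP64).
    """
    flops_per_mad = 2  # one multiply + one add
    return shader_count * clock_mhz * 1e6 * flops_per_mad * rate / 1e9


# Assumed spec-sheet inputs for the Vega FE (not measurements from this review).
vega_fe_fp32 = peak_gflops(4096, 1600)
vega_fe_fp64 = peak_gflops(4096, 1600, rate=1 / 16)

print(f"FP32 peak: {vega_fe_fp32:.1f} GFLOPS")
print(f"FP64 peak: {vega_fe_fp64:.1f} GFLOPS")
```

With these assumed inputs the estimate comes out to roughly 13.1 TFLOPS single precision. Measured results will typically land below a theoretical peak like this, because the card does not always hold its peak clock under load.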

Here, single-precision GFLOPS are just below what we see from our GTX 1080 Ti and well above the AMD Radeon Pro WX 8200. Again, the AMD Radeon Vega Frontier Edition 16GB card is less expensive than the NVIDIA GTX and newer NVIDIA RTX cards that offer this level of performance. In terms of raw double-precision performance, the Vega FE delivers almost double the GTX 1080 Ti.

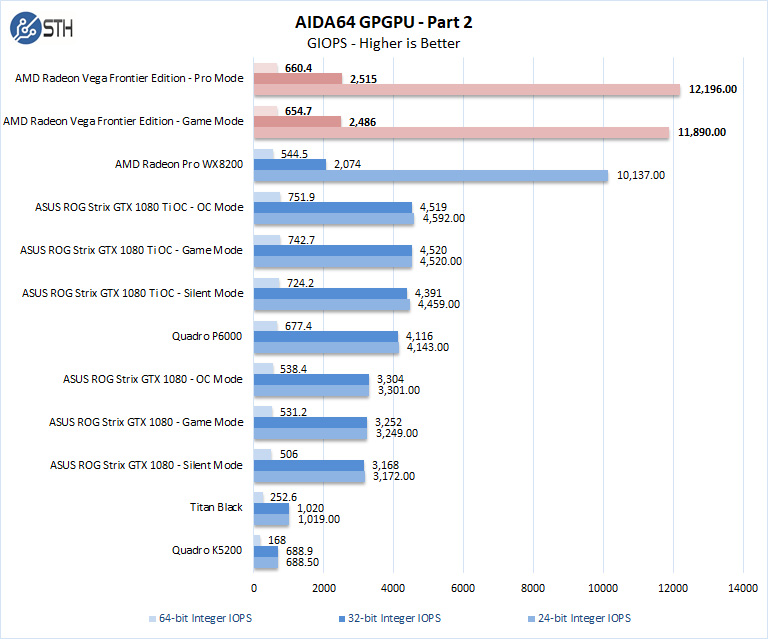

The next set of benchmarks from AIDA64 are focused on IOPS.

24-bit Integer IOPS: Measures the classic MAD (Multiply-Addition) performance of the GPU, otherwise known as IOPS (Integer Operations Per Second), with 24-bit integer (“int24”) data. This particular data type is defined in OpenCL on the basis that many GPUs are capable of executing int24 operations via their floating-point units.

32-bit Integer IOPS: Measures the classic MAD (Multiply-Addition) performance of the GPU, otherwise known as IOPS (Integer Operations Per Second), with 32-bit integer (“int”) data.

64-bit Integer IOPS: Measures the classic MAD (Multiply-Addition) performance of the GPU, otherwise known as IOPS (Integer Operations Per Second), with 64-bit integer (“long”) data. Most GPUs do not have dedicated execution resources for 64-bit integer operations, so instead, they emulate the 64-bit integer operations via existing 32-bit integer execution units.
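To show why emulated 64-bit integer math costs more than native 32-bit math, here is a minimal sketch of a 64-bit multiply built only from 32-bit pieces. The helper names (`mul_lo32`, `mul_hi32`, `mul64_via_32`) are hypothetical, and this illustrates the general technique only; it is not taken from any GPU driver or from AIDA64.

```python
MASK32 = 0xFFFFFFFF
MASK64 = 0xFFFFFFFFFFFFFFFF


def mul_lo32(a: int, b: int) -> int:
    """Low 32 bits of a 32x32-bit product (what a 32-bit multiplier returns)."""
    return (a * b) & MASK32


def mul_hi32(a: int, b: int) -> int:
    """High 32 bits of a 32x32-bit product (a separate 'multiply-high' op)."""
    return ((a * b) >> 32) & MASK32


def mul64_via_32(a: int, b: int) -> int:
    """Low 64 bits of a * b, computed from 32-bit halves only."""
    a_lo, a_hi = a & MASK32, (a >> 32) & MASK32
    b_lo, b_hi = b & MASK32, (b >> 32) & MASK32

    # Low word comes straight from the low halves.
    lo = mul_lo32(a_lo, b_lo)
    # High word: carry out of the low product plus the two cross terms.
    # The a_hi * b_hi term only affects bits 64 and up, so it is dropped.
    hi = (mul_hi32(a_lo, b_lo) + mul_lo32(a_lo, b_hi) + mul_lo32(a_hi, b_lo)) & MASK32
    return (hi << 32) | lo


a, b = 0x0123456789ABCDEF, 0x0FEDCBA987654321
print(mul64_via_32(a, b) == (a * b) & MASK64)
```

In this sketch a single 64-bit multiply expands into four 32-bit multiply operations plus additions, which is one way to see why 64-bit IOPS figures tend to sit well below 32-bit figures on hardware without dedicated 64-bit integer units.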

The Vega FE smashes the 24-bit integer IOPS test with impressive results. This is a case where your workload will dictate which solution is best for you.

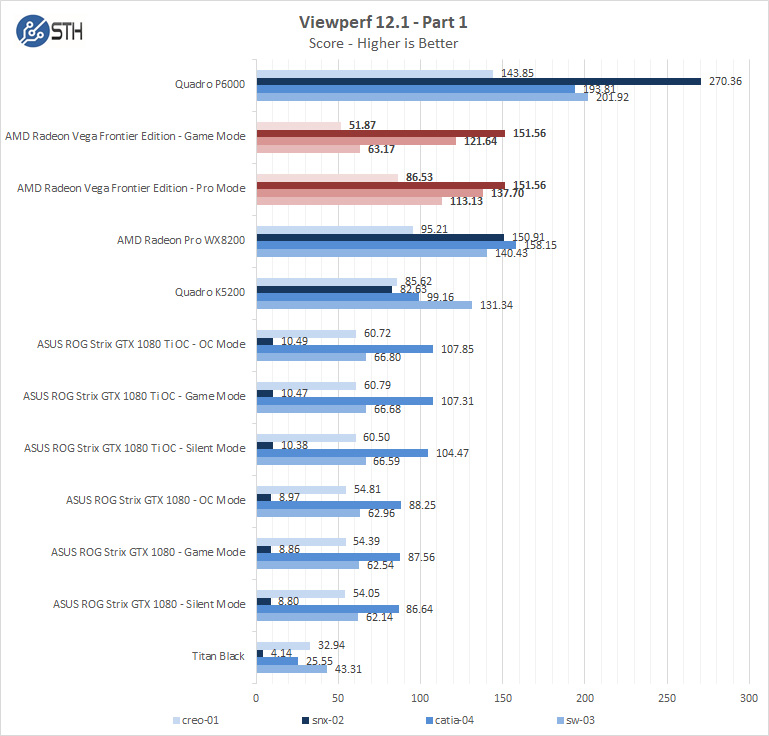

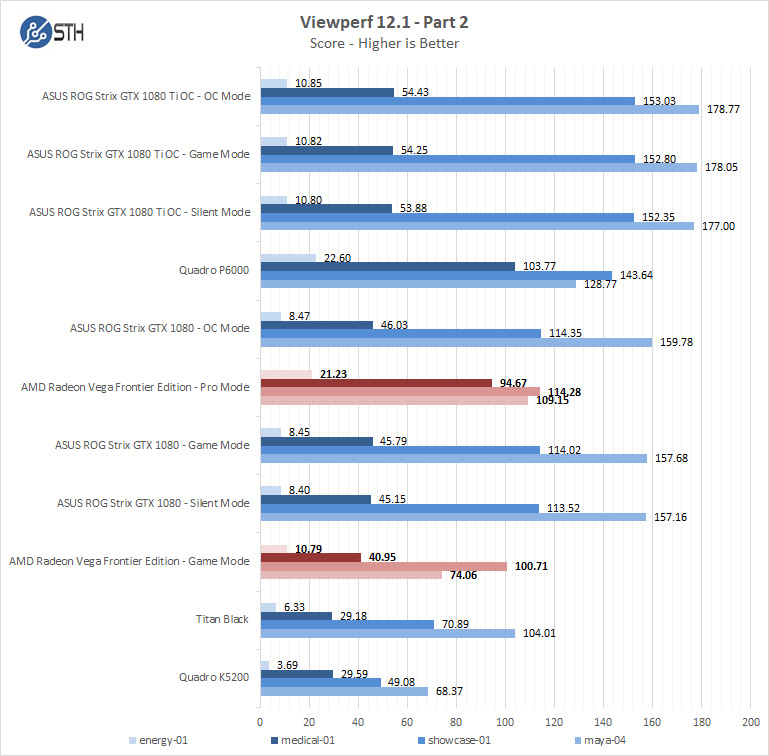

SPECviewperf 12.1

SPECviewperf 12 measures the 3D graphics performance of systems running under the OpenGL and DirectX application programming interfaces.

As you can see, the performance is solid, and on a current price/performance basis it is extremely competitive. When NVIDIA raised prices on its latest GeForce RTX series, the AMD Radeon Vega FE became a better value.

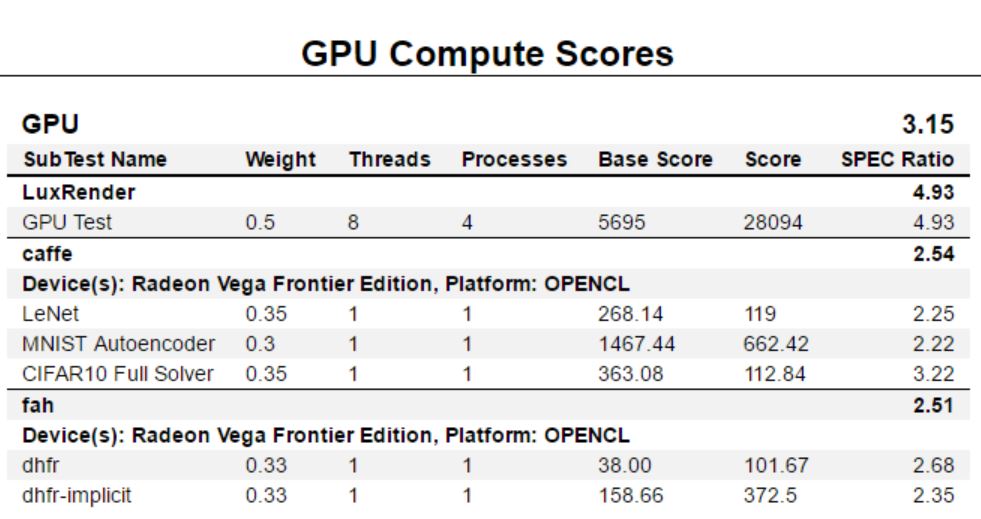

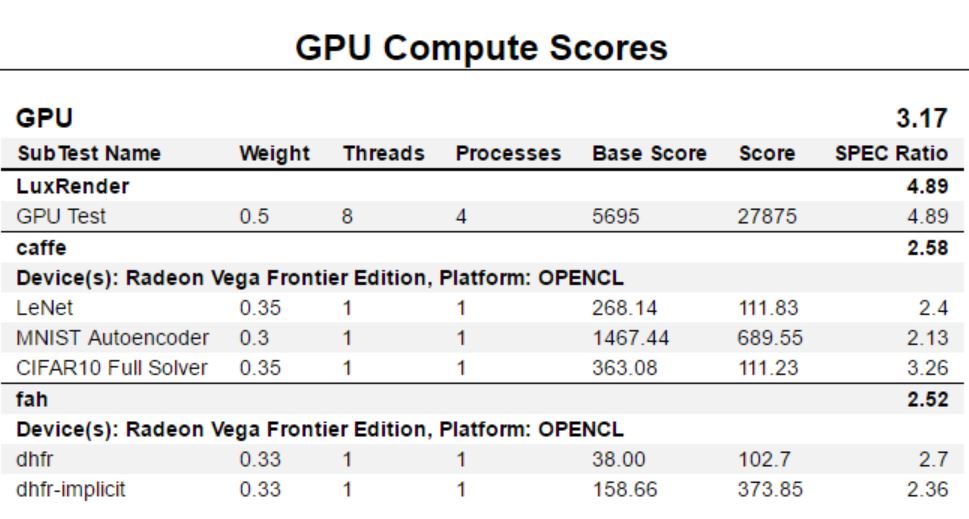

SPECworkstation 3

We have only just started using the new SPECworkstation 3 benchmark, so we do not yet have a full set of graphics cards to compare. Still, we wanted to provide results for comparison.

SPECworkstation 3 output using the Professional Drivers.

SPECworkstation 3 output using the Gaming Drivers.

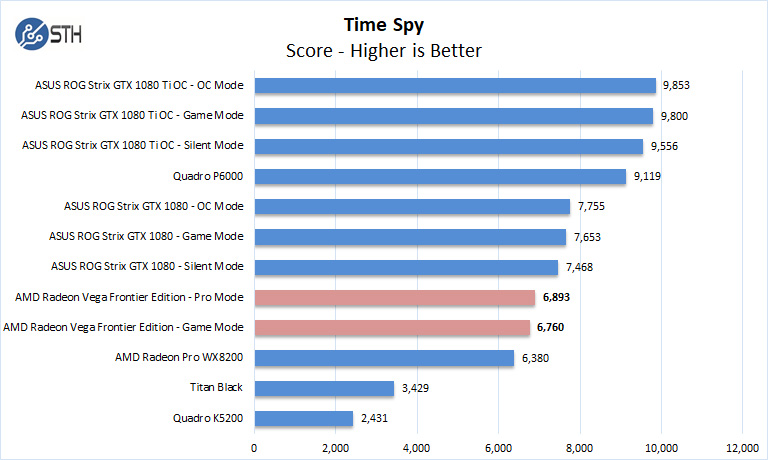

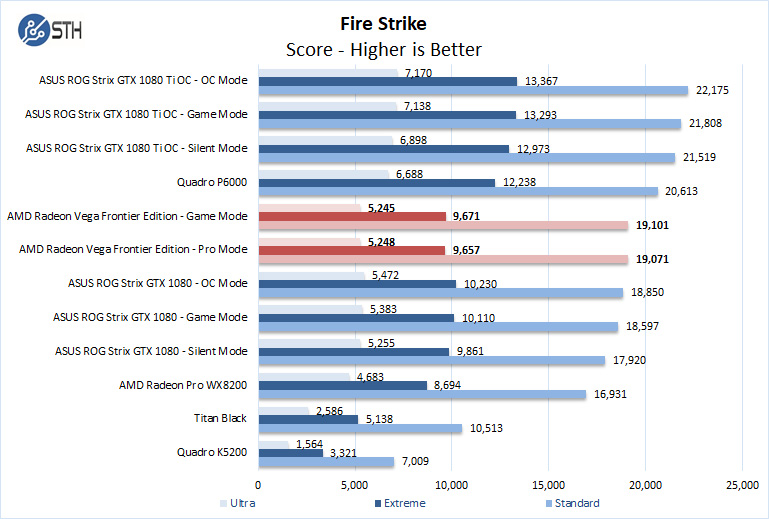

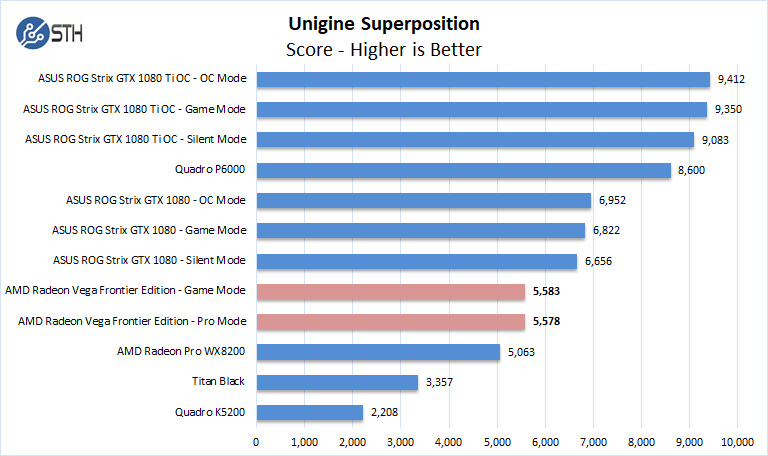

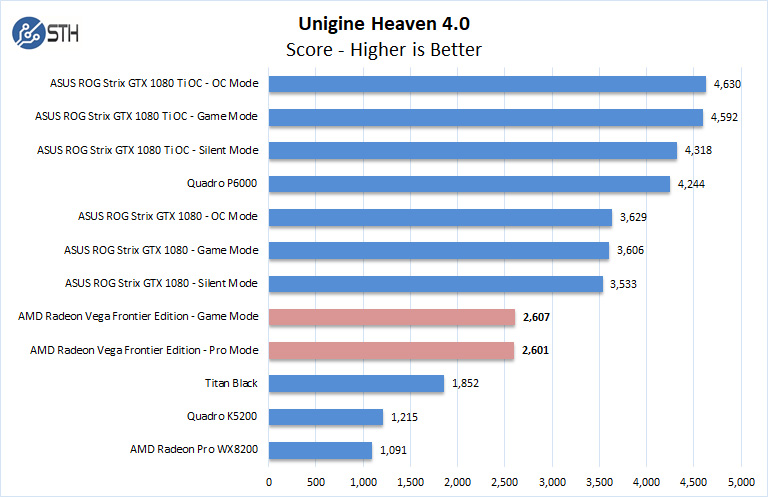

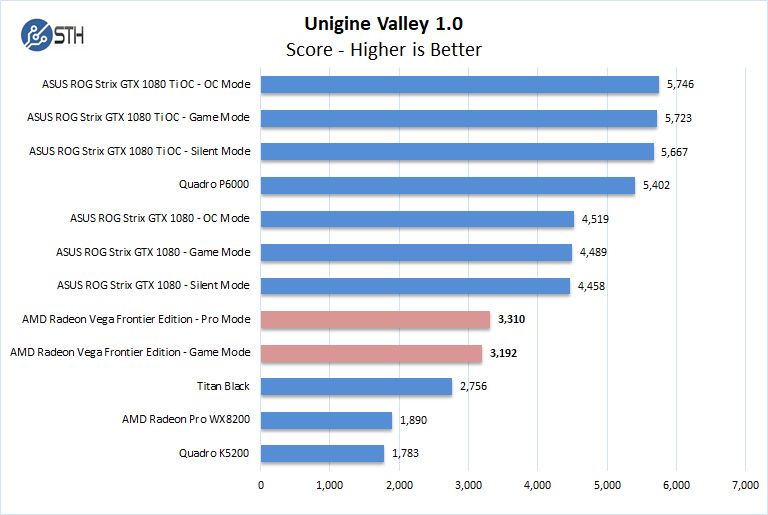

Graphics related benchmarks

Here we will run the Vega FE through all of our graphics-related benchmarks.

The Vega FE is not an actual gaming video card; it is targeted at professionals who wish to switch between Pro and Gaming modes to test applications and even grab some game time at the end of the day.

Next, we are going to look at power and temperature tests before giving our final thoughts.

You are pretty late with a review of this great GPU: “AMD Radeon Vega Frontier Edition 16GB Review by Igor Wallossek August 2, 2017 at 6:00 AM” at tomshardware.

Better late than never…

We have 3 of them, 1 standard and 2 watercooled.

For us the biggest features were 10-bit color overlay in OpenCL, 16 GB VRAM and HBCC (we use it for photo and video editing in Resolve and Adobe software).

Newegg had the liquid-cooled version for $599 recently. Do you know if there is any pass-through/SR-IOV capability for VMware?

I thought for a moment this was a first look at the 7nm Vega.

I’m starting to think Mi60 must be renamed Mia60 :-(

I don’t think this one has ECC memory support (as the review suggests). Also, these Vega cards really get a lot better if they’re tuned properly. The “graphics related benchmarks” here, for example, show the card is noticeably slower than the GTX 1080. But that’s most likely due to the heavy throttling you’ve had: if you looked at the clocks your Vega FE was running at during those tests, you’d see that they were nowhere near the stock 1600/945. In order to get decent results from these cards, they need to be undervolted and properly cooled. For example, my Vega FE card is rock-solid stable at 1500/1100 @ 1.0V. But the stock voltage is 1.2V, and at that voltage the air-cooled card is throttled by both thermal limits and power limits (it just won’t work at 1600 @ 1.2V with the stock TDP limit and air cooler, so it drops the clocks significantly). Ideally these cards need to be flashed with the LC version BIOS (which has a higher power limit) and cooled with full-cover water blocks (or a RAIJINTEK MORPHEUS at least). That’s when they actually start to perform well (equal to or even faster than the GTX 1080). Pascal cards are good out of the box and can’t really be pushed all that much, but Vega cards benefit greatly from tuning because they can gain 10-20% compared to stock.