We have been working hard to get AMD’s newest generation of cards ever since the AMD Radeon RX 6900 XT, 6800 XT, and 6800 launch over a quarter ago. We finally managed to purchase an AMD Radeon RX 6800 for our review. As such, we wanted to get some sense of its compute performance to balance out what we have seen from the few NVIDIA cards we have managed to purchase at this point.

As a quick aside, the AI/deep learning benchmarks we use did not work with this card, so there is more work to be done. At the same time, when we do get them working, we also need to ensure that they are a fair comparison to what we have for AMD. As a result, we are going to publish this review without that section and hopefully add numbers if/when everything works. With that out of the way, let us take a look at the AMD Radeon RX 6800.

AMD Radeon RX 6800 Overview

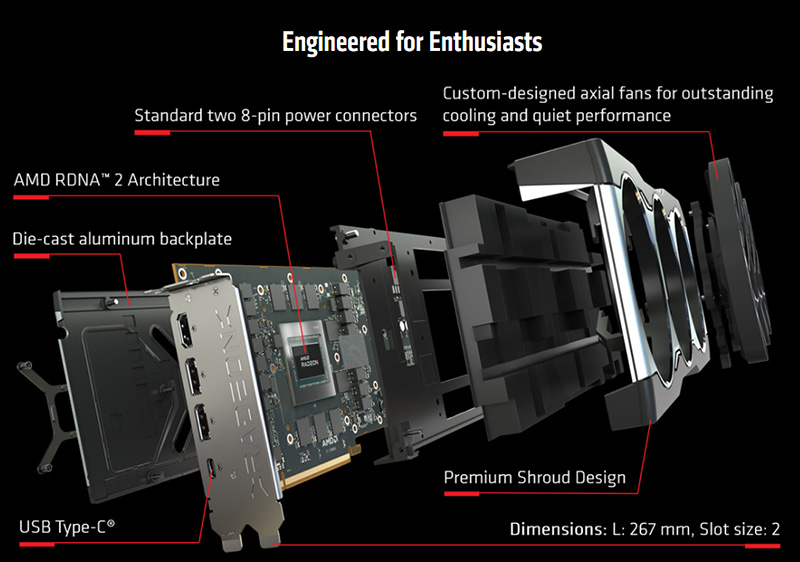

The AMD Radeon RX 6800 is a dual-slot GPU with a length of 10.51″.

The next graphic shows a break-out view of the AMD Radeon RX 6800. The video outputs are shown, consisting of 2x DisplayPort 1.4 ports with DSC, 1x HDMI 2.1 port with VRR and FRL, and a single USB Type-C port. NVIDIA removed the Type-C port on this generation, which makes its inclusion here notable.

Looking at the front of the AMD Radeon RX 6800, we can see the tri-fan setup, which covers the entire card. These large cooling fans should keep the RX 6800 cool, and we will see how cool in our testing.

Flipping the AMD Radeon RX 6800 over, we can see the die-cast aluminum backplate.

At the back end of the AMD Radeon RX 6800, we find 2x 8-pin power connectors. We like that AMD is not using a new standard here and is simply using the format we have been accustomed to.

Next, let us look at the AMD Radeon RX 6800 key specifications and continue our performance testing.

Will you include video encoding benchmarks? Given how popular Plex is in the home lab space, I would expect video encoding to be very relevant.

Wanna know the real dirty little secret with your new card, William? ROCm does not support RDNA GPUs. The last AMD consumer cards that ROCm supported were the Vega 56/64 and its 7nm die shrink, the VII.

You got a RX5000 or RX6000 series card or any version of APU, well you get to use OpenCL. Aren’t you lucky?

NVIDIA has supported CUDA on every GPU since at least the 8800GT. I can’t imagine how AMD expects to get ROCm out of the upcoming national labs when the only modern card it will work on is the MI100. Ever try to buy an MI100 (or MI50)? It is basically impossible to find an AMD reseller that will even condescend to speak to a small ISV.

I find all these reviews and release news for both AMD and Nvidia cards a joke at the moment. As an end user, I can never find any in stock, no matter how deep my pockets!!

I’m not just talking about STH

Why have the new reviews suddenly decided to skip the 3080 altogether?

Hi Ryuk – we still have not been able to grab one for testing.

Pure junk-selling, USA-warranty-evading AMD still owes me a video board, since I did not even get half a year of performance from the 3 very poorly designed and poorly QA’d Vega Frontier Editions.

AMD wants all the selfish benefits of consumer sales, but none of the mature responsibilities.

The replacement warranty boards from AMD were all junk. The first did not last more than 2 days without crashing (BSD); the second, after a wait of about a month, lasted just 1 day before crashing. I have the impression that, with no one watching AMD, they simply return “defective boards” as replacements and then wash their foul hands, honoring neither their word nor their customers.

Worse, this company then sought to abuse USA consumer protection laws by expecting their customers in the USA to send the defective product OUT OF COUNTRY, having no USA depot.

Park McGraw above ^^ had a faulty system (likely PSU or motherboard) that was making graphics cards either not work or actually break/fail, and then decided to blame AMD for it…

You didn’t get 3x faulty graphics cards in a row, you freaking imbecile… Basic silicon engineering science says that getting 3x GPU duds in a row is practically an impossibility (unless the product itself had a fundamental device killing flaw… of which Vega 10 did not). Aka, it was YOUR SYSTEM that was killing the cards!

And to emerth, I wouldn’t expect ROCm to EVER come to RDNA personally. API translation seriously isn’t easy, so keeping things limited to just two instruction sets (modern CUDA to GCN [+ CDNA which uses the GCN ISA & is basically just GCN w/ the “graphics” cut out]) likely cuts down the work & difficulty DRAMATICALLY!

Not to mention that even IF RDNA DID support ROCm, performance vs Nvidia would still be total crap because of the stark lack of raw FP compute! (AMD prioritized pixel pushing DRAMATICALLY over raw compute w/ RDNA 1 & 2 to get competitive gaming performance & perf/W, with only RDNA 3 starting to eveeeeer so slightly reverse course on that front).

AMD just doesn’t give a crap, whatsoever, about the hobbyist AI/machine learning market. Nvidia’s just got way, WAAAAAY too much dominance there for it to be worth AMD spending basically ANY time & effort to try and assault it. Especially when CDNA is absolutely beating the everliving SH!T out of Nvidia in the HPC & supercomputer market!