AMD EPYC has just been formally launched as a brand, and along with it we have exciting new details and an official release month. Here are some of the new details on the AMD EPYC platform. We were certainly excited to see that AMD’s messaging is consistent with where we expected the Naples platform to shine.

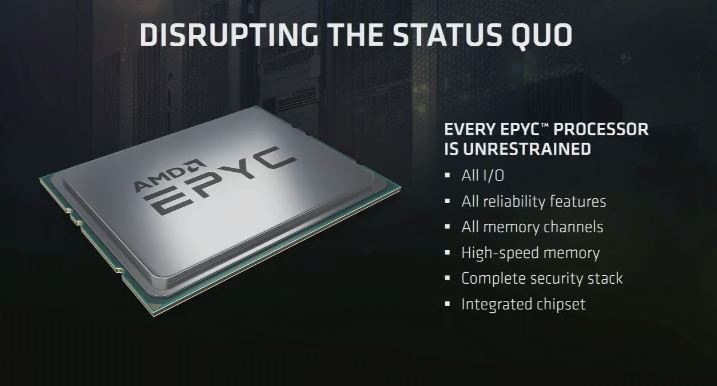

New AMD EPYC CPU Details: Unrestrained

AMD announced several key EPYC details. The first is a major one: every EPYC CPU is what the company calls “unrestrained.” That means every feature is found on every CPU.

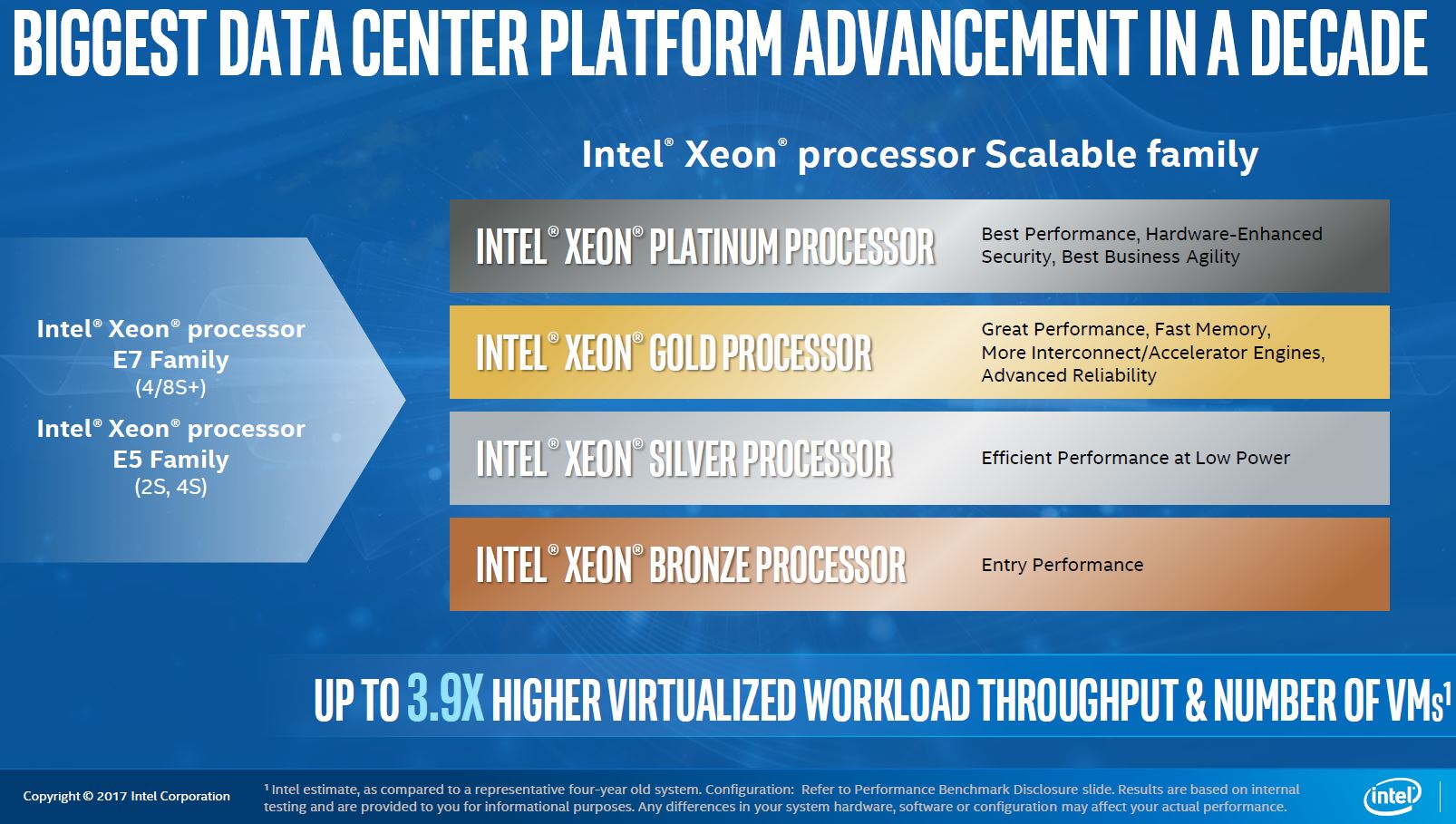

If you saw our Intel Xeon Scalable Processor Family coverage, you would be right to assume this “Unrestrained” language is a direct shot at Intel. Intel clearly outlined that its new family will reserve certain features for certain tiers (as it already does in the E5-2600 V3/V4 generation).

AMD frankly needs this posture to penetrate the market. AMD is also not directly targeting the 4-socket server market that the Intel Xeon Platinum CPUs will occupy, taking over from the Intel Xeon E7 V4 family (as we saw, for example, with the Dell PowerEdge R930).

New AMD EPYC CPU Details: Chip Details

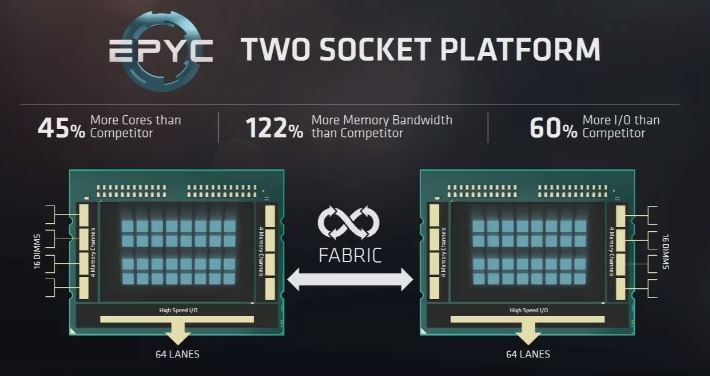

AMD EPYC is being compared to the current Intel Xeon E5-2600 V4 family. We can see the focus on I/O and memory bandwidth:

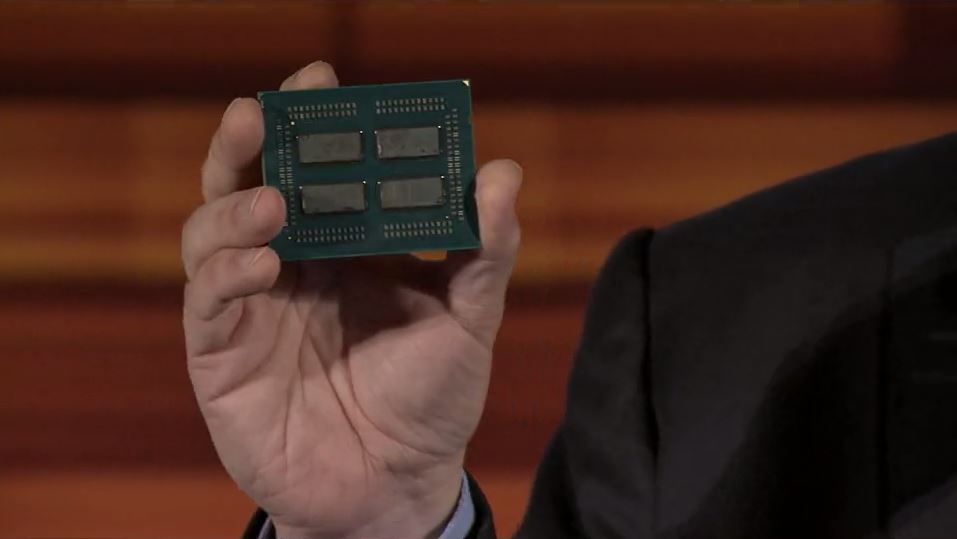

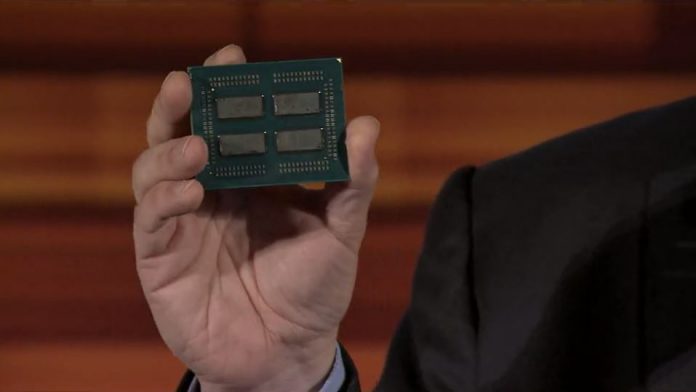

The major news today is that, as expected, each AMD EPYC package is essentially four 8-core dies. Two packages can go into a 2-socket system. As a result, one can think (very loosely) of an AMD EPYC package as four AMD Ryzen 8-core/16-thread dies.

That is a major oversimplification, but it did not take much imagination to see that Ryzen’s dual-channel memory x 4 = 8-channel memory on AMD EPYC. Likewise, 8 cores and 16 threads x 4 = 32 cores and 64 threads, and so forth. Of course, AMD will need to keep clock speeds lower, since cooling four 95W TDP dies on a single package would be extremely difficult.
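The loose “four Ryzen dies” arithmetic above can be written out as a short Python sketch. The `Die` type and the `package_totals`/`system_totals` helpers are hypothetical names for illustration. The per-die figures are the desktop Ryzen 7 numbers the paragraph uses, so the 380W result is only the naive sum that explains why EPYC clocks must come down, not an actual EPYC TDP. The NUMA count assumes each die and its two local memory channels form one node.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Die:
    """One Ryzen-style 8-core die (the article's loose analogy, not an AMD spec)."""
    cores: int = 8
    threads: int = 16
    memory_channels: int = 2
    tdp_watts: int = 95  # desktop Ryzen 7 TDP; EPYC will need lower clocks


def package_totals(die: Die, dies_per_package: int = 4) -> dict:
    """Naively scale one die up to a single EPYC package."""
    return {
        "cores": die.cores * dies_per_package,
        "threads": die.threads * dies_per_package,
        "memory_channels": die.memory_channels * dies_per_package,
        # Simple sum of die TDPs: the cooling problem, not a real EPYC rating
        "naive_tdp_watts": die.tdp_watts * dies_per_package,
        # Assumption: each die plus its local memory channels is one NUMA node
        "numa_nodes": dies_per_package,
    }


def system_totals(die: Die, sockets: int = 2, dies_per_package: int = 4) -> dict:
    """Multiply the per-package totals by the number of populated sockets."""
    per_package = package_totals(die, dies_per_package)
    return {key: value * sockets for key, value in per_package.items()}


if __name__ == "__main__":
    print(package_totals(Die()))
    print(system_totals(Die(), sockets=2))
```

For a 2-socket system the same multiplication gives 64 cores, 128 threads, 16 memory channels, and 8 NUMA nodes, which is why NUMA awareness matters so much on these platforms.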

New AMD EPYC CPU Details: 1 Socket Impact

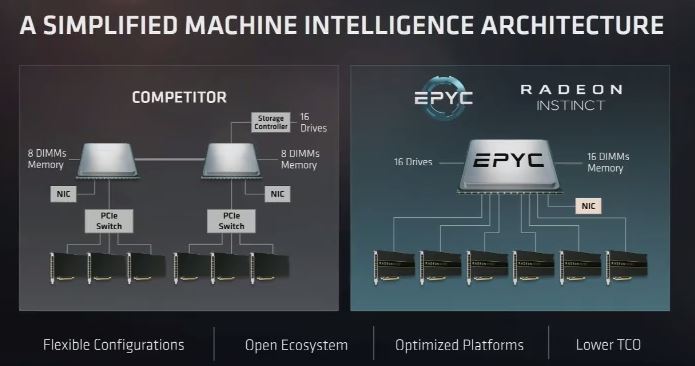

For us, one of the biggest impacts will be in the single-socket market, and AMD confirmed today that this was intentional. Because the Intel Xeon E5-2600 V4 generation is based loosely on the platform released with the V1 series, PCIe connectivity is severely constrained, at 40 lanes per CPU. The applications where we see EPYC being a major improvement are those that need PCIe lanes. GPU compute and NVMe storage servers are two that are pushing the bounds of PCIe today.

AMD chose to highlight the GPU compute use case, which should come as no surprise.

When we speak to deep learning/AI companies in Silicon Valley, 2-socket GPU compute configurations are the most popular since they provide up to 80 PCIe 3.0 lanes. Having two sockets, however, also means there are NUMA performance penalties that these architectures need to avoid today.

In the 8- and 10-GPU market, we are seeing PCIe switches used to put all of the GPUs under a single root complex, something the large AI firms are giddy over.
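A rough lane budget shows why PCIe switches show up in these builds. This Python sketch counts how many x16 GPUs can attach directly to CPU lanes with no switch. It assumes 40 PCIe 3.0 lanes per Xeon E5-2600 V4 socket and 128 lanes on a single-socket EPYC, and by default reserves nothing for NICs or storage; `direct_x16_gpus` is a hypothetical helper, not a vendor tool.

```python
XEON_E5_V4_LANES_PER_SOCKET = 40  # PCIe 3.0 lanes per Xeon E5-2600 V4 CPU
EPYC_1P_LANES = 128               # PCIe 3.0 lanes on a single-socket EPYC
X16 = 16                          # lanes consumed by one full-width GPU


def direct_x16_gpus(lanes_per_socket: int, sockets: int, reserved_per_socket: int = 0) -> int:
    """Count x16 GPUs that fit on CPU lanes without a PCIe switch.

    A device's lanes must all come from one CPU, so leftover lanes on
    different sockets cannot be combined into another x16 slot.
    """
    usable = max(lanes_per_socket - reserved_per_socket, 0)
    return (usable // X16) * sockets


if __name__ == "__main__":
    dual_xeon = direct_x16_gpus(XEON_E5_V4_LANES_PER_SOCKET, sockets=2)
    single_epyc = direct_x16_gpus(EPYC_1P_LANES, sockets=1)
    print(f"2x Xeon E5-2600 V4: {dual_xeon} direct x16 GPUs, split across 2 sockets")
    print(f"1x EPYC: {single_epyc} direct x16 GPUs on one socket")
```

Even though two Xeons offer 80 lanes in total, only four x16 GPUs attach directly (two per socket), which is why 8- and 10-GPU systems rely on switches. The EPYC figure counts lanes only; those lanes are spread across the package's four dies, so device locality still matters.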

Beyond the GPU compute side, we expect NVMe storage servers to be a major beneficiary of the additional PCIe lanes. Storage vendors will get much better performance from AMD EPYC than from current single-CPU Intel platforms. Looking forward, running one CPU per node instead of two will also yield significant power savings.
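The same lane arithmetic applies to NVMe storage servers, where each drive typically takes a PCIe x4 link. This sketch compares a single Xeon E5-2600 V4 (40 lanes) with a single EPYC (128 lanes). The x16 held back for a network adapter is a hypothetical assumption, and real boards spend further lanes on other devices; `direct_nvme_drives` is an illustrative name only.

```python
def direct_nvme_drives(cpu_lanes: int, nic_lanes: int = 16, lanes_per_drive: int = 4) -> int:
    """Count NVMe drives that attach directly to CPU lanes, with no PCIe switch.

    nic_lanes is a hypothetical reservation for a network adapter.
    """
    usable = max(cpu_lanes - nic_lanes, 0)
    return usable // lanes_per_drive


if __name__ == "__main__":
    for name, lanes in (("1x Xeon E5-2600 V4", 40), ("1x EPYC", 128)):
        print(f"{name}: {direct_nvme_drives(lanes)} direct x4 NVMe drives")
```

Under these assumptions one EPYC socket hosts 28 directly attached drives versus 6 for one Xeon, without the switches or second CPU that add cost and power.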

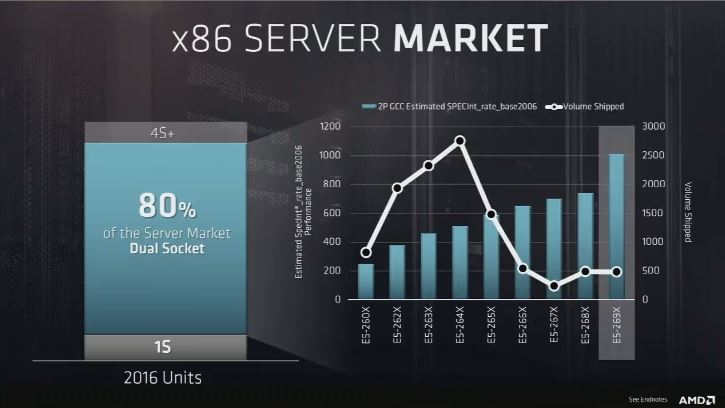

AMD is clearly targeting moving existing dual socket Intel customers to single socket AMD EPYC:

The point AMD is making with this chart is that the majority of all x86 servers today are running on Intel Xeon E5-265x or lower-spec CPUs. AMD believes it can convert many of these server buyers into single socket EPYC buyers.

AMD EPYC June Launch

AMD once again confirmed a June launch for EPYC.

Given we are in mid-May, this launch is just around the corner. Stay tuned for more coverage.

What about EPYC for workstations, with higher frequencies and UDIMM/RDIMM support?

Absolutely amazing. Intel is finished.

Come on, AMD, release the info on the PSP already. We need secure products, not just fast ones.

It seems like Xeon servers are hopelessly under-specced when it comes to PCIe lanes. I’m just wondering: will Skylake-X be able to address the shortfall, or is Intel doomed?

As I expected, we’ll get 4 NUMA nodes on a single chip, with all the problems that entails, giving you a 2-socket system that behaves like an 8-socket system. This is rather disappointing and puts the number of memory channels and PCIe lanes in perspective.

To Frede: EPYC for workstations is probably Threadripper.

To Nils: the days of homogeneous SMP are well behind us. NUMA is the way to go, unfortunately, so OSes need to be NUMA-aware anyway.

Patrick,

What’s the word on CPU clocks, base and boost, for the 32-core part?

Hi John, we are under embargo but will be covering the launch.

What I can suggest is that if it were essentially four 95W TDP 8-core Ryzen 7 dies, it would run too hot for a mainstream CPU.

Any word on ESXi 6.5 compatibility?