AMD EPYC 7552 Market Positioning

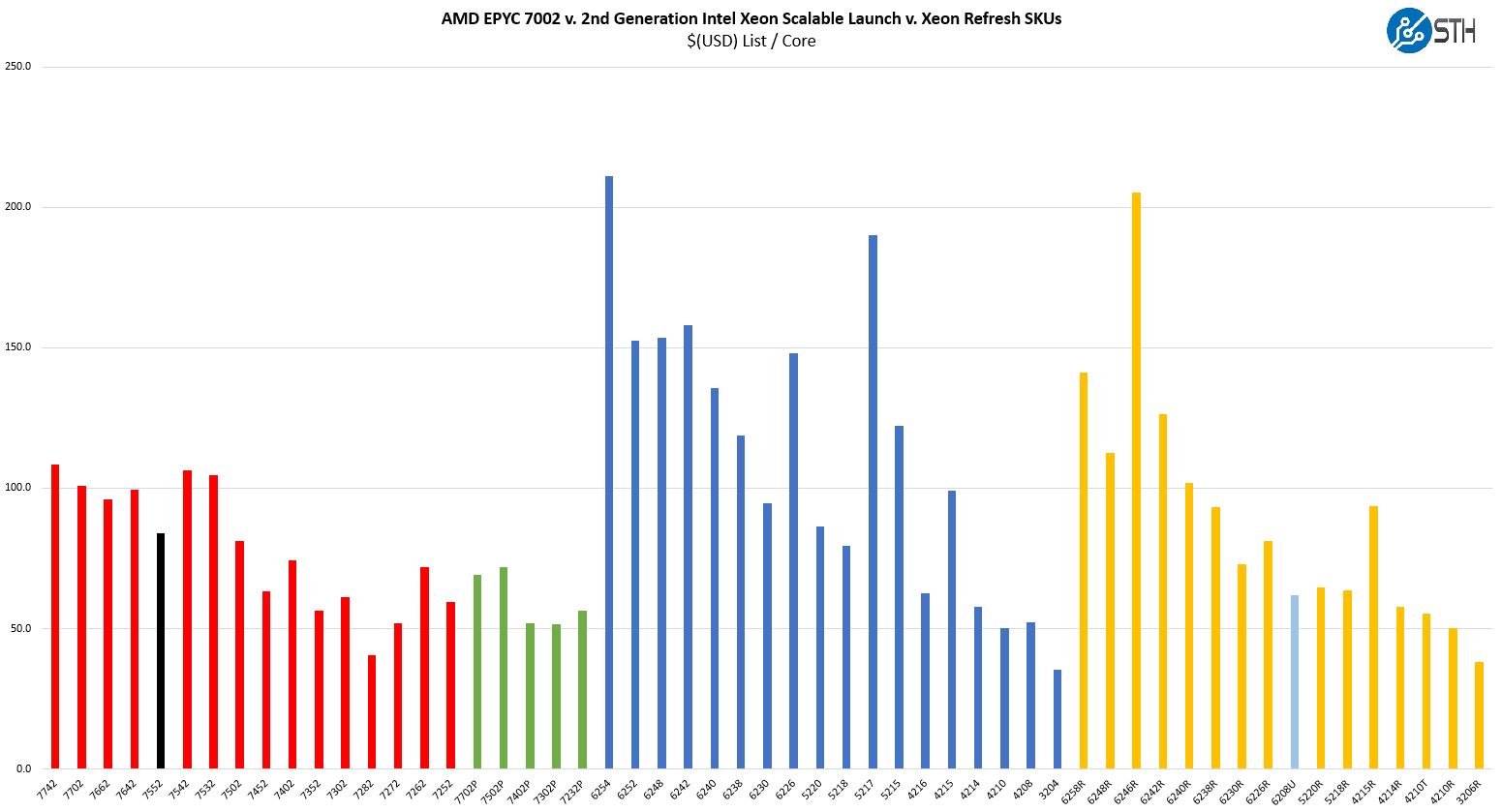

These chips are not released in a vacuum. Instead, they have competition on both the Intel and AMD sides. When you purchase a server and select a CPU, it is important to see the value of a platform versus its competitors. Here is a look at the overall competitive landscape in the industry:

We are going to first discuss AMD v. AMD competition, then look to Intel Xeon.

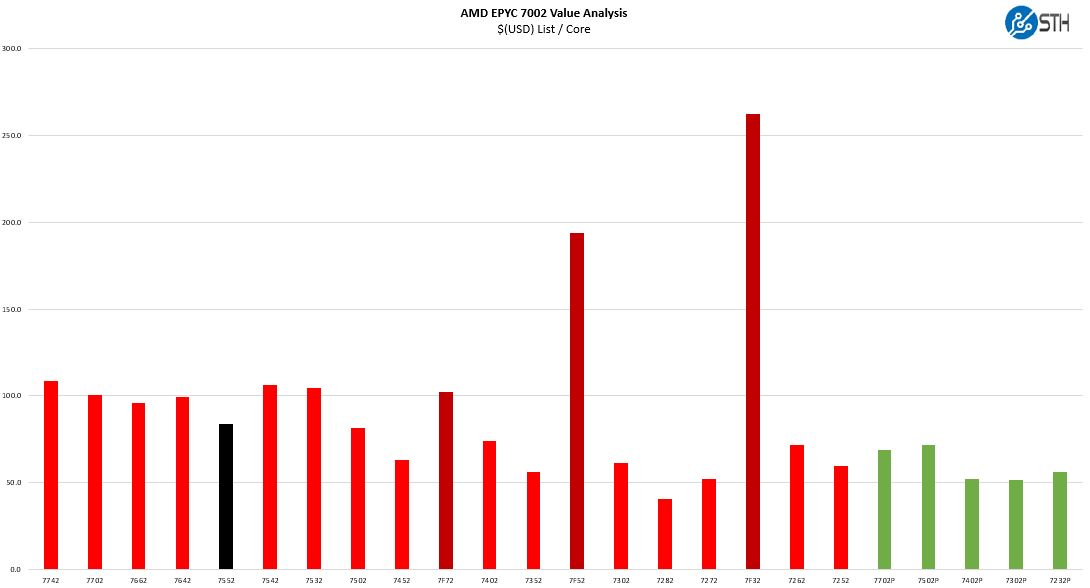

AMD EPYC 7552 v. AMD EPYC

With the addition of the Frequency Optimized EPYC parts, this chart has changed drastically.

While most parts are in the $100-110/ core range, even at the high-end, the frequency optimized parts cost much more on a per-core basis.

Even with that, one can see that the EPYC 7552, at just under $84/ core, is a relative bargain in this range. The higher-end AMD EPYC 7642 and lower-end EPYC 7502 parts are closer to $100/ core.
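The per-core figure is simply list price divided by core count. Here is a minimal sketch of that arithmetic. The $4,025 list price is an assumption used for illustration and is not stated in this article, so substitute current pricing before relying on the result.

```python
def price_per_core(list_price_usd: float, cores: int) -> float:
    """Return list price divided by core count, in USD per core."""
    if cores <= 0:
        raise ValueError("cores must be positive")
    return list_price_usd / cores


# Assumed list price for illustration only; not taken from this article.
EPYC_7552_PRICE_USD = 4025.0
EPYC_7552_CORES = 48

epyc_7552_per_core = price_per_core(EPYC_7552_PRICE_USD, EPYC_7552_CORES)
print(f"EPYC 7552: ${epyc_7552_per_core:.2f}/core")
```

With that assumed price, the result lands just under $84/ core, in line with the figure above.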

If one wants more nodes to balance PCIe and storage connectivity instead of using high consolidation ratios, then there is an interesting aspect to the AMD EPYC 7002 line. As of now, 48-core parts do not have a single-socket only “P” variant. The EPYC 7702P is a 64-core part for about 10% more, but there is not a 48-core P part. As a result, the EPYC 7552 may be the value-oriented option for doing 48 cores in a single socket as well.
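To put the "about 10% more" figure in per-core terms, here is a rough sketch that compares the two parts without needing absolute prices. The function name is ours, and the 10% premium is the approximate figure quoted above.

```python
def relative_per_core_cost(price_premium: float, base_cores: int, alt_cores: int) -> float:
    """Per-core cost of the alternative part relative to the base part.

    A result of 1.0 means equal per-core cost; below 1.0 means the
    alternative part is cheaper per core.
    """
    return (1.0 + price_premium) * base_cores / alt_cores


# EPYC 7702P (64 cores, ~10% higher price) relative to EPYC 7552 (48 cores).
ratio_7702p_vs_7552 = relative_per_core_cost(0.10, base_cores=48, alt_cores=64)
print(f"7702P per-core cost relative to 7552: {ratio_7702p_vs_7552:.3f}")
```

Under that assumption, the 64-core part works out to roughly 0.825 of the 48-core part's per-core cost, which is why the single-socket buyer has a real choice to make here.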

AMD EPYC 7552 v. Intel

Since the EPYC 7552 was launched in 2019, Intel has made a strong competitive move in early 2020 with the Big 2nd Gen Intel Xeon Scalable Refresh, altering the competitive landscape. Intel effectively dropped prices across most of its mainstream CPUs.

The challenge here is defining the Intel competition even with the Xeon Refresh. Intel still has a maximum of 28 cores per socket, which carried over to the 3rd Generation Intel Xeon Scalable Cooper Lake generation. Still, that is nowhere close to the 48 cores AMD is offering for around the same price as the Xeon Gold 6258R at 28 cores. While power and TDP limits mean we do not necessarily get perfect performance scaling, we saw in our benchmarks that the chips are much faster than the Xeon Platinum 8280, which is a proxy for the Gold 6258R. The numbers for those two Intel parts are close in our upcoming review.

The other option is to look at the Intel Xeon Gold 5220R which has 24 lower power cores, and some de-featuring compared to the higher-end chips. A pair of those chips sells for around $3000-3100 so one could argue that those two Intel chips are more of a competitor to the EPYC 7552. There may be chip savings, but at a server and system-level, the AMD EPYC 7552 is likely to be less expensive since there is effectively a 2:1 consolidation ratio. Even if purchase prices were similar, that consolidation ratio will drive operational savings for lower TCO.
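The consolidation argument can be sketched with simple socket counting. This is a core-count comparison only. It says nothing about per-core performance, and the 960-core target is a hypothetical cluster size chosen for illustration.

```python
import math


def sockets_needed(target_cores: int, cores_per_socket: int) -> int:
    """Smallest number of sockets that provides at least target_cores."""
    return math.ceil(target_cores / cores_per_socket)


TARGET_CORES = 960  # hypothetical cluster size, not from the article

epyc_7552_sockets = sockets_needed(TARGET_CORES, 48)
xeon_5220r_sockets = sockets_needed(TARGET_CORES, 24)

# A pair of Gold 5220R chips at the $3000-3100 quoted above covers 2 x 24 cores.
pair_per_core_low = 3000 / 48
pair_per_core_high = 3100 / 48

print(f"Sockets for {TARGET_CORES} cores: EPYC 7552 = {epyc_7552_sockets}, "
      f"Gold 5220R = {xeon_5220r_sockets}")
print(f"Gold 5220R pair: ${pair_per_core_low:.2f}-{pair_per_core_high:.2f}/core")
```

The chip-level cost per core favors the Intel pair, but twice as many sockets means more motherboards, memory channels to populate, and rack space, which is where the system-level savings come from.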

AMD offers a larger memory footprint with up to 4TB in 8-channel DDR4-3200 versus 1TB in 6-channel DDR4-2933. AMD has more PCIe I/O with 64, 96, or 128 PCIe Gen4 lanes per socket compared to Intel’s 48x PCIe Gen3 lanes. Intel has features such as AVX-512 and VNNI instructions (DL Boost) that AMD does not have, with VNNI being new for 2nd Generation Intel Xeon Scalable. Perhaps the biggest feature Intel has is Optane DCPMM support. We should note here that DCPMM support is limited by memory capacity since the Gold 6258R is not an “L” SKU. Still, if one wants fast storage, DCPMM is a significant option that AMD does not have. If you are not using small DCPMM footprints, nor the new instructions, then AMD is extremely competitive.
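The channel counts and memory speeds above translate into theoretical peak bandwidth per socket. Here is a minimal sketch, assuming a standard 64-bit (8-byte) DDR4 channel. These are theoretical peaks, not measured results, and real-world throughput will be lower.

```python
def peak_memory_bandwidth_gbs(channels: int, mega_transfers_per_s: int,
                              bus_bytes: int = 8) -> float:
    """Theoretical peak bandwidth in GB/s for a given DDR configuration.

    bus_bytes defaults to 8 because a DDR4 channel is 64 bits wide.
    """
    return channels * mega_transfers_per_s * bus_bytes / 1000.0


amd_bandwidth = peak_memory_bandwidth_gbs(channels=8, mega_transfers_per_s=3200)
intel_bandwidth = peak_memory_bandwidth_gbs(channels=6, mega_transfers_per_s=2933)

print(f"AMD   8ch DDR4-3200: {amd_bandwidth:.1f} GB/s")
print(f"Intel 6ch DDR4-2933: {intel_bandwidth:.1f} GB/s")
print(f"Ratio: {amd_bandwidth / intel_bandwidth:.2f}x")
```

On paper, that puts the AMD platform at roughly 1.45x the per-socket memory bandwidth of the Intel platform.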

Final Words

The AMD EPYC 7552 is unique for a number of reasons. While Intel may argue its 28-core processors are similar in compute performance to AMD’s 32-core processors, at 48 cores the gap is much wider. AMD does not have a P-series 48-core part at this time, which makes this part almost the de facto “P” part if you wanted a single-socket 48-core design. In that case, many will likely choose a 10% premium for the EPYC 7702P instead.

Pricing on the EPYC 7552 is excellent at under $84/ core list price, putting it close to what we see in the middle to low part of the Xeon Gold 62xxR range. That pricing reflects the fact that while the chips have more cores, they are not necessarily running at high clock speeds, which means the chips are more suitable for software not licensed on a per-core basis.

Looking ahead, another way to view the EPYC 7552 is that it has almost a year of building a PCIe Gen4 system ecosystem. Vendors have been rolling out new form factors and the ecosystem has adopted the EPYC 7002 series as the go-to platform for PCIe Gen4. These systems have been out for some time which means they are gaining hours of time running and being further tuned and hardened. As the next-generation Intel Ice Lake Xeons are further pushed back into late 2020 (but realistically 2021), an IT buyer may look to the EPYC 7552 and other “Rome” platforms as something that can be qualified today, and then updated easily to handle next-gen EPYC 7003 “Milan” chips later this year, likely before Ice Lake arrives. Due to Intel’s 10nm delays, AMD is now seeing some benefit from being first to market by well over a year with features such as their PCIe Gen4 platforms.

For real datacenter work, bang per watt of power consumption is also an important metric.