Broadcom has a new 51.2Tbps switch chip. The Broadcom Tomahawk StrataXGS 5 is the company’s newest merchant silicon solution for switches. For next-generation networks, this new switch chip can provide up to 64x 800GbE, 128x 400GbE, 256x 200GbE, or 512x 100GbE links. Realistically, these switch chips are designed for networks that have moved beyond the 100GbE generation of switching.
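As a quick sanity check on those port counts, here is a minimal sketch that divides the quoted 51.2Tbps aggregate capacity by each port speed. The function name `port_count` is just an illustrative helper, and the calculation assumes every port runs at full line rate with no oversubscription.

```python
# Aggregate switching capacity quoted in the article, in Gbps.
TOTAL_CAPACITY_GBPS = 51_200


def port_count(port_speed_gbps: int, capacity_gbps: int = TOTAL_CAPACITY_GBPS) -> int:
    """Return how many full-rate ports of the given speed fit in the capacity."""
    if port_speed_gbps <= 0:
        raise ValueError("port speed must be positive")
    return capacity_gbps // port_speed_gbps


if __name__ == "__main__":
    # Port speeds listed in the article, fastest first.
    for speed in (800, 400, 200, 100):
        print(f"{port_count(speed)}x {speed}GbE")
```

Each configuration is the same 51.2Tbps carved up differently, which is why halving the port speed doubles the port count.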

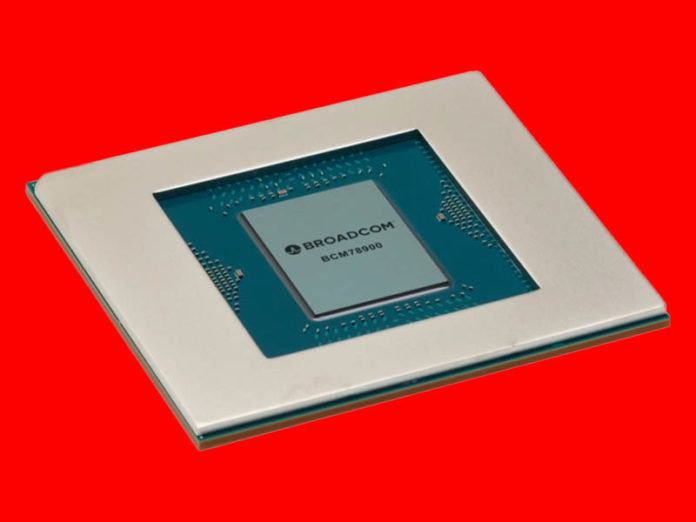

Broadcom Tomahawk 5 51.2Tbps Switch Chip

The new Tomahawk 5 is a 5nm monolithic die. That is quite a feat as server CPUs are still on 7nm processes and are looking for disaggregation/chiplet opportunities. The new process helps to lower overall power consumption, which is a challenge for switch chips. Onboard there are six Arm processor cores. Single-pass VXLAN and features like PTP and SyncE are also supported on the switch.

At these speeds, physical interfaces become important. The Tomahawk 5 has 64 clusters of 106Gbps / 100G PAM4 SerDes, for 512 lanes in total. Broadcom is also offering several different connectivity options. One can drive DACs directly from the 100G PAM4 SerDes for in-rack communication, but the chip can also interface with standard pluggable optics. One of the new features is that Broadcom will offer co-packaged optics solutions using its Silicon Photonics Chiplets in Package (SCIP) platform. The company says that this lowers power consumption by more than 50%. We covered co-packaged optics in 2020 in our Hands-on with the Intel Co-Packaged Optics and Silicon Photonics Switch piece.

With the improved capacity, Broadcom says that one of the new Tomahawk 5 switches can effectively replace 48 of the 2014-era Tomahawk 1 switches for massive radix consolidation (if an organization is running very old switches).
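One plausible way to arrive at the 48 figure is to count the switches needed to build a non-blocking two-tier leaf/spine fabric with the same 512x 100GbE port count. The sketch below does that arithmetic. It assumes a Tomahawk 1 switch exposes 32x 100GbE ports, which is not stated in this article, and that each leaf splits its ports evenly between downlinks and uplinks. This is our reading of the number, not Broadcom's published derivation, and `two_tier_clos_switch_count` is a hypothetical helper name.

```python
def two_tier_clos_switch_count(target_ports: int, switch_radix: int) -> dict:
    """Count switches in a non-blocking two-tier leaf/spine fabric.

    Assumes each leaf uses half of its ports as downlinks and half as uplinks.
    """
    if switch_radix <= 0 or switch_radix % 2 != 0:
        raise ValueError("switch radix must be a positive even number")
    down_per_leaf = switch_radix // 2
    # Ceiling division: leaves needed to expose target_ports downlinks.
    leaves = -(-target_ports // down_per_leaf)
    # Non-blocking: every leaf has as many uplinks as downlinks.
    uplinks = leaves * down_per_leaf
    # Ceiling division: spines needed to terminate all leaf uplinks.
    spines = -(-uplinks // switch_radix)
    return {"leaves": leaves, "spines": spines, "total": leaves + spines}


if __name__ == "__main__":
    # Assumption: Tomahawk 1 as a 32x 100GbE switch (not from the article).
    print(two_tier_clos_switch_count(target_ports=512, switch_radix=32))
```

Under those assumptions the fabric needs 32 leaves and 16 spines, which lines up with the 48 switches Broadcom quotes.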

Broadcom Tomahawk StrataXGS 5 Key Specs

Here are the key specs for the Broadcom Tomahawk StrataXGS 5:

| Spec | Value |
|---|---|
| Lifecycle | Active |
| Distributor Inventory | No |
| Bandwidth | 51.2 Tb/s |
| Front Panel I/O | 512 x 100G |
| Market Segment | Data Center |
| PHY Data Rate | 106G PAM4 |
| PHY Type | 100G PAM4 |
| Primary Market | Data Center |
| RoCEv2 | Yes |
| Sample I/O Configurations | 64 x 800GbE, 128 x 400GbE, 256 x 200GbE |
| SerDes / GPHY | 512 x 100G |
| Type | L3 Multilayer Switch |

We pulled these from Broadcom’s website.

Final Words

These types of advancements are a big deal. As servers increase in density, especially as we get to the Genoa/Bergamo era where each socket will have 96/128 cores and 192/256 threads, and as DPUs deliver storage and more over the network, having faster Ethernet switches to increase link speeds becomes more important. As individual nodes increase their link speeds, they put pressure on the aggregation layers of the fabric, so having faster chips there again keeps the overall radix in check. We are excited to see switches based on these soon.

In the meantime, the fastest we have looked at hands-on is the Innovium (now Marvell) 32x 400GbE switch.

Small typo but it is IEEE 1588 PTP, not P2P in the features list. This is a huge thing if it supports a full TSN stack.

Yes, PTP is great, though one needs to know which profiles it supports.

Could you report on the power consumption? Will this still be air cooled?

I really really want to see you do a POET Technologies video!!! PLEASE!!!