With all the recent controversy regarding WD, Toshiba, and Seagate slipping SMR drives into retail channels without disclosing their use of the slower technology, we thought it would be interesting to dive into the actual impact of using an SMR drive. We can hypothesize that there is a negative impact, but it is better to show it. To that end, today we will be comparing a WD Red 4TB SMR drive to its CMR predecessor, as well as CMR drives from other manufacturers.

Accompanying Video

With this piece, we have a companion video:

While our YouTube presence is still small compared to the STH main site, we thought this was an important enough finding that we should try reaching those who may be impacted. Feel free to listen along while you read.

SMR vs CMR – A quick primer

Many of our readers may already be familiar with the differences between SMR and CMR, but a quick refresher never hurt anyone!

First up is CMR, which stands for conventional magnetic recording. This has been the standard technology behind hard drive data storage since the mid-2000s. Data is written on magnetic tracks that sit side-by-side and do not overlap, so write operations on one track do not affect its neighbors.

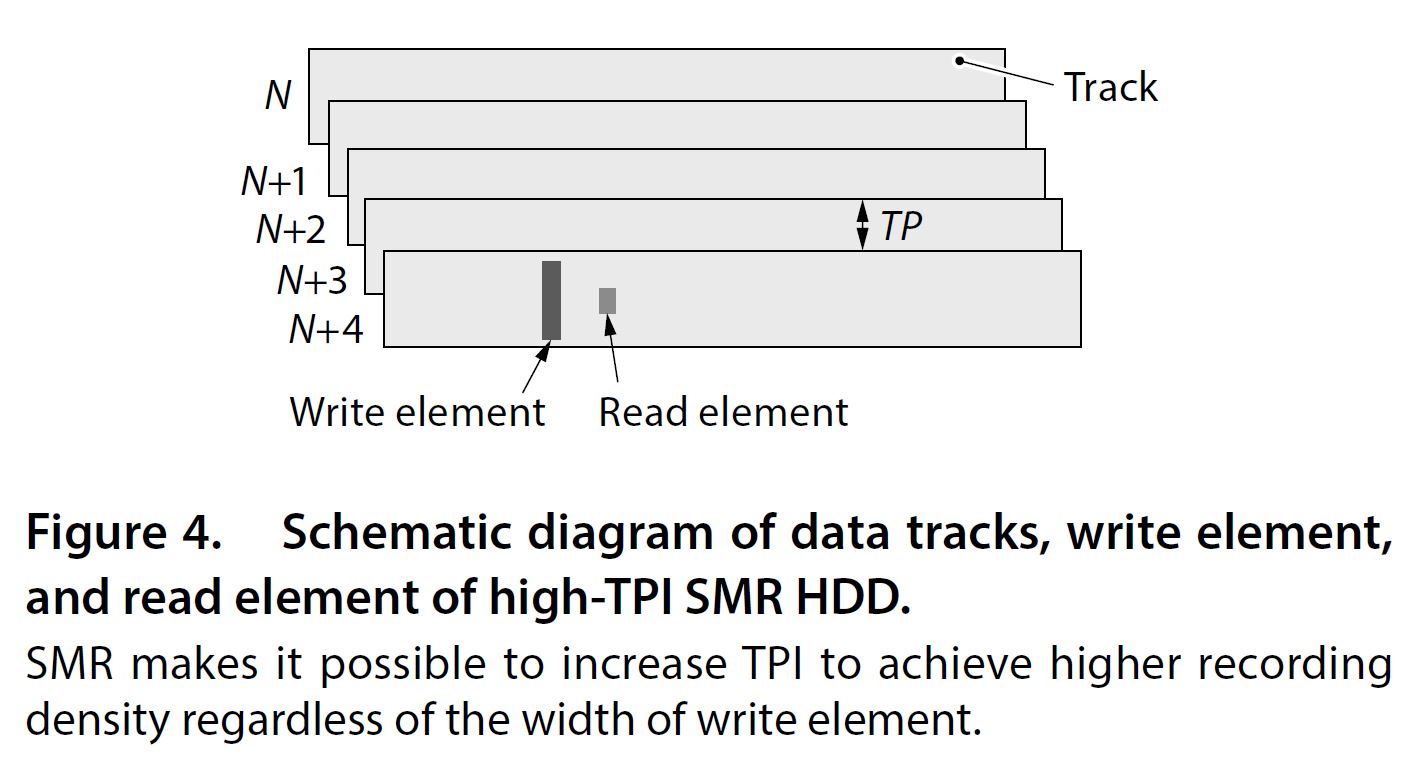

The newer contender is SMR, or shingled magnetic recording. It is called shingled because the data tracks can be visualized like roofing shingles; they partially overlap each other. Because of this overlap, the resulting tracks are thinner, allowing more to fit into a given area and achieving better overall data density. The WD Red is a device-managed SMR drive, which presents itself to the operating system as a normal hard drive.

The overlapping arrangement of SMR tracks complicates drive operations when it comes time to write data to the disk. When data is written on an SMR drive, the data on the overlapping tracks is affected by the write process as well. That data must then be rewritten during the process, which takes extra time to perform.

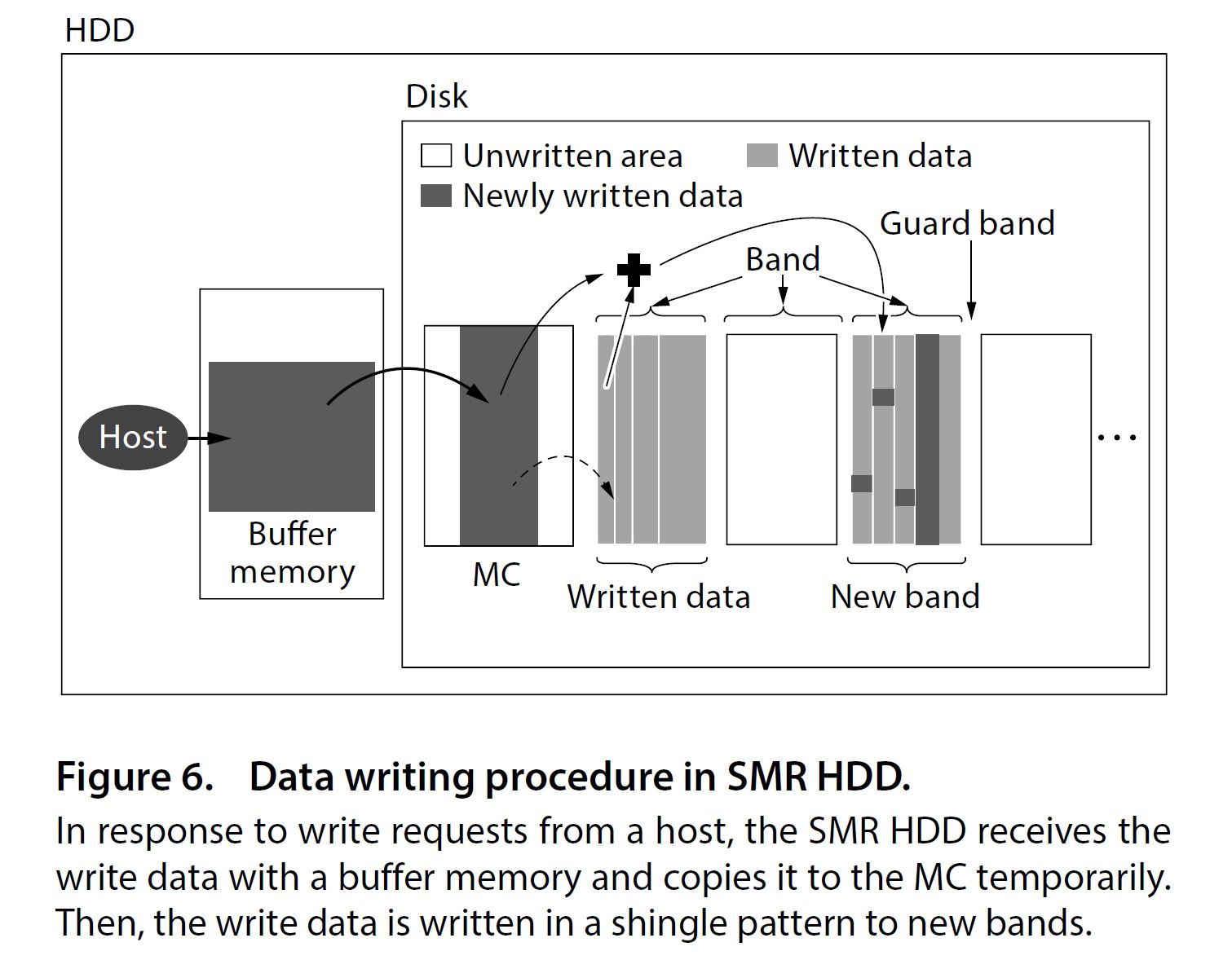

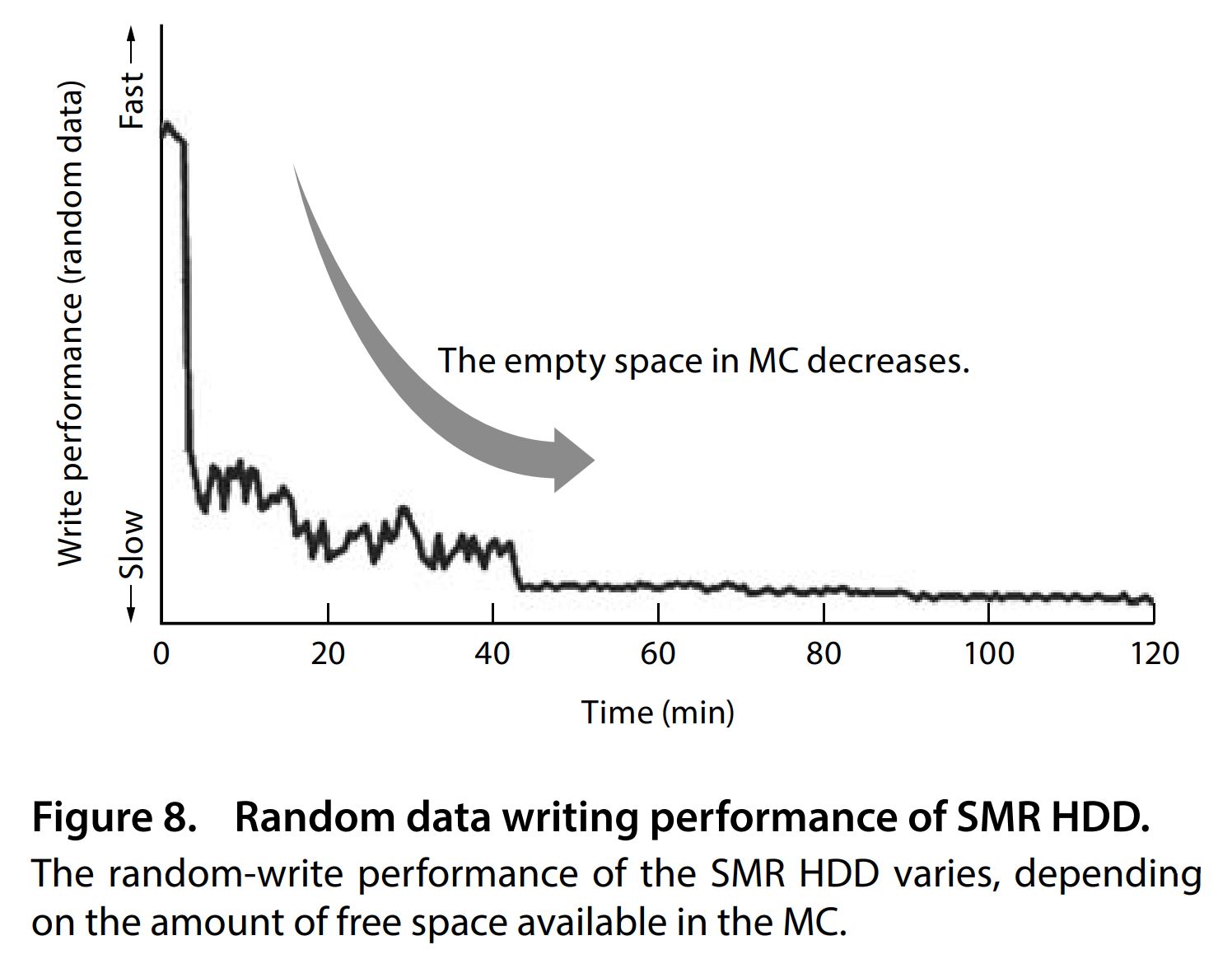

As a mitigation against this penalty, writes can be cached to a segment of the drive that operates with CMR technology, and during idle time the drive will spool those writes out to the SMR area. This CMR cache has a limited capacity, and enough write operations can exhaust it.

When that happens, the drive has no choice but to write directly to SMR and incur a performance penalty. WD has not provided the specifics of how their drives mitigate the performance impact of using SMR, so we are operating on guesswork as to the size or even existence of a CMR cache area in the WD Red.
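The cache behavior described above can be illustrated with a toy model. This is only a sketch under stated assumptions, not WD's firmware logic: the function name `simulate_writes`, the 30 GB cache size, and both write rates are hypothetical values picked for illustration, since WD has not published these details.

```python
def simulate_writes(total_gb, cache_gb, cached_rate_mb_s, direct_rate_mb_s):
    """Return seconds needed to absorb a sustained write with no idle time.

    Toy model: writes land in a CMR cache region at cached_rate_mb_s until
    the cache is full, then fall through to the shingled area at
    direct_rate_mb_s. All figures are hypothetical; real firmware is more
    complex and also drains the cache during idle periods.
    """
    cached_gb = min(total_gb, cache_gb)
    direct_gb = total_gb - cached_gb
    # 1 GB = 1000 MB, matching drive-vendor decimal units
    return (cached_gb * 1000 / cached_rate_mb_s
            + direct_gb * 1000 / direct_rate_mb_s)


# Hypothetical drive: 30 GB cache, 180 MB/s cached, 40 MB/s once exhausted
small_burst = simulate_writes(10, 30, 180, 40)    # fits entirely in the cache
large_burst = simulate_writes(125, 30, 180, 40)   # exhausts the cache
print(f"10 GB burst:  {small_burst:.0f} s")
print(f"125 GB burst: {large_burst:.0f} s")
```

In this model a short burst never sees the slower rate, while a long sustained write spends most of its time at it. That is why the workloads most likely to expose an SMR penalty are large, continuous writes with little idle time.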

If you want a third-party description of this, see the great 2015 paper by Toshiba: Shingled Magnetic Recording Technologies for Large-Capacity Hard Disk Drives.

After the previous article on STH, the question we received was how this impacts arrays, specifically the RAID arrays our readers use. We utilize a lot of ZFS at STH, so in mid-April 2020 we started a project to see if, indeed, there was a difference. In short, there was, and in a big way.

Test Configuration

For the test configuration, we wanted a setup that de-emphasized CPU performance. Effectively, we wanted to take CPU performance out of the equation to focus on drive performance. Here is what we utilized:

- System: ASRock X470D4U

- CPU: AMD Ryzen 5 3600

- Memory: 2x Crucial 16GB ECC UDIMMs

- OS SSD: Samsung 840 Pro 256 GB

- OS: Windows 10 Pro 1909 64-bit / FreeNAS 11.3-U2 (note, the latest is FreeNAS 11.3-U3.1 but it was released well after we started the project)

- RAIDZ array disks: 4x Toshiba 7200RPM CMR HDDs

Here is our list of drive contenders:

- HGST 4TB 0F26902 (7200 RPM)

- Seagate Ironwolf NAS 4TB ST4000VN008

- WD Red 4TB WD40EFRX (CMR)

- WD Red 4TB WD40EFAX (SMR)

The WD40EFAX is the only SMR drive in the comparison and is the focus of the testing.

Testing the WD Red 4TB SMR WD40EFAX Drive

We had two main areas of testing. First up, the new SMR drive has been put through a handful of standard benchmarks just to see how it performs in the context of a larger pool of drives. After that, some more targeted tests were run, pitting the WD40EFAX against three other CMR 4TB drives in a standard ZFS RAIDZ operation: rebuilding an array with a new drive after a drive has failed.

Prior to beginning this sequence of tests, the drives were prepped by having 3TB of data written to them, after which 1TB of that data was deleted. Testing commenced immediately after the drive prep was completed. First, a simple 125GB file copy tested sequential write speeds outside of the context of a benchmark utility. Following that, CrystalDiskMark was used to see if the large sequential write from the first test would have a lasting impact on the drive performance. These tests were performed as rapidly as possible to minimize drive idle time between them. Finally, a FreeNAS RAIDZ resilver was performed.

These targeted tests are not designed to be comprehensive, but instead to illuminate any obvious differences between the SMR drive and its CMR competitors.
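The sequential write test above was a plain file copy. For readers who want a scriptable approximation, a minimal Python sketch might look like the following. It is not the tool used for the results here, and the function name `timed_sequential_write` and the 64 MB demo size are our own illustrative choices.

```python
import os
import tempfile
import time


def timed_sequential_write(path, total_bytes, chunk_bytes=1024 * 1024):
    """Write total_bytes to path in sequential chunks; return MB/s."""
    chunk = os.urandom(chunk_bytes)  # incompressible data
    written = 0
    start = time.perf_counter()
    with open(path, "wb") as f:
        while written < total_bytes:
            n = min(chunk_bytes, total_bytes - written)
            f.write(chunk[:n])
            written += n
        f.flush()
        os.fsync(f.fileno())  # push data out of the OS page cache
    elapsed = time.perf_counter() - start
    return written / 1_000_000 / elapsed


if __name__ == "__main__":
    # Small demo in a temp directory; point path at the drive under test
    # and raise total_bytes well past any suspected cache size for real use.
    with tempfile.TemporaryDirectory() as d:
        rate = timed_sequential_write(os.path.join(d, "test.bin"),
                                      64 * 1024 * 1024)
        print(f"{rate:.1f} MB/s")
```

A write that is small relative to the drive's cache will mostly measure the cache, so the total size matters far more than the chunk size when looking for an SMR penalty.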

The RAIDZ resilver test is of particular interest, since the WD Red drive is marketed as a NAS type drive suitable for arrays of up to 8 disks. A resilver or RAID rebuild involves an enormous amount of data being read and written, and has the potential to be heavily impacted by the performance penalties of SMR technology.

The test array is a 4-drive RAIDZ volume that has been filled to around 60% capacity. A drive is then removed from the array, and our test drives will be inserted in its place and the resilver timed. The other three drives in the array remain consistent. In an attempt to add additional stress to the scenario, during the resilver some load will be placed upon the array; 1MB files will be copied to the array over the network, and 2TB of data will be read from the array and copied over the network to a secondary device. This is a significant workload, but we wanted to stress the drives to ensure we could get separation. Also, NAS units and RAID arrays are designed to continue serving applications and users when in degraded states.
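To put a resilver duration in perspective, it helps to convert it into an average write rate on the replacement drive. The sketch below is back-of-the-envelope arithmetic only. It assumes the array members are 4TB drives (the capacity of the Toshiba drives is not stated above) and that a 60% full RAIDZ means roughly 60% of the replacement drive must be written. The function name is hypothetical.

```python
def average_resilver_rate_mb_s(drive_tb, fill_fraction, days):
    """Average write rate (MB/s) onto the replacement drive.

    Assumes the resilver writes drive_tb * fill_fraction of data to the
    new drive, spread evenly over the given number of days.
    """
    bytes_to_write = drive_tb * 1e12 * fill_fraction
    seconds = days * 86_400
    return bytes_to_write / 1e6 / seconds


# Assumed 4TB member drives at 60% full, over a nine-day resilver
rate = average_resilver_rate_mb_s(drive_tb=4, fill_fraction=0.6, days=9)
print(f"{rate:.2f} MB/s average")
```

Under those assumptions, a nine-day resilver (the figure raised in the comments below) works out to roughly 3 MB/s on average, far below what any of these drives can sustain on a simple sequential write.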

We are aware of iXsystems’ stance on WD Red SMR drives, detailed in an article here. The short version is that they advise against use of these drives. That blog was posted after we had already embarked upon this adventure. It says to look to WD for more information, and WD has not, over the course of the ensuing month, provided an update. Even after it came out, we thought the experiment worthwhile since the number of users that read the iXsystems blog is likely a minority, even among STH readers.

We are also testing a common use case that many may not think of. Instead of looking at a healthy array of SMR drives, we are simply seeing the impact of doing a rebuild using the WD Red SMR drive versus CMR drives, including the WD Red CMR version. This matters because it covers more than those buying a new set of drives for an array; it is the familiar case where a user has to purchase a drive quickly to get a NAS back to a healthy state as soon as possible. Picture this: you know you have WD Red 4TB drives (likely CMR) in your FreeNAS array, a drive fails, and you go to Best Buy. They have a WD Red in stock, so you buy it and install it without doing a day’s worth of online research.

Next, we are going to get to our test results before getting to our final words.

Thanks for putting your readers first with stuff like this. That’s why STH is a gem. It takes balls to do it but know I appreciate it.

You didn’t address this but now I’ve got a problem. Now I know I’ve sold my customers FreeNAS hardware that isn’t good. I had such a great week too.

If you watch the video, it’s funny. There @Patrick is saying how much he loves WD Red (CMR) drives while using this to show why he doesn’t like the SMR drives. At the start you’d think he’s anti-WD but by the end you realize he’s actually anti-Red SMR. Great piece STH.

It’s nice to see the Will cameo in a video too.

I know I’m being a d!ck here but the video has a much more thorough impact assessment while this is more showing the testing behind what’s being said in the video. Your video and web are usually much closer to 1 another.

Finally a reputable site has covered this. I’m also happy to see you tried on a second drive. You’d be surprised how often we see clients do this panic and put in new drives. Sometimes they put in blues or whatever because that’s all they can get. +1 on rep for it.

NAS drives are always a gamble, SMR or not, you should always keep away from the cheap HDD drives and that also includes cheap SSD’s if you are trying to have a NAS that have a good performance in a Raid setup.

Spend a little bit more money for the 54/5600 – 7200 RPM drives that are CMR. And for SSDs, be aware that QLC SSD drives will fall back to about 80MBps transfer rate as soon as you fill the small cache that they have built in.

So take your time and pick your storage depending on your needs.

1) For higher NAS use stay away from SMR HDD, and QLC SSD’s

2) For backup purposes SMR HDD and QLC SSD is a good choice.

CMOSTTL this basically shows stay away from SMR even for backup in NASes. Reds aren’t cheap either, but they’ve previously been good.

I’d like to say thanks to Seagate for keeping CMR IronWolf. We have maybe 200 CMR Reds that we’ve bought over the last year. I passed this article around our office. Now we’re going to switch to Seagate.

would be interesting to see RAID rebuild time on a more conventional RAID setup. perhaps hardware raid or Linux mdadm etc, instead of just ZFS.

Would be worthwhile to at least update the following articles with a warning to avoid SMR HDDs when using ZFS:

https://www.servethehome.com/buyers-guides/top-hardware-components-freenas-nas-servers/top-picks-freenas-hard-drives/

https://www.servethehome.com/hpe-proliant-microserver-gen10-plus-ultimate-customization-guide/2/

Shucking external drives (which are often SMR) is mentioned on both pages.

At the end of the youtube, it clearly shows a WD produced spec sheet that shows which drives are SMR vs CMR. Any chance anyone has a link to that? I’d really like to go through all my drives and explicitly verify which ones are SMR vs CMR.

Robert Dole,

I think this is the link you are looking for: https://documents.westerndigital.com/content/dam/doc-library/en_us/assets/public/western-digital/product/internal-drives/wd-red-hdd/product-brief-western-digital-wd-red-hdd.pdf

Fortunately I bought WD Red 4TB drives a long time ago and they are EFRX and they were used in a RAID system. Since then, I standardized on 10 TB which are CMR. I had followed the story on blocksandfiles (.com) and this is really good that it landed on STH and then followed by a testing report.

It is indeed a good sign to see STH calling BS when it is… BS.

Thank you!

CONCLUSION: one more checkbox to check when buying drives, not SMR?

Dear Western Digital, you thought you could get away with it because a basic benchmark does not show much difference OR you were not even aware of the issue because you did not test them with RAID. Duplicity or lazy indifference or both? On top of which you badly tried to cover it up before finally facing it up.

Dear Western Digital, I will probably continue to buy WD Red in the future, but I just voted with my $$$ following that story. I needed 3 x 10TB drives, I went with barely used open-box HGST He10 on eBay (all 2019 models with around 1,000 hours usage). They were priced like new WD Red 10TB ;-)

Thank you to Will for doing this testing and Patrick for making it happen. It’s about time a large highly regarded site stepped in by doing more than just covering what Chris did.

I want to point out that you’re wrong about one thing. In the Video Patrick says 9 days. If you round to nearest day it’s 10 days not 9. Either is bad.

Doing the CMR too was great.

Luke,

If we’d said 10 days, someone could come along and say we were exaggerating the issue. We say 9 days and we’re understating the problem, which in my mind is the more defensible position. An article like this has a high likelihood of ruffling feathers, so we wanted to have as many bases covered as possible.

So glad I got 12TB Toshiba N300’s last year that are CMR.

I didn’t specifically check for it back then because, you know, N300 series.

Would be very unhappy if I had gotten SMR drives though.

Thanks, Will. Good analysis. I learned this lesson a few years ago with Seagate SMR drives and a 3ware 9650se. Impossible to replace a disk in a RAID5 array, the controller would eventually fail the rebuild.

I think you should explain how SMR works with a bunch of images or an animation. Most people do not understand how complex SMR is when data needs to be moved from a bottom shingled track. And really nobody (you, too) mentions how inefficient this is in terms of power consumption, as all the reading and writing while moving the data on a top shingle consumes energy while a CMR drive is sleeping all the time.

At least WD is now showing which model numbers are CMR or SMR on their spec. page https://www.westerndigital.com/products/internal-drives/wd-red-hdd

AFAIK, the SMR Reds support the TRIM command. I wonder to what extent can performance be regained with its use. Also, if you trim the entire disk (and maybe wait a little), does it return to initial performance? Has anyone tested this?

Not that I would use SMR for NAS. In my opinion, the SMR Reds are a case of fraudulent advertising. Friends don’t let friends use SMR drives for NAS.

Maybe I’m in the minority here. I’m fine with the drive makers selling SMR drives. I just want a clear demarcation. WD Red = CMR, WD Pink = SMR. Even down to external drives needing to be marked in this way.

Clearly the problem is with the label on the drive

WD Red tm

NAS Hard Drive

should read

WD Red tm

Not for NAS Hard Drive

If people can sue Apple for advertising a phone has 16GB of storage when some of that is taken up by the operating system, those two missing words may make a huge difference in the legal circus.

Yes, there is an array running here, due to the brilliance of picking drives from different production runs and vendors, that has half SMR and half CMR. While it’s running well enough at the moment, does anyone know if a scrub is likely to cause problems with SMR drives?

Great article as always. :) Great to see some hard facts related to this after reading about it from others.

And it looks like WD got caught and now has a class-action lawsuit brewing:

https://arstechnica.com/gadgets/2020/05/western-digital-gets-sued-for-sneaking-smr-disks-into-its-nas-channel/

Form to join the class:

https://www.hattislaw.com/cases/investigations/western-digital-lawsuit-for-shipping-slower-smr-hard-drives-including-wd-red-nas/

I just ordered 3 WD 4TB Red for a new NAS and had no clue! Checked the invoice and they are marked as WD40EFRX (phew)…

Great article!

Such a shame, I was happy with putting red drives into client Nas now I will be putting ironwolf, what were Western digital thinking? Was there nobody on the team who realised the consequences?

I have a problem with your RAIDZ test: normally I replace failed disks with brand new, just unpacked ones, not the ones that were used to write a lot of data and immediately disconnected. But you are not showing how long it takes for an array to rebuild under those conditions. Granted, this is a good article that demonstrates what happens when SMR cache is filled and disks don’t have enough idle time to recover, but I doubt this happens a lot in real life, and your advice to avoid SMR does not follow from the data you’ve obtained. All I can conclude is “don’t replace failed disks in RAIDZ arrays with SMR disks that just came out of heavy load and did not have time to flush their cache.”

How often do you force your marathon runners to run sprints just after they’ve finished the marathon?

This is a great article. Ektich, we load test every drive before we replace them in customer systems to ensure we aren’t using a faulty drive. You don’t test a drive before putting it into a rebuild scenario? That’s terrible practice. You don’t need to do it with CMR drives either. CMR was tested in the same way so I don’t see how it’s a bad test. Even with a cache flush they’re hitting steady state because of the rebuild. Their insight into the drive being used while doing the rebuild is great too.

More trolls on STH when you get to these mass audience articles. But great test methodology STH. It’s also a great job having the balls to publish something like this to help your readers instead of serving WD’s interests.

Thank you for the article and thank you in particular Will for the link to the WD Product brief. From the brief I now know the 3TB drives I bought for my Synology are CMR. I was under the misapprehension (along with that sinking feeling) from reporting from other sites that all 3TB WD Reds are SMR when in fact there are two models. I’m guessing the CMR model is an older one as I bought mine a few years ago now.

I second the motion to re-test with Linux MD-RAID. Without LVM file systems, just plain MD-RAID single file system. Plus, I’d like to see some stock hardware RAID devices tested along the same lines.

To keep the comparison, use the same disk configuration of 4 x 4TB CMR disk in a RAID-5. Replacing with 1 SMR disk.

About 5 years ago I bought a Seagate 8TB Archive SMR disk for backing up my FreeNAS. To be crystal clear, I knew what SMR was, and that the drive used it. Since my source had 4 x 4TB WD Red CMRs, using a single 8TB drive for backups was perfect.

Initially it worked reasonably fast, but as time went on, it slowed down. I use ZFS on it, with snapshots, so it actually stores multiple backups. For my use, (it was the only 8TB drive on the market for a reasonable price at that time), it works well. My backup window is not time constrained, I simply let it run until it’s done.

But, selling SMR as a NAS drive, AND not clearly labeling it, (like Red Lite), that should be criminal.

https://www.youtube.com/watch?v=sjzoSwR6AYA

here they compared a Rebuild with mixed drives and the results were not as severe ?

Does it strongly depend on the Type of RAID and Filesystem ?

Would be interesting to test on consumer devices such as Synology or QNAP ?

I do get the unhappiness about not branding correctly but I cannot believe that the results are this severe for consumer NAS especially ?

Robert, that video is very hard to follow. They are using smaller capacity drives with different NAS systems. They are also not doing a realistic test since it seems they are not putting a workload on the NAS during rebuilds? The ability to keep systems running and maintaining operations is a key feature of NAS/ RAID systems. It is strange not to at least generate some workload during a rebuild.

People are seeing very poor performance with these SMR drives and Synology as well, even in normal operation. A great example is http://blog.robiii.nl/2020/04/wd-red-nas-drives-use-smr-and-im-not.html

The systems and capacities used will impact results in different ways. We wanted to present a real-world use case with ZFS so our readers have some sense of the impact. We use ZFS heavily and many of our readers do as well.

hey thanks for the quick reply!

yes indeed they only compare rebuilding while there is no other access.

But the question for me (as somebody who is about to buy a new NAS as a media hub for Videos and Photos): I still have two old st4000dm005 lying around and would use them and add two more drives, either a cheap 8TB (SMR – st8000dm004) or, given the whole SMR NAS drive debate, a very expensive CMR Ironwolf or something like that ?

My use case would just be me and my wife, and once the newborn is at age, perhaps him?

But for a consumer case is the whole SMR debate a real problem? I get that it’s not OK to hide what the drive actually uses, but on a Media Server/Backup level ? Taking into account that you regularly make backups from the backups.

I truly would like to know in order to make a decision. Do I need an expensive CMR (Ironwolf Helium), a “cheaper” SMR Red NAS drive or will a standard barracuda 8TB SMR “Archive Drive” suffice, for Media (Plex) and Photos.

Robert – I generally look for low-cost CMR drives, and expect that they will fail on me. While I expect the drive failures, I also look for a predictable level of performance during operation and rebuilds. In both cases, the WD Red SMR drives would not work for me personally. I will also say that a likely part of the problem here is that these are DM-SMR drives that hide the fact they are SMR from the host. SMR drive support is getting better when hosts know they are using SMR drives.

I generally tell people RAID arrays tend to operate at the speed of their slowest part. If you mix drives, the slower ones tend to dictate performance more times than not.

On the WD Red drives, the 64MB cache CMR drives are still available and worked great in our testing.

Guess I should be happy all mine are EFRX as well…

Someone said this is part of a RACE for BIGGER capacities. It can be… BUT, before that happens, WD is probably using the most demanding customers / environments to TEST SMR tech so they can DEPLOY them in the bigger capacity DRIVES: 8, 10, 12, 14TB and beyond (do not currently exist). I say this because, WD has the same “infected SMR drives” using the well known PMR tech! https://documents.westerndigital.com/content/dam/doc-library/en_us/assets/public/western-digital/product/internal-drives/wd-red-hdd/data-sheet-western-digital-wd-red-hdd-2879-800002.pdf

Why is that? Why keep SMR and PMR drives with the SAME capacity in the same line and HIDING this info from customers? So they can target “specific” markets with the SMR drives? It seems like a marketing TEST!!! How BIG is it?

Note that currently, the MAX capacity drive using SMR is the 6TB WD60EFAX, with 3 platters / 6 heads… So… is that it?? Is WD USING RAID / more demanding users as “guinea pigs” to test SMR and then move on and use SMR on +14TB drives (that currently use HELIUM inside to bypass the theoretical limitation of 6 platters / 12 heads)??? Is that the next step? And after that, plague all the other lines (like the BLUE one, that already has 2 drives with SMR). I’m thinking YES!! And this is VERY BAD NEWS. I don’t want a mechanical disk that overlaps tracks and has to write adjacent tracks just to write a specific track!!!

Customers MUST be informed of this new tech, even those using EXTERNAL SINGLE DRIVES ENCLOSURES!!! I have many WD external drives, and i DON’T WANT any drive with SMR!!! Period!

Gladly, i checked my WD ELEMENTS drives, and NONE of the internal drives is PLAGUED by SMR! (BTW, if you ask WD how to know the DRIVE MODEL inside an external WD enclosure, they will tell you it’s impossible!!! WTF is that??? WD technicians don’t have a way to query the drive and ask for the model number?? Well, i got news for you: crystaldiskinfo CAN!!! How about that? Stupid WD support… )

So, if anyone needs to know WHAT INTERNAL DRIVE MODEL they have in their WD EXTERNAL ENCLOSURES, install https://crystalmark.info/en/software/crystaldiskinfo and COPY PASTE the info to the clipboard! (EDIT -> COPY or CTRL-C). Paste it to a text editor, and voila!!!

(1) WDC WD20EARX-00PASB0 : 2000,3 GB [1/0/0, sa1] – wd

(2) WDC WD40EFRX-68N32N0 : 4000,7 GB [2/0/0, sa1] – wd

Compare this with the “INFECTED” SMR drive list, and you’re good to go!

P.S. I will NEVER buy another EXTERNAL WD drive again without the warranty to check the internal drive MODEL first!!!! That’s for sure!
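The compare-against-the-list step in the comment above can be scripted. The following is a minimal sketch that assumes drive lines in the format pasted above. `KNOWN_SMR_MODELS` holds only the two SMR model numbers named on this page (WD40EFAX and WD60EFAX) and is not a complete list, so check WD's own product brief for the full set. The helper name `classify` is hypothetical.

```python
import re

# Only the SMR models named on this page; NOT a complete list.
KNOWN_SMR_MODELS = {"WD40EFAX", "WD60EFAX"}

# Matches lines such as "(1) WDC WD20EARX-00PASB0 : 2000,3 GB [...]"
LINE_RE = re.compile(
    r"^\(\d+\)\s+(?:WDC\s+)?(?P<model>[A-Z0-9]+)(?:-\S+)?\s+:"
)


def classify(lines):
    """Map each base model number to True if it is in KNOWN_SMR_MODELS."""
    result = {}
    for line in lines:
        m = LINE_RE.match(line.strip())
        if m:
            model = m.group("model")
            result[model] = model in KNOWN_SMR_MODELS
    return result


sample = [
    "(1) WDC WD20EARX-00PASB0 : 2000,3 GB [1/0/0, sa1] – wd",
    "(2) WDC WD40EFRX-68N32N0 : 4000,7 GB [2/0/0, sa1] – wd",
]
for model, is_smr in classify(sample).items():
    print(model, "listed as SMR" if is_smr else "not in the SMR list")
```

A model that is missing from the list is not thereby confirmed as CMR; it only means that this short list does not name it.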

Just read this bollocks: https://arstechnica.com/gadgets/2020/06/western-digitals-smr-disks-arent-great-but-theyre-not-garbage/2/

I saw that Ars piece. I thought it was good in explanation, but it’s odd.

They go way too in-depth on the technical side, but when you’re looking at it, they did a less good experiment. They’re using different size drives, more drives, they’re not putting a workload and just letting it rebuild. They’re using the technical block size and command bits to hide that they’ve done a less thorough experiment. So they go way into the weeds of commands (that the average QNAP, Synology, Drobo user has no clue about) then say it’s fine… oh but for ZFS it still sucks. They wrote the article like someone who uses ZFS though.

I’m thinking WD pays Ars a lot.

Ars articles always lack the depth of real reporting, but do provide an entertainment factor and many times the commenters have much more insight (which is what I love finding and reading).

STH articles have always had the feel of ‘real news’ to me–from the easystore article to this one, highlighting the true pros and cons.

Is this CMR or SMR??

https://www.cnet.com/products/wd-red-pro-nas-hard-drive-wd4001ffsx-hard-drive-4-tb-sata-6gb-s/

Just got off the phone with a Seagate rep. And I’m fuming right now.

Background:

I am running a 6×2.5″ 500GB RAID10 array for a total of 3TB for my Steam library. The drives are Seagate Barracuda ST500LM050 drives from the same or similar batch. The drives have performed terribly ever since day 1, causing the whole PC to appear unresponsive for minutes the moment 1 file in the Steam library is rewritten for game updates. Things get worse when Steam needs to preallocate storage space for new games; often I have to leave the machine alone for two to three hours. I already changed motherboard once because I thought it was a motherboard issue. Even with a new motherboard the problem persisted.

Then I found out about this lawsuit. And upon further investigation I found out that these disks are SMR. I filed a support request with Seagate.

I received a phone call from the rep this morning. They were apologetic, but then they dropped the bombshell: All Seagate 2.5″ drives are SMR, they no longer make 2.5″ PMR drives.

I’m really frustrated. There was no information on whether the drives are SMR or PMR, and there was NO indication whatsoever that they should not be used in RAID arrays.

Great article, thanks for the info. Using older WD Reds in a server with ZFS raid, and thinking about buying more on sale… big eye opener here. Had no idea this was a thing but glad I googled it now.

9 days to rebuild a RAID is just bonkos.

Very interesting, very disconcerting. Thanks for testing and reporting!

“An article like this has a high likelihood of ruffling feathers, so we wanted to have as many bases covered as possible.”

Bollocks to the feathers. Keep calm and ruffle on.

STH: do you have a more complete followup article? There are tons of loose ends in this old stuff. For instance, is this effect specific to ZFS’s resilver algorithm? Or has someone else done better followup? (Including raid6/raidz2, since plain old raid1 has been not-best practice for a long time…)

yap. SMR drives actually created a new tier for my server… basically a read only tier that i need to read from but rarely write…they are indeed cheap though

Hi Will Taillac,

The RAID rebuild problem you refer to is a peculiarity of ZFS. It does not occur in Hardware Based RAID and it does not occur in Windows RAID or Storage Spaces. It does not occur in other Software Defined Storage solutions.

In fact, ZFS ‘resilvering/reslivering’ is the only known ‘workload’ that stumbles over SMR.

Isn’t it time to place the ‘blame’ where it properly belongs — on ZFS?

KD Mann you mean to blame the 15+ year old storage platform with millions of installations instead of the company that marketed those drives as suitable for ZFS? That’s a really weird position unless you work at WD.

I knew of the WD SMR scandal. Fortunately, I have four of the WD40EFRZ CMR models, so breathed a sigh of relief. However, WD have gotten off incredibly lightly here. They marketed their Red range as NAS drives, all of them, not just those they knew were CMR. That’s completely unacceptable and the fact they weren’t forced to change those SMR Red’s to Blue or Blue Plus or something, is outrageous.

If you scour Amazon for WD Red’s, you will rarely, if ever, see whether the drive is CMR or SMR. I have had to resort to a lengthy table of drives by model number to find out whether a WD, or other for that matter, is CMR or SMR.

The last time I checked, WD’s 6TB Red was SMR and I couldn’t find any CMR counterpart!

WD, stop selling the drives as Red models and at higher prices than the desktop Blue range!!!