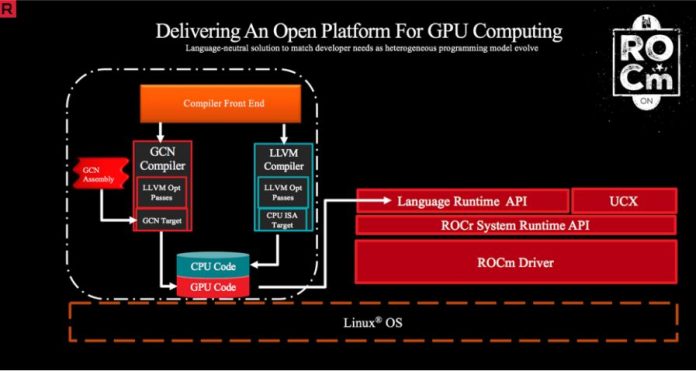

AMD announced support for ROCm in conjunction with TensorFlow 1.8 (see this blog post). We applaud that AMD is pushing its TensorFlow support forward. At the same time, we cannot help but note that the gap between AMD and NVIDIA in experience and effort is widening. The latest announcement is that the company is maintaining a ROCm-enabled TensorFlow 1.8 stack. It is also releasing a Docker container with TensorFlow 1.8 and Python 2. Since a lot of industry analysts will look superficially at this announcement and see it as closing the gap, we wanted to take a second and show that the gap is still enormous. AMD needs to do this work, but it also needs to speed up its efforts by a large amount.

AMD Announces a ROCm Tensorflow Docker Container

Containers are extremely popular in deep learning. One of the major problems they solve is keeping environments packaged and working. AMD announced a packaged TensorFlow with ROCm solution.

alias drun='sudo docker run -it --network=host --device=/dev/kfd --device=/dev/dri --group-add video --cap-add=SYS_PTRACE --security-opt seccomp=unconfined -v $HOME/dockerx:/dockerx -v /data/imagenet/tf:/imagenet'

drun rocm/tensorflow:rocm1.8.2-tf1.8-python2
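To make the alias easier to read, here is a minimal sketch that assembles the same command one flag at a time and prints it instead of running it. The function name `build_rocm_run_cmd` is our own invention for illustration; the flags and image tag are copied from the alias above, and the comments reflect our understanding of what each flag is for rather than AMD's documentation.

```shell
#!/usr/bin/env bash
# Sketch only: build_rocm_run_cmd is a hypothetical helper, not part of ROCm or Docker.
# It prints the docker command from the alias above so it can be inspected first.
#
# Our reading of the flags (assumptions, see the Docker and ROCm docs to confirm):
#   --network=host                     share the host's network stack
#   --device=/dev/kfd                  expose the ROCm compute device node
#   --device=/dev/dri                  expose the GPU's direct rendering device nodes
#   --group-add video                  join the group that typically owns those nodes
#   --cap-add=SYS_PTRACE               allow ptrace inside the container
#   --security-opt seccomp=unconfined  disable the default seccomp profile
#   -v host_dir:container_dir          bind-mount a host directory into the container
build_rocm_run_cmd() {
  local image="$1"
  local -a cmd=(
    sudo docker run -it
    --network=host
    --device=/dev/kfd
    --device=/dev/dri
    --group-add video
    --cap-add=SYS_PTRACE
    --security-opt seccomp=unconfined
    -v "$HOME/dockerx:/dockerx"
    -v /data/imagenet/tf:/imagenet
    "$image"
  )
  # Join the array with spaces and print it on one line.
  echo "${cmd[*]}"
}

build_rocm_run_cmd rocm/tensorflow:rocm1.8.2-tf1.8-python2
```

Printing rather than executing keeps the sketch safe to run on a machine without Docker or an AMD GPU; the resulting line can be pasted into a terminal once it looks right.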

For comparison, here is the NVIDIA packaged TensorFlow launch command:

nvidia-docker run -it --rm -v local_dir:container_dir nvcr.io/nvidia/tensorflow:<xx.xx>-py<x>

NVIDIA is on its second-generation nvidia-docker integration. That basic building block means that NVIDIA’s base implementation can be used for more than just TensorFlow. NVIDIA also supports a wide variety of frameworks and applications with maintained containers.

AMD Needs to Pick Up the Pace

Putting it frankly, AMD needs to pick up the pace of its software support for its GPUs in deep learning. This announcement happened on August 27, 2018, and the timing was a bit strange. TensorFlow 1.8 was released on April 27, 2018, about four months earlier. TensorFlow 1.9 was released on July 10, 2018. TensorFlow 1.10 was released several weeks before the announcement (see here). This industry moves so fast that a four-month lag is enormous.
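For readers who want to check the lag themselves, here is a small sketch of the date arithmetic. It assumes GNU `date` (the `-d` option; the BSD/macOS `date` takes different flags), and `days_between` is a helper name we made up for illustration. The dates are the ones quoted in the paragraph above.

```shell
#!/usr/bin/env bash
# Sketch only: assumes GNU date. days_between is a hypothetical helper.
# Prints the whole number of days from the first date to the second.
days_between() {
  local start end
  # -u pins both timestamps to UTC so daylight saving time cannot skew the result.
  start=$(date -u -d "$1" +%s)
  end=$(date -u -d "$2" +%s)
  echo $(( (end - start) / 86400 ))
}

# TensorFlow 1.8 release to the ROCm announcement
days_between 2018-04-27 2018-08-27
# TensorFlow 1.9 release to the ROCm announcement
days_between 2018-07-10 2018-08-27
```

By our count that is 122 days behind TensorFlow 1.8 and 48 days behind TensorFlow 1.9 on the day of the announcement.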

We know teams in the deep learning training community want AMD to compete. NVIDIA is charging huge premiums for its deep learning GPUs. AMD needs to pick up the pace if it wants to become competitive.

Working to Move Upstream

AMD said it is planning to upstream its work into TensorFlow. AMD is also committing to releasing future updates. Here is the excerpt from the blog post:

“In addition to supporting TensorFlow v1.8, we are working towards upstreaming all the ROCm-specific enhancements to the TensorFlow master repository. Some of these patches are already merged upstream, while several more are actively under review. While we work towards fully upstreaming our enhancements, we will be releasing and maintaining future ROCm-enabled TensorFlow versions, such as v1.10.”

In order to gain momentum, AMD needs more. AMD needs to state which versions of TensorFlow it will support (e.g., TensorFlow 1.9, which is not mentioned). AMD needs to give a timeline. Will it be four months behind in the future? Finally, and most importantly, AMD needs its work to be mainline so data scientists can leverage investments in AMD GPUs immediately upon a new TensorFlow release.

Final Words

I asked our Editor-in-Chief, and STH has AMD Vega cards in our lab, even working in conjunction with AMD EPYC. The general industry perception is that a healthy AMD is good for the industry and customers. AMD has some promising hardware, despite NVIDIA’s focus on specific Tensor Cores. As an industry, we need AMD’s software ecosystem to be ready to deploy.

This article is rather specious. Comparing run commands to execute docker container: perhaps the author does not know an alias goes into your bashrc or profile file. Only an idiot would type the alias repeatedly. OTOH, if he really would type the alias, let’s have him cat out the contents of the nvidia-docker script and present it as well for a more reasonable comparison.

AMD is a much smaller company than nVidia. Carping about that doesn’t help anything. ROCm is open source, it’s up on github, the author could build/validate the latest tensorflow and post details on github.

If he wants to be spoonfed, well, nVidia will be happy to hold the spoon for him.

Erich Merth – AMD also has the volume mapping in the alias. And NVIDIA, Microsoft, VMware, AWS, GCP have all shown us IT people like to be spoonfed.

In some ways I agree with Eric. But Cliff has a point. The people doing training don’t want to do another step. AMD being smaller is fine but people who use their cards have to do more work to get AMD working.

This article fails to mention that Intel has done a great job getting their tools upstreamed. They are a bigger company and they don’t have GPU performance for some of today’s workloads but they’ve legitimately gotten big performance gains that are accessible because of their work on the software side. I’d still use NVIDIA for performance, but Intel is doing what AMD needs to do.

As an ML engineer I’ve experienced pretty good forward compatibility of models from TF 1.2.1 to TF 1.10 so I can’t say that I get too excited about the latest version of Tensorflow.

1.9 and 1.10 have good stuff in them and I am looking forward to 1.11, etc. Getting it to work right at the framework and driver level is a matter of getting all the little details right so the port of the backend is probably more treacherous than application level porting.

It is not crazy for them to target a particular version, such as 1.8, and get it working. Fixing the target controls “changing requirements,” and it is better to be able to ship something predictably than to chase a moving target and have no idea when you will land.

It would be nice to see them catch up… Ah, maybe I need to get a Radeon card for my spare machine.

TF 1.10 ROCm support is ready:

https://github.com/ROCmSoftwarePlatform/tensorflow-upstream/blob/develop-upstream/rocm_docs/tensorflow-install-basic.md#install-tensorflow-rocm-port

In addition to docker images it’s now supporting pip installation as follows:

pip install --user tensorflow-rocm