The Supermicro AOC-S100GC-i2C is one of the more interesting NICs on the market today. This is a dual 100GbE solution designed for Supermicro servers. What sets it apart is that it uses the Intel Ethernet 800 series, or Columbiaville, networking first announced in 2019. Intel went into production in the summer of 2020, so this is one of the first NICs out there with Intel’s new “foundational” NIC stack. There are some major benefits and changes to Intel’s networking offering here. In this review, we are going to take a look at the AOC-S100GC-i2C, discuss what makes it tailored to Supermicro, and explain why it is different from the AOC-S100G-i2C. We are also going to do a small deep-dive into some of the Intel Ethernet 800 series features.

Supermicro AOC-S100GC-i2C Dual 100GbE NIC Overview

Starting off with the ports, we get two QSFP28 ports, each supporting 100Gbps networking. While many compute nodes have focused on 25GbE SFP28 NICs, there are many network processing, storage, and other applications where 100GbE is attractive.

We wanted to discuss what sets this card apart from a general Intel card such as the Intel E810-CQDA2. If you look at the right side of the PCB, you will see a Nuvoton controller and a header with a set of pins. For those wondering, that connector on the bottom right of the card is the NC-SI header that allows this card to also handle out-of-band network duties for a Supermicro server’s IPMI / BMC. If you are buying a Supermicro server, this tighter integration is why you get the Supermicro card instead of the Intel card.

Perhaps the biggest feature is that these NICs support PCIe Gen4 x16 connections. In the previous generations of 100GbE NICs that were PCIe Gen3 x16 based, there was not enough bandwidth on the PCIe side to fully saturate two 100GbE NIC ports with 16 PCIe lanes or a single port with 8 PCIe lanes. There were some novel solutions to get around this, such as multi-host adapters with a second cabled PCIe Gen3 x16 connector to go to a second motherboard socket. Still, that added complexity that PCIe Gen4 simply does not have. Although the Columbiaville chip is still 100Gbps, it can achieve that host bandwidth with half the PCIe lanes that a Gen3 design would need.
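To put rough numbers on the PCIe argument above, here is a minimal sketch of the arithmetic. It looks only at raw link rates with 128b/130b encoding, which both PCIe Gen3 and Gen4 use, and ignores packet header and flow-control overhead, so real-world throughput will be somewhat lower. The function names are ours, purely for illustration.

```python
# Raw PCIe link bandwidth vs. Ethernet port bandwidth.
# Assumption: only line encoding is accounted for; TLP/DLLP overhead is ignored.

GT_PER_LANE = {"gen3": 8.0, "gen4": 16.0}  # gigatransfers per second, per lane
ENCODING_EFFICIENCY = 128 / 130            # 128b/130b encoding (Gen3 and Gen4)


def pcie_gbps(gen: str, lanes: int) -> float:
    """Raw usable bandwidth of a PCIe link in Gbps, one direction."""
    return GT_PER_LANE[gen] * ENCODING_EFFICIENCY * lanes


def can_feed(gen: str, lanes: int, ports: int, port_gbps: float = 100.0) -> bool:
    """True if the PCIe link is at least as fast as the combined Ethernet ports."""
    return pcie_gbps(gen, lanes) >= ports * port_gbps


if __name__ == "__main__":
    for gen, lanes, ports in [("gen3", 16, 2), ("gen3", 8, 1),
                              ("gen4", 16, 2), ("gen4", 8, 1)]:
        print(f"{gen} x{lanes}: {pcie_gbps(gen, lanes):6.1f} Gbps, "
              f"feeds {ports} x 100GbE: {can_feed(gen, lanes, ports)}")
```

Under these assumptions, a Gen3 x16 link tops out at roughly 126Gbps, which is short of two 100GbE ports, while a Gen4 x8 link offers that same bandwidth with half the lanes.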

We are showing the rear of the card just for completeness’ sake. There is not much on the rear of this card.

If you were wondering about the difference between this card and the AOC-S100G-i2C, the S100G used the Intel Red Rock Canyon FM10420 NIC. That was an interesting technology itself, but given the complexities of Red Rock Canyon, we expect the AOC-S100GC-i2C Columbiaville-based Intel Ethernet 800 series NIC to be more popular.

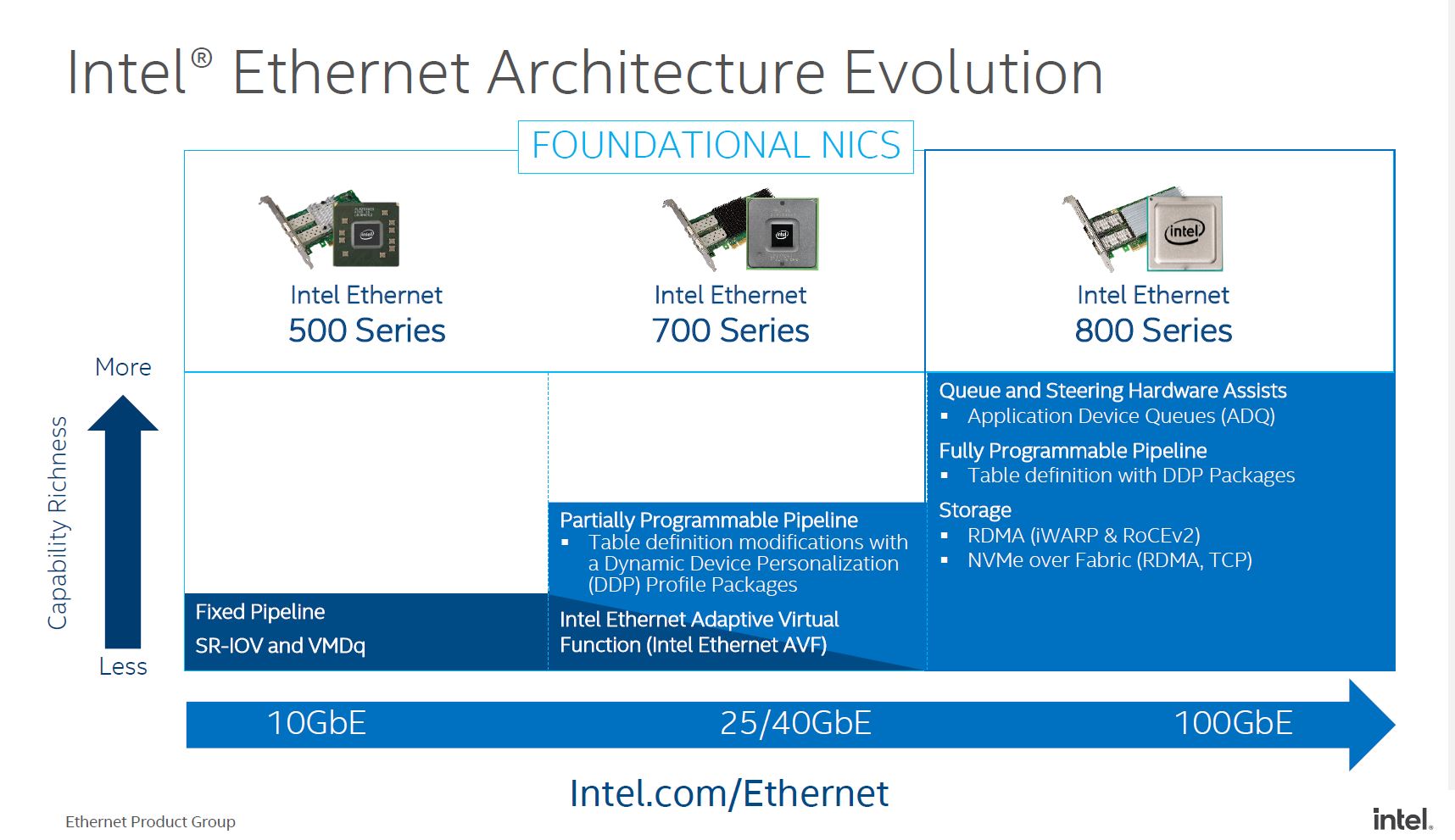

The physical attributes are only a part of the story with this NIC. As mentioned earlier, the new Intel Ethernet 800 series is part of what Intel considers its foundational NIC series, which means it is a successor to the Intel Ethernet 500 series and Ethernet 700 series.

As you can see here, Intel is showing a higher-level feature set than it did in previous generations. While we are not going to call this a SmartNIC or DPU, Intel is adding more functionality to the device. Some may see this as Intel trying to move up the stack. However, this is more of a necessity in order to operate in the 100GbE performance realm.

Next, we are going to discuss some of these key features of the Supermicro AOC-S100GC-i2C and why they are needed.

Intel Ethernet 800 Series Functionality

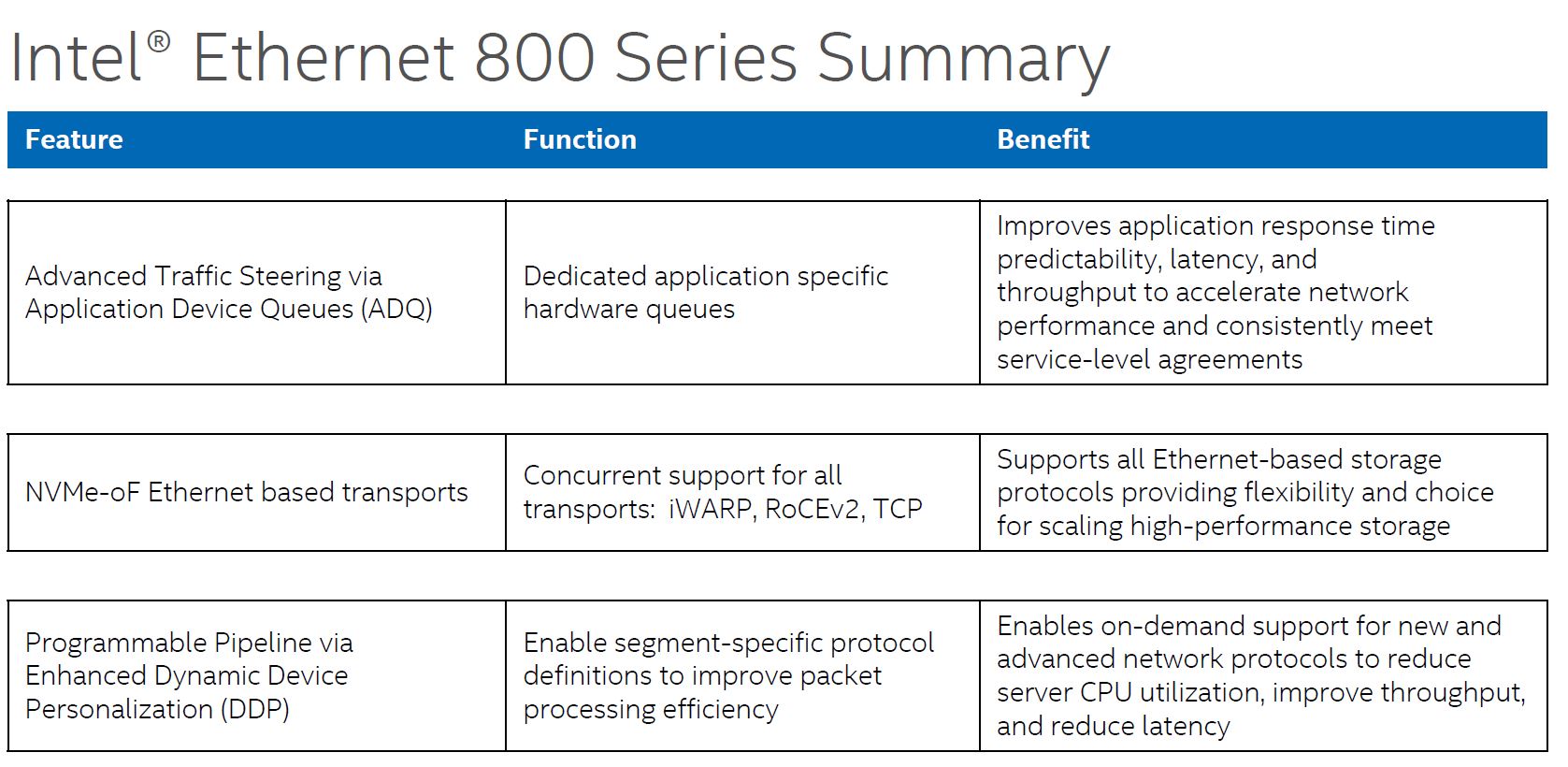

As we move into the 100GbE generation, NICs simply require more offload functionality. Functions that were manageable on CPU cores at 10GbE or 25GbE simply create too much CPU utilization at 100GbE speeds. Remember, dual 100GbE is not too far off from a PCIe Gen4 x16 slot’s bandwidth, which puts a lot of pressure on a system. With modern CPUs, if you fill every PCIe x16 slot with 100GbE NICs and try to run them at full speed with an application behind them, systems will simply not be able to cope. The Fortville dual 40GbE (XL710) NICs were on the edge of not having enough offload capabilities for many applications. They were known as lower-cost NICs rather than the most feature-rich ones, which makes sense given their positioning. Still, with the Ethernet 800 series, Intel needed to increase feature sets to stay competitive. To do so, Intel is relying primarily on three technologies: ADQ, NVMeoF, and DDP. We are going to discuss each.
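As a back-of-the-envelope illustration of why work that a CPU core could handle at 10GbE becomes a problem at 100GbE, the sketch below computes the worst-case packet rate and the time budget per packet for minimum-size (64-byte) Ethernet frames. The 20 bytes of per-frame wire overhead (preamble, start-of-frame delimiter, and inter-frame gap) are standard Ethernet; the function names are ours.

```python
# Worst-case packet rate and per-packet time budget at a given Ethernet line rate.

WIRE_OVERHEAD_BYTES = 20  # 7B preamble + 1B start-of-frame delimiter + 12B inter-frame gap


def packets_per_second(link_gbps: float, frame_bytes: int = 64) -> float:
    """Maximum frames per second on a link for a given frame size."""
    bits_on_wire = (frame_bytes + WIRE_OVERHEAD_BYTES) * 8
    return link_gbps * 1e9 / bits_on_wire


def ns_per_packet(link_gbps: float, frame_bytes: int = 64) -> float:
    """Time available to handle each frame, in nanoseconds, at line rate."""
    return 1e9 / packets_per_second(link_gbps, frame_bytes)


if __name__ == "__main__":
    for speed in (10, 25, 100):
        print(f"{speed:>3}GbE: {packets_per_second(speed) / 1e6:7.2f} Mpps, "
              f"{ns_per_packet(speed):6.2f} ns per 64-byte frame")
```

At 100GbE, minimum-size frames arrive every 6.72ns per port, versus 67.2ns at 10GbE. Real traffic uses larger frames on average, so this is the extreme case, but it shows how quickly per-packet CPU work stops scaling.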

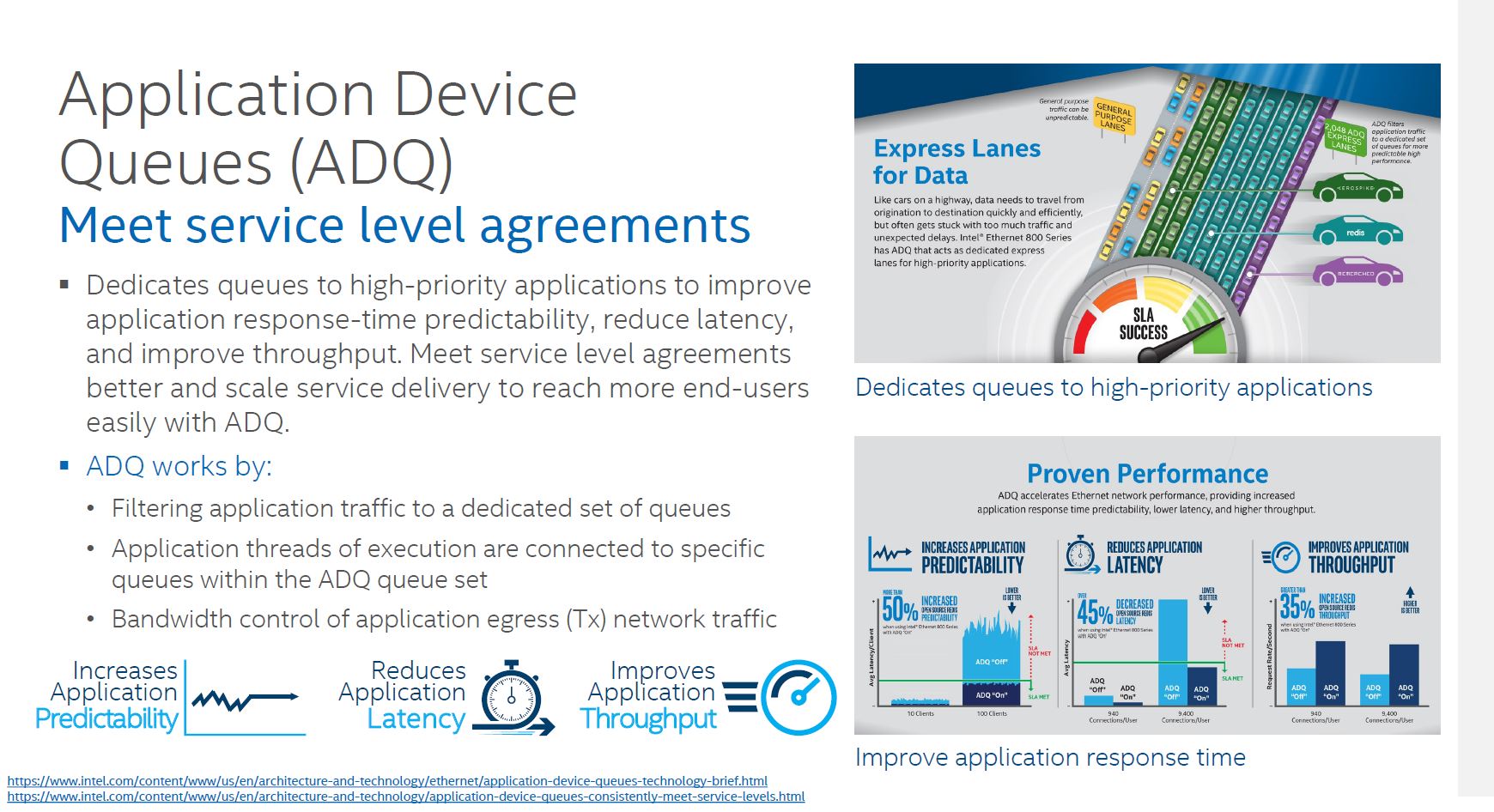

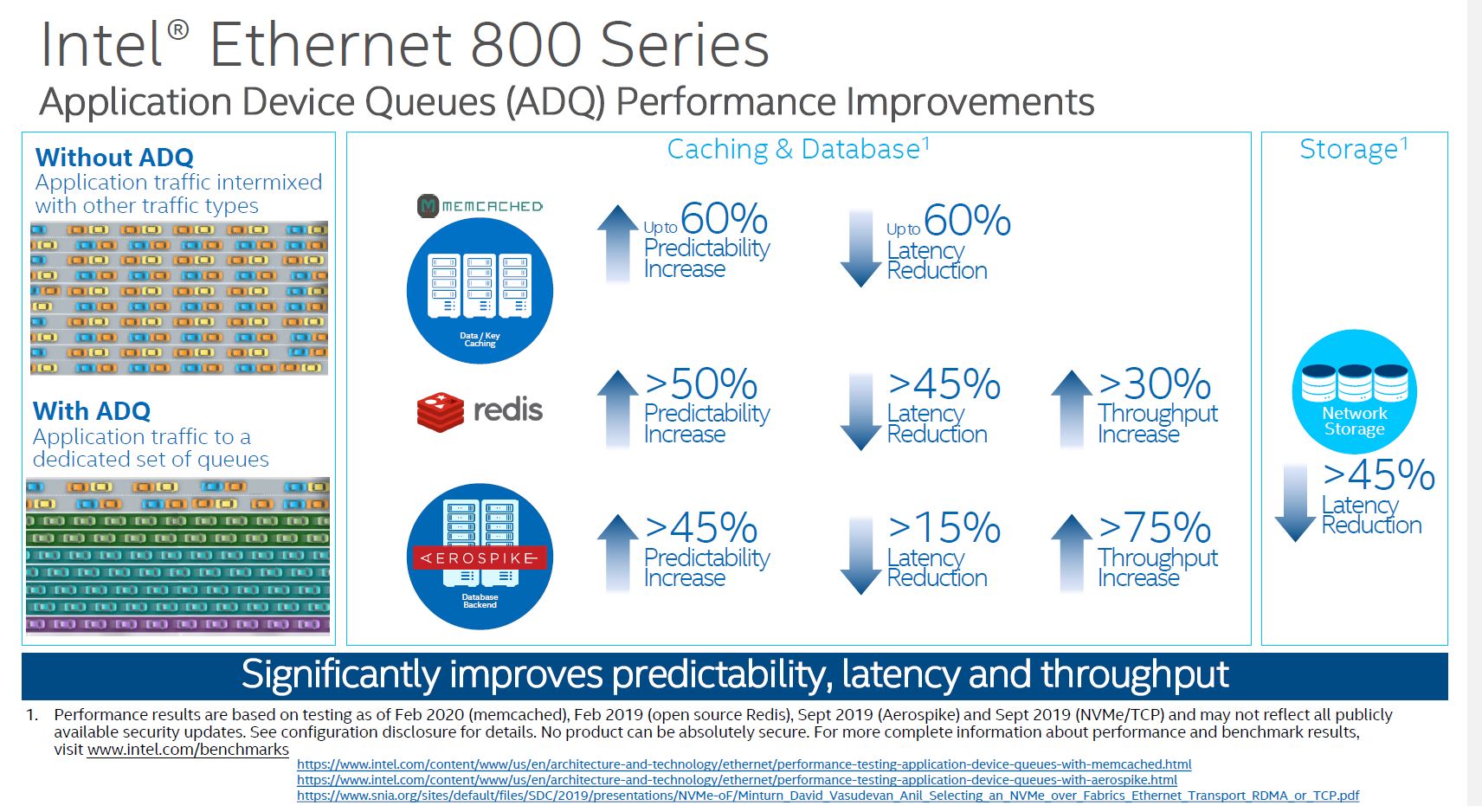

Application Device Queues (ADQ) are important on 100Gbps links. At 100GbE speeds, there are likely different types of traffic on the link. For example, there may be an internal management UI application that is OK with a 1ms delay every so often, but there can be a critical sensor network or web front-end application that needs a predictable SLA. That is the differentiated treatment that ADQ is trying to address.

Effectively, with ADQ, Intel NICs are able to prioritize network traffic based on the application.

When we looked into ADQ, one of the important aspects we found is that the prioritization needs to be defined. That is an extra step, so this is not necessarily a “free” feature since there is likely some development work involved. Intel has some great examples, with Memcached for instance. Still, in one server Memcached may be the primary application, while in another it may be an ancillary function, which means that prioritization needs to happen at the customer/ solution level. Intel is making this relatively easy, but it is an extra step.
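To illustrate why that prioritization has to be defined per deployment, here is a toy model of the idea: traffic is matched by protocol and destination port, then steered to a traffic class that owns a dedicated range of queues. This is a conceptual sketch only. It is not the Intel driver or any real configuration API, and the class names, queue counts, and server roles are hypothetical. Port 11211 is Memcached's default port.

```python
# Toy model of application-based queue steering (not a real NIC or driver API).
from dataclasses import dataclass


@dataclass(frozen=True)
class TrafficClass:
    name: str
    first_queue: int
    num_queues: int

    def queues(self) -> range:
        """Hardware queue indices reserved for this class."""
        return range(self.first_queue, self.first_queue + self.num_queues)


@dataclass(frozen=True)
class Rule:
    protocol: str
    dst_port: int
    traffic_class: str


class QueuePolicy:
    def __init__(self, classes, rules, default: str):
        self.classes = {c.name: c for c in classes}
        self.rules = {(r.protocol, r.dst_port): r.traffic_class for r in rules}
        self.default = default

    def classify(self, protocol: str, dst_port: int) -> TrafficClass:
        """Return the traffic class for a flow, falling back to the default class."""
        name = self.rules.get((protocol, dst_port), self.default)
        return self.classes[name]


# Hypothetical layout: 2 shared queues, 8 queues dedicated to the priority application.
CLASSES = [TrafficClass("default", 0, 2), TrafficClass("priority", 2, 8)]

# On a cache server, Memcached is the primary application and gets the dedicated queues.
cache_server = QueuePolicy(CLASSES, [Rule("tcp", 11211, "priority")], default="default")

# On a web server, Memcached is ancillary; the web front end is prioritized instead.
web_server = QueuePolicy(CLASSES, [Rule("tcp", 443, "priority")], default="default")

if __name__ == "__main__":
    for label, policy in (("cache", cache_server), ("web", web_server)):
        tc = policy.classify("tcp", 11211)
        print(f"{label} server: Memcached -> {tc.name}, queues {list(tc.queues())}")
```

The same Memcached flow lands in different classes on the two servers, which is the point: the NIC cannot know which application matters most, so someone has to write the rules.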

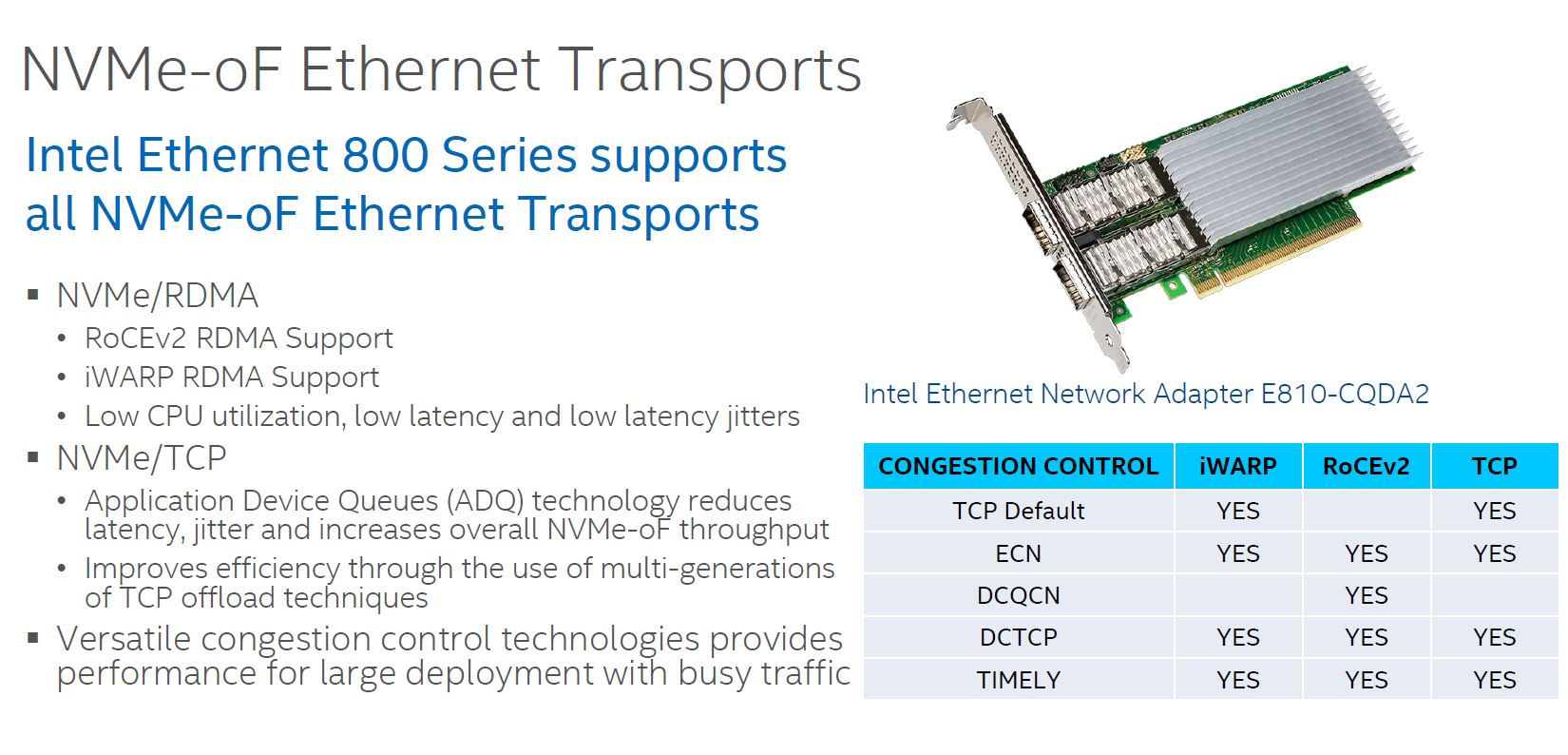

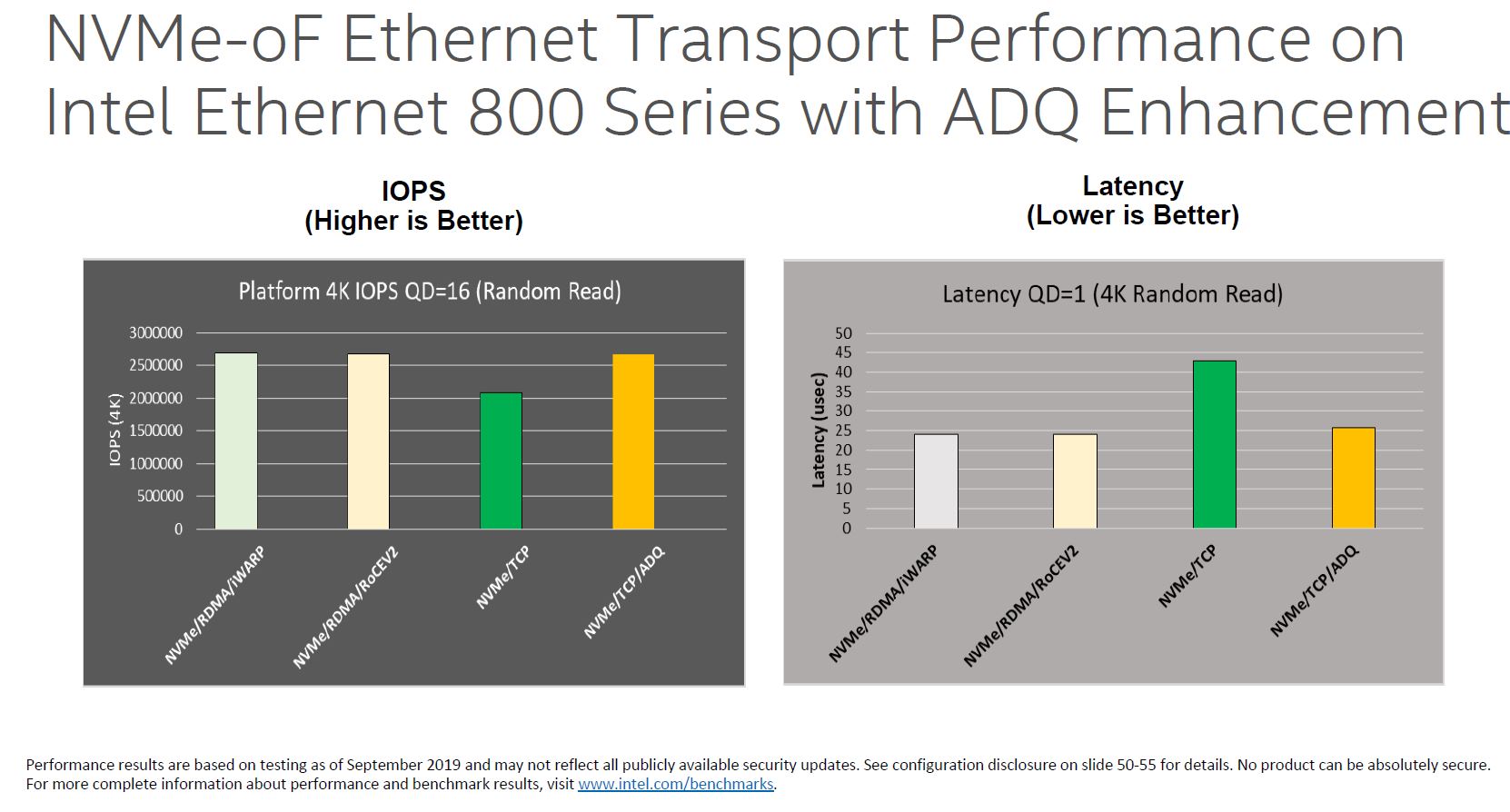

NVMeoF is another area where there is a huge upgrade. In the Intel Ethernet 700 series, Intel focused on iWARP for its NVMeoF efforts. At the same time, some of its competitors bet on RoCE. Today, RoCEv2 has become extremely popular. Intel is supporting both iWARP and RoCEv2 in the Ethernet 800 series.

The NVMeoF feature is important since that is a major application area for 100GbE NICs. A PCIe Gen3 x4 NVMe SSD is roughly equivalent to a 25GbE port worth of bandwidth so a dual 100GbE NIC provides about as much bandwidth as 8x NVMe SSDs in the Gen3 era. The PCIe Gen4 NVMe SSDs like the Kioxia CD6 PCIe Gen4 Data Center SSD we reviewed are getting close to being twice that speed, but many are still using Gen3 NVMe SSDs. By increasing support for NVMeoF, the Intel 800 series Ethernet NICs such as the AOC-S100GC-i2C become more useful.
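The drive-count estimate above can be checked with a few lines of arithmetic. This sketch uses the rule of thumb from the text (one Gen3 x4 SSD is about one 25GbE port) alongside the raw PCIe x4 link ceiling, which is an upper bound that actual drives do not reach. Only line encoding is accounted for, and the names are ours.

```python
# How many NVMe SSDs does it take to match a dual 100GbE NIC?

NIC_GBPS = 2 * 100.0                        # dual 100GbE
GT_PER_LANE = {"gen3": 8.0, "gen4": 16.0}   # gigatransfers per second, per lane
ENCODING_EFFICIENCY = 128 / 130             # 128b/130b encoding


def ssd_link_gbps(gen: str, lanes: int = 4) -> float:
    """Raw PCIe link ceiling for one SSD, in Gbps (an upper bound, not drive speed)."""
    return GT_PER_LANE[gen] * ENCODING_EFFICIENCY * lanes


def ssds_to_match(nic_gbps: float, per_ssd_gbps: float) -> float:
    """Number of SSDs whose combined bandwidth equals the NIC's."""
    return nic_gbps / per_ssd_gbps


if __name__ == "__main__":
    print("Rule of thumb (25Gbps per SSD):", ssds_to_match(NIC_GBPS, 25.0))
    for gen in ("gen3", "gen4"):
        print(f"{gen} x4 link ceiling: {ssd_link_gbps(gen):.1f} Gbps, "
              f"{ssds_to_match(NIC_GBPS, ssd_link_gbps(gen)):.1f} SSDs")
```

With the 25Gbps rule of thumb the answer is eight drives. Measured against the Gen3 x4 link ceiling it is a little over six, and with Gen4 x4 links it is roughly half that again.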

What is more, one can combine NVMe/TCP and ADQ to get closer to some of the iWARP and RoCEv2 performance figures.

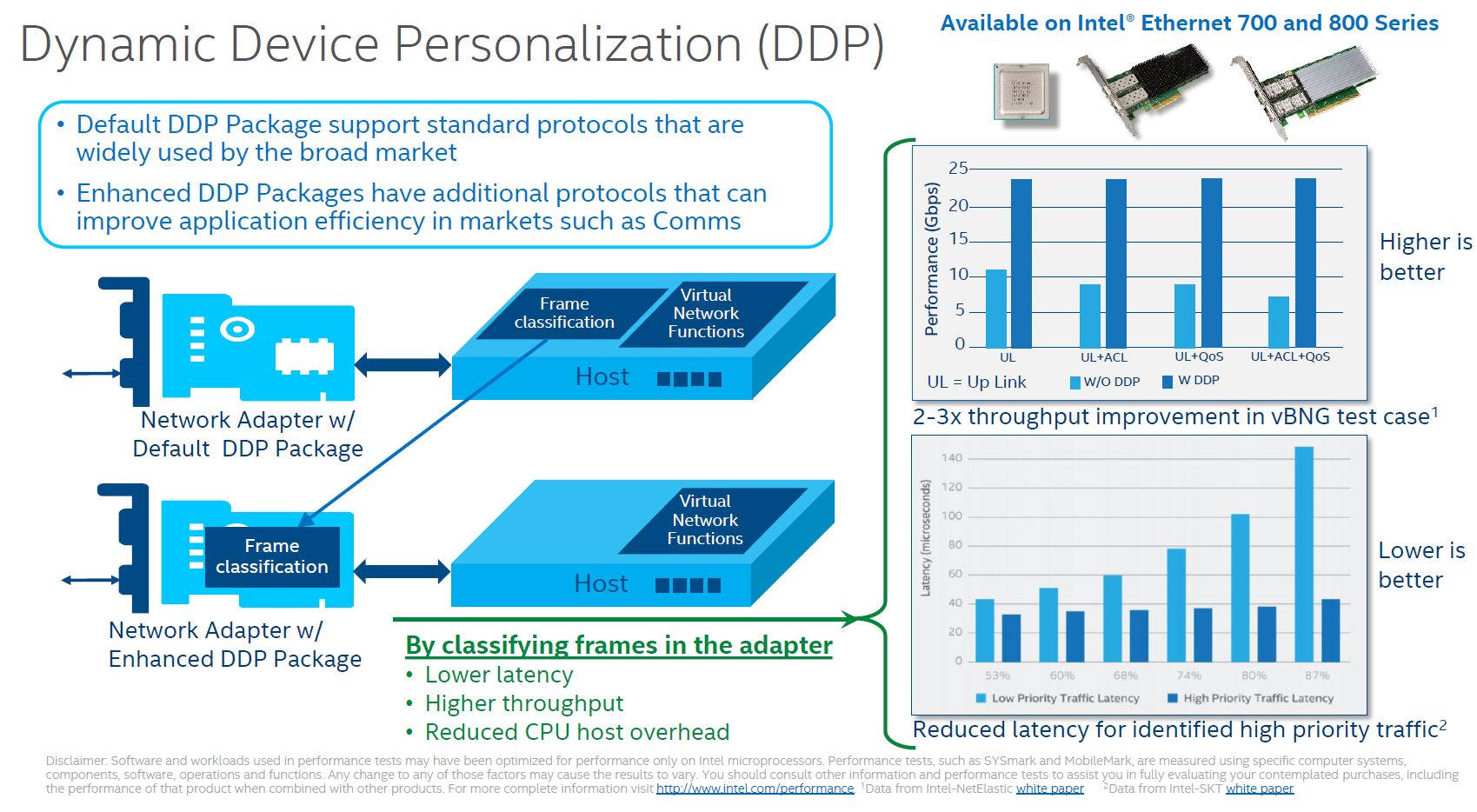

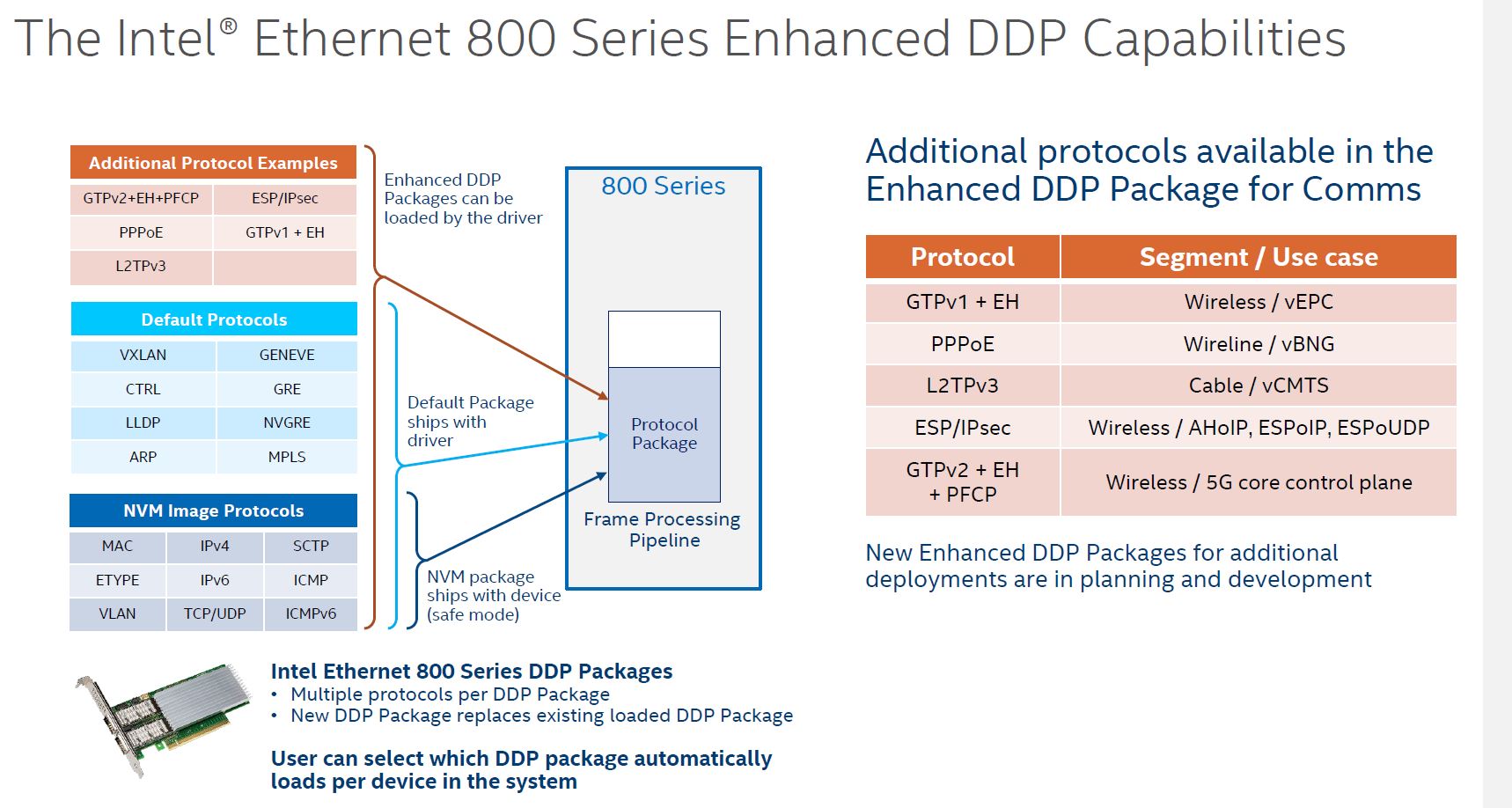

Dynamic Device Personalization, or DDP, is perhaps the other big feature of this NIC. Part of Intel’s vision for its foundational NIC series is that the costs are relatively low. As such, the ASIC can only be so large before costs stop being reasonable. While Mellanox tends to just add more acceleration/ offload in each generation, Intel built some logic that is customizable.

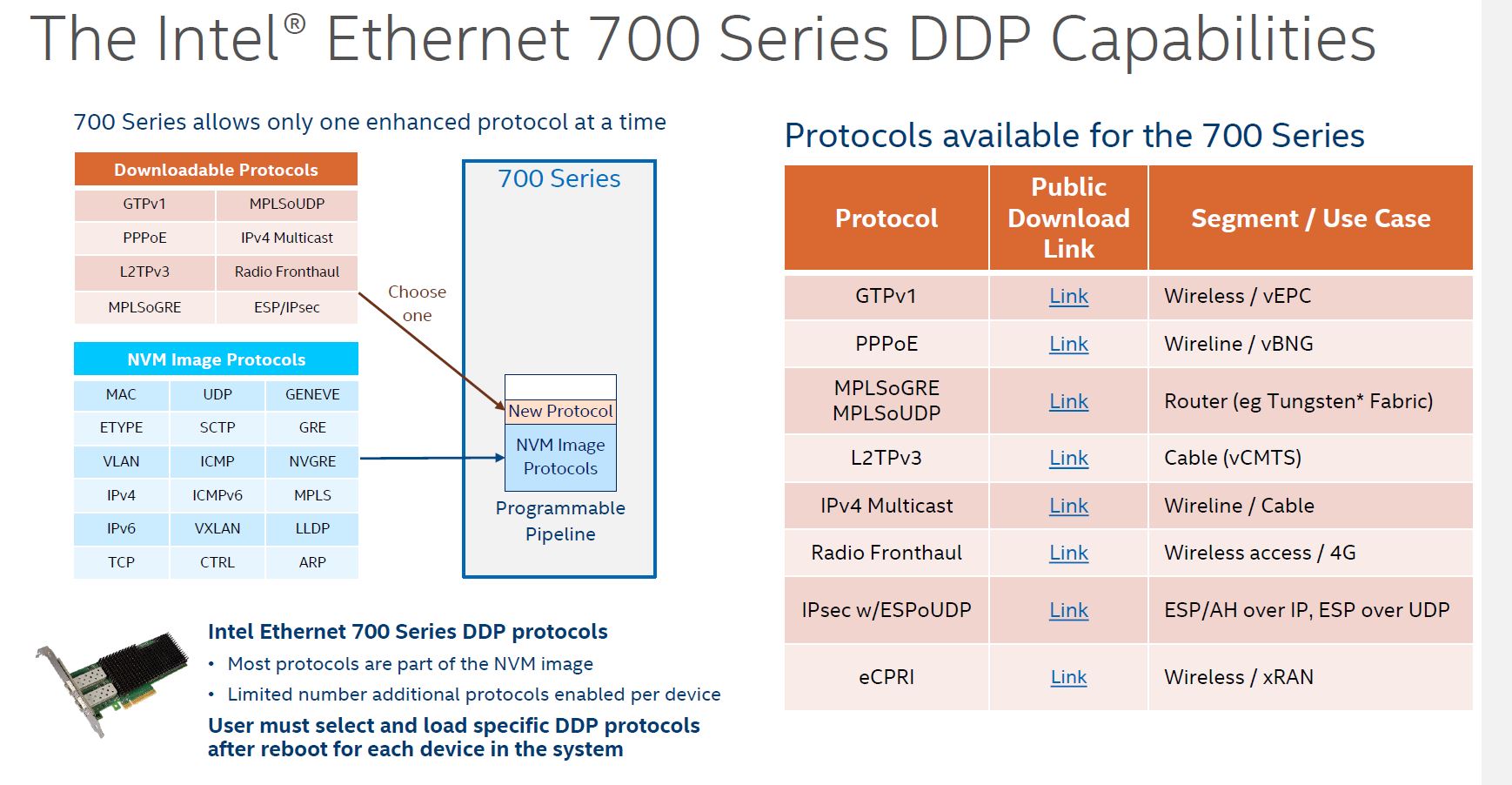

This is not a new technology. The Ethernet 700 series Fortville adapters had the feature, but it was limited in scope. Not only were there fewer options, but the customization was effectively limited to adding a single DDP protocol given the limited ASIC capacity.

With the Intel Ethernet 800 series, we get more capacity to load custom protocol packages in the NIC. Aside from the default package, the DDP for communications (Comms) package was freely available very early on.

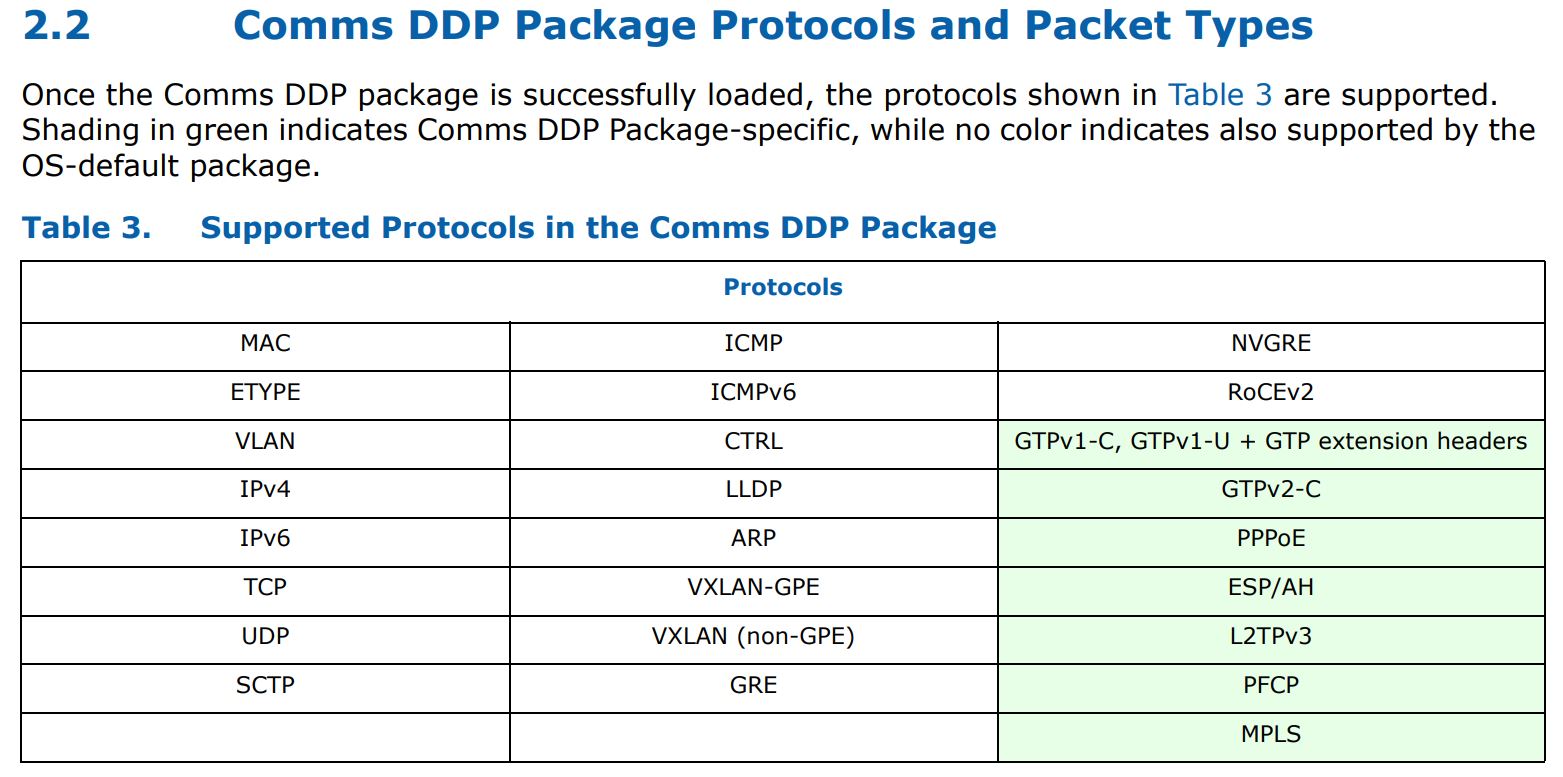

Here is a table of what one gets for protocols and packet types both by default, and added by the Comms DDP package:

As you can see, we get features such as MPLS processing added with the Comms package. These DDP portions can be customized as well so one can use the set of protocols that matter and have them load at boot while trimming extraneous functionality.

Next, we are going to take a quick look at some of our experience with the NIC including driver, performance, and power consumption before getting to our final words.


No Open VSwitch offload like the Mellanox/Nvidia ConnectX line. That’s unfortunate.