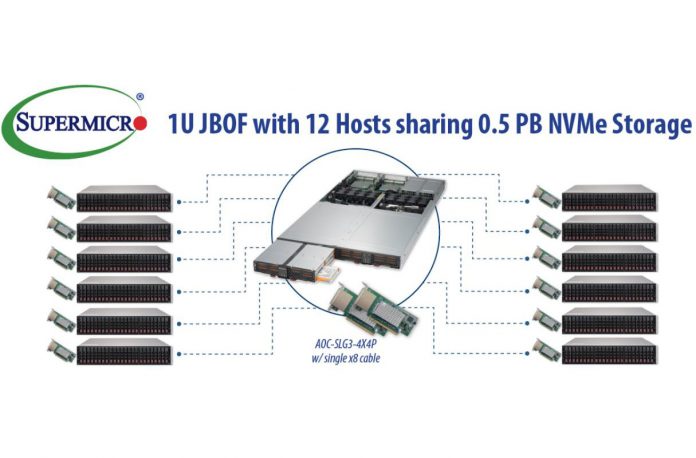

Supermicro has two new announcements today. First, the company is announcing a 1U storage solution with 32x hot-swap 2.5″ bays that can connect directly to up to 12 hosts and offers up to 0.5PB per U. The second is Supermicro RSD 2.1, which is based on the Intel Rack Scale Design (RSD) standard. Supermicro RSD 2.1 can be used to allocate storage from the new 1U system among hosts.
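The 0.5PB-per-U figure is easy to sanity-check. The announcement does not say which drive capacity it assumes, so the sketch below uses 15.36TB, a plausible high-capacity 2.5″ U.2 NVMe size, purely as an assumption:

```python
# Back-of-the-envelope check of the "up to 0.5PB per U" figure.
# ASSUMPTION: the drive capacity is not stated in the announcement;
# 15.36TB is used here only as a plausible high-capacity 2.5" U.2 NVMe size.
DRIVES_PER_1U = 32
ASSUMED_DRIVE_TB = 15.36  # decimal terabytes, as drive vendors quote them


def raw_capacity_pb(drives: int, drive_tb: float) -> float:
    """Raw (pre-RAID, pre-formatting) capacity in decimal petabytes."""
    return drives * drive_tb / 1000


if __name__ == "__main__":
    print(f"{raw_capacity_pb(DRIVES_PER_1U, ASSUMED_DRIVE_TB):.3f} PB per U")
```

With those assumed drives, 32 bays land at roughly 0.49PB of raw capacity, which rounds to the quoted 0.5PB per U.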

Supermicro 1U JBOF DAS Units

Supermicro’s release was a bit light on specifics; however, we found at least two multi-host JBOF DAS units. Supermicro is branding them as disaggregated NVMe solutions for composable architecture. While they are using HPE buzzwords, there is more innovation behind this than one might think. One of the two systems uses the Intel ruler SSD form factor. The second uses two 16-bay tray enclosures to provide up to 32x 2.5″ NVMe SSDs per 1U of rack space.

We will discuss how this differs from other typical designs in the industry shortly, but first it is worth spending time on this design itself. These systems are essentially large PCIe switch boxes: some PCIe lanes go to the drives, and other PCIe lanes go to host servers. You can directly attach up to twelve nodes to each JBOF using PCIe host adapter cards. The storage is then allocated using Supermicro RSD 2.1.
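One way to picture the switch-box model is as a binding table: each drive slot is visible to at most one host port at a time, and the management layer changes those bindings. The sketch below is a toy model under that assumption; the class and method names are hypothetical and do not represent Supermicro firmware or RSD interfaces:

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class JbofSwitch:
    """Toy model of a multi-host JBOF: drive slots and host ports on one PCIe switch.

    HYPOTHETICAL: names and behavior are illustrative only, not Supermicro's
    firmware or RSD interfaces. Assumes one owner per drive at a time.
    """

    drive_slots: int = 32
    host_ports: int = 12
    # drive slot -> host port that currently owns it
    bindings: dict[int, int] = field(default_factory=dict)

    def bind(self, drive: int, host: int) -> None:
        if not 0 <= drive < self.drive_slots:
            raise ValueError(f"no drive slot {drive}")
        if not 0 <= host < self.host_ports:
            raise ValueError(f"no host port {host}")
        owner = self.bindings.get(drive)
        if owner is not None and owner != host:
            # A drive must be released before another host can claim it.
            raise RuntimeError(f"drive {drive} already bound to host {owner}")
        self.bindings[drive] = host

    def unbind(self, drive: int) -> None:
        self.bindings.pop(drive, None)

    def drives_for(self, host: int) -> list[int]:
        return sorted(d for d, h in self.bindings.items() if h == host)
```

For example, binding slots 0 and 1 to host port 3 makes `drives_for(3)` return `[0, 1]`; moving slot 0 to host port 4 requires an explicit `unbind(0)` first, which mirrors the one-owner-per-drive assumption.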

At this point, you may be wondering, “but why?” This project started as a design for a major Supermicro customer. The essential idea is that one can consolidate NVMe flash into one or two boxes per rack. The customer can later decide how to allocate the NVMe drives and re-allocate them dynamically.

Why Disaggregated NVMe in Practice

That has major implications. Take the Supermicro BigTwin as an example. STH was the first site to review the Supermicro BigTwin architecture in the Intel Xeon E5 V4 generation. That particular solution had NVMe in the server, but a quick look at a BigTwin node shows why this approach can be powerful.

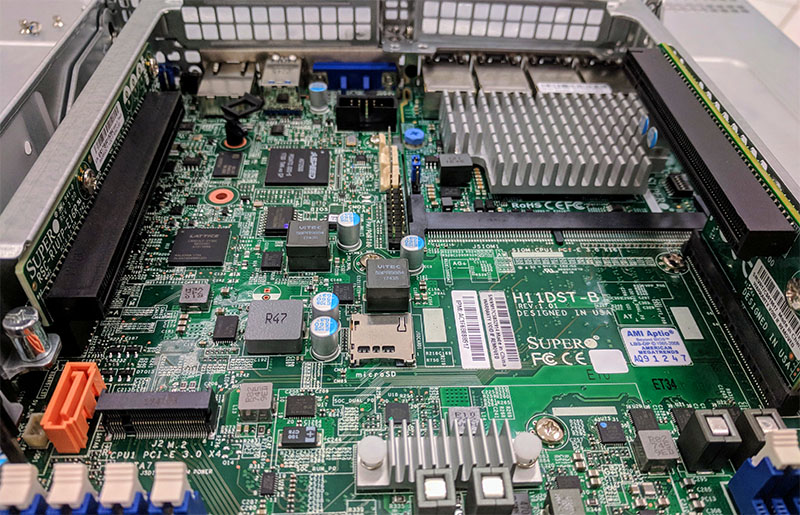

As you can see, Supermicro provides two PCIe 3.0 x16 slots in each BigTwin node. The current BigTwin has up to 6x 2.5″ U.2 NVMe SSD bays per node on the front of the chassis. Using one of the PCIe slots to deliver NVMe storage to the system allows a customer to use a 3.5″ drive chassis, or no drives at all, for better cooling and cost savings. Here is another view of a more modern AMD EPYC BigTwin, which we will review shortly.

There you can see that the node is configured with a 4x 10GBase-T SIOM networking module, but there are other options, such as 25GbE and 50GbE. The two PCIe x16 slots can be used to connect these NVMe JBOFs or to provide 100GbE networking. Essentially, this means you can pair one JBOF with three high-density 2U 4-node chassis (12 nodes in total) and disaggregate the NVMe storage.
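The rack math behind that pairing can be sketched quickly. The 12-host limit and 1U JBOF height come from the article; the assumption that each node consumes exactly one JBOF host connection is ours, and the function name is hypothetical:

```python
# Rough rack-layout check: one 1U JBOF shared by several 2U 4-node chassis.
# From the article: 12-host limit per JBOF, 1U JBOF height.
# ASSUMPTION: each node uses exactly one JBOF host connection.
JBOF_HOST_LIMIT = 12
JBOF_HEIGHT_U = 1


def layout(chassis: int, nodes_per_chassis: int = 4,
           chassis_height_u: int = 2) -> tuple[int, int]:
    """Return (total nodes, total rack units) for one JBOF plus `chassis` multi-node chassis."""
    nodes = chassis * nodes_per_chassis
    if nodes > JBOF_HOST_LIMIT:
        raise ValueError(
            f"{nodes} nodes exceed the {JBOF_HOST_LIMIT}-host limit of one JBOF")
    return nodes, chassis * chassis_height_u + JBOF_HEIGHT_U


print(layout(3))  # (12, 7)
```

Three 4-node chassis fill all twelve host connections exactly, so the whole group occupies 7U; a fourth chassis would need a second JBOF.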

By disaggregating the storage this way, you are not limited to the capacity of 6x NVMe drives per node, and you can allocate storage later. For example, you may only need 8TB of storage per node today but 40TB on one node two quarters after deployment; meeting that need becomes a software allocation instead of moving hardware around a data center. You also get the benefit of sharing relatively expensive flash storage among more nodes, a concept familiar to network storage teams.
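The 8TB-to-40TB example can be modeled as resizing a node’s share of a drive pool. This is a minimal sketch assuming capacity is handed out in whole drives of one uniform size (8TB here, chosen only to match the example); `FlashPool` and `resize` are hypothetical names, and the sketch ignores data migration, which a real deployment would have to handle before a drive changes owners:

```python
import math


class FlashPool:
    """HYPOTHETICAL pool model, not an RSD API.

    Assumes capacity is allocated in whole drives and every drive has the
    same size. Data migration when shrinking is deliberately ignored.
    """

    def __init__(self, drive_count: int, drive_tb: float) -> None:
        self.drive_tb = drive_tb
        self.free = list(range(drive_count))
        self.assigned: dict[str, list[int]] = {}

    def resize(self, node: str, target_tb: float) -> list[int]:
        """Grow or shrink `node`'s allocation to cover target_tb; return its drives."""
        needed = math.ceil(target_tb / self.drive_tb)
        drives = self.assigned.setdefault(node, [])
        while len(drives) > needed:  # shrink: return drives to the free pool
            self.free.append(drives.pop())
        if needed - len(drives) > len(self.free):
            raise RuntimeError("not enough free drives in the pool")
        while len(drives) < needed:  # grow: take drives from the free pool
            drives.append(self.free.pop(0))
        return list(drives)
```

Usage: with `pool = FlashPool(32, drive_tb=8.0)`, `pool.resize("node-a", 8)` returns `[0]`, and a later `pool.resize("node-a", 40)` returns `[0, 1, 2, 3, 4]` without touching the node’s chassis.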

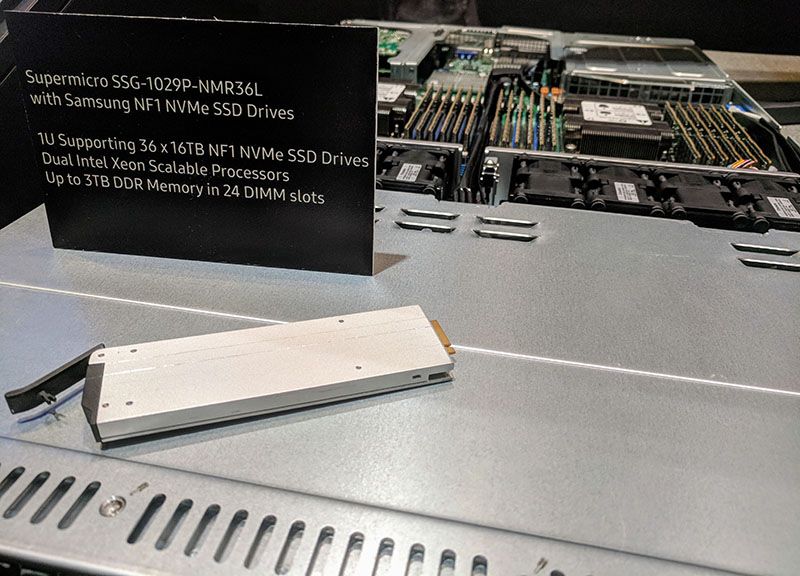

Contrast this with other high-density designs like the Supermicro SSG-1029-NMR36L we saw at the OCP Summit a few weeks ago. That system holds 36 NVMe SSDs, but they are all attached to a single dual-socket server. If that server goes offline for maintenance, all 36 SSDs go offline with it. Exporting and allocating that storage to other nodes usually means InfiniBand or NVMe-oF.

Supermicro shows off the solution with 12x 2U servers. Disaggregating NVMe has the potential to be a source of major cost savings, as 6-8 NVMe drives can easily account for half the cost of an entire node these days. Moving the drives out of the chassis does cap each host’s performance at its PCIe link to the JBOF (usually PCIe x16, or four NVMe drives’ worth of bandwidth), but it also brings higher utilization and greater infrastructure agility.
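The “four NVMe drives’ worth” figure follows from PCIe 3.0 signaling: 8 GT/s per lane with 128b/130b encoding. The sketch below computes theoretical one-direction bandwidth before protocol overhead, so real-world throughput will be somewhat lower; it assumes each U.2 drive uses an x4 link:

```python
# PCIe 3.0 signals at 8 GT/s per lane with 128b/130b line encoding.
GT_PER_S = 8.0
ENCODING = 128 / 130


def pcie3_gbytes_per_s(lanes: int) -> float:
    """Theoretical one-direction PCIe 3.0 bandwidth in GB/s (before protocol overhead)."""
    return lanes * GT_PER_S * ENCODING / 8  # 8 bits per byte


host_link = pcie3_gbytes_per_s(16)  # host adapter link, ~15.75 GB/s
per_drive = pcie3_gbytes_per_s(4)   # assumed x4 U.2 NVMe drive, ~3.94 GB/s
print(f"x16 host link: {host_link:.2f} GB/s")
print(f"x4 NVMe drive: {per_drive:.2f} GB/s")
print(f"drives that saturate the link: {host_link / per_drive:.0f}")
```

Because the per-lane rate cancels out, the ratio is simply 16 / 4 = 4: a host can allocate more than four drives for capacity, but beyond that point additional drives stop adding sequential bandwidth for that host.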

Supermicro RSD 2.1

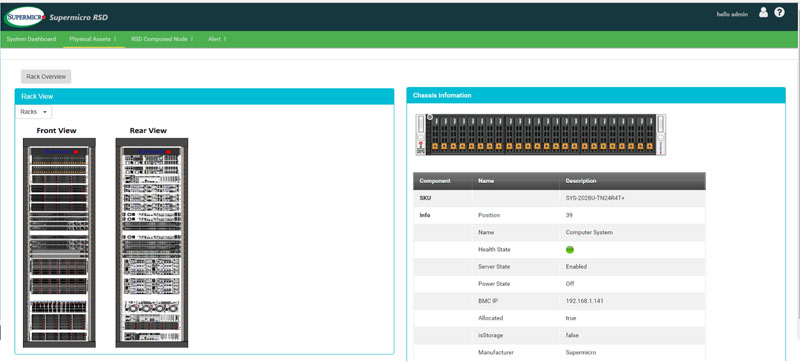

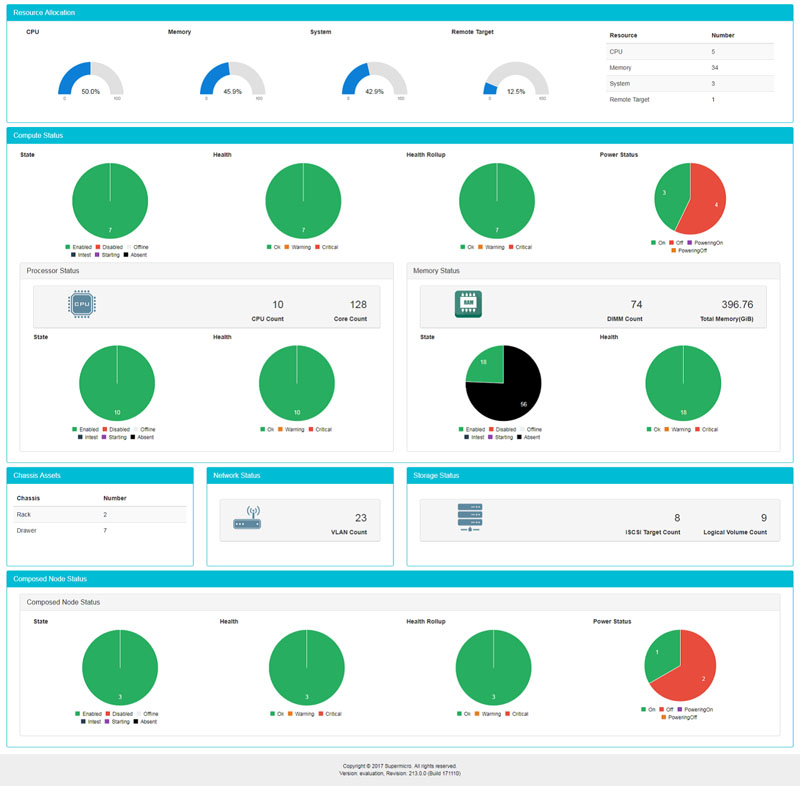

The initial Intel Rack Scale Design spec was released publicly in 2016, and it has been refined over the years. The basic goal is to disaggregate compute, storage, and memory and then manage resources through a common framework. Supermicro RSD 2.1 is the next step beyond that.

Supermicro RSD 2.1 uses standards such as the Redfish API to manage and monitor infrastructure. Here are some of the key features from the Supermicro press release:

- An interactive dashboard that provides datacenter administrators with an overview of all physical assets under RSD management

- An interactive, topological view of the physical rack and chassis in the Supermicro Pod Manager UI. Administrators can also select a chassis in the rack to drill down to component-level details such as BMC IP address, physical position in the rack, and so on.

- Analytics and telemetry covering system overview, utilization, CPU, memory, and composed nodes. The historical data not only helps users understand how their systems were used but can also help predict future system behavior.
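Because Redfish is a DMTF standard REST/JSON API, a small standard-library client can walk its resource collections. This sketch assumes the Pod Manager (or any Redfish-capable BMC) exposes the standard service root at `/redfish/v1` and accepts HTTP basic authentication; the host name and credentials in the usage comment are placeholders, and no Supermicro-specific endpoints are assumed:

```python
import base64
import json
import urllib.request


def member_paths(collection: dict) -> list[str]:
    """Extract member URIs from a Redfish collection (e.g. /redfish/v1/Systems)."""
    return [m["@odata.id"] for m in collection.get("Members", [])]


def get_json(base_url: str, path: str, user: str, password: str) -> dict:
    """GET a Redfish resource with HTTP basic auth and decode the JSON body."""
    req = urllib.request.Request(base_url.rstrip("/") + path)
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    req.add_header("Authorization", f"Basic {token}")
    req.add_header("Accept", "application/json")
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.load(resp)


# Usage against a live service (placeholder host and credentials):
# systems = get_json("https://pod-manager.example", "/redfish/v1/Systems",
#                    "admin", "secret")
# for path in member_paths(systems):
#     print(path)
```

The same two helpers work for `/redfish/v1/Chassis` or any other collection. Many BMCs ship self-signed certificates, so a real deployment would need to configure TLS trust, and larger tools usually switch to Redfish session tokens instead of sending basic credentials on every request.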

Key target segments for Supermicro RSD 2.1 are telcos and organizations operating infrastructure at scale. Supermicro’s approach of building its management suite on industry-standard tools contrasts with more proprietary approaches such as Dell EMC iDRAC, HPE iLO, and Lenovo IMM.

Final Words

The Supermicro press release today focused on Supermicro RSD 2.1, but it also contained hints of next-generation architectures. The disaggregated NVMe storage solution is somewhat akin to the OCP Lightning designs, yet built for organizations whose personnel and processes are accustomed to more standard enterprise hardware and software. Disaggregation paired with RSD management is how larger installations are approaching direct-attached flash these days.

I looked up the press release after seeing this. StorageReview copied the PR, so that didn’t help. STH’s explanation actually explains why I should care about this. I hope you guys review this. It looks like a great solution, but I want to know how it works long term. We buy several million of Supermicro servers a year and it’s weird that I have to come here to understand what they’re releasing and why. Good work. Thanks for putting in extra effort and not just copying the PR like everyone else.

Can I vote up Alex Weinberg? This is useful. The press release sucked. They should hire STH to write their PRs or is that against the rules?

What cards go into the host servers to connect to the external array?

Jared, AOC-SLG-4X4P is what it says in the first picture on the page, so I’d say that?

Great article. What’s the cost though? Is the only way to use this with RSD? How much does RSD cost?

I get the composable benefit, but is this a viable solution if you just want to put in a set of NVMe drives for every 10-12 servers?

Imagine this as an NVMe cache tier for big JBOD Ceph nodes!