One thing that virtually every major storage player and enterprise has been using for the past few years, and continues to use, is a tiered storage approach. This is mainly because lower capacity, higher-cost drives tend to perform better than larger, less expensive alternatives. The basic premise is to keep the most frequently used data on the fastest physical storage so that more transactional requests can be completed quickly. Augmenting this fast storage is lower cost, high-capacity storage that keeps less frequently accessed data online. This guide is meant to be a primer on how this works in a few common scenarios for small businesses and home servers. It should be noted that tiered storage makes less sense for home servers that primarily store media files, since waiting an extra second or two before hours of relatively low bandwidth sequential transfers matters little, and such transfers are not taxing on storage subsystems.
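The basic premise of tiering (hottest data on the fastest storage) can be illustrated with a minimal sketch. It assumes a hypothetical access log of block IDs and a fast tier that holds a fixed number of blocks; real systems track access at much finer granularity and over time, so this is only the core idea of ranking by access frequency.

```python
from collections import Counter


def place_blocks(access_log, fast_capacity):
    """Split blocks into a fast tier and a slow tier by access count.

    access_log: sequence of block IDs, one entry per access (hypothetical input).
    fast_capacity: number of blocks the fast tier can hold (assumed fixed).
    """
    counts = Counter(access_log)
    # most_common() orders by count; ties keep first-seen order.
    ranked = [block for block, _ in counts.most_common()]
    fast = set(ranked[:fast_capacity])   # hottest blocks go to fast storage
    slow = set(ranked[fast_capacity:])   # everything else stays on capacity storage
    return fast, slow


log = ["os", "app", "doc1", "app", "os", "app", "movie", "doc1", "os"]
fast, slow = place_blocks(log, fast_capacity=2)
print(sorted(fast))  # ['app', 'os']
print(sorted(slow))  # ['doc1', 'movie']
```

The single "movie" access lands on the slow tier, which mirrors the point above about media files: infrequent sequential reads gain little from fast storage.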

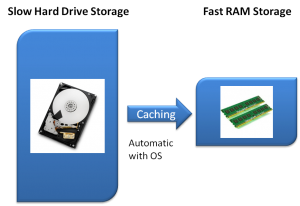

Hard Drives and RAM: The Most Basic Storage Tier Example

The easiest place to start is with something most users have a lot of experience with: a PC with a fixed amount of RAM and traditional rotating hard drives. In this simplified example, a user starts a Windows PC and is in an environment where no applications have been launched. All application and document storage is on the rotating hard drive, and RAM utilization is generally low (assuming there is a healthy amount of RAM in the system). Once an application is launched, the operating system copies some portion of the program and its data from slow, non-volatile storage (the hard drive) to fast, volatile storage (RAM).

The net effect is that the system reads the data it needs from fast RAM, with bandwidth in the multiple GB/s range and nanosecond-scale access times, instead of from a hard drive with 60-160MB/s of bandwidth and access times of several milliseconds. That is why initial loads seem to take a long time while subsequent tasks take less time in desktop scenarios, and why, in many cases, adding RAM can improve both system “feel” and performance.
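A quick worked calculation shows why the RAM hit rate matters so much. This sketch assumes illustrative latencies of about 100 ns for DRAM and 8 ms for a 7,200 rpm hard drive (assumptions, not measurements) and computes the average access time when a given fraction of requests is served from RAM.

```python
def effective_access_time_ns(hit_rate, ram_ns=100.0, disk_ns=8_000_000.0):
    """Average access time when a fraction hit_rate of requests hits RAM.

    ram_ns and disk_ns are illustrative assumptions (roughly 100 ns for DRAM,
    8 ms for a 7,200 rpm hard drive), not measured values.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    # Weighted average of the two tiers' access times.
    return hit_rate * ram_ns + (1.0 - hit_rate) * disk_ns


for h in (0.0, 0.9, 0.99):
    print(f"hit rate {h:.2f}: {effective_access_time_ns(h) / 1e6:.3f} ms")
# hit rate 0.00: 8.000 ms
# hit rate 0.90: 0.800 ms
# hit rate 0.99: 0.080 ms
```

Even at a 90% hit rate the disk still dominates the average, so anything that raises the hit rate (such as more RAM for caching) has an outsized effect on how responsive the system feels.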

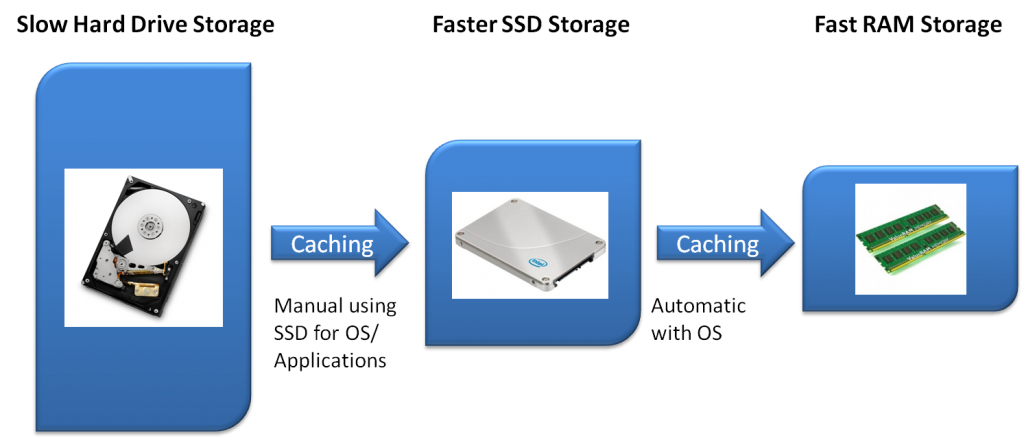

Hard Drives, Solid State Drives, and RAM: A Faster Storage Tiering Scheme

One of the most popular enthusiast configurations today is to use 3.5″ disks for media and other storage that does not depend heavily on performance. For the operating system and applications, enthusiasts use solid state disks, which offer much higher throughput and random I/O performance. This manual provisioning of random I/O sensitive applications and data to flash storage is the important differentiator in this model because it greatly improves system responsiveness.

Depending on mass storage requirements, the 3.5″ hard drives can also be installed in a NAS, with that storage layer accessed over a Gigabit LAN connection. One advantage of putting 3.5″ storage in a NAS is that its files can be easily accessed over the network by a host of devices, including desktops, laptops, tablets like the Apple iPad, and smartphones running iOS or Android.

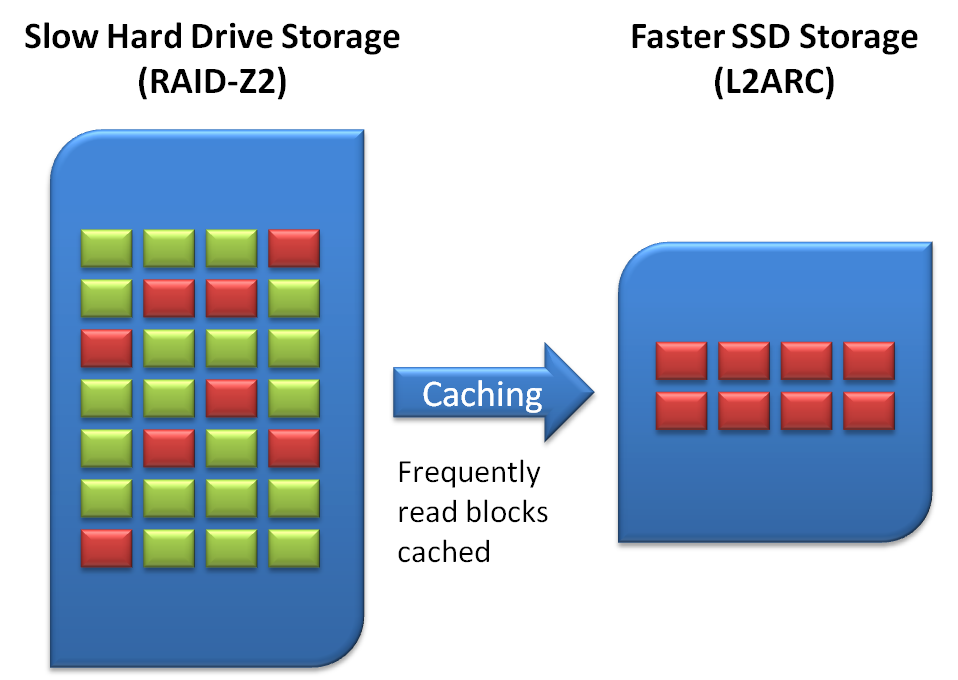

Hard Drives, Solid State Drives, and RAM: ZFS Caching and Intel’s Z68 Conceptual Preview

Although the current enthusiast practice of pairing hard drives with solid state drives generally works, it is not the most efficient way to cache commonly accessed data, because installing all applications to a solid state drive does not take actual usage into account. ZFS has, for quite a while now, used both RAM and solid state drives to cache frequently used data in the fastest available storage layer. While Windows 7 and OS X can use solid state drives, and include some minor tweaks for them, they do not intelligently allocate solid state drives in this manner; Windows essentially treats them as standard disks.

ZFS uses the solid state drive differently. Developed for enterprise environments, ZFS has algorithms that distinguish between frequently and less frequently accessed information at the block level. To see why this is better, imagine a user who has Microsoft Office installed and uses PowerPoint frequently. Assume also, for this example, that the user has installed, but does not use, features such as animated transitions and clip art libraries. Under the earlier scheme described in “Hard Drives, Solid State Drives, and RAM: A Faster Storage Tiering Scheme,” the animated transition data and clip art would sit on expensive fast storage (the SSD) even though they are never used.
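The PowerPoint example can be put into numbers. The component names and sizes below are hypothetical, and the sketch works per component for readability, whereas ZFS tracks usage per block; the point is only how much SSD space a whole-install approach spends on unused data compared with a usage-aware one.

```python
# Hypothetical Office install: component -> (size in MB, used by this user?)
OFFICE = {
    "powerpoint_core": (400, True),
    "shared_libraries": (150, True),
    "animated_transitions": (120, False),
    "clip_art_library": (900, False),
}


def ssd_footprint_mb(components, usage_aware):
    """SSD space consumed by the install.

    usage_aware=False models installing everything to the SSD;
    usage_aware=True models caching only data that is actually used.
    """
    total = 0
    for size_mb, used in components.values():
        if used or not usage_aware:
            total += size_mb
    return total


print(ssd_footprint_mb(OFFICE, usage_aware=False))  # 1570
print(ssd_footprint_mb(OFFICE, usage_aware=True))   # 550
```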

By interpreting usage patterns intelligently, the system uses faster storage tiers more effectively. This generally means a smaller amount of costly NAND storage is needed to maintain the performance benefits. One drawback of this caching is that the storage system needs to learn usage patterns. Writing every piece of data that is read to the SSD immediately would be undesirable because it would cause excessive writes to the SSD, so ZFS populates the L2ARC (SSD) tier gradually. Because of this learning period, more data is read from the hard drives during first use and after usage patterns change.
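The trade-off between learning speed and SSD writes can be sketched with a toy read cache. This is not the ZFS ARC/L2ARC algorithm; it is a simplified stand-in under stated assumptions: a block becomes eligible for the SSD only after a hypothetical `promote_after` reads, at most `writes_per_tick` blocks are copied per tick, and the SSD evicts its least recently used block when full.

```python
from collections import Counter, OrderedDict


class ThrottledSSDCache:
    """Toy SSD read cache that fills slowly, in the spirit of (not a copy of) L2ARC.

    promote_after and writes_per_tick are hypothetical knobs, not ZFS tunables.
    """

    def __init__(self, capacity, promote_after=2, writes_per_tick=1):
        if capacity < 1:
            raise ValueError("capacity must be at least 1 block")
        self.capacity = capacity
        self.promote_after = promote_after
        self.writes_per_tick = writes_per_tick
        self.ssd = OrderedDict()   # cached blocks, least recently used first
        self.reads = Counter()     # read counts for blocks not yet on the SSD
        self.pending = []          # eligible blocks waiting for a write slot
        self.ssd_writes = 0

    def read(self, block):
        """Return 'ssd' on a cache hit, otherwise 'hdd'."""
        if block in self.ssd:
            self.ssd.move_to_end(block)  # refresh LRU position
            return "ssd"
        self.reads[block] += 1
        if self.reads[block] == self.promote_after:
            self.pending.append(block)   # queue once; one-off reads never qualify
        return "hdd"

    def tick(self):
        """Copy up to writes_per_tick pending blocks to the SSD."""
        for _ in range(min(self.writes_per_tick, len(self.pending))):
            block = self.pending.pop(0)
            if len(self.ssd) >= self.capacity:
                self.ssd.popitem(last=False)  # evict least recently used block
            self.ssd[block] = None
            del self.reads[block]
            self.ssd_writes += 1


cache = ThrottledSSDCache(capacity=2)
results = []
for block in ["a", "b", "c", "a", "b", "a"]:
    results.append(cache.read(block))
    cache.tick()
print(results)  # ['hdd', 'hdd', 'hdd', 'hdd', 'hdd', 'ssd']
```

The first reads all go to the hard drive while the cache "learns," and block "c", read only once, never costs an SSD write. Raising `promote_after` or lowering `writes_per_tick` reduces SSD wear at the price of a longer warm-up.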

This type of storage tiering and SSD caching is conceptually similar to both Marvell hybrid storage as found on their 88SE9130 controller and what Intel will most likely introduce with its Z68 Sandy Bridge chip set. That is precisely why many users are anxiously awaiting Intel’s Z68 caching technology.

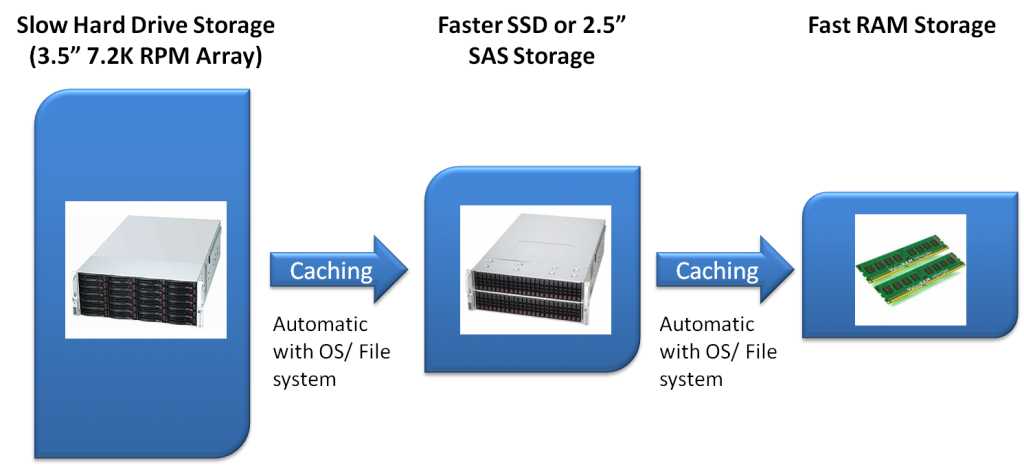

Hard Drives, Solid State Drives, and RAM: Enterprise Applications

In larger enterprise systems, one typically sees 3.5″ SAS or SATA disks as the slowest storage tier. More frequently accessed data is then stored on faster 10K or 15K rpm 2.5″ SAS drives, often in other enclosures. Finally, large memory sizes are used to cache data, if possible in main system memory. That is why dual LGA 1366 Xeon systems that are meant for storage applications can hold up to 192GB of RAM.

The reason one uses SSDs and 15K RPM drives in enterprise applications is that the traditional response to performance issues was simply to add more spindles to an array, thereby adding performance. The major issue with this approach is that running many drives with only a small percentage of their capacity in use, purely to meet performance needs, requires additional rack space and power. Smaller 2.5″ drives deliver the same IOPS in a smaller footprint and with lower power consumption, which is why the transition from performance 3.5″ drives to 2.5″ drives occurred so quickly.
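The "add more spindles" problem is easy to quantify. The per-drive IOPS and capacity figures below are rough rules of thumb assumed for illustration, as are the 5,000 IOPS target and 2TB data set, and RAID write penalties are ignored. The sketch computes how many drives are needed to hit the IOPS target and what fraction of their raw capacity the data actually fills.

```python
import math


def drives_for_iops(target_iops, iops_per_drive):
    """Minimum number of drives whose combined random IOPS meets the target.

    Ignores RAID write penalties and controller limits (a simplification).
    """
    return math.ceil(target_iops / iops_per_drive)


# Rule-of-thumb figures, assumed for illustration: (IOPS per drive, capacity in GB)
DRIVE_TYPES = {
    "3.5in 7.2K SATA 2TB": (80, 2000),
    "2.5in 15K SAS 146GB": (180, 146),
}

TARGET_IOPS = 5000  # hypothetical workload requirement
DATA_GB = 2000      # hypothetical amount of data actually stored

for name, (iops, capacity_gb) in DRIVE_TYPES.items():
    n = drives_for_iops(TARGET_IOPS, iops)
    used_pct = 100 * DATA_GB / (n * capacity_gb)
    print(f"{name}: {n} drives, {used_pct:.1f}% of raw capacity used")
# 3.5in 7.2K SATA 2TB: 63 drives, 1.6% of raw capacity used
# 2.5in 15K SAS 146GB: 28 drives, 48.9% of raw capacity used
```

Under these assumptions the large drives meet the performance target only by leaving almost all of their capacity idle, which is exactly the rack space and power cost described above.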

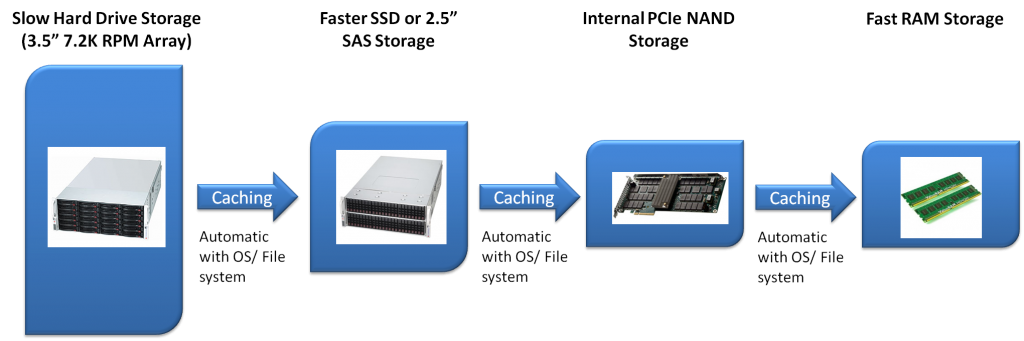

Hard Drives, Solid State Drives, and RAM: Enterprise Storage and PCIe Solid State Cache

The larger enterprise storage chain addresses both the power consumption (including heat) and rack space that additional enclosures require, and the need for more performance. 3.5″ SATA or SAS drives are used for mass storage (potentially including disk-based backup instead of tape), while 2.5″ and/or 3.5″ SAS drives or SSDs serve as the next storage tier. In these large systems, disks are often housed in multiple shelves, requiring a Fibre Channel, SAS, or Fibre Channel over IP connection between the dedicated controller (or controllers) and the disks.

To meet the need for more performance, many vendors, such as HP, NetApp (PAM II module pictured above), and EMC, are using local NAND cache, often on a PCIe add-in card. This places very high performance solid state caching inside the controller enclosure rather than behind external cabling. Configurations such as 4TB of NetApp’s PAM II PCIe modules are probably out of reach for most small businesses and home server aficionados, but they provide great value in enterprise applications. As an aside, this is similar to using a hardware RAID controller that can incorporate SSD caching on the card.
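A read in this kind of storage chain is served by the fastest tier that holds the block. The sketch below walks the tiers in order; the tier list, latencies (in microseconds), and block layout are all assumed, rough figures for illustration, not vendor specifications. It shows how a PCIe flash tier inside the controller keeps hot reads away from the disk shelves.

```python
# Tiers from fastest to slowest. Latencies are rough, assumed figures in
# microseconds for illustration only, not vendor specifications.
TIERS = [
    ("controller RAM", 0.1),
    ("PCIe flash cache", 100.0),
    ("2.5in 15K SAS", 5_000.0),
    ("3.5in 7.2K SATA", 8_000.0),
]


def read_latency_us(block, contents):
    """Return (tier name, latency) for the fastest tier holding block.

    contents maps tier name -> set of block IDs (hypothetical layout).
    The slowest tier is treated as the home of every block.
    """
    for name, latency in TIERS:
        if block in contents.get(name, set()):
            return name, latency
    # Not cached in any faster tier: served from the capacity tier.
    return TIERS[-1]


contents = {
    "controller RAM": {"idx"},
    "PCIe flash cache": {"idx", "hot1", "hot2"},
    "2.5in 15K SAS": {"warm"},
}
workload = ["idx", "hot1", "hot2", "warm", "cold", "idx"]
total = sum(read_latency_us(b, contents)[1] for b in workload)
print(f"average latency: {total / len(workload):.1f} us")  # average latency: 2200.0 us
```

Moving "warm" into the flash cache in this toy model would cut its read from 5,000 to 100 microseconds, which is the kind of gain that justifies large PCIe cache modules in enterprise controllers.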

Conclusion

Hopefully the above primer gives readers a simple framework for understanding the various forms of tiered storage used to meet both capacity and performance requirements. For many applications, such as backup servers, such elegant storage setups are not really necessary. Conversely, for servers handling a high number of transactions, or VDI servers (especially with Windows Server 2008 R2 SP1 Hyper-V’s Dynamic Memory and RemoteFX technologies), caching becomes a very important part of the storage architecture. In the near future, especially with the rumored features of Intel’s Z68 chip set and other potential applications, this technology is poised to come to desktop users.