Users running file servers such as Windows Server 2008 R2, Windows Home Server, Linux variants (including Openfiler), OpenSolaris, FreeBSD (including FreeNAS), and so forth often need more storage than their server can physically hold. One option is to add more servers to the SAN. Another is to add more storage to an existing server, and adding a second (or third) enclosure for additional disks is a great way to do that. This allows a server administrator to build a massive DAS storage system very inexpensively for applications like iSCSI, backup storage, media storage, virtual machine storage, and so on. The ensuing research often leads IT professionals to JBOD DAS enclosures with SAS expanders built in.

While a common solution, large JBOD enclosures with built-in SAS expanders can be quite pricey. For those unfamiliar with them, these are direct attached storage (DAS) enclosures that connect directly to a server or system via one or more cables and hold numerous hot-swap drives. The included SAS expanders are not meant to run RAID natively, but rather to allow numerous disks to connect directly to a server through fewer cables. In essence, for this 20-drive enclosure, a single SFF-8088 cable connects the DAS SAS expander enclosure to the main server for all twenty JBOD drives.

This does not mean that the drives behind the SAS expander cannot be used in RAID. Quite the contrary: SAS expanders often have their disks controlled by a host RAID controller. The cost savings with this setup come from SAS expanders being the cheaper way to reach high port counts.

Many will note that one can easily purchase a SAS Expander enclosure from a variety of sources. These solutions often range from $2,000 to over $3,000 per 3U or 4U enclosure. My goal is to show you how to DIY a JBOD DAS enclosure with a SAS Expander for half that (potentially less). The unit will be able to support over 40TB of data today, and by the end of 2010, when 3TB and 4TB disks are out, it will scale to 60TB to 80TB in a single 4U enclosure.
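As a rough check on those capacity figures, the sketch below multiplies the bay count by the drive size. It assumes all 20 hot-swap bays are filled with identical drives and reports raw capacity only (no RAID or filesystem overhead). The function name is purely illustrative.

```python
def raw_capacity_tb(bays: int, drive_tb: int) -> int:
    """Raw (pre-RAID) capacity in TB for an enclosure full of identical drives."""
    return bays * drive_tb


BAYS = 20  # the 20-drive enclosure used in this guide

# 2TB disks are available today; 3TB and 4TB are the expected future sizes.
for drive_tb in (2, 3, 4):
    print(f"{BAYS} x {drive_tb}TB = {raw_capacity_tb(BAYS, drive_tb)}TB raw")
```

Usable space will be lower once parity drives and hot spares are set aside.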

In the near future, I will have some updates to this article showing some improvements I have made. So stay tuned!

In this guide I will use:

- Norco RPC-4220 which is a 4U case

- HP SAS Expander

- 550W power supply (some suggest a higher-rated power supply, but without a CPU the power consumption is very low)

- A cheap $35 motherboard

- Miscellaneous SFF-8087 and SFF-8088 cables

Total cost was under $800. A great place to spend a bit more money (and one that will be required for many) is a redundant power supply. One thing to note is that I tried this with a few different low-cost mATX motherboards and did have an issue with the MSI Socket AM2 board I used in the Sempron 140 in a box review. It is also the one pictured, since I used it for mock-up purposes.

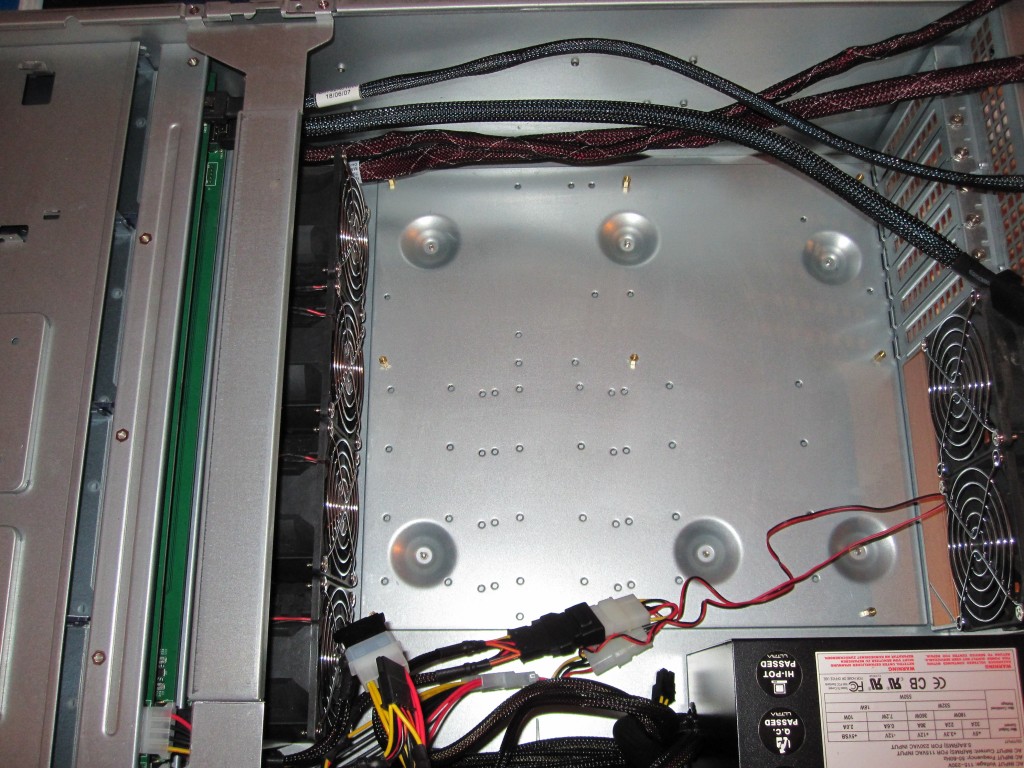

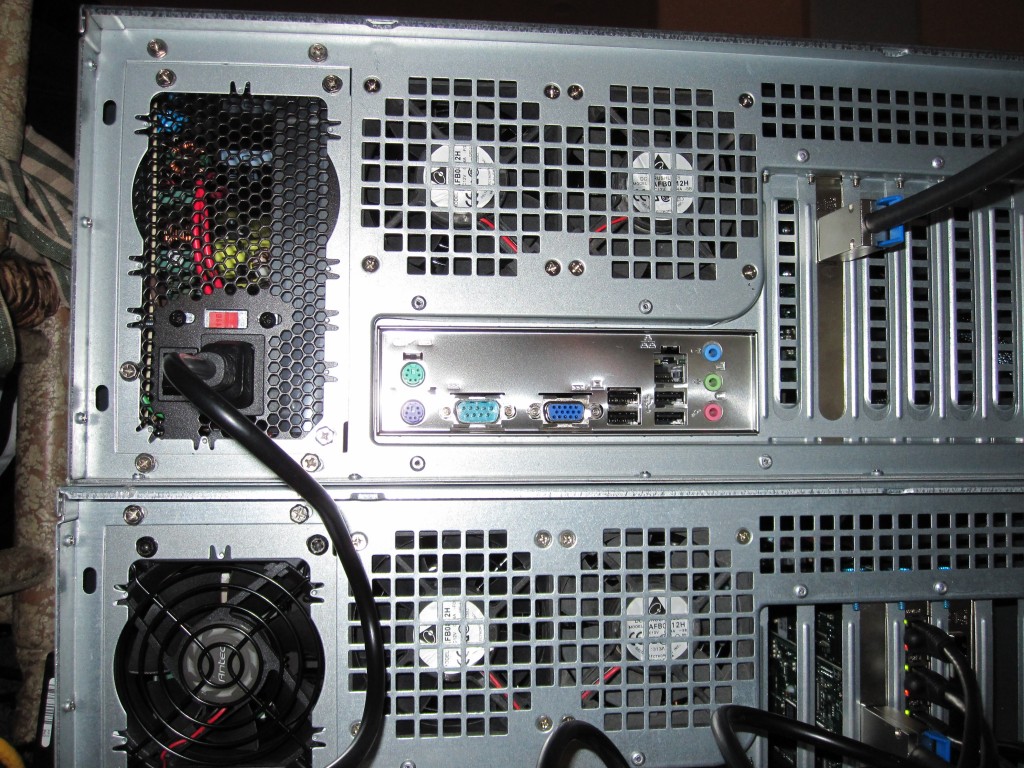

Step 1: Ready the chassis for installation. The first step is to get the Norco RPC-4220 opened up and ready for installation. Most users will want to uninstall the fan bracket as it makes installing power and data cables much easier.

Step 2: Install power supply. For this guide I used a low-cost 550W power supply. Since there is no CPU drawing power and the bare motherboard uses very little at startup, this PSU actually worked fine. Of course, if I were doing this again, I would likely choose a PSU with more power output and built-in redundancy.

Step 3: Install internal power cables and internal SFF-8087 cables. This is a really simple step. Again, you will likely want to uninstall the fan bracket, as that makes cable installation much easier in the Norco RPC-4220.

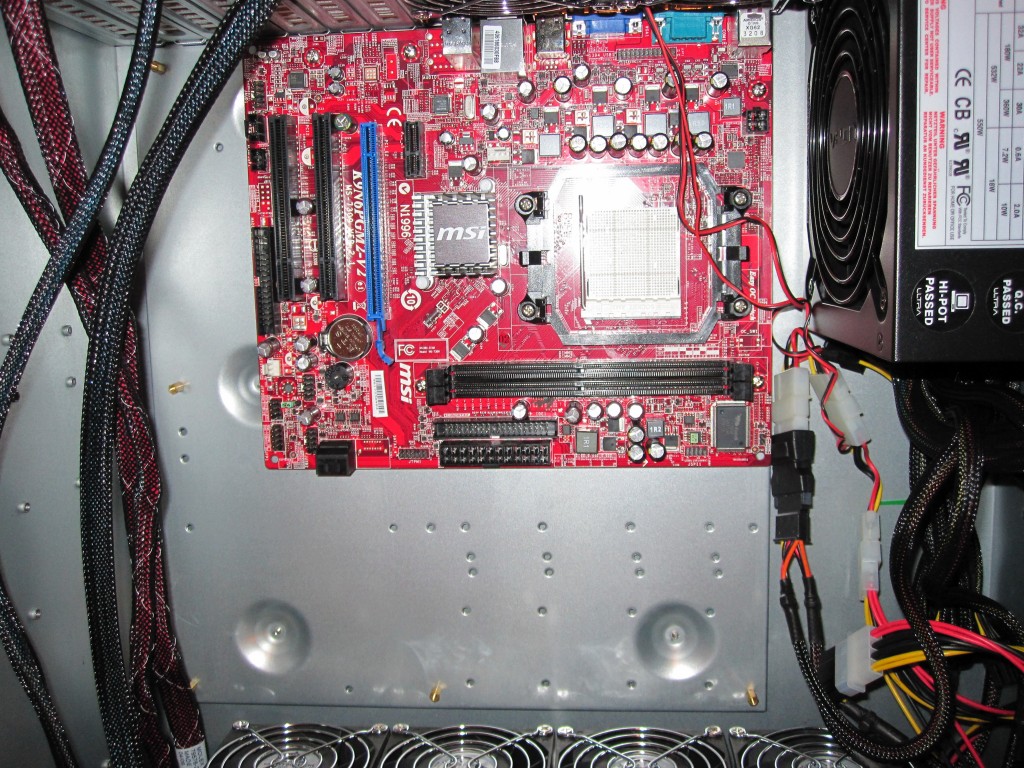

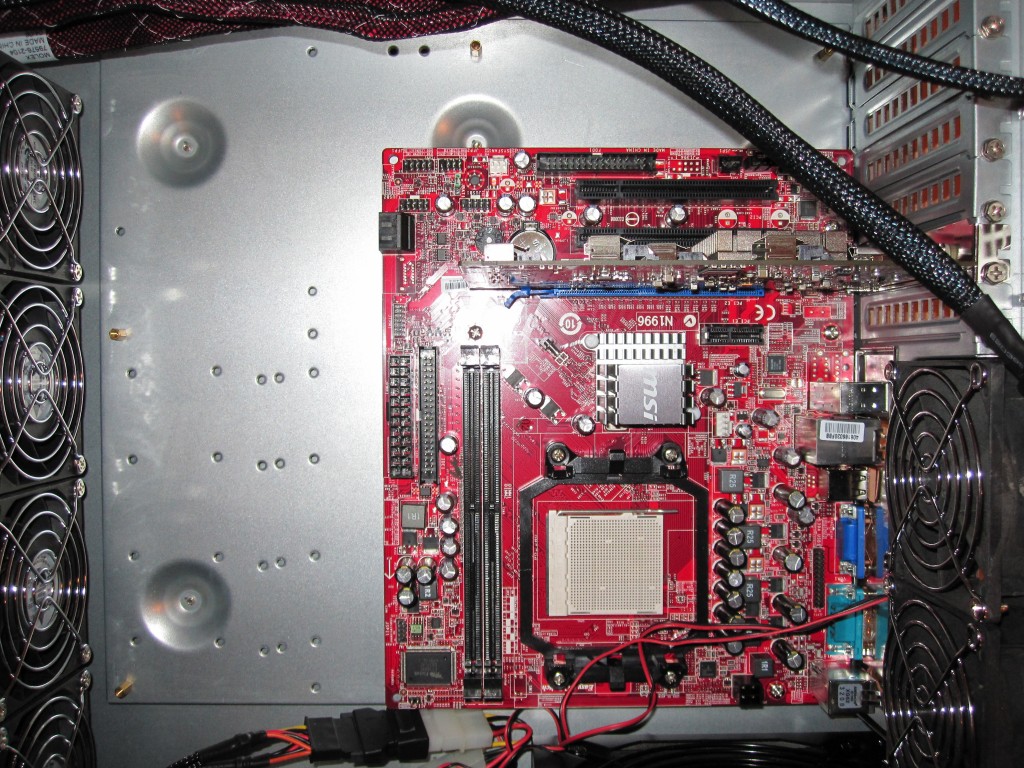

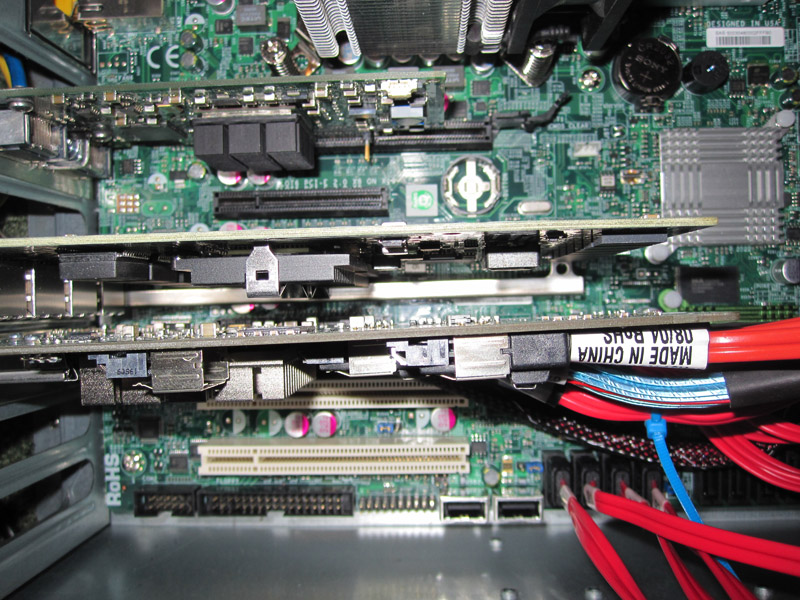

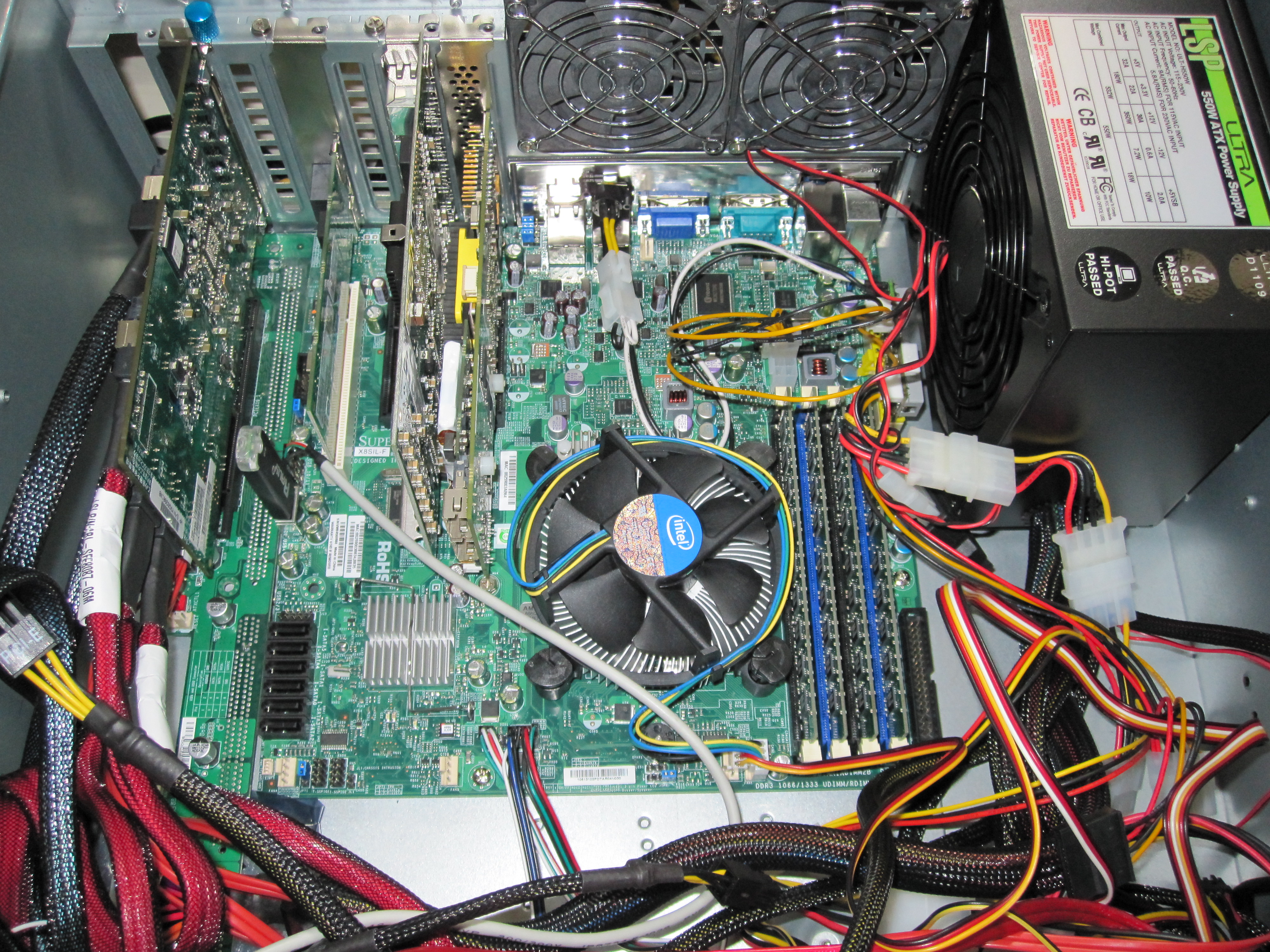

Step 4: Install motherboard. It should be noted that the MSI motherboard depicted below had an SMBus issue that prevented the HP SAS Expander from operating properly when there was no CPU installed. I ended up swapping it for an Intel mATX H55 board, which worked perfectly, as did old EVGA and ASUS Socket 775 motherboards. Those boards all worked flawlessly without a CPU or memory installed.

I decided not to use a CPU and memory in the DAS enclosure because airflow is less restricted without the additional components. In addition, there are fewer pieces to fail and fewer parts to consume power, both of which are good things for this enclosure.

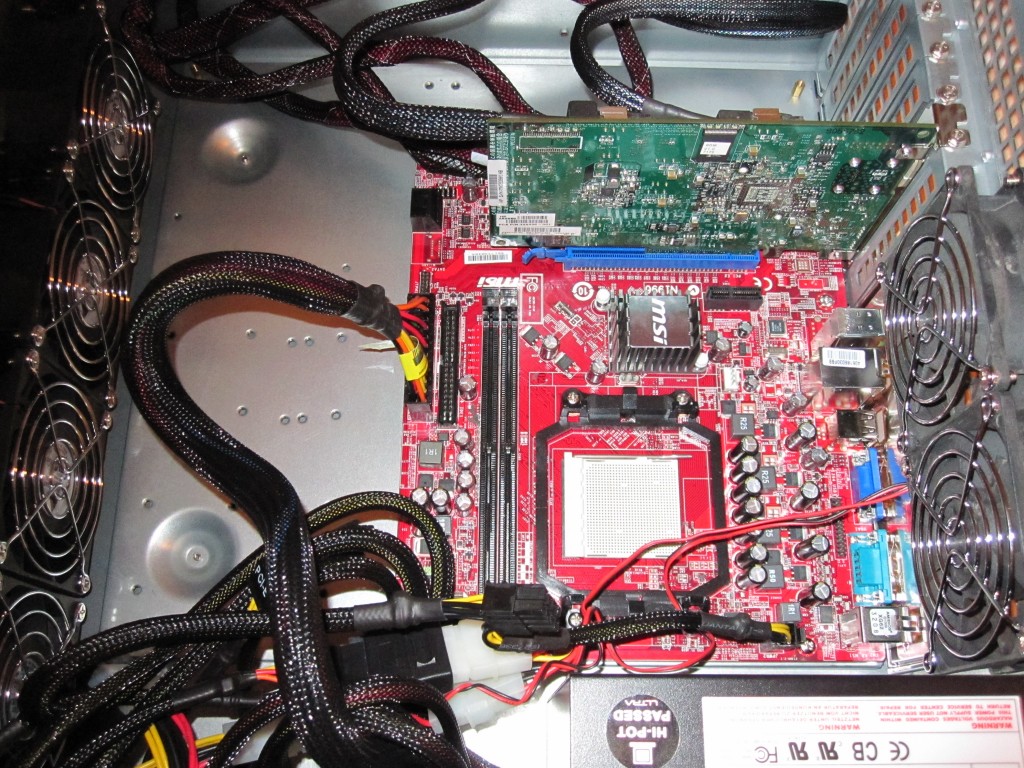

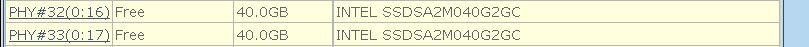

Step 5: Install the HP SAS Expander by simply plugging it into the open PCIe slot. The HP SAS Expander does not use the PCIe bus for data transfer.

Step 6: Connect the internal SFF-8087 cables to the HP SAS Expander and the power cables to the motherboard.

Step 7: Install disks such as Hitachi Deskstar 1TB and 2TB drives (they work great in RAID and are CHEAP!). Personally, I would fully install only one drive at first. You want to verify that everything is working before plugging in $2,500 worth of drives.

Step 8: Connect the DAS box to the server using an SFF-8088 cable between the HP SAS Expander’s external port and the main server/workstation’s SFF-8088 port (usually found on the server’s RAID controller). One huge advantage of the HP SAS Expander is that it has this external port, making it easy to use external cabling. Converting SFF-8087 to SFF-8088 through a PCB adapter sitting in an expansion slot will cost $40 or more once all costs are added up. Using the HP SAS Expander with a supported RAID card that includes external ports saves that unnecessary expense and space.

Step 9: Power the unit on with one low-cost drive and make sure everything is working properly, including the fans, HP SAS Expander, disk, power supply, and so on.

Step 10: Add more drives and start testing and configuring.

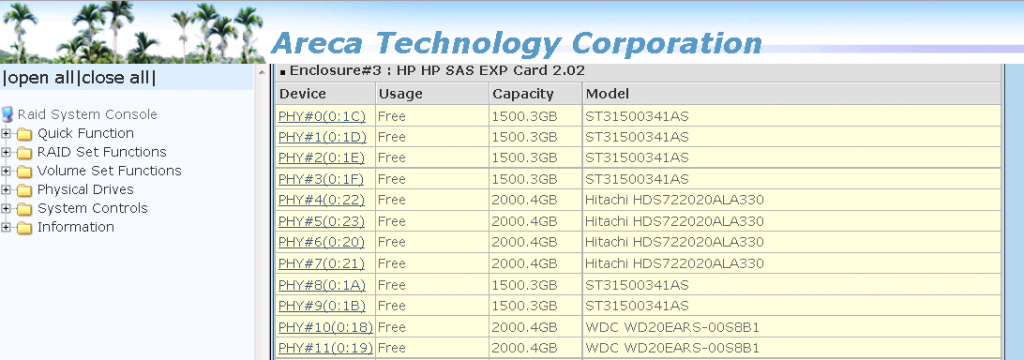

I have even tested this configuration with SSDs.

I would advise against attaching too many SSDs to the DAS box because the HP SAS Expander has only an aggregate 12.0Gbps uplink (four lanes at 3.0Gbps each) to the Areca 1680LP. For small reads/writes this may make sense, but adding 22 SSDs to the Norco RPC-4220 will obviously create a bottleneck at the HP SAS Expander.
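To put a rough number on that bottleneck, here is a minimal sketch of how the uplink gets shared. It assumes the 12.0Gbps uplink is four lanes at 3.0Gbps each, that 8b/10b line encoding leaves about 80% of the line rate for data, and that every drive streams at the same time and gets an even share. All constant and function names are illustrative.

```python
LANES = 4         # lanes carried by one SFF-8088 cable
LANE_GBPS = 3.0   # assumed per-lane line rate (4 x 3.0 = 12.0Gbps aggregate)
ENCODING = 0.8    # assumed 8b/10b encoding: 8 data bits per 10 line bits


def uplink_mb_s(lanes: int = LANES, lane_gbps: float = LANE_GBPS) -> float:
    """Approximate usable uplink bandwidth in MB/s."""
    return lanes * lane_gbps * 1000 / 8 * ENCODING


def per_drive_mb_s(drives: int) -> float:
    """Even share of the uplink when every drive streams at once."""
    return uplink_mb_s() / drives


for n in (4, 8, 22):
    print(f"{n:2d} drives: ~{per_drive_mb_s(n):.0f} MB/s each")
```

Under these assumptions, 22 drives streaming together get roughly 55 MB/s each, which is why a box full of SSDs would be held back by the uplink rather than by the drives.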

That’s it! While you are doing the above, keep in mind that each of the 10 steps saves you $100 to $200 over pre-built solutions. There are very few DIY hardware projects that can save so much money so easily.

One important note is that the HP SAS Expanders do support SAS 6.0Gbps (you want the newest firmware, v2.02, for this, although it was introduced in the v1.5x firmware) but only SATA II 3.0Gbps. Also, the HP SAS Expanders do NOT work with all RAID controllers. For some quick information please see this post. You also want to consider a card with staggered disk spin-up capability. Spinning up 20+ hard drives can be strenuous on the power supply if they all come online together. Once spinning, the amount of power required is much lower.
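The effect of staggered spin-up on the power supply can be sketched with some simple arithmetic. The per-drive currents below (2.0A on the 12V rail while spinning up, 0.5A once running) are placeholder assumptions, not measurements of any particular drive, so check your drive's datasheet. The function name and group sizes are likewise illustrative.

```python
def peak_12v_amps(drives: int, group_size: int,
                  spinup_a: float, running_a: float) -> float:
    """Worst-case 12V current when drives spin up in groups of group_size.

    Each group is assumed to finish spinning up (and drop to running_a)
    before the next group starts.
    """
    if group_size < 1:
        raise ValueError("group_size must be at least 1")
    peak = 0.0
    started = 0  # drives that are already up and running
    while started < drives:
        spinning = min(group_size, drives - started)
        peak = max(peak, spinning * spinup_a + started * running_a)
        started += spinning
    return peak


SPINUP_A, RUNNING_A = 2.0, 0.5  # assumed per-drive 12V currents

for group in (20, 4, 1):
    amps = peak_12v_amps(20, group, SPINUP_A, RUNNING_A)
    print(f"groups of {group:2d}: peak {amps:.1f}A on 12V")
```

With these placeholder numbers, starting all 20 drives together peaks at 40A on the 12V rail, while groups of four peak at 16A, which is the kind of difference that decides whether a modest PSU is enough.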

Next steps for this series: A better power solution (I have this running in the current DAS box attached to the Big WHS), using the 4U of space more efficiently, configuration, other options. I will post Iteration 2 of this guide shortly with those changes.

wow… so this basically does the same thing as the SAS Expander enclosures? This costs the same as like 8 drive enclosures. How does it compare to Drobos?

Is this better than using eSATA + port multipliers?

You could also have used the Supermicro power board (~25$) instead of a motherboard. It has power connectors for Power, Reset, Fans, etc. I believe the part # is CSE-PTJBOD-CB1.

What SAS card are you using inside the server to connect back to the new SAS enclosure? Also, I am finding the card you mention costs between 400 and 600 dollars. How does this square with your statement about building this for under $800?

@Benjamin – I will give that one a try in the near future. I am actually running the expander chassis with a different power solution right now (modified PCMIG board).

@James Walker – Amazon (link in the parts list above) has the board currently for $350 (and less). Oftentimes, prices can dip into the low $200’s for the HP SAS Expander. I am using an Areca 1680LP in the main server to connect the SAS expander enclosure.

I was wondering if you could clarify something for me. How does the CSE-PTJBOD-CB1 work in this regard? Where would the HP SAS Expander card plug in? I have outgrown my current tower case for my WHS and I don’t want to do external drive towers.

The CSE PTJBOD is mainly a power board from what I’ve gathered. In the above guide, it would replace the cheap motherboard. I’m finishing up the writeup on my second iteration of this design using a different PCIe power board tonight/ tomorrow. Using a SAS expander + a power board is most practical when connecting the enclosure to a main server (similar to external drive towers).

In a week or so I will try to get a CSE-PTJBOD-CB1

Thanks for the post, it was very useful.

I’d like to build something like this, but for 2.5″ drives. Can you recommend any chassis like the Norco that houses 2.5″ drives?

Joseph, you could use something like a Supermicro SC213A (16x 2.5″ drives in 2U) or SC216A (or one of the E series chassis with expanders), which holds 24x 2.5″ drives in 2U. The Norco 4Us can actually hold 2.5″ drives in the 3.5″ bays, but that may not be the highest density configuration if that is what you are looking for. Also, I think the new Supermicro SC417 chassis will hold up to 72x 2.5″ drives in 4U. Hope that helped.

FYI the SC417 chassis with 72x 2.5″ HDDs was released Tuesday 8/17:

http://www.supermicro.com/newsroom/pressreleases/2010/press100817_storage.cfm

Thanks.

What card are you using in the server?

In the main server (at the time) was an Areca 1680LP, which has one SFF-8088 external port. Now I am using an LSI SAS 2008 based internal card via an SFF-8087 to SFF-8088 converter.

Please, does this setup require any software? If so, what is its name? I can see something like Areca Technology Corporation in the picture.

tunji: That picture shows the drives attached to the Areca 1680LP RAID controller, as seen through the card’s web interface. If you use an LSI card, they will show up in the LSI OptionROM/MSM.

I really still don’t get what these expanders and enclosures do. Can you nest JBOD and RAID on a controller? Because most controllers don’t support having a lot of drives in a single RAID array, usually 32.

How loud will this case be?

thanks in advance

I have a few of these Norco units with 120mm fans running nearly silent (from the fan side). The other bit of noise comes from the drives so it depends on which drives you use, especially with 20-24 of them.

Hi,

Why should the link between controller and expander be 12Gbps? I think that it should be 24Gbps (4 lanes * 6Gbps).

You can create multiple logical drives. I usually do 10-disk RAID6. Your operating system will see them as separate HDDs/PVs. I just add the PVs to an existing VG.

I wish these servers had built-in SAS controllers so that they could be used without RAID.

It would be great if we could get an updated DIY SAS build. I’m looking to do this exact thing, but this article is twelve years old. Something with modern’ish parts would be extremely helpful.

garmon – These days SAS expander chassis are plentiful on eBay, so we usually just tell folks to buy those as it is way less expensive.

Are SAS expander chassis not a lot louder due to having server PSUs in them? Can you recommend a quiet chassis? I am in the process of setting up some drive expansion and considering this. I do have a Norco chassis that is a backup server, but from what I see, the main server would need its SAS cards swapped from the current 8-channel ones to a 16-channel one to free up a PCIe slot for an expander card with an external port.

I like this, but I am thinking of using a similar solution with a Thunderbolt 4 card in place of the SAS expander card. Would it work the same way, or would I need a motherboard supporting a Thunderbolt add-on card?

So if I am reading all this right, could I just outright skip the whole motherboard and use something like one of those cheap PCIe extensions to provide power to the HP SAS Expander?