Are RAID controller lithium-ion batteries ticking time bombs within your server? Over this past weekend, we saw an IBM ServeRAID battery pack that looked ominous. Everyone knows that RAID controller batteries need to be replaced at regular intervals. That is one of the key drivers towards supercapacitors on RAID cards. Before this weekend, our assumption was that replacement was a matter of keeping data safe in the event of a power failure. After this weekend, we now think it may be a safety issue.

Background: Why RAID Controllers Have Batteries

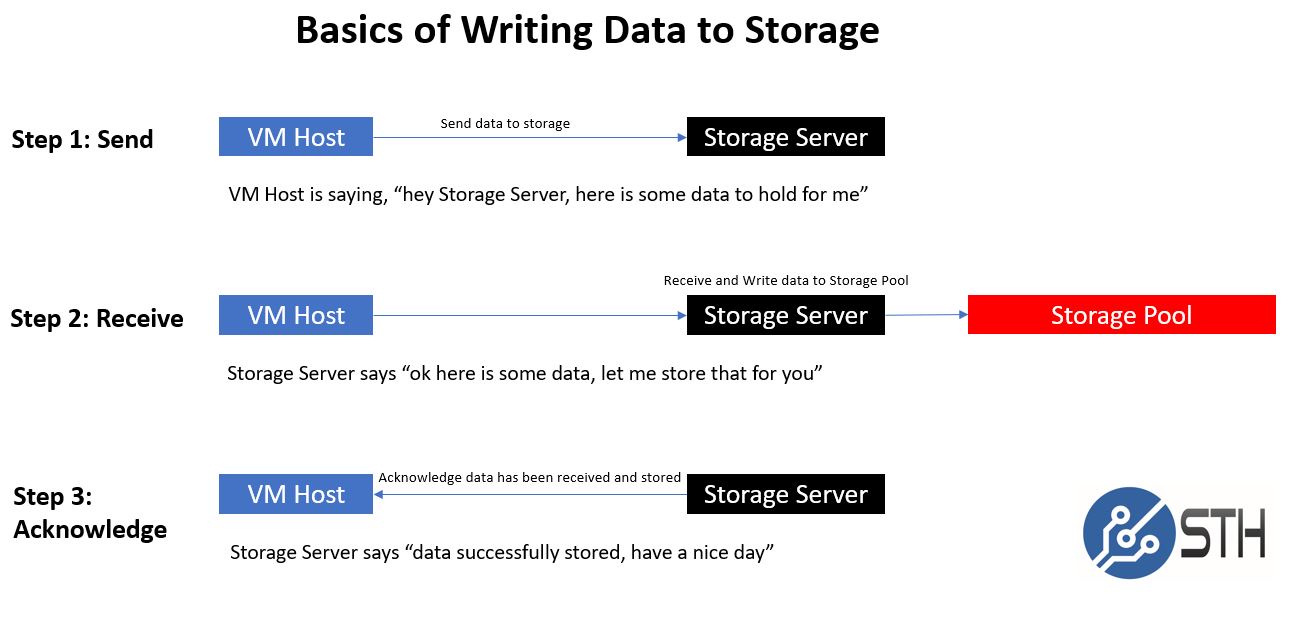

These days, the vast majority of RAID controllers are SAS (or specifically SAS3) based. There are some low-end offerings from companies like Marvell that offer SATA III RAID 0 or 1, for example, but these are meant more for boot drives, simple NAS units, and similar applications. For parity RAID levels such as RAID 5 and RAID 6, the controller must do XOR calculations to figure out what data to write on each device any time data is written to an array. By modern processor standards, this is not an enormously difficult calculation, even on 8x 10TB hard drives. However, it still takes time.
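As a rough illustration of the XOR step, here is a minimal Python sketch of single-parity (RAID 5 style) protection over one stripe. The function names `xor_parity` and `rebuild_missing` and the tiny four-byte blocks are assumptions made for this example; a real controller does this in hardware on much larger stripes, and RAID 6 adds a second, differently computed parity block that is not shown here.

```python
from functools import reduce


def xor_parity(blocks):
    """Return the byte-wise XOR of equally sized blocks (hypothetical helper)."""
    if not blocks:
        raise ValueError("need at least one block")
    size = len(blocks[0])
    if any(len(block) != size for block in blocks):
        raise ValueError("all blocks must be the same size")
    # zip(*blocks) yields one tuple of byte values per byte position.
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def rebuild_missing(surviving_blocks, parity):
    """Recover one lost data block by XOR-ing the survivors with the parity."""
    return xor_parity(list(surviving_blocks) + [parity])


# Three data blocks in one stripe; the parity goes to a fourth drive.
stripe = [b"AAAA", b"BBBB", b"CCCC"]
parity = xor_parity(stripe)

# Simulate losing the drive that held the second block.
recovered = rebuild_missing([stripe[0], stripe[2]], parity)
```

Because XOR is its own inverse, the same routine serves for both computing parity and rebuilding a lost block, which is part of why the calculation is cheap but still has to run on every write.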

For many generations, companies that produce RAID controllers have improved write performance by adding DRAM. The host system writes data to the RAID controller, and the controller holds that data in DRAM until parity has been calculated and the data has been flushed to disk.

We first covered the basics of writing data to storage in our piece: What is the ZFS ZIL SLOG and what makes a good one.

That is a good article to read. Although it is focused on ZFS write caching, it is conceptually similar to write-back cache on a RAID controller, where you would typically have a battery backup unit (BBU) installed. With the BBU installed, the RAID controller can keep data that is held in onboard DRAM awaiting a write intact for several hours after a power loss. In the case of flash-backed write cache (FBWC) RAID controllers, the data in DRAM is written to flash during a power failure. That operation is powered by the BBU.
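The role of the BBU can be sketched with a toy model. This is only an illustration under simplifying assumptions: the `WriteBackCache` class, its method names, and the dictionary-based "DRAM" and "disk" are hypothetical and do not correspond to any real controller firmware or API.

```python
class WriteBackCache:
    """Toy model of a RAID controller write-back cache (hypothetical)."""

    def __init__(self, has_backup_power):
        self.has_backup_power = has_backup_power
        self.dram = {}  # dirty blocks acknowledged to the host, not yet on disk
        self.disk = {}  # blocks that have safely reached the drives

    def write(self, lba, data):
        # The host gets its acknowledgement as soon as the data is in DRAM.
        self.dram[lba] = data
        return "ack"

    def flush(self):
        # Move everything that is waiting in DRAM onto the drives.
        self.disk.update(self.dram)
        self.dram.clear()

    def power_loss(self):
        # Without backup power, DRAM contents vanish with the host power.
        if not self.has_backup_power:
            self.dram.clear()

    def power_restored(self):
        # Whatever survived in DRAM is flushed once power returns.
        self.flush()


with_bbu = WriteBackCache(has_backup_power=True)
without_bbu = WriteBackCache(has_backup_power=False)
for cache in (with_bbu, without_bbu):
    cache.write(100, b"payroll")
    cache.power_loss()
    cache.power_restored()
```

In this model the host received an acknowledgement in both cases, but only the cache with backup power ends up with the block on disk. That gap is what the battery is there to close.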

For those in the RAID industry, or operations teams with RAID cards deployed, RAID controllers with BBUs have been around for a long time and are essentially a known quantity. The BBUs need to be replaced at regular intervals as a loss in battery capacity can lead to unsuccessful backup during a power event. This weekend, we saw another reason to replace batteries.

A RAID Controller Battery Time Bomb Discovered

This weekend we had a major refresh of the lab since we have another 12kW of servers that just arrived or are inbound. It was time to clear out any server from the Intel Xeon E5-2600 V1/ V2 generation. Most data centers have already done this, but we keep machines in the lab simply to perform backtesting. These servers stay offline and are largely forgotten until the need arises to back-test a benchmark on an older generation CPU.

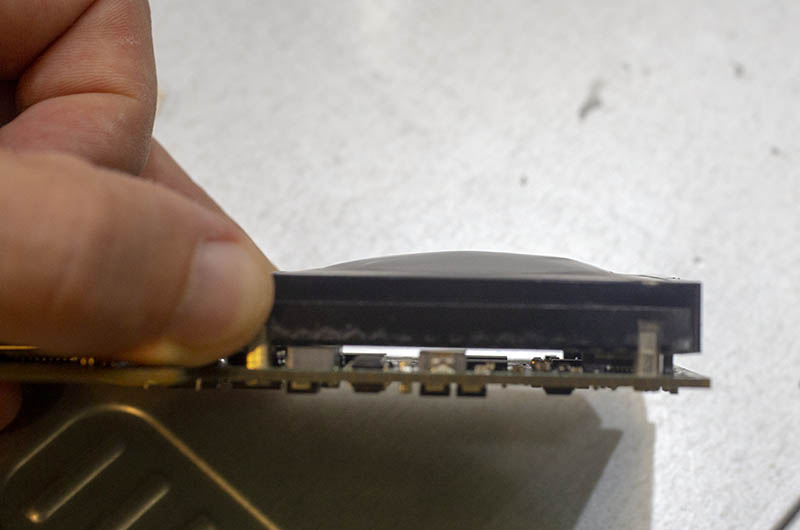

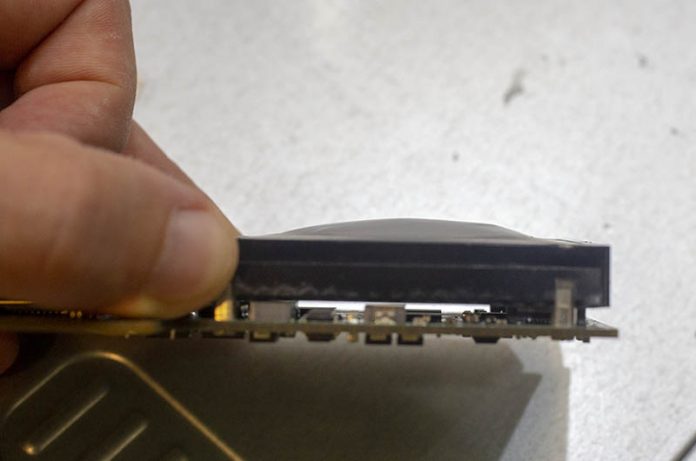

We pulled one of the servers to ready it for final retirement. This was a 2011/2012 era server that had a (Broadcom) LSI SAS 2108 RAID controller, the IBM ServeRAID M5014, inside. Since the Intel Xeon E5-2600 V3 Haswell-EP launch, the server has been powered off save for a benchmarking run once a quarter or so. We also did not utilize the RAID controller, since our benchmarking standard is a directly attached SATA drive. The IBM M5014 was physically in the server, but we had not used it in years. When we pulled the RAID controller, the LSI BAT1S1P battery pack, which is part of the LSIiBBU08 kit, looked different.

We pulled the sticker off and there was indeed a large bulge underneath. The battery pack has deformed over the years.

The LSIiBBU08 and LSI BAT1S1P were extremely popular. They were used on IBM ServeRAID cards like the M5014 we have and the higher memory M5015. They were also used for just about every LSI SAS 9200 series RAID controller with onboard memory. These included popular cards like the LSI SAS 9260-8i, 9261-8i, and 9280-8i, as well as countless OEM versions.

While the LSIiBBU08 is the lithium polymer version, the LSIiBBU07 was the lithium-ion variant with a 1-year replacement cycle. At this point, both kits are getting to the end of where their controllers are useful. The SAS2 generation was PCIe 2.0 only and designed for a hard drive world. Still, our battery was manufactured in 2011 and there are many organizations with Sandy Bridge and Ivy Bridge generation Intel Xeon E5-2600 V1/ V2 servers in the field. If our battery looks like this, there is a good chance there are others in the field that look the same.

This Should Not be an Issue

To be fair, we should point out that a 7-year-old BBU kit should never be in service in the first place. With these BBU kits, the 12-hour data retention time setting should yield a 3-5 year replacement cycle. Proper maintenance would have meant replacing the battery years ago. At the same time, had we not pulled the server, we would not have known that the BBU was in such a physically degraded state.
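The replacement-cycle arithmetic is simple enough to automate. Below is a minimal Python sketch that flags a battery against the 3-5 year window mentioned above. The function names, the status labels, and the example dates are assumptions for illustration; we only know our battery's manufacture year (2011), not its exact date.

```python
from datetime import date


def battery_age_years(manufactured, today):
    """Approximate battery age in years (hypothetical helper)."""
    return (today - manufactured).days / 365.25


def replacement_status(manufactured, today, min_cycle_years=3, max_cycle_years=5):
    """Classify a BBU against an assumed 3-5 year replacement window."""
    age = battery_age_years(manufactured, today)
    if age >= max_cycle_years:
        return "overdue"  # past the end of the window
    if age >= min_cycle_years:
        return "due"      # inside the window, schedule a swap
    return "ok"


# Example dates are assumed: a mid-2011 battery checked seven years later.
status = replacement_status(date(2011, 6, 1), date(2018, 6, 1))
```

A check like this only covers age. As our experience shows, it does not replace physically inspecting battery packs, including ones attached to controllers that are no longer in use.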

These days, many RAID controllers utilize supercapacitors that should not have the same replacement needs as the LSI BBU units. At the same time, we just got a new HPE Gen10 server in the lab with a lithium-ion battery so these types of concerns are going to continue for years in the future.

Final Words

Everyone likes to think that their products are being used and maintained properly. With RAID controllers that utilize battery backup units, extra maintenance is required to refresh batteries. This is less critical for servers that are expected to last 36 months. Still, most organizations have a server sitting somewhere in their infrastructure that is well over 3-5 years old. For those servers, if you use traditional RAID cards, ensure that you have a plan to regularly replace batteries. It is not enough to simply stop using the BBU feature for power loss protection, because a battery that is still installed can still degrade. Bulging batteries can be a precursor to larger failures on a range of devices. You do not want a battery bursting in a server.

If you are buying a new server, get a supercap solution or rely upon an HBA with software RAID or software-defined storage.

Funny you should bring this up. We had a pair of these on LSI 9260’s that were doing the same thing. Unfortunately we only noticed after one burst and took out a $30000 server just at the end of its 5 yr range. I’m not in a job where we can take pics of our gear but the two that we pulled looked just like the pictures.

Looking at the specs on the PERC H740p and PERC H730p both of those have a battery. I can understand the lower end ones still using a battery, but the high end should have gone to the super-capacitor.

I see bulging batteries on 610’s all the time, they are not quite as severe, but we have never had one physically burst in our experience. While I see this as a potential problem, it is definitely possible to design lipo batteries with expansion chambers and seal them in a way that prevents catastrophic failure.

A bad BBU on an HP410 took out our entire RAID. The controller went nuts and wrote random data all over the place. And we were in for a long restore.

After that incident we threw everything away, e.g. ESXi, and went with ZFS on Proxmox on the same server, just without the RAID controller.

I’ve had three separate BAT1S1P’s either arrive (second hand) with or develop battery bulges – I don’t think it’s worth trying to buy second-hand LSI controllers with them, and costs for the aftermarket versions are quite high.

Funnily enough I had a few PERC6 cards with BBUs that were fine through their entire life in my home lab.

Have you never had a battery die and ooze everywhere in a home theater remote control? You’re lucky. Don’t store remotes with batteries in them!

I know it isn’t practical to have UPS’s for an entire data center, but at home – totally worth it. Bottom of my rack. :-)

I finally tried to remove the battery sleds from two old UPS’s. Battery sled slid right out with one, couldn’t get the other out. Was stuck on something. Took the lid off the rack mount UPS to discover the batteries were bulging and the sleds won’t be able to come out because the batteries don’t have room to fit through the opening.

I wonder if it’s possible to retrofit Supercaps to an older controller? Might breathe new life into old machines with less cost than finding a new controller?

Do you just pull out the card and replace the battery? No ill effect?

Hi I was wondering what the difference is between the batteries for an IBM M5015 and a LSI 9260-8i. Can I take the battery from an IBM M5015 and put it on a LSI 9260-8i controller? Are they inter-changeable? I think the battery for IBM M5015 also provides RAID 5/50 functioning besides the cache, but LSI 9260-8i doesn’t need that. Any comments?