As we all know, Windows Server 2012 has gone RTM, and a lot of folks are now preparing hardware for one of the biggest server releases this year. I am personally building a new test configuration and wanted to share a few tips and tricks that I am using to prepare the build. Just as a note, this is more for a workhorse server than a small business server. I will probably cover something for Microsoft Windows Server 2012 Foundation and Essentials soon. Until then, here are my thoughts on the build for my lab.

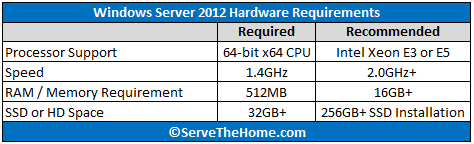

Windows Server 2012 Hardware Requirements

Looking at Windows Server 2012 Hardware Requirements, not much is actually required to run Microsoft’s newest server OS:

I added my minimum recommendations there. If you are spending a few hundred dollars on Microsoft Windows Server 2012 and building a system with the minimum hardware, such as 512MB of RAM, you are either crazy or doing a special project. In theory, the Windows Server 2012 hardware requirements would allow installation on something similar to my Intel Atom based pfSense appliance with a 32GB hard drive. I recently did a piece on what I thought would be good guidelines for Windows Server 2012 hardware requirements, so that is probably a more detailed look. Needless to say, I am not going to take an old Athlon 64 and try running Windows Server on it. Well, I might try that one day, but I am not going to use that machine for anything other than satisfying intellectual curiosity.

Choice of Windows Server 2012 Platform

I have been very happy with my AMD Opteron VMware ESXi 5.0 build, which uses dual Opteron 6128 CPUs. For ESXi 5.0 that is fine since you get a lot of cores and a fairly robust platform, and you are not spending a ton to build a machine that maxes out at 32GB (in the free version). I do think a new Ivy Bridge Xeon E3-1200 series CPU is great in that application, but let’s face it, Microsoft charges a lot for Windows Server 2012, so let’s not pretend this is going to be a budget build. With that being said, I am looking at a dual Intel Xeon E5-2600 series solution for my Microsoft Windows Server 2012 build. Dual Intel Xeon E5-2600 series CPUs provide up to 80 PCIe 3.0 lanes (40 per CPU), which is a ton. These PCIe lanes are also sourced directly from the CPU, whereas AMD’s PCIe lanes still pass through a north bridge, which adds latency.

When choosing a platform for Windows Server 2012 there are a lot of considerations. For those running Microsoft SQL Server or other applications that are licensed per CPU core, the Intel Xeon E5-2643 is going to be a prime choice. The Xeon E5-2643 is a quad core CPU with hyperthreading (4C/8T) that runs at a 3.3GHz base and a 3.5GHz max turbo frequency. One disadvantage is that you only get 10MB of cache. The big advantage is that the upfront cost is lower and, with fewer cores to license, licensing costs are going to be significantly lower as well. I have been told by many server vendors that the Intel Xeon E5-2643 is a favorite among buyers for this reason.
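To make the per-core licensing argument concrete, here is a minimal Python sketch comparing the licensed core count of a dual E5-2643 build against a dual E5-2690 build. The core counts come from this article; `PRICE_PER_CORE` is a made-up placeholder, not a real Microsoft price, and actual licensing terms (minimums, editions, packs) are not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CpuOption:
    """A CPU choice for a multi-socket server."""
    model: str
    cores_per_socket: int
    sockets: int = 2  # this build is dual socket

    @property
    def total_cores(self) -> int:
        return self.cores_per_socket * self.sockets


def license_cost(cpu: CpuOption, price_per_core: float) -> float:
    """Licensing bill if the software is charged per physical core."""
    return cpu.total_cores * price_per_core


# HYPOTHETICAL placeholder price, for illustration only.
PRICE_PER_CORE = 1000.0

quad = CpuOption("Xeon E5-2643", cores_per_socket=4)
octo = CpuOption("Xeon E5-2690", cores_per_socket=8)

for cpu in (quad, octo):
    cost = license_cost(cpu, PRICE_PER_CORE)
    print(f"2x {cpu.model}: {cpu.total_cores} cores to license, {cost:,.0f} at the placeholder price")
```

Whatever the real per-core price is, the dual E5-2690 build has twice as many cores to license as the dual E5-2643 build, which is the point vendors are making.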

Since this is going to be my test server, I am going to use two Intel Xeon E5-2690 CPUs, which provide eight cores with hyperthreading each. These are the fastest 135W TDP CPUs Intel currently offers, and the only faster eight core CPUs are the 150W Intel Xeon E5-2687W workstation CPUs. My main reason for going with the Intel Xeon E5-2690 is that I have them available. If I were looking for a decent CPU that is still budget minded, the E5-2620 is a 6 core, 12 thread CPU running at a 2.0GHz base / 2.5GHz turbo frequency with only a 95W TDP. They retail for about $420 each, so one can purchase a pair for less than an Intel Xeon E3-1290 V2 and still take advantage of everything the LGA 2011 platform offers.

Memory Selection

The big thing one gets with LGA 2011 is that, aside from massive expansion options due to the PCIe configuration, one also gets to benefit from a huge amount of memory potential, either 512GB or 768GB maximum depending on the motherboard. For a test system, 32GB DIMMs are a bit pricey. My personal plan is to start with 8x 8GB registered ECC DIMMs and move up from there. Total memory is only 64GB but it is easy to add more. At around $550 the initial purchase is not inexpensive, but it is important to get at least eight DIMMs to populate the four memory channels on each CPU. Doing a quick comparison, the 4x 4GB registered ECC DIMM kits cost slightly more per GB, so one spends less in absolute terms but not per GB. I just ordered two Kingston KVR16R11D4K4/32I kits, each of which has 32GB in a 4x 8GB DIMM configuration running at 1600MHz CL11.
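The arithmetic behind that plan can be sketched in a few lines of Python. The DIMM count, DIMM size, and roughly $550 total come from the paragraph above; the function name and the assumption that DIMMs are spread one per channel before doubling up are mine.

```python
def memory_plan(dimm_count, dimm_gb, total_cost_usd, sockets=2, channels_per_socket=4):
    """Summarize a DIMM purchase for a multi-socket, multi-channel board.

    Assumes DIMMs are installed one per channel before any channel
    receives a second DIMM.
    """
    channels = sockets * channels_per_socket
    total_gb = dimm_count * dimm_gb
    return {
        "total_gb": total_gb,
        "channels": channels,
        "all_channels_populated": dimm_count >= channels,
        "cost_per_gb": total_cost_usd / total_gb,
    }


# 8x 8GB registered ECC DIMMs at around $550 for the set.
plan = memory_plan(dimm_count=8, dimm_gb=8, total_cost_usd=550)
print(f"{plan['total_gb']}GB across {plan['channels']} channels, "
      f"all populated: {plan['all_channels_populated']}, "
      f"about ${plan['cost_per_gb']:.2f} per GB")
```

Swapping in other kit sizes and prices makes it easy to compare cost per GB while checking that every memory channel still gets a DIMM.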

Drive Controllers and Configuration

One thing that I overlooked on my first-ever Windows Server 2008 R2 installation was installing the boot volume on a RAID 1 array. This is something that I regretted but never managed to re-do, since it was a local machine and I did not want to re-activate everything. I lost a lot of sleep over the years this was active because if that boot SSD failed, I was in trouble. Back then, SSD cost was a much bigger factor. Decent 64GB to 80GB SSDs were still over $200 each and, as we know, Windows Server 2008 R2 installations, especially when running many Hyper-V virtual machines, take up a big portion of that. This time around, SSDs are much less expensive, so my minimum recommendation is to use two 240GB or 256GB SSDs. Free space is a good thing on SSDs as it lowers write amplification and often helps performance. Also good is not having to worry about running out of space as I did with the 80GB drive. These days, RAID 1 SSDs are fast enough that I would even advocate that setup over RAID 5, which had been common.
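For anyone weighing those RAID levels, here is a rough Python sketch of the usable capacity each one leaves. It uses the textbook formulas for identical drives and nominal drive sizes; the function name is my own, and formatting overhead and controller-specific behavior are ignored.

```python
def usable_capacity_gb(level: str, drives: int, drive_gb: int) -> int:
    """Usable capacity in GB for an array of identical drives."""
    if level == "RAID0":
        if drives < 2:
            raise ValueError("RAID 0 needs at least two drives")
        return drives * drive_gb          # striping, no redundancy
    if level == "RAID1":
        if drives != 2:
            raise ValueError("this sketch models a two-drive mirror")
        return drive_gb                   # one drive's worth, mirrored
    if level == "RAID5":
        if drives < 3:
            raise ValueError("RAID 5 needs at least three drives")
        return (drives - 1) * drive_gb    # one drive's worth of parity
    if level == "RAID10":
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of drives, four or more")
        return (drives // 2) * drive_gb   # striped mirrors
    raise ValueError(f"unsupported RAID level: {level}")


for level, count in (("RAID1", 2), ("RAID5", 3), ("RAID10", 4)):
    print(f"{level} with {count}x 256GB: {usable_capacity_gb(level, count, 256)}GB usable")
```

A two-drive RAID 1 boot volume on 256GB SSDs gives up half the raw space, but that is still more than three times the 80GB drive I was squeezing into before.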

After looking at my data on current generation SSD performance, I had a hard time deciding between dual SanDisk Extreme 256GB drives and dual OCZ Vertex 4 256GB drives. I know the drives will utilize Intel RST for RAID 1 because that is one of the easiest use cases out there. One nuance with Hyper-V is that you do disk pass-through rather than the controller pass-through you would use with VMware ESXi.

For 3.5″ storage I am going to go with the venerable IBM ServeRAID M1015 for internal storage (see here for an eBay search; the M1015 normally runs around $75 each) and the LSI 9202-16e that Jeff recently looked at for external storage. Ideally I could find a motherboard with both the eight Intel SCU 3.0Gbps SAS/SATA ports and an LSI SAS 2308 based controller which, alongside the normal SATA ports, would give me capacity for 20 drives without even needing an expansion card.

As a bit of a lab preview, after digging into this a bit more I found the Supermicro X9DR7-LN4F, which has both the Intel SCU and a built-in LSI SAS2308 controller, the same chip you would typically find on either an LSI 9207-8i or LSI 9207-8e add-in controller. Net drive capacity is 18 drives using onboard ports, and there are four PCIe 3.0 x8 and two PCIe x16 slots open on the board. With such a capable platform, as Pieter mentioned in his preview piece on the X9DR7-LN4F recently, there is much less of a need for add-in storage controllers. Odds are my Windows Server 2012 machine will feature an LSI 9202-16e and an IBM M1015 also.
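As a quick sanity check on the expansion story, this Python sketch adds up the lanes behind the open slots listed above and compares them with the 80 lanes a dual E5-2600 setup provides. Slot counts and the 80 lane figure come from this article; how the board actually routes the remaining lanes is not something I am asserting here.

```python
TOTAL_CPU_LANES = 80  # dual Xeon E5-2600, per the platform discussion above


def slot_lanes(slots):
    """Sum PCIe lanes for a mapping of slot width -> number of slots."""
    return sum(width * count for width, count in slots.items())


# Open slots on the X9DR7-LN4F as described: four x8 and two x16.
open_slots = {8: 4, 16: 2}

used = slot_lanes(open_slots)
remaining = TOTAL_CPU_LANES - used
print(f"Slots use {used} of {TOTAL_CPU_LANES} lanes, {remaining} left over")
```

With the slots alone using 64 lanes, it is easy to see why this much expansion is only realistic on a dual socket LGA 2011 board.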

As for hard drives, I have many 2TB and 3TB Hitachi drives still running in servers which have served me very well. On the other hand, until Microsoft Windows Server 2012 is fully tested I am not going to use this machine for primary storage. I do want to take advantage of SMB 3.0, so I will likely have some storage needs. At the outset, the plan is to have a four drive RAID 10 SSD array and four 3.5″ disks. Buying new I would probably use Western Digital Red 3TB drives, but since I have spare 3TB Hitachi drives, I will use those. In the new generation machine I have made a “rule” that I am not going to use anything smaller than 3TB if it has a rotating platter. Over time I do like to cycle out older generation drives so that I can either decrease drive counts or increase storage capacity.

Chassis Selection

Not sure on this one yet. I am stuck between using a 2U with 2.5″ slots for SSDs and a 4U 3.5″ storage chassis. The goal is to get as much as possible onto this server since the LGA 2011 Intel Xeon E5-2600 series platform provides an absolute ton of options in this regard. Luckily this is going in the local rack, not a datacenter so I can afford to utilize extra space for a controller node.

What I would love to see is a 3U with a mix of 3.5″ and 2.5″ drive options. I think the sweet spot is really a node with either four 3.5″ drives or four 2.5″ drive slots. This allows one to set up a small RAID 10 array in the chassis, either for speed or for local backup storage.

On the plus side, I think I will be using the old Norco RPC-4220 that I have modified with 120mm fans, as there are twenty 3.5″ bays available and enough room for four non-hot swap SSDs. This is really driven by the fact that I have it sitting around, but I do think that chassis selection is a very situation-specific choice. The one caveat is that if you use a motherboard like the X9DR7-LN4F with more or less in-line CPU sockets, you do want to make sure there is a lot of airflow because the second CPU is getting air that has already been warmed by the first CPU's heatsink.

Conclusion

Overall I am fairly excited about the newest Microsoft Windows Server 2012 release as it does offer some great features. I did really want to see storage controller pass-through in this version, but that looks like it is not going to happen. Still, with high-performance SMB 3.0 and other great features, I am sure this will be a long-term build, much like the Windows Server 2008 R2 build has been a mainstay in the lab for several years now. I may end up doing a piece on the build so that others looking to do something similar can follow along. I have not done one of those in a while so it might be fun.
