Micron has announced new HBM technology. The new Micron HBM3 Gen2 stacks more dies at the same package height, leading to higher capacity. At the same time, it offers more bandwidth than previous generations.

Micron HBM3 Gen2 with Higher Capacity and Bandwidth

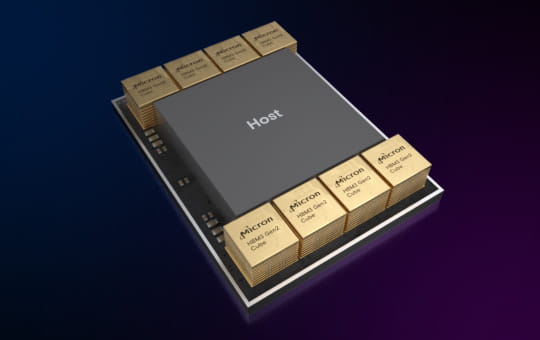

Micron announced HBM3 Gen2 with a 24GB product built on an 8-high die stack. This new generation increases bandwidth to 1.2TB/s, a ~50% uplift compared to the initial generation.
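As a quick sanity check on those figures, here is a minimal sketch that backs out the baseline bandwidth implied by the quoted numbers. It assumes the "~50%" uplift is exactly 50% and is measured per stack against the prior generation; the function name `implied_baseline` is ours and purely illustrative.

```python
# Figures quoted in the article.
GEN2_BANDWIDTH_TBPS = 1.2  # HBM3 Gen2 bandwidth, in TB/s
UPLIFT = 0.50              # "~50%" uplift, treated as exactly 50% (assumption)


def implied_baseline(new_bandwidth: float, uplift: float) -> float:
    """Return the baseline that, increased by `uplift`, gives `new_bandwidth`."""
    return new_bandwidth / (1.0 + uplift)


baseline_tbps = implied_baseline(GEN2_BANDWIDTH_TBPS, UPLIFT)
print(f"Implied baseline: {baseline_tbps:.2f} TB/s")
```

Under those assumptions the baseline works out to roughly 0.8TB/s, so the new generation adds about 0.4TB/s.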

Micron also says it has a new 12-high HBM3 stack coming in Q1 2024. What is important is that Micron says it will maintain the same package height. Package height matters for compatibility with existing designs that carefully place different silicon packages in exact alignment. This new product should be a 36GB stack and could lead to GPUs with 200GB+ of HBM, up from the 80GB models we see today.
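The capacity math can be sketched in a few lines. The per-stack figures come from the announcement; the stacks-per-GPU counts below are hypothetical values we picked for illustration, not something Micron or any GPU vendor has stated here.

```python
# Figures from the announcement.
GB_PER_STACK_8_HIGH = 24
DIES_8_HIGH = 8
DIES_12_HIGH = 12

# Capacity per die, assuming every die in the stack has the same density.
gb_per_die = GB_PER_STACK_8_HIGH / DIES_8_HIGH
gb_per_stack_12_high = gb_per_die * DIES_12_HIGH


def gpu_capacity_gb(stacks: int, gb_per_stack: float) -> float:
    """Total HBM capacity for a GPU with `stacks` identical HBM stacks."""
    return stacks * gb_per_stack


print(f"Per die: {gb_per_die:g} GB, 12-high stack: {gb_per_stack_12_high:g} GB")

# Hypothetical stack counts (assumption: not taken from the article).
for stacks in (5, 6, 8):
    total = gpu_capacity_gb(stacks, gb_per_stack_12_high)
    print(f"{stacks} stacks x {gb_per_stack_12_high:g} GB = {total:g} GB")
```

With 36GB stacks, a design needs at least six stacks to pass the 200GB mark, since five stacks would land at 180GB.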

Final Word

Perhaps the big question is around pricing. There is currently a GPU rush on parts like the NVIDIA H100 and A100. As a result, something like an NVIDIA H100 with the new HBM3 Gen2, or a future part with additional capacity and bandwidth, is an opportunity for NVIDIA and other AI chipmakers to charge more for their parts.

Several years ago, AMD bet on bringing HBM to its Radeon consumer desktop GPUs. While those were in development and launching, prices for NVIDIA data center GPUs, accelerators that also use HBM, increased dramatically. The HBM makers, realizing they had a critical component, raised prices. That pushed HBM into the high-end data center space where it sits today instead of onto consumer GPUs. Now, the same HBM makers see that NVIDIA is drastically increasing its chip prices, so we would not be surprised if HBM3 Gen2 comes at a notable price premium. It would be silly for Micron not to charge more for better technology that is in high demand.

God bless it…

I only like supply and demand driven pricing when it works in my favor.

Isn’t Micron something of an also-ran in the HBM space? That could lead to lower prices from them to get more market share.

Impressive news! The Micron HBM3 Gen2 with higher capacity and bandwidth is a game-changer for data-intensive applications. I’m excited to see the performance boost it will bring to various industries. Kudos to Micron for pushing the boundaries and delivering innovative solutions!