At Intel Innovation 2022, the company showed a few of us the next-generation accelerators built into its upcoming 4th Gen Intel Xeon Sapphire Rapids CPUs. This is a theme we have been discussing for some time on STH: onboard accelerators free up cores and effectively bolster per-core performance. That is part of the Sapphire Rapids philosophy, and we saw it in action in San Jose.

Intel Xeon Sapphire Rapids Shows Built-in Accelerators at Innovation 2022

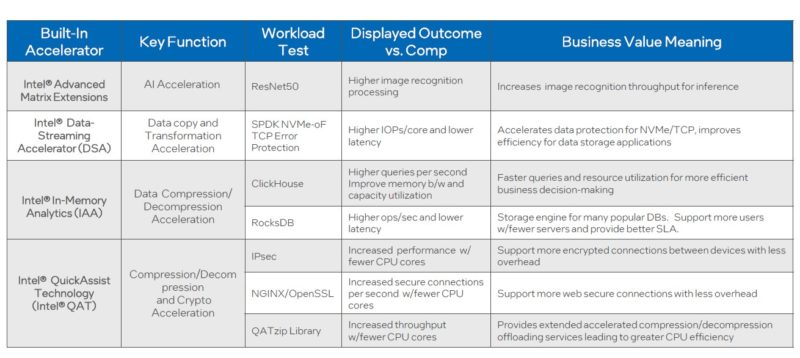

Here are the workloads that Intel was showing at Innovation 2022.

Intel had a number of systems there from QCT and Supermicro running Sapphire Rapids and Milan CPUs. We were able to take photos inside one of the Sapphire Rapids machines.

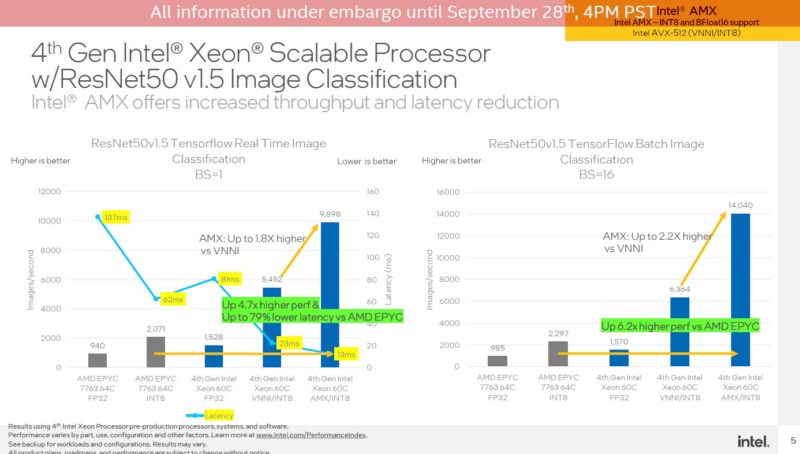

Here is an example of the new AMX (Advanced Matrix Extensions) running ResNet-50 inferencing and showing a large gain over VNNI on Ice Lake. We expect Genoa to have VNNI, but in the current generation, Ice Lake enjoys a benefit over Milan here simply because it has the newer extensions. With Sapphire Rapids and AMX, that benefit will likely increase on a per-core basis.

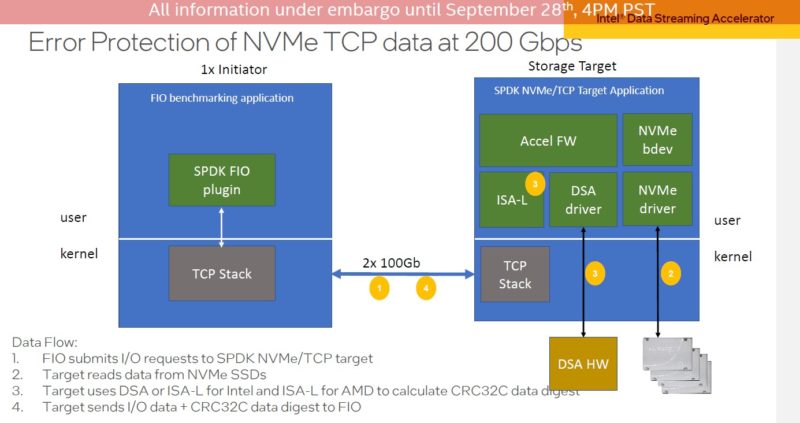

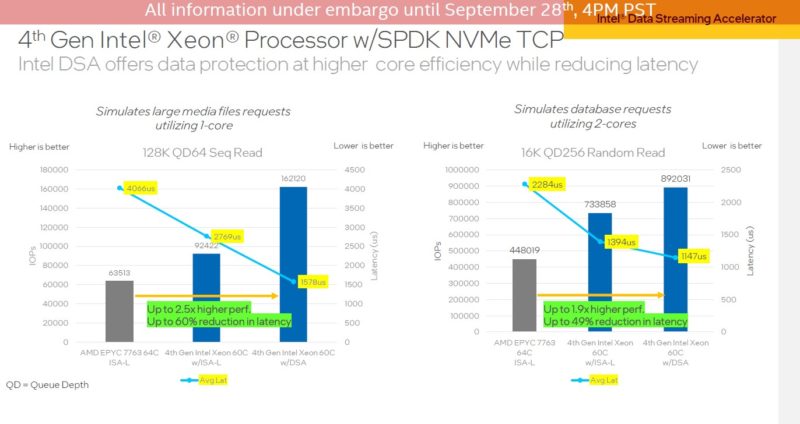

One of the other demos was of DSA (Data Streaming Accelerator). Here is that test setup, where Intel showed exactly what was going on in each system.

These systems were running live, and we actually got to see the numbers on screen and watch things like the SSD lights blink as the benchmark was running.

Here are the results, which were in line with the live demo.

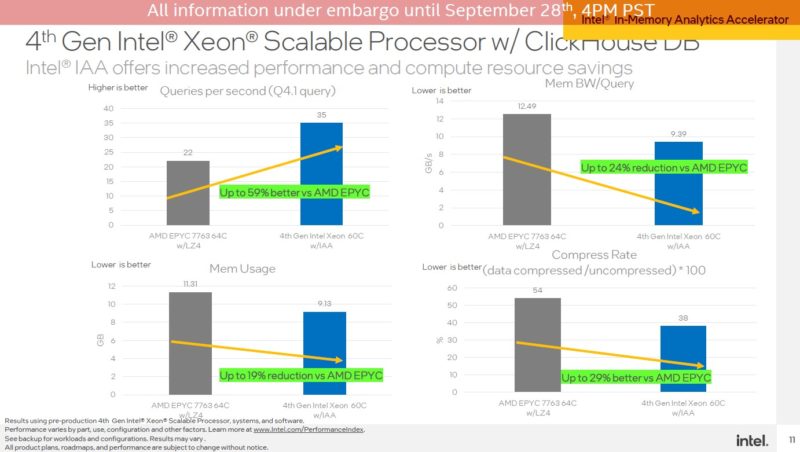

Here is the Intel IAA (In-memory Analytics Accelerator) running ClickHouseDB. Again, we want to make sure folks know this is a Sapphire Rapids Xeon, using accelerators, compared to AMD EPYC 7003 Milan.

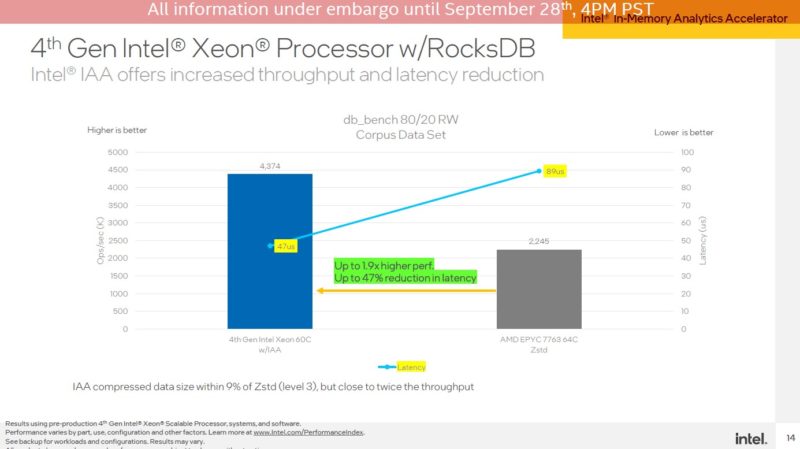

Here is the RocksDB setup using Intel IAA:

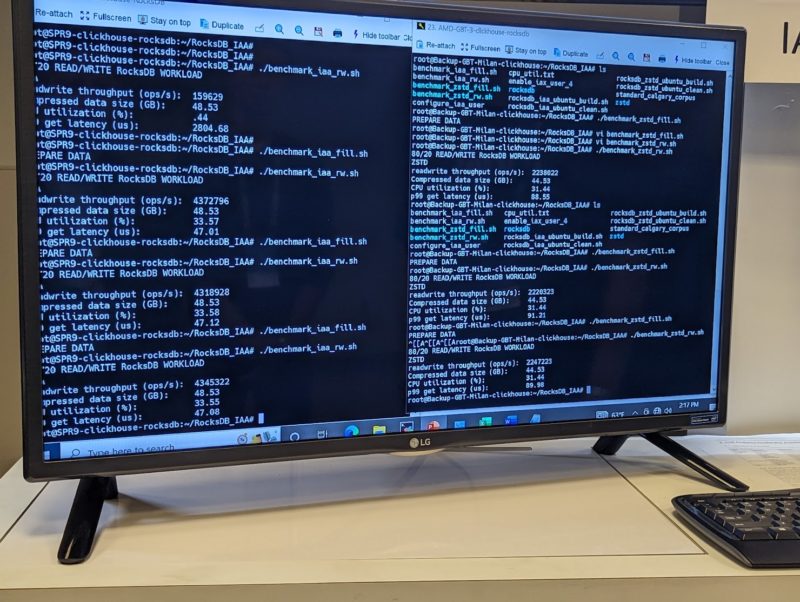

This is one I managed to take a photo of, not just video:

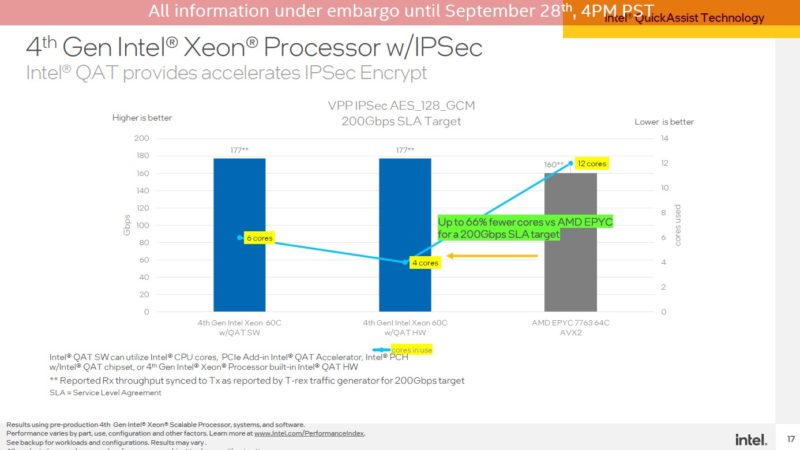

Next is Intel QAT or QuickAssist Technology. This is perhaps going to be the big accelerator. While many of the accelerators above are net new, the QAT is an existing technology that is being enhanced and brought onboard the chip itself. We last looked at QAT with the PCIe accelerator.

Here we have IPsec at 200Gbps. Note that this is faster than what we could see on Ice Lake, even with a PCIe accelerator.

Here is that test setup in action. What is not pictured is the large rack of servers to the right of these monitors.

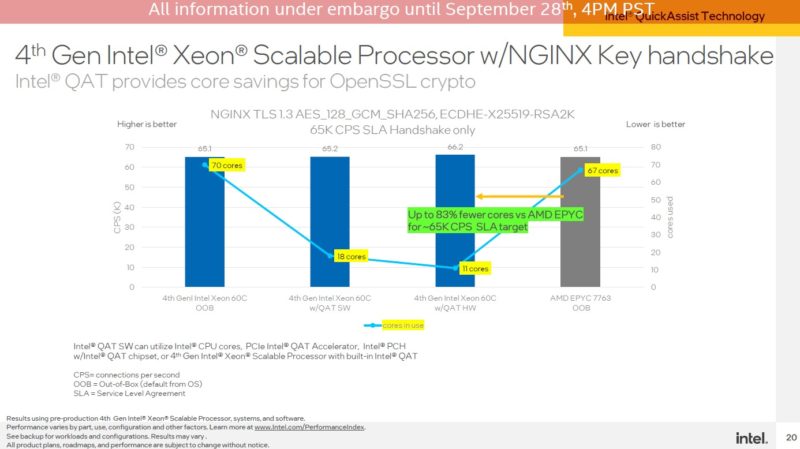

Here is the Nginx OpenSSL workload:

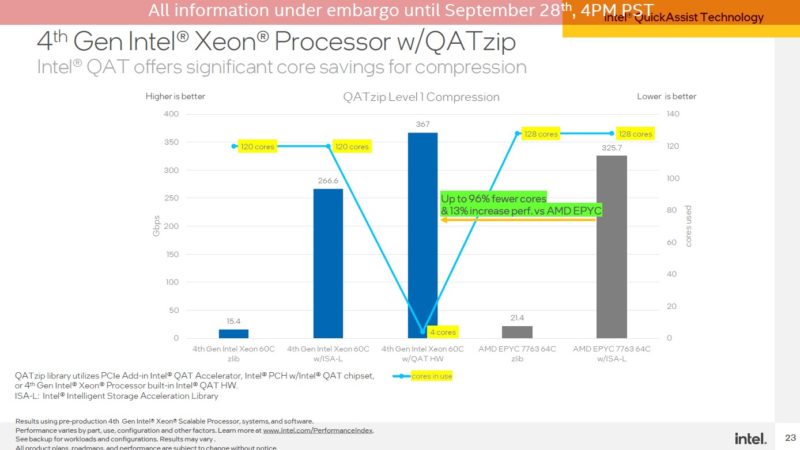

Here is the compression workload. What is really interesting is that if you look at some of the baseline data, without accelerators, AMD is coming out ahead. AMD with 128 Milan cores is out-performing the Sapphire Rapids Xeon system with 120 cores. That is about 7% more cores but around 39% better performance. Even with the ISA-L numbers, AMD is around 22% faster with only 7% more cores.
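As a rough check on the comparison above, the per-core gap can be worked out from the rounded figures quoted in the text. This is only a sketch: the 1.39x and 1.22x ratios are the approximate numbers from this article rather than exact measurements, and the function name is our own.

```python
def per_core_advantage(perf_ratio: float, cores_a: int, cores_b: int) -> float:
    """Per-core throughput of system A relative to system B.

    perf_ratio is A's total throughput divided by B's total throughput.
    """
    core_ratio = cores_a / cores_b
    return perf_ratio / core_ratio


milan_cores, spr_cores = 128, 120
core_ratio = milan_cores / spr_cores  # about 1.067, i.e. roughly 7% more cores

# Approximate ratios quoted in the article (Milan vs. Sapphire Rapids, no accelerators)
baseline = per_core_advantage(1.39, milan_cores, spr_cores)
isal = per_core_advantage(1.22, milan_cores, spr_cores)

print(f"core ratio:         {core_ratio:.3f}")
print(f"baseline per-core:  {baseline:.2f}x")
print(f"ISA-L per-core:     {isal:.2f}x")
```

Under those rounded inputs, Milan still comes out roughly 30% ahead per core in the baseline case and roughly 14% ahead with ISA-L, so the extra eight cores explain only a small part of the gap.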

This is a benchmark that we would not have seen Intel show a few years ago, given how well Milan is performing compared to Sapphire Rapids. The big implication is that Sapphire Rapids will need accelerators to outperform Milan and Genoa. Instead of using every core on either architecture for this workload, the onboard QAT accelerator means not only better performance but also using four cores instead of 120.

Final Words

Hopefully, this aligns well with the key theme that we showed a few months ago. A big part of Intel’s focus in this generation will be “more accelerators, more better” as we discussed in More Cores More Better AMD Arm and Intel Server CPUs in 2022-2023.

We will have more on these in a few weeks, but the main point here was really to show the accelerators in action. Intel has several new accelerators, but their success depends on developers starting to use them not just in the examples shown here but throughout a broader software ecosystem.
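To give a sense of what "starting to use them" can look like at the simplest level, here is a minimal sketch of runtime feature detection with a fallback path. It assumes a Linux system and reads the `flags` line of `/proc/cpuinfo`. The function names and the returned labels are hypothetical, and it only covers instruction-set extensions such as AMX and VNNI. Offload engines like QAT, DSA, and IAA are exposed as devices rather than CPU flags, so they are not covered here.

```python
from pathlib import Path

# Flag names as they appear in /proc/cpuinfo on Linux.
# The grouping labels on the left are illustrative only.
FEATURE_FLAGS = {
    "AMX": {"amx_tile", "amx_int8", "amx_bf16"},
    "AVX-512 VNNI": {"avx512_vnni"},
}


def parse_flags(cpuinfo_text: str) -> set[str]:
    """Return the CPU feature flags from the first 'flags' line, if any."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            return set(value.split())
    return set()


def pick_inference_path(flags: set[str]) -> str:
    """Choose the most capable code path the CPU reports (hypothetical labels)."""
    if FEATURE_FLAGS["AMX"] <= flags:
        return "amx"
    if FEATURE_FLAGS["AVX-512 VNNI"] <= flags:
        return "vnni"
    return "generic"


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    text = cpuinfo.read_text() if cpuinfo.exists() else ""
    print(pick_inference_path(parse_flags(text)))
```

In practice, most developers would rely on a library or framework to make this choice for them, which is exactly why broad ecosystem support matters.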

After having achieved software portability through optimising compilers that implement standards-compliant high-level languages, who wants to write code for specific accelerators that may not be available in the next generation?

First, the accelerators have to become so common that the average developer has ready access to the hardware. Next, libraries, compilers and even programming languages have to be extended to make use of those accelerator features in a portable way. After that the accelerator performance will be balanced against the ease of writing, maintaining and running the software in the future.

In my opinion, Knights Landing and Optane memory were both difficult paths compared to adding more cores that work well with existing software development tools. Are these new innovations different?

Exactly what Eric wrote. The fun fact is most business software is riding off of decades old base code that has had updates stapled to it so many times it can fool most into thinking it’s “new”. These accelerators are great when you have a workload that can use them, but most mixed load systems just don’t care. AMD and ARM are simply brute forcing the solution through numbers and raw performance. Intel is trying to get smart because they can’t compete in that arena.