With the introduction of the HPE ProLiant MicroServer Gen10, the company pivoted on a number of fronts. We covered the initial launch of the server some time ago. Our AMD Opteron X3421-powered unit cost us under $375, which is quite reasonable. HPE’s goal is to make an edge server capable of servicing small offices, retail locations, and even high-end home environments. Hallmarks of the HPE ProLiant MicroServer line include a focus on storage and low-power, nearly silent operation. Here, the HPE ProLiant MicroServer Gen10 does not disappoint. In our review, we are going to tear down the server to see the design decisions HPE made. We are going to benchmark the unit and see how much power it uses. Finally, we are going to discuss the pros and cons of the Gen10 iteration.

HPE ProLiant MicroServer Gen10 Overview

In our HPE ProLiant MicroServer Gen10 review, we are splitting the hardware overview into two parts. First, we are going to look at the general purpose server hardware. We are then going to focus on the storage-centric aspects of the server. The ProLiant MicroServer Gen10 is a storage-focused box so we wanted to align our review along that axis.

HPE ProLiant MicroServer Gen10 Hardware Overview

Upon unboxing the HPE ProLiant MicroServer Gen10, one will immediately notice its diminutive stature. The entire unit measures 9.25″ x 9.06″ x 10.00″. Finished in a matte material, the front carries the updated HPE logo and two USB 3.0 ports. The top of the unit has a slim optical drive bay; however, we suspect most people will use it for an SSD, if at all.

The rear of the unit has a power input, two more USB 3.0 ports, and two USB 2.0 ports. Beyond this, there are three video outputs. The large blue one is a legacy VGA port and there are two DisplayPort ports. If you need to run digital signage, perhaps for office dashboards or menus, the AMD Opteron X3421 has a GPU to handle this. There are lower-spec dual-core options, but if you are reading STH, we suggest getting the quad-core X3421 model.

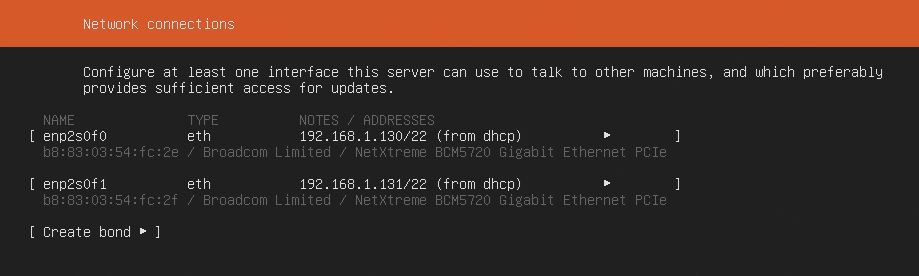

One can also see a pair of 1GbE network ports. Networking is handled by a Broadcom NetXtreme BCM5720, and both ports are detected in most OSes out of the box.

Our readers will likely see these as a middle ground between using Intel i210/i340 NICs and using lower-quality Realtek NICs, which cost pennies in a bill of materials. In this class of server, the Broadcom BCM5720 solution is more than acceptable.

The motherboard tray slides out after one disconnects all of the cables including the ATX power cable and SFF-8087 SATA cable. Inside there is a very functional layout.

The CPU is cooled by a passive heatsink. This is flanked by two DDR4 ECC UDIMM slots. It was a nice touch that our inexpensive review unit came with a single 8GB DDR4 DIMM instead of two 4GB DIMMs. 4GB DIMMs have become less common, and the implication is that one can upgrade this server to 16GB by simply adding an 8GB DIMM. If there were two 4GB DIMMs, they would be discarded in a memory upgrade.

The PCIe slots are interesting. HPE has a PCIe 3.0 x8 slot with an open-ended connector. The PCIe x4 slot is also open-ended but is only an x1 electrical slot. The open-ended design lets one install larger cards, although not with full bandwidth. Here, if the PCIe 3.0 x1 slot were an x4 slot, it would have made the expansion capabilities truly tantalizing. As an x1 slot, it simply does not have the bandwidth for high-speed NICs, NVMe storage, or most other add-in cards.
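The bandwidth argument can be checked with quick arithmetic. The sketch below is only an illustration: it uses the published PCIe per-lane signalling rates and line encodings, the function names are our own, and it ignores packet and protocol overhead, so real-world throughput will be somewhat lower than these figures.

```python
# Approximate PCIe link bandwidth per direction, before protocol overhead.
# Each entry: (signalling rate in GT/s per lane, line-encoding efficiency).
PCIE_GENERATIONS = {
    1: (2.5, 8 / 10),      # 8b/10b encoding
    2: (5.0, 8 / 10),      # 8b/10b encoding
    3: (8.0, 128 / 130),   # 128b/130b encoding
}


def pcie_gbps(generation: int, lanes: int) -> float:
    """Return the approximate usable bandwidth of a link in Gbps."""
    rate_gt_s, efficiency = PCIE_GENERATIONS[generation]
    return rate_gt_s * efficiency * lanes


def can_feed(generation: int, lanes: int, required_gbps: float) -> bool:
    """True if the link has at least the bandwidth a device needs."""
    return pcie_gbps(generation, lanes) >= required_gbps


if __name__ == "__main__":
    # The two slots described above: x8, and x4 physical wired as x1.
    for label, lanes in [("x8 slot", 8), ("x1 electrical slot", 1)]:
        print(f"PCIe 3.0 {label}: {pcie_gbps(3, lanes):.1f} Gbps")
    print("x1 can feed a 10GbE port:", can_feed(3, 1, 10.0))
```

A PCIe 3.0 x1 link works out to roughly 7.9 Gbps, which is below the line rate of a single 10GbE port, while the x8 slot has ample headroom for a 10GbE or 25GbE adapter.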

From a hardware perspective, this is mostly it. Our unit had a low-end quad-core processor with an integrated GPU allowing for 4K video output. There are two DDR4 UDIMM slots, with an 8GB stick of RAM installed in our unit, and dual 1GbE NICs. This is not a heavy compute platform, but for basic network services and storage, it is enough. We think of the AMD Opteron X3421 as an Intel Atom C3000 competitor in terms of performance and will have data to show why. For now, let us move on to storage.

That conclusion is really insightful. If you want multiple low cost servers with hard drives for clustered storage, this is a really good platform. If you just want more power, I keep buying up into the ML110 G10 because that’s so much more powerful. It’s also larger so sometimes you can’t afford the space.

Finally, an honest review that actually shows cons as well as what’s good.

We use MSG10s at our branch offices. They’re great, but servicing them sucks. We’ve been training finance people to do the physical service on the MSG10s.

One issue you didn’t bring up is that there’s a lock on the front to secure all the drives. We had one where it wasn’t locked; an employee flipped the lock and ended up breaking the bezel.

I hope you guys do more ProLiant reviews. They need a site with technical depth and business sense reviewing their products not just getting lame 5 paragraph “it’s great” reviews.

To anyone not using the MSG10, they do have downsides, but they’re cheap, so you have to expect that it isn’t a Rolls-Royce at a Kia price tag.

It’s too bad this doesn’t have dual x8 PCIe. It also needs 10G. This is 2019. To get 10G or 25G you’ve gotta burn the x8 which leaves you with only a x1 slot left. Can’t do much with that.

What about the virtualization capabilities? In your article on the APU, your lscpu output reported AMD-V. It would be nice to know if it’s fully implemented in the BIOS and if there are reasonable IOMMU groups. Also, Intel consumer/entry-level processors lack Access Control Services support on the processor’s PCIe root ports, so it would be nice to see what this AMD APU offers.

Why do I care? Because it would be nice to have a dedicated VM running less trusted graphical applications for digital signage. In a home office use case, one could use virtualization to combine a Linux NAS and a Kodi HTPC on the same hardware. Exposing the Kodi VM instead of the server OS to your family might have advantages.
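For readers who want to answer the IOMMU question on their own unit, a minimal sketch follows. It assumes a Linux host, where the kernel exposes IOMMU groups under `/sys/kernel/iommu_groups`; the function name `iommu_groups` is our own, and nothing here reflects results measured on the MicroServer Gen10.

```python
from pathlib import Path


def iommu_groups(root: str = "/sys/kernel/iommu_groups") -> dict:
    """Map each IOMMU group number to a sorted list of PCI addresses.

    Returns an empty dict when the directory is missing, which on Linux
    usually means the IOMMU is disabled or not exposed by the firmware.
    """
    base = Path(root)
    groups = {}
    if not base.is_dir():
        return groups
    for group_dir in base.iterdir():
        devices_dir = group_dir / "devices"
        # Group directories are named with plain integers.
        if not group_dir.name.isdigit() or not devices_dir.is_dir():
            continue
        groups[int(group_dir.name)] = sorted(p.name for p in devices_dir.iterdir())
    return dict(sorted(groups.items()))


if __name__ == "__main__":
    found = iommu_groups()
    if not found:
        print("No IOMMU groups found; check BIOS and kernel settings.")
    for number, devices in found.items():
        print(f"group {number}: {', '.join(devices)}")
```

Devices that share a group generally have to be passed through to a VM together, so a layout where the add-in card sits alone in its group is the one to hope for.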

Like the other small HPE ProLiant MicroServers in the past, an attractive-looking little box. I wish it were possible to install an externally accessible SFF x8 mobile rack such as a Supermicro CSE-M28SAB, and that the motherboard had two SFF-8087 ports, or better, an M28SACB with SFF-8643, so one could have two RAID 10 volumes.

Well, I suppose you could put a ZOTAC GT 710 1GB graphics card in the spare slot, as it is a purpose-built PCIe x1 card. Marginally better than the iGPU if you have the need, and handy to keep around if your onboard graphics quits working on a server and a small x1 slot is all you have left to work with.

Is the idle power measurement with disks spinning?

It is a very good system; I got two with the dual-core APU and 16GB of memory. It is possible to use the PCIe slot for an SSD card as well; I have an Intel i750 that fits inside and works fine. I reckon that a couple of modifications to a future design could really make it quite good.

Running the latest version of Solaris on this works a treat for hosting files over NFS.

The performance of these just doesn’t justify the price. I have an older model I was able to pick up for a great price (NEW) and it works great for very small stuff, but it choked when I attempted a large ZFS FreeNAS setup.

Under Test Configuration, you have 4x 10TB HDDs and a 400GB SSD. I thought that this machine could only handle a maximum of 16TB overall. Is this not true?

Does anybody know if the hard disks can be used in JBOD mode? I intend to run a Linux-based software RAID-5, because I do not want to rely on the controller hardware and RAID-5 isn’t supported anyway. Power outages are no concern to me as I am using a UPS. Thanks for any experience shared.

I cannot find anywhere whether this little box supports hardware passthrough like VT-d on Intel. Can anybody tell?

Got it as a micro server, focused on noise and energy. Thoughts:

– louder than my strict requirements: 30dB, good for office, not good for audiophiles and bedroom. Need to sync it with drives (Seagate 2TB – 20dB) by 12cm fan replacement. Can’t really hear the tiny fan, usually the opposite is the case.

– accessories: only electric cable, no optical drive as pictured everywhere

– annoying connection to optical drive / SSD, what a colossal waste of time for the customer straight away… need to search for a very specific floppy cable hack, with very low availability on the market. Overall man-hours very high, just to make HP save $1

– annoying connection to fan by some generic 6pin port, replacement will be difficult as they shuffle pins and PWM management. Another monster expense to count on.

– paper limit of 16TB doesn’t apply in real life of course. My config is 3x2TB RAID0 + 10TB (nightly scrubber), all 4 is 20dB (the max for any of my setups), even the big one from the brand called “WD” (first time trying this brand after 20years with Seagate only, they now offer 10-12TB drives with idle 20dB!)

– BIOS is from AMI, triggers Marvell and Broadcom first, you can set them up to set up hardware RAID etc. which you won’t do, but be sure boot time will be very long thanks to this. You can set TDP from 12(!)W to 35W.

– PCIe x4 (unfortunately just x1 electrically) can be used for a 10Gbit Aquantia card (capped to 8Gbit by PCIe), which is now very widely used in appliances. I have a direct link to a QNAP 5Gbit USB3 adapter (capped to 3Gbit by the manufacturer), again Aquantia; this way I can use this connection for 3Gbit locally, while the server will connect an aggregated 2Gbit to the switch. Use case: video editing.

– PCIe x8 can then stay free for NVMe, Gfx etc.

– one use case is a NAS showcase, with the Synology btrfs encryptfs not-so-cool implementation, getting 240MB/s on Samba. I thought there was much more potential as the raw aes256 benchmark throws 700MB/s. Now I noticed the 1/4 CPU utilization, guessing 1 core is used; once I ran dd if=/dev/null of=/volume1/test bs=8k in 2 shells in parallel, the speed hit 400MB/s, still not challenging the RAID0 cap of spinning drives, good enough for this whacky file encryption. Machine loves multiple users.

– I assume FreeNAS ZFS will give better numbers. Will continue tests with more use cases (different NAS systems, VPN gateway role, VM).

– without encryption getting 350MB/s, which is the limit of the 5Gbit USB QNAP card (capped to 3Gbit)

– don’t have 2 SSDs there to check 10Gbit saturation, but the prehistoric Marvell controller is connected via PCIe 2.0 x2, guaranteeing 10Gbit and nothing more, which is good to save energy

– system is so cold during testing that I think of just turning off the fan or using a fixed low-noise, low-rpm one

– no vibrations are transferred from hard drives, this time i have no use for pads

– overall performance for price is good, and performance for energy is fantastic.

1) you want to compare commercial NASes, ok, the first model of the same performance is the QNAP 472xt, 1200$, 33W (bleh) on idle… wait, they told us commercial NASes always save more energy if not money? here you pay 2x for 2x energy waste

2) you want to compare embeds like top routers Nighthawk, WRT32, ok, they eat just a bit less energy but 4 to 10x slower performance on all ciphers – can’t serve more than 1 user

3) you wanna compare Xeon anything, you scale up performance with cores, but in each case, consumption will be horrid

4) finally you wanna compare with Gen8, seriously, I don’t get this moaning about how Gen10 sucks, you really love noisy 35W “micro”servers with half the performance?

I’m thinking of getting one of these. Can I use an SSD in this unit, and does Windows Server 2016/2019 have any issues on this hardware?

Makes a nice Avigilon DVR… all decked out and still under $2k and much more peppy than the Gen8.

The buy.hpe.com website had this listed at $109.99, but they canceled all the orders, claiming a pricing error in their automated system. Of course, they didn’t take the price off the website until after several complaints.

I’ve just bought an HPE ProLiant MicroServer Gen10 and now I want to install an NVMe SSD, a Transcend 220S 512GB, into the PCIe x8 slot. I want to install it via a SilverStone ECM24 adapter (M.2 to PCIe x4).

Does anybody know if the SSD can be used as a boot drive for Windows 10 or Windows Server 2016? Do I need to configure the BIOS in this case?

Thanks for any help.

Of course you can boot anything from any drive. Windows, FreeBSD, Linux, Proxmox from USB, HDD, SSD, NVMe. I’ve got all of these drives inside and booted from all of them.

The only thing I dislike is the stupid fan there; it’s a very tough job to trick the system into believing it’s running its lousy fan. Wiring is completely different and I shorted one server and returned it (later the seller, one of the biggest retailers, quit selling them). If you use a voltage reducer or wiring tricks, the motherboard will compensate (run faster). Really annoying to hear the fan go up and down all day.

Hi,

What do I need to do to use SAS disks in the MicroServer Gen10 (not Plus)? Can I use SAS disks in this bay? Is it possible to use SAS disks and connect the disk bay cable to a hardware RAID controller with a cache battery?

Best regards

I can confirm.

SAS disks work with the original enclosure and cables.

You only need a SAS HBA or RAID controller (6/12Gbit).