Dell EMC PowerEdge R740xd Hardware Overview

The Dell EMC PowerEdge R740xd is a 2U server with an attractive bezel. Behind that bezel is an extraordinarily customizable system. Our particular system came in a 24x 2.5″ hot-swap front bay configuration, which we will cover in more depth in our storage section.

On the right side of the server is the server tag along with power buttons and front I/O ports (VGA and USB) for servicing the machine from a cold aisle.

As one would expect from a PowerEdge, the drive bays are all hot-swappable including both the SAS3 and the NVMe varieties.

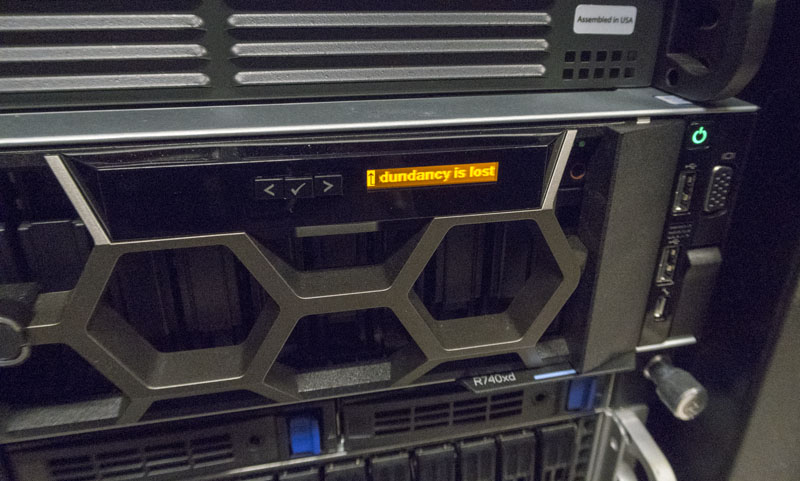

As an option, you can get the LCD bezel which can provide diagnostic information, such as warning you when power supply redundancy has been lost.

Even if you do not have the LCD bezel, the Dell EMC PowerEdge R740xd still has a warning system.

Perhaps the more interesting side is the rear of the chassis which we will look at before going in-depth on the server internals.

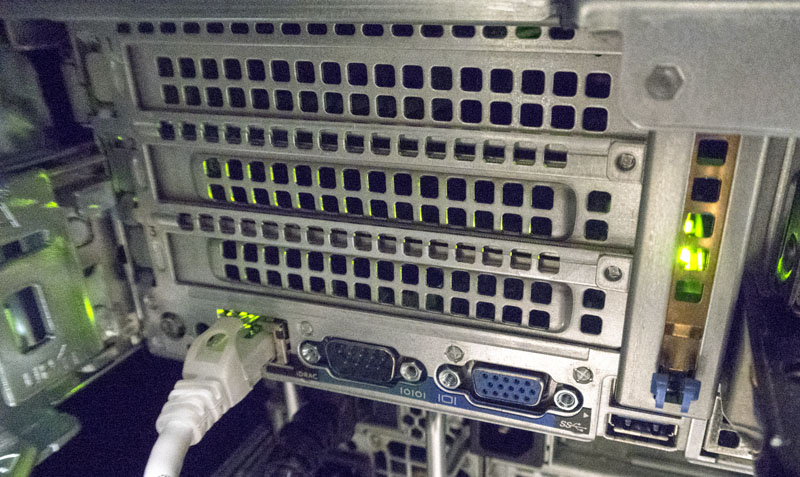

There is an RJ-45 Ethernet port for out-of-band iDRAC management. Many organizations have a separate physical network for OOB management interfaces, and the left-side orientation allows for easy cabling. There are legacy serial, VGA, and USB 3.0 ports on the rear panel for connecting KVM carts in the data center.

Like the Dell EMC PowerEdge R640 we reviewed, there are no 1GbE or 10GbE ports standard. The R740xd instead relies upon a mezzanine card. Our test system came with dual 25GbE via a Mellanox ConnectX-4 Lx based mezzanine controller. This solution worked with both our 25GbE infrastructure including 25GbE breakouts from our Dell Z9100-ON 100GbE switch and our 10GbE/40GbE infrastructure.

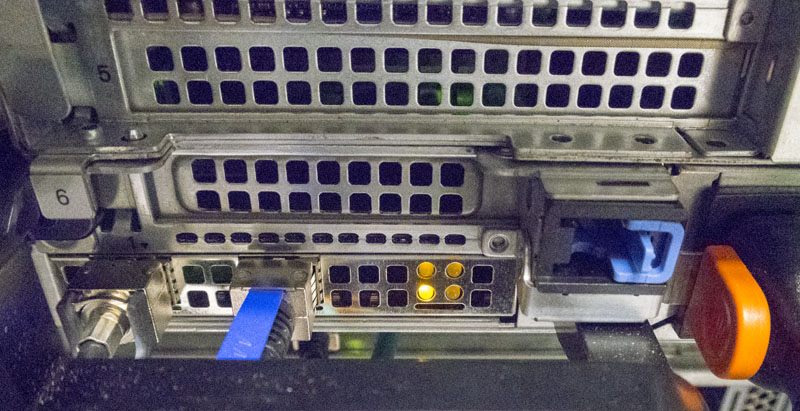

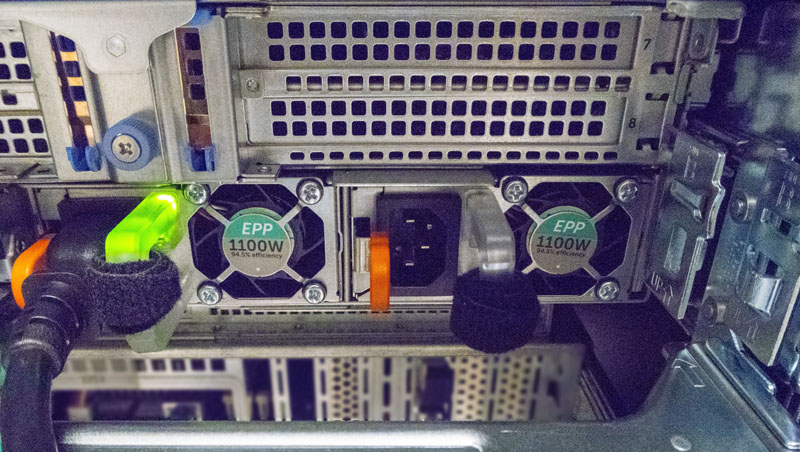

The rear of the chassis also includes the hot-swap power supplies. Our test unit came with redundant 1100W PSUs. Like other Dell EMC servers and switches, when a PSU is plugged in and operating properly, the handle lights up green. This was hard to show until we had the pedestrian observation that removing a power cable would show one PSU experiencing a power failure and thus no green light. During this test, the machine continued to run a workload using in excess of 800W, so the redundancy worked as expected.
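The reasoning behind that cable-pull test can be written down as simple arithmetic. The sketch below is only an illustration: the function names are hypothetical, the 800W figure stands in for the "in excess of 800W" load we saw, and it ignores PSU efficiency curves, derating, and input voltage, which a real power budget would need to account for.

```python
def capacity_after_failures(psu_watts: int, psu_count: int, failed: int = 1) -> int:
    """Rated output (W) still available after `failed` PSUs drop out.

    Assumption: every remaining PSU can deliver its full rated wattage.
    """
    return max(psu_count - failed, 0) * psu_watts


def survives_single_psu_failure(psu_watts: int, psu_count: int, load_watts: int) -> bool:
    """True if the remaining PSUs can carry the load after one PSU fails."""
    return load_watts <= capacity_after_failures(psu_watts, psu_count, failed=1)


# Values from our test unit: two 1100W PSUs, roughly 800W of load (illustrative).
PSU_WATTS = 1100
PSU_COUNT = 2
LOAD_WATTS = 800

remaining = capacity_after_failures(PSU_WATTS, PSU_COUNT)
headroom = remaining - LOAD_WATTS

print(f"Capacity with one PSU failed: {remaining} W")
print(f"Headroom at {LOAD_WATTS} W load: {headroom} W")
print(f"Redundancy holds: {survives_single_psu_failure(PSU_WATTS, PSU_COUNT, LOAD_WATTS)}")
```

With these numbers a single 1100W supply still has a few hundred watts of margin, which matches what we saw when the machine kept running on one PSU.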

These three photos of the rear chassis had an ulterior motive. We wanted to show the three main PCIe expansion slot blocks which sit above these three sections. As we move to the interior of the chassis you will see how the Dell EMC PowerEdge R740xd leverages these three blocks of horizontal PCIe expansion risers to provide an enormous amount of versatility.

Inside the Dell EMC PowerEdge R740xd

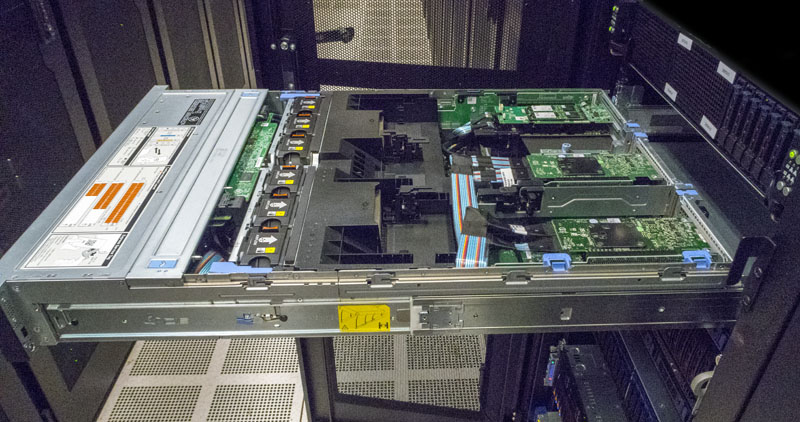

A key theme when we review Dell EMC servers is just how easy they are to service. An example of how the PowerEdge differentiates itself in this area is simple, yet important. The entire server pulls out of the rack and can be serviced easily without needing to remove the unit from the rack. You may think this is a standard feature on all current generation servers. It is not. Here you can easily remove the lid and access the rear PCIe risers as well. That can save several minutes per service call.

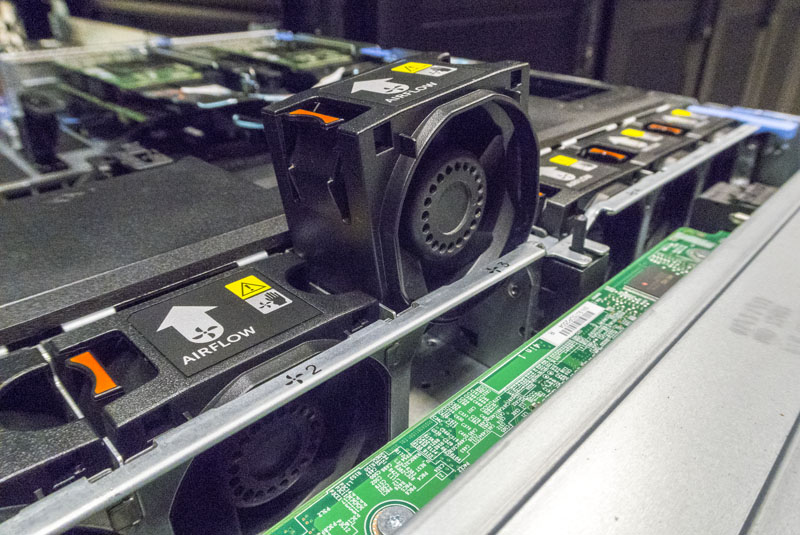

Almost everything inside the chassis can be easily serviced. For example, the fans are hot-swappable with a single hand. Some vendors have implementations they claim are hot-swap but require two hands, frustration, and a bit of luck to quickly swap them out. In PowerEdge, this is a problem solved generations ago and you get the benefit of a mechanical design and layout incorporating feedback from fleets of servers being serviced in the field. From a performance standpoint, these are not exciting aspects but a machine that can be serviced 2-3 minutes faster is a server that is back up and running 2-3 minutes faster.

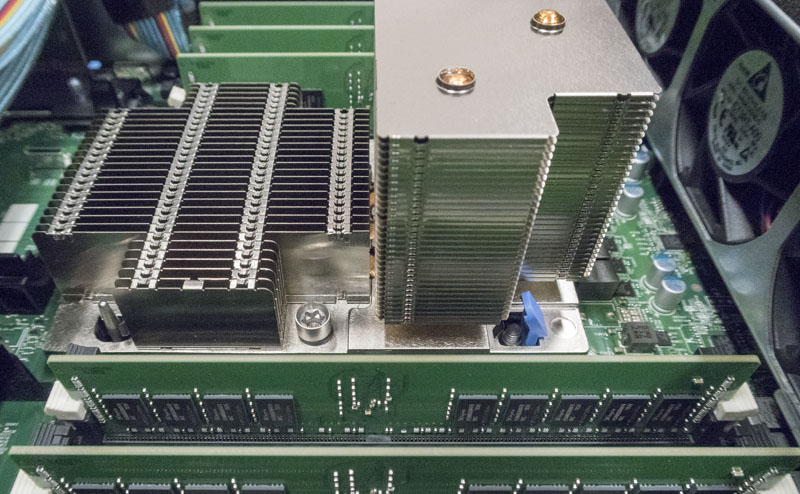

In that vein, here is another innovation which is exciting to people who operate servers in the field. A standard Intel Xeon Scalable LGA3647 heatsink has two pins and four Torx screws. At STH we even have a guide on the LGA3647 narrow versus square ILM implementations if you want to nerd-out on sockets and coolers. Dell replaces two of the Torx screws with a clip solution with easy-to-engage blue tabs. Since we simulated upgrading CPUs several times while doing our performance testing, we can tell you this little design change makes upgrades a minute or two faster.

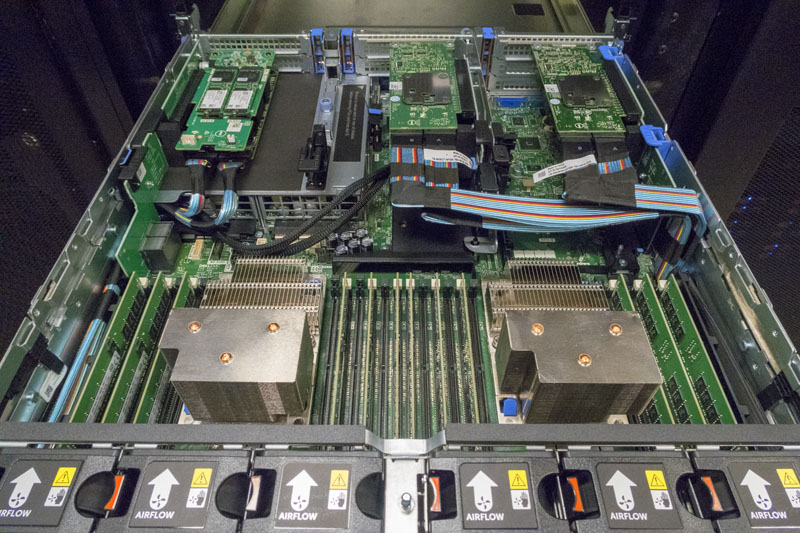

Removing the cooling shrouds, we get a great overview of the system’s internals. After the fan partition, there are two sockets and a full set of 24 DIMM slots, 12 per CPU. Each Intel Xeon Scalable CPU in this generation can handle 768GB of RAM, and some special “M” SKUs can handle up to 1.5TB per CPU. The big change from the Intel Xeon E5 generation is the move from 4-channel to 6-channel memory. Even using Intel Xeon Silver or Gold 5100 series CPUs with DDR4-2400 maximum memory speeds, you get 50% more memory bandwidth than the previous-generation Intel Xeon E5-2600 V4 CPUs. The new platform can also take up to DDR4-2666 RAM for an extra 10% or so of memory bandwidth.
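For those who want to check the 50% and 10% figures, here is a rough sketch of the arithmetic. It computes theoretical peak bandwidth per socket as channels × transfer rate × 8 bytes (a DDR4 channel is 64 bits wide). The function name is our own, and these are paper numbers only; measured bandwidth will be lower and depends on DIMM population and workload.

```python
BYTES_PER_TRANSFER = 8  # one 64-bit DDR4 channel moves 8 bytes per transfer


def peak_bandwidth_gbs(channels: int, mega_transfers_per_sec: int) -> float:
    """Theoretical peak memory bandwidth per socket in GB/s (decimal GB)."""
    return channels * mega_transfers_per_sec * BYTES_PER_TRANSFER / 1000


# Previous generation: Xeon E5-2600 V4, 4 channels of DDR4-2400.
e5_v4 = peak_bandwidth_gbs(4, 2400)
# Xeon Scalable with 6 channels, at DDR4-2400 and at DDR4-2666.
scalable_2400 = peak_bandwidth_gbs(6, 2400)
scalable_2666 = peak_bandwidth_gbs(6, 2666)

gain_from_channels = scalable_2400 / e5_v4 - 1
gain_from_speed = scalable_2666 / scalable_2400 - 1

print(f"E5-2600 V4, 4ch DDR4-2400:    {e5_v4:.1f} GB/s")
print(f"Xeon Scalable, 6ch DDR4-2400: {scalable_2400:.1f} GB/s (+{gain_from_channels:.0%})")
print(f"Xeon Scalable, 6ch DDR4-2666: {scalable_2666:.1f} GB/s (+{gain_from_speed:.0%} over DDR4-2400)")
```

The channel count alone accounts for the 50% uplift, while stepping from DDR4-2400 to DDR4-2666 adds roughly another 11% on top.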

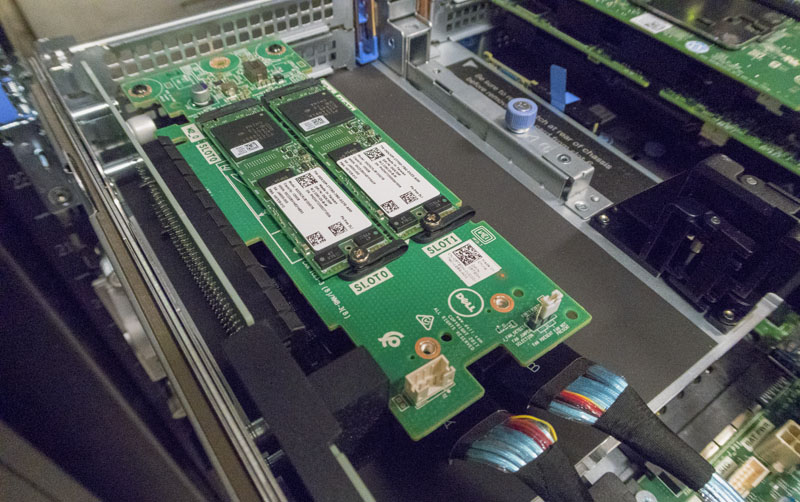

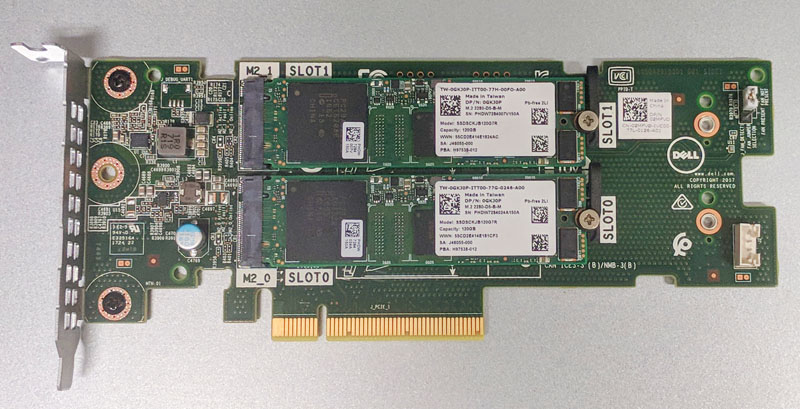

With this generation, Dell EMC has a solution we really like: the Dell BOSS. Perhaps it is just the name, but technically it is an M.2-based OS boot device. You can use up to two M.2 SATA drives and load your OS.

The advantage here is tangible. You do not need to utilize a valuable front hot-swap bay for OS boot devices. Beyond that, the Dell BOSS can be ordered in RAID 1 configurations, which gives you mirrored OS boot media that can withstand a drive failure. If you are ordering a Dell EMC PowerEdge R740xd, get this and order the two-drive RAID 1 version.

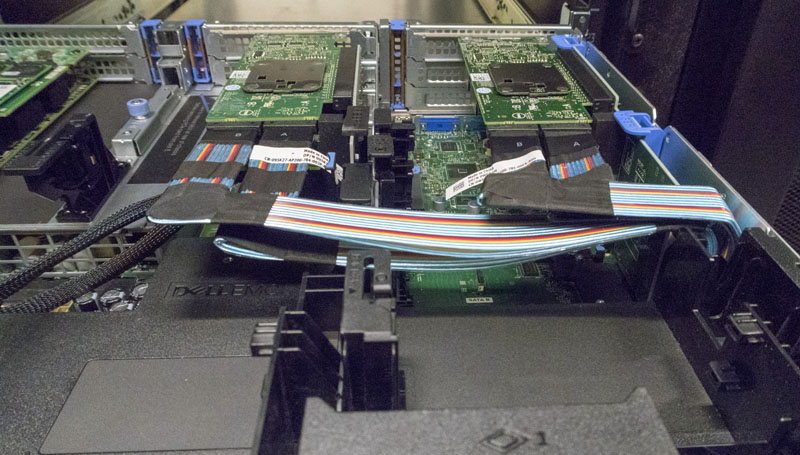

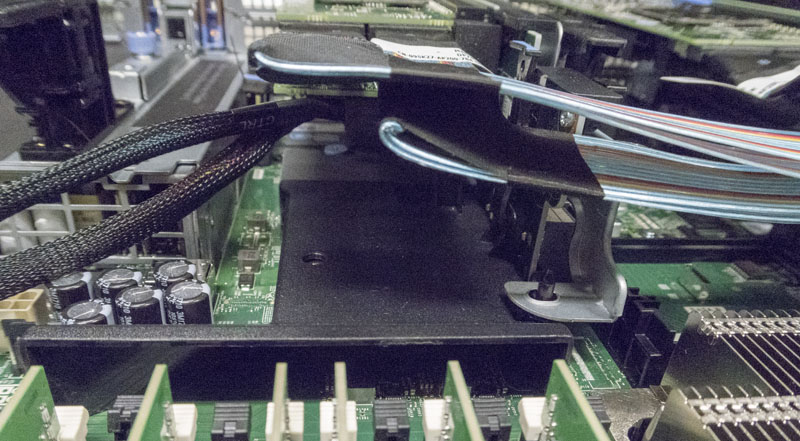

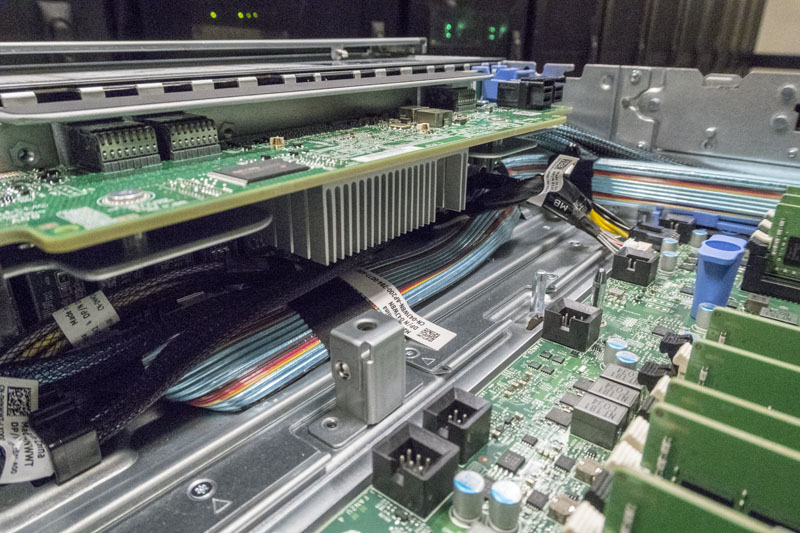

You are probably noticing that there are cables in the color of a 1970’s striped shirt laced about the chassis. These are all neatly sized and routed. In our test chassis, we have the ability to use hot-swap NVMe drives. These cables and the PCIe retimer cards they attach to essentially allow Dell’s engineers to route NVMe to the front of the chassis. There are a few other benefits to this cabling method. It turns out that pushing PCIe over long distances using cables can yield lower signaling noise than routing through PCB. The other major benefit is that you can use the same chassis with the same risers and add on the PCIe cards and cables as necessary in the systems you want to have NVMe capabilities in. For large cluster admins, this means a common chassis, common risers, and then the ability to have the factory customize slightly based on needs.

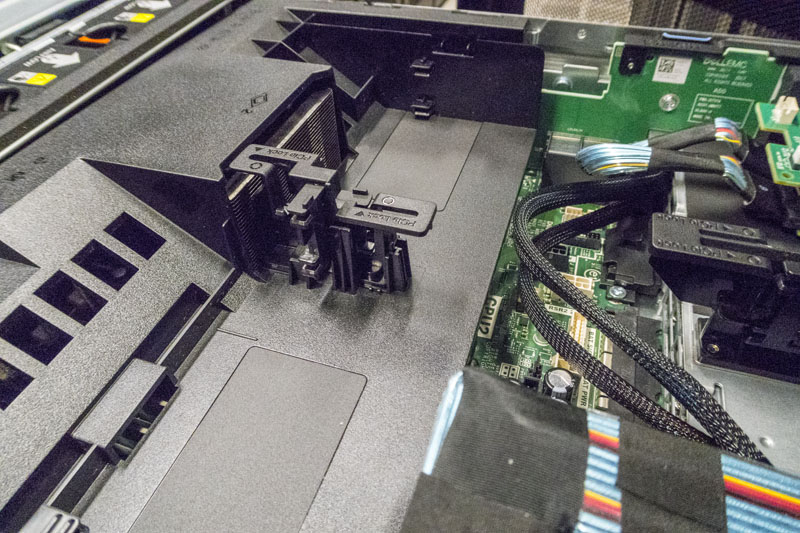

Here is a shot of what the triple slot riser 1 looks like without the PCIe card installed. Dell’s engineers did a great job of keeping the chassis relatively clear of obstruction so even longer cards can fit easily.

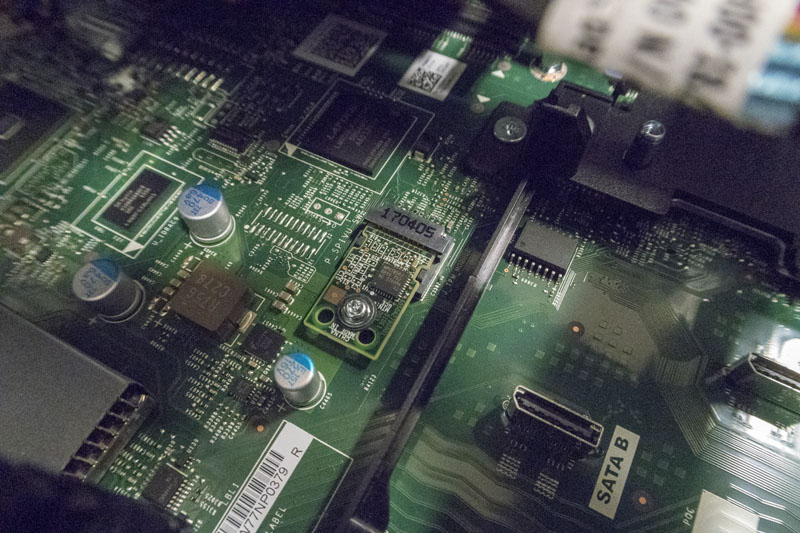

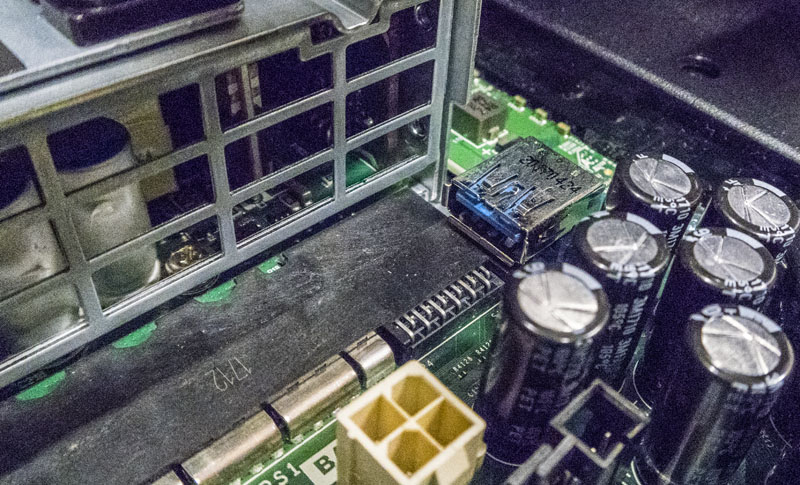

Under the riser 1 slots, we can find our TPM module. Dell offers either TPM 1.2 or 2.0 modules. They are also one of the few screwed-in components which makes sense. You do not want these to be removed and replaced easily.

Providing SAS3 12Gbps connectivity is a Dell PERC H740P controller with 8GB NV cache. These are common controllers in the Dell EMC PowerEdge line. For example, you can get these in the 1U Dell EMC PowerEdge R640 as well.

When we were going through photos, this one stuck out. That black piece of plastic behind the DIMMs is another unique feature. It is a cooling duct for the networking mezzanine card that we discussed when we looked at rear I/O. Many vendors will leave an open networking card. As the industry moves to dual 100GbE cards that are running hotter, some of the OCP mezzanine card slot implementations we are seeing in servers cannot get enough airflow to cool 100GbE mezzanine cards. Dell’s engineers duct airflow specifically to the networking controller to ensure reliable operation.

There are small features all over the chassis that show just how well thought out the chassis is. One example of this is the multitude of PCIe locks in the chassis. They are at the end of the risers (right side of the picture below) and help keep cards secure for shipping and help keep PCIe slots safe from heavier and longer cards over time. GPU compute support is a major focus for Dell in this generation and you can see how the system supports longer cards with these locks. When we replaced the cooling baffle, we noticed there were more on this piece for even longer cards.

In a way, that summarizes the internals of the PowerEdge R740xd. There are features tucked everywhere. If you are a vFlash customer, there is a small PCB that fits next to the power supplies that holds the SD card.

Another great example is the internal USB 3.0 Type-A connector. These are still popular for license keys and embedded OS deployments so Dell’s engineers found a place for one, just above the PSU mating connector.

You can remove the entire fan partition using two levers if you need to service the front of the backplane. Here you can see the SAS3 cables, expander and NVMe cables all connected to the R740xd’s backplane. The practical benefit of this is that not only are the drives hot swap, but Dell can also support NVMe or SAS3 SSDs in the same physical slot. This is a differentiating implementation as we have seen many servers where a hot-swap bay is wired for one or the other at the factory.

That is probably one of the more thorough hardware overviews you will see of the PowerEdge R740xd and as you can see, we tore the system apart and (successfully) put it back together. The process was surprisingly easy for a machine this complex and flexible. Through the process, you can see how the PowerEdge team designed the system for reliability while also making it easy to service.

Now that we have looked at the hardware in detail, we are going to look at the management, followed by performance and power consumption before giving our final thoughts.

This is the most in-depth review I’ve ever seen on a server and 10x better than whitepapers. I really like that you went into performance with so many different CPU options. You could have said though that slower bronze and silver CPUs can’t use ddr4-2666 because of Intel’s limitation. You’ve still got a massive amount of information in here. I’m going to read again tomorrow and share with our team.

Holy hell. What a review. We’ve got a few racks of these already. I’d wholeheartedly concur.

It took me 32 minutes to read. You can almost turn this into a Kindle book but the pictures would suck. Really good STH. For me those servicing pics stand out and I’m normally a Lenovo admin. Maybe it’s time to look.

With the first few sentences I thought ‘wait.. 1U? It’s a 2U machine!’ :P

HC node for sure. I’d echo the thoroughness comments. You’ve got everything here.

Maybe you can do a video next time?

Read pages 1 and 2. Coffee break. Read pages 3-5. Lunch. I agree you’d be better off turning this into a Kindle book.

This is an awesome high quality review! Really good job STH.

Is this with SSD or only with SAS or SATA?