For anyone who has read the forums over the past few weeks or months, there has been quite a bit of discussion around the Dell PowerEdge C6100 XS23-TY3 cloud server. For a quick rundown, there are two main resources. The first is the formal Dell PowerEdge C6100 XS23-TY3 review. The second is the growing forum topic on the Dell C6100 XS23-TY3, which includes lists of common replacement part numbers (as complete as we have been able to research) and pages of user experiences. I want to call out a few contributors specifically for their interesting work on the Dell C6100 XS23-TY3.

Taming the Dell C6100 XS23-TY3 to Tolerable Home Lab Levels

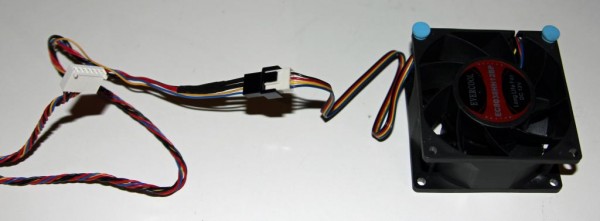

The first effort was truly innovative. PigLover experimented with his Dell C6100 XS23-TY3 and managed to make it tolerable. At idle the server is not too loud, but under full load it can get quite noisy. PigLover spliced replacement fans into the chassis, greatly reducing noise while also saving a bit on the power bill.

Check out this thread to follow the mod from origin through installation, along with tips for those of us trying it.

Adding an Internal USB Port to the Dell C6100 XS23-TY3

One area highlighted in many STH motherboard reviews is that server motherboards need internal USB Type-A connectors. Chuckleb, with help from several others, has done some great work on making an internal USB header a reality.

Check out this thread to see the great work. Note – I am personally hoping that someone ends up selling these!

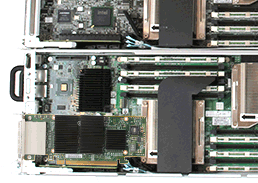

The SAN/NAS Node C6100

One longtime contributor, dba, confirmed that an LSI 9202-16e fits in a Dell C6100 XS23-TY3 low-profile node alongside a mezzanine dual-port InfiniBand HBA. In practical terms, this means the node can support up to 6 internal drives and 16 directly attached external drives (more with expanders) while still having dual 40Gbps InfiniBand and dual gigabit Ethernet.

Great to know that these can be turned into storage nodes for smaller cloud deployments.
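To make the drive-count arithmetic above concrete, here is a minimal sketch. It assumes the 9202-16e's 16 external SAS lanes are presented as four 4-lane connectors, that each lane reaches one drive when direct-attached, and, for the expander case, that each connector feeds one expander enclosure of a hypothetical size. The function name `node_drive_count` and its parameters are illustrative, not from any Dell or LSI tool.

```python
from typing import Optional


def node_drive_count(internal_bays: int,
                     external_connectors: int,
                     lanes_per_connector: int = 4,
                     drives_per_expander: Optional[int] = None) -> int:
    """Rough maximum drive count for one storage node (a sketch, not a spec).

    Assumption: without expanders, each external SAS lane reaches one drive.
    Hypothetical: with expanders, each connector feeds one enclosure holding
    `drives_per_expander` drives.
    """
    if drives_per_expander is None:
        # Direct attach: one drive per SAS lane.
        external = external_connectors * lanes_per_connector
    else:
        # One expander enclosure per external connector.
        external = external_connectors * drives_per_expander
    return internal_bays + external


if __name__ == "__main__":
    # 6 internal bays plus 16 external lanes (four 4-lane connectors).
    print(node_drive_count(internal_bays=6, external_connectors=4))  # 22
    # Hypothetical 24-bay expander enclosure on each connector.
    print(node_drive_count(6, 4, drives_per_expander=24))  # 102
```

Cascading expanders would raise the external figure further, which is why the count with expanders is open-ended.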

Dell C6100 Spares

Another key question when buying used equipment is how to handle the break/replace cycle. There has been a great discussion on the forums about how to plan for spares in case a unit goes bad. The general consensus is that people expect these to run for 12 to 24 months. For any breakages, the thinking is that it is less expensive to buy spare chassis and keep them as cold spares, much as the STH colocation project did.

Closing Thoughts

Overall, I wanted to say thank you to these individuals and those who have helped with suggestions. The STH community has taken this platform and generated a significant amount of documentation around it over the past few weeks.

Hi there!

Great article. I got a C6100 for my home lab along with a “supposedly” soundproof rack. The rack does a decent job of muffling the stock fans, but it is still louder than I want. I am looking into PigLover’s fan mod but have some questions:

1) Do I need to cut the Dell cable at any point? I’m a bit nervous about damaging it and not being able to get back to the state I was in originally.

2) Why are there six wires on the Dell side and four wires on the fan?

3) Is there a way to turn down the fans in software? I’ve sometimes found that the C6100 fans run at full speed even when all the nodes are off.

Thanks!