PCIe GPUs in Standard Servers

As we covered briefly in the video, you can also install PCIe GPUs in more standard servers.

Our PCIe GPU in Server Guide covers this in more detail, so we will refer you there for the specifics.

Next, let us discuss the software.

SuperCloud Software: Managing the AI Factory

Hardware at this scale is only as useful as the software that manages it. Supermicro layers a software suite called SuperCloud on top of its Data Center Building Block Solutions (DCBBS) framework, with the stated goal of turning a collection of physical infrastructure into a turnkey AI cloud. The suite is organized into four distinct management layers.

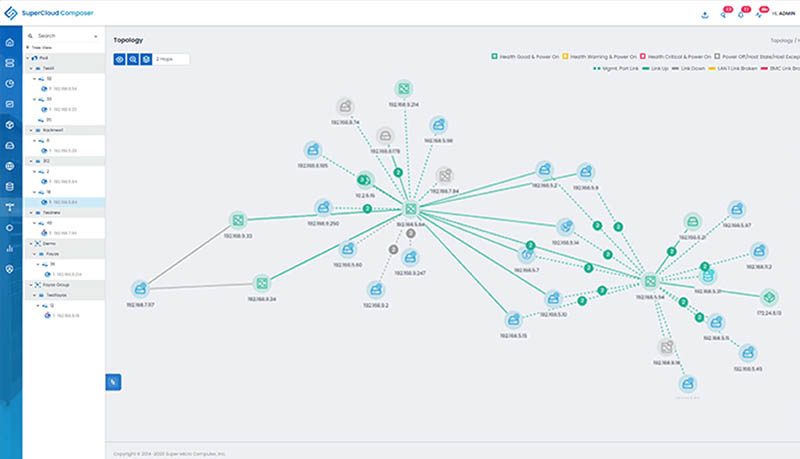

SuperCloud Composer is the single-pane-of-glass management layer covering the entire infrastructure stack, from individual servers to PDUs and liquid cooling loops. It provides lifecycle management across the full hardware estate.

SuperCloud Automation Center handles imaging and provisioning workflows. Pre-built automation workflows reduce the configuration burden on operators, so new nodes can be brought into production without manual intervention at each step.

SuperCloud Director is a multi-tenant bare-metal-as-a-service engine. It uses the NVIDIA BlueField-3 DPUs embedded in the infrastructure to provide secure, isolated tenancy with bare-metal performance and cloud-like operational flexibility.

SuperCloud Developer Console is a Kubernetes-based self-service portal for the engineers and data scientists who are actually running workloads. It exposes a service catalog that allows developers to provision environments for training, fine-tuning, and inference without requiring infrastructure team involvement for each request.

Across all of these layers, Supermicro systems carry NVIDIA certification, meaning they have been tested and validated for compatibility with the full NVIDIA AI software stack, including NVIDIA AI Enterprise, Omniverse, and Run:ai.

Next, let us get into the liquid cooling.

I’m in awe of how much you cover. Small networking to 1.6T optical DSPs. Small GB10 box to giant Supermicro AI Factory.

Great post, I love these factory visits! Thanks Patrick!

144 Blackwell Ultra in a rack. That’s way over 100 kW, so it’s more power than an NVL72 rack.

Re-badged WHMCS for Super Director. What’s the actual tech stack providing it? (As WHMCS isn’t).

In the second-to-last paragraph: “each NIC provides 400 Gbps, giving a total of 3.2Tbps across all four NICs for the full 8-GPU system.” I think you mean 800 Gbps per NIC there.

@Scott

Right you are. Thank you for catching that.

I love many of Supermicro’s products. However, their corporate management has more nepotism and sleazeballs than almost any other (besides, perhaps, Enron back in the day).

Absolutely horribly run company with non-existent governance.