Over the last week I received an e-mail from Gleb Budman, the CEO of Backblaze, letting me know that the company was going to release additional information on its new datacenter, which became its primary facility in September 2013. The new datacenter is intended to let the fast-growing backup company scale to over 1/2 exabyte in the next few years.

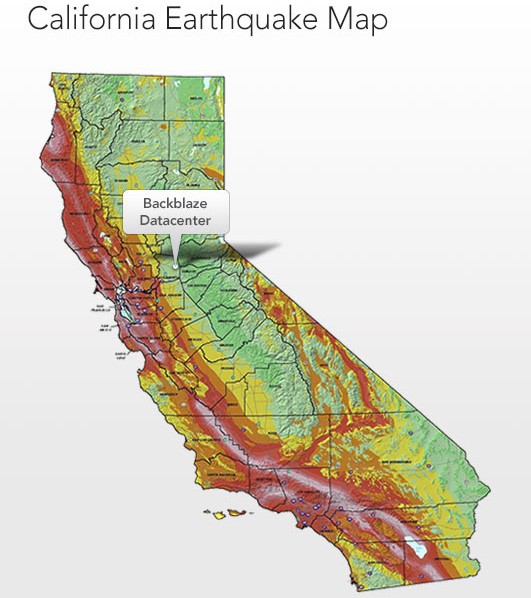

The new Backblaze datacenter is located in Sacramento, California. For non-California residents: Sacramento is the state capital, yet it is much smaller than Los Angeles, San Diego, San Jose and San Francisco in terms of population. Unlike those cities, Sacramento sits inland, away from the coast and from most of the state’s major earthquake fault lines. Backblaze selected Sacramento for many of the same reasons STH selected Las Vegas.

Sacramento has an extremely low incidence of natural disasters, from earthquakes to floods to tornadoes; in California, hurricanes and tornadoes are relatively rare anyway. For Silicon Valley-based companies, much of the valley has a 40-50% probability of seeing a magnitude 6.9 earthquake within 50 years, and San Francisco and Oakland have a greater than 50% chance. Sacramento, sitting away from the major fault lines, has a 0.03% chance. Even small earthquakes matter: for a company with tens of thousands of spinning disks, the vibrations they cause are probably best avoided. For those interested, you can look at the new interactive USGS earthquake map.
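
The 50-year figures above are easier to compare on a per-year basis. Here is a minimal sketch, assuming each year is independent with equal risk (a simplification; real hazard models are more involved), that converts a probability P over a window of T years into a per-year probability with p_year = 1 - (1 - P)^(1/T). The function name is hypothetical.

```python
import math


def annual_probability(p_window: float, years: float) -> float:
    """Convert P(at least one event within `years`) into a per-year probability.

    Assumes independent years with equal risk, so (1 - p_year) ** years == 1 - p_window.
    log1p/expm1 keep precision for very small probabilities such as 0.03%.
    """
    if not 0.0 <= p_window < 1.0:
        raise ValueError("p_window must be in [0, 1)")
    return -math.expm1(math.log1p(-p_window) / years)


if __name__ == "__main__":
    # Figures quoted in the post, all over a 50-year window.
    for place, p50 in [("Silicon Valley, 40%", 0.40),
                       ("Silicon Valley, 50%", 0.50),
                       ("Sacramento, 0.03%", 0.0003)]:
        print(f"{place}: {annual_probability(p50, 50):.6%} per year")
```

Under these assumptions a 50% chance over 50 years works out to roughly 1.4% per year, while Sacramento’s 0.03% is on the order of 0.0006% per year.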

For those excited about the prospect of 6TB disks and wondering whether the 1/2 exabyte datacenter will soon become a 3/4 exabyte datacenter: that is unlikely. I sent Gleb a link to our “6TB drives are available” post, and he let me know that the 1/2 exabyte figure already takes into account moving to 6TB disks in early 2015 (or so).
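
The 3/4 exabyte speculation is simple proportional scaling. A rough sketch, assuming the plan had been sized for 4TB drives (implied by the jump from 1/2 to 3/4, but not stated by Backblaze), decimal units (1 EB = 10^18 bytes), and raw capacity with no parity or filesystem overhead: swapping drive sizes at a constant drive count scales capacity by 6/4, and dividing capacity by drive size gives a raw drive count. The function names are illustrative only.

```python
TB = 10**12  # decimal terabyte, as drive vendors label capacity
EB = 10**18  # decimal exabyte


def drives_needed(capacity_bytes: int, drive_bytes: int) -> int:
    """Raw drive count to reach capacity_bytes, rounded up; ignores RAID/parity overhead."""
    return -(-capacity_bytes // drive_bytes)  # integer ceiling division


def rescale_capacity(capacity: float, old_drive_tb: float, new_drive_tb: float) -> float:
    """Capacity after swapping every drive for a larger one, keeping the drive count fixed."""
    return capacity * new_drive_tb / old_drive_tb


if __name__ == "__main__":
    half_eb = EB // 2
    print("6TB drives for 1/2 EB (raw):", drives_needed(half_eb, 6 * TB))
    print("4TB -> 6TB at a fixed drive count:", rescale_capacity(0.5, 4, 6), "EB")
```

Under these assumptions 1/2 exabyte is roughly 83,000 raw 6TB drives. Since Backblaze’s figure already assumes 6TB disks, the 1.5x bump is already baked in rather than still to come.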

Sacramento houses a few important datacenters for businesses as diverse as Twitter and Pacific Gas & Electric (the major Northern California utility). In my experience working with companies that selected Sacramento datacenters, the major reason is that Sacramento is still only 1.5 to 2 hours from Silicon Valley and San Francisco. Land is also much cheaper in Sacramento: compare the Zillow report for Mountain View, California, where companies such as Google, Symantec and Intuit are headquartered, with the Zillow listing for Sacramento. Power prices are still relatively high, as is the norm in California, but Backblaze’s selection certainly makes sense.

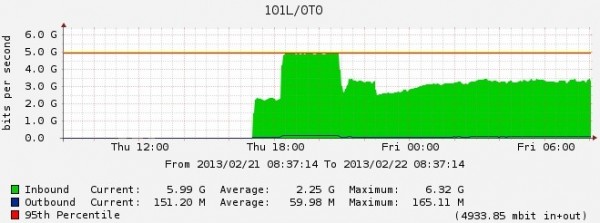

For the network gurus: consumer and SMB backup is something one would expect to use a lot of bandwidth while being relatively insensitive to latency. Backblaze shared a graph of its first network link:

That is interesting because one can start making conjectures about both how fast Backblaze fills containers and its inbound/outbound traffic. Here we can see that a backup service like Backblaze runs at approximately a 38:1 ratio of inbound to outbound traffic.
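
Two bits of arithmetic turn a link graph into those conjectures: the inbound:outbound ratio, and how long a given block of capacity takes to fill at the average inbound rate. A minimal sketch follows; the rates in the example are hypothetical placeholders, not values read from Backblaze’s graph, and it assumes every inbound byte is stored as exactly one byte (no compression, deduplication or redundancy overhead).

```python
SECONDS_PER_DAY = 86_400


def traffic_ratio(inbound_bps: float, outbound_bps: float) -> float:
    """Inbound-to-outbound ratio, e.g. 38.0 for a 38:1 link."""
    return inbound_bps / outbound_bps


def days_to_fill(capacity_bytes: float, inbound_bps: float) -> float:
    """Days to fill capacity_bytes if all inbound traffic (bits/s) is stored byte-for-byte."""
    return capacity_bytes * 8 / inbound_bps / SECONDS_PER_DAY


if __name__ == "__main__":
    # Hypothetical averages, not taken from Backblaze's graph.
    inbound = 3.8e9   # 3.8 Gbit/s in
    outbound = 1.0e8  # 100 Mbit/s out
    print(f"ratio {traffic_ratio(inbound, outbound):.0f}:1")
    print(f"1 PB fills in {days_to_fill(1e15, inbound):.1f} days")
```

Fill time is inversely proportional to the average inbound rate, so it is the inbound curve, not the small outbound one, that drives how quickly new capacity has to be deployed.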

The big problem with Backblaze (and why I don’t use them) is that they are vulnerable to data corruption. They surely have gobs of silent data corruption. Each data bit has a very low probability of being silently corrupted (i.e. without notice), but Backblaze has an enormous number of bits on its hard disks, so although the chance of any single bit being corrupted is very small, across that many bits some corruption is all but certain. To catch bit rot you need to checksum all data and verify each data block against its checksum before using it. Backblaze has no checksums and no data integrity checking, so your Backblaze data might rot away over time. You have to do all the checksumming yourself and check the data you get back from Backblaze to see if it has been altered.
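
The do-it-yourself verification this comment describes can be done entirely on the client side: hash every file before backup, keep that manifest somewhere other than the backup service, and re-hash files after a restore. A minimal sketch using Python’s standard `hashlib`, assuming a plain directory tree on both sides; `build_manifest` and `find_mismatches` are hypothetical helper names, not a Backblaze feature or API.

```python
from __future__ import annotations

import hashlib
import tempfile
from pathlib import Path

CHUNK_SIZE = 1 << 20  # hash 1 MiB at a time so large files never need to fit in memory


def sha256_of_file(path: Path) -> str:
    """Hex SHA-256 digest of one file, read in chunks."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(CHUNK_SIZE), b""):
            h.update(chunk)
    return h.hexdigest()


def build_manifest(root: Path) -> dict[str, str]:
    """Before backup: map each file's path relative to root to its SHA-256."""
    return {
        p.relative_to(root).as_posix(): sha256_of_file(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def find_mismatches(manifest: dict[str, str], restored_root: Path) -> list[str]:
    """After restore: relative paths that are missing or whose bytes changed."""
    bad = []
    for rel, expected in manifest.items():
        candidate = restored_root / rel
        if not candidate.is_file() or sha256_of_file(candidate) != expected:
            bad.append(rel)
    return bad


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as src, tempfile.TemporaryDirectory() as dst:
        Path(src, "photo.jpg").write_bytes(b"original bytes")
        manifest = build_manifest(Path(src))  # store this outside the backup service
        # Simulate a restore that came back with one altered byte.
        Path(dst, "photo.jpg").write_bytes(b"original bytez")
        print("corrupted or missing:", find_mismatches(manifest, Path(dst)))
```

Keeping the manifest separate from the backups matters: a checksum stored next to the data it protects can rot along with it.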

Half an exabyte will have even more bit rot. I don’t understand why Backblaze doesn’t abandon its Linux hardware RAIDs and switch to ZFS, which does automatic checksumming and protects the data. That way they could discard the hardware RAID cards too, and get automatic checksum verification every time a data block is accessed. Data integrity would be preserved, even at half an exabyte. And there are many petabyte-scale installations out there: the IBM Sequoia supercomputer has 55 petabytes stored on ZFS (via Lustre).
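
The scaling argument in these comments can be made concrete. If each bit is silently corrupted independently with some small probability p, the chance of at least one corrupted bit among n bits is P = 1 - (1 - p)^n, and the expected number of corrupted bits is n·p, which grows linearly with capacity. A minimal sketch; p is a hypothetical parameter, not a measured rate for any drive or for Backblaze, and real corruption events are not independent per bit.

```python
import math


def prob_any_corruption(p_bit: float, n_bits: float) -> float:
    """P(at least one corrupted bit) = 1 - (1 - p_bit) ** n_bits, assuming independent bits.

    log1p/expm1 avoid rounding 1 - p_bit to exactly 1.0 when p_bit is tiny.
    """
    return -math.expm1(n_bits * math.log1p(-p_bit))


def expected_corrupted_bits(p_bit: float, n_bits: float) -> float:
    """Mean number of corrupted bits under the same independence assumption."""
    return p_bit * n_bits


if __name__ == "__main__":
    p = 1e-18  # hypothetical per-bit probability, chosen only for illustration
    for label, n_bytes in [("1 PB", 1e15), ("1/2 EB", 5e17)]:
        n_bits = n_bytes * 8
        print(f"{label}: P(any) = {prob_any_corruption(p, n_bits):.4f}, "
              f"expected = {expected_corrupted_bits(p, n_bits):.3g} bits")
```

Under this model, growing from 1 PB to 1/2 exabyte multiplies the expected number of corrupted bits by 500. Checksumming, whether in ZFS or on the client, does not lower p; it makes corruption detectable (and, with redundancy, repairable).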

Too bad they don’t have good Linux server backup/restore.