The Asustor Flashstor 12 Pro FS6712X is similar to a NAS we looked at recently, just with more of everything. With 12x M.2 NVMe slots, one might expect a superior performer, but it has a relatively modest CPU without enough PCIe connectivity for that many drives, and its networking tops out at 10Gbase-T. To be clear, we originally thought the entire line was silly, but then we tried the FS6706T 2.5GbE version and realized we could actually use it, which is why we have this one. That is the kind of churn in the process that should make for a really interesting review.

Before we get too far in this review, we have a video review covering both the Asustor Flashstor FS6712X (12x M.2 and 10Gbase-T) and the Flashstor FS6706T (6x M.2 and 2x 2.5GbE). You can find that here:

As always, check it out in its own browser, tab, or app for the best viewing experience.

Asustor Flashstor 12 Pro FS6712X External Hardware Overview

The sole USB 3 port on the front of the box is an interesting choice. The port is mostly for external storage. During testing, we tried hooking up an external USB 3 2.5GbE NIC to it, but Asustor’s management software would not give us another network interface.

On the rear, we get base power, USB, and so forth which are typical of NAS units. We also get things like HDMI, S/PDIF, and so forth which are found more in desktops. The big difference externally between this FS6712X and the FS6706T we reviewed previously is that this unit has a 10Gbase-T port.

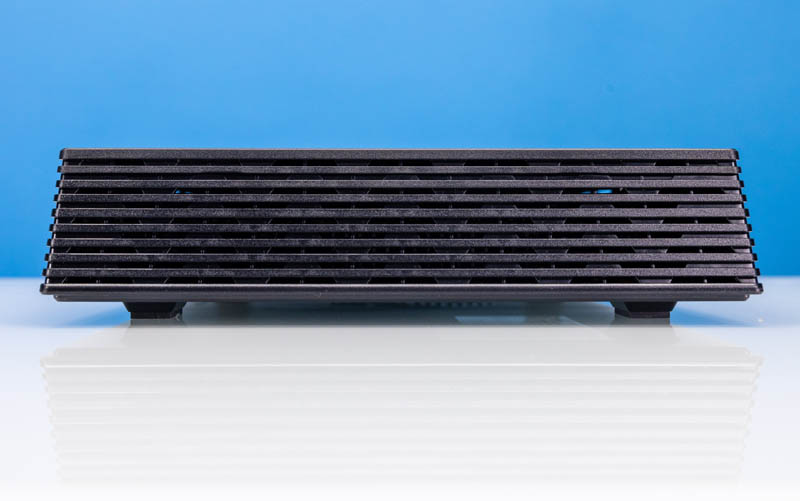

On one side, we get a vent.

On the other, we get a power button. This one lights up the side of the chassis red when turned on, which is a cool feature. We show this briefly in the video.

The bottom of the chassis has the same four large rubber feet and cooling fan design that we saw on the Flashstor 6 FS6706T.

Next, let us get inside the system to see what kind of magic Asustor used to get this all working. It involves a lot of ASMedia.

Asustor Flashstor FS6712X NVMe NAS Internal Hardware Overview

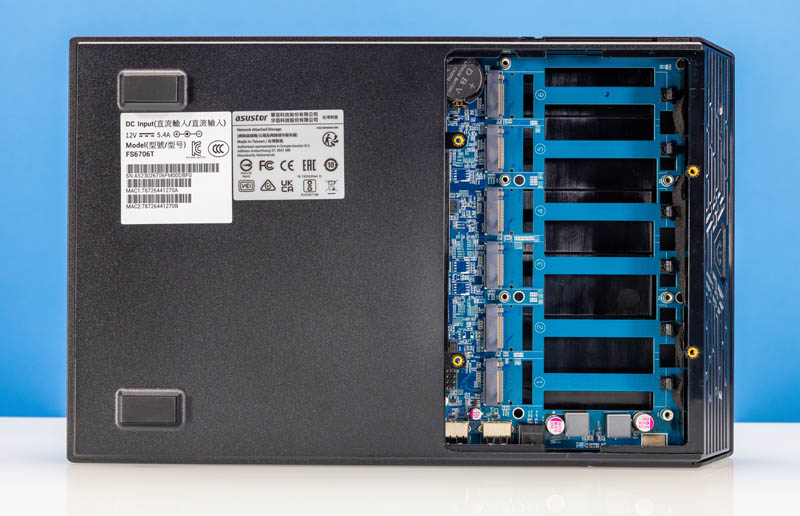

Originally, we planned to review the FS6712X and FS6706T together, so we only took a picture of the common bottom 6-drive portal once. Removing four screws reveals the first six drive bays on the bottom.

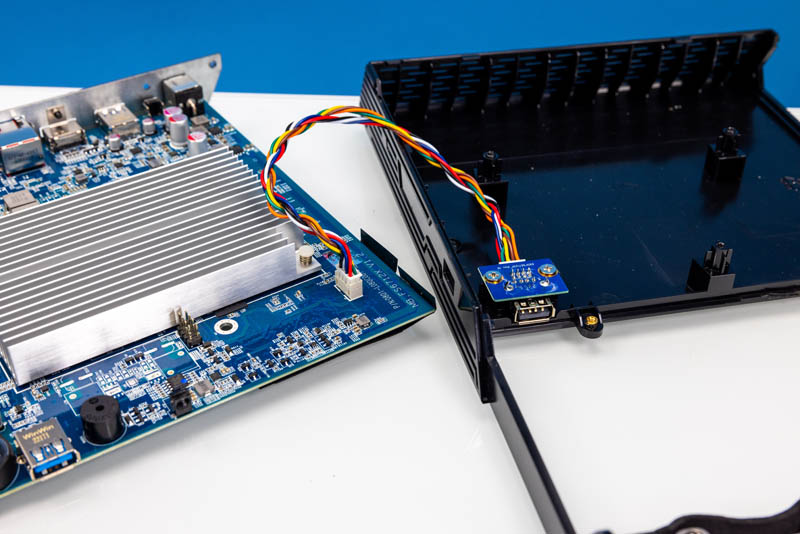

For those wondering, Asustor engineered the fan for this system to have a USB interface. Sliding the bottom cover off disconnects the USB Type-A port and that is how they made a quick disconnect fan. It works better than one might think.

Taking the chassis off for a second, we can see this side of the motherboard. We are using cheap Crucial P3 Plus 4TB drives that have been selling for under $225 each. At that price, we did not go up to 8TB drives since those cost ~4-5x as much for 2x the capacity. We also learned that the 1TB configuration we had in the Flashstor 6 was too little, even though those drives were cheap. We did not use more expensive drives because we suspected that the performance of the unit would be suboptimal. The net result was that we were at around $71/TB to connect this flash storage to 10GbE with this setup.
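
The pricing logic above can be sketched as a quick calculation. This is only rough arithmetic on the figures quoted in the text: $225 is treated as the upper bound for a 4TB drive, the $71/TB figure is assumed to refer to raw capacity across all twelve slots, and the "implied non-drive cost" is a back-calculation from those two numbers rather than a quoted price.

```python
# Rough cost-per-TB arithmetic using the figures quoted in the review.
# Assumptions: $225 is the upper bound per 4TB drive, and the $71/TB
# figure covers raw capacity with all twelve slots populated.

DRIVE_PRICE_4TB = 225.0      # "under $225 each"
DRIVE_TB = 4
DRIVE_COUNT = 12
QUOTED_TOTAL_PER_TB = 71.0   # all-in figure from the text


def cost_per_tb(price: float, capacity_tb: float) -> float:
    """Price divided by capacity in TB."""
    return price / capacity_tb


drives_only = cost_per_tb(DRIVE_PRICE_4TB, DRIVE_TB)
total_tb = DRIVE_TB * DRIVE_COUNT

# Back out what the all-in figure implies for everything that is not a drive.
implied_non_drive = QUOTED_TOTAL_PER_TB * total_tb - DRIVE_PRICE_4TB * DRIVE_COUNT

# 8TB drives at 4-5x the 4TB price for 2x the capacity.
per_tb_8tb_low = cost_per_tb(4 * DRIVE_PRICE_4TB, 2 * DRIVE_TB)
per_tb_8tb_high = cost_per_tb(5 * DRIVE_PRICE_4TB, 2 * DRIVE_TB)

print(f"4TB drives only: ${drives_only:.2f}/TB across {total_tb}TB raw")
print(f"Implied non-drive cost: ${implied_non_drive:.0f}")
print(f"8TB drives: ${per_tb_8tb_low:.2f} to ${per_tb_8tb_high:.2f} per TB")
```

Under these assumptions, paying 4-5x the price for 2x the capacity works out to 2-2.5x the cost per TB, which is why the 4TB drives were the better fit here.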

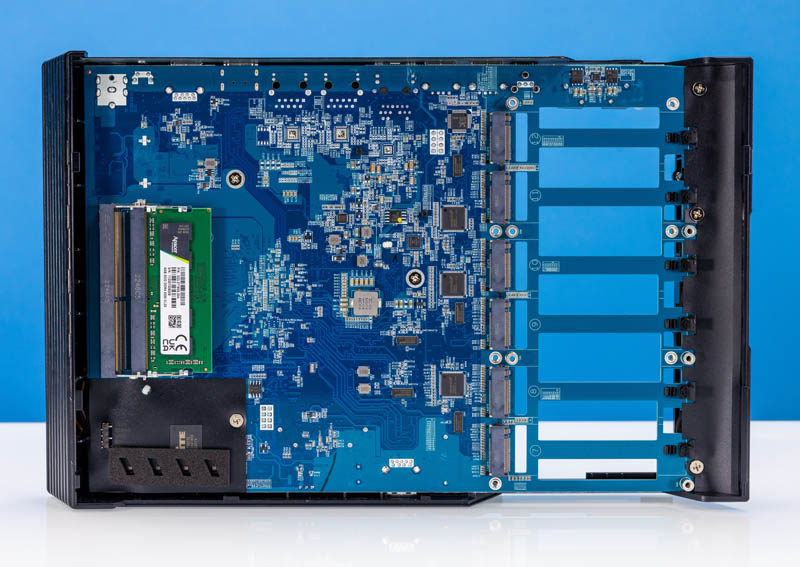

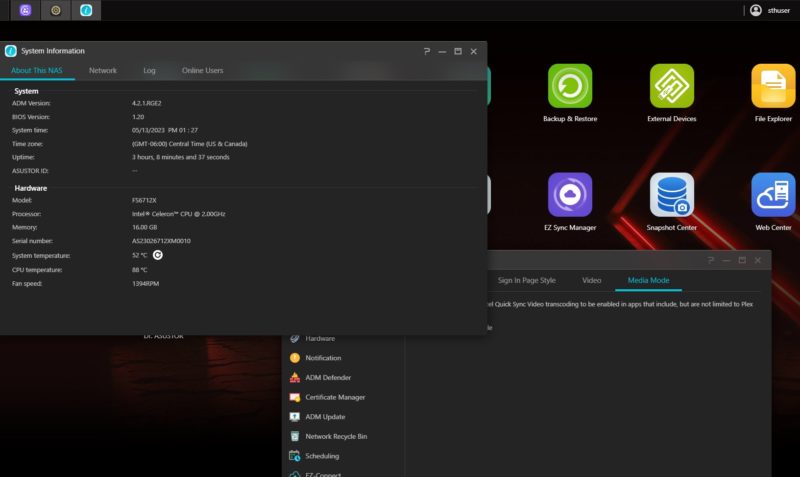

The two heatsinks above cover the Marvell AQtion 10Gbase-T NIC controller as well as the CPU. This system is powered by the Intel N5105, a quad-core Jasper Lake CPU that we have seen many times in fanless edge devices at STH.

Flipping the unit over, two screws can be undone and give one access to the top of the chassis with the SODIMM memory and the other six M.2 slots. One can see that we have 4GB of memory installed as standard.

We upgraded this to 2x 32GB using three brands of SODIMMs. While it booted, we kept getting errors during file transfers, so it was not stable. Our suggestion is to stick to 16GB (2x 8GB) max. 2x 8GB DDR4 SODIMMs are about $35 on Amazon (here is what we used via an affiliate link). That configuration worked perfectly. We really wish that Asustor either made 16GB standard or even a single 8GB module standard to make the upgrade process easier. We could take the 4GB module we removed and use it to make 8GB in the FS6706T, but it would have been nice for Asustor to add a few dollars of cost here and make this unit better able to handle apps.

One nice feature this unit has is tool-less drive installation. There are clips to keep the drives in place instead of screws. With twelve drives we are glad we did not need to install twelve tiny screws.

Here is a shot of the motherboard populated from the front where the sole USB port is.

Here is the rear view with most of the I/O including the 10Gbase-T NIC.

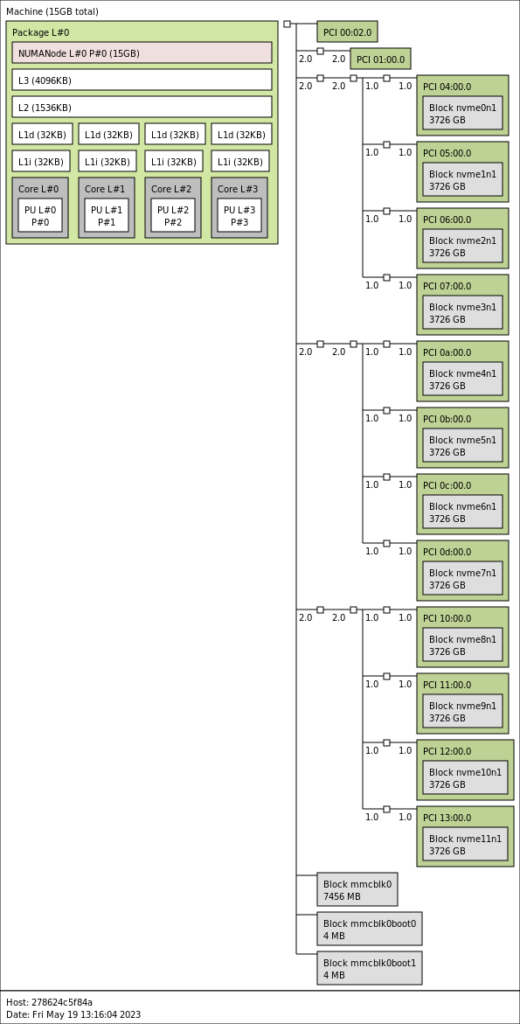

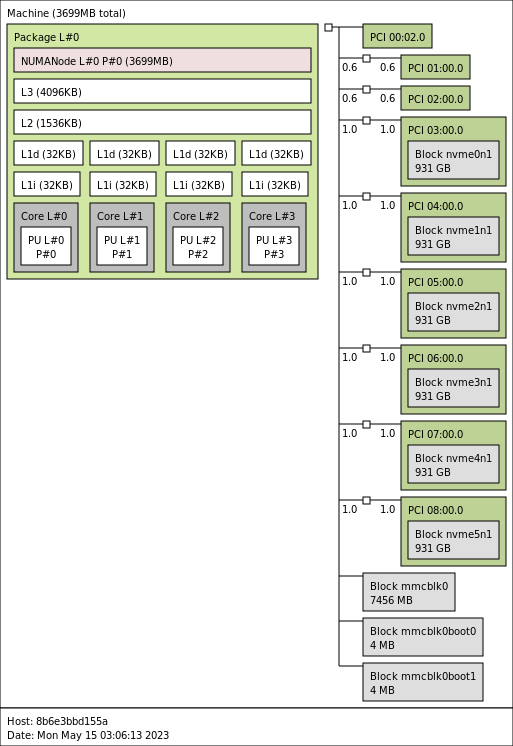

One of the big challenges with 12x M.2 NVMe SSDs is that the drives each need to connect to the system via at least a PCIe x1 link. With 12 drives, plus 2-4 PCIe lanes usually reserved for the 10Gbase-T NIC, that is a lot to ask of the Intel N5105 with only 8 PCIe lanes total. Asustor is using ASMedia ASM2806 PCIe switches and ASM1480 PCIe mux devices to help tame the PCIe needs in this system. The ASM2806 switches were not present on the FS6706T version since it only had six drives.

Still, on average, each drive here has roughly the bandwidth equivalent of a PCIe Gen2 x1 lane. While we are using fairly low-performance PCIe Gen4 NVMe SSDs, given that level of bandwidth constriction, this seemed like a good place to go for capacity over performance. As a plus, they tend to be lower-power than high-performance drives.
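
To put rough numbers on that, here is a minimal sketch of the per-drive bandwidth arithmetic. It uses the standard PCIe line rates and encodings (Gen2: 5 GT/s with 8b/10b, Gen3: 8 GT/s with 128b/130b) and ignores protocol overhead. The lane split is an assumption: the 2 and 4 NIC-lane cases come from the "2-4 PCIe lanes" range above, and the sketch assumes every remaining CPU lane feeds the drives, which the actual board may not do.

```python
# Back-of-the-envelope PCIe bandwidth per drive.
# Assumption: all CPU lanes not used by the NIC are shared evenly by the drives.

def lane_mb_s(gt_per_s: float, payload_bits: int, line_bits: int) -> float:
    """Usable MB/s for one PCIe lane after line encoding (protocol overhead ignored)."""
    return gt_per_s * 1e9 * payload_bits / line_bits / 8 / 1e6


GEN2_X1 = lane_mb_s(5.0, 8, 10)      # 8b/10b encoding
GEN3_X1 = lane_mb_s(8.0, 128, 130)   # 128b/130b encoding

CPU_LANES = 8                        # Intel N5105 total, per the text
DRIVES = 12
TEN_GBE_MB_S = 10e9 / 8 / 1e6        # raw 10GbE line rate in MB/s


def per_drive_mb_s(nic_lanes: int) -> float:
    """Average Gen3 bandwidth per drive if the NIC takes `nic_lanes` lanes."""
    return (CPU_LANES - nic_lanes) * GEN3_X1 / DRIVES


print(f"Gen2 x1: {GEN2_X1:.0f} MB/s, Gen3 x1: {GEN3_X1:.0f} MB/s")
for nic_lanes in (2, 4):
    share = per_drive_mb_s(nic_lanes)
    print(f"NIC on x{nic_lanes}: ~{share:.0f} MB/s per drive "
          f"({share / GEN2_X1:.2f}x a Gen2 x1 lane)")
print(f"10GbE raw line rate: {TEN_GBE_MB_S:.0f} MB/s")
```

With the NIC on two lanes, the average share lands just under a Gen2 x1 lane per drive, while even the combined drive lanes comfortably exceed what a single 10GbE port can carry.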

Next, let us get to the topology of how this is all hooked up.

Asustor Flashstor FS6712X NVMe NAS Topology

When we first heard about these units, the first question that came to mind was the topology, specifically how Asustor fit 12x drives onto an Intel N5105. Here is the topology diagram with everything connected:

Something to keep in mind is that there is also an 8GB eMMC device for the OS boot onboard. This is about what we would expect as we can see the three ASMedia ASM2806 devices each with four drives attached.
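
For anyone who loads a Linux distribution on the unit and wants to check what each NVMe drive actually negotiated, the kernel exposes PCIe link details in sysfs. The following is a hedged sketch, not something we ran on this hardware: the function name `nvme_links` is our own, and it relies on the standard sysfs attributes `class`, `current_link_speed`, and `current_link_width` being present for each device.

```python
# List NVMe controllers and their negotiated PCIe link speed/width via sysfs.
# Sketch only: not run on the FS6712X; attribute availability depends on the kernel.
from pathlib import Path

NVME_CLASS = "0x010802"  # PCI class code for an NVMe controller


def read_attr(dev: Path, name: str) -> str:
    """Return a sysfs attribute as stripped text, or 'unknown' if it is absent."""
    attr = dev / name
    return attr.read_text().strip() if attr.exists() else "unknown"


def nvme_links(root: str = "/sys/bus/pci/devices") -> list[tuple[str, str, str]]:
    """Return (pci_address, link_speed, link_width) for each NVMe controller."""
    rows: list[tuple[str, str, str]] = []
    base = Path(root)
    if not base.is_dir():
        return rows
    for dev in sorted(base.iterdir()):
        if read_attr(dev, "class") == NVME_CLASS:
            rows.append((
                dev.name,
                read_attr(dev, "current_link_speed"),
                read_attr(dev, "current_link_width"),
            ))
    return rows


if __name__ == "__main__":
    for addr, speed, width in nvme_links():
        print(f"{addr}: {speed} x{width}")
```

The speed and width reported for each drive describe only its own link to the device upstream of it; the shared uplink from a switch to the CPU still caps the combined throughput of the drives behind it.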

For comparison here is the 6-slot version without the PCIe switches:

We wanted to quickly note that we took these screenshots with non-standard memory configurations. The FS6712X had 2x 8GB installed which is our recommended upgrade. The FS6706T had a single 32GB SODIMM installed, and it was not stable with that. Again, 4GB is standard.

Next, let us look at the management and performance.

Definitely looks interesting. Kind of disappointed that the speed seems so low. I get that they had to use a low powered CPU to keep the power/heat low but those nvme drives are restricted (even with the pci switch it seems). If you’re dropping $2.5k on this machine, you would expect better performance.

Hopefully they’ll come out with an updated version that has more pcie lanes because the power efficiencies alone would make me consider moving from my HDD NAS that idles around 100W.

Useful enough, but neither a product that delivers on its promises nor indispensable. With 12 NVMe SSDs, much, much more was to be expected.

I have one of these. Opted to just load Linux on it, being it’s basically a standard x86 PC that didn’t take doing anything special. There is an 8 GB emmc storage built in that can act as the boot loader drive allowing you to put your / onto your main disk pool if you want. Overall I’m happy with it. I did opt to swap the fan and run the quieter fan at full all the time. This original fan just didn’t sound smooth for whatever reason so even though the new fan is running max all the time it’s actually quieter on top of delivering better airflow. I also decided to do the 2×16 GB. The processor is only listed as supporting 16GB so expecting it to do 4x as much seemed to be asking for trouble. No issues with 32 GB yet though. The only other thing I’d say is the power button is in a really awkward place, right under the overhanging ledge on the side where you’d naturally want to pick the box up from.

The upcoming AMD Epyc Sienna platform would be much more interesting for something like this. Much more expensive, but still. You could cram stupid amounts of M.2 drives into a 1U case, or 2U if you wanted more tolerable fan noise and room for a PCIe slot or two.

“If you want more performance than 10GbE, then it is worth looking at other solutions.”

I would say if you want more performance than 10GbE, then you are an enterprise, not a home user.

Looks like this supports Ext4 and Btrfs? How reliable is Btrfs? Are they doing the same trick as Synology where they still use the more reliable mdadm to manage the RAID array and then just format the individual drives as Btrfs?

Can you run TrueNAS on this? Is the network card supported?

This would have been somewhat more interesting with a part like the Atom P5322 or Xeon D-1702. Without ECC and with such poor PCIe resources this is a bit of a joke.

If the Crucial P3 Plus is such a bad drive (as documented in your own review), why did you choose to use it here?

Wouldn’t choosing the P3+ imply that it isn’t a horrible drive?

Or did you come to the conclusion that QLC has a place in mass storage systems where you won’t see the negatives once you are looking at multiple devices in aggregate?

In this system the bottleneck is the network, not the drives, so we went with the lower cost drives.

If I were to get the 12 drive version would I be running into a scenario where all the nvme SSDs would need to be replaced simultaneously due to the TRIM limitations for an all SSD Raid 5? My config would be the 12 of the crucial P3 4TB or Teamgroup M34 4TB drives.

This is an interesting looking NAS. When I first saw the review I thought ‘Why is STH reviewing a PlayStation’

One major huge miss in this product – not supporting M.2 lengths other than 80mm. You can easily find like-new enterprise 22110 SSDs, at high capacities, good endurance and PLP, at good prices on Ebay. Instead, with this product, we are stuck with consumer SSDs that charge way too much for their poor endurance and lack of steady state write performance.

Asustor should have just gone with a regular boxy design but extend the board to support 22110.

Opinions on using the Solidigm 2TB P41 drives in this? They look similar to the Crucial P3 but with a larger TBW (Solidigm 2TB 800 TBW vs Crucial 2TB 440). Is there any other factor that would indicate the Solidigm should not be used in this device?

Looking for some help… We have a mikrotik 10Gb switch with SFP+ ports. But using a MikroTik S+RJ10 10Gb SFP+ Adaptor, I can’t get the NAS to connect. Does anyone know if there is an SFP+ Media convertor that works with this NAS?

To the person that put Linux on this, had/have you tried TrueNAS (Core or Scale)?

Unstable behavior on Asustor NAS with higher amounts of RAM is quite well known. You need to increase the reserved kernel memory manually, because ADM doesn’t take care of that automatically and Asustor refuses to fix that issue.

I got to say I just received mine, bought through Amazon but from the Asustor store. Two of the clips that hold the nvme in place were broken off, and the top of the case itself looks like it had something spilled on it and is all stained. I have pictures to prove it was delivered at 7am and opened right away. They are sending me another one, but I really hope the clips are not so brittle that someone broke them.