The release of AMD’s new 32-core Ryzen Threadripper 3970X has caused quite a storm. To go with this impressive processor, we have an equally impressive motherboard: the ASUS ROG Zenith II Extreme. It pushes the new platform to the maximum, loaded with PCIe 4.0 expansion slots, M.2 NVMe drive slots, 10Gbps networking, water-cooling features, and an expanded fan controller module. In our review, we are going to take a look at what this platform has to offer.

ASUS ROG Zenith II Extreme Motherboard Overview

The retail box for the ASUS ROG Zenith II Extreme Motherboard is colorful with a black matte patterned finish. Red logos and artwork highlight the box, with a metallic product name to identify the motherboard. The AMD Socket sTRX4/TRX40 identification is displayed in the corner.

The back of the retail box clearly identifies the ROG Zenith II Extreme Motherboard’s main specifications and features.

After taking the motherboard out of the box, we get our first look at the ROG Zenith II Extreme Motherboard with its Extended ATX form factor of 12.2” x 10.9”. The ROG Zenith II Extreme is heavy and reminds us of the ASUS ROG Dominus Extreme.

Looking at the back of the ROG Zenith II Extreme, we see the large solid steel backplate, which aids in cooling and adds strength to prevent bending. We also spot the 5th M.2 NVMe slot down at the bottom. If you are using HDDs for storage, this M.2 slot would be perfect for AMD StoreMI software, which caches data to speed up HDD access. Otherwise, it is an almost crazy feature. SSDs are very reliable, but placement on the back of the motherboard makes this one of the least serviceable SSD locations possible once the board is installed in a system.

The AMD Socket sTRX4 is massive and takes up a large portion of motherboard space. Next to the sTRX4 socket we see 8x RAM slots, and just to the right is a DIMM.2 slot that accepts the ASUS ROG DIMM.2 module, which can house 2x extra M.2 NVMe drives.

On the right, we also see the power connections: a 24-pin, 2x 8-pin, and a 6-pin for use when large amounts of storage and expansion devices are installed.

Storage connections at the motherboard edge are 8x SATA 3 6.0Gb/s ports. We think most buyers are going to opt for SSD arrays instead of hard drives, but there are still many who directly attach hard drives to workstations.

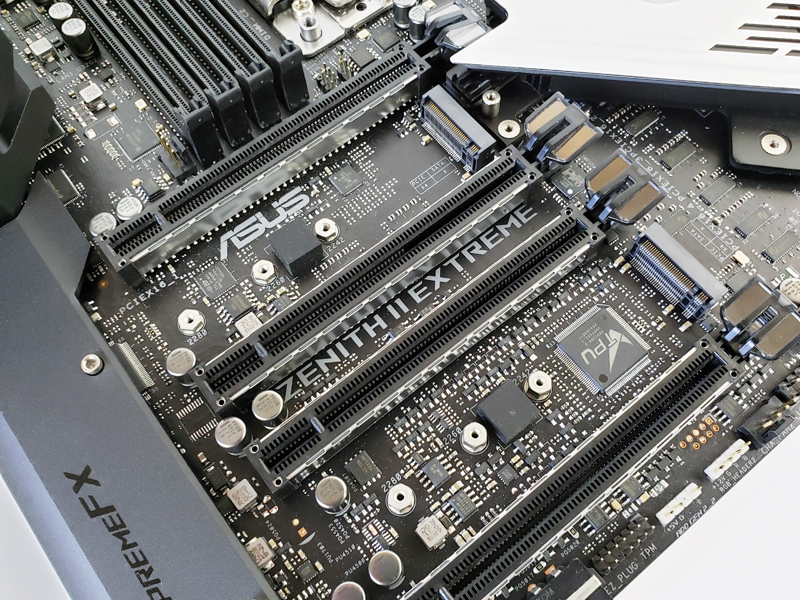

There are 4x PCIe 4.0 slots, which run at x16, x16/x16, x16/x8/x16, or x16/x8/x16/x8 depending on how many are populated. Two of them are double-width to easily accommodate standard GPUs.

We also have the cover removed to show the two M.2 NVMe SSD drive slots. Although we do not see the 6-7 PCIe slots of previous generations, for many users the M.2 SSD slots are more practical since they can be located below dual-width GPUs.

At the back of the motherboard, we find the rear I/O ports which are:

- Clear CMOS Button

- USB BIOS Flashback Button

- Wi-Fi 802.11 and Bluetooth V5.0

- 2x USB 3.2 Gen 1 Ports (Blue)

- 2x USB 3.2 Gen 2 Type-A Ports (Red)

- RJ-45 LAN Port

- USB 3.2 Gen 2 Type-A Port

- USB 3.2 Gen 2 Type-C

- 2x USB 3.2 Gen 1 Ports (Blue)

- 2x USB 3.2 Gen 2 Type-A Ports (Red)

- RJ-45 10G LAN Port

- USB 3.2 Gen 2 Type-A Port

- Audio Stack

The pre-installed I/O cover finishes up the back. We really like this feature since it makes the motherboard installation easier. Also, looking ahead to later in the board’s lifecycle, one does not have to worry about losing the I/O shield.

Near the side SATA ports, we see a fan-cooled, shiny metallic heat sink cover for the chipset. With this generation, the chipset is PCIe Gen4 enabled and there is a PCIe Gen4 x8 link to the chipset, effectively quadrupling bandwidth over the X399 generation of Threadripper platforms.
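The "quadrupling" figure can be checked with a quick back-of-the-envelope calculation. The sketch below is our own illustration, not vendor data: it assumes the X399 chipset link was PCIe Gen3 x4, and it uses the published per-lane signaling rates (8 GT/s for Gen3, 16 GT/s for Gen4) with 128b/130b encoding. The function and variable names are hypothetical, and real-world throughput will be lower once protocol overhead is included.

```python
# Per-lane raw signaling rates in GT/s for PCIe Gen3 and Gen4.
GT_PER_S = {3: 8.0, 4: 16.0}
# Both generations use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def link_bandwidth_gbytes(gen: int, lanes: int) -> float:
    """Approximate one-direction bandwidth of a PCIe link in GB/s."""
    return GT_PER_S[gen] * ENCODING_EFFICIENCY / 8 * lanes


# Assumption: X399 used a Gen3 x4 chipset link; TRX40 uses Gen4 x8 per the text.
x399_link = link_bandwidth_gbytes(gen=3, lanes=4)
trx40_link = link_bandwidth_gbytes(gen=4, lanes=8)
ratio = trx40_link / x399_link

print(f"X399 chipset link:  {x399_link:.2f} GB/s")
print(f"TRX40 chipset link: {trx40_link:.2f} GB/s")
print(f"Ratio: {ratio:.1f}x")
```

Doubling the per-lane rate and doubling the lane count each contribute a factor of two, which is where the 4x comes from.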

Like the ASUS ROG Dominus Extreme, ASUS loaded the ROG Zenith II Extreme with an amazing set of accessories. In fact, there are so many that we ran out of room on our table to photograph them all, so we used a stock photo.

The retail kit includes a large number of accessories that break down as follows.

- 3x 2-in-1 SATA 6Gb/s cables

- 1x 3-in-1 Thermistor Cable

- 1x 2-in-1 Weave SATA 6Gb/s Cable

- 1x Extension Cable for RGB Strips (80cm)

- 1x Extension Cable for Addressable Strips (80cm)

- 1x ROG DIMM.2 Module

- 5x M.2 Screw Package

- 1x Q-Connector

- 1x ROG Coaster

- 1x ROG Logo Plate Sticker

- 1x ROG Big Sticker

- 1x ASUS 2×2 Dual-Band Wi-Fi Moving Antenna

- 1x 2-in-1 Rubber Strips for ROG DIMM.2

- 1x Dual Function Screw Driver

- 1x ROG Thank You Card

- 1x Fan Extension Card II

- 1x Fan Extension Card II Power Cable

- 1x Fan Extension Card II NODE Connector Cable

- 1x Fan Extension Card II Screw Package

- 1x Driver USB

- 1x User Guide

If we are being completely honest, it is unlikely every user is going to use all of these features. That means one is paying for accessories that they may not use. Still, as an ultra-premium motherboard, this almost excessive accessory set feels just about perfect.

Before we get on with our testing, let’s take a look at the ROG Zenith II Extreme Motherboard BIOS and software.

For my use (video & audio editing) I would need at least 6 PCIe slots (2 x GPUs, 40GbE NIC, pro audio card, SDI video card, storage HBA). I also need Thunderbolt 3, or else I’ll have to replace some costly TB3 components with something else. I really, really want to upgrade from my Dual Xeon E5 workstation to Threadripper, but I can’t until somebody makes a motherboard that actually uses all those PCIe lanes for PCIe slots. Seems rather counter-intuitive that we’re seeing 4-slot solutions.

So you have a 40GbE, a HBA and TB3 for storage..

Tyler. what about some of Epyc based solution(s)? For example MZ32-AR0 made by gigabyte seems to offer your number of slots… Also I can’t quite believe that you need 40GigE and HBA in memory constrained environment like TR is. Remember it supports only ECC UDIMMs and since 32GB modules are nowhere to find, then you will be able to put only 128GB RAM there!

I’ve had overheating issues with m.2 SSDs located below a GPU. Do you suppose the “shield” will make thermals better or worse for the m.2’s between the PCIe slots??

I’d agree with others. HBA and 40G is redundant. Your 40G network speed is about the same as the HBA. If you need fast you’ve also got PCIe4 SSDs which will blow any other storage you have out of the water.

2 double-width GPUs for AV? Premiere Pro, AE, and DaVinci at least really use 1 GPU at most?

Quadro or Titan RTX in the first slot, SDI, Audio, 40G, and you’re still short a card for TB3.

“you have a 40GbE, a HBA and TB3 for storage”

Yes and it’s not that uncommon. 40GbE connects to the NAS which has all the bulk storage; 10GbE isn’t enough to keep up with 4K EXR files, etc. used for VFX or final masters. HBA is for connecting to internal U.2 NVMe drives striped in RAID0 that serve as local cache, because 40GbE isn’t reliable enough to do guaranteed realtime playback of 4K EXR files, etc., so for hero systems we need to cache locally. TB3 is because clients often bring bulk storage on TB3 external RAIDs that we want to quickly connect and ingest files from. Workaround is that we could have an ingest station do this, but it adds another step.

We also use TB3 for KVM extension as it is much more full-featured and affordable than other solutions. Some of our pro audio devices are also wanting a TB connection and the alternatives are either less featured and/or more expensive. So far I haven’t seen TB3 with Epyc/Threadripper.

“Tyler. what about some of Epyc based solution(s)? For example MZ32-AR0”

That’s an interesting board because we could probably use the 4 x “SlimSAS” connectors to control our U.2 cache drives, and the OCP for 40GbE, leaving plenty of PCI slots left over.

“I can’t quite believe that you need 40GigE”

“HBA and 40G is redundant…40G network speed is about the same as the HBA. If you need fast you’ve also got PCIe4 SSDs which will blow any other storage you have out of the water.”

I explained in another reply, but yes we need both because 40GbE is not reliable for realtime performance in video/VFX at the datarates we are working with. One uncompressed stream of 4K EXR files is 1.3GBps (gigaBYTES). Now imagine you’re typically using 2-4 streams at a time. The math will tell you that 40GbE could maybe be enough, but in the real world you’ll run up against protocol limitations and high CPU usage. Getting even one stream is tough with most protocol stacks.
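For readers who want to run that math, here is a minimal sketch. It uses only the 1.3GBps per-stream figure quoted above and the nominal 40Gbps and 10Gbps line rates. The names are hypothetical, and the calculation ignores protocol overhead and CPU cost, which the comment notes make things worse in practice.

```python
STREAM_GBYTES_PER_S = 1.3  # one uncompressed 4K EXR stream, as quoted in the comment
BITS_PER_BYTE = 8


def required_gbits(streams: int) -> float:
    """Raw network rate in Gb/s needed to carry the given number of streams."""
    return streams * STREAM_GBYTES_PER_S * BITS_PER_BYTE


def fits(streams: int, link_gbits: float) -> bool:
    """True if the streams fit within the link's nominal line rate."""
    return required_gbits(streams) <= link_gbits


for n in range(1, 5):
    print(
        f"{n} stream(s): {required_gbits(n):.1f} Gb/s "
        f"(10GbE ok: {fits(n, 10.0)}, 40GbE ok: {fits(n, 40.0)})"
    )
```

On paper, a single stream already exceeds 10GbE, and four streams exceed the 40GbE line rate before any overhead is counted, which is consistent with wanting a local cache for multi-stream playback.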

PCIe SSDs is what we’re using, but U.2 variants because we need about 40TB local cache, which you can’t get in M.2 form factor. I suppose we could try connecting U.2 drives with adapter cables to the M.2 slots. In theory it should work…

“2 double-width GPUs for AV? Premiere Pro, AE, and DaVinci at least really use 1 GPU at most?”

The two main apps we use that leverage this level of hardware are DaVinci Resolve (color grading) and V-Ray Next (3D rendering). While Premiere and After Effects don’t leverage multiple GPUs effectively, Resolve very much does and most Hollywood shops using it have at least two GPUs if not 4 or even 8. V-Ray Next scales almost linearly, so two GPUs is barely scratching the surface (a render node would usually have 8 GPUs but that is a different build than this).

I point all this out because the article is about a workstation board, not a server board, and we need lots of slots.

Then your only solution is to go EPYC and TB3 with a cheap hook up station with a 40G ethernet card.

I bet you could use an adapter on each side of the DIMM.2 to get yourself 2 u.2 ports.

Well, hopefully Supermicro or Tyan or somebody comes out with a 6-slot Threadripper 2 mainboard, and bonus if there’s a TB3 option that works with it. I can wait a few months.

If Asus would make a 7 slot Sage series board with 4 u.2 I’d buy that so hard.

Can somebody tell me please why this is being marketed as a gaming motherboard?

Okay, okay, no need to answer. Back in the day I was playing Crysis on a HP Z800 with two Quadro 5800 (I don’t recall the CPU it was using, but it was obviously some Xeon variant). Why HP did not market the Z800 as a “gaming PC” is a mystery… Also, I heard that physical exercises are beneficial to health, so let’s do some eye-rolling for about 15 minutes.

@Madness

I think they are positioning it more as an enthusiast board. Threadripper is kind of in this weird limbo between gaming and workstation segments though. It excels at workstation workloads more than gaming, yet all of the boards available are just hopped up gamer boards. And then on the EPYC end of things there are so few boards at all, and the ones that ARE out there are server oriented.

@Tyler: I would wait a month for TRX80; Those will be the more workstation orientated systems.

TRX40 seems to target more the game streamers, enthusiasts and less the professional work station crowd like you are.

It is a bit sad that AMD did not provide a clear roadmap on Threadripper V3. And the information on TRX80 is still only rumors or educated guesses. But so far they seem to be right, so wait until AMD reveals TRX80 in January (the current likely launch/paper launch).

Thx for the advice Steven, I think I’ll do that.

I also wish we’d see some TR3 workstations from HP, Supermicro, Dell, etc. We’ll build our own if we have to, though.

I’m hoping a mobo manufacturer will use pcie 4 ->3 switches on TR boards to go from 8x 4.0 to 16x 3.0 so they can put 4+ non-over-subscribed 16x 3.0 slots on a board with plenty of lanes left over for other IO. Would make for a great DL workstation.

Ryan, I second that! Although I’d be happy with a PCIe expansion chassis that broke out the lanes as well. Something that takes your x16 4.0 and gives you 4 x x8 3.0 slots would be so nice.
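Both switch ideas in the two comments above work out to a non-oversubscribed design on paper, as the rough sketch below shows. It is our own illustration with hypothetical names. It compares aggregate signaling rate only (8 GT/s per Gen3 lane, 16 GT/s per Gen4 lane) and assumes an ideal switch with no overhead.

```python
# Per-lane signaling rates in GT/s; Gen3 and Gen4 share 128b/130b encoding,
# so the encoding factor cancels out when comparing the two.
GT_PER_S = {3: 8.0, 4: 16.0}


def aggregate_rate(gen: int, lanes: int) -> float:
    """Total signaling rate of a link in GT/s (lanes times per-lane rate)."""
    return GT_PER_S[gen] * lanes


def oversubscription(upstream, downstream) -> float:
    """Ratio of total downstream rate to upstream rate; 1.0 means fully fed.

    upstream is a (gen, lanes) tuple, downstream is a list of such tuples.
    """
    up = aggregate_rate(*upstream)
    down = sum(aggregate_rate(gen, lanes) for gen, lanes in downstream)
    return down / up


# A Gen4 x8 uplink feeding one Gen3 x16 slot.
print(oversubscription((4, 8), [(3, 16)]))
# A Gen4 x16 uplink feeding four Gen3 x8 slots.
print(oversubscription((4, 16), [(3, 8)] * 4))
# For contrast: a Gen4 x16 uplink feeding four Gen3 x16 slots.
print(oversubscription((4, 16), [(3, 16)] * 4))
```

A ratio above 1.0 means the downstream slots can collectively ask for more bandwidth than the uplink can supply.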

Anyone got a good explanation on why TR needs a chipset and not EPYC? Was it mostly for moving some power usage off chip? Anyone know if it is the same IO chiplet in both?

@STH

Can you kindly let me know if this motherboard can support an EPYC CPU, even if not listed on the ASUS website?!

I don’t need all those cores but I do need the GPU slots that AM4 doesn’t offer, so I’d be more than glad to drop in an 8 core 500 dollar EPYC CPU, and keep the extra cash to buy DDR5 systems this summer, as these DDR4 motherboards and CPUs are end of life.