A commonly asked question is what makes a good RAID controller. There are many purveyors of RAID cards that will sell a FakeRAID solution (one where the host CPU and driver software do the actual RAID work) supporting RAID 5 or RAID 6 at a premium.

The RAID Controller

Here one generally looks for a RAID-on-Chip (RoC) controller. While RAID 0, 1, and 10 are easy for a low-end RoC or the operating system to run at high speed, RAID 5 and RAID 6 require parity calculations and tend to run best on a dedicated processor. One caveat is that some operating systems can perform those parity calculations quickly on the host CPU, using its general-purpose cores or specialized CPU hardware. This guide looks only at dedicated hardware controllers.
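
To make the parity workload concrete, here is a minimal sketch of single-parity RAID 5 in Python, assuming equal-sized data chunks held as bytes. The parity chunk is the byte-wise XOR of the data chunks in a stripe, and any one lost chunk can be rebuilt by XORing the survivors with the parity chunk. RAID 6 adds a second, Galois-field-based syndrome that this sketch does not model, and the function names are illustrative rather than any controller's firmware API.

```python
from functools import reduce


def xor_chunks(chunks: list[bytes]) -> bytes:
    """Byte-wise XOR of equal-length chunks."""
    if not chunks:
        raise ValueError("need at least one chunk")
    length = len(chunks[0])
    if any(len(c) != length for c in chunks):
        raise ValueError("all chunks in a stripe must be the same length")
    # zip(*chunks) yields one tuple of byte values per column of the stripe.
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*chunks))


def raid5_parity(data_chunks: list[bytes]) -> bytes:
    """Parity chunk for one RAID 5 stripe."""
    return xor_chunks(data_chunks)


def raid5_rebuild(surviving_chunks: list[bytes], parity: bytes) -> bytes:
    """Recover the single missing data chunk from the surviving chunks plus parity."""
    return xor_chunks(surviving_chunks + [parity])


# A stripe across three data disks plus one parity disk.
stripe = [b"\x01\x02\x03\x04", b"\x10\x20\x30\x40", b"\xaa\xbb\xcc\xdd"]
parity = raid5_parity(stripe)

# Simulate losing disk 1 and rebuilding it from the other two plus parity.
rebuilt = raid5_rebuild([stripe[0], stripe[2]], parity)
print(rebuilt == stripe[1])  # True
```

Every write to a RAID 5 array implies this XOR work (and a rebuild implies it across the whole disk), which is why a dedicated processor helps; RAID 0, 1, and 10 only stripe or copy data.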

Any good RoC will be covered by a heatsink. Remember that the majority of hardware RAID controllers are destined for servers or high-end pre-built workstations. These systems tend to have very good airflow across the expansion slots, moving air from the back of the card toward the rear I/O panel. It is very important to make sure that the main RAID controller chip gets proper airflow. Some vendors, such as Areca, put an active fan on their cards, and users building low-power or low-noise servers would be wise to add an active fan to their RAID card.

Volatile Memory

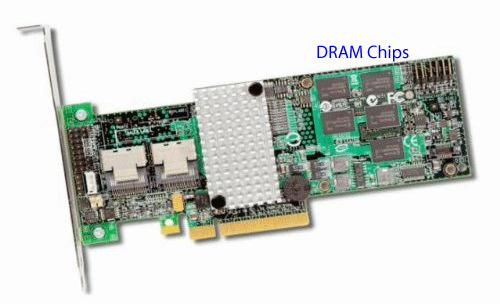

Memory on high-end hardware RAID controllers is a crucial performance differentiator. Read and write caching is one of the key areas where hardware RAID shows major advantages over FakeRAID. On the write side in particular, the memory cache lets the controller temporarily hold data destined for the array in local memory. The controller can then calculate parity bits and write everything out in a more sequential manner. Major storage players such as EMC, NetApp, and Hitachi use similar technology because, as anyone who has ever benchmarked a hard drive knows, spindle disks excel at sequential transfers but are slow at random I/O. Onboard memory smooths out reads and writes, making them relatively more sequential operations.
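
As an illustration of how a write cache turns scattered writes into more sequential ones, here is a toy model in Python. It assumes the cache tracks dirty blocks by logical block address (LBA) and, on flush, sorts them and merges adjacent LBAs into contiguous runs. Real controller firmware also weighs stripe boundaries, cache size, and flush timing, none of which are modeled here; the class and method names are hypothetical.

```python
class WriteBackCache:
    """Toy write-back cache: buffers block writes, flushes them as sorted contiguous runs."""

    def __init__(self) -> None:
        self.dirty: dict[int, bytes] = {}  # LBA -> most recent data for that block

    def write(self, lba: int, data: bytes) -> None:
        # Acknowledged immediately; a rewrite of the same LBA replaces the cached copy.
        self.dirty[lba] = data

    def flush(self) -> list[tuple[int, int]]:
        """Return (start_lba, block_count) runs in ascending order and empty the cache."""
        runs: list[tuple[int, int]] = []
        for lba in sorted(self.dirty):
            if runs and runs[-1][0] + runs[-1][1] == lba:
                start, count = runs[-1]
                runs[-1] = (start, count + 1)  # extend the current contiguous run
            else:
                runs.append((lba, 1))  # gap in LBAs: start a new run
        self.dirty.clear()
        return runs


cache = WriteBackCache()
for lba in [907, 12, 13, 500, 14, 908, 12]:  # random arrival order, one rewrite of LBA 12
    cache.write(lba, b"x" * 512)
print(cache.flush())  # [(12, 3), (500, 1), (907, 2)]
```

Seven host writes reach the disks as three runs, and the two longer runs are sequential, which is exactly the access pattern spindle disks handle best.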

Memory can be either fixed or variable in size. In fixed configurations, the DRAM is soldered directly onto the PCB. This gives the card a lower profile, which can help cooling, and eliminates a socket as a point of failure. In variable configurations, a user can install modules of different sizes on the card. Many Areca cards and Dell cards (both Intel IOP and LSI 2108 based) have sockets for variable-size memory.

To keep costs down, value RAID 5 and RAID 6 controllers do not include onboard DRAM. This either hurts write performance, since writes then reach the disks in more random I/O patterns rather than the more sequential patterns an onboard buffer allows, or forces writes to be cached in host system memory, which is suboptimal from a data integrity standpoint. Since RAID 0, 1, and 10 do not require parity calculations, solid RoC controllers for these RAID levels do not need onboard DRAM.
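
The parity cost behind this is easy to quantify. When a RAID 5 write touches only one chunk and there is no cache to gather a full stripe, a controller typically performs a read-modify-write: read the old data and old parity, compute the new parity as old parity XOR old data XOR new data, then write both back, for four disk I/Os. A full-stripe write across n data disks needs only n + 1 writes and no reads. The sketch below assumes single-chunk updates and ignores the alternative reconstruct-write strategy; it checks the parity identity and compares the I/O counts.

```python
RMW_IOS_PER_SMALL_WRITE = 4  # read old data, read old parity, write new data, write new parity


def xor_bytes(*chunks: bytes) -> bytes:
    """Byte-wise XOR of one or more equal-length chunks."""
    if len({len(c) for c in chunks}) != 1:
        raise ValueError("need one or more chunks of equal length")
    out = bytearray(len(chunks[0]))
    for chunk in chunks:
        for i, byte in enumerate(chunk):
            out[i] ^= byte
    return bytes(out)


def rmw_parity(old_parity: bytes, old_data: bytes, new_data: bytes) -> bytes:
    """New parity after rewriting one chunk: P_new = P_old xor D_old xor D_new."""
    return xor_bytes(old_parity, old_data, new_data)


def full_stripe_write_ios(data_disks: int) -> int:
    """Writes needed when a whole stripe is written at once: every data chunk plus parity."""
    return data_disks + 1


# A stripe across four data disks (one byte per chunk to keep it readable).
d = [b"\x11", b"\x22", b"\x44", b"\x88"]
p = xor_bytes(*d)

# Rewrite chunk 1 via read-modify-write and compare with parity recomputed from scratch.
new_d1 = b"\x7f"
print(rmw_parity(p, d[1], new_d1) == xor_bytes(d[0], new_d1, d[2], d[3]))  # True

# Rewriting all four chunks one at a time vs. as one cached full-stripe write.
print(4 * RMW_IOS_PER_SMALL_WRITE, full_stripe_write_ios(4))  # 16 5
```

RAID 1 and 10 skip this entirely: a mirrored write is just two writes with no reads, which is why those levels run well without onboard DRAM.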

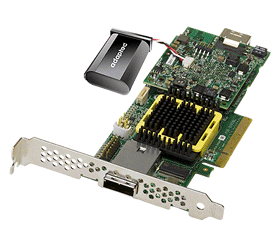

Battery Back-up Units and Capacitor plus Flash

The battery backup unit (BBU) is an extremely important part of any solid hardware RAID controller that implements RAID 5 and RAID 6. Because writes are cached in DRAM, as explained in this article’s “Volatile Memory” section, the host OS may believe data has been written to non-volatile storage (the hard disks) when in fact it is sitting in the RAID card’s DRAM buffer. If power is interrupted, data held only in volatile DRAM will be lost unless the card has either a battery backup or a capacitor and flash memory module.
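
Because of this risk, controllers commonly fall back from write-back to write-through caching when the BBU or capacitor module is missing, failed, or still charging, and many let an administrator override that behavior. Policy names and defaults vary by vendor, so the sketch below is a hypothetical decision function, not any vendor's firmware logic; the enum values and the `force_write_back` flag are illustrative assumptions.

```python
from enum import Enum


class BackupState(Enum):
    """Hypothetical health states for a BBU or capacitor+flash module."""
    HEALTHY = "healthy"
    CHARGING = "charging"
    FAILED = "failed"
    ABSENT = "absent"


class CachePolicy(Enum):
    WRITE_BACK = "write-back"        # acknowledge once data is in controller DRAM
    WRITE_THROUGH = "write-through"  # acknowledge only after data reaches the disks


def effective_write_policy(requested: CachePolicy,
                           backup: BackupState,
                           force_write_back: bool = False) -> CachePolicy:
    """Pick the write policy actually used, given the state of cache protection."""
    if requested is CachePolicy.WRITE_THROUGH:
        return CachePolicy.WRITE_THROUGH
    if backup is BackupState.HEALTHY or force_write_back:
        # force_write_back trades safety for speed: unprotected cached writes die with power.
        return CachePolicy.WRITE_BACK
    return CachePolicy.WRITE_THROUGH


print(effective_write_policy(CachePolicy.WRITE_BACK, BackupState.CHARGING).value)
# write-through
```

The fallback explains a common symptom: write performance on a parity array drops sharply while a BBU is charging or after it fails, because the controller has stopped caching writes.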

The battery backup, typically lithium-ion in today’s hardware, provides 48 to 72 hours of power to the onboard memory, independent of the system’s main power source. If power is lost unexpectedly, the BBU keeps the data in volatile memory intact until the card is back under system power.
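
Since the DRAM's draw while it waits on battery is roughly constant, hold-up time scales roughly with the charge the battery can still store. A back-of-the-envelope sketch under that simplifying assumption (linear scaling, constant draw, no temperature effects) shows how quickly a rated 72-hour figure erodes as the battery ages; the function name is illustrative.

```python
def bbu_holdup_hours(rated_hours: float, remaining_capacity: float) -> float:
    """Estimated cache hold-up time, assuming it scales linearly with remaining capacity.

    remaining_capacity is the fraction (0.0 to 1.0) of the battery's original capacity left.
    """
    if not 0.0 <= remaining_capacity <= 1.0:
        raise ValueError("remaining_capacity must be between 0 and 1")
    return rated_hours * remaining_capacity


for fraction in (1.0, 0.25, 0.1):
    print(f"{fraction:.0%} capacity -> {bbu_holdup_hours(72, fraction):.1f} h")
# 100% capacity -> 72.0 h
# 25% capacity -> 18.0 h
# 10% capacity -> 7.2 h
```

Under this model, a unit that retains a tenth of its original capacity protects the cache for only about seven hours, short enough that a weekend outage could lose cached writes.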

As in newer-generation solid state drives, larger capacitors are being deployed alongside flash memory to handle power outages. In the event of a power interruption, the capacitor keeps the DRAM and flash memory powered long enough for the DRAM contents to be transferred to flash. This provides two main benefits over older BBU setups. First, there is no battery aging to worry about: an aged battery loses the ability to store as much energy, so a 72-hour BBU can fall to well under 10 hours over the lifetime of the card, and BBUs sometimes need replacement. Second, flash memory can hold data for years since it is non-volatile storage. Adaptec and Dell are offering capacitor-backed NAND flash solutions, and the rest of the industry will probably continue to move in this direction over the coming years.
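
The capacitor-plus-flash sequence can be sketched as two steps: on power loss, copy the cached DRAM contents to flash while the capacitor holds the card up; on the next power-up, write the preserved data to the array and then release the flash copy. The Python model below is hypothetical and deliberately simplified (plain dicts stand in for DRAM, flash, and disks) and is not how any specific vendor's firmware is structured.

```python
class CapFlashCache:
    """Toy model of a capacitor-backed DRAM cache with a flash module."""

    def __init__(self) -> None:
        self.dram: dict[int, bytes] = {}   # volatile: contents lost when power is gone
        self.flash: dict[int, bytes] = {}  # non-volatile: survives power loss
        self.disks: dict[int, bytes] = {}  # stand-in for the array itself

    def write(self, lba: int, data: bytes) -> None:
        self.dram[lba] = data  # write-back: acknowledged while still only in DRAM

    def on_power_loss(self) -> None:
        # Running on capacitor energy: copy cached data to flash, then DRAM loses its contents.
        self.flash.update(self.dram)
        self.dram.clear()

    def on_power_restore(self) -> None:
        # Replay the preserved writes to the array, then release the flash copy.
        self.disks.update(self.flash)
        self.flash.clear()


card = CapFlashCache()
card.write(42, b"payroll")
card.on_power_loss()
print(card.dram, card.flash)  # {} {42: b'payroll'}
card.on_power_restore()
print(card.disks)  # {42: b'payroll'}
```

The capacitor only has to last for the DRAM-to-flash copy, seconds rather than days, which is why capacitor aging matters far less than battery aging.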

Connectors

I will not go into much detail here, but today’s cards generally have a mix of SFF-8087 (internal) and SFF-8088 (external) ports, each carrying four SAS lanes. For a full write-up, with lots of pictures, please see the ServeTheHome.com SAS Cable/Connector Guide.

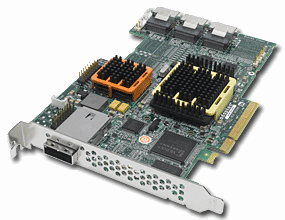

SAS Expander Chips

When a RAID card has more than eight ports and two heatsinks, that often indicates an onboard SAS expander. These onboard SAS expanders work much like the HP and Intel SAS expanders often discussed on this site. The main difference is that instead of requiring a second PCB, an interconnect cable, and a separate power cable, the expander is integrated into the host card. This allows a native 8-port controller to offer many more ports, commonly up to 28 per card.
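
Because every drive behind an expander shares the lanes between the RoC and the expander, it is worth doing the bandwidth arithmetic. A minimal sketch, assuming SAS 2 (6 Gb/s) lanes at roughly 600 MB/s usable each after 8b/10b encoding, a hypothetical four-lane link between the RoC and the expander, and a hypothetical 150 MB/s sequential rate per spindle disk; substitute the real figures for a given card.

```python
SAS2_LANE_MBPS = 600  # 6 Gb/s line rate with 8b/10b encoding -> ~600 MB/s per lane


def oversubscription(drives: int, per_drive_mbps: float, uplink_lanes: int,
                     lane_mbps: float = SAS2_LANE_MBPS) -> float:
    """Ratio of aggregate drive throughput to controller-to-expander link bandwidth."""
    return (drives * per_drive_mbps) / (uplink_lanes * lane_mbps)


# 24 disks at 150 MB/s behind a 4-lane link: 3600 MB/s of demand on 2400 MB/s of link.
print(oversubscription(drives=24, per_drive_mbps=150, uplink_lanes=4))  # 1.5
```

A ratio above 1.0 means the link, not the drives, caps throughput when every disk streams at once; for random I/O on spindle disks the per-drive rate is far lower and the ratio matters much less.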

One drawback is that onboard expanders are tightly integrated with the host controller. That often causes issues with external expanders, since any port connected to another SAS expander would be daisy-chaining SAS expanders. If one plans to use an external expander, best practice is to use a four- or eight-port controller without an onboard SAS expander.

Board Layout

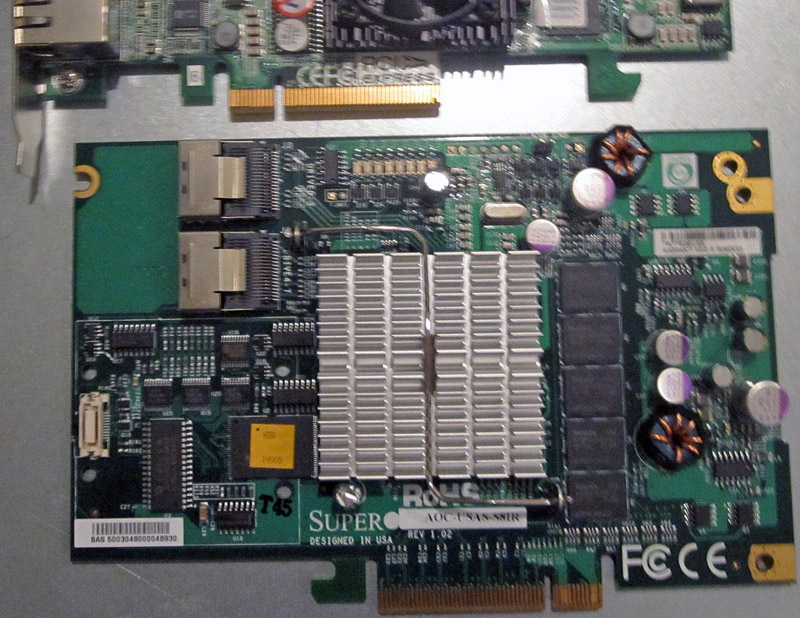

The PCB of a hardware RAID controller is an often-overlooked part of the equation. Some RAID controllers are full-height while others are low profile. Low profile cards are generally used in 2U systems but can also be found in larger systems. Full-height expansion cards tend to fit best in 3U and 4U systems.

Layout can be very important for RAID cards. Connector placement influences system build variables such as cable lengths and airflow restrictions. It is important to look at a picture of any RAID card before purchasing it to make sure these variables are understood.

Today, users will typically want cards with true PCIe interfaces, meaning both a physical PCIe connector and a native PCIe controller. For example, the Silicon Image controllers used on the OCZ RevoDrive X2 and a few older single-core Intel IOP based cards use a PCI-X to PCIe bridge. In older cards this was an acceptable tradeoff, but with modern solid state and spindle disks, a PCI-X to PCIe bridge can be a significant bottleneck, since native PCIe offers much higher bandwidth.
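
The size of the bridge bottleneck follows from the bus arithmetic. A 64-bit, 133 MHz PCI-X bus peaks at about 1,066 MB/s, shared between reads and writes, while each PCIe lane carries its bandwidth in each direction: 250 MB/s for PCIe 1.x (2.5 GT/s with 8b/10b encoding) and 500 MB/s for PCIe 2.0. The sketch below computes these theoretical peaks; real-world throughput is lower, and the x8 link width in the example is an assumption.

```python
def pcix_mbps(bus_width_bits: int = 64, clock_mhz: float = 400 / 3) -> float:
    """Theoretical peak PCI-X bandwidth in MB/s, shared by reads and writes."""
    return bus_width_bits / 8 * clock_mhz


# Per-lane transfer rate plus encoding (payload bits, total bits) for each PCIe generation.
PCIE_GENERATIONS = {
    1: (2.5e9, 8, 10),     # 2.5 GT/s, 8b/10b
    2: (5.0e9, 8, 10),     # 5 GT/s, 8b/10b
    3: (8.0e9, 128, 130),  # 8 GT/s, 128b/130b
}


def pcie_mbps(lanes: int, gen: int) -> float:
    """Theoretical peak PCIe bandwidth in MB/s, per direction, after line encoding."""
    rate, payload_bits, total_bits = PCIE_GENERATIONS[gen]
    per_lane_bytes = rate * payload_bits / total_bits / 8
    return lanes * per_lane_bytes / 1e6


print(round(pcix_mbps()))          # 1067
print(round(pcie_mbps(8, gen=1)))  # 2000
print(round(pcie_mbps(8, gen=2)))  # 4000
```

Even a first-generation x8 link offers nearly twice the PCI-X peak in each direction, and a handful of fast SSDs can exceed 1 GB/s of combined throughput, so a bridged card leaves performance on the table.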

Another item worth noting is that many users buy Supermicro UIO cards as lower-cost alternatives to standard parts. UIO cards have their components mounted on the opposite side of the card so that it can be installed in the special UIO motherboard slots commonly used in 1U configurations. Using a UIO card in a standard slot generally raises three considerations: cooling, clearance, and brackets. Cooling and clearance issues arise because the components, including heatsinks, are on the opposite side of the card. Brackets can be a bigger issue: in normal operation the card sits on the opposite side of a standard bracket, so users tend to fabricate their own expansion slot brackets.

Firmware and Management

As one can see above, RAID cards have processors, memory, backup power sources, and board layouts, making them analogous to miniature computers. To manage these mini computers, RAID controllers tend to have a firmware-based operating system. The time it takes for the card to initialize its firmware is essentially the time it takes the controller to “boot”. Any hardware RAID controller owner knows this initialization process well, since the host system can often wait 30 seconds to two minutes for it to complete.

RAID controller firmware tends to have hooks for management platforms such as Adaptec Storage Manager, LSI’s MegaRAID Storage Manager (MSM), and Areca’s web interface. Some Areca cards provide out-of-band management through a dedicated onboard management NIC that serves a web-based management interface. Each implementation has different strengths and weaknesses, so it is worth comparing them when choosing a RAID controller.

Conclusion

Overall, I hope this primer shows the basic parts one should look for in a decent hardware RAID controller. One thing this guide does not cover is feature sets. Capabilities such as adding SSDs as cache tiers and SSD-specific features are newer developments that hardware RAID controllers use to differentiate themselves from one another.

