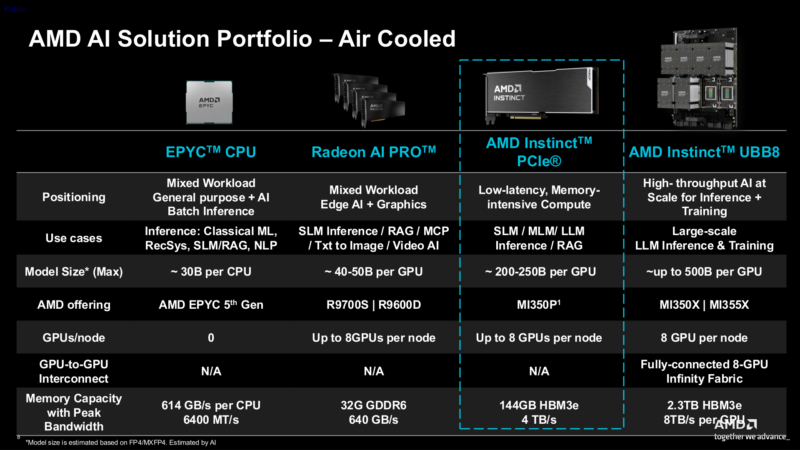

AMD this morning is launching a new member of their Instinct MI350 series of AI accelerators with a particularly interesting product: a PCIe card. Marking AMD’s first Instinct PCIe card product in nearly half a decade, the new MI350P sees AMD bring their current generation accelerator architecture to conventional PCIe cards. With it, the company is targeting customers who want to do on-premises AI inference, but either cannot support the high thermal and power density of current server nodes, or want to integrate the accelerators with existing hardware. In short: customers who are not buying AI hardware by the rack.

To accomplish this, AMD is essentially taking one of their MI350X accelerators and cutting it in half, resulting in a card with half as many compute resources, half as much memory, and, perhaps most importantly, a bit over half of the power consumption. The end result is a scaled-down card that offers all of the AI functionality of the CDNA 4 architecture that underpins the rest of the series, but in a chip that is small enough, and modest enough in its power needs, to fit on an air-cooled PCIe card.

AMD Instinct MI350 Series Key Specs

| GPU | MI350P | MI350X |
|---|---|---|
| Compute Units | 128 | 256 |
| Matrix Cores | 512 | 1024 |
| Peak Engine Clock | 2200MHz | 2200MHz |
| Memory | 144GB HBM3E | 288GB HBM3E |
| Memory Bandwidth | 4TB/sec (8Gbps x 4096-bit) | 8TB/sec (8Gbps x 8192-bit) |
| Matrix Perf (MXFP8) | 2.3 PFLOPS | 4.6 PFLOPS |
| I/O | PCIe Gen5 x16 | PCIe Gen5 x16, 7x Infinity Fabric (x16) |
| TBP | 600W (Optional: 450W) | 1000W |
| Form Factor | PCIe CEM, 10.5-inch FHFL DS | OAM |
| Architecture | CDNA 4 | CDNA 4 |

Addressing the PCIe Market Hole

With demand for high-performance server AI accelerators running high for several years now, both market leader NVIDIA and long-time rival AMD have been primarily focused on delivering server hardware by the node – or, more recently, by the whole rack. The net impact, as our own Patrick Kennedy noted a few weeks back in a Substack post discussing the subject, has been that in their current generation of products neither AMD nor NVIDIA has released a PCIe form factor accelerator based on their high-end server GPUs. With both companies selling modular accelerators destined for compute trays and racks as fast as they can make them, they have had little need to focus on much else.

In practice, most of the demand for AI accelerators is for modular (OAM/SXM) accelerators that can go into modern compute nodes. These systems offer the highest hardware densities, as well as the best scale-up and scale-out functionality thanks to their heavy use of proprietary GPU interconnects. But this leaves customers who need less hardware, or who operate data centers that cannot accommodate an 11kW compute node full of GPUs, in a bind, and it has left a hole in the overall market for AI accelerators.

For the past couple of years, both companies have been trying to plug this hole with products based on their respective workstation graphics GPUs – in AMD’s case, the Radeon AI Pro series. These graphics-based products offer a lot of the same AI functionality, but not all of it. And in particular they do not offer the higher performance of a flagship server GPU, nor the critical memory capacity and bandwidth afforded by its HBM. Consequently, while these graphics-based products can fill some needs for PCIe-based accelerators, they are not a perfect replacement by any means.

So for the first time since 2022’s Instinct MI210, AMD is releasing an Instinct PCIe card based on its current-generation architecture in order to fill this hole. The fundamental idea with the product is to give traditional server and data center customers access to the same caliber of AI hardware as their high-end modular parts, but in a readily replaceable and upgradable form factor. It is a bit more of a niche market these days, but with compute rack density and the resulting power and cooling needs presenting an issue for older data centers, it remains a very relevant one. And as an added bonus for AMD, it is a niche that rival NVIDIA is not currently addressing (nor has indicated they will be addressing), giving the company access to a market in which they will immediately be the front-runner.

Instinct MI350P: Half a 350X At Half the Power

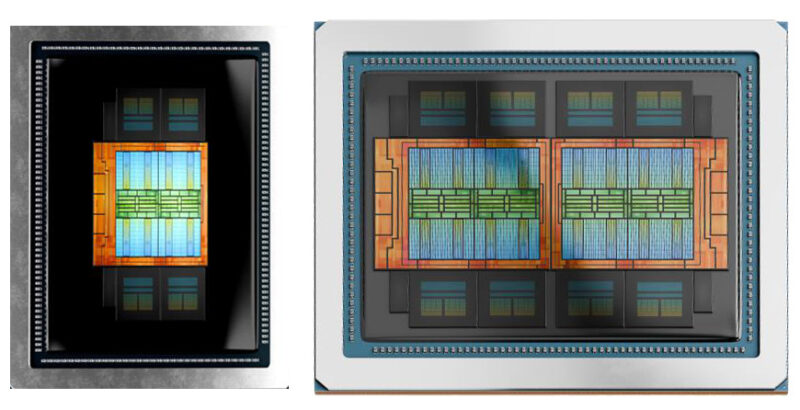

Diving into the hardware itself, as alluded to earlier, the MI350P is essentially half of one of AMD’s flagship MI350X accelerators. When AMD first informed us about the product, I had assumed that the PCIe card was being built using salvaged chips that did not make the spec to be used in an MI350X accelerator. But once AMD sent over some details about the hardware, the reality became much more interesting.

In short, AMD is not using salvaged MI350X chips for this product. Instead, they are building a dedicated chip for the MI350P, leveraging the MI350X’s chiplet design to assemble a smaller chip out of the same silicon. Whereas the MI350X is built from two I/O dies (IODs), each with four accelerator complex dies (XCDs) stacked on top (for a total of 8 XCDs), the MI350P’s chip is half of that: a single IOD with four XCDs. It is clocked identically to the MI350X and, going by peak performance figures, offers half of the performance of AMD’s modular accelerator.
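The chiplet arithmetic can be sketched in a few lines of Python. This is an illustrative model only: the per-XCD and per-CU constants are derived by dividing the MI350X figures in the spec table (256 CUs over 8 XCDs, 1024 Matrix Cores over 256 CUs), and the `Package` class is a hypothetical helper, not anything AMD publishes.

```python
from dataclasses import dataclass

# Derived from the spec table, not from an AMD document (assumption):
CUS_PER_XCD = 32          # 256 CUs / 8 XCDs on the MI350X
MATRIX_CORES_PER_CU = 4   # 1024 Matrix Cores / 256 CUs


@dataclass(frozen=True)
class Package:
    """Hypothetical model of an MI350-series package: IODs with XCDs stacked on top."""
    name: str
    iods: int           # I/O dies
    xcds_per_iod: int   # accelerator complex dies stacked on each IOD

    @property
    def xcds(self) -> int:
        return self.iods * self.xcds_per_iod

    @property
    def compute_units(self) -> int:
        return self.xcds * CUS_PER_XCD

    @property
    def matrix_cores(self) -> int:
        return self.compute_units * MATRIX_CORES_PER_CU


mi350x = Package("MI350X", iods=2, xcds_per_iod=4)
mi350p = Package("MI350P", iods=1, xcds_per_iod=4)

for pkg in (mi350x, mi350p):
    print(pkg.name, pkg.xcds, pkg.compute_units, pkg.matrix_cores)
# MI350X 8 256 1024
# MI350P 4 128 512
```

Dropping one IOD (and the four XCDs on it) halves every compute resource at once, which is why the MI350P column in the spec table is so cleanly half of the MI350X column.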

With the reduction in IODs also comes a reduction in memory capacity and bandwidth. The 8 HBM3E stacks have become 4, resulting in a card with 144GB of HBM3E memory and a total memory bandwidth of 4TB/second – once again half as much as the MI350X. As a result, the MI350P is an almost perfect scale-down of the MI350X, offering around half of the performance and half of the memory capacity.
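As a rough check on the memory figures, the sketch below derives capacity and bandwidth from the stack count. It assumes the 8Gbps pin rate from the spec table, plus a 1024-bit interface and 36GB per stack, both derived by dividing the MI350X totals by its 8 stacks; `hbm_totals` is a hypothetical helper.

```python
GBPS_PER_PIN = 8       # per-pin data rate from the spec table
GB_PER_STACK = 36      # derived: 288GB / 8 stacks (assumption)
BITS_PER_STACK = 1024  # derived: 8192-bit bus / 8 stacks (assumption)


def hbm_totals(stacks: int) -> tuple[int, float]:
    """Return (capacity in GB, bandwidth in TB/s) for a given number of HBM3E stacks."""
    bus_width_bits = stacks * BITS_PER_STACK
    # Gbit/s across the whole bus -> GB/s (divide by 8) -> TB/s (decimal, divide by 1000)
    bandwidth_tb_s = bus_width_bits * GBPS_PER_PIN / 8 / 1000
    return stacks * GB_PER_STACK, bandwidth_tb_s


print(hbm_totals(8))  # MI350X: (288, 8.192)
print(hbm_totals(4))  # MI350P: (144, 4.096)
```

The unrounded results (8.192 and 4.096TB/s) line up with the 8TB/sec and 4TB/sec figures in the spec table, and show that halving the stack count halves both capacity and bandwidth.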

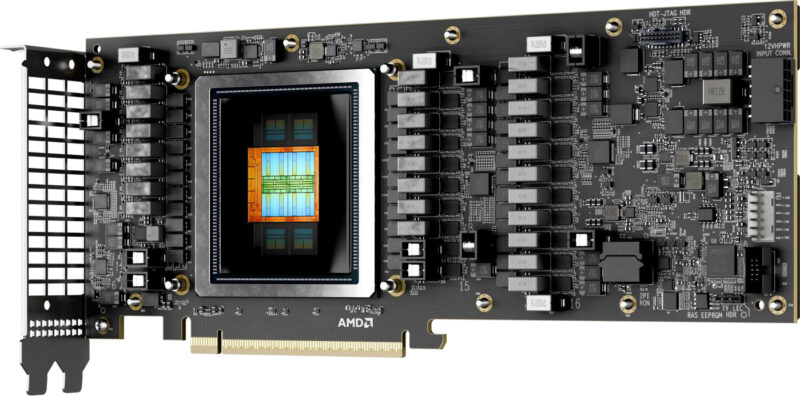

On paper, the only aspect of the hardware that has not been scaled down quite by half is the power consumption. Whereas the MI350X has a total board power (TBP) rating of 1000W (and 1400W for the MI355X), the MI350P is rated for 600W. For a standardized form factor like PCIe, 600W is something of a magic number, as it is the limit defined by the PCIe CEM spec itself, and AMD has opted to run the card as hot and fast as the spec allows. Even then, as not all servers can handle 600W PCIe cards, AMD is also offering a 450W TBP mode, which shaves off some performance to further bring down power consumption.
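To put numbers on that, the sketch below compares how peak compute and board power scale between the two parts, using only the MXFP8 and TBP figures from the spec table. These are peak figures at the 600W setting; the 450W mode is left out because the spec table gives no performance figure for it.

```python
# name: (peak MXFP8 PFLOPS, TBP in watts), both taken from the spec table
specs = {
    "MI350X": (4.6, 1000),
    "MI350P": (2.3, 600),
}

x_pf, x_w = specs["MI350X"]
p_pf, p_w = specs["MI350P"]

compute_ratio = p_pf / x_pf  # MI350P's share of MI350X peak compute
power_ratio = p_w / x_w      # MI350P's share of MI350X board power
print(f"compute: {compute_ratio:.0%}, power: {power_ratio:.0%}")
# compute: 50%, power: 60%

for name, (pflops, watts) in specs.items():
    # Convert PFLOPS to TFLOPS, then divide by board power (peak, not delivered)
    print(f"{name}: {pflops * 1000 / watts:.2f} peak MXFP8 TFLOPS/W")
```

The MI350P lands at 50% of the MI350X’s peak compute for 60% of its board power, so its peak performance per watt comes out somewhat lower than the modular part’s.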

Physically, the card is a very standard, by-the-book full height, full length (FHFL) dual slot card, which in both TBP configurations is designed to be air cooled. As is typical for server cards, the cooler is entirely passive, with one large heatsink running the length of the card that relies on airflow from the server chassis itself. This configuration means that up to 8 cards can be installed in a single server tray.

Notably, however, AMD is not exposing their GPU-to-GPU Infinity Fabric links in any way on the MI350P. As a result, multi-card setups are limited to using the PCIe bus (PCIe Gen5 x16) to communicate between cards. This is arguably the single biggest tradeoff of the MI350P versus the MI350X, as it limits the practicality of running AI models that are larger than a single card’s memory pool. The end result is that an 8-card setup is a better fit for running 8 models than for running one large model spread over 8 GPUs.
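To illustrate the single-card memory ceiling, here is a rough weights-only sizing check against the MI350P’s 144GB. It assumes 1 byte per parameter for FP8 and 2 for BF16, and it ignores KV cache, activations, and runtime overhead, so real headroom is smaller. The model sizes are hypothetical examples, not anything AMD has tested, and the helper names are illustrative.

```python
CARD_MEMORY_GB = 144  # MI350P HBM3E capacity from the spec table

# Assumed storage cost per parameter for weights only
BYTES_PER_PARAM = {"bf16": 2, "fp8": 1}


def weights_gb(params_billions: float, dtype: str) -> float:
    """Approximate weight footprint: 1e9 params * bytes/param / 1e9 bytes per GB."""
    return params_billions * BYTES_PER_PARAM[dtype]


def fits_on_one_card(params_billions: float, dtype: str) -> bool:
    """True if the weights alone fit in one card's HBM (no KV cache or overhead)."""
    return weights_gb(params_billions, dtype) <= CARD_MEMORY_GB


# Hypothetical model sizes, in billions of parameters
for params, dtype in [(70, "fp8"), (405, "fp8")]:
    print(params, dtype, weights_gb(params, dtype), fits_on_one_card(params, dtype))
```

A model that fails this check has to be split across cards, and without Infinity Fabric links that traffic would travel over PCIe, which is the tradeoff described above.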

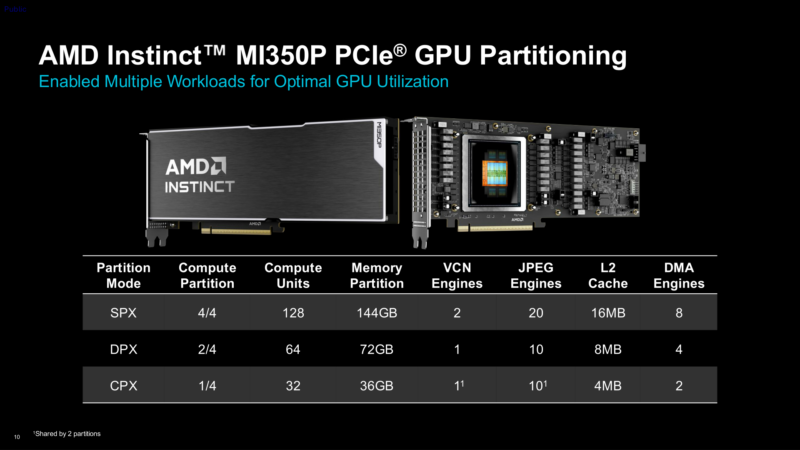

And speaking of multiple models, it is worth noting that the PCIe member of the Instinct MI350 family also retains the series’ partitioning support. With half as many XCDs, the maximum number of partitions is halved accordingly, from 8 to 4, but otherwise a CPX configuration is the same 1 XCD with 36GB of memory setup as it is on the MI350X.
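The partition arithmetic can be sketched as follows, assuming one partition per XCD with compute units and HBM divided evenly. That split is consistent with the 36GB-per-partition figure above, but it is an assumption rather than AMD’s documented allocation scheme, and `cpx_layout` and `Partition` are hypothetical names.

```python
from typing import NamedTuple


class Partition(NamedTuple):
    xcds: int
    compute_units: int
    memory_gb: int


def cpx_layout(total_xcds: int, total_cus: int, total_hbm_gb: int) -> list[Partition]:
    """One partition per XCD, with CUs and HBM divided evenly (assumed)."""
    return [
        Partition(1, total_cus // total_xcds, total_hbm_gb // total_xcds)
        for _ in range(total_xcds)
    ]


mi350p = cpx_layout(total_xcds=4, total_cus=128, total_hbm_gb=144)
print(len(mi350p), mi350p[0])
# 4 Partition(xcds=1, compute_units=32, memory_gb=36)
```

Running the same function with the MI350X figures (8 XCDs, 256 CUs, 288GB) yields 8 partitions of the same shape, which is why each CPX partition looks identical across the two products even though the MI350P offers only half as many.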

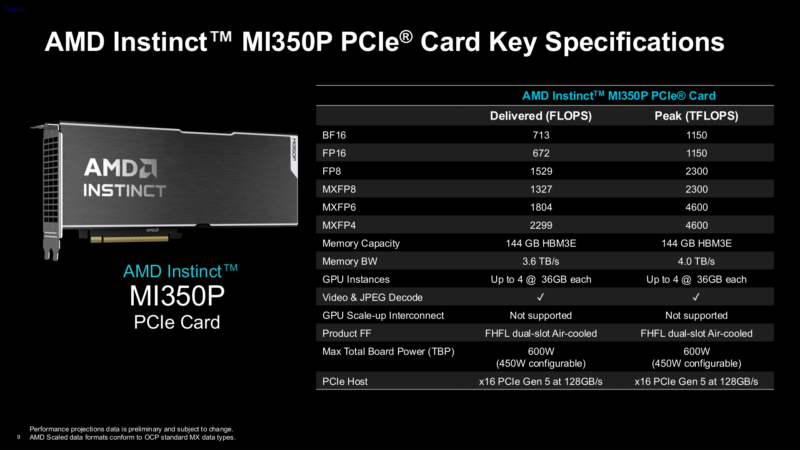

Finally, as far as the overall performance of the card is concerned, AMD has taken an interesting (and welcome) step for the MI350P by publishing both peak and typical/delivered performance figures for the card, something they did not do for the MI350X launch.

While AMD does not go into detail on how they have derived their delivered performance figures, these seem to be real-world numbers – a combination of architectural efficiency and the performance impact of not being able to max out every last transistor on the card with just a 600W power budget. Notably, AMD’s figures list both GPU throughput and memory bandwidth being impacted, with MXFP6 performance in particular falling well short of the card’s peak/theoretical performance. Overall, this is a welcome disclosure from AMD, and hopefully we will see similar disclosures in the future.

Final Words

For the past couple of years now AMD has been on a tear in the data center GPU market. Though still well behind market leader NVIDIA, AMD’s data center GPU revenues have been growing by leaps and bounds thanks to the success of (and high demand for) their MI300 and now MI350 series accelerators. And while the classic PCIe form factor market has been going largely underserved during this growth spurt, AMD is finally coming back around to addressing it with the Instinct MI350P, their first Instinct PCIe accelerator in nearly half a decade.

At essentially half of an MI350X on a PCIe card, the MI350P is a unique product in its own right. While its performance is not quite up to the level of AMD’s flagship accelerators, it nonetheless delivers as much performance as AMD can squeeze into the limits of a PCIe card, and it does so while delivering all of the CDNA 4 architecture’s capabilities. Coupled with the fact that AMD is now the only GPU vendor offering a current-gen server-grade accelerator on a PCIe card, it is fair to say that AMD has set itself up for further success by finally addressing a market segment that has gone far too underserved this generation.