Over the past few weeks I have had the chance to play with some 8-core, 16-thread Intel Sandy Bridge-EP based Xeon samples which will ultimately be part of the Xeon E5-2600 lineup. I will say that the turbo frequencies seem to be working a little strangely on these chips, but the one I am using for the benchmarks can maintain 3.1GHz across all eight cores quite nicely, and the memory controller supports eight fully populated DIMM sockets. I figured I would share a preview of the Intel Xeon E5 series’ performance. Please treat this as a preview; final shipping numbers should be a bit better. The most comparable Intel Xeon E5-2600 CPU is the Xeon E5-2687W, which is a 3.1GHz 150W 8C/16T part designated for workstations.

Yes, the new LGA 2011 dual socket Xeons are going to be expensive, but with AMD’s Bulldozer-based Opteron 6200 series CPUs putting out performance numbers well below expectations, Intel has a lot of leeway to price these for aggressive margins. I will also note, however, that the AMD G34 platform still has some very compelling use cases, especially with 4P systems and using very low-end chips to build memcached 2P/4P servers.

Test Configuration

I did not have a server motherboard available for the Intel Xeon LGA 2011 platform, so the test system was built around a consumer board.

- Processor: Intel Xeon E5-2600 series 8C/16T @ 3.1 GHz

- Cooling: Corsair H80

- Memory: 32GB Kingston 1333MHz ECC Unbuffered DDR3 and 32GB G.Skill consumer 1600MHz non-ECC DDR3

- Motherboard: Consumer X79 board

Overall, this is a pretty interesting platform since one gets to use a ton of RAM.

Performance Tests

I will start off this section by saying that the standard test suite was built to test 1-8 thread single CPU systems such as the Sandy Bridge and Ivy Bridge Intel Xeon CPUs. With platforms like this one, I have been slowly altering the mix. Clearly, one would expect a different workload on a Xeon E5-2600 CPU than on something found in a low-end, low-power server like a Pentium G630. As the 16-64 core realm where the Xeon E5 series will play becomes the norm, I think it will become ever more important to develop a second test suite. For the purposes of this preview, the following should suffice.

A quick note: I applaud Microsoft for finally changing Task Manager. With 16 threads per socket on the Xeon E5 series, the current Windows 7 Task Manager looks a bit overwhelmed and underworked.

Cinebench R11.5

I have been using Cinebench benchmarks for years but have held off using them on ServeTheHome.com because the primary focus of the site until the past few months has been predominantly storage servers. With the expansion of the site’s scope, Cinebench has been added to the test suite because it does represent a valuable benchmark of multi-threaded performance. I have had quite a few readers contact me about this type of performance for things like servers that are Adobe CS5 compute nodes and similar applications. Cinebench R11.5 is something that anyone can run on their Windows machines to get a relative idea of performance and both Sandy Bridge and Sandy Bridge-EP systems run it well.

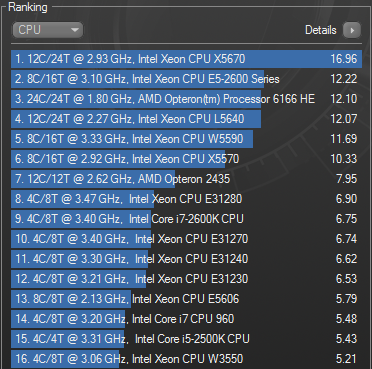

As one can see, this Xeon E5 chip is very impressive for a single CPU system. It is trending faster than many dual quad-core systems with Hyper-Threading that are one or two generations old. One interesting note is that this eight core chip provides slightly faster benchmark results than a dual 12-core Opteron 6166HE setup.

7-Zip Compression Benchmark

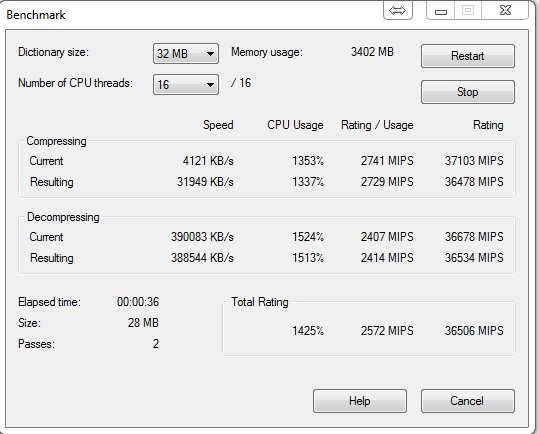

7-Zip is an immensely popular compression application with an easy to use benchmark.

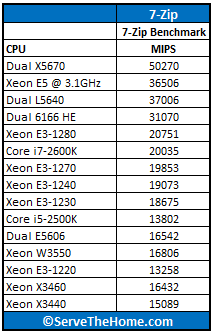

This is in line with what I was expecting. With its new Xeon E5 server CPUs, Intel will be providing tremendous performance. Here are a few reference points I had handy:

Overall, not bad for a single socket Xeon E5 solution, and a significant bump from the older quad core 45nm W35xx workstation CPUs and the 32nm quad core Xeon E3 series CPUs. Then again, it is a single chip solution with twice as many cores and significantly more cache and memory bandwidth.

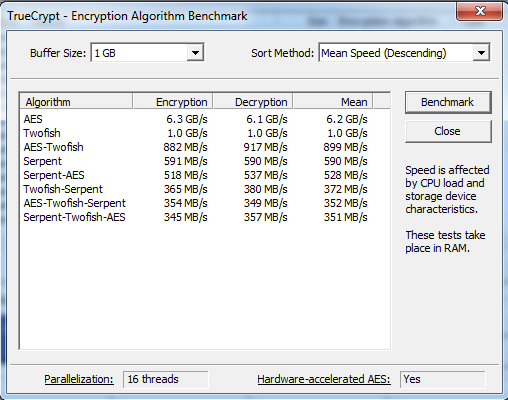

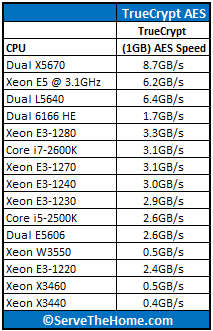

TrueCrypt Encryption Benchmarks

With Intel’s focus on its AES-NI features, TrueCrypt results can look a bit skewed. Unlike some dubious drivers over the years that were optimized for benchmarks over real world applications, Intel’s AES-NI feature does encompass the addition of specialized hardware. This specialized hardware has many practical uses and is becoming more widely supported. For example, users of Solaris 11 can utilize the AES-NI features to see much higher throughput on encrypted volumes. AMD has started offering AES-NI with their Bulldozer CPUs, and I will have those results added to future pieces. Without further ado, here is the Xeon E5-2600 series benchmark:

One can see that the Xeon E5 AES-NI enhancements are working here, and that the Xeon E5-2600 chip is a monster, and very respectable even against dual LGA 1366 32nm solutions. Again, here are some reference points:

The dual Xeon L5640 setup does pull ahead a bit on this one. Then again, this is not a dual socket solution. One can see that higher-end dual hex-core Intel CPUs do fare well here. Another interesting point is that the Xeon E5-2600 at 3.1GHz runs at just about twice the speed of a Xeon E3-1230, which is a four core, eight thread solution clocked at 3.2GHz. With twice the cores at a slightly lower clock, that appears to show slightly better than 100% scaling.
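To put a rough number on that scaling remark, here is a minimal Python sketch. It assumes "just about twice the speed" means exactly 2.0x, since the exact measured ratio is not quoted here, and the function name `scaling_efficiency` is purely illustrative.

```python
def scaling_efficiency(speedup, cores_a, ghz_a, cores_b, ghz_b):
    """Observed speedup of chip A over chip B, divided by the speedup
    one would expect from core count x clock speed alone."""
    expected = (cores_a * ghz_a) / (cores_b * ghz_b)
    return speedup / expected


# Xeon E5-2600 sample: 8 cores at 3.1GHz. Xeon E3-1230: 4 cores at 3.2GHz.
# ASSUMPTION: "just about twice the speed" is treated as a 2.0x speedup.
expected = (8 * 3.1) / (4 * 3.2)
efficiency = scaling_efficiency(2.0, 8, 3.1, 4, 3.2)

print(f"Expected from cores x clock: {expected:.2f}x")
print(f"Scaling efficiency: {efficiency:.1%}")
```

Under that assumption, cores x clock alone predicts a bit less than 2x, so a result of about 2x lands a few percent above linear scaling.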

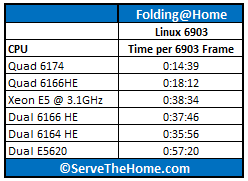

Folding@Home

I am working to make Folding@Home a standardized benchmark, as it is a mature distributed computing client. There is quite a bit of variability here, but the very interesting thing is that you can watch speeds scale very linearly with clock speed and cores (assuming the same architecture). Times are generally measured in TPF, or Time Per Frame, which is the amount of time it takes to complete each frame. A bit of tweaking needs to be done for each setup, as things like NUMA settings need to be adjusted for the client to run optimally.

I am still working on getting more data here, but overall, one can see the Xeon E5 8C/16T CPU is putting out a lot of work, in line with what we would expect from the number of Sandy Bridge cores used. Folding@Home requires a decent amount of tweaking to get working optimally, and I was not able to run more than 150 frames with the chip, so actual results here may vary quite a bit, especially if one uses faster RAM and spends time with Linux kernel/scheduling tweaks.
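For readers unfamiliar with TPF, the following Python sketch shows how a TPF figure can be turned into a rough work unit time, and how one might estimate TPF on another chip of the same architecture if scaling really is linear with cores and clock speed. The 100 frames per work unit figure is an assumption (one frame per percent of progress), the function names are hypothetical, and the 10 minute TPF is an illustrative value, not a measured result.

```python
from datetime import timedelta

# ASSUMPTION: a work unit is split into 100 frames (one per percent).
FRAMES_PER_WORK_UNIT = 100


def work_unit_time(tpf):
    """Rough time to finish one work unit at a steady TPF."""
    return tpf * FRAMES_PER_WORK_UNIT


def scaled_tpf(tpf, cores, ghz, new_cores, new_ghz):
    """Estimate TPF on another chip of the same architecture,
    assuming perfectly linear scaling with cores x clock speed."""
    seconds = tpf.total_seconds() * (cores * ghz) / (new_cores * new_ghz)
    return timedelta(seconds=seconds)


# Hypothetical TPF for illustration only, not a benchmark result.
example_tpf = timedelta(minutes=10)

print("Work unit time:", work_unit_time(example_tpf))
print("Estimated TPF, 4C @ 3.2GHz -> 8C @ 3.1GHz:",
      scaled_tpf(example_tpf, 4, 3.2, 8, 3.1))
```

Real runs will deviate from this estimate, since NUMA settings, memory speed and scheduler behavior all affect TPF.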

Power Consumption and Cooling

At first I tried using a Corsair H100 to cool the 150W part, but I soon found that I had received a DOA unit. I instead moved over to a Corsair H80, which has the LGA 2011 mounting brackets needed and two 120mm fans on the radiator that provide a range of airflow options through the fan speed setting. One thing I certainly noticed was that there was a big difference in cooling the 150W part between the medium and high fan speeds. With normal desktop usage, and running basic Linux web server tasks (Apache/MySQL/PHP test server), I did not have much of an issue on low fan speeds. When I cranked up Folding@Home, which stresses all of the cores/threads of the CPU concurrently for several days, the medium setting lasted between 4.5 and 6 hours before the CPU overheated and crashed the folding run. Changing to the high fan setting on the Corsair H80 allowed the same configuration to run for 48 hours without issue. Bottom line, these chips are pretty hot! I will try again when I get a working Corsair H100 in the lab, but I am a bit wary of trying to cool the 150W parts in lower-profile chassis, as it seems like one needs a lot of airflow and heat exchange surface area to cool these things.

Conclusion

Overall, I think there are a few key points here. First, the ability to use 8C/16T is great for applications that need it. One can almost think about it as adding an i7-2600 to the previous generation’s dual 32nm hex core 2P Xeon systems. One really cool thing is how the performance was better than two older 2P 4C/8T systems. One other major thought is that one can use eight DIMM slots per CPU with the Xeon E5-2600 series, which simply allows for more memory to be added and more memory bandwidth for things like VMware/Hyper-V servers. I will post more once Intel officially releases the parts. First impression is that they are great chips overall, but are really an evolutionary step. We will see final benchmarks in the near future as Romley is released. Probably the most exciting aspect of Romley is not only that we get new high-end CPUs that will justify consolidating 45nm systems, but also that the new PCHs bring SAS ports onto boards. More to come once these become widely available.

Can you overclock the Xeon E5-2600 series? Are these the chips you will use in the EVGA SR-3 and ASUS dual overclocking board? Have any dual SNB-EP benchmarks?

Nice information. Looks to be a good performer, just want to see street pricing.

Any word on release date?

Intel sold Xeon E5 chips for a few big deployments already. They have shipped for revenue, so they are not exactly unreleased.

?

So when are we gonna get at these chips then? I’m starving.