We have another update on the WD Red SMR drive story. To be more precise, we have several updates. Since WD has remained silent on this subject, and we, as a community, need to get the word out to keep customers informed, it was time for a follow-up piece. In this edition:

- Jim Salter at Ars Technica confirms what we saw in ZFS

- We found evidence that WD-HGST has said DM-SMR is not good for reliable performance, and has even discussed ZFS issues for years. Effectively, they had reason to know the drives would not work well in ZFS NAS units at least, if not in other NAS applications.

- We cover a great user story of actual experience with 6TB WD Red DM-SMR drives in a non-ZFS Synology NAS. WD did a great job doing the right thing and replacing his drives.

- We discuss a theory on how these drives were qualified by WD and drive vendors, but are now de-certified/de-qualified.

- We discuss a theory on the conspicuous silence with no updates from WD.

There is certainly a lot to cover here, so let us get to it.

Accompanying Video

If you prefer to listen along, here is the accompanying video:

As always, more detail is below for those who are interested, but we recognize some prefer different media.

Ars Technica Confirms What We Saw in ZFS

When I set the scheduled publishing time for our first hard drive SMR piece, I felt like we needed to get some data. I was very worried that the time it would take would mean others would beat us to the story. Once the first set of results came in, we saw terrible WD Red DM-SMR performance in a ZFS NAS array rebuild scenario. One of the great parts of the tech community is that others will often try for themselves. Jim Salter at Ars Technica did just that.

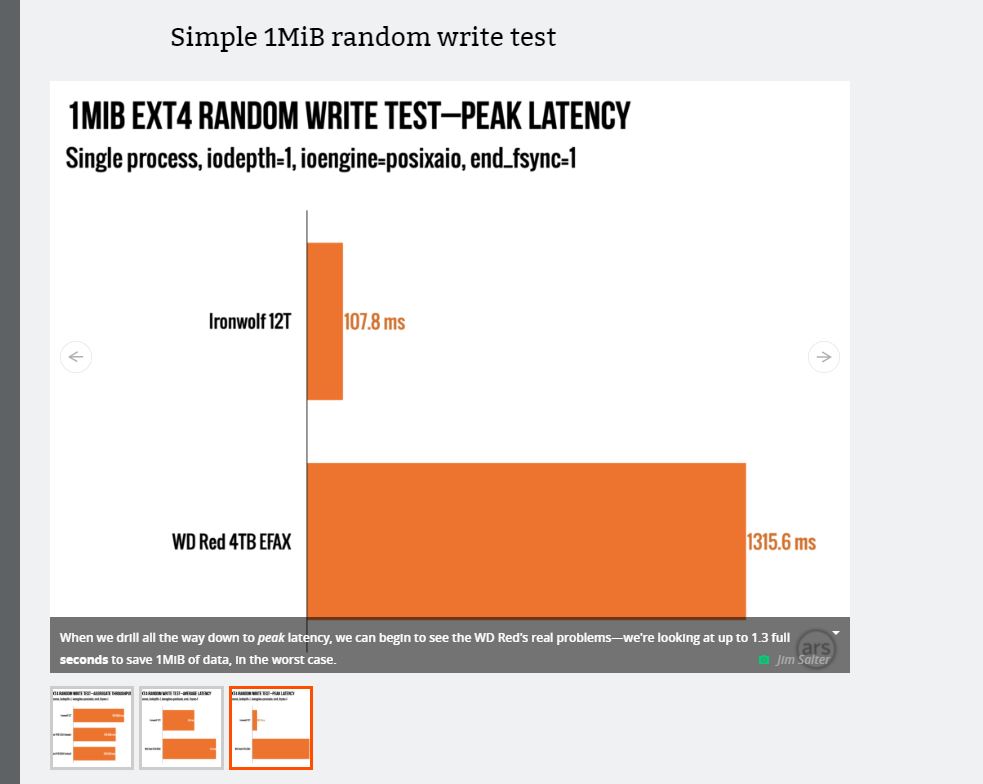

Jim tested a RAID 6 array using 12TB drives partitioned to 4TB to see rebuild times and various performance angles with the WD Red DM-SMR drives. He certainly found a difference, but in these tests he did not see the dramatic difference we saw on the ZFS side.

Some of the charts in the article do not look too bad, but when you click through to the next-level charts, some start to look not great. Jim mentions them in the article, but if you are skimming, you may miss this. Our advice: read Jim’s article thoroughly because he explains some less favorable findings that are not highlighted via charts in the default page loads.

In this example, one can see the first (default) thumbnail. If you are skimming the article, we suggest at least clicking on the other charts in these blocks because there are some that paint the WD Red DM-SMR drives in a less favorable light. To be clear, Jim discusses these in his text, so it is not that they are hidden. They can just be hard to see when scrolling through and skimming.

Jim also profiled a ZFS rebuild more similar to what we did. He got similar results. Combined with what we have heard from ZFS NAS companies and from users, this is a fairly clear story that ZFS and DM-SMR are not a good match. We are going to talk more about that later, as well as some stories of real users on non-ZFS platforms.

Frankly, I think it is great that Ars did this testing so there is more data out there. From experience, this takes a lot of work to produce. Please check out that Ars Technica article here. We may have differing views of the impact; however, I always encourage our readers to get more data to make better decisions for themselves.

As a quick note here, some folks have pointed to the “NASCompares” testing. Something I learned only in the last few days (via a major NAS vendor) is that the NASCompares folks are not an independent site like Ars or STH. Instead, according to the NAS vendor, they are affiliated with a NAS reseller who may have sold NAS units using these drives. While those results are meaningful, we are more focused on independent site results along with user experiences for this article. We encourage our readers to get any information they can, but also filter based on the context of the testing. Our testing was done without vendor support. We were simply interested for ourselves, as consumers of the drives, and for our readers. This is why we used ZFS, as it is very common both for us and for our readers.

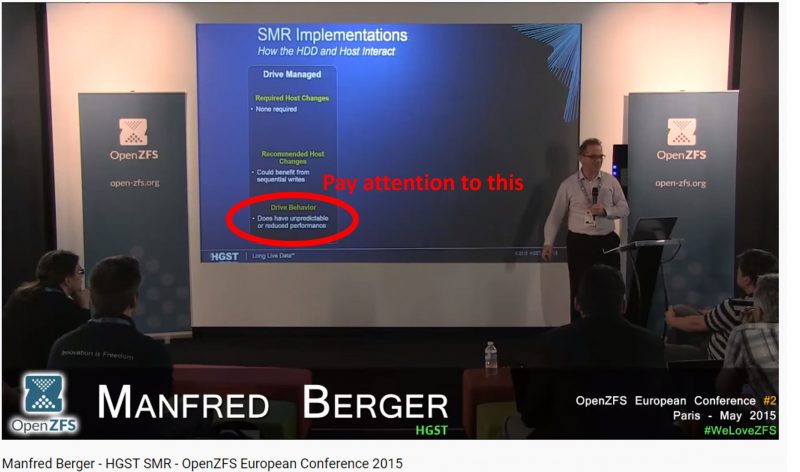

WD-HGST Said DM-SMR Drives Are Not Suitable at an OpenZFS Conference in 2015

HGST Engineer Manfred Berger, speaking at the OpenZFS European Conference in 2015, gave an awesome overview of SMR technology, what it is, and why we need it. You can see the video here. He did a great job explaining why the industry is moving to SMR for hard drives since it increases storage density and the amount of storage one can get per dollar. It is one of the better talks I have seen on the technical and practical reasons behind an SMR transition. This is a great video worth watching.

Towards the middle of the video (timestamp here), the discussion turns to how HGST sees DM-SMR as not a good fit because of performance issues.

Manfred discusses with the ZFS folks the development efforts needed to support SMR, which are significant for ZFS. Later in the video, he discusses with the OpenZFS developers just how much work it would take to make SMR drives work with ZFS (mostly focusing on host-managed SMR). You can see that discussion during the Q&A starting around here.

You may ask why we are talking about this excellent presentation. It turns out Manfred works at HGST, a Western Digital company. Again, given his presentation at OpenZFS in 2015, this is a knowledgeable gentleman. From that video alone, he shows an ability to articulate storage concepts that any storage company would be better for having on its team. Of note, he is not on the WD Red team per his LinkedIn profile, but HGST and WD have been active in OpenZFS. This is just an example.

In essence, we have a WD employee saying five years ago that DM-SMR is no good. He is saying there are performance issues. He engaged in a discussion on the implications for ZFS. Of course, that may not represent current thinking since everyone is entitled to change opinions as they get more information, but the idea that WD-HGST had no idea that ZFS may be an option, and that people may see performance issues over time, is seemingly far-fetched at this point. This is what we saw in our testing data, so it seems what we found is what would be expected.

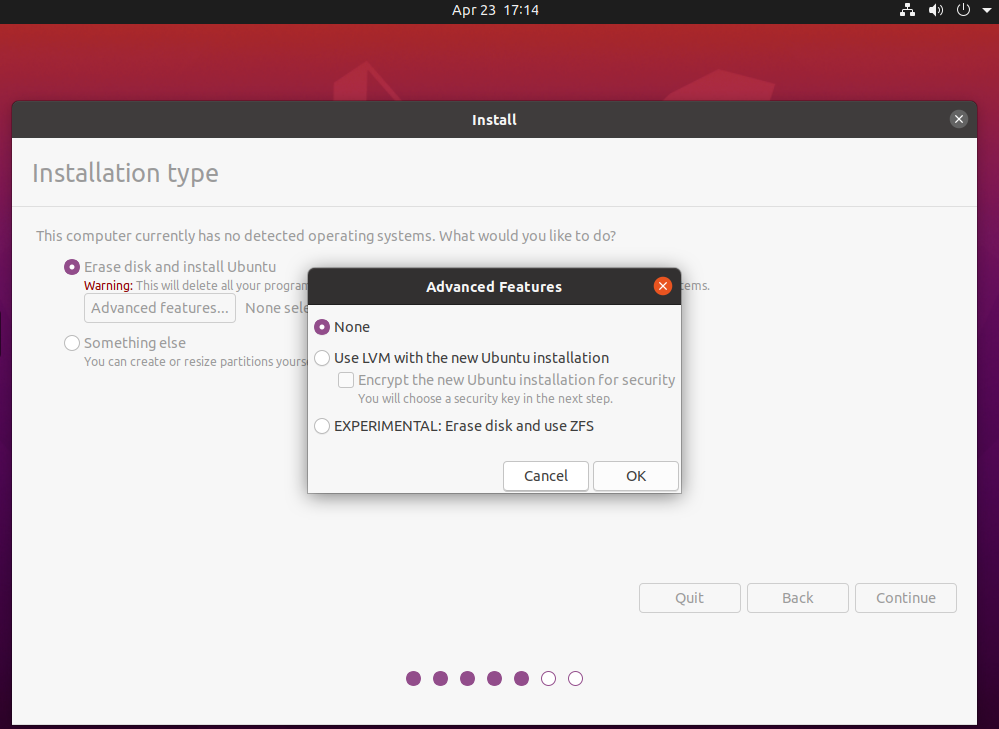

ZFS is not just FreeNAS, TrueNAS, or Oracle/ Sun. Instead, it is in products as simple as Ubuntu as a desktop installation option.

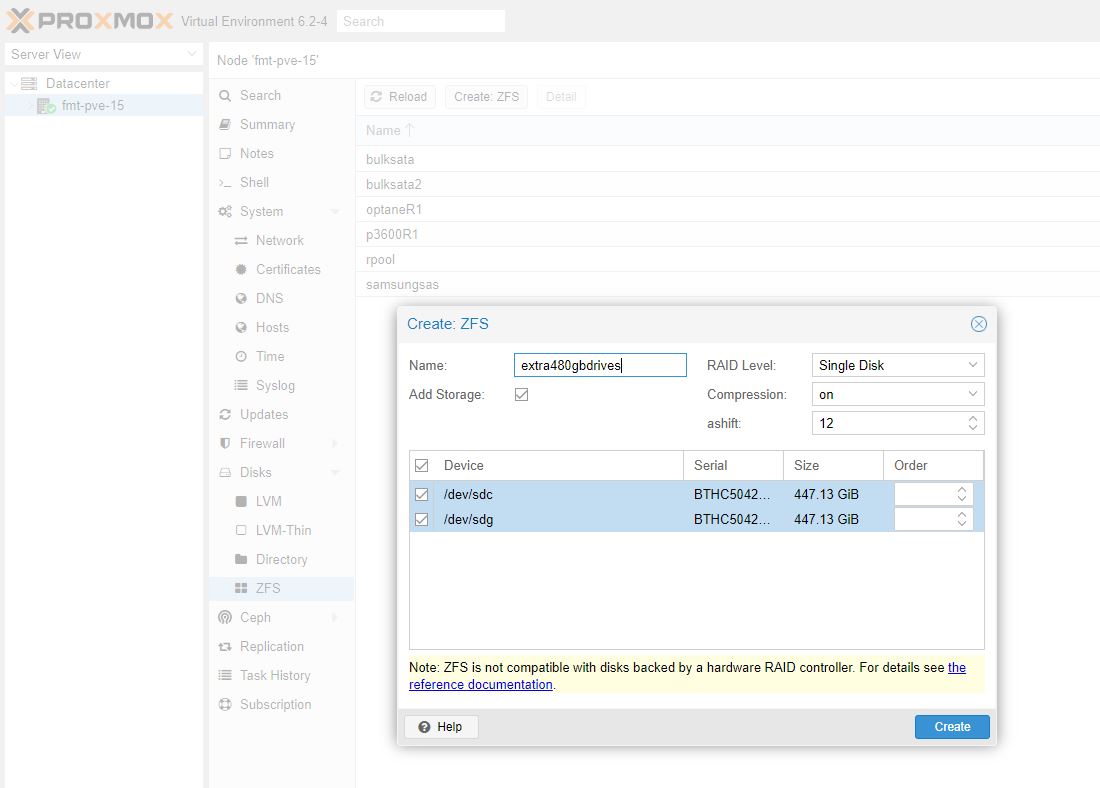

We see it in products such as Proxmox VE, and we use ZFS all over the STH hosting cluster from our backup NAS to web hosting nodes.

Other vendors are getting in on this too. There are a number of smaller players that now use ZFS for their NAS systems. QNAP has a line dedicated to ZFS and is increasingly looking toward it. In our recent “SwitchNAServer” or QNAP QGD-1600P Review, we were greeted by a QTS hero ZFS integration announcement.

It may not be the most popular, but it is growing in popularity, even among major Linux distributions and traditional SMB NAS vendors.

The Problem Goes Beyond ZFS Into Other NASes

After publishing our test results and video, as well as our original Surreptitiously Swapping SMR into Hard Drive Lines Must Stop piece, we had several readers and folks who watched the previous video reach out to share their experiences. One that I wanted to highlight was a gentleman by the name of Rob Janssen from the Netherlands.

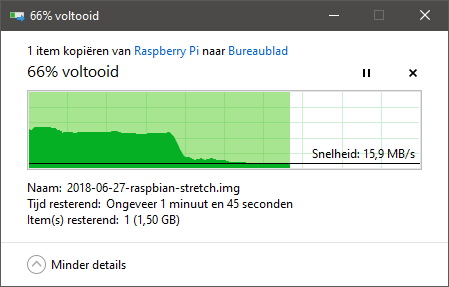

Rob reached out via e-mail and was extremely open and helpful sharing his experiences of using the WD Red SMR drives in his new Synology NAS. Rob did a great job in his personal blog (note currently HTTP) documenting his experiences with the WD Red DM-SMR drives in his Synology NAS. He talked about how he, like many users, reflexively purchased WD Red because he thought they were the same high-quality drives we have had for generations. He also discussed how after installing his drives, he saw some less than great performance. Here is one example:

Even more than that, he saw strange behavior such as the NAS array working overtime to shuffle data around on the drives even when he would expect it to be idle.

Ultimately, WD did the right thing and exchanged his WD Red DM-SMR 6TB drives for WD Red Pro drives. That is a good hint that if you have these drives and are seeing poor performance, you may want to request a swap.

ZFS Issues Surfaced in Mid-2019

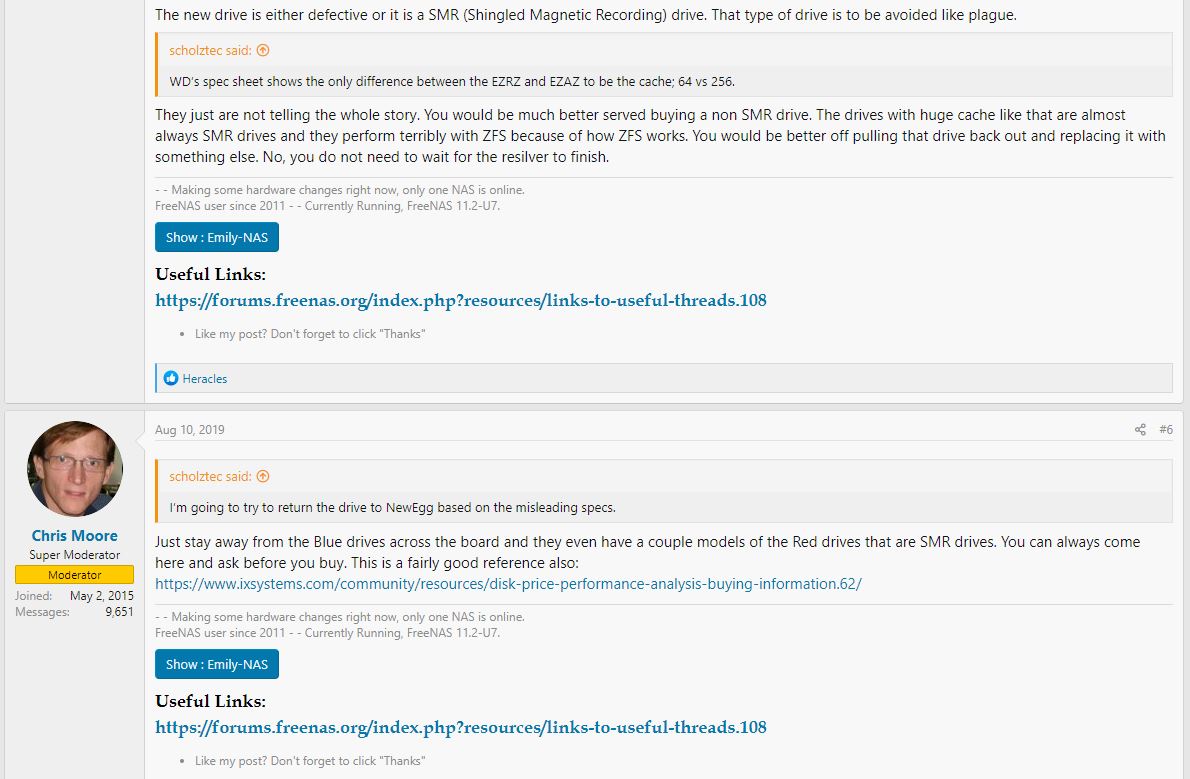

Rob was one of a number of folks who reached out about non-ZFS systems, but there were other sources pointing to issues with DM-SMR and ZFS. Chris Moore, Super Moderator at the iXsystems forums, even reached out through a YouTube comment to say that the issue has been thoroughly discussed on the iXsystems FreeNAS forums.

We double-checked, and by specifically searching, we confirmed there were threads in June-August 2019 where the moderators on iXsystems’ own forums were advising that the WD Red DM-SMR drives were no good (also before they shipped in FreeNAS systems in September 2019).

One of the hard things is that this data, spread across personal blogs and dispersed in forums, is very difficult to corroborate and aggregate. We have a collection of user blogs (Rob’s above, as well as others), and even posts in impacted vendors’ forums that were not being seen by the general tech community. We had not even seen them for months due to the nature of the issue. That is a big reason consumers rely upon NAS drive vendors, as well as NAS vendors, to test and certify drives.

Next, we are going to talk about how all of this discussion could be missed, and try getting some insight into what WD is doing about the situation.

Well fugglensaps. If you watch that OpenZFS vid that’s damning.

Thank you, again, for taking a stance here!

And just as your previous article on the subject, it is neutral, balanced, insightful. But also on point, clear and just great tech journalism.

On the topic: I hope WD will change course. No one could have imagined that they would be able to hurt their own brand image so much only a year ago.

But they did. And without changing course, they are in danger of totally losing faith in the NAS space.

I am not sure that they realize this. To me it seemed from the start that they were trying to wait “till this news blows over”.

Only that it won’t. People buying NAS drives are in the huge majority quite informed, and most of them will now know of this mess. And like me, either be happy to have bought Seagate, or feel so badly burned they will never touch WD again, short of a total change in course.

WD: Accept this won’t go away. Clearly label SMR drives as such; Keep all NAS drives CMR/PMR; Communicate openly the drawbacks for SMR in all channels, advertising, marketing, etc; And replace ALL NAS-SMR drives with CMR when customers who were burned by this request it.

This may not fix the damage done, but without it, the damage will only grow exponentially.

You’ve got WD dead to rights with this. Nice work.

Maybe it’s just me but I appreciate you look for the best in people. It’s refreshing for online. I’d say it’s turning my head. Now I’m thinking what if they aren’t just a series of misses, assumption, and luck. You could’ve gone way harder on them the more I’m stewing on it.

What’s funny is if you buy WD PR4100 or Ex4100 they use FreeNAS for it.

Synology may not use ZFS, but their support for btrfs may also expose them to similar issues with SMR drives — hence dropping support for them. I couldn’t tell if Rob’s issues with RAID 6 involved btrfs or just ext4 and mdraid.

I wondered the same thing re: btrfs vs ext4. My tests for Ars Technica were dead simple ext4 on mdraid6, but a Synology uses btrfs, LVM, and mdraid.

It’s possible that my ext4 tests missed a pathological interaction between either btrfs and SMR or LVM and SMR.

Jim Salter – first off, let me say again, great work.

We have had a lot of requests for different file systems/ storage arrays to be tested. As folks hopefully can appreciate from this article, testing all of them is a huge challenge for us and Jim/ Ars. We can help give insights and data points, but it is testing that vendors are being paid to do via the premiums in their products.

Great thanks for your information. It seems like WD is getting greedy. You have to clearly state what type of technology are using in your drive, not just hide it without clearly state that in the spec. It’s so dishonest to do business like this. Shame on you. WD. Time to say goodbye to WD including my customer till you clearly state which drive is using smr clearly with different pricing.

I see there in the screenshot from Synology’s drive compat list that the WD Red SMR drives do not have vibration sensors. I remember distinctively that WD Greens, as well as desktop HDDs from other manufacturers, had at least one or two vibration sensors on their PCB. Is this yet another way of cutting corners by WD? (Those vibration sensors do not seem cheap; looking at bulk pricing at Digikey, for example the Murata PKGS-00GXP1-R comes at USD 0.466 a pop for 30K units)

Anyways, thanks for the follow-up on this topic!

I agree with Steven, above.

I’ve been managing enterprise storage since c. 2000 and WD drives have by far been the most prone to failure, especially when used in RAID. Some of the big players that make their drive reliability data available do not bear out my experience on a brand-wide scale, but I would never buy another WD drive.

HGST improved WD’s hardware and engineering (opinion) but the company’s very long history of denial of drive issues (fact) has permanently steered me clear of their products. Your mileage may vary.

I’ve got about two years in to Seagate Ironwolf drives on a 108TB box with zero failures and super-low failure predictors (read/write error counts, bad sector counts). Two years? Time to swap them out and consolidate, but it’ll be on Seagate drives again.

Funny…. that video was one of the things I emphasized when I brought this whole stinking mess to the attention of tech media and it’s _WHY_ Chris Mellor ran with the original stories

There have been a bunch of wannabe reporters jumping on the bandwagon and failing to attribute sources or bother doing their homework since this hit Blocks and Files (the Register) but it was all published there first. Chris and I spent about a month going over various bits (including lots of forums posts containing warnings about the drives) in order to work out when they actually first started hitting channels and that’s when we discovered that DM-SMR was so widespread and had been around in small drives for at least 3 years.

As for why WD are staying quiet: what they’ve already said has been used against them in court and I explicitly warned them that unless they come utterly clean, whatever they say will end up as court evidence – plus they’re clearly aware they’re being investigated for Sherman act violations.

Many sites would’ve just bashed WD, but you gave them the benefit of the doubt. That’s quite remarkable.

Hanlon’s razor, isn’t it?

It is not only a ZFS issue. SMR drives kill Ceph too!

The rot at WD goes back further than the SMR debacle. I was very disappointed recently when I replaced some older 8TB WD red drives with some newer ones to find the newer ones run a fair bit hotter. Turns out the old ones were helium-filled. It just seems like another bait-n-switch. Build up a brand and good reputation for a product line – then over time cheapen it yet keep the prices the same, and trade off the reputation and cash in.

Eventually though, consumers wake up to this.

The sad part is there is just not enough competition in the HDD space. It seems almost like a two horse race now. It’s almost like these two vendors feel like they can dictate terms and do what they please with no consequences.

Re: Synology & BTRFS

Even something as simple as running Extended SMART Tests on a Synology will show a significant difference in my experience. I have an 8×8 Synology 1815+ with 3 WD80EMAZ CMR drives and 5 Seagate ST8000AS0002 SMR drives, and the WD CMR drives will finish something as simple as that Extended SMART Test in like 10-20% of the time it takes the Seagate SMR drives. The SMR drives take literal days to finish.

FWIW, originally my 8×8 was all Seagate SMRs, but I’ve slowly replaced them with the WD drives as they’ve failed. Luckily for me it turned out that the WD 8tb drives were CMR and so I wasn’t replacing SMR with SMR. The rebuild time for a failed drive on my original 8×8 SMR SHR RAID was over a week.

I can see the protests (and riots) now:

NAS Drives Matter!

LOL WHY DO YOU DO BOTH VIDEO AND TEXT?

I personally have 3 2TB WD Red drives (model WD20EFRX-68EUZN0). I had originally wanted to use the drives with an HBA card in a FreeNAS build, but I did not because my hardware was too lackluster to run it (not enough memory, and an older LGA 775 system). So I put them in RAID and used them in Windows for my network storage. And since I did that, I have been having nothing but problems: drives dropping from the array, slow writes. Eventually I figured the HBA card was bad, so I ditched it and went with the Windows Storage Spaces solution. Still the problems have persisted. So I sought to add more storage and added a 4TB Seagate Ironwolf drive. That kept getting flagged by Windows Storage Spaces as being problematic. So I removed the drive from the storage space and have had no more issues. But between these WD drives, these recent issues, and the news of WD doing this with their NAS drives, it is clear I will not purchase another WD product.

Patrick,

Been a fan of the site for years, love the content, love the quality.

Please, calm down on the article and video length. I hate to be all ADHD but surely this and the video could’ve been trimmed a little.

Rather than watch your video, I opted not to at all, it was just that long.

Please consider keeping it a bit shorter, it’s painfully long, your audience are generally pretty clued up, making the video for bottom of the barrel and padding for 30 minutes does nothing for your great content

Just stumbled across this after having issues with what I now know to be WD Red 4TB EFAX drives.

Western Digital won’t help me as they claim they are OEM drives (I bought them from Amazon) and Amazon won’t send replacements, only refund me, which leaves me in the dilemma of having to be without any drives whilst I wait for the refund so I can order more. (Yes, I have backups!)

So beware, if you are buying from Amazon, or any other place, make sure you get the boxed retail version and not the OEM version…

I just read this article after running into exactly these issues. But in my case I bought the new WD Red Plus (WD**EFZX) version, which should be the same as the old WD Red (WD**EFRX). I bought one drive from Amazon and one from Newegg. Both failed with resilvering on ZFS. Faulted write errors, the kind you see from SMR drives. I have a sneaky feeling that, due to supply chain issues, WD is branding EFAX drives as EFZX drives, giving them the correct serial and label. I returned all the drives I bought; I was going to use them to slowly replace older EFRX drives. I guess I will have to go with Seagate Ironwolf CMR drives. Can’t trust WD anymore. Two drives from two suppliers both fail with the same error, yet pass long SMART testing.