Recently Backblaze posted some very interesting data from its population of hard drives. For those not familiar with Backblaze, the company provides unlimited storage for $5/month (or $95 for two years). Backblaze is interesting because, like Google, the company primarily uses consumer drives. In fact, Backblaze even uses ones removed from external drives. Prior to the Thailand flooding that changed the course of hard drive pricing, we first looked at a similar practice in “Internal or External Hard Drives: Are Warranties Worth the Cost?” Unlike studies based on smaller data sets, the Backblaze platform now holds over 75PB of data, or about 1/4 as much as Facebook (albeit with very different use cases and architectures). All of that data requires well over 25,000 hard drives, similar to what consumers buy on Amazon or Newegg. This is certainly a large enough dataset to start seeing patterns in storage reliability, at least in Backblaze Pods.

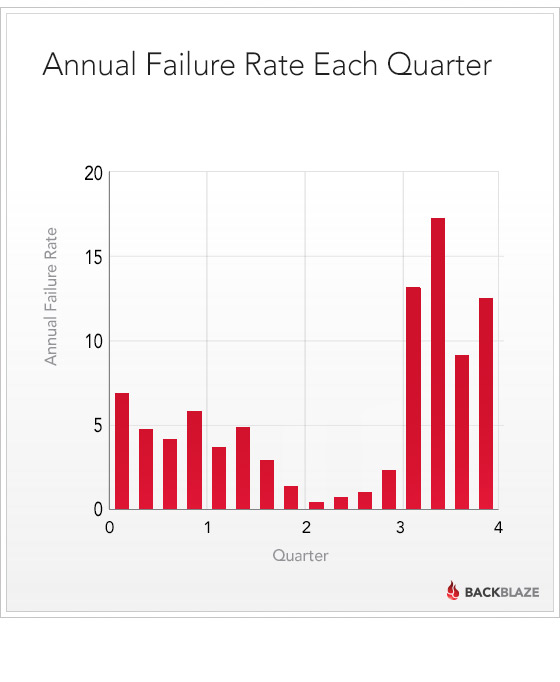

One post on the Backblaze Blog (certainly worth bookmarking) is called “How long do hard drives last?” Backblaze found something interesting: drive failures roughly follow the standard bathtub failure curve model.

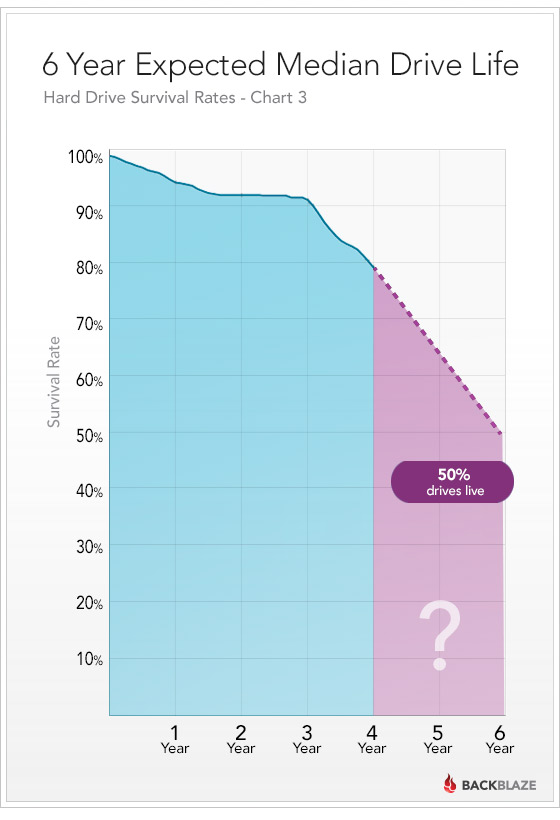

As one can see, failures tend to increase significantly after three years. Typical bathtub curves have three phases: early “infant mortality” failures, a period with a low and roughly constant failure rate, and then wear-out failures from old age. Old age certainly takes a toll on hard drives. One can also see that between the second and third anniversary of putting a drive in service, drives tend to be in the valley between the early and late failure factors. Backblaze even extrapolated its failure data over a projected six-year timeline.
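To make the bathtub idea concrete, here is a minimal Python sketch of how per-phase annual failure rates compound into a surviving fraction over six years. The phase boundaries and rates in `PHASES` are illustrative placeholders, not Backblaze's published figures, and the sketch assumes an annual failure rate `r` means a drive survives a fraction `dt` of a year with probability `(1 - r) ** dt`.

```python
# Hypothetical annual failure rates per life phase (NOT Backblaze's numbers).
# Each entry is (phase_end_in_years, annual_failure_rate).
PHASES = [
    (1.5, 0.05),  # early "infant mortality" phase
    (3.0, 0.02),  # low, roughly constant middle phase
    (6.0, 0.12),  # wear-out phase
]


def hazard(age_years):
    """Return the assumed annual failure rate for a drive of this age."""
    for phase_end, rate in PHASES:
        if age_years < phase_end:
            return rate
    return PHASES[-1][1]  # past the last boundary, keep the wear-out rate


def surviving_fraction(age_years, step=0.25):
    """Fraction of the original drives still alive at age_years."""
    alive = 1.0
    t = 0.0
    while t < age_years - 1e-9:
        dt = min(step, age_years - t)
        # Survival over dt years at the rate that applies at age t.
        alive *= (1.0 - hazard(t)) ** dt
        t += dt
    return alive


if __name__ == "__main__":
    for year in range(0, 7):
        print(f"year {year}: {surviving_fraction(year):.1%} still running")
```

With placeholder rates shaped like this, the surviving fraction drops slowly through the middle years and much faster once the wear-out phase begins, which is the pattern the chart describes.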

The big message is that if you have a drive approaching three full years old (and outside many warranty periods), it is likely time to find replacements. Backblaze is also basing this on a decent-sized data set. In the real world, storage reliability depends on many factors, including the lifespan of power supplies, disk controllers, backplanes, and so on. That makes drive reliability an important piece of the puzzle but not the only piece. See our Storage RAID Reliability calculator (MTTDL model) for an idea of what this may look like across RAID sets.
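For a rough sense of how single-drive reliability feeds into a RAID set, the sketch below uses the common textbook MTTDL (mean time to data loss) approximations. It is not necessarily the model our calculator uses. The function names and the example MTTF and rebuild-time values are assumptions for illustration, and the formulas assume independent failures with a constant failure rate, which the bathtub curve shows is itself a simplification.

```python
HOURS_PER_YEAR = 8760


def mttdl_raid0(n_drives, mttf_hours):
    """No redundancy: the first drive failure loses data."""
    return mttf_hours / n_drives


def mttdl_raid5(n_drives, mttf_hours, mttr_hours):
    """Single parity: data is lost if a second drive fails during a rebuild."""
    return mttf_hours ** 2 / (n_drives * (n_drives - 1) * mttr_hours)


def mttdl_raid6(n_drives, mttf_hours, mttr_hours):
    """Double parity: data is lost if a third drive fails during rebuilds."""
    return mttf_hours ** 3 / (
        n_drives * (n_drives - 1) * (n_drives - 2) * mttr_hours ** 2
    )


if __name__ == "__main__":
    # Example inputs are assumptions, not measured values.
    n, mttf, mttr = 8, 1_000_000, 24
    for name, hours in [
        ("RAID 0", mttdl_raid0(n, mttf)),
        ("RAID 5", mttdl_raid5(n, mttf, mttr)),
        ("RAID 6", mttdl_raid6(n, mttf, mttr)),
    ]:
        print(f"{name}: about {hours / HOURS_PER_YEAR:,.0f} years MTTDL")
```

Because drive MTTF enters squared or cubed, a lower real-world MTTF for aging drives shrinks these numbers quickly, and the model ignores the power supplies, controllers, and backplanes mentioned above.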

In the lab we have had only about 100 drives running in various enclosures over the past few years, and our data seems to mirror what Backblaze found. The sole exception was the WD Green 2TB EARS drives, which had a very high failure rate a few years ago, with 8/8 drives being replaced under RMA within two quarters. Of the replacement drives, only two have failed over the following few years. Further, of the 25 Seagate 7200.11 drives, which were considered problematic at the time, one arrived DOA and one failed early. This is by no means conclusive, but our suspicion is that the WD Green 2TB drives were mishandled during shipment, which caused the extremely high failure rate. Perhaps the most interesting thing here is that more storage is going solid state, meaning fewer mechanical components that can fail.

Overall, Backblaze has a great read in “How long do hard drives last?” Certainly check it out if you have not already.

Are the Backblaze drives used 24/7, and never shut down? So, if you use the disks seldom, you get better life span?

I think this is just an indication of drive life span. You must remember that a drive working in this environment is doing 24/7/365 work. It is never resting. So for a home system or home NAS, the work load is in another world.

I have 6 SATA drives in a NAS from 2007. All had over 40,000 powered-on hours when I got them (from an enterprise backup solution). They have been on 24/7 for the last 1.5 years and will soon pass the 50,000-hour mark.

They do get hot in the summertime… easily passing 50 degrees Celsius.

Still, only one died so far.