Heading to the server-filled meeting rooms and exhibit halls across Taipei for Computex 2016, I had a few hypotheses I wanted to test. One was that 10GbE networking would be ubiquitous. What I found in terms of server networking was slightly surprising.

10Gb Ethernet Proliferation: We are here

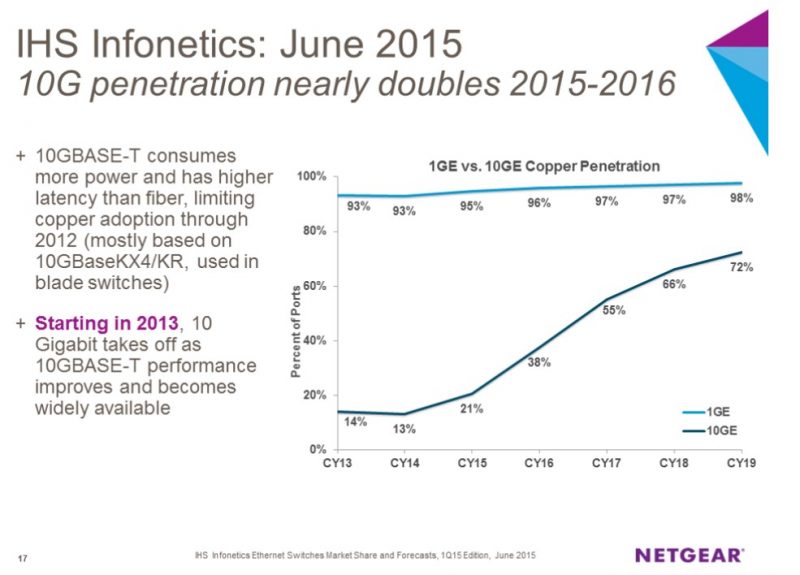

First, the switches. I tallied 22 different vendors selling 10GbE-equipped switches. Frankly, I did not have time to visit every vendor and see every booth; however, even small Chinese ODMs had working 10GbE (usually SFP+) switches that they could fire up and show you. We covered Netgear’s prediction that 2016/2017 would be the year of wide 10Gb Ethernet adoption in the SMB space. One indicator I was looking for was a high number of smaller ODMs with their own switches ready to brand. Every vendor I tallied had a 10GbE switch with at least 8 ports.

On the server side, the picture is still slightly more mixed than I would have expected. It is no secret that we are nearing the end of server platforms that do not support 10GbE out of the box. Without going into embargoed details, the roadmaps we have seen show 10GbE adoption on new server platforms growing asymptotically towards 100%. At the end of the day, though, 1GbE still costs significantly less. It uses less power, and even the cabling costs less. 1GbE switch ports are approaching extreme commoditization at under $5/port, while 10GbE is still running around $100/port. It is little wonder we have not transitioned to 10GbE yet. We saw plenty of upcoming designs, due in Q4 2016 and later, that still incorporate chips like the Intel i350 for multi-port 1GbE networking.
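To put the port pricing above in perspective, here is a minimal back-of-the-envelope sketch. It assumes exactly $5 per 1GbE port and $100 per 10GbE port (the rough figures quoted above, not vendor quotes), and the 40-server, two-links-per-server rack is a hypothetical example chosen only for illustration.

```python
# Rough switch-port cost comparison using the approximate 2016 prices
# quoted above: ~$5 per 1GbE port and ~$100 per 10GbE port.
# ASSUMPTION: these ballpark figures are treated as exact for the math.

PORT_PRICES_USD = {
    # link speed in Gbps -> approximate price per switch port in USD
    1: 5.0,
    10: 100.0,
}


def cost_per_gbps(speed_gbps: int, price_per_port: float) -> float:
    """Return switch-port cost per Gbps of link speed."""
    return price_per_port / speed_gbps


def rack_switch_cost(ports: int, price_per_port: float) -> float:
    """Return total switch-port cost for a given number of server links."""
    return ports * price_per_port


if __name__ == "__main__":
    for speed, price in PORT_PRICES_USD.items():
        print(f"{speed:>2}GbE: ${price:.2f}/port, "
              f"${cost_per_gbps(speed, price):.2f}/Gbps")
    # Hypothetical rack: 40 servers with two network links each.
    links = 40 * 2
    for speed, price in PORT_PRICES_USD.items():
        print(f"{links} x {speed}GbE ports: "
              f"${rack_switch_cost(links, price):,.0f}")
```

Per Gbps, 10GbE works out to only about twice the cost of 1GbE ($10 vs. $5), but per port it is 20 times more expensive ($8,000 vs. $400 for the hypothetical rack). For servers that never come close to saturating a 1GbE link, the per-port figure is the one that drives purchasing decisions.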

With all of that said, as switch port prices fall and server- and switch-side power consumption drops this year, 10GbE is going to be on every link that needs it. Frankly, if you are building a virtualization or hyper-converged infrastructure server and limiting yourself to 1GbE (e.g. Intel NUCs), that limitation will seem nonsensical by the end of 2016. Even the microserver-class designs we saw used 10GbE or multi-host adapters with at least 10GbE connectivity.

A new 19″ rackmount standard? OCP mezzanine card proliferation

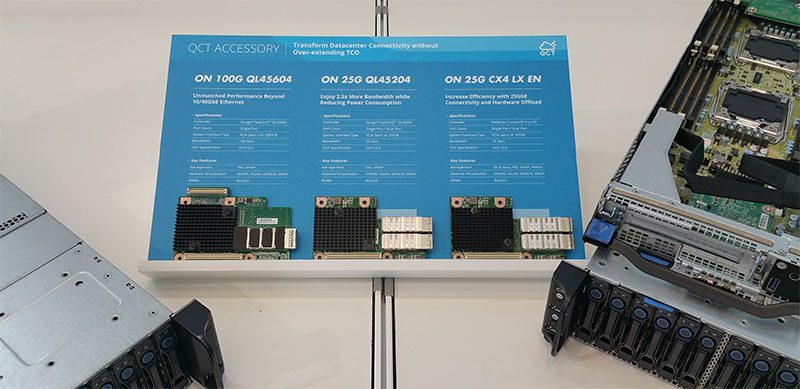

A trend we will see in 2016 is the continued adoption of OCP mezzanine cards, even outside OCP rack solutions. We have already shown solutions from QCT, ASUS, Tyan, Gigabyte and others that use OCP mezzanine cards in their server designs. Although we did not see much adoption of storage OCP mezzanine cards from vendors at the show, the networking side was completely different: we counted nine vendors with OCP mezzanine networking slots on their systems.

The takeaway is that server vendors are moving towards OCP mezzanine cards. We saw the slots on platforms ranging from traditional 1U/2U servers to storage platforms, multi-node compute and microserver platforms, and even mITX desktop server platforms. With so many vendors adopting the form factor, there will be pressure on vendors like Dell, HPE (outside of its CloudLine servers) and Supermicro to universally adopt a more standard mezzanine card form factor.

Adapter war winner: Mellanox

Mellanox absolutely dominated the Computex floor. We covered the ASRock Rack 3U16N server with Mellanox 50Gb adapters, but Mellanox ConnectX-4 cards were everywhere. We saw them displayed by vendors as varied as Synology, Supermicro, QCT, Gigabyte and a slew of smaller players. The displays were usually small, but if you were looking for it, the dual-port 25GbE PCIe 3.0 x8 adapter was shown by just about every major server vendor. Whoever set this up on the Mellanox end staged a silent coup at an event that Intel heavily sponsored. We did see adapters from other vendors sporadically, but they were often accompanied by a ConnectX-4 adapter.

What is driving this? The Mellanox ConnectX-4 Lx EN (MCX4121A-ACAT) card supports dual 25GbE (SFP28) links and sells for under $500, even in single-card quantities. Price-wise, that is about the same as an Intel XL710-QDA1 (a single-port QSFP+ 40GbE adapter), yet it gives the vendor the cachet of saying they offer 25GbE rather than 10GbE. Mellanox did a superb marketing job getting almost every vendor at Computex 2016 to tout 25GbE simultaneously. Even vendors such as Synology, which we would not normally associate with cutting-edge networking, had the cards prominently on display.
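A quick way to see why the dual 25GbE card is an easy sell is to compare aggregate bandwidth per dollar. This is a rough sketch that assumes both cards cost exactly $500; that round figure is an assumption drawn from "under $500" and "about the same" above, not a quoted price.

```python
# Aggregate-bandwidth comparison of the two adapters discussed above.
# ASSUMPTION: both cards are priced at $500, taking "under $500" and
# "about the same" from the text as a round figure.

from dataclasses import dataclass


@dataclass(frozen=True)
class Adapter:
    name: str
    ports: int
    gbps_per_port: int
    price_usd: float  # assumed price, see note above

    @property
    def aggregate_gbps(self) -> int:
        # Total line rate across all ports on the card.
        return self.ports * self.gbps_per_port

    @property
    def usd_per_gbps(self) -> float:
        # Assumed card price divided by aggregate line rate.
        return self.price_usd / self.aggregate_gbps


ADAPTERS = [
    Adapter("Mellanox ConnectX-4 Lx EN (MCX4121A-ACAT)", 2, 25, 500.0),
    Adapter("Intel XL710-QDA1", 1, 40, 500.0),
]

if __name__ == "__main__":
    for a in ADAPTERS:
        print(f"{a.name}: {a.aggregate_gbps} Gbps aggregate, "
              f"${a.usd_per_gbps:.2f}/Gbps")
```

Under that assumption, the Mellanox card offers 50 Gbps of aggregate line rate at $10.00/Gbps versus 40 Gbps at $12.50/Gbps for the Intel card. Aggregate is not the same as per-link speed, though: a single 25GbE port is still slower than one 40GbE port, so the advantage applies to traffic spread across both links.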

We do not have 25GbE cards in the lab, but we hope to get our first 32-port 25/50/100GbE switches soon.