Early Performance Figures: Promising, But Incomplete

With NVIDIA’s ambitions to more directly compete in the data center space with Intel, AMD, and the other Arm vendors, NVIDIA is now also in a position where they need to promote the performance of their CPUs in comparison to the competition.

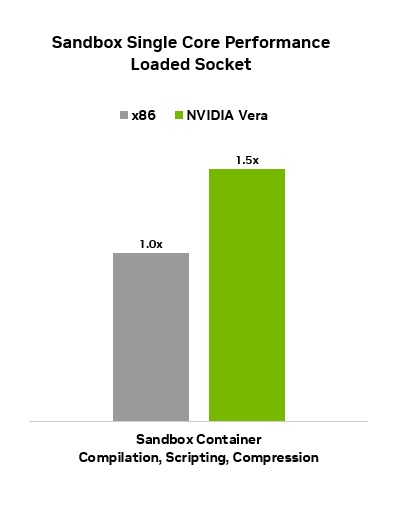

At a high level, NVIDIA is touting Vera as offering 1.5x the “agentic sandbox” performance of an unnamed rival x86 CPU. This is consistent with NVIDIA’s overall marketing pitch for Vera, which is that it is the ideal CPU companion for running numerous agentic AI tasks being spawned by the GPU (and LPU).

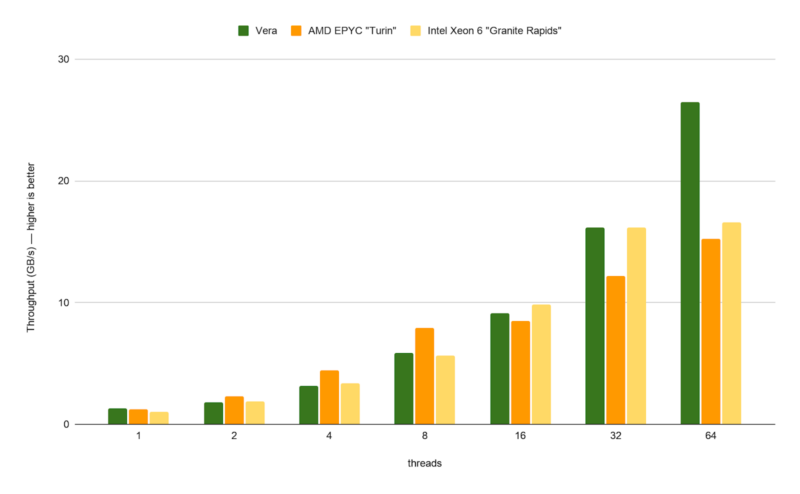

More interesting is that NVIDIA also gave Redpanda, a streaming data platform company, early access to Vera so it could benchmark the chip using its Redpanda Streaming platform. As a result, we also have some third-party(-ish) benchmarks of what Vera can do.

The results, as you would expect from an NVIDIA-blessed benchmark, are very favorable for Vera. Compared to both AMD EPYC 9005 (Turin) and Intel Xeon 6 (Granite Rapids) hardware, Vera is significantly ahead in long-tail latencies and SQL performance. Redpanda also provided another interesting data point: its measured inter-core communication throughput. Here Vera trails at low core counts but pulls well ahead by 64 cores, underscoring the advantages of NVIDIA's single side, single NUMA domain strategy.

Redpanda's benchmarks are far from comprehensive, but they are nonetheless an interesting first look at Vera. If Vera can put up significant data center workload wins once it starts shipping later this year, that would be a major feather in NVIDIA's cap after a decade and a half of CPU ambitions.

With that said, there's still a great deal unsaid about Vera. We do not have clockspeeds, power consumption, or, most importantly, pricing information for Vera. A great many details beyond raw performance go into making a product competitive in the cutthroat data center market, and how well NVIDIA handles these other factors is going to have a significant impact on how well Vera does outside of NVIDIA's own Vera Rubin racks.

Expanded Ambitions: Vera CPU Racks, Vera Servers, & Vera in HGX NVL8

Speaking of Vera Rubin racks, one of the cornerstones of NVIDIA's expanded ambitions with the Vera CPU is that it is not just for NVIDIA's standard NVL72 racks. For the first time, NVIDIA is going to offer dedicated Vera CPU racks for Vera Rubin ecosystem customers, allowing them to scale up just the CPU portion of their operations.

Plainly named the "NVIDIA Vera CPU rack," these racks will pack 256 Vera CPUs into a single rack of 1U compute trays, with up to 4 nodes (8 CPUs) per tray. A complete rack in turn will contain up to 400TB of LPDDR5X memory, along with a hefty dose of additional NVIDIA technologies, including NVIDIA's BlueField-4 DPUs. Meanwhile, communication between trays in a rack will be provided by NVIDIA's Spectrum-X Ethernet controllers; NVLink is off the table here, as Vera only has an NVLink C2C PHY that's suitable for talking to adjacent chips.
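To put those rack figures in perspective, here is a quick back-of-the-envelope breakdown. This is only a sketch built from the numbers quoted above; it assumes a fully populated rack and that the "up to 400TB" of memory is split evenly across all 256 CPUs, neither of which NVIDIA has confirmed.

```python
# Back-of-the-envelope math for the NVIDIA Vera CPU rack.
# Inputs are the "up to" figures quoted in the text; even memory
# distribution and full population are assumptions, not NVIDIA specs.
CPUS_PER_RACK = 256
NODES_PER_TRAY = 4
CPUS_PER_TRAY = 8        # 4 nodes x 2 CPUs per node
RACK_MEMORY_TB = 400     # "up to" figure for a complete rack


def rack_breakdown(cpus=CPUS_PER_RACK, cpus_per_tray=CPUS_PER_TRAY,
                   nodes_per_tray=NODES_PER_TRAY, memory_tb=RACK_MEMORY_TB):
    """Derive tray count, CPUs per node, and memory per CPU."""
    trays = cpus // cpus_per_tray
    cpus_per_node = cpus_per_tray // nodes_per_tray
    memory_per_cpu_tb = memory_tb / cpus
    return {
        "trays": trays,
        "cpus_per_node": cpus_per_node,
        "memory_per_cpu_tb": memory_per_cpu_tb,
    }


if __name__ == "__main__":
    b = rack_breakdown()
    print(f"{b['trays']} trays, {b['cpus_per_node']} CPUs per node")
    print(f"~{b['memory_per_cpu_tb']:.2f} TB of LPDDR5X per CPU")
```

Under those assumptions, a full rack works out to 32 compute trays with 2 CPUs per node, and roughly 1.5TB of memory per Vera CPU.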

NVIDIA has not disclosed the power requirements for a Vera CPU rack, but expect it to be a hot item. The full 256 CPU configuration will require liquid cooling, just as with NVIDIA’s other Vera Rubin family racks. Fittingly, the Vera CPU rack is being designed around the same MGX modular rack architecture, so it will fit right in with the rest of the family.

Otherwise, for customers who need something other than a whole rack of Vera, NVIDIA's other major expansion in this generation is that the company is working closely with its cadre of OEM/ODM partners to offer single and dual socket Vera CPU servers. Dell, HPE, Supermicro, Lenovo, and others are all stepping up as participating partners, underscoring the amount of OEM interest and investment NVIDIA has garnered from these server vendors.

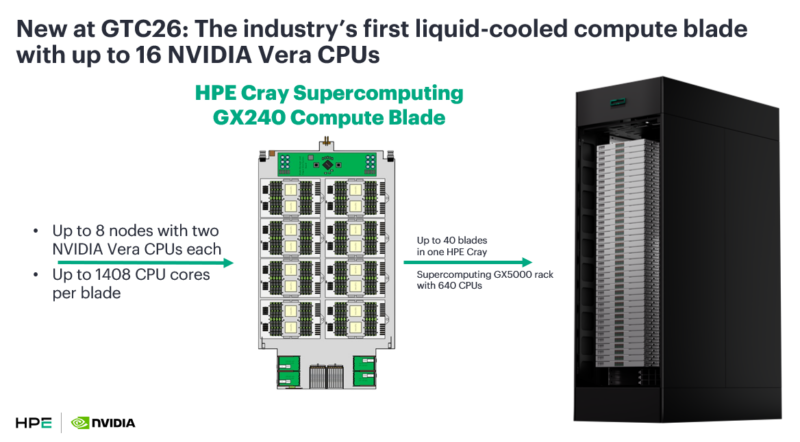

Individual Vera CPU servers are designed to fill the gap both above and below a whole 256 CPU rack of Vera trays, offering server operators more flexibility in how they deploy NVIDIA's CPU. On the low end will be single and dual socket servers, with one or two Vera CPUs per tray/node respectively; these will be the backbone of the air-cooled options. On the other end of the spectrum, some of NVIDIA's partners, such as HPE, will be providing Vera CPU racks that are even denser. HPE plans to offer GX240 compute blades with up to 16 CPUs per blade, which in turn can go into the Supercomputing GX5000 compute rack for up to 640 Vera CPUs per rack.
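For a rough sense of how much denser HPE's configuration is, the sketch below compares it against NVIDIA's own Vera CPU rack. It uses only the "up to" CPU counts quoted in the text and assumes both racks are fully populated; it says nothing about power, cooling, or physical rack dimensions, which have not been disclosed.

```python
# Rough density comparison using only the "up to" CPU counts quoted above.
# Assumes fully populated racks; power and rack size are not accounted for.
HPE_CPUS_PER_RACK = 640      # HPE GX5000 rack, fully populated
HPE_CPUS_PER_BLADE = 16      # HPE GX240 blade
NVIDIA_CPUS_PER_RACK = 256   # NVIDIA Vera CPU rack


def density_comparison(hpe_rack=HPE_CPUS_PER_RACK,
                       hpe_blade=HPE_CPUS_PER_BLADE,
                       nvidia_rack=NVIDIA_CPUS_PER_RACK):
    """Return blade count for the HPE rack and its CPU ratio vs. NVIDIA's rack."""
    blades = hpe_rack // hpe_blade
    ratio = hpe_rack / nvidia_rack
    return blades, ratio


if __name__ == "__main__":
    blades, ratio = density_comparison()
    print(f"{blades} GX240 blades per rack, {ratio:.1f}x the CPUs of a Vera CPU rack")
```

By this simple count, a full GX5000 rack would hold 40 GX240 blades and 2.5x as many Vera CPUs as NVIDIA's own Vera CPU rack.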

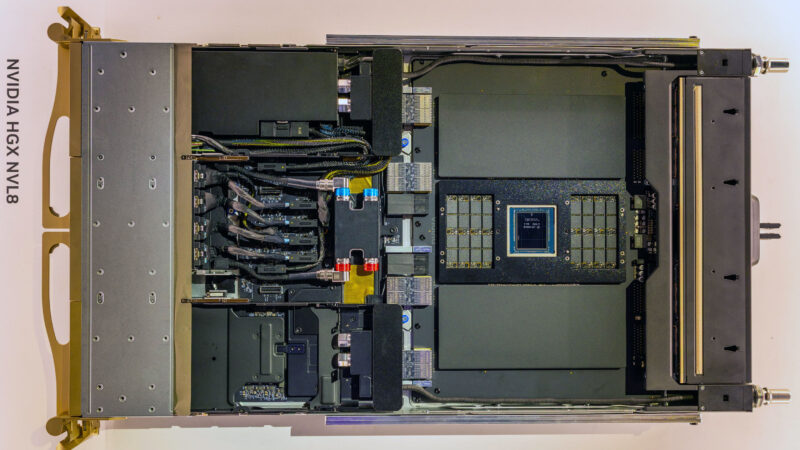

Finally, at the smallest end of the server scale, NVIDIA has confirmed that it and its partners are also going to offer versions of the HGX Rubin NVL8 tray with Vera CPUs. As a reminder, HGX systems are NVIDIA's traditional, PCIe-based systems with one or two CPUs wired up to 8 SXM form factor GPUs.

HGX is NVIDIA’s most flexible offering by using the smallest amount of NVIDIA’s proprietary interconnect technologies, and in previous generations it has been the backbone of partners’ x86-based offerings. The inclusion of Vera as a CPU option for the Vera Rubin generation is going to shake things up by offering an Arm host CPU for the first time. As a result, this will be a very interesting case where Vera is going to have to directly compete with chips from NVIDIA’s x86 rivals.

Final Words

With the initial announcement of its Vera CPU at CES 2026, and its reinforcement at GTC 2026, NVIDIA has made it clear that it has grand ambitions for Vera. As the company's first fully custom CPU design in almost a decade, and its first custom data center CPU design ever, Vera shows NVIDIA aiming higher and broader than ever before.

Whereas Grace was a cautious first foray into data center CPUs, the forthcoming release of Vera will be when NVIDIA takes its next step and actively starts trying to peel away data center market share from the likes of Intel and AMD. At the end of the day, Vera is still a specialized piece of kit and is not going to be a true general-purpose CPU in the same vein as AMD's and Intel's extensive and varied CPU lineups. But with so much CPU demand being fueled by AI compute needs right now, just supplying an even larger portion of the silicon that AI customers are using would be a significant win for NVIDIA. And all the better for the company if it can accomplish this while beating AMD and Intel in single-threaded benchmarks.

As with the rest of the Vera Rubin platform, NVIDIA will begin shipping Vera CPUs in the second half of this year, with the company’s partners expected to ramp up their Vera-based server offerings throughout the next year.

This is to Patrick: Can you please ask your new writers, as good as they are, to properly review and spell check. Here’s one example I picked up: NVIDA

This site has a reputation for excellent reviews and well written articles, many others and I would like to see it kept that way.

I forgot to add, the positive not to this, is that they’re hand writing their articles :-)

@Mick

Thank you for the feedback. That one is my bad.

These do get spell and grammar checked (and I am probably harder about that than anyone else here at STH). However MS Word does not check words that are written entirely in capital letters, as those are normally acronyms that wouldn’t be in a dictionary. Which means it didn’t flag that misspelling of NVIDIA. Normally, if I screw up NV’s name it’s as “NVIIDA”, so I didn’t catch that more novel misspelling.

Best Vera write-up I’ve seen so far. And good to see Travis Downs writing again on that RedPanda blog….but…that blog is not ideal. In particular, the lack of any specs on the parts tested. You know how wide the performance delta on Epyc and Xeon within the same generation are based on what sku was used (even if testing on the same number of cores). Yet, nothing was included on overall core count, TDP, etc. So is hard to draw any conclusions just yet. Even basic things like was this with or without Nvidia’s “SMT”.

@Mick The irony is that you have a spelling mistake in your second post…

“the positive not to this”, do you mean the positive note or the positive nod?

@Blue4130

Yeh I saw that right after I posted it, real foot in mouth moment.

It was indeed mean’t to be “…the positive note to this….”

In a world of AI Slop, this site is one of the last bastions. Half the time I google for a review of a product, I’ll click on a given link and what I’ll find is an AI generated review with no actual views, opinions or anything.

>In a world of AI Slop

@Mike, I hope you do realise Ryan Smith was the Chief Editor at Anandtech for 10+ years before it was shut down.

The spatial multithreading reminds me of AMD’s Bulldozer . The front end here is huge as compared to Bulldozer so the backend should not be starved. but the performance gap between a single beefy core and split core is big enough to confuse most schedulers.

The latest linux patch from Nvidia suggest that they are seeing issues similar to bulldozer.