File this under “things that have us intrigued.” The MikroTik CCR2004-1G-2XS-PCIe combines an Arm CPU with dual 25GbE SFP28 cages to make a PCIe card that can function as both a router and a network adapter.

MikroTik CCR2004-1G-2XS-PCIe Router on a PCIe Card

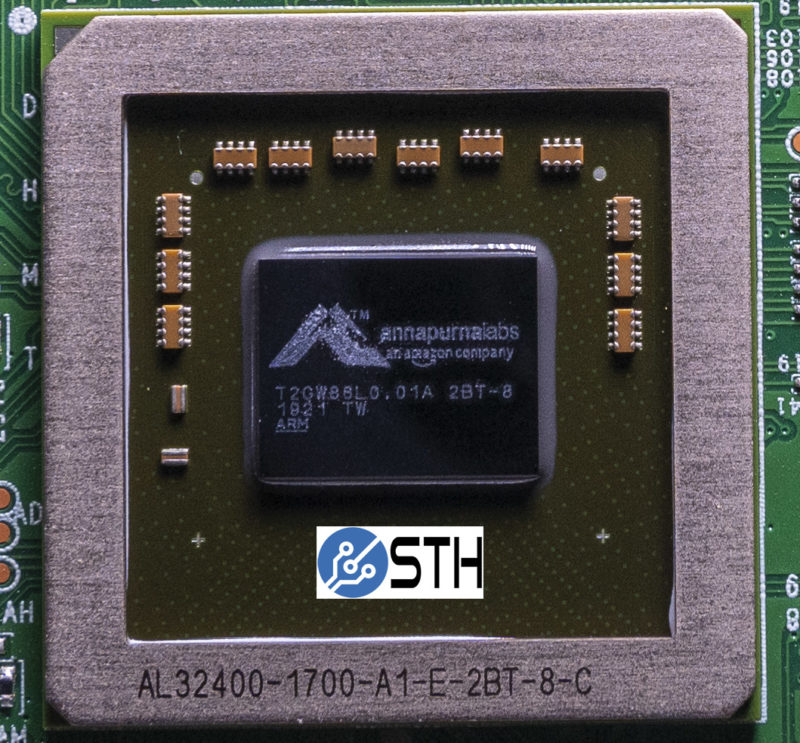

For key specs, this is a low-profile PCIe Gen3 x8 card that is actively cooled. The card has a quad-core Annapurna Labs (now Amazon) AL32400 CPU, like the CCR2004. Attached to that are 4GB of DDR4 memory and 128MB of NAND for storage. Frankly, the memory and storage may be the most disappointing specs. With more of both, this card would have a much wider use case than just routing.

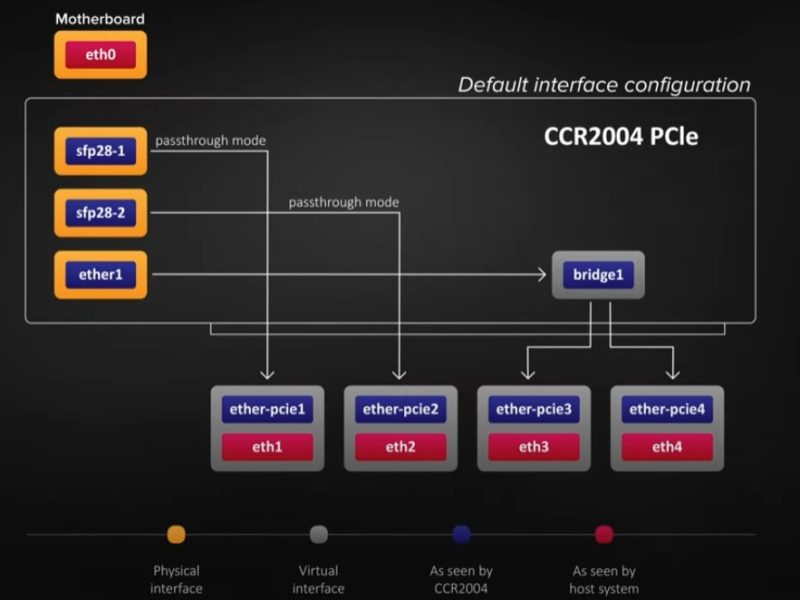

Here is the block diagram showing what is going on:

Basically, the AL32400 can pass the SFP28 25GbE ports, as well as the 1GbE management port, through to the host system. The AL32400 CPU also has access to both the external ports and the ports connected to the host machine. Checking the STH back-end, we have a picture of one of these CPUs from the Ubiquiti UniFi USW-Leaf piece. Ubiquiti, for its part, took our feedback, along with some others, and decided to pivot that line. Still, we have a picture of one of these chips because of it. For those wondering, the AL324 uses 4x Arm Cortex-A57 cores.

Unlike many of the DPU options, this is a very low-cost CPU. The last we heard, it was $20-25 per chip, but that may have changed. MikroTik says it can pass line-rate traffic when using jumbo frames. Still, with some DPUs and SmartNICs, memory bandwidth is a key limiting factor in this generation. BlueField-1 DPUs could actually have more memory bandwidth than today’s BlueField-2 cards, but we expect that to change in the future.
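Why jumbo frames matter for that line-rate claim: a small CPU forwarding in software pays a largely per-packet cost, and the number of packets needed to fill a link drops sharply as frames grow. The sketch below does the standard Ethernet arithmetic, assuming untagged frames (20 bytes of preamble, start delimiter, and inter-frame gap per frame, plus 18 bytes of header and FCS). It is generic math, not MikroTik’s published performance data.

```python
# Per-frame bytes on the wire outside the frame itself:
# 7-byte preamble + 1-byte start frame delimiter + 12-byte minimum inter-frame gap.
WIRE_OVERHEAD_BYTES = 7 + 1 + 12
# Untagged Ethernet header (14 bytes) plus the 4-byte frame check sequence.
L2_HEADER_AND_FCS_BYTES = 14 + 4
# Smallest legal Ethernet frame; shorter payloads are padded up to this.
MIN_FRAME_BYTES = 64


def packets_per_second(link_bps: float, mtu_bytes: int) -> float:
    """Maximum frames per second at line rate for a given IP MTU (untagged frames)."""
    frame_bytes = max(mtu_bytes + L2_HEADER_AND_FCS_BYTES, MIN_FRAME_BYTES)
    bits_on_wire = (frame_bytes + WIRE_OVERHEAD_BYTES) * 8
    return link_bps / bits_on_wire


if __name__ == "__main__":
    link = 25e9  # one SFP28 port
    for mtu in (46, 1500, 9000):  # 46 bytes of payload yields a 64-byte frame
        pps = packets_per_second(link, mtu)
        print(f"MTU {mtu:>5}: {pps / 1e6:6.2f} Mpps per port, "
              f"{2 * pps / 1e6:6.2f} Mpps for both ports")
```

For one 25GbE port this works out to about 2.03 Mpps at a 1500-byte MTU versus about 0.35 Mpps at 9000 bytes, roughly 5.9x fewer packets to process, and about 37.2 Mpps for minimum-size 64-byte frames.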

As we can see from the photo above, the card itself is actively cooled and draws power from the motherboard.

Final Words

For those thinking this is a DPU, it is not quite in that class. The card itself needs to boot, and that can take longer than the server takes to boot. That will require additional work on servers to ensure this device gets picked up once it is ready. You know this is going to be an interesting card when the 3-page announcement includes a reference to how to re-initialize devices in Linux to be able to use the card.
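On Linux, the generic way to pick up a PCIe device that finishes initializing after the host has booted is to ask the kernel to rescan the bus by writing `1` to `/sys/bus/pci/rescan`. MikroTik’s announcement may describe a different procedure, so treat the following as a minimal sketch under that assumption: it rescans periodically and polls sysfs until a device with a given PCI vendor ID appears. The function names are hypothetical, and the vendor ID is a parameter you must supply yourself.

```python
import time
from pathlib import Path


def rescan_pci(sysfs: Path = Path("/sys")) -> None:
    """Ask the Linux kernel to re-enumerate the PCI bus (requires root)."""
    (sysfs / "bus" / "pci" / "rescan").write_text("1")


def find_pci_devices(vendor_id: str, sysfs: Path = Path("/sys")) -> list[str]:
    """Return PCI addresses (e.g. '0000:01:00.0') whose vendor ID matches."""
    wanted = vendor_id.lower()
    matches = []
    for dev in sorted((sysfs / "bus" / "pci" / "devices").iterdir()):
        try:
            if (dev / "vendor").read_text().strip().lower() == wanted:
                matches.append(dev.name)
        except OSError:
            continue  # device vanished or attribute unreadable; skip it
    return matches


def wait_for_card(vendor_id: str, timeout_s: float = 180.0, poll_s: float = 5.0,
                  sysfs: Path = Path("/sys")) -> list[str]:
    """Rescan periodically until a device with vendor_id appears or the timeout expires."""
    deadline = time.monotonic() + timeout_s
    while True:
        rescan_pci(sysfs)
        found = find_pci_devices(vendor_id, sysfs)
        if found or time.monotonic() >= deadline:
            return found
        time.sleep(poll_s)
```

Run it as root from a late-boot script, e.g. `wait_for_card("0x1234")`, where `0x1234` is a placeholder rather than MikroTik’s actual vendor ID. The `sysfs` root is a parameter only so the logic can be exercised against a fake directory tree.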

Cards like the NVIDIA (formerly Mellanox) BlueField-2 DPU use multi-host adapter functions to solve this problem. With BlueField-2 and some others, the host system gets to access the ConnectX NIC while the Arm CPU subsystem boots, and once that subsystem is online, it is connected to the same multi-host adapter logic. We are going to have a lot more on BlueField-2 and other solutions soon on STH, as our new special test platform is in the lab. If these sell for the rumored $200 list price, then we may have more than one coming.

Overall, this is a really interesting device. It is focused on running a low-cost RouterOS instance on the Arm CPU. Imagine the possibilities, though, with Ubuntu, M.2 storage, and two SODIMMs for memory. We will get one of these as soon as we can.

Interesting idea. What’s the use case for having a router on a PCIe card inside a server? Is it because you can use the router hardware to firewall traffic without placing a load on the server CPU? Or are there other reasons I’m not aware of?

VXLAN encap with the option to use EVPN/BGP without touching the host could be useful. If it’s low cost, could be good for that.
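The VXLAN idea would mean the card, not the host, wraps each frame in outer Ethernet/IP/UDP headers. As a rough sketch of what that encapsulation costs, here is the 8-byte VXLAN header from RFC 7348 (a flags byte with the I bit set, then a 24-bit VNI) and the resulting per-frame overhead on an untagged IPv4 or IPv6 underlay. This is generic protocol arithmetic, not a description of how RouterOS implements VXLAN, and the function names are illustrative.

```python
import struct

VXLAN_UDP_PORT = 4789        # IANA-assigned UDP destination port for VXLAN (RFC 7348)
VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag: the VNI field carries a valid identifier


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header: flags(8) reserved(24) VNI(24) reserved(8)."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def encap_overhead(ipv6: bool = False) -> int:
    """Bytes VXLAN adds around each inner Ethernet frame on an untagged underlay."""
    outer_eth = 14
    outer_ip = 40 if ipv6 else 20
    outer_udp = 8
    return outer_eth + outer_ip + outer_udp + len(vxlan_header(0))
```

With an IPv4 underlay the overhead is 50 bytes, so carrying 1500-byte inner packets needs an underlay MTU of at least 1550 bytes.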

Sure hope it (easily) runs Ubuntu

If the passthrough is optional and can be configured, I need 10 of these for my data centers. If not, I will have to wait until rev 2 or later and stay with current solutions.

Very interesting solution, looking forward to the Linux tests.

@Malvineous: Colo! This thing is absolutely ace for colocated servers: plug the 1GbE into your IPMI/iDRAC/etc., run VPN for accessing the remote console plus firewall and routing for your physical host in the NIC, so it works even if the host OS is dead. Downside is it won’t work with the server powered off, but we’ll see if I can’t modify it to fix that…

@A Citizen: Passthru is indeed optional, the device presents 4x interfaces to the host, by default the first 2 are direct passthru to the physical SFP28 ports and the others are bridged with the 1GbE management VLAN.

My biggest complaint, like Patrick’s, is the lack of anything vaguely resembling a useful amount of storage. 128MiB of raw NAND is inexcusable in 2022, especially since RouterOS v7 has Docker container support (currently disabled for a UX rework, but it should be in v7.2 in a few months).

If it just had a microSD card slot or internal USB port, that’d be fine for most purposes, but MikroTik’s continued insistence on putting tiny amounts of old-tech storage in most of their devices is infuriating. 4GiB of eMMC is barely any more expensive than the parallel NAND chip that’s in this thing, and the AL32400 can boot directly from eMMC…

@Andrew H: I can’t figure out why, but there’s got to be a reason. I mean, they randomly speckle certain devices with USB or microSD ports. On this one in particular, I would have thought an M.2 slot would be easy. But yeah, microSD/eMMC seems really trivial trace-wise, as you can stick the card/chip just about anywhere on the board.

This card also seems relatively long and power hungry, even for a SmartNIC in modern 25GbE territory…

>Ubiquiti, for its part, took our feedback, along with some others, and decided to pivot that line.

The word pivot here requires a direction – did Ubiquiti pivot to that CPU line, meaning they’re going to use more of it, or pivot from the line meaning they’ll use less? What the heck do you mean?

Worth noting that MikroTik actually got the CPU in use here wrong; it’s an AL52400, which is the same chip used in Amazon’s 2x25GbE Nitro cards (according to some FIPS certification documents).

Did they cancel the product? It hasn’t been in stock anywhere for a year.

Absolutely nonsensical product.

Waste of money.