This weekend I was doing some work on the new ServeTheHome storage test bed. It is a very fast machine. Once I got Windows Server 2012 working on the Supermicro X9DR7-LN4F, I ran into some troubling issues. I first added five different SSD combinations onto the onboard LSI SAS 2308 controller. Write performance was simply abysmal. Benchmarking on an Intel PCH is very easy: everything just works out of the box. Benchmarking on the LSI SAS 2308 controller is a bit harder, and I have been spending more time tuning the system. One area of tuning was changing the write cache setting from the default (on) to off.

Drives were all 240GB or 256GB in capacity. This is a decent cross section, and I did a round-up this summer of several SSDs and their Anvil’s Storage Utilities performance. What we found was that some controllers respond differently to the default settings, and changing the write cache setting in Windows Server 2012 may help performance. Also, when using Anvil’s Storage Utilities (which is a great benchmark), turning on the write-through setting can help performance. Let’s take a look at those situations.

Test Configuration

For this piece, I am using the new SSD test configuration.

- CPUs: 2x Intel Xeon E5-2690 CPUs

- Motherboard: Supermicro X9DR7-LN4F

- Memory: 8x 4GB Kingston unbuffered ECC 1333MHz DIMMs

- SSD: Corsair Force3 120GB

- Test SSDs: OCZ Vertex 4 256GB, Kingston SSDNow V+200 240GB, SanDisk Extreme 240GB, Samsung 840 Pro 256GB

- Power Supply: Corsair AX850 850w 80 Plus Gold

- Chassis: Norco RPC-4220

- Operating System: Windows Server 2012 Datacenter

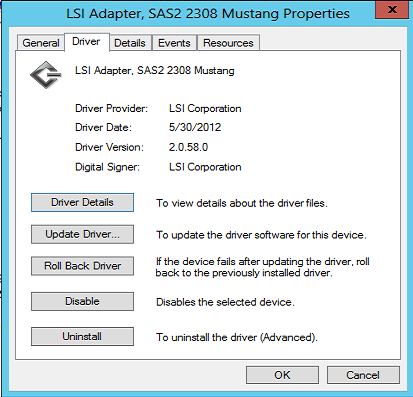

The strange thing here is that there has not been a lot by way of driver updates since the end of May. Certainly not around the release of Windows Server 2012 nor Windows 8.

Write Cache On Versus Off with an SSD on an LSI SAS 2308 and Write Through Mode in Anvil’s Storage Utilities

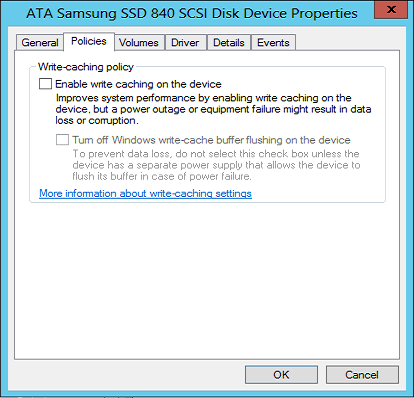

By default, Windows Server 2012 turned write cache on for new drives. On several drives, this had a large impact on write speeds. Here are a few examples of write cache on versus write cache off in Windows Server 2012 with a few popular SSD offerings. For those who are not aware, here is the setting we are changing:

Leading practice here is to change the setting then reboot the system.

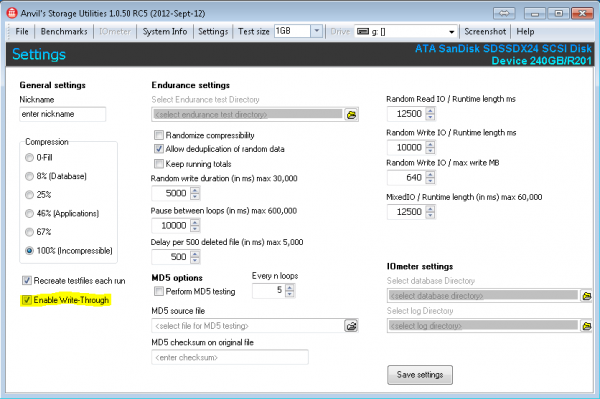

The other setting that we are looking at is enabling Write Through in Anvil’s Storage Utilities. You can find this by going to Settings.
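To give a feel for what a write-through style test does differently, here is a minimal Python sketch. It is only an analogy and makes assumptions: how Anvil’s Storage Utilities implements its Write Through option is not documented here, and the helper name `time_4k_writes` is made up for illustration. The sketch forces each 4KB block to the device with `os.fsync()` before the next write starts, and compares that to ordinary buffered writes.

```python
import os
import tempfile
import time


def time_4k_writes(num_blocks: int, write_through: bool) -> float:
    """Return the seconds taken to write num_blocks 4 KiB blocks to a temp file.

    When write_through is True, os.fsync() is called after every block so the
    data is pushed toward the device before the next write starts. This is an
    analogy for a benchmark's write-through option, not Anvil's actual code.
    """
    block = os.urandom(4096)
    fd, path = tempfile.mkstemp()
    try:
        start = time.perf_counter()
        for _ in range(num_blocks):
            os.write(fd, block)
            if write_through:
                os.fsync(fd)  # flush OS buffers for this file to the device
        elapsed = time.perf_counter() - start
    finally:
        os.close(fd)
        os.remove(path)
    return elapsed


if __name__ == "__main__":
    blocks = 256
    for mode in (False, True):
        seconds = time_4k_writes(blocks, mode)
        label = "write-through" if mode else "buffered"
        print(f"{label}: {blocks / seconds:.0f} writes/s")
```

The numbers from a sketch like this depend heavily on the operating system, the file system and the drive, so treat them as a way to see the effect rather than as a benchmark.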

Next we are going to look at a quick sample of SSDs. The first picture will show how the setup worked out of the box on the LSI SAS 2308. The second picture for each drive will show performance when write cache was disabled. The third picture for each drive will show what happens when the write-through option is selected. You will notice that some of these were taken on Windows 7 and others on Windows Server 2012. This is simply because we tested five SSDs with write cache on/off, write-through on/off, two driver versions, two operating systems and two firmware modes (IR and IT). We are highlighting the write cache and write-through settings because they showed a marked impact on the results.
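To show how quickly that test matrix grows, here is a small Python sketch that enumerates every combination. Some of it is assumption: the fifth drive is taken to be the Samsung 830 mentioned later in this piece, the driver labels are placeholders since the versions are not named, and the count is an upper bound because not every combination was necessarily run.

```python
from itertools import product

# Drive names come from this article; the fifth entry is an assumption.
ssds = [
    "OCZ Vertex 4",
    "Kingston SSDNow V+200",
    "SanDisk Extreme",
    "Samsung 840 Pro",
    "Samsung 830",
]
write_cache = ["on", "off"]
write_through = ["on", "off"]
drivers = ["driver A", "driver B"]  # placeholder labels, versions not named
operating_systems = ["Windows 7", "Windows Server 2012"]
firmware_modes = ["IR", "IT"]

# Every combination of the six variables above.
test_matrix = list(
    product(ssds, write_cache, write_through, drivers, operating_systems, firmware_modes)
)

print(len(test_matrix))  # 5 * 2 * 2 * 2 * 2 * 2 = 160
```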

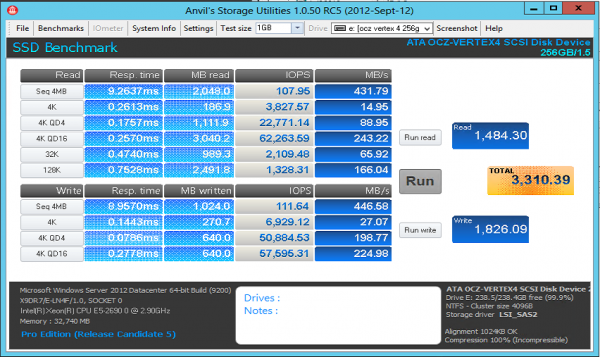

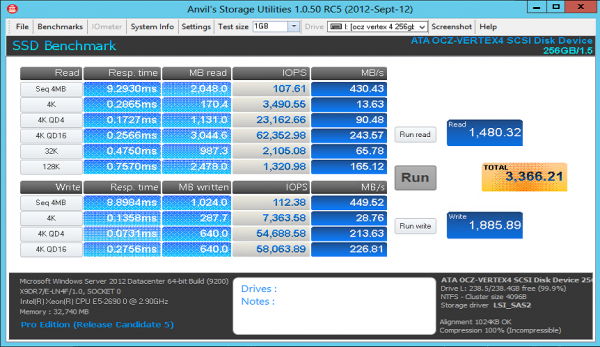

OCZ Vertex 4 Write Cache On v. Off and Write Through

The OCZ Vertex 4 is known for its speed on shorter tests. Using the 256GB variant and not so large test sizes means that the drive will be extremely fast if all is working.

Interestingly enough, using the Intel PCH the Vertex 4 achieves over 4,600 total and 2,800 on write. Here is the view with the write cache off.

As you can see, not much of an improvement by changing cache settings. Let’s try write-through.

Here we see a slight improvement. Nothing overly great but the best benchmark yet. Let’s see how other drives fare.

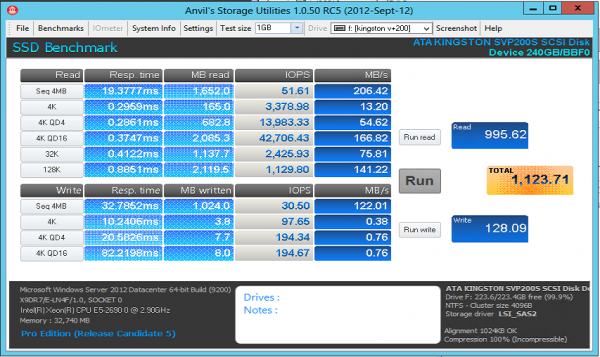

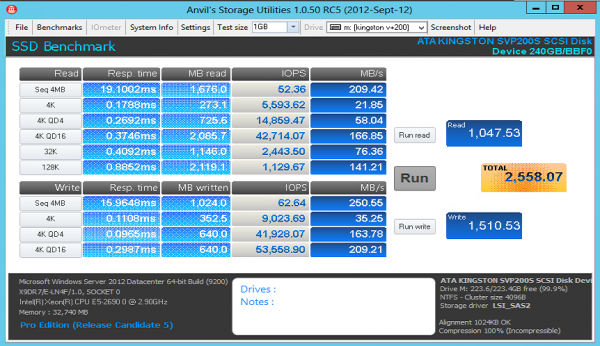

Kingston SSDNow V+200 240GB

The Kingston SSDNow V+200 240GB hit the scenes and is a decent performing drive given it uses asynchronous NAND. Let’s take a look at the Anvil’s Storage Utilities results.

Yikes! What is going on there? If you look at the 4K writes, performance is much lower than we saw in the previous Kingston SSDNow V+200 240GB review. For those that don’t want to click, we would expect to see around 76MB/s for the 4K and about 220MB/s for the 4K QD16 tests on an Intel PCH. Let’s see what happens when write cache is turned off.

Numbers here are still a bit low. With that being said, much better than we saw with Write Cache On. What happens when we turn on write through?

Not much of an improvement on the Kingston SSDNow V+200 with write through, but still around 2.5x the stock out-of-the-box speeds. Let’s see if there is a trend with SandForce controllers.

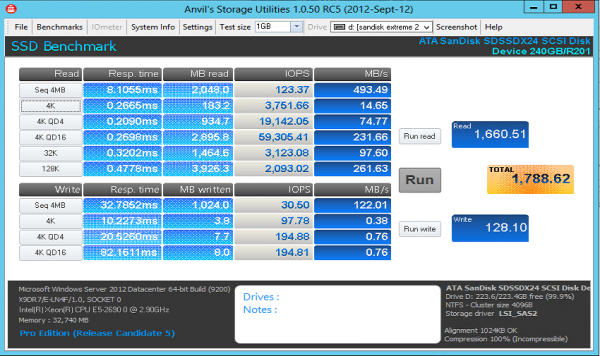

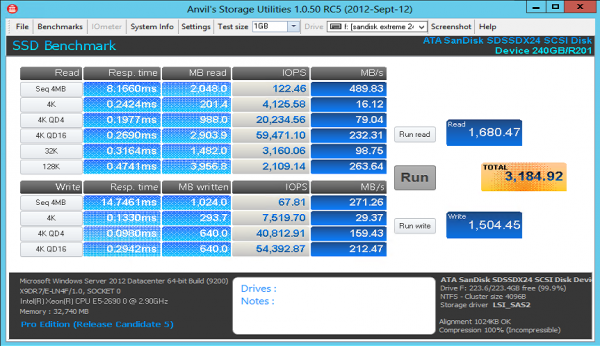

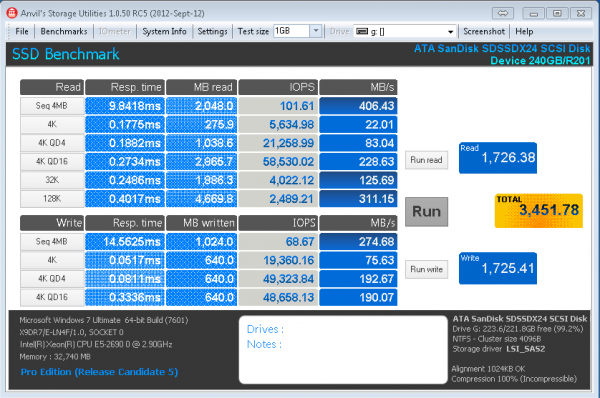

SanDisk Extreme 240GB

SanDisk made a splash with a SandForce SF-2281 based drive with Toggle NAND. I am keeping firmware consistent here to keep results somewhat standardized with the old ones. Plus, adding TRIM does not do much good on an LSI controller at the moment. Let’s again start with write cache on.

Again, yikes! Write cache in Windows plus the SandForce controller seems to absolutely kill write performance. Even sequential speeds are extremely low. Let’s see if the same pattern emerges as with the Kingston SSDNow V+200 with the Write Cache turned off.

Much better performance again for the SandForce-based SanDisk Extreme 240GB. Turning off write cache in Windows Server 2012 improves write performance substantially. Performance still lags behind the Windows 7/Intel Z77 PCH results but is vastly improved. Let’s try turning on write-through.

Here we see significantly better performance.

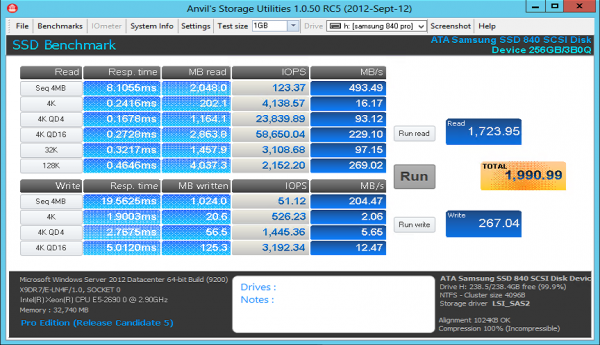

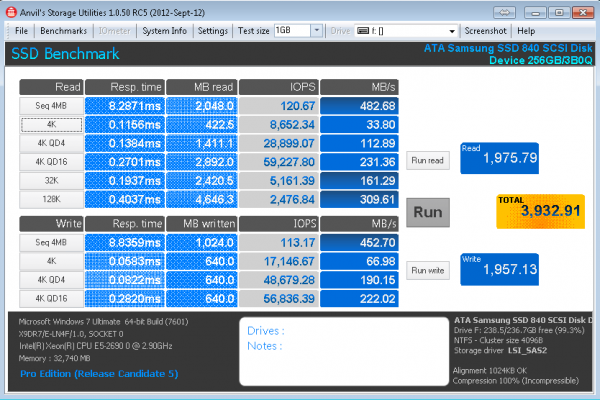

Samsung 840 Pro 256GB

Looking around the web at all of the Intel PCH based benchmarks, the Samsung 840 Pro is fast. Yet out of the box, the Samsung 840 Pro showed not so great performance with Windows Server 2012, an LSI SAS 2308 HBA and write cache on.

Again, write cache on in Windows Server 2012 takes the hottest consumer SSD around today and brings it to sub-10,000 4K IOPS. This looks very much in line with the SandForce drives, right? Here’s what happens when the write cache box is de-selected:

Performance dips by a small amount, more or less within test variance. This is similar to the behavior of the OCZ Vertex 4. The Samsung 830 behaves much like the Samsung 840 Pro on these tests, so those results are omitted as duplicative. Let’s see what happens when we toggle write-through.

Bottom line here: if you have a Samsung drive on an LSI SAS 2308 controller, be very careful with benchmark settings. I did want to point out that IOMeter managed to provide almost 17K on QD1 and over 40K on QD4 sequential 4K write tests, so the above seems correct.
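For anyone comparing these figures against throughput charts, here is a short Python sketch that converts 4K IOPS into MB/s. It assumes the 17K and 40K IOMeter numbers are IOPS, that a 4K block is 4,096 bytes and that 1 MB is 1,000,000 bytes; a tool that reports in MiB/s will show slightly lower values. The function name `iops_to_mb_per_s` is just for illustration.

```python
def iops_to_mb_per_s(iops: float, block_bytes: int = 4096) -> float:
    """Convert an IOPS figure to decimal megabytes per second."""
    return iops * block_bytes / 1_000_000


# Round figures quoted in the text above, assumed to be IOPS.
samples = [
    ("sub-10K out of the box", 10_000),
    ("IOMeter QD1", 17_000),
    ("IOMeter QD4", 40_000),
]

for label, iops in samples:
    print(f"{label}: {iops_to_mb_per_s(iops):.1f} MB/s")
```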

Conclusion

This is one of those times that we see totally unexpected results. When I first started testing, I expected performance to be +/- 10% of an Intel PCH chipset using Windows Server 2012 default settings. I also expected everything to be plug-and-play easy. It turns out that, for SandForce drives, changing the write cache setting has a huge impact. For Samsung drives, with Anvil’s Storage Utilities, you will want to enable write-through mode. It was really interesting to see these results. The drives did not hit their Intel PCH speeds. Also, when using an LSI controller, one does need to pay attention to various settings. It seems like some of these benchmarks are optimized for the Intel + SSD platform. That makes sense, as SSDs are commonly found in workstations. Bottom line: if you do run a similar setup, do spend the time to ensure everything is configured properly.

One thing I’ve noticed when using SAS controllers with SATA drives is that you never get the equivalent of native SATA, due to the tunneling protocol being used to pass the traffic. It has some built-in overhead. It’s the same compared with native SAS: with the drives being equal, a native SAS drive will outperform a SATA drive on a SAS controller. This may not be true with the new controllers, but back when I was testing large arrays, I found that using SATA drives vs. the equivalent SAS drives resulted in a ~20% loss of performance. At the time I was using Seagate ES.2 1TB SATA vs. ES.2 1TB SAS drives for my comparisons.

Also, SAS is full duplex, while SATA is half duplex. That is a key performance driver.

First, to clarify the differences between SAS and SATA: SAS and SATA use a very similar physical interface. They both have two differential pairs, one Transmit (writes/commands) and one Receive (reads). The SAS protocol allows using multiple physical paths to devices, most commonly used for SAS expanders in STH’s market. Dual-port SAS drives have two sets of differential pairs, allowing the drive to connect to two controllers or to a single controller with two data paths. SAS signaling uses a higher voltage than SATA, allowing for a longer data cable. Both run at link speeds of 1.5/3/6Gbps and use 8b/10b encoding (20% overhead on data rate compared to signaling rate), but the SATA Tunneling Protocol (STP) adds extra overhead and will limit SATA SSD performance on a SAS controller.
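The 8b/10b arithmetic above can be sketched in a few lines of Python. This only models the encoding overhead: every 8 data bits travel as 10 bits on the wire. It does not model STP or any other protocol framing, and the function name `payload_mb_per_s` is just for illustration.

```python
def payload_mb_per_s(line_rate_gbps: float) -> float:
    """Payload bandwidth in MB/s for a link that uses 8b/10b encoding.

    Every 8 data bits are sent as 10 line bits, so one payload byte costs
    10 bits of signaling. Protocol overhead such as STP is not modeled.
    """
    line_bits_per_s = line_rate_gbps * 1_000_000_000
    payload_bytes_per_s = line_bits_per_s / 10
    return payload_bytes_per_s / 1_000_000


for rate in (1.5, 3.0, 6.0):
    print(f"{rate} Gbps -> {payload_mb_per_s(rate):.0f} MB/s")
```

This works out to 150, 300 and 600 MB/s for the three link speeds, which is where the commonly quoted 300MB/s ceiling for a 3Gbps link comes from.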

Now looking at the Samsung 840 Pro from the Intel PCH to the LSI 2308 with write through, we see a limited decrease in speed for all read tests, with the worst being 4K. This makes sense to me: the STP overhead is constant and the datagrams are small, so the overhead is proportionally larger. We should expect to see more STP overhead performance loss on the writes because of the SAS/SATA physical topology, but I feel something else is going on here. I would like to see data from a test where the transfer size is constant and the time varies, which is not what Anvil does. It would also be nice to see data from an SSD that has a SAS and a SATA version but similar internals, like the Seagate ES SATA vs. SAS drives, if one exists.

Was having the same issue. Thanks for the tip!

couple of questions –

I have a SUPERMICRO MBD-X9DA7-O and am in the process of trying to find the best possible solution for HBAs in a 20-drive JBOD setup (Windows Storage Server 2012)

Using WD “Greens”, which state 6Gb/s throughput

Issue: I read that HBAs with 3Gb/s throughput are enough for drives, as they never reach above 300MB/s throughput

Also, do HBAs with cache increase performance for non-SSD JBOD setups?

Hi Patrick. I’ve searched everywhere, and I cannot seem to find an intelligible breakdown of the 2308 and 2208 chips, and what they mean for performance and features. I’ve read in some places that the 2308 only supports raid 0, 1, 0+1 natively. Is that true? Supermicro refers to the 2308 as “SW RAID 0, 1, 10 support”. You seem to be one of the only people on the web doing real reviews and testing of server class hardware and equipment, so I’m wondering if you can enlighten. Thank you!

Sure, the LSI SAS 2308 does only basic striping and mirroring (RAID 0, 1, 10). The SAS 2208 can do parity RAID levels like RAID 5 and 6. Also, the LSI SAS 2208 has onboard cache while the SAS 2308 does not. Lots of info in the forums if you want to ask any probing questions there.

I have been doing a fair amount of testing with Samsung 840 Pro 256GB and Intel 520 240GB drives with the LSI2308 controller and Windows Server 2012. I have run into similar issues, but have had variable results.

The one thing that was constant is absolutely horrible write performance when the default policy “Enable write caching on the device” was set. Write performance returns to acceptable levels when this policy is enabled and “Turn off Windows write-cache buffer flushing on the device” is also set. The problem is also avoided when write caching is disabled.

I would prefer to just disable the write caching, but I found variable performance after reboots, as the drive would show write caching disabled but still perform like it was enabled. The stability of the policies through a reboot was poor: I would find them reset to different values on different disks after a reboot. I expect the reboot issue has to do with Plug and Play (PnP) enumeration of storage devices during Windows boot.

Regardless, with the 840 Pros I see an ~50% random 4K write IOPS hit when moving to the LSI2308 from the Intel C602 SATA ports: 88K IOPS drops to 43K IOPS. This was measured using SQLIO from MS (you can guess the work I do). The Intel 520s dropped from 53K to 43K.
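The size of those drops is easy to check with a couple of lines of Python. The figures are the rounded IOPS values quoted above, and the helper name `percent_drop` is just for illustration.

```python
def percent_drop(before: float, after: float) -> float:
    """Percentage decrease going from before to after."""
    return (before - after) / before * 100


# Rounded IOPS figures from the comment above (Intel C602 SATA -> LSI2308).
print(f"Samsung 840 Pro: {percent_drop(88_000, 43_000):.1f}% lower")
print(f"Intel 520: {percent_drop(53_000, 43_000):.1f}% lower")
```

That gives roughly 51% for the 840 Pro and roughly 19% for the Intel 520, so both drives end up at about the same 43K ceiling on the LSI2308.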

Overall, I find the LSI2308’s behavior problematic at best. I would like to see if you do better with a standalone 9207-8i.

So you concluded that with a Samsung 840 Pro and LSI SAS2308 HBA the random 4K writes were much improved with Write Through instead of Write Back? Correct?

I am trying to set up an LSI 9212-4i4e. That is an LSI SAS2008. It is in IR mode with write policy set, according to msm, as Write Through. The Anvil and AS SSD 4K write results are only about 2MBytes/sec. That seems counter to your SAS2308 experience? I could leave it as Write Through and flash to IT, but you wrote above (I think!) that IT mode provided very similar results to IR mode? I could use megaSCU to change the write policy to Write Back, but your results for the SAS2308 HBA show Write Through gives much better 4K write scores.

What do you suggest for LSI 9212-4i4e? SAS 2008. I want Raid 0 with two Samsung 840P (2x256GB). Currently I have P16 IR mode, with write policy=Write Through. Anvil and AS SSD show only approx. 2MByte/sec in 4K write tests. What do you suggest I try next?? Hope you can and will respond here, and/or to my email address. Thanks!