If you’ve ever shopped for a stable, high-performance SAS or SATA host bus adapter (HBA) then you have no doubt heard of LSI and the venerable LSI SAS2008 SAS controller chip. Cards based on the SAS2008, like the LSI 9200-8i, the 9200-8e, and in fact all of the cards in Pieter’s article have fantastic reputations for performance, reliability, and very broad driver support. Let’s take a look at a single slot PCIe card able to push over 5GB/s and 726,000 IOPS.

LSI SAS2008 cards start at around $220 new but used OEM versions like the IBM M1015 can be picked up on eBay for $75 or less. New or used, LSI branded or OEM, you’ll be getting an eight port SAS card capable of an impressive 2,500MB/Second of throughput and some 350,000 IOPS.

But what if the performance of a SAS2008 controller is just not enough? Well then how about two SAS2008 controllers? Presenting the LSI 9202-16e, featuring twice as many controllers, double the PCIe bandwidth, and double the number of disk ports. Will doubling everything provide double the performance, in spite of a quirky architecture binding it all together? We’ll soon see.

On paper the LSI 9202-16e is the biggest, baddest host bus adapter that LSI sells. Except they don’t sell it to the channel. You’ll find the card right here on the LSI web site, where it has been since November 2010. You’ll even find a full set of drivers and release notes on the LSI support site. The problem is that LSI won’t sell you one – and neither will anyone else. Their sales organization says that the 9202 is “OEM only” while their OEM organization insists that nobody has yet decided to OEM it. A few months ago I gave LSI sales a call and they hinted that a retail version would be making its appearance in June or July of 2012, but it’s August now with no sign of a retail SKU. Luckily, a few of these elusive cards have recently appeared on eBay as “used” and I managed to pick up several for testing (you can see an eBay search here). How could I resist what may be the fastest SAS card on the planet?

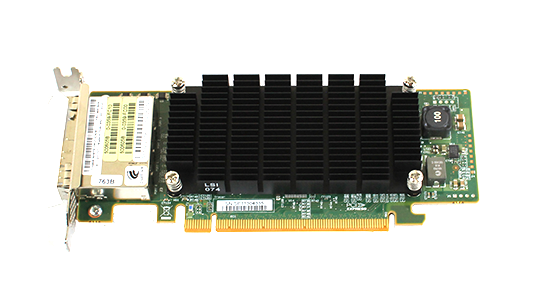

Physical Details

The best way to describe the LSI 9202-16e is as two LSI 9200-8e cards stuck together onto one PCIe card and covered by a giant heatsink. Instead of a single SAS2008 controller the 9202 has two. Instead of an x8 PCIe connector, this card stretches to x16. And finally, instead of eight SAS ports there are 16. Notably, the usual SFF-8088 external SAS connectors have been discarded in favor of newer and much smaller high density SFF-8644 connectors, driving up cabling costs, but keeping the overall height low enough to meet the low profile specification.

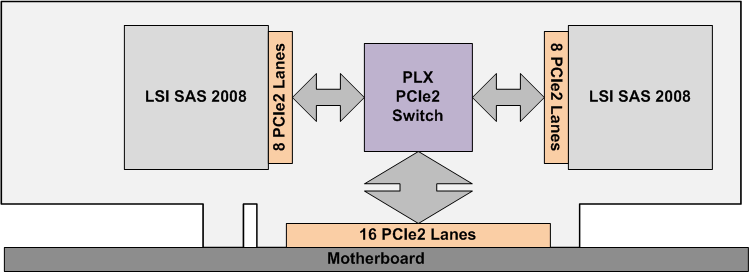

Of course you can’t just smash the guts from two SAS cards onto one board and expect everything to just work. Also present on the card, and key to the architecture of the 9202, is a PCIe switch from PLX. The switch allows each SAS controller to think that it is directly connected via its own x8 PCIe port. See the diagram below.

The PLX chip has an x8 connection to each SAS2008 controller and an x16 connection to the server. Like an Ethernet switch, a PCIe switch chip routes packets of data as needed. Of course adding an extra switch to the 9202 means that each data block must traverse one more “hop” on its way from disk to CPU, potentially adding latency and harming performance. At the level of performance demanded from a top-tier SAS card like this, even a small amount of latency can destroy overall performance.
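To put those link widths in perspective, here is a rough back-of-the-envelope sketch of the theoretical ceilings on each side of the PLX switch. It assumes PCIe 2.0 signalling (5 GT/s per lane) and 6Gb/s SAS ports, both using 8b/10b encoding, and it ignores packet and protocol overhead, so achievable numbers will be lower. The function names are purely illustrative.

```python
# Rough theoretical link ceilings in MB/s, per direction.
# Assumptions: PCIe 2.0 (5 GT/s per lane), 6Gb/s SAS, 8b/10b encoding,
# and no allowance for packet or protocol overhead.

def pcie2_bandwidth_mb_s(lanes: int) -> int:
    """Theoretical PCIe 2.0 bandwidth for a given lane count."""
    raw_mbit_per_lane = 5000                            # 5 GT/s per lane
    usable_mbit_per_lane = raw_mbit_per_lane * 8 // 10  # 8b/10b encoding
    return usable_mbit_per_lane // 8 * lanes            # bits to bytes


def sas2_bandwidth_mb_s(ports: int) -> int:
    """Theoretical aggregate bandwidth for 6Gb/s SAS ports."""
    raw_mbit_per_port = 6000                            # 6Gb/s per port
    usable_mbit_per_port = raw_mbit_per_port * 8 // 10  # 8b/10b encoding
    return usable_mbit_per_port // 8 * ports


if __name__ == "__main__":
    print("x8 link to each SAS2008:", pcie2_bandwidth_mb_s(8), "MB/s")
    print("x16 link to the server: ", pcie2_bandwidth_mb_s(16), "MB/s")
    print("8 SAS ports per chip:   ", sas2_bandwidth_mb_s(8), "MB/s")
    print("16 SAS ports per card:  ", sas2_bandwidth_mb_s(16), "MB/s")
```

Under these assumptions each x8 link tops out at 4,000MB/s and the x16 uplink at 8,000MB/s, so the uplink is wide enough to carry both controllers at their full theoretical rate.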

Visually the card is dominated by its massive heat sink – something seen in graphics cards more often than host bus adapters. The oversize chunk of aluminum is there for a very real purpose. Officially the card draws 17 watts (compared to 7 watts for the usual SAS2008 card) but you’ll conclude that it consumes far more than that. In the Supermicro test server, which sounds like a chorus of angry hair dryers when running and could probably blow out birthday candles four feet away, the card stays every bit as warm as the 80 watt CPUs while the standard LSI cards never feel anything above room temperature. This card requires very serious cooling.

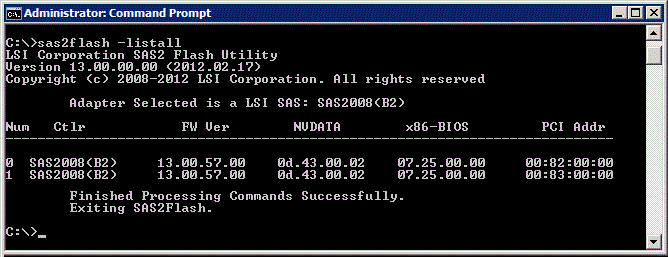

BIOS and Firmware

The “two-in-one” theme continues after installation. At boot time the 9202 card shows up as two SAS2008 controllers, no different than if two separate cards had been installed in two separate PCIe slots. The sas2flash command line utility (shown below) also thinks that it sees two separate cards. I updated my card to the latest firmware by running the standard sas2flash commands… twice of course. Windows device manager even sees the 9202 as two separate devices.

Benchmark Setup

Databases are the most likely – and the most demanding – use of the LSI 9202 card. For that reason, our testing derives from database usage scenarios. We are not, however, testing with Oracle or SQL Server. Our goal is to understand the performance of the 9202 card, not the database software. We therefore test with simplified, synthetic, highly reproducible, and definitely “database like” workloads generated by Iometer.

There are two very different patterns of database IO. Transactional databases typically feature a high number of small random disk input/output operations per second (high IOPS) whereas data warehouses drive huge quantities of sequential reads (high throughput). For our testing we will run two simplified tests, one an IOPS test and the other a sequential read throughput test.
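The two metrics are linked by block size: throughput is simply IOPS multiplied by the size of each request. The sketch below applies that relationship to the headline figures quoted in this article. It assumes binary block sizes (4KB = 4,096 bytes, 1MB = 1,048,576 bytes) and decimal MB/s, which may not match exactly how Iometer reports its numbers; the helper names are illustrative.

```python
# Converting between IOPS and throughput for a fixed request size.
# Assumptions: block sizes are binary (4KB = 4096 bytes) and throughput
# is expressed in decimal megabytes per second.

KB = 1024
MB = 1024 * 1024


def throughput_mb_s(iops: float, block_bytes: int) -> float:
    """Throughput in MB/s implied by an IOPS rate and block size."""
    return iops * block_bytes / 1_000_000


def iops_from_throughput(mb_s: float, block_bytes: int) -> float:
    """IOPS implied by a throughput in MB/s and block size."""
    return mb_s * 1_000_000 / block_bytes


if __name__ == "__main__":
    # Small-block test: very high IOPS, comparatively modest throughput.
    print(f"726,000 IOPS at 4KB = {throughput_mb_s(726_000, 4 * KB):,.0f} MB/s")
    # Large-block test: huge throughput, but only a few thousand IOPS.
    print(f"5,250 MB/s at 1MB   = {iops_from_throughput(5_250, MB):,.0f} IOPS")
```

This is why the two tests stress different parts of the system: the small-block run is bound by how many requests per second the controllers and PCIe path can process, while the large-block run is bound by raw bandwidth.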

We will test using disks that have been initialized but not formatted – raw disks – in order to remove the operating system from the equation as much as possible. We will not use any type of RAID for the same reason. In practice, the individual disks would be managed by some type of software RAID, perhaps ZFS or Oracle ASM.

We will test using Iometer 1.1.0-RC1, the latest version available. Iometer is configured with eight “workers”, each worker exercising two disks (16 disks total) to a queue depth of 64. Smaller or larger numbers of workers and different queue depths provide slightly different results, but this configuration is able to generate the highest results.

For the IOPS test we instruct Iometer to sequentially read 4KB chunks of data from the drives. A test using random reads would be a far more accurate way of simulating database usage than sequential reads, but a random workload ends up testing the SSD drives more than the SAS card. Since the card is our focus, we will use sequential reads.

For the Throughput test we randomly read 1MB chunks of data in order to very closely simulate a data warehouse database. While typical data warehouse IO is logically sequential from the point of view of the database engine, RAID causes the data to be widely distributed across disks, meaning that actual IO is closer to random. Fortunately, at this large chunk size, random and sequential reads yield the same benchmark results so we are able to use random reads.

Benchmark Configuration

For testing we have paired the LSI 9202-16e card with two large multi-CPU rackmount database servers. We’d probably get better benchmark results from a finicky single-CPU water-cooled gaming machine, but this card is targeted at servers and so we are testing with servers.

Our first test machine is a Supermicro 2042G-TRF with 48 cores of AMD Magny-Cours CPU from four AMD Opteron 6172 processors. The Supermicro features five PCIe generation 2 slots, two of which are the x16 slots that this card craves.

Our second test server is an Intel R2208GZ4GC, a recent introduction sporting a pair of Intel Xeon E5-2690 CPUs and six PCIe3 slots.

Why two different servers? The Supermicro, with its PCIe2 x16 slot, is able to drive the 9202 to its maximum throughput while the Intel, with its narrower x8 PCIe slots but more efficient PCIe architecture, is able to maximize IOPS. Had one been available, a Xeon E5 server with an x16 PCIe3 slot would have made an even more capable test system.

The 16 port LSI 9202 SAS cards in our servers are connected to 16 SSD drives – one drive per port. The SSD drives are OCZ Vertex 3 120 GB devices residing in a 24-bay Supermicro SC216A chassis outfitted as a JBOD system using the Supermicro JBOD power converter board. These drives are well used – “conditioned” in SSD speak – and therefore give a very good indication of real-world performance. That said, brand new drives would probably not yield significantly different results since in all of our test scenarios it is the card, not the SSD drives, that limits the overall results.

Supermicro Server Details:

- Server chassis: Supermicro SC828TQ+

- Motherboard: Supermicro MBD-H8QGi-F

- CPU: 4x AMD 6172 “Magny-Cours” at 2.1GHz

- RAM: 256GB (32x8GB) DDR3 1333

- OS: Windows 2008R2 SP1

Intel Server Details:

- Chassis: Intel R2208GZ4GC

- Motherboard: Intel S2600GZ

- CPU: 2X Intel Xeon E5-2690

- RAM: 16GB of DDR3 1333

- OS: Windows 2008R2 SP1

JBOD Disk Subsystem:

- Chassis: Supermicro SC216A with JBOD power board

- Disks: 16 OCZ Vertex3 120GB drives

- Cables: 4x 2Meter SFF-8644 to SFF-8088 shielded cables

Benchmark Software:

- Software: Iometer1.1.0-RC1 64 bit for Windows

- Disk setup: 8 workers, disk size of 4 million sectors (around 20GB), queue depth=64

- Test setup: 60 second ramp-up time, 10 minute test duration

- Throughput testing: 1MB chunk size, 100% reads, 100% random

- IOPS testing: 4KB chunk size, 100% reads, 100% sequential

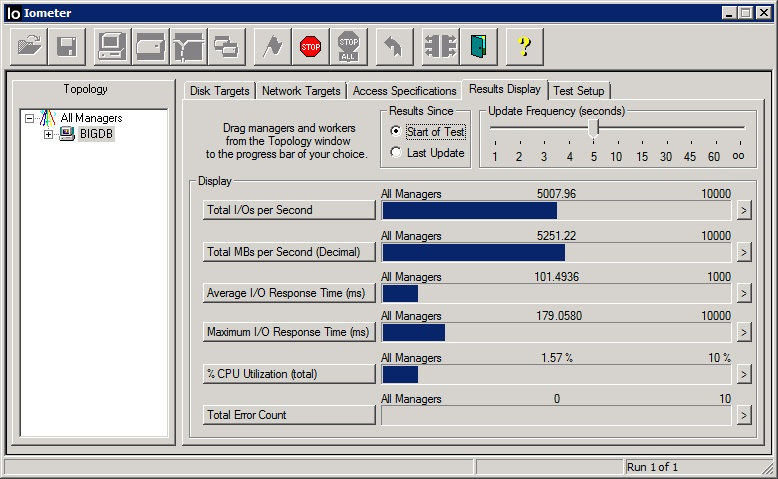

Throughput Results

With 1MB random reads against 16 SSD drives, the LSI 9202-16e manages an impressive, in fact record-breaking, 5,250MB/Second of throughput. Latency is reasonable given the deep queue depth setting and CPU utilization is minuscule, due in large part to the very large number of cores available on our Supermicro server.

Do we achieve double the performance of a single controller? Yes we do. The single-controller LSI 9200-8e tested in a prior session using the same server yielded 2,570MB/Second, which is close enough to half of our current result to be within the margin of test variability. That extra chip in the 9202 – the PLX switch – certainly hasn’t harmed throughput in any way, and that is very good news.

While impressive, and twice as fast as a single card, this result is still not the equivalent of sixteen times the performance of a single SSD drive. Had scaling been perfectly linear, we should have seen over 8,000MB/Second. The 9202 is fast but still can’t quite keep up with a full set of SSD drives. Previous testing showed that a single SAS2008 based card is able to keep up with only about five of today’s SSD drives. That trend continues here, with two controllers able to deliver results about ten times as good as a single SSD drive, not 16 times. The latest crop of LSI PCIe 3.0-based cards like the LSI 9207 shatter that limitation, at least when used in a PCIe 3.0 computer, but are currently limited to eight ports and therefore around 4,100MB/Second. The 9202 still reigns supreme for absolute maximum throughput per PCIe slot.
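The scaling arithmetic above can be sketched in a few lines. The per-drive figure of 525MB/s is an assumption inferred from the article's statements that sixteen drives should exceed 8,000MB/s and that the card delivers about ten drives' worth; it is not a measured value, and the names below are illustrative.

```python
# Scaling check for the throughput results.
# ASSUMED_SSD_MB_S is an assumption inferred from the text, not a measurement.

SINGLE_CONTROLLER_MB_S = 2_570   # LSI 9200-8e, from the article
DUAL_CONTROLLER_MB_S = 5_250     # LSI 9202-16e, from the article
ASSUMED_SSD_MB_S = 525           # hypothetical per-drive sequential rate
DRIVES = 16


def scaling_efficiency(measured: float, per_unit: float, units: int) -> float:
    """Fraction of perfectly linear scaling that was actually achieved."""
    return measured / (per_unit * units)


controller_ratio = DUAL_CONTROLLER_MB_S / SINGLE_CONTROLLER_MB_S
drive_equivalents = DUAL_CONTROLLER_MB_S / ASSUMED_SSD_MB_S
efficiency = scaling_efficiency(DUAL_CONTROLLER_MB_S, ASSUMED_SSD_MB_S, DRIVES)

if __name__ == "__main__":
    print(f"Dual vs single controller: {controller_ratio:.2f}x")
    print(f"Equivalent SSD drives:     {drive_equivalents:.1f}")
    print(f"Efficiency vs 16 drives:   {efficiency:.1%}")
```

With that assumed drive speed, the card scales cleanly from one controller to two but reaches only a little under two thirds of what sixteen unconstrained drives could deliver.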

If you are familiar with disk benchmarking, you may notice that the latency numbers look a bit high. They are, but it’s not the card’s fault. For a high-level test you can and should ignore latency results when doing saturation-level throughput testing. What we are doing is overloading the card in an attempt to eke out the maximum total throughput. Response time for individual requests suffers, but that’s not what we care about. This test used a queue depth of 64, overloading the SSD drives dramatically. Testing with a queue depth of 32 yields 1/3 the average latency (30ms) with only a 5% drop in total throughput.

As a side note: The first few runs of the throughput test were an absolute failure, with thousands of disk errors. It turns out that one nearly new SSD drive, which formatted and operated just fine under normal loads, was unable to handle high queue depth testing. Resetting the drive made it functional again but it would again drop off of the bus under load. Even a firmware re-flash did not solve the problem. I have been using up to 30 of these same consumer-grade SSD drives at a time for a very high performance data warehouse server for over a year, with reads totaling petabytes, and this is the first failure – and it’s a new drive that failed, not an older one. I am actually surprised that these very inexpensive drives have held up so well.

IOPS Results

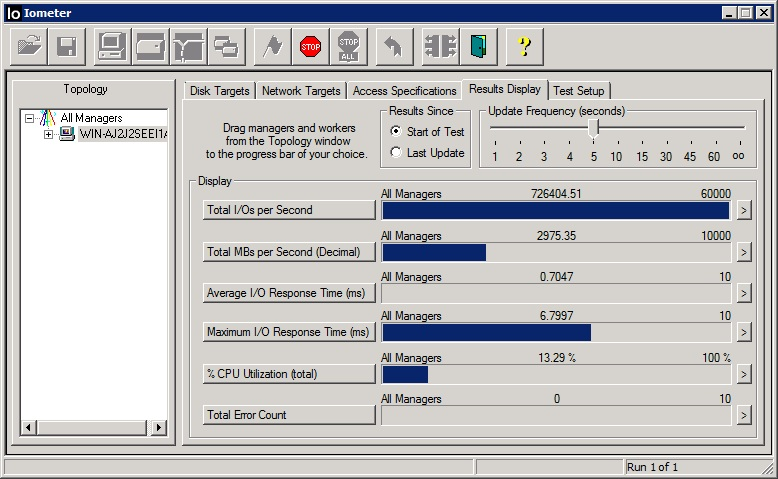

Our goal here is to measure the maximum number of Input/Output transactions per second – IOPS. For this test we are using our Intel server, with its low-latency PCIe hardware. The reduced bandwidth (x8 instead of x16) turns out to matter not a bit for this kind of testing. The test results in 726,000 IOPS for a single SAS card, higher than any card that I have tested and, in fact, higher than this card’s rating of 700,000 IOPS.

Do we again achieve double the performance of a single-controller card? Absolutely. Our 726K IOPS result is almost exactly twice the 365K IOPS from a single controller LSI card running in the same Intel server using eight drives. In addition, the average latency is 0.70ms, the same 0.70ms average latency measured for the single controller 9200 card. While the PLX PCIe switch in theory adds some latency, in practice the effect is not at all measurable.
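Little's law (average requests in flight = completion rate × average time per request) offers a quick sanity check on figures like these. The sketch below simply applies it to the IOPS and latency numbers quoted above; the function name is illustrative, and the result is an inference from the published averages rather than something Iometer reports directly.

```python
# Little's law applied to the IOPS results: L = lambda * W.
# Inputs are the averages quoted in the article; the outputs are inferred.

def implied_outstanding_ios(iops: float, avg_latency_ms: float) -> float:
    """Average number of requests in flight implied by IOPS and latency."""
    return iops * avg_latency_ms / 1000


dual_in_flight = implied_outstanding_ios(726_000, 0.70)    # LSI 9202-16e
single_in_flight = implied_outstanding_ios(365_000, 0.70)  # single controller

if __name__ == "__main__":
    print(f"Dual controller:   ~{dual_in_flight:.0f} requests in flight")
    print(f"Single controller: ~{single_in_flight:.0f} requests in flight")
    print(f"Ratio:             {dual_in_flight / single_in_flight:.2f}x")
```

On these figures the dual-controller card sustains roughly twice as many requests in flight as the single-controller card at the same average latency, which is consistent with the switch adding no measurable delay.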

Conclusion

If you need 5,000MB/Second from a single PCIe card, or more IOPS than an entire Fortune 500 datacenter circa 2005, or you just need a 16 port SAS card that fits in a low-profile slot, then the LSI 9202-16e is your only choice, and it might be worth your time to track one down. For everyone else, save time and probably money by buying two single-controller LSI cards. In fact, if you use Intel Xeon E5 processors then you have PCIe3 slots available to you and can evaluate the newest LSI cards like the 9207 that can push close to as much throughput as the 9202 (4,100MB/Second versus 5,250) and in theory just as many IOPS, albeit with fewer SAS ports available.

Great results Jeff and team. Appreciate finding gems in the rough like this. Never had heard of the card earlier. Hopefully LSI releases this or a SAS2308 version.

Wowsies! Great performance. Going to pick one up today. LSI get these out in the marketplace.

I actually have servers with lots of PCIe x16 slots so I can make use of this. With the PCIe bridge chip, do these still perform well in PCIe 2.0 x8 slots?

Yes the 9202 does perform well – very well – in an x8 slot. Testing using the Intel server, with the card in an x8 slot, throughput was 2,992MB/Second and IOPS was the same 726K as in the article.

RobotoMister,

I dream of a dual 2308 x16 card: potentially 8.2GB/S and 1.4 million IOPS. I wonder if the Atto ExpressSAS H6F0 GT might come close?

Jeff how were iops on the AMD x16 v2 slot??

Like this type of information. What’s next?

Bosstones,

I can’t report a definitive IOPS number for the 9202 in the Supermicro AMD server. My primary focus was the Intel since I knew that it would provide the best results. I did run two tests on the Supermicro but saw issues above 500K IOPS that I believe are related to the failing SSD drive that I mentioned in the article. Based on my partial LSI-9202 tests plus my earlier single-controller tests, I expect that the 9202 would be good for between 450K and 600K IOPS on the Supermicro, but again this is somewhat speculative.

Good card. Looks harder to find today versus yesterday. Any updated ETA to the market?

Would love to deploy these if they go on sale. Esp. if there is a SAS 2308 version.

Plz keep us posted on developments.

So strange you can find info about this thing from LSI but cannot buy one.

Only bad thing is that the cables are $$$ and not everyone has SFF-8644 sitting around.

Any chance you could give a bare board shot no heatsink? I see pictures online with three heatsinks instead of just that one.

That’s why this site is great. Community contributions and ya’ll find stuff nobody else does. Lots of sites cover the newest things. You cover a mix that is relevant. These are a prime example. Hope LSI listens.