With the launch of the AMD EPYC 7002 series, PCIe Gen4 has become a reality in x86 servers. You can read our in-depth AMD EPYC 7002 piece for more information about that game-changing platform. Now companies are building devices that take advantage of that new capability, and Liqid is getting in on the action. The Liqid Element LQD4500 aims to offer something decidedly different in this space.

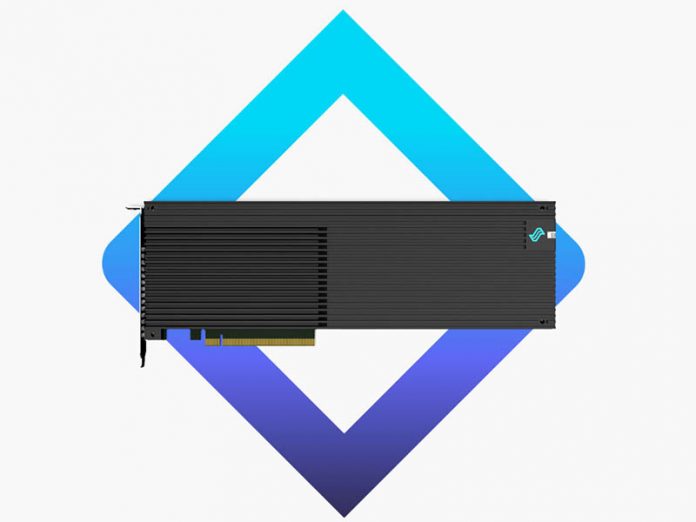

Liqid Element LQD4500 PCIe Gen4 x16 NVMe SSD

The Liqid Element LQD4500 is not a small card. It is a full-height, full-length PCIe Gen4 x16 card. Most NVMe devices today are U.2 or M.2 drives, or low-profile, half-length add-in cards. That size means buyers need to check server specifications carefully. We have a number of AMD EPYC 7002 servers in the STH lab that cannot accommodate this card's form factor.

The card includes power-loss protection, which most companies require in their high-performance storage solutions. Other important features include NVMe 1.3 support, self-encrypting drive (SED) and TCG support, and on-card RAID.

Liqid rates the card at up to 24GB/s for 128K reads and 16GB/s for 128K writes. The company also says it can handle up to 4 million random read and write IOPS. Latency is 20µs with no host caching enabled.
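To put those figures in context, here is a rough sketch comparing them with the theoretical ceiling of a PCIe Gen4 x16 slot. It assumes the standard Gen4 signaling rate of 16 GT/s per lane with 128b/130b encoding and ignores protocol (TLP) overhead, so real usable bandwidth will be somewhat lower. The 4KiB block size used to convert the IOPS figure is an assumption; the block size behind Liqid's IOPS rating is not stated here.

```python
# Rough PCIe bandwidth check for the LQD4500's rated figures.
# Assumptions (not from Liqid): PCIe Gen4 runs at 16 GT/s per lane with
# 128b/130b encoding; protocol overhead (TLP headers, etc.) is ignored,
# so this is an upper bound rather than achievable throughput.

GEN4_GT_PER_LANE = 16.0          # gigatransfers per second per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding


def pcie_gen4_gbytes_per_s(lanes: int) -> float:
    """Theoretical one-direction bandwidth in GB/s (decimal) after line encoding."""
    return GEN4_GT_PER_LANE * lanes * ENCODING_EFFICIENCY / 8


def iops_to_gbytes_per_s(iops: float, block_bytes: int) -> float:
    """Throughput implied by an IOPS figure at a given block size, in GB/s."""
    return iops * block_bytes / 1e9


slot = pcie_gen4_gbytes_per_s(16)
rated_read = 24.0   # GB/s, Liqid's 128K read figure
rated_write = 16.0  # GB/s, Liqid's 128K write figure
# Assumption: 4KiB blocks for the 4M IOPS figure (not stated by Liqid here).
random_4k = iops_to_gbytes_per_s(4_000_000, 4096)

print(f"Gen4 x16 ceiling: {slot:.1f} GB/s per direction")
print(f"Rated reads use {rated_read / slot:.0%} of it, writes {rated_write / slot:.0%}")
print(f"4M IOPS at 4KiB is about {random_4k:.1f} GB/s")
```

Under these assumptions the slot tops out around 31.5GB/s per direction, so the 24GB/s read rating would use roughly three quarters of the link, leaving comparatively little headroom.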

One of the first design wins for the Liqid Element LQD4500 is the One Stop Systems FSA4000. An OSS FSA4000 includes a 1U host server and an OSS 4UV PCIe Gen4 expansion system with up to 16 LQD4500 NVMe cards. The expansion system attaches directly to the server over two OSS PCIe Gen4 x16 links, providing 512Gbps of bandwidth.
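The 512Gbps figure can be reproduced with simple arithmetic. The sketch below assumes each OSS link is a standard PCIe Gen4 x16 link at 16 GT/s per lane and that 512Gbps is the raw signaling rate before 128b/130b encoding; it also compares the resulting host bandwidth with the aggregate of 16 cards at Liqid's 24GB/s read rating. These are back-of-the-envelope numbers, not measured results.

```python
# Back-of-the-envelope check of the FSA4000 host-link figure.
# Assumptions: each OSS link is a standard PCIe Gen4 x16 link (16 GT/s per lane),
# and 512Gbps is the raw signaling rate before 128b/130b encoding.

LINKS = 2
LANES_PER_LINK = 16
GT_PER_LANE = 16  # PCIe Gen4

raw_gbps = LINKS * LANES_PER_LINK * GT_PER_LANE   # raw signaling rate in Gbps
encoded_gbps = raw_gbps * 128 / 130               # after 128b/130b line encoding
host_link_gbytes = encoded_gbps / 8               # GB/s per direction

# Aggregate rated read bandwidth if all 16 cards hit 24GB/s simultaneously.
CARDS = 16
aggregate_card_read = CARDS * 24.0

print(raw_gbps)  # 512
print(f"{host_link_gbytes:.1f} GB/s to the host")
print(f"cards could source {aggregate_card_read / host_link_gbytes:.1f}x that")
```

On these assumptions the two links carry about 63GB/s per direction, while a fully populated chassis is rated for roughly six times that in reads, so for data that must cross to the host server, the links rather than the cards would likely set the ceiling.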

Final Words

Liqid's composable infrastructure is fascinating, but the company seems to be going back to its SSD roots with this offering. The performance specs are excellent, and if one does not need to hot-swap the field-replaceable unit (in this case, the PCIe AIC), then this makes a lot of sense. We think that, for now, users will most likely see the LQD4500 in one of the OSS FSA4000 systems given its form factor.

Data center capacities for the LQD4500 are: 7.68TB, 15.36TB, and 30.72TB. Enterprise capacities are 6.4TB, 12.8TB, and 25.6TB.
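A quick comparison of the two capacity lines shows a consistent ratio. In the SSD industry, a gap like this commonly reflects additional over-provisioning on the lower-capacity models for higher write endurance, but that is an assumption here: the article does not state Liqid's reason or the endurance ratings of either line.

```python
# Compare the data center and enterprise capacity points listed above.
DATA_CENTER_TB = [7.68, 15.36, 30.72]
ENTERPRISE_TB = [6.4, 12.8, 25.6]

for dc, ent in zip(DATA_CENTER_TB, ENTERPRISE_TB):
    ratio = ent / dc  # fraction of the data center capacity exposed by the enterprise model
    print(f"{dc:>6.2f}TB vs {ent:>5.2f}TB -> enterprise exposes {ratio:.3f}x ({1 - ratio:.1%} less)")
```

Each enterprise model exposes 5/6 of the matching data center capacity, about 16.7% less.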

Does this require PCIe bifurcation? I have an old Precision system which could use a storage expansion.

The Liqid Element controller card, with x16 PCIe 4.0 lanes, reads like an interesting product. It would be nice if I could migrate RAID 10 volumes created on an Areca 1883ix series RoC RAID controller onto the Liqid Element controller and not have to rebuild each volume. This card is the first drive controller in some time that is not intended just for proprietary enterprise-level systems, which reads like an interesting evolution from SAS2 on PCIe 2.0 x8 lanes. The hardware transition to PCIe 3.0 x8 lanes was nice but not extraordinary, since hardware for non-enterprise users did not advance to x16 lanes.

Along with whether the card will be made available as a standalone product, I would like to know:

i) is the controller card compatible with SAS2, SAS3, SATA II and SATA III or does it only function with NVMe?

ii) how many ports and port types are on each controller card?

iii) can a motherboard with 2 controller cards function in redundant pair mode (master, slave)?

iv) how many addressable devices and RAID 10 volumes can be attached to each controller card?

v) is there a matching, internal expander board in the works and if so with how many ports?

I am envisioning 2 controller cards operating in a graceful-failure, redundant mode, straight and cross daisy-chained to 4 redundant-mode internal expander boards, 2 expanders per controller.

The Liqid is not a controller product. It is a finished storage device.

@Park McGraw

You misunderstand the product completely. It is not a RAID or HBA card. It is a complete disk on a card, similar to Fusion.IO.

Holy cow! Four of these – or even just two – in a dual-CPU box and you have a really nice Data Warehouse server with a shocking amount of IO read throughput. Take my cash please!

Do you populate this with M.2 cards, or is it a sealed unit with its own storage built in?

It seems like a sealed unit implementing a PCIe switch with RAID functions, like those HighPoint cards, just with 16 M.2 drives hiding under that heatsink, I think (assuming 32TB = 16 x 2TB M.2 sticks).