Today we have our Intel Xeon D-2183IT benchmarks, and they may cause a bit of a ruckus in the industry. The Intel Xeon D-2183IT is a SoC intended for edge deployments, an application area that is set to explode. 5G network operators, for example, want the ability to run some compute at the edge. The Xeon D-2183IT is a good example of a SoC that has significantly more compute power and expansion capability in this generation to meet those needs, while keeping the same instruction set as the higher-end Xeon Scalable family that runs in the data center. We have often referred to the Intel Xeon D-2100 series as an embedded version of the Intel Xeon Gold 6100 series from a CPU performance standpoint, and in this review we are going to show why. It turns out that in our AVX-512 workloads, it performs more in line with the Intel Xeon Gold 6100 and Platinum 8100 series than with the Xeon Gold 5100 series.

Key stats for the Intel Xeon D-2183IT: 16 cores / 32 threads, 2.2GHz base and 3.0GHz turbo clocks, and 22MB of L3 cache. The chip has a 100W TDP. At $1764, it sits at the higher end of the embedded spectrum. Here is the ARK page with the feature set.

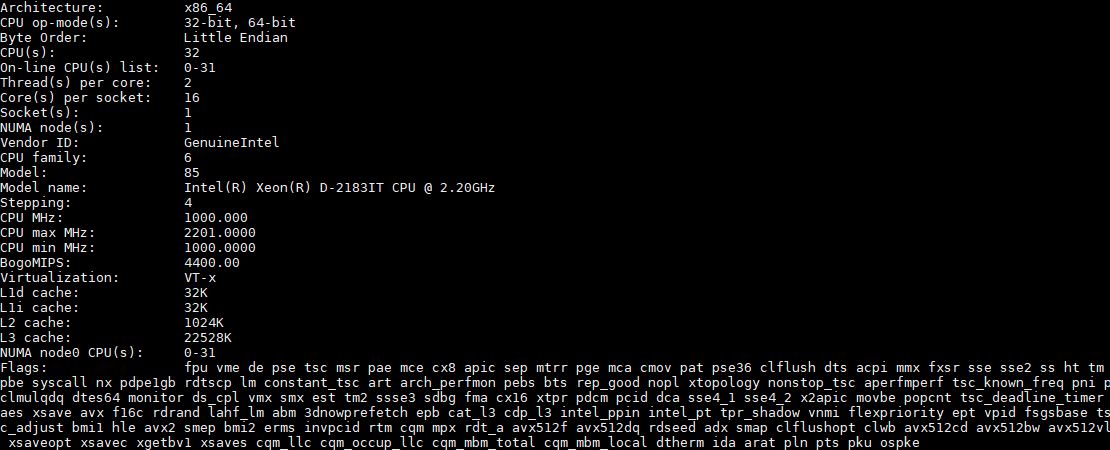
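Why the one-versus-two FMA unit question matters for the AVX-512 results can be seen with some back-of-the-envelope arithmetic on the key stats above. The sketch below computes theoretical peak FP64 throughput as cores × clock × FLOPs per cycle, assuming each 512-bit FMA unit retires one FMA (8 doubles × 2 FLOPs) per cycle. The 2.2GHz base clock is only an illustrative placeholder: the sustained AVX-512 clock is not given here and is typically lower, so these are not measured figures.

```python
# Theoretical peak FP64 throughput for an AVX-512 part (not a measurement).
# Assumptions: every core retires one 512-bit FMA per cycle per FMA unit,
# and all cores run at the clock passed in.

FP64_LANES_PER_ZMM = 512 // 64  # 8 doubles per 512-bit register
FLOPS_PER_FMA_LANE = 2          # one multiply plus one add


def peak_fp64_gflops(cores: int, clock_ghz: float, fma_units: int) -> float:
    """Return peak FP64 GFLOPS for the given core count, clock and FMA units."""
    flops_per_cycle = FP64_LANES_PER_ZMM * FLOPS_PER_FMA_LANE * fma_units
    return cores * clock_ghz * flops_per_cycle


if __name__ == "__main__":
    # 16 cores; 2.2 GHz base clock used only as a placeholder for the AVX-512 clock.
    for units in (1, 2):
        print(f"{units} FMA unit(s): {peak_fp64_gflops(16, 2.2, units):.1f} GFLOPS")
```

At the same clock, a second FMA unit doubles the ceiling (roughly 563 vs. 1126 GFLOPS with these placeholder inputs), which is the gap that separates Gold 5100-class from Gold 6100-class behavior in FMA-heavy code.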

Here is what the lscpu output looks like for the chips:

You can see avx512f, avx512dq, avx512cd, avx512bw, and avx512vl, which are hallmarks of the Skylake family of Xeon processors. As we are going to see, the AVX-512 performance is not what we were expecting.
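For readers who want to check their own systems for the same AVX-512 subsets, here is a minimal sketch, assuming a Linux host where the `flags` line of `/proc/cpuinfo` carries the same list that `lscpu` prints under "Flags". The helper names are hypothetical, and note that these flags do not reveal whether a part has one or two 512-bit FMA units.

```python
from pathlib import Path

# The AVX-512 subsets listed above, as they appear in the Linux CPU flags.
SKYLAKE_AVX512_FLAGS = {"avx512f", "avx512dq", "avx512cd", "avx512bw", "avx512vl"}


def missing_avx512_flags(flags_line: str) -> set[str]:
    """Return the Skylake AVX-512 subsets absent from a space-separated flags string."""
    present = set(flags_line.split())
    return SKYLAKE_AVX512_FLAGS - present


def read_cpu_flags(path: str = "/proc/cpuinfo") -> str:
    """Return the first 'flags' line from /proc/cpuinfo, or '' if unavailable."""
    cpuinfo = Path(path)
    if not cpuinfo.exists():
        return ""
    for line in cpuinfo.read_text().splitlines():
        if line.startswith("flags"):
            return line.split(":", 1)[1]
    return ""


if __name__ == "__main__":
    missing = missing_avx512_flags(read_cpu_flags())
    if missing:
        print(f"missing: {sorted(missing)}")
    else:
        print("all Skylake AVX-512 subsets present")
```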

Test Configuration

Here is our basic test configuration for single-socket Xeon Scalable systems:

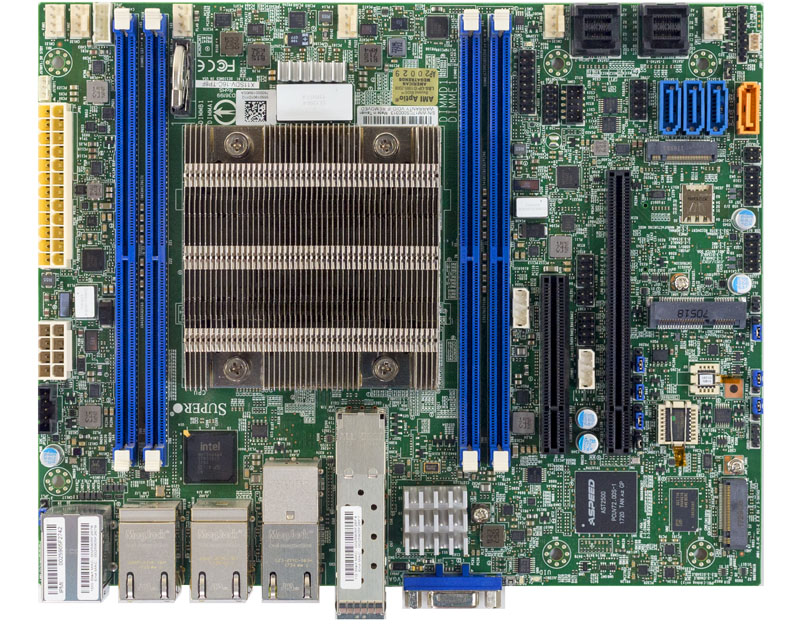

- Motherboard: Supermicro X11SDV-16C-TP8F

- CPU: Intel Xeon D-2183IT (embedded SoC)

- RAM: 4x 16GB DDR4-2400 RDIMMs (Micron)

- SSD: Intel DC S3710 400GB

- SATADOM: Supermicro 32GB SATADOM

The CPU itself supports up to 512GB of RAM, in either 4x 128GB or 8x 64GB configurations. One feature we would have liked to see on the Intel Xeon D-2183IT is DDR4-2666 support. On the other hand, compared to the previous-generation, publicly available Xeon D-1500 parts, there are two more memory channels, so our four-DIMM configuration runs one DIMM per channel.
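The effect of the extra channels, and of the missing DDR4-2666 support, can be put in numbers with the usual theoretical peak formula: channels × transfer rate × 8 bytes per 64-bit transfer. This is a sketch of peak figures only, not measured bandwidth; the two-channel line reuses DDR4-2400 purely for a like-for-like channel comparison, and the DDR4-2666 line is hypothetical since this chip does not support that speed.

```python
# Theoretical peak DRAM bandwidth = channels x transfer rate x bus width.
# A DDR4 channel moves 64 bits (8 bytes) of data per transfer; ECC bits excluded.

BYTES_PER_TRANSFER = 8


def peak_bandwidth_gbs(channels: int, mt_per_s: int) -> float:
    """Peak bandwidth in GB/s (10^9 bytes/s) for `channels` running at `mt_per_s` MT/s."""
    return channels * mt_per_s * BYTES_PER_TRANSFER / 1000


if __name__ == "__main__":
    print(f"4 ch DDR4-2400: {peak_bandwidth_gbs(4, 2400):.1f} GB/s")  # configuration as tested
    print(f"2 ch DDR4-2400: {peak_bandwidth_gbs(2, 2400):.1f} GB/s")  # two channels, same speed
    print(f"4 ch DDR4-2666: {peak_bandwidth_gbs(4, 2666):.1f} GB/s")  # hypothetical for this chip
```

Doubling the channel count doubles the theoretical peak (76.8 vs. 38.4 GB/s at DDR4-2400), while DDR4-2666 would add only about 11% on top of that.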

Intel does not necessarily see the Intel Xeon D-2100 series as competition for its mainstream Xeon Scalable CPUs, which makes sense. At the same time, we are going to use the mainstream CPUs as comparison points, since a common question we see is how edge compute performance compares to mainstream data center processor performance. We are also including comparisons to the previous-generation Intel Xeon D-1587 and to the Intel Atom C3000 series, which sits below the D-2183IT in Intel's SoC family.

Don’t I remember reading that the dual-FMA Gold/Platinum SKUs drop the AVX clock rate by half due to power/thermal issues? Maybe these are single-FMA parts that maintain full AVX clocks thanks to fewer cores and lower clocks? Could that explain the FMA workload performance here?

Haven’t tried it myself, but the Intel 64 and IA-32 Architectures Optimization Reference Manual covers this:

https://software.intel.com/sites/default/files/managed/9e/bc/64-ia-32-architectures-optimization-manual.pdf

Section 15.20, “Servers with a Single FMA Unit”:

“The following example code shows how to detect whether a system has one or two Intel AVX-512 FMA units. It includes the following: …”
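The manual's detection code is not reproduced here, but the reasoning behind a two-loop test can be illustrated with a simplified port model. It assumes one loop issues only 512-bit FMAs and the other mixes equal numbers of FMAs and 512-bit shuffles (that pairing is an assumption of this sketch), that 512-bit FMAs run on port 0 and, when a second unit exists, also on port 5, and that 512-bit shuffles run only on port 5. Dependency chains and front-end limits are ignored, and the function names are hypothetical.

```python
import math

# Simplified Skylake-SP 512-bit port model (an assumption, not Intel's code):
#   port 0: 512-bit FMA
#   port 5: 512-bit shuffle, plus 512-bit FMA only on parts with a second FMA unit
# Dependency chains, front-end and memory limits are ignored.


def min_cycles(n_fma: int, n_shuffle: int, fma_units: int) -> int:
    """Lower bound on cycles to issue the given independent 512-bit uops."""
    if fma_units == 1:
        # FMAs are pinned to port 0 and shuffles to port 5.
        return max(n_fma, n_shuffle)
    # Shuffles are pinned to port 5; FMAs can fill either port.
    return max(n_shuffle, math.ceil((n_fma + n_shuffle) / 2))


def mixed_to_fma_only_ratio(n: int, fma_units: int) -> float:
    """Time of an n-FMA + n-shuffle loop relative to an n-FMA-only loop."""
    return min_cycles(n, n, fma_units) / min_cycles(n, 0, fma_units)


if __name__ == "__main__":
    for units in (1, 2):
        print(f"{units} FMA unit(s): ratio {mixed_to_fma_only_ratio(1000, units):.2f}")
```

Under this model the mixed loop takes about as long as the FMA-only loop on a single-FMA part (ratio near 1), but about twice as long on a dual-FMA part (ratio near 2), because the FMA-only loop can use both ports only when the second unit exists. Real measurements would also be affected by AVX-512 clock behavior.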

Michael – they do lower clocks, but not by enough to explain what we are seeing, especially on the Gold SKUs.

Kyle Siefring – I tried a quick gcc compile and it failed; I will try icc later. Workloads that I know use AVX-512 (e.g. Linpack) are performing much better than one would expect, which is a similar idea to the two loops.

“Still, months after the AMD EPYC 3000 series launch they are still virtually impossible to find in a channel board in the market.”

AMD, we’re waiting here, guys. We can’t support the underdog if the product isn’t on shelves.

Interesting results re AVX-512 – thanks for posting the findings!

Are you able to run a full instruction latency/throughput test?

Agner has some test scripts here: http://www.agner.org/optimize/#testp

If you’re able to run Windows, you can also try AIDA64, whose results could be compared against all the details collected here: http://users.atw.hu/instlatx64/

Also, are you able to post the clock rate during AVX-512 workloads?

According to the information here (https://twitter.com/InstLatX64/status/963046768849113088), the Xeon D throttles slightly less than the Xeon Scalable parts, but not by much.

It was initially reported that some Core-X models had only one 512-bit FMA unit, but this turned out to be incorrect, so I wouldn’t be too surprised if there’s a reporting error here too.