Dear Intel: Do it.

For those who have not seen it, in April 2023 Intel published a whitepaper describing a simplified instruction set architecture it is calling X86-S. The big change is that Intel is proposing dropping native 16-bit and 32-bit support from its instruction set. As the title says, Intel should do this, but there is a major cost involved.

Intel X86-S Streamlined 64-bit Instruction Set: A Perspective

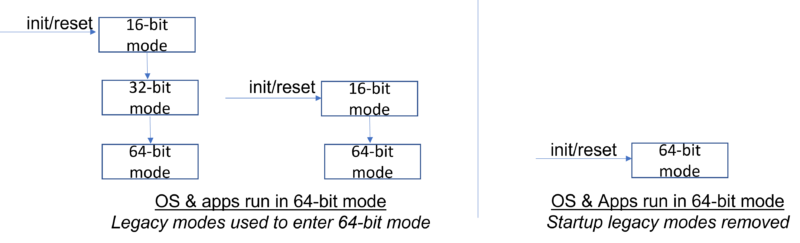

According to the Intel blog post and 46-page whitepaper, the main goal of X86-S is to clean up the legacy 16-bit and 32-bit operating modes. Currently, to get into 64-bit mode, X86 CPUs have to pass through 16-bit and 32-bit operating modes.

If you are reading this on a 64-bit system running a 64-bit OS, you may think this transition has already happened. In the x86 world, however, backward compatibility is a big deal, so those legacy modes are still there.

When we started STH in 2009, the industry was in the middle of the 32-bit to 64-bit OS transition. There were real concerns because 32-bit OSes were more efficient with small amounts of memory. That is a big reason many Arm projects, like the Raspberry Pi, were mainly 32-bit for a long time. Below roughly 3GB-4GB of RAM, 32-bit can make sense. For modern x86 CPUs, 4GB is the low end, and when we see systems that ship with 4GB, even those with only older E-cores, we suggest they should have 8GB these days. An 8GB DDR4 SODIMM is around $17-18 at the time we are publishing this.

With Linux and Microsoft pushing 64-bit for over a decade, it is time, as the paper suggests, to trim legacy instructions and functionality from X86.

Since backward compatibility is a big deal, the proposed solution is half-serviceable: Intel proposes using VMs to provide legacy 16-bit and 32-bit support. Another thought: why bother? 2023 hardware is more than capable of running legacy code, and today’s processors will realistically remain in the field en masse until 2030. For any applications that need to run on bare metal, why not just use today’s hardware as part of an upcycling story?

A transition tomorrow means that those using today’s hardware have plenty of time to either move most applications to virtual machines or simply leave them on existing hardware platforms.

In the meantime, streamlining X86 helps solve tomorrow’s problems more efficiently, rather than holding that work back to keep enabling legacy hardware. To put this in perspective, keeping two-generations-old 16-bit compatibility in 2023 is like SpaceX spending time figuring out how to strap a horse saddle to a rocket’s flight seat. If folks still want to ride horses, they can use horses and saddles. We can look back on 16-bit compatibility through ESG-themed upcycling, much as we do with nostalgic equestrian pursuits.

If this were 2007-2010, when that transition was in full force, my opinion would be very different, but we are a decade to a decade-and-a-half past the transition’s major push.

With all of that said, some thought should be given to what this means. While Intel’s core competencies, such as notebook, desktop, and server CPUs, may all use 64-bit these days, that is far from a given in the IoT space. There, 32-bit can make sense from a power and cost perspective. At the same time, that has not been Intel’s focus. Instead, E-cores are getting faster and Quark continues to be sunset. (Fun fact: you can buy new servers with 32-bit Intel Quark cores in the Lewisburg-generation PCH.)

Final Words

Intel would effectively be surrendering IoT for X86 to Arm and RISC-V with this move. Perhaps it has already done so. This may not be the biggest change in the world to many, and there are still 32-bit apps out there. At the same time, we live in an era where 64-bit for client and server devices is mainstream, so those parts of the market are now due for a transition. The only question is when.

If you are one of the folks concerned about this, check out Intel’s page and white paper linked above.

64-bit doesn’t always require wasted memory. The Apple Watch, for example, transitioned to Arm’s 64-bit ISA but kept 32-bit pointers. That didn’t require any hardware changes either; it is purely an ABI and compiler toolchain problem. And x86-64 Linux has the equivalent feature, called the “x32” ABI.

They aren’t making it very clear, but they’re not proposing to drop support for 32-bit userspace.

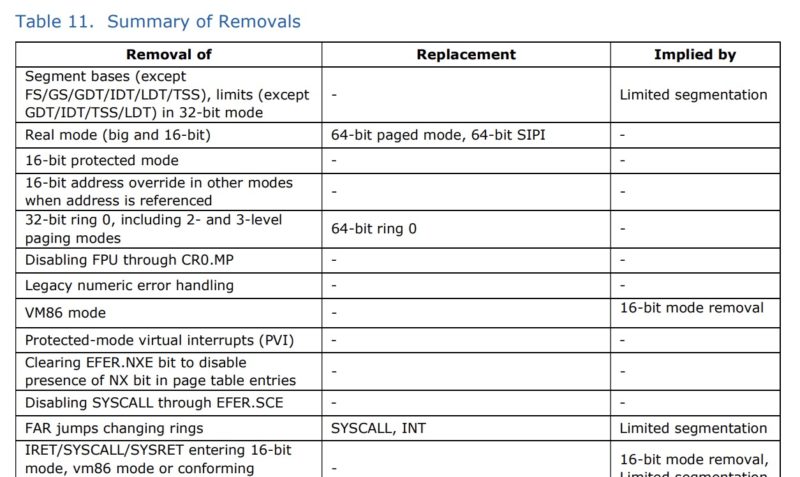

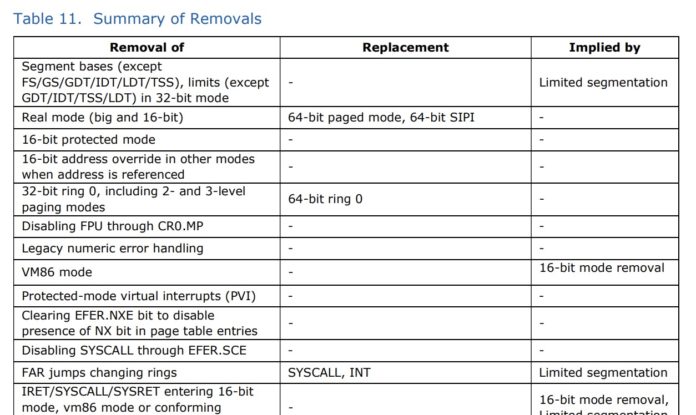

For example, they say they’ll be “Using the simplified segmentation model of 64-bit for segmentation support for 32-bit applications, matching what modern operating systems already use,” and “32-bit ring 0 [kernel mode] is not supported anymore and cannot be entered”.

The removal of 16-bit likely won’t be missed.

Removing 32-bit kernel mode will require some work in various environments. As mentioned, 32-bit mode can be more efficient with smaller memory sizes, and this also held true for various virtual machines that didn’t need more than 4GB of RAM. Generally speaking, 64-bit alternatives are out there and have been for some time; there was simply little need to migrate to a new 64-bit VM over time. Overall it is a positive move, but it will be a minor pain for some people.

The bigger headache is the removal of the archaic x87 FPU. Legacy 32-bit userspace applications were originally built assuming it was present, so even if 32-bit applications can run on a 64-bit x86-S host, they may still not work. This is especially true for custom applications in corporate environments, since optimizing for the latest and greatest ISA extensions is only done when there is a business need. It will boil down to when those applications were compiled and whether the compiler at the time defaulted to SSE2 for floating-point code.

I would also hope that x86-S elevates AVX2 to the baseline floating-point and SIMD instruction set. Much like SSE2 was made the default in the original 64-bit mode, this would once again create a solid baseline for what the ISA supports.

Once again, it’s not clear, but I don’t think they are removing support for x87. The only thing I can see in the PDF that might support complete removal is “Always long mode, no legacy FPU modes”, but the details indicate that the “legacy FPU modes” are the ones an OS might use to control an external FPU (like the 387 and earlier).

The pseudocode in section 3.10.3 includes handling of x87 state, which you would only need if x87 code might be running.

I probably ought to have added earlier that the x86-64 Linux kernel has always supported a fully 32-bit userspace on a 64-bit kernel, which limits the porting and memory problems for embedded Linux.

There is no direct replacement for the x87 FPU’s 80-bit extended-precision arithmetic in the SSE/AVX instruction sets, and that feature is still used, so x87 will stay for some time.

There’s a lot going on in this document, and some very funny wording.

It may well be my own background biasing me here, but I read this as a response to the bugs that delayed Sapphire Rapids for so long.

If I put myself in Intel’s shoes and look at the impacts of what they’re proposing, the biggest change I see (in dollar terms) is a reduction in the cost and complexity of security audits, especially around the early stages of the boot process.

That said, it’s a complex topic. I’d love to hear what other people think.

Note that 32-bit userspace will basically remain the same, so its memory efficiency will still be there.

I don’t think Intel has any product in the space where this transition would have any effect at all. Yes, there is the Intel Quark, but that is basically just the Pentium ISA…

Even Arm has already moved to 64-bit for a lot of IoT devices. Of course, it depends on the device: something like the Cortex-M series will likely never move to 64-bit, but we already see a lot of devices running in 64-bit mode on the A53 and A55, and we will likely eventually see a successor to the A510 that is 64-bit only.

So the only important thing is, well, not to break 32-bit userspace compatibility. I would personally be fine if it broke x87 compat.

“Intel would effectively be surrendering IoT for X86 to Arm and RISC-V with this move. Perhaps it has already done so.”

This battle was lost a decade ago. The hilarious thing is that the place where Intel is most competitive on process is simple little cores.

A Lenovo mailer this morning touted a new product: nice, smaller Intel-based notebooks with a 2560×1600 13.3″ screen, 16GB, TWO INTEL REAL CORES and EIGHT INTEL AREA-EFFICIENT CORES.

I bought a Lenovo 14″ in 2017… it had two real Intel cores too.

Thank you for sharing this insightful perspective on the Intel X86-S Streamlined 64-bit Instruction Set. It’s fascinating to see how Intel continues to optimize and enhance their architecture. This thoughtful analysis provides a clear understanding of the potential benefits it brings to the computing world. Great work!