HPE ProLiant MicroServer Gen10 Plus NIC Options

As standard, the HPE ProLiant MicroServer Gen10 Plus comes with a high-quality 4-port Intel i350-based 1GbE NIC. While this is a high-end 1GbE solution, sometimes one needs higher network speeds. We are going to discuss the 2.5GbE, 10GbE, 25GbE, and some of the higher-speed options.

Inexpensive 2.5GbE Solutions

If you simply want a low-cost higher-speed port, 2.5GbE networking is low power, uses existing cabling, and is very inexpensive. We reviewed a number of NICs such as the Syba Dual 2.5 Gigabit Ethernet Adapter and the TRENDnet 2.5Gbase-T PCIe Adapter as internal options.

We also specifically tried the inexpensive CableCreation USB 3 Type-A 2.5GbE Adapter with the MicroServer Gen10 Plus.

We also tried the Plugable 2.5GbE adapter. You can read our full review here, but we would advise against buying it for the MSG10+ if it costs more than the CableCreation unit, since it requires a Type-C to Type-A adapter.

Overall, we wish that the MicroServer Gen10 Plus had 2.5GbE built in, but the upgrade options in this class are extremely inexpensive.

10GbE Solutions

If you are going with 10Gbase-T, there are a few items to keep in mind. The first is power and heat. If you are using the VMware HCL as your guide, we suggest looking at an actively cooled 10Gbase-T NIC. If you are more flexible and using Windows or Linux, the Aquantia-based cards may be the best option as they offer relatively low power and heat operation. Do not get anything older than an Intel X540-T2 NIC for 10Gbase-T.

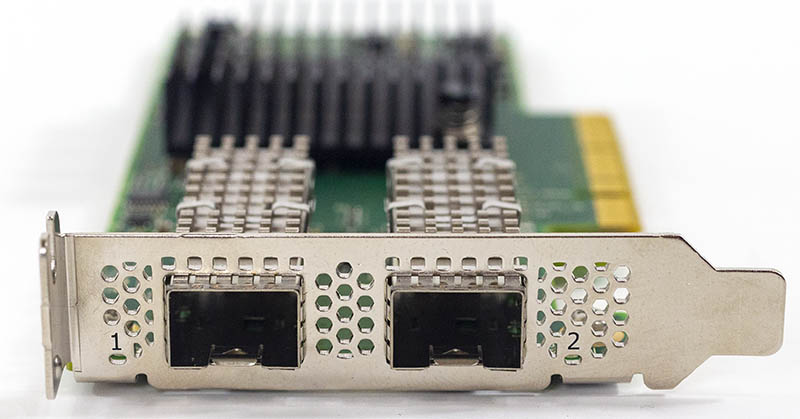

When it comes to SFP+, there are tons of great options with cards that use under 10W each. It seems as though <10-12W cards tend to fare better with the airflow provided by the HPE ProLiant MSG10+.

One can also add the HPE 867707-B21 dual-port SFP+ solution, which is an official option. Beyond that, most 10GbE network solutions work well. For quad-port NICs, we like the Intel X710-DA4 for its low heat and compatibility with a number of solutions. Also, adding a quad-port NIC allows one to directly network up to four nodes in some edge clustering cases, which avoids the need for a switch.
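As a rough illustration of the switchless idea, here is one way the addressing for direct links could be planned. This is only a sketch under assumptions: it reads "up to four nodes" as one server with a quad-port NIC cabled directly to four peers, and the `10.10.0.0/28` pool, the port names, and the `plan_direct_links` helper are all hypothetical placeholders rather than anything from our test setup.

```python
import ipaddress

# Hypothetical private range set aside for the direct links (an assumption).
LINK_POOL = ipaddress.ip_network("10.10.0.0/28")
# Placeholder port names; real interface names depend on the OS and driver.
PORTS = ["port1", "port2", "port3", "port4"]


def plan_direct_links(pool, ports):
    """Give each NIC port its own /30 with one address for each end of the cable."""
    plan = {}
    for port, subnet in zip(ports, pool.subnets(new_prefix=30)):
        local, peer = subnet.hosts()  # a /30 has exactly two usable addresses
        plan[port] = {
            "subnet": str(subnet),
            "local": str(local),
            "peer": str(peer),
        }
    return plan


for port, link in plan_direct_links(LINK_POOL, PORTS).items():
    print(port, link)
```

Keeping each cable in its own small subnet means the operating system can pick the right port for each peer without any bridging, which is the main thing a switch would otherwise be providing.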

We suggest getting SFP+ solutions here as they offer a low-cost and low-power path to 10GbE speeds. If you need 10Gbase-T, you can use SFP+ to 10Gbase-T adapter modules as necessary to accomplish the media conversion.

25GbE Solutions

25GbE is more interesting. One gets a balance of performance and power. While 25GbE is the current data center trend, we must remember that the MSG10+ is designed as an edge device. Frankly, in a server designed to utilize four rotating hard drives, 25GbE is likely too much network bandwidth. Perhaps the most used option here is the Mellanox ConnectX-4 Lx card. These are everywhere. You will want the low-profile bracket with these cards.

We also tested compatibility more broadly, including Broadcom-based 25GbE adapters.

The Intel XXV710 25GbE solutions worked great as well.

We also tried an HPE ProLiant Gen8/9 quad 25GbE solution based on QLogic NIC IP. This is a really interesting solution since it offsets the higher power of the NIC with an active fan to aid in cooling.

25GbE is completely doable in the HPE ProLiant MicroServer Gen10 Plus. Realistically, however, most installations are not going to have the disk throughput to surpass 10GbE speeds. Therefore, it may make sense to use lower-power and lower-cost NICs.
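To put rough numbers on the disk throughput point, the sketch below compares what four hard drives could deliver against different link speeds. The 250 MB/s per-drive figure is an assumed, illustrative sequential rate, not a measurement from this guide, and the `link_utilization` helper is a hypothetical name. Real arrays will usually come in lower once RAID, file system, and protocol overheads are included.

```python
# Assumed sustained sequential throughput of one 3.5" hard drive, in MB/s.
# This is an illustrative figure, not a measurement from this guide.
HDD_MB_PER_S = 250
DRIVE_BAYS = 4  # the MSG10+ is designed around four hard drives


def link_utilization(drives: int, mb_per_s_each: float, link_gbps: float) -> float:
    """Fraction of a network link the drives could fill, ignoring all overheads."""
    aggregate_gbps = drives * mb_per_s_each * 8 / 1000  # MB/s to Gb/s
    return aggregate_gbps / link_gbps


for link_gbps in (1, 2.5, 10, 25):
    share = link_utilization(DRIVE_BAYS, HDD_MB_PER_S, link_gbps)
    print(f"{link_gbps:>4} GbE: drives could fill {share:.0%} of the link")
```

Under these assumptions the four drives top out below a single 10GbE link, which is why 25GbE mostly pays off when SSDs or cached workloads are involved.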

A Word on 40/100GbE

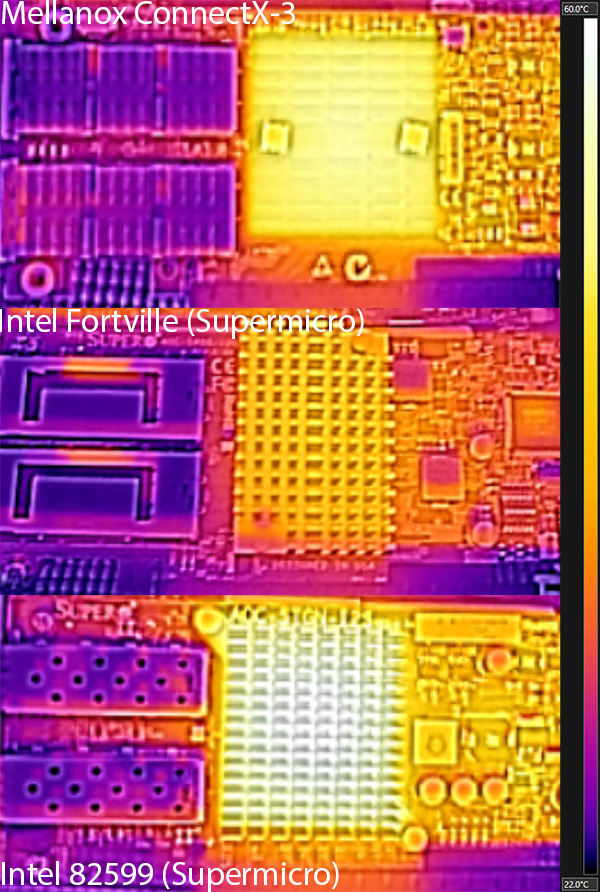

For those wondering whether 40GbE and 100GbE can be used, we tried a number of NICs. Heading to the higher-end NICs often means 15W or higher power consumption, and these cards can heat up. From our old Intel Fortville 40GbE Lower Power Consumption and Heat article, the XL710 NICs are likely one of the better options for 40GbE, but even these cards can generate a lot of heat.

At 100GbE, using Mellanox ConnectX-5 VPI 100GbE and EDR InfiniBand cards, we saw the chassis fan hit higher-power modes as it struggled to keep the NIC cool, even without passing traffic. Our advice is that while 100GbE can be done, this is probably not the best option for passively cooled NICs. One of the advantages of the HPE / QLogic quad-port 25GbE NIC is that it offers active cooling.

Getting Out There: QNAP QM2 10Gbase-T Plus M.2 SSD

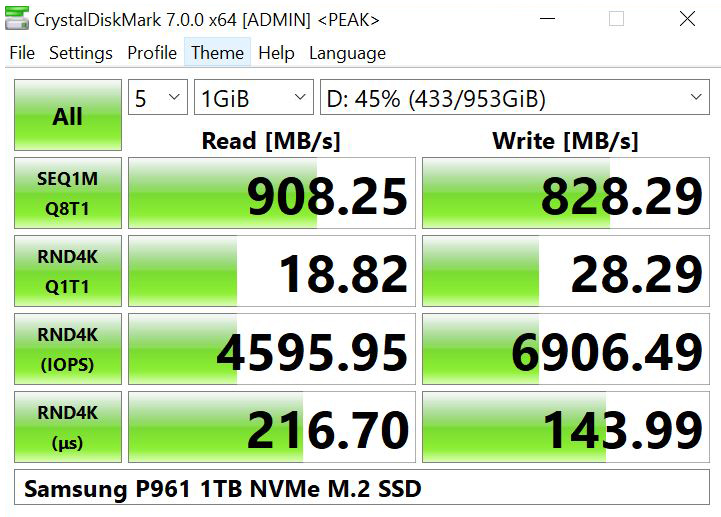

Perhaps the complete wildcard here is the QNAP QM2-2P10G1TA. This is a card designed for QNAP NAS units, but it can be used in other servers as well. It has a single RJ45 port for 10Gbase-T via an Aquantia chipset. Onboard are also two NVMe slots. This allows one to install two NVMe drives and get a 10Gbase-T port on a single PCIe slot.

There are some major drawbacks to this design. First off, it is a PCIe 2.0 x4 card. That means that the entire card has about half of the bandwidth available to a single PCIe 3.0 x4 M.2 NVMe drive.

This card uses an onboard PCIe switch architecture. That means the 10Gbase-T NIC and the two M.2 NVMe SSDs all connect to the switch chip and then share a PCIe Gen2 x4 backhaul to the system, even though the PCIe Gen3 x16 slot in the MSG10+ has plenty of bandwidth.

Where it gets even more restrictive is that each M.2 NVMe SSD slot gets only a PCIe 2.0 x2 connection to the switch chip. As a result, using a modern PCIe Gen3 x4 SSD in the slot yields around a quarter of the performance that one would get from a native PCIe 3.0 x4 slot, and that is the best-case scenario. In the worst case, transferring data to and from an SSD over the network, data needs to be copied from the SSDs to memory and then back out through the NIC. Doing that in both directions demands more bandwidth than a PCIe 2.0 x4 link can handle.
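The "half" and "quarter" figures above fall out of the per-lane PCIe rates. The sketch below works them through using the published signalling rates and line encodings for PCIe 2.0 and 3.0. These are theoretical one-direction numbers before protocol overhead, and the helper name `pcie_bandwidth_gbytes` is our own illustrative choice.

```python
# Per-lane signalling rate (GT/s) and line-encoding efficiency per PCIe generation.
PCIE_GENERATIONS = {
    2: (5.0, 8 / 10),     # PCIe 2.0: 5 GT/s with 8b/10b encoding
    3: (8.0, 128 / 130),  # PCIe 3.0: 8 GT/s with 128b/130b encoding
}


def pcie_bandwidth_gbytes(gen: int, lanes: int) -> float:
    """Theoretical one-direction bandwidth in GB/s, before protocol overhead."""
    gt_per_s, efficiency = PCIE_GENERATIONS[gen]
    return gt_per_s * efficiency * lanes / 8  # bits to bytes


card_uplink = pcie_bandwidth_gbytes(2, 4)  # QM2 card's link to the host
m2_slot = pcie_bandwidth_gbytes(2, 2)      # each M.2 slot behind the switch chip
native_m2 = pcie_bandwidth_gbytes(3, 4)    # a native PCIe 3.0 x4 M.2 slot
ten_gbe = 10 / 8                           # 10GbE line rate in GB/s

print(f"Card uplink (Gen2 x4):  {card_uplink:.2f} GB/s")
print(f"Each M.2 slot (Gen2 x2): {m2_slot:.2f} GB/s")
print(f"Native M.2 (Gen3 x4):   {native_m2:.2f} GB/s")
print(f"Uplink vs native M.2:   {card_uplink / native_m2:.0%}")
print(f"M.2 slot vs native M.2: {m2_slot / native_m2:.0%}")
# One SSD slot at full rate plus the NIC at line rate, in the same direction:
print(f"SSD + NIC demand:       {m2_slot + ten_gbe:.2f} GB/s")
```

Even a single SSD slot running flat out alongside the 10GbE port at line rate asks for more than the Gen2 x4 uplink offers in one direction, before the second SSD is considered.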

This card is also over $230, which means that by the time you fill it with drives, it is more expensive than purchasing a dual-port SFP+ NIC plus a USB 3.2 Gen1 boot drive and a Gen2 data SSD.

As a simple yet somewhat exotic solution, this works, but it is neither the lowest-cost nor the highest-performing solution.

Final Words

In this guide, we have covered a lot of ground. Hopefully, this gives you some sense of what is possible with the HPE ProLiant MicroServer Gen10 Plus. The external 180W power supply and lack of internal storage connectivity limit what one can do with the machine, but there are plenty of ways to work around these limitations and customize these small-footprint servers exactly how you need them.

With a bit of creative work, we have been able to test just how far one can push the MSG10+. For example, an Intel Xeon E-2288G will work in the system, but it draws too much power to be practical. This took an enormous amount of testing, and we hope the community is able to take the work we have done and expand on it. Since we mean for this to be a resource, if we find new hardware recommendations in the future, we will update this piece.

This is just unreal. A–frickin’-mazing

Amazing work!

My god. You might’ve just single handedly better documented the Gen10 Plus than the community did with the Gen8 we all love.

And screw the man. That iLO Advanced should work w/o enablement kit on a shared port. You’ve created a system where you’ve got a paid iLO Advanced license but you can’t access the features of it.

Great guide, thanks a lot!

Longtime lurker. I don’t usually comment but I had to say this is fab.

Awesome article, one of the best for homebrewers that frequent This site

When using the HPE Quad 25GbE Qlogic Adapter, can it still work when the adapter is set to 4 * 25 and inserted into the 10GbE port?

Are there any options for higher-power supply bricks (maybe not from HP but third party) that will work with the Gen10 Plus to safely run a possible full config (all 4 drives + max RAM (64GB) + PCIe 10GbE and the E-2288G CPU)? Going all out here!

Thank you for all the hard Work getting all this info recorded.

Hi Jadehawk – we will be updating this piece with that information. We do not recommend using a higher wattage power brick. See https://forums.servethehome.com/index.php?threads/hpe-proliant-microserver-gen10-plus-ultimate-customization-guide.28013/#post-258541

Chitose Ikeda – It will negotiate the 25GbE links to 10GbE as well if that is what you are asking.

I currently have a Gen8 with 4x8TB drives in the bays and a SATA SSD in the ODD bay to boot from.

Are you saying I could move my 4x8TB’s over, and buy a StarTech PEX4M2E1 and an NVMe drive to boot from, and it would work fine?

Love the sound of this, but want to confirm before I spend nearly £700 :D

Thanks for giving practical advice not doing some dumb $10k of storage in a $600 server video.

Here’s hoping someone updates the QNAP QM2. In the meantime, can the four gigabit ports be teamed?

Thanks for this ultimate guide! But, what about iGPU support? Is that available in OS?

Noice!

For the ECC 32GB Stick do you have the part number?

Thats the only thing preventing me from buying one of those for a remote location.

Awesome article :O. Maybe it’s time to retire my gen8 and buy one of these

Super Article, thank you!!. You mentioned in the comparison with the MS10 that the MS10+ does not make use of the GPU in the stock Pentium CPU due to Firmware/Bios restrictions. Is that the same for all CPUS? Wondering if I could make use of the HW acceleration in the Xeon E-2246G and let the server run some rendering work over night.

Any chance to let us know the size of the fan? Not even HP support was able to tell me :-) I guess 80mm

Very nice. I went to the online VMware Hardware Compatibility Guide. I filtered for ESXi 7.0 and, in the Keyword text field, entered MicroServer. The search came up with two entries for the MicroServer Gen10:

1. Intel Xeon E-2200 (4 or 6-core) Series

2. Intel Xeon E-2200 (8-core) Series

I find it very interesting that currently, HPE only offers the 4-core version of the Xeon (in addition to the Pentium CPU, which isn’t compatible with VMware). So, I wonder why the VMware compatibility guide mentions 6-core and 8-core. Are these CPUs planned for the future? Also, you had a “top pick” for CPUs. I assume that would work fine with VMware as well?

We covered a bit of this in the Pentium G5420 review, but it is a reduced instruction set chip. That is why you see some differences.

On the E-2246G, that is a 6 core part. I hope HPE looks at putting that into a MSG10+ as a virtualization platform.

Hi There,

wonderful article!

I’m really thinkin about getting one Gen10plus.

But i would like to Add maximum RAM to it – but when i’m searching for “32GB DDR4 PC4-21300 / DDR4-2666, CL19, Dual Ranked, x8, Unbuffered ECC”, i can only find registered modules.

Can you please post a link to the RAM you used?

Thank you!

Great review – thanks!

Is it possible to go from stock-Pentium-CPU to an Xeon E-2234, or are there any restriction because of differences in cooling or chipset?

mantis – We have not tested the Pentium model. I did, however, ask the HPE product team and the units are identical except for the CPU and memory load-out. So if you buy the Pentium box, you should be able to remove the Pentium (sell it if needed) and replace it with a Xeon E-2234 without issue. I would suggest if you go Xeon E-2234 you probably want to add RAM as well since 8GB for 8 CPU threads is a bit low by modern standards.

Which RAM did you use for the 64GB configuration? I bought a stick and the system boots but throws an error for an unsupported RAM configuration.

Could you provide the product number of the ram sticks that you tested?

Thanks for nothing Patrick. You are clearly missing your audience here…

For all who are interested: 80x80x38mm measurements of the fan. Apparently too loud for the living room

Is it possible to get the product, brand or model number of the 32GB RAM you used? I know that past MicroServers are very picky about RAM. Get the wrong one and you’ve made a big investment in nothing.

Thank you!

@James Crawford

@Karakal

@Max

Reading Patrick’s description of the RAM used I could find these part numbers (@Patrick: please correct me if I’m wrong)

16GB unbuffered non-ECC – Crucial CT16G4DFD8266

16GB unbuffered ECC – Crucial CT16G4WFD8266

32GB unbuffered non-ECC – Crucial CT32G4DFD8266

32GB unbuffered ECC – Micron MTA18ADF4G72AZ-2G6B2

Hope this helps,

Bart

Thank you, Bart Welvaert.

So, if i understand correctly, also this RAM should work?

Samsung – 32GB -DDR4-2666MHz-CL19-unbuffered ECC: M391A4G43MB1-CTD

https://www.samsung.com/semiconductor/dram/module/M391A4G43MB1-CTD/

I couldn’t find the Micron MTA18ADF4G72AZ-2G6B2 to buy.

Is samsung ok for server ram?

Bart, thank you for the help!

Thanks for the great review.

Is there any performance impact if the two memory slots don’t use the same size memory? For example, can this configuration work: 8GB + 32GB or 16GB + 32GB?

Also, how hard is it to get at and replace the CPU? Just the standard remove heat sink, replace CPU, apply some thermal paste and put the heat sink back on?

Randman we did not try, but it should be similar to what one sees on other desktop and server (Xeon E-2100/ E-2200 platforms.)

On the heatsink, that is the right idea. Open the chassis. Undo the four screws and there is a standard socket underneath.

Hi.

Out of interest, does anyone know if you can boot from an NVMe PCIe add-in card? I need to have my boot system and certain VMs on an SSD and I don’t want to take an HDD slot up.

I’m looking to get this server as well as the Intel Xeon E-2246G. Thanks for checking out these CPUs, and I can sleep knowing my server won’t catch on fire :-). Great article!

A couple of questions if I were to upgrade the CPU to the Intel E-2246G:

1. I like that the E-2246G supports Quick Sync. Is it safe to assume that the E-2246G’s Quick Sync functionality will be available from the OS (and any integrated graphics in the server won’t interfere with the CPU’s Quick Sync)?

2. The E-2246G has integrated graphics – would the DisplayPort output of the server take advantage of the E-2246G’s graphics?

Regards.

Does it support RDIMM RAM?

Highwind, it is Xeon E-2200 based so it does not. Only ECC UDIMM.

Great article!

Shame about the iLO enablement kit…

But Thanks for taking the time to review, and provide the CPU table matrix!

Hi, i received my new Gen10 + and i would like to upgrade the RAM.

Does this RAM model, CT32G4LFD4266 (from Crucial), work with my Intel Xeon E-2246G?

I would like to get 2×32 GB RAM size.

Internal memory: 32 GB

Internal memory type: DDR4

Memory clock speed: 2666 MHz

Component for: PC/server

Memory form factor: 288-pin DIMM

Memory layout (modules x size): 1 x 32 GB

ECC: Yes

CAS latency: 19

Memory ranking: 2

Memory voltage: 1.2 V

Module configuration: 4096M x 72

Product colour: Green

Certification: CE

Hi Patrick,

can you tell me why the Xeon E-2244G is not recommended in the cpu overview?

It only takes 116 watts, so why is it not recommended?

Thanks!

Thank you for this amazing review.

Very good job !

Is there a chance that it works with the Core i3-8300T or i3-9300T without any issue ?

Thanks !

Hi. Great guide, but why no core-i5 (9500) or i7 (9700) in your test ? is it compatible ? they are 65watts no ?

Incredible guide. Thank you for this. How difficult is a CPU swap? The stock gen10 plus is very close to what I had in mind for a system, except that 4 cores and no hyperthreading is too lean. The ability to swap CPUs makes all the difference in the world to me.

Dan – these are the server/ workstation chipsets not consumer chipsets.

Richard – extremely easy.

Given all that, I’m inclined to purchase the Pentium G5420 with 8 GB, add the iLO board and immediately replace the CPU with either E-2236 or E-2246G and substitute a pair of 32 GB memory modules for the 8 GB. Does that seem like a reasonable thing to do or would I be better off just buying one of the other tower form factor boxes in the ProLiant line?

Further to my last comment/question. Do I need any specific thermal paste or equivalent to properly install the replacement CPU or special tools to swap the CPU? Can you point me to instructions for the procedure that you trust?

Answering my own question: I just got my new RAM:

Crucial CT2K32G4DFD8266 64GB Kit (32GB x2) (DDR4, 2666 MT/s, DIMM, 1.2V, CL19)

and it works, 64 GB for this little guy :)

Benjamin,

Thanks for the memory info. What does iLO report for this RAM?

Benjamin,

Also, I think this is non-ECC. Did you also look into ECC (wondering what cost differential would be)?

Fantastic review! Really!

All info in single one place.

Patrick – what damage could result if a more powerful power supply were used, keeping the same voltage but providing, for example, 300W instead of 180W?

And a second question – what output voltage does the charger provide?

Thanks

@Rand :

The full specs : https://www.crucial.com/memory/ddr4/ct2k32g4dfd8266

I’m waiting for my iLO card, but in the BIOS, it’s 64 GB without warnings.

I tried to find Crucial ECC memory but it was nowhere to be found at the moment in my European shops.

Thanks, Benjamin. I also looked everywhere online and couldn’t find any ECC 32GB UDIMMS. I only found 32GB RDIMMs. I’m also waiting for the out of stock iLO Enablement kit. Setting up a ProLiant without iLO will be a first for me.

@Rand

Same feeling. It was awkward, but it took just 10 minutes with a monitor to set up ESXi 7, and then SSH and the web interface to manage this new toy remotely.

I haven’t tried it but here is an advertised 32 GB ECC UDIMM: https://memory.net/product/m391a4g43mb1-ctd-samsung-1x-32gb-ddr4-2666-ecc-udimm-pc4-21300v-e-dual-rank-x8-module/

Samsung lists it as “Sample” for product status: https://www.samsung.com/semiconductor/dram/module/M391A4G43MB1-CTD/

Would be curious if it works. Really want to have 64 GB for an ESXi server…

There must just not be a market for 32 GB ECC UDIMMS. Everything is registered DIMMS.

Well done! Looks like a promising product, but I would prefer another PCI slot or two as well as more power. I’d appreciate a link to the next size up, even if it is a more standard server.

Gracias!

So I picked up a ProLiant Gen10 Plus with the Intel Pentium G5420 with a view to swapping that CPU for an Intel XEON E-2246G. I removed the Pentium and replaced it with the XEON, cleaned the thermal paste residue from the heat sink and applied Arctic Silver 5 to the 2246G. When I attempt to power on the system the system health and network light show green but the system will not boot. After a little bit the fan kicks on high and that’s about it. I’m at a loss for how to troubleshoot this. If I pull the XEON and replace it with the Pentium I get the very same result — the system health and network lights are solid green, the system will not boot and after a bit the fan kicks on high.

@Benjamin, this is the first HPE ProLiant I’ve had to connect a KVM to. A little inconvenient, since I have to put it in my office instead of the basement. Also, when I connected the MicroServer Gen10+ to my monitor using DisplayPort, I got no signal at all. It seems that maybe an active DisplayPort cable might be required? Anyway, a week before I got this server, I was going to throw out an old Gateway (remember them?) monitor that’s been sitting unused in my basement for years. Fortunately, I kept, since it has a VGA input that works with the HPE MicroServer Gen10+ while waiting for the iLO Enablement Kit. Having to create bootable USB sticks from HPE iso’s (such as to apply an SPP) is also inconvenient compared to doing virtual mounts via iLO.

@fellow – the next size up I believe is the ML30 Gen10.

@Richard – I replaced my E-2224 with the E-2246G using Arctic MX-4. Sorry, I can’t help here, since I didn’t have any issues. Maybe you found this already, but just in case, take a look at the Troubleshooting Guide in case there are any useful debugging tips:

https://support.hpe.com/hpesc/public/docDisplay?docLocale=en_US&docId=emr_na-a00017522en_us

Is anyone who has the server with a E-2XXXG CPU able to confirm that Plex is able to use QuickSync? You can test this by transcoding something in the web player and looking for “Transcoding (hw)” on the Activity page.

There are reports that the server doesn’t expose QuickSync but I would like to know for sure from someone who has one.

@Richard Robbins: Did you unplug the cable to set up the new CPU?

When I did this, I somehow managed to re-plug the cable the wrong way. The system did not boot and there was only a red LED flashing.

@AJ :

My Plex shows the following for my different devices:

iOS : SD(H264)-Transcoder

Chrome : Live stream

I just got my Gen10 Plus yesterday and realized the S100i SR doesn’t support ESXi…

Any suggestion of Raid controller for Gen10+? Would like to get a cheap Raid controller instead of getting e208i-p on the official support list which is very pricey…

Hi there,

I use an Intel X550-T2 10GbE PCIe card and found that there is no SR-IOV option in the BIOS, and ESXi always shows the status ‘Enabled / Needs reboot’.

I am confused about whether the Gen10+ supports SR-IOV or not.

on the Gen8, I5 worked, so why in this generation, i5 will not work ?

I know the chipset are not “consumers”, but is there any reason it should not work ? (except what intel says)

i answer my question myself, as i received my unit.

The i5 worked perfectly well in it, despite what someone said!

@Benjamin, any issues running the 64 GB non-ECC RAM you posted above (Crucial CT2K32G4DFD8266 64GB Kit (32GB x2), DDR4, 2666 MT/s, DIMM, 1.2V, CL19)?

I have them in my cart just waiting for your results :-)

This and your previous article on the Microserver Gen 10 Plus drove me to buy one, but the DIMM I got is reportedly not supported (the memory POST throws an error and halts). The memory I got was Crucial CT32G4RFD4266. I’m running the Pentium Gold version of the Microserver, but both stock processors seem to have similar memory support, so I don’t think that’s the problem. Any chance you can be more specific on which memory you were using? Anyone else have any suggestions for a 32G ECC DIMM that’ll work with this server? I realize it’s officially not supported, but I was hoping for 64G, and this article gave me hope.

Replying to myself… As others in the comments mention, my problem is probably that I got registered memory and need unbuffered which apparently quite difficult to find.

Also just tested that 32 GB of (2x 16 GB) Crucial UDIMMs work with this system without issue. Am going to replace with 64 GB (2x 32 GB) instead.

In meantime, does anyone know of a PCIe card that supports 2 NVMe M.2 drives in the Gen10 Plus? Couldn’t get the Supermicro aoc-slg3-2m2 to be recognized by the G10+. However the Silverstone ECM22 works no problem, only it supports just 1 NVMe drive.

For anyone else wondering I can confirm that Crucial CT32G4DFD8266 (Crucial 32GB Single-Rank Unregistered non-ECC DDR4 2666 CL19) works just fine.

Hi,

Bought the cheapest – HP Proliant MicroServer Gen10 Plus (P16005-421)

I immediately changed the processor to – Intel Xeon E-2234 OEM

RAM changed to – 2x16Gb DDR4 2666MHz Samsung ECC (M391A2K43BB1-CTD)

Everything started up and running perfectly! Thanks for your article, it helped me a lot.

During operation, a question arose.

I tried to run a system stability test in AIDA64. The processor overheats in 3-5 minutes and throttling begins. At the beginning of the test, the cooling fan reaches its maximum speed, but after 20-30 seconds it reduces the speed and ceases to effectively cool the processor.

Please tell me where to set the operation mode of the cooling fan so that it works at full speed until the processor load decreases.

I solved the problem

In bios:

System Configuration > BIOS/Platform Configuration (RBSU) > Advanced Options > Fan and Thermal Options

– Enhanced CPU Cooling (when there is no load, it runs quietly as in Optimal Cooling mode; at 100% processor load it raises the fan speed to what feels like 60-80% and holds it there)

Great article.

Regarding CPUs: would any of the Core i5 units work?

Mine just arrived today and I really like it so far. The main issue I have at the moment is I am unable to get it to boot from the NVMe drive using a PCIe adapter card. In the BIOS I can see the card/drive listed in the boot order and controllers but when I switch to legacy mode to boot from USB it fails. I am assuming I need to keep it in UEFI mode and need to get a signed OS Install.

When I boot in legacy mode I can boot of the USB and the Windows install sees the 1TB SSD but tells me it can’t install as the system won’t boot from that drive and to check the BIOS. Anyone have any suggestions? Thanks

@typod

Have you tried this option :

https://www.servethehome.com/wp-content/uploads/2020/03/HPE-ProLiant-MicroServer-Gen10-Plus-PCIe-Bifurcation-Support.jpg

@Jason

Sorry comment was moderated.

No problem with my RAM CT2K32G4DFD8266 since March.

PCI Card for double NVMe : PCI Express SSD M.2 / startech / PEX8M2E2

Anyone have any other non-switch dual PCI-E card recommendations that work with the boards bifurcation support? I also was about to purchase the Supermicro aoc-slg3-2m2, until I saw Jason’s comment here. I’m trying to optimise on power and reusing some existing m.2 drives and not having much luck. Might have to revert to the Startech PEX8M2E2 that Benjamin mentioned.

Thanks for the long and great review.

I have my Gen10 plus since 3 days now and I can’t get any SSD SATAIII drive to be recognized from the BIOS or boot menu. I saw a similar complaint in a forum.

Did you never have a problem when trying different SSDs in the unit ? Is the HPE SSD you used so much different then other third party SSDs ?

Hi, so I have a problem. I recently bought a MicroServer Gen10 Plus. My setup is 3x 8TB WD Red in a RAID 5 configuration with a 1TB Crucial X8 USB 3.2 Gen2 SSD for the OS. I’ve been trying to get it running but for some reason it doesn’t want to boot from the SSD you guys recommended. I tried other ways. It did boot from a normal thumb stick, but obviously that is slow and I bought the SSD for this. Can you guys help me solve this problem? I have tried almost everything.

Hi, I can confirm that the Memory Crucial CT2K32G4DFD8266 is running very fine on my HP Microserver GB available under ESXi 6.7 running about 19 VMs on one host.

Thanks for testing and recommending everyone!

FYI the bifurcation in the BIOS only supports 8×8 and NOT 4x4x4x4. That is why the Supermicro aoc-slg3-2m2 is NOT working. If you want 2xNVMe support you have to go with the Startech PEX8M2E2.

I’ve been in contact with HP about this. They only support bifurcation in this server for their own NICs (and then 4x4x4x4 is not needed from what I understood). I was assured it is a firmware/BIOS issue rather than a hardware one. So support might be added in the future. However they could not say if this was planned or if it ever will be added.

I’m curious as to if anyone found a power brick that can be used that is more than 180W

I took delivery of a MS G10+ today and it boots debian buster fine from a thumbdrive, but I also can’t get it to boot from a USB SSD using the same setup (it wouldn’t even boot the installation media from the SSD). Did anybody have any luck? In principle, I’m fine with a thumbdrive, but I’m afraid that /var/log will fry it eventually …

Hi, i just got my second G10+ server, first one is running with 32gb.

I ordered 2x CT32G4DFD8266 32GB DIMMs for this one and it only runs with one. If I install both DIMMs I get an error 00000000 saying that I need to reseat the DIMMs or upgrade the ROM.

Has anybody run into this and knows if a BIOS upgrade will fix it?

Quick update: BIOS U48 2.16 does not fix it. I can run with one CT32G4DFD8266 DIMM, but get the 0000000 major/minor error code if I boot with two.

Can I put 128gb memory in this machine?

Hi Patrick,

I tried to get the NC523SFP NIC running with the value version (Pentium Gold) of this server. To no avail. As soon as the card is plugged in the server doesn’t even get to boot the OS.

server boots up fine without the NIC. NIC itself runs fine in an DL380 G6 and G7.

I’d like to use this NIC since I have some of them lying around waiting for some work to do.

Any hints or tips on this?

br

Steffen

@Brendan Richman: I can confirm the StarTech PEX4M2E1 https://www.startech.com/HDD/Adapters/pci-express-m2-pcie-ssd-adapter~PEX4M2E1 works with the MSG 10 Plus.

The PEX4M2E1 only works with NVME disks, not with SATA M2 disks. I have it now with an Samsung 970 1 TB SSD. Even can boot from the PEX4M2E1

Loving these articles, waiting for mine to come in. Want to add an NVME when I get but also want to add an additional 4 drives and make an unRAID server (8 drives in total plus NVME and USB boot). What are the recommendations to add these drives? I have powered USB enclosures that do support eSATA as well. are there cards that would allow eSATA or SAS with single or dual NVME?

Would this card work?

Startech PEXM2SAT3422

Has two SATA and 2 NVME

Updated to latest System ROM but system will not boot with any of my drives installed using the default included onboard SATA controller. Contacted HP and of course they said they only support their drives which cost as much as the entire server for 1 4TB drive. These drives do not work:

– 6TB Seagate Ironwolf NAS ST6000VN0033

– Micron RealSSD C400 128GB

@GrandMasterV yeah… I’m in the same boat. My Gen10 Plus will literally not POST if a drive is installed and I’ve tried a myriad of different drives with no success. To cover my bases I ordered a cheap HPE HDD (801882-B21) to help determine if there’s an issue w/ the storage controller. If I can’t make this work I’ll have to return the Gen10 Plus and look at alternative products without strange HDD restrictions.

We did not try either of those drives GrandMasterV. The larger capacity WD drives and Samsung/ Intel SSDs we usually use on systems all worked when we did this piece.

Patrick, Thanks for your amazing reviews of this server! It made me buy one.

Any chance you could give a hint on which Crucial ECC 32GB sticks you used on your tests ?

Anyone tried with i9-9900?

FYI on memory: my setup works well with a Xeon E-2234 and 2 of Micron 32GB ECC UDIMM (MTA18ADF4G72AZ-2G6B2). The Micron memory were purchased from mitxpc.com.

Patrick,

I added a Toshiba MK1001TRKB 2TB SAS 7200RPM 16MB Enterprise Hard Drive to the Gen10 Plus but the drive was not detected. I thought I could use a SAS drive without a controller. Can you confirm whether SAS drives are natively supported, and which models were tested?

For the SAS drive you need this controller

HP 804394-B21 Smart Array E208i-p SR Gen10 C

HPE Smart Array E208i-p SR Gen10 Ctrlr

MPN: 804394-B21

please remove my previous comment on HPE Smart Array E208i-a SR Gen10 Modular Controller

Thank you!

Hello! Thank you very much for the very good article!

I just bought a “QNAP QM2-2P10G1TA” with a “Samsung EVO Plus 970 2TB” NVMe SSD. If I try to boot the HPE Microserver 10+ with the QNAP adapter WITH the EVO 970 installed (it doesn’t matter whether in Slot 1 or Slot 2), I only see an error message in red letters: “RIP address out of range”.

I googled this and found some articles which say that I have to disable “UEFI Optimized Boot”. I tried this but it does not work.

If I try to boot with only the “QNAP QM2-2P10G1TA” and no NVMe SSD, everything is okay and the Microserver Gen10+ boots normally.

Does anyone know what is wrong in my setup? I hope it is only a wrong BIOS/UEFI setting, but I can’t find it.

Best regards!

In the Integrated Management Log I see:

“Uncorrectable PCI Express Error Detected. Slot 1 (Segment 0x0, Bus 0x8, Device 0x6, Function 0x0). Uncorrectable Error Status: 0x100000”

I GOT IT!!! :)

It is very simple: the 2 TB Samsung NVMe SSD (EVO Plus) is INCOMPATIBLE with the “QNAP QM2-2P10G1TA” and the HPE MicroServer Gen10+!!!

I have a spare NVMe drive: “Intel SSD 660p 2TB”. I just installed it in the “QNAP QM2-2P10G1TA” and voilà: the MicroServer Gen10+ boots without any problems, and under VMware ESXi 7.0b I see the 10Gbit Aquantia chip and also the Intel 2TB NVMe SSD!

I wanted to use the Samsung EVO Plus 970 2 TB as it is a very good combination of speed and reliability, but if it isn’t working from the ground up I will go with the Intel 660p and do some more backups. ;)

…so now I have 10Gbit in a tiny MicroServer with the Intel 660p 2TB NVMe SSD (a little slower than the Samsung, but this doesn’t hurt) and learned something new… :D

So in sum:

– 1 TB Samsung EVO Plus NVMe works very well

– 2 TB Samsung EVO Plus NVMe does NOT WORK with the “QNAP QM2-2P10G1TA” adapter in the HPE MicroServer Gen10+!!!!

Per AJ’s comment above, has anyone found applications to work with hardware acceleration or Intel QuickSync on their HPE Gen10 Plus server after upgrading to a Xeon E-2246G CPU? Or is the system designed to not utilize any hardware acceleration based on a limitation of the chipset/motherboard design?

Great write-up. However it is not clear to me whether or not non-HP-branded drives are supported by the MSGen10+. I haven’t seen any restrictions on the old NL40 and the MSGen8 servers. Would be nice to get a definitive answer. I can’t even find an official statement on the HP datasheet.

I have a Gen10 Plus, it won’t recognise an Intel X540 or X550 10GBASE-T PCIe NIC that I install. The BIOS says the PCIe slot is unpopulated with both cards… LAN ports light up when I plug a cable in… heatsink fan fires right up. Any ideas on how to get it to recognise the NIC? Both cards work in lots of other machines/NAS that I have.

@easternnl

How do you boot from the PEX4M2E1? Do you need to use UEFI?

There is no option in the boot menu.

Update:

switched to UEFI (used legacy bios for USB booting), installed Ubuntu server 18.04 but it won’t boot from it.

I guess there is a bit more to it…

another update:

silly me, NOW there is an entry for the boot order and just moving the NVME controller up will make it boot just fine.

final update:

it seems that any pci card should allow you to boot from it, not just the PEX4M2E1, as long as the system can see the disk.

Could you tell me which non-HPE internal SSDs you have tried so far that have worked? Did those who had trouble detecting non-HPE SSDs try switching from RAID to AHCI?

My MicroServer arrives in a few days. I can’t get HPE SSDs and I need at least 4 x 1TB (and HPE doesn’t offer that capacity).

Has anyone replaced the fan yet? Wondering which one to use and how to wire it.

My HPE ProLiant Gen10 server failed today. I had upgraded it to the Xeon E-2236 CPU and 64GB of memory. It experienced a critical power issue; the power supply tests good, so it is something on the motherboard. Anyone else having failures after upgrades?

Will the “Entry G5420” support 32 or 64 GB of RAM? The spec sheet says 32, but is there any chance of running 64 in this one?

Thanx

BooX

Have tried three different Samsung EVO SSDs.

The server stops because the drive temperature rises over the limit as soon as data is copied to them. vSphere did install without trouble.

So they do not support any disks other than HPE-branded SAS. $200+

Would a near-top-end CPU work if you only want RAM, processing, and a boot SSD, with all storage on iSCSI (as a virtual compute node)?

I’m wondering, considering no one has provided a definitive “yes it’s working / no it’s not working” answer: is QSV actually available after a CPU swap to one with integrated graphics? AFAIK HP has stated that the BIOS in the MicroServer Gen10 Plus uses Intel’s SPS, which means QSV is by design not available regardless of the CPU in use, due to Intel’s blob in the BIOS/firmware. So, according to HP, HW-accelerated transcoding using Intel QSV is out of the question. Then the follow-up question: which GPU should one use for HW-accelerated decoding/encoding workloads with this server? The Quadro P400 seems like the next best thing with its low power draw, TDP, and LP form factor, but has anybody used it here? It would take the PCIe slot, so no 10G+ NICs or internal M.2s if planning to use the 4×3.5″ HDD slots…

As a follow-up, it seems like Intel’s DG1 could be the best solution for this thing. Being more power efficient, it would fit nicely within the available power envelope, plus Intel has planned to release it to major SIs, so HP is surely going to get some. All they would need to do is take the DG1 and put it into a low-power LP form, et voilà… almost. One could then get ubiquitous QSV through the card without Intel’s SPS interfering: a great purpose for the chips, the chipmaker, and the manufacturer in this possible combo. Though I did hear mentions in the press coverage that DG1 will be restricted to specific CPU families/gens on specific boards with specific dedicated BIOS features. Still, HP could easily nudge Intel into loosening the restrictions for their MicroServer and nettop/thin client uses. It’s money for all, after all. Furthermore, one could dream of a company going one step further and creating a GPU combo card, e.g. by combining a 2x10GbE NIC with a GPU chip on one board through PCIe bifurcation to x8/x8 for both devices. Or maybe even better, a GPU + dual M.2 combo: if future direct storage solutions come to fruition in GPU workloads, having the SSDs right next to the GPU could be beneficial, while currently one could use the slot for both GPU-accelerated purposes and faster storage. Heck, I wonder why companies are not trying to differentiate their products with such unique approaches to PCIe lane optimisation (yes, I’m aware of those Vega/Navi compute cards with SSDs added for extended VRAM purposes, but these were just that: a colder VRAM extension, not directly accessible general storage). I for one think that such combo solutions could be even more sought after for MicroServer homelab/media server builds than the iLO add-in NIC cards…

Any thoughts on the best way to get RAID1 when running Windows 10. AFAIK the HPE Smart Array S100i SR Gen10 Controller isn’t supported for W10? No idea whether the Windows Server drivers will install on W10? I don’t want to spend money on a PCI-E RAID card so am thinking of perhaps trying softRAID. FYI, My plan is for the OS to be on single m.2 storage (thus not RAID) and have 2 x 3.5″ for storage with the RAID1. Thoughts appreciated (BTW, this is for a home/office setup).

Another RAID1 issue – I’m having all sorts of issues installing Windows Server (2016 and 2019) Essentials into a RAID1 configuration (WD Gold 4TB drives) using the baseline S100i SR controller. Manual OS install fails, (it does not see the logical RAID1 drive) and trying to inject downloaded drivers (https://support.hpe.com/hpesc/public/swd/detail?swItemId=MTX-652001b1e4d142bb968146b571) during installation does not work. OS installation also fails when trying to use Intelligent Provisioning or Rapid Setup processes. RAID0 also did not work, but installing to a non-RAID single drive was not a problem. If anyone out there has RAID1 working through the S100i SR for WinServer 2019, your install process and which drivers used would be appreciated.

Regarding installing 2.5” drives in the 3.5” bay, in the UK at least it is just as cost effective to get the HPE specific part, 870213-B21 which is designed for these bays and works very well.

Has anyone been able to get a 4 TB SATA SSD to work in one of the internal SATA slots? I have it in a 2.5 to 3.5 drive adapter but even after rebooting, Smart Storage Administrator doesn’t see it at all. I have an internal NVME drive installed as the boot (in a PCIe adapter) and two 8TB drives installed in bays 3 and 4, respectively.

The 4TB SATA SSD is a brand new Samsung 860 Evo.

FYI – in reference to my previous post, I don’t think the drive adapter matters. The 860 Evo isn’t detected even when I connect it directly to the SATA port with no adapter at all. Very disappointing.

In case someone was waiting for the verification concerning the PEX8M2E2:

I’ve just added the PEX8M2E2 with 2x Samsung 970 EVO 2TB NVME in my microserver 10 plus and it works

Is there any way to build RAID with 2 SSDs on the QNAP QM2 2S Dual M.2 SATA SSD PCIe Card?

I got three 4TB 860 EVOs and one 4TB 870 EVO. If I only install one of these 4 SSDs, the Gen10+ can POST normally and the disk is recognized. However, if more than 2 of these EVOs are installed at the same time, the disk light on the front panel stays on and at least 1 disk isn’t recognized. I’ve even tried putting these disks in a different SATA order, but it simply didn’t work.

So I decided to order four 3.84TB PM883s and guess what? It simply works! Thus I highly suspect there may be a disk whitelist or something.

I bought one of the “G” CPUs for the graphics / hardware encoding. However, I can’t get it to work. Digging a little, it appears that it’s not supported (official answer from an HPE product manager). So what is the point of upgrading to a “G” CPU? You might want to edit the article to indicate there is no point.

https://community.hpe.com/t5/ProLiant-Servers-Netservers/Microserver-Gen10-Plus-and-Intel-Quick-Sync/td-p/7084407

Hi,

Great article.

What do you think of the Synology card that uses PCIe 3.0 x8 for 2 M.2 slots and one 10GbE port?

https://www.synology.com/en-us/products/E10M20-T1#specs

Came across this HPE advisory on drive compatibility:

Note that power consumption of the storage devices should not exceed either of the total power budget numbers provided below (max drive counts are four):

Total power budget for 2.5″ SATA SSD cannot exceed 2.23W. (Per each drive)

Total power budget for 3.5″ SATA HDD cannot exceed 12W. (Per each drive)

https://support.hpe.com/hpesc/public/docDisplay?docId=a00106659en_us&docLocale=en_US
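
The per-drive limits quoted from the advisory above can be turned into a quick check before buying drives. This is a minimal sketch, assuming only the figures quoted in this comment (2.23 W per 2.5″ SATA SSD, 12 W per 3.5″ SATA HDD, four drives at most). The function name, drive names, and wattages are hypothetical; real figures have to come from each drive's datasheet, ideally the peak or start-up value rather than the average.

```python
# Per-drive limits as quoted from the HPE advisory in the comment above.
LIMITS_W = {"ssd_2.5": 2.23, "hdd_3.5": 12.0}
MAX_DRIVES = 4


def check_drives(drives):
    """Return a list of problems for (name, kind, watts) tuples.

    `kind` is "ssd_2.5" or "hdd_3.5"; `watts` is the drive's worst-case
    draw taken from its datasheet (an input you must supply yourself).
    """
    problems = []
    if len(drives) > MAX_DRIVES:
        problems.append(f"{len(drives)} drives exceeds the {MAX_DRIVES}-drive maximum")
    for name, kind, watts in drives:
        limit = LIMITS_W[kind]
        if watts > limit:
            problems.append(f"{name}: {watts} W exceeds the {limit} W limit")
    return problems


if __name__ == "__main__":
    # Hypothetical drives and wattages, for illustration only.
    example = [
        ("ssd-a", "ssd_2.5", 2.0),
        ("ssd-b", "ssd_2.5", 3.5),
        ("hdd-a", "hdd_3.5", 10.0),
    ]
    for problem in check_drives(example):
        print(problem)
```

An empty result only means the datasheet numbers are under the quoted limits; it does not guarantee the server will detect the drive.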

Great article. Just wondering if I could use NVMe on the PCIe riser to run my operating system, e.g. Windows?

I have a configuration with a Smart Array E208i-p 12Gb PCIe SAS-Controller and 2 original HP 240GB SATA 6G SFF SSDs. Now I tried to add 2 Samsung QVO 8TB, but they were not visible in the RAID Configuration Utility. So I tried an EVO with 2 TB, but still the same. Only 1TB and smaller EVOs [maybe SSDs in general] were detected.

Now I added 2 Seagate 18TB Exos X X18, which can be configured as RAID1.

Hey there!

Speaking of the Intel X710-DA4 SFP+ card anyone knows the exact manufacturer’s part number/EAN of the card fitting into the case/slot?

Kind regards

Martin

Hi – You have the E-2278G as untested and also as over 130w and Recommend no. There are other CPUs with 80w TDP that you *don’t* mark as over 130W.

Is there something not on your chart that pushes it over, or otherwise makes it not suitable (other than not being tested).

Thanks,

Cheers, Liam

Hi Bart Welvaert,

My system is able to run one of these Samsung 32GB DDR4-2666MHz CL19 unbuffered ECC modules: M391A4G43MB1-CTD. If I put two sticks into the system, it shows a 221 error. What can be the cause?

Sorry for bumping in. There seem to be still a question open: If replacing the Pentium or Xeon with a Xeon with an iGPU, does iGPU work or is that still not usable?

I am working on my Gen10 Plus now. It has VMware Enterprise on an SSD I shoved into one of the 4 slots. I have two 4gb NAS SAS drives… Is it better to use the single PCIe slot for a SAS card than for networking? This is in my house, and there is no need for 10Gb or 25Gb NICs. I have a Windows VM, a FreeNAS VM, and that’s it for now. I am getting 2 of the 32GB ECC Crucial sticks used in this article to start off and want to work on storage. Or should I just go with all USB storage? It’s going to run Blue Iris for cams, my BBS (lol), and software to stream my scanner feed, but I do want a shit load of storage on it.

Hi Steven, I’ve seen others claim 2 of those modules work without any issue, is it possible you have a bad module? Considering getting a couple of these but now not convinced they’ll work!

I have been running the Crucial CT2K32G4DFD8266 64GB kit (32GB x2) (DDR4, 2666 MT/s, DIMM, 1.2V, CL19) in my HPE MicroServer Gen10 Plus for a while now, and I’m seeing some odd behavior that I was hoping someone might have suggestions about. First off, iLO reports that it has an “unknown” memory config. dmidecode reports that there is memory there but can’t show anything else. I am using Unraid as my operating system and, while all the memory in the system is usable, it displays as having no RAM installed.

Since this isn’t a supported configuration, I’m not sure that there is anything that i can really do to resolve the issue, but I was hoping someone might have a suggestion.
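
For anyone comparing notes on what dmidecode actually reports per slot, here is a rough sketch that parses saved `dmidecode -t memory` text and summarizes each "Memory Device" block. It assumes the usual layout of that output (a `Memory Device` heading followed by `Key: Value` lines such as `Size`, `Locator`, and `Type Detail`). The helper names and the embedded sample text are made up for illustration, and the sample is not output from a real Gen10 Plus.

```python
# Hypothetical sample in the usual `dmidecode -t memory` layout.
SAMPLE = """\
Handle 0x0011, DMI type 17, 40 bytes
Memory Device
    Size: 32 GB
    Locator: SLOT-A
    Type: DDR4
    Type Detail: Synchronous Unbuffered (Unregistered)
    Part Number: EXAMPLE-PART-1

Handle 0x0012, DMI type 17, 40 bytes
Memory Device
    Size: No Module Installed
    Locator: SLOT-B
    Type: Unknown
    Type Detail: Unknown
"""


def parse_memory_devices(text):
    """Return one dict of fields per "Memory Device" block in the text."""
    devices = []
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line == "Memory Device":
            current = {}
            devices.append(current)
        elif not line or line.startswith("Handle "):
            current = None  # a blank line or a new handle ends the block
        elif current is not None and ":" in line:
            key, _, value = line.partition(":")
            current[key.strip()] = value.strip()
    return devices


def module_kind(device):
    """Classify a parsed block as UDIMM, RDIMM, or unknown from Type Detail."""
    detail = device.get("Type Detail", "")
    if "Unbuffered" in detail:
        return "UDIMM"
    if "Registered" in detail:
        return "RDIMM"
    return "unknown"


if __name__ == "__main__":
    for dev in parse_memory_devices(SAMPLE):
        print(dev.get("Locator", "?"), dev.get("Size", "?"), module_kind(dev))
```

To try it on a real system, replace `SAMPLE` with text saved from `dmidecode -t memory`. If the fields come back as "Unknown", that matches what is described above and the script will not reveal anything more.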

Hi, I have a question, has anyone tested the HPE NS204i-p ?

This is incredible, many thanks. As current Xeon availability and pricing are somewhat mad, I am wondering- if the i3-9100 works as I read from the table, I assume the i5-9400 would work, as well? It seems a reasonable upgrade for me. They are available for 175 EUR and provide 6C/6T within a 65W package.

Has anyone tested this, or is there an indicator it will not work?

@STH, thank you for the comprehensive review. I’m curious to know if an i3-9100T / 9300T will work in this server.

I hope they will make a new version with nvme support and 12th gen intel cpu.. this machine was born old, still has 1151 socket! The processors available are old, slow and not efficient. Any low power i5 would blow them away

What about E3-1200 series CPUs? They are socket 1151 as well. Do they work with this server?

Any chance of fitting both an NVMe drive and a 10Gb NIC into it?

I see only one PCIe, but maybe there is some splitter or 10Gb USB 3.0 NIC (if it negotiates 10Gb and practically offers >2Gb/s then I’m satisfied)

Thanks for this super interesting and detailed review of the HPE ProLiant MicroServer Gen10 Plus and its capabilities.

It would have been ideal to add an Amazon link to the tested products in a legend. Otherwise, however, a 1A contribution.

I am thinking about getting one of these servers as a Hyper-V host (LAB) and have done some research on what I need.

Thereby I came across the following info on the Intel site:

https://ark.intel.com/content/www/us/en/ark/products/191036/intel-xeon-e2224-processor-8m-cache-3-40-ghz.html

According to Intel’s website, the maximum supported memory for the E-2224 processor is 128 GB.

Does HP limit the use to 64 GB via BIOS?

Has the operation of the HPE ProLiant MicroServer Gen10 Plus been tested with 128 GB, or is too much power required for the operation of 128 GB RAM?

Hi, I have a question: does anyone have a recommendation for a good, inexpensive RAID controller?

I’m building a VMware ESXi host and I need a HW RAID option.

Can anyone recommend a GPU for this unit if you can’t take advantage of the Intel QuickSync on the HPE Gen10 Plus?

You can’t honestly recommend a server for which you can’t even find SSDs that work consistently! These servers are a pain in the butt to configure, since I basically have to go to sites like this to see, through apocryphal statements, what SSD might work.

The only thing that makes sense is the 2.23W max requirement for SSD. And we all know how all SSD drive manufacturer make it easy to find wattage for their drives!

How do you get this Microserver to boot from usb ssd? Under boot options I only see my sata drives and network devices.

I’ve tried at least a dozen times installing Proxmox to my crucial x8 (in both legacy mode and uefi mode), install goes fine but after install on boot, the Microserver tells me that it can’t find a bootable drive.

I just tried installing Proxmox to one of my HDDs and that worked fine. I can’t figure out why when I install to my Crucial x8 why it won’t boot from it.

I tried installing Debian to my usb ssd and also ran into a problem. After install debian boots to grub. When I do ‘ls’ I’m not seeing my usb ssd, I only see my (4) sata hdds.

Guys, has anyone tried the i5-9400f? I would give it a try despite the recommendations (above, someone tried the i5-9400 and reported it works), but before investing my money it would be great to get 100% confirmation.

hi again, I can confirm i5-9400 works, didn’t try i5-9400f

Hi guys, can someone help me?

I just bought 1x 32GB Crucial CT32G4DFD8266, but when I install it I receive the error “232-DIMM initialization Error”.

I can confirm the i7-9700f works.

Considering the comment above saying the i5-9500 works, the i5-9400f should be fine.

I plan to use this server at home.

As I understand it, there is a loud case fan but the CPU is fanless.

Can somebody tell me whether it is possible to replace the case fan with a heatsink to make the server completely fanless?

And is it possible to power the fan off/on via iLO?

Hello, I have a problem with my RAM (Crucial mta36asf4g72pz-2g6d1qi, 32GB, ECC).

I have a ProLiant Gen10 with the G5420 processor.

“error code 00000000 Dim is not supported in the system.”

Is this RAM part number not supported?

thank you

I can confirm i5-9400f works like a charm

Another data point to add on the SSD issue: 4TB Samsung 870 QVOs were installed as non-boot storage. They initially worked fine, but then intermittently dropped out at reboot time. The HDD activity LED stayed lit when this occurred. Approximately 1 boot in every 3 or 4, this would not occur and the drives would be detected.

If the drive ‘passed’ the initial POST check & was detected, it would remain detected and fully operational until the next boot, regardless of how hard it was used.

I believe this is down to the ludicrous 2.23w power limitation on SSDs; HDD drives in the same SATA port using the same power brick have a 12w limitation. It would appear that this limitation is an arbitrary one designed to limit SSDs to the single HPE 250Gb Sata SSD they make available.

The 4TB 870 QVOs have a claimed average read power draw of 2.2w; this probably accounts for the intermittent nature of the issue.

Does anyone know of any SATA SSDs that consistently draw less than 2.23w at boot-up and could therefore pass the ridiculous 2.23w limit?

Hi,

If anyone interested I can confirm that Gen10 plus works fine with:

2 x Kingston Server Premier – DDR4 – module – 32 GB – DIMM 288-pin – 2666 (KSM26ED8/32ME) and Xeon E-2234 processor.

After several years with 2 Gen10Plus of these I can comment on several points:

The i9-9900 processor (65W) performs really well compared to the Xeon E-2224.

I have tried non-ECC UDIMM memory, and with the latest BIOS it works very well.

The integrated controller has a terrible performance, however if HPE Smart Array E208i-p SR Gen10 is added, the operation is as expected in this type of equipment.

I have also tested various GPUs and recommend the Quadro P400 and Quadro P1000.

Any suggestions on a compatible 10Gb SFP+ NIC?

I bought a samsung pm9a3 nvme but I didn’t realize it was a 22110 type. Would you recommend a cheap adapter compatible with this HP microserver for this NVME memory?

Has anyone tried using the i9-9900k on this? I can’t seem to find any i9-9900 anymore and wanted to upgrade the gen10+ with a better cpu. I see lots of 9900kf, 9900k, and 9900t cpus still available these days.

Will you also carry out such a detailed test with the “HPE ProLiant MicroServer G10+ V2”?

Would the QNAP QM2-4P-384 ssd2pcie card fit in the MicroServer Gen10 Plus lengthwise?

It is about 295 mm long.

So I want to use this with 4x Seagate Exos 16TB drives, which are said to consume up to 10W each at the maximum, plus the QNAP QM2-2P10G1TB (10GBASE-T + 2x NVMe slots), which unlike the card reviewed here is PCIe Gen 3. The specification says it draws 5W, but surely that’s just idle? How do I calculate what the network card will draw at its peak? I may just use a single SSD in the end, currently an Intel 660p 2TB (I wasn’t sure if the 670p will work; has anyone tried?), which I want to partition, using a small part for TrueNAS boot and the rest as a cache drive. Or would it be better to still have 2 SSDs, say one 256GB for the system and the other for cache?

Forgot to add it has 32GB of ECC RAM, stock CPU and iLO control board.. Just worried about my wattages! Any advice much appreciated.
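
One rough way to approach the wattage question above is to add up a worst-case figure per component and compare the total with the power adapter rating. In this sketch only the 4 × 10 W drive figure and the 5 W card figure come from the comment. The SSD, CPU, board, and adapter numbers are placeholder assumptions, and the function names are hypothetical; swap in datasheet or measured values for your own build.

```python
def estimate_peak_watts(components):
    """Sum worst-case draw for {name: (count, watts_each)} entries."""
    return sum(count * watts for count, watts in components.values())


def headroom(components, psu_watts):
    """Return (total, remaining) watts against a power supply rating."""
    total = estimate_peak_watts(components)
    return total, psu_watts - total


if __name__ == "__main__":
    build = {
        "3.5in HDD": (4, 10.0),          # from the comment: up to 10 W each
        "QM2 card": (1, 5.0),            # from the comment: spec-sheet figure
        "NVMe SSD": (1, 6.0),            # placeholder assumption
        "CPU": (1, 71.0),                # placeholder assumption: use your CPU's TDP
        "RAM + board + iLO": (1, 20.0),  # placeholder assumption
    }
    psu_watts = 180.0                    # placeholder assumption: check your adapter label
    total, spare = headroom(build, psu_watts)
    print(f"estimated peak: {total:.0f} W, headroom: {spare:.0f} W")
```

Datasheet maximums and TDP are only approximations of real draw, so a wall or UPS measurement like the one reported further down in this thread (140 W at VM start for a heavier configuration) is the better reference once the hardware is in hand.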

Hi, will there be a review/update to the new v2 version of the Microserver Gen10 Plus? The new one seems to have among other things PCIe Gen4, built in TPM, different USB port layout. Thank you!

Hi, anyone tested ct32g4dfd832a ?

Hi Everyone,

I would like to use the HPE MSG10+ with a Smart Array E208i-p controller for RAID and internal SSDs mounted with the HPE 3.5 to 2.5 cage converter.

I would like at least 2TB of available RAID space that is also visible to, and bootable by, ESXi.

Has anyone tested other 2.5 SSD models that fit the 2.23W HPE limit? Thanks

Has anyone tried to upgrade g10+v2 cpu 6405 to 11400f?

For those looking to use an NVMe card, I’ve got the Sabrent EC-PCIE (NVMe M.2 SSD to PCIe X16/X8/X4 Card) and a Samsung 980 PRO NVMe drive working in my Gen10 Plus V2 (boot drive for Proxmox).

Currently considering a RAM upgrade, trying to find a pair of 32GB Unbuffered ECC 3200MHz UDIMMs which will work in this thing.

Running with the following:

Intel Xeon E-2278G

Crucial CP2K32G4DFRA32A. 2 x 32GB DDR4-3200 UDIMM 1.2V CL22

E208 Smart Array

4 x HPE 800GB SAS 12G ssd.

ILO

VMware ESXi running anywhere up to 20 VMs.

Power draw at VM start is 140w measured at the UPS.

Normally running 80w to 95w.

I used this cpu as it was the only one that I could get with 8 cores at a sensible price.

All firmware is updated to the latest available. The SAS connector is a bit tight to get onto the back of the card.

I have the Gen10 Plus V2, and I can confirm that it didn’t work with the i9-11900KF, i9-11900F, or i9-10900F :(

Maybe only Xeon E-2300 cpus

Hello,

I can confirm that Crucial ct32g4dfd832a 32GB modules are working like a charm in HPE Microserver Gen10+

Hello,

The BRCM 10GbE 2P 57810S Adapter is an SFP+ adapter that works on the HPE MicroServer Gen10+.

I tried i5 11400f and i7 10700 with my Gen10 Plus V2 and those didn’t work.

i5 11400f looked quite promising because POST was ok and only some crash in the UEFI firmware after POST didn’t allow me to use the new CPU. However, after spending 1 month with HPE support they couldn’t recommend anything but returning to the stock CPU.

The i7 10700 showed no signs of life at all.

Update 2024:

I tried to run QNAP QM2-2S-220A with two Samsung 970 EVO Plus NVMe M.2 SSD, 2 TB

Unfortunately, the bifurcation option was removed with BIOS update 2.60, see: https://community.hpe.com/t5/servers-general/microserver-gen10-plus-pcie-bifurcation/td-p/7217777

In my case, even after rolling back to BIOS version 2.20 via iLO, I was NOT able to run the QNAP QM2-2S-220A, which needs motherboard bifurcation! It seems that bifurcation is gone once a BIOS > 2.60 has been installed.

This is really bad.

Now I run the StarTech.com PEX8M2E2 with 2xSamsung 970 EVO Plus NVMe M.2 SSD, 2 TB. This works fine.

11 in progress ?

Still 4x 1Gbit Eth…

https://www.hpe.com/psnow/doc/a50007028enw

Hello,

I have purchased 2 Kingston KTH-PL426/32G modules (2 x 32GB), but after inserting them I get an error: “229 unsupported dimm configuration detected”. I have seen reports that 2 x 32 GB should be OK even if the datasheet does not say so.

System BIOS U48 v3.40 (08/01/2024) is used.

CPU: Xeon E-2224

Please advise me on what to do. Thanks!

KTH-PL426/32G is RDIMM and you need UDIMM for the Microserver to work.

Hello everyone,

A few years ago, I bought a HPE ProLiant MicroServer Gen10 Plus and currently run it with ESXi 7.0.1 (HPE custom image). According to the description, the Microserver has free PCI Express slots.

Expansion slots:

PCI Express x16 (1x), PCI Express x4 (2x), PCI Express x8 (1x), PCI Express x8 (2x)

PCI Express version (max.) 3

Internal connection options:

PCI Express x16 (1x), PCI Express x8 (2x)

Are there any network cards (at least 2500 Mbit/s) that are compatible with ESXi?

Thank you very much. Best regards, MB

I tried to add an NVMe SSD to the system using this PCIe card:

ICY BOX NVMe PCIe – PCIe 4.0 x4 IB-PCI208-HS

I can’t see the disk in Windows. The SATA disks are using “HPE Software Raid”; do I have to change to AHCI for the disks?

Hey, I’m just letting you know that I installed two 32GB modules CT32G4DFD832A and they work flawlessly. I’m still waiting for E-2246G and will provide feedback once it’s installed :)

Thanks a lot for your detailed guide.

A week ago I installed a Xeon E-2278GEL. It has 8 cores and 16 threads. Yes, it’s not as fast as the E-2278G, but its TDP is only 35W! It’s working much faster than my old E-2224.

Hey guys,

I am trying to install an Intel Arc A310 LP card for video transcoding. The other PCIe slot has the iLO enablement kit. I will have 2 SSDs and 2 HDDs in there, and I currently have 2 DIMMs as well.

When the server boots, after a few minutes I get a message on the GPU that the device cannot find enough free resources that it can use. Is this a power issue or a PCIe bandwidth issue?

At the same time, the Intel Management Engine Interface also stops.

Thank you so much for this article!

And my deepest thanks to all the commenters who left trace of their experience upgrading the hardware here in the comments.

I wanted to “give back” to the community with my little info, hoping it might help someone else during the upgrade procedure.

My Gen 10 Plus is happily working (UNRAID) with the following upgrades:

– Intel i9-9900T (spend the little extra time and procure a “T” version since the TDP is way lower than its counterpart)

– Crucial CT2K32G4DFD832A (64GB kit). I was only able to find the “higher” 3200 version, but it gets happily downrated to 2666. I wish I could find a proper “ECC” kit, but it wasn’t easily available in my marketplace and prices have been spiking in the last year.

Firmware is updated to the latest SP available (take your time, load all the external packets according to your HW configuration) and BIOS is reporting v. U48 Friday, 21-02-2025.

Hello all,

Great article

I would like to know if it’s possible to have more than 64 GB? I think I saw that in an article, but I can’t find it.

Could you confirm this, please?

Thanks for your comment