Times are changing. With the new generation of servers that support PCIe Gen5, it is now possible to have a 400GbE NIC serviced by a single PCIe slot. As a result, the demands for server and storage bandwidth are going to increase, even if only to multiple 200GbE links per slot. That is why we are taking a look at the FS 400Gbase-SR8 optical transceiver today. Even if your network stack is not designed for 400Gbps nodes, aggregating more traffic onto a single switch port is going to be a key theme in 2023.

FS 400Gbase-SR8 QSFP-DD 400GbE Overview

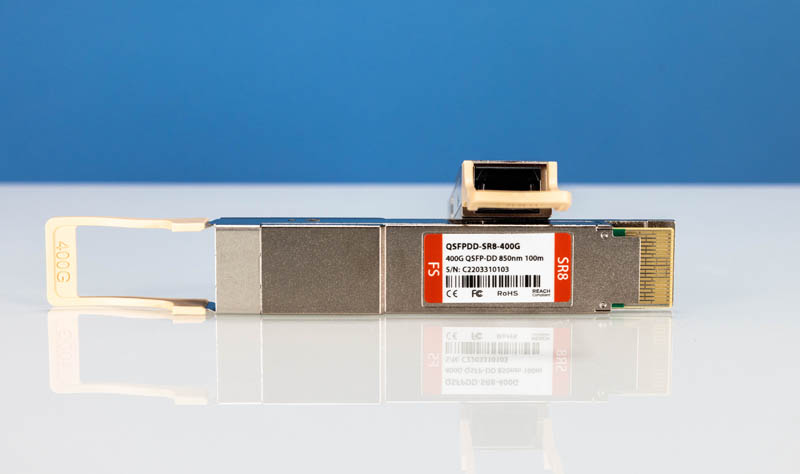

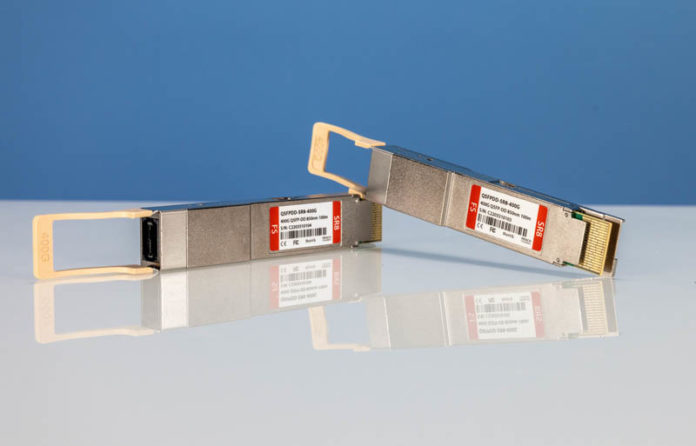

The new 400Gbps optics come in a new form factor, QSFP-DD. These are the modules.

The modules utilize the Inphi 850nm chip. At 400Gbps speeds, the choice of DSP becomes a big deal. Inphi, a company known for its optical PHYs, was acquired by Marvell.

These are the “lower-cost” 400GbE optics. Currently, they sell for around $499 each. That is more than the 100Gbps and 200Gbps generation optics. At the same time, these carry 2-4x the bandwidth per port, so it is no surprise that they cost more and use more power.

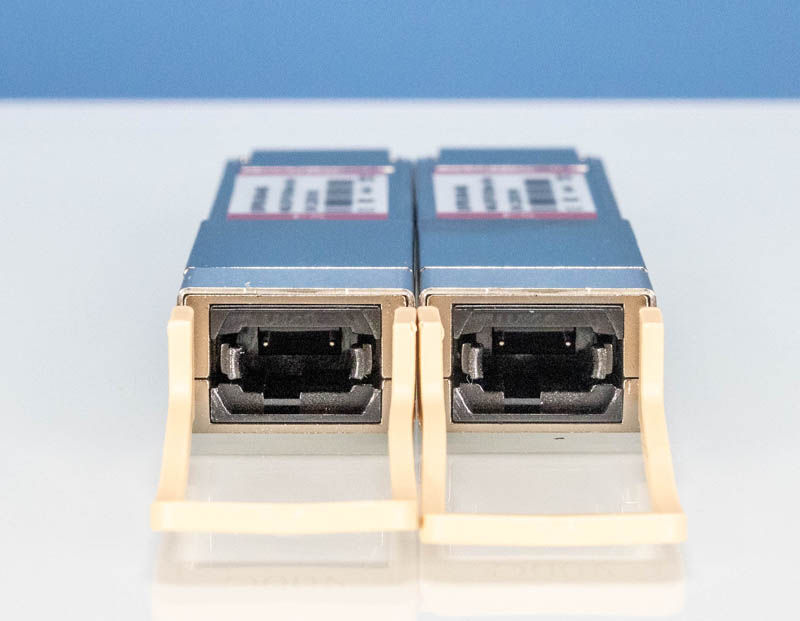

One of the other differences with this generation of short-range optics is the format. These are 400Gbase-SR8 optics. As a result, they utilize MPO/MTP-16 APC fiber.

Using MPO/MTP-16, these transceivers have a range of 70m over OM3 and 100m over OM4 multi-mode fiber.
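As a rough illustration of the figures above, here is a minimal Python sketch that checks a planned cable run against the quoted reach and works out the per-lane rate. The helper name `link_within_reach` is hypothetical. The split of the 16 fibers into 8 transmit and 8 receive lanes reflects our understanding of the SR8 format rather than anything measured here, and the per-lane figure is a nominal data rate that ignores encoding overhead.

```python
# Reach figures quoted in the article for 400Gbase-SR8, in meters.
REACH_M = {"OM3": 70, "OM4": 100}

# Assumption: MPO/MTP-16 carries 8 transmit + 8 receive fibers for SR8.
FIBERS = 16
LANES_PER_DIRECTION = FIBERS // 2
# Nominal data rate per lane, ignoring FEC/encoding overhead.
GBPS_PER_LANE = 400 / LANES_PER_DIRECTION


def link_within_reach(fiber_type: str, length_m: float) -> bool:
    """Return True if a run of the given fiber type fits the quoted reach."""
    if fiber_type not in REACH_M:
        raise ValueError(f"no reach figure for fiber type {fiber_type!r}")
    return length_m <= REACH_M[fiber_type]


if __name__ == "__main__":
    print(f"{LANES_PER_DIRECTION} lanes per direction at {GBPS_PER_LANE:.0f} Gbps each")
    for fiber, length in [("OM3", 85), ("OM4", 85)]:
        verdict = "OK" if link_within_reach(fiber, length) else "too long"
        print(f"{length} m over {fiber}: {verdict}")
```

In this sketch an 85m run would be too long for OM3 but fine for OM4, which is the kind of check worth doing before ordering trunk cables.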

Final Words

We were able to get 400Gbps speeds over these optics, but it was a bit of a challenge. Perhaps the biggest challenge was having test gear to be able to push 400Gbps over the pair. For that, FS let us borrow the N9510-64D. This is the company’s 64-port 400GbE switch.

Something that is really striking about the N9510-64D platform with these optics is just how much power is used by a modern switch. Populating all 64 ports with even these short-range optics, at roughly 10W per 400Gbase-SR8 module, adds up to 640W of power for the optics alone, and that does not include the switch itself.
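The arithmetic behind that optics power figure can be sketched in a few lines of Python. The port count and the roughly 10W per module are the figures used above; the function names are hypothetical, and actual module draw will vary with vendor and operating conditions.

```python
# Back-of-the-envelope optics power budget for a fully populated switch.
PORTS = 64               # N9510-64D port count
WATTS_PER_MODULE = 10.0  # approximate per-module figure used above
GBPS_PER_PORT = 400


def optics_power_watts(ports: int, watts_per_module: float) -> float:
    """Total power drawn by the optics alone, excluding the switch."""
    return ports * watts_per_module


def optics_watts_per_gbps(ports: int, watts_per_module: float, gbps_per_port: float) -> float:
    """Optics power divided by the aggregate port bandwidth."""
    return optics_power_watts(ports, watts_per_module) / (ports * gbps_per_port)


if __name__ == "__main__":
    total = optics_power_watts(PORTS, WATTS_PER_MODULE)
    per_gbps = optics_watts_per_gbps(PORTS, WATTS_PER_MODULE, GBPS_PER_PORT)
    print(f"Optics power: {total:.0f} W")
    print(f"Optics power per Gbps: {per_gbps * 1000:.0f} mW")
```

Changing `WATTS_PER_MODULE` or `PORTS` makes it easy to see how a partially populated chassis, or a different class of optic, shifts the total.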

We will have more on the N9510-64D in the next few weeks when we have our review of that platform. Stay tuned!

On the subject of heat/power: what does the curve look like in terms of speed vs. energy? My naive expectation is that you’d see some efficiency gains as you go faster at the low end (barring, perhaps, exotic ultra-slow links for long-term IoT stuff): one 10GbE link vs. ten 1GbE links, that sort of thing. Is there then a chunk of roughly linear relationship, followed by a very high end where incremental performance gains start costing vastly more energy?

Obviously the complexities of actually designing the cooling system go up significantly with density; but I don’t really have a sense of how the power cost of capacity varies at different speeds.

“These are the “lower-cost” 400GbE optics. Currently, they sell for around $499 each.”

In my data center engineer / Linux sys admin days, I wouldn’t have put these in a parts bin on the shelf (they would have gone in my locking parts cabinets). // Keeping honest people honest

Although less efficient, I would like to see the development of dual (or multi) step-frequency micro diffraction gratings: gratings that modulate the 0th, 1st, 2nd… order diffracted steps (reflections) for different wavelengths so that they result in a similar final placement, given the same incident angle upon the grating. One example is a dual-frequency reflection grating that has reduced-size steps (e.g. double the frequency, thus half the height and length, or perhaps a non-linear progression) layered over the larger repeating steps (the lower layer) and placed along the inside corners of the primary, larger steps, resulting in a top, secondary, higher-frequency diffraction pattern layer. The completed single grating would then have both a high- and a low-frequency component over its entire surface, with the higher-frequency step pattern partially overlaid upon the primary (lower) frequency step pattern, perhaps piezo controlled. By design it would, for example, nominally reflect shorter-wavelength light off the high-frequency upper pattern layer, while transmitting longer-wavelength light to the second pattern layer to reflect off the lower step-frequency grating.