In 2012 ServeTheHome grew by 8.5% month-over-month over the entire year. In fact, it just about doubled between July 2012 and December 2012 and is looking strong in 2013, already setting two new all-time daily visitor records. That has made the site a very busy place. Likewise, the STH Forums have doubled in visitors and seen about 5x the weekly forum activity between January 2012 and January 2013. The sites are hosted completely separately. This growth has also led to a series of infrastructure upgrades at STH. Suffice it to say, it seemed like there was a quarterly mini-crisis due to the visitor growth and the added complexity of the site. Here is a quick recap of the major upgrades on the main site over the past year:

- January 2012: traffic was served by one Amazon EC2 t1.micro instance (612MB RAM, very little CPU). This was after using a small virtual private server (VPS), and the instance later grew to an Amazon EC2 m1.small instance (1.7GB RAM, 1 virtual core, 1 EC2 compute unit).

- June/July 2012: we expanded our CloudFront content delivery network usage to take load off of the servers.

- October 2012: the switch was made to nginx and an Amazon m1.medium instance (3.75GB RAM, 1 virtual core, 2 EC2 compute units). We also transitioned to a 64-bit server from 32-bit.

- December 2012: disk I/O overcame the server during a particularly busy period. Check the Scalyr Blog’s post on this, but Amazon cloud performance is known to vary.

- January 2013: a simple script overloaded the main site’s server I/O.

One item we look at very seriously is the amount of time it takes to load pages. ServeTheHome served millions of pages in 2012, so saving 0.6s per page across 1 million pages adds up to about a week of aggregate visitor time. Frankly, the current infrastructure is not working.
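As a quick sanity check on that figure, here is a minimal sketch of the arithmetic. The function name is made up for illustration; the 0.6s and 1 million page inputs come from the paragraph above.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def aggregate_time_saved_days(seconds_saved_per_page: float, pages_served: int) -> float:
    """Total visitor time saved, in days, across all page loads."""
    return seconds_saved_per_page * pages_served / SECONDS_PER_DAY


days = aggregate_time_saved_days(0.6, 1_000_000)
print(f"{days:.2f} days")  # 6.94 days, i.e. roughly a week
```

Since the site served millions of pages, the real total would scale up in proportion to the page count.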

It became time to stop running the bare minimum configuration and start overbuilding infrastructure to avoid these issues on a regular basis. In the October time frame, just before moving to the Amazon EC2 m1.medium instance with nginx, I started getting serious about colocating a server for ServeTheHome. Another major consideration here is that memory costs an absolute ton in the VPS and cloud hosting world, so moving beyond 3.75GB was going to cost a lot. The plan was to transition the forums, then transition the main site once everything was stable.

Why STH Stayed on the Cloud through 2012

The big issue with the original plan was that it involved colocating one server. If that server experienced a failure, the site would likely be down for a significant amount of time while a replacement was rushed in and the restoration process ran its course. That is precisely when the decision not to colocate was solidified. Single server colocation would be inexpensive, but would be full of risk. If some issue occurs in the massive Amazon AWS data center, downtime does occur. The instance goes down but will come back up on another node in a relatively timely fashion. That is not as big a deal for this type of site versus, say, one offering emergency services.

Cloud hosting is great. One can make backups in the cloud very easily. Someone else manages all of the hardware and network side of things. Life is easy on Amazon EC2.

We did consider moving to an HA Amazon EC2 setup with 2x Amazon RDS databases, a load balancer and 2x front end instances. In that situation there would need to be some additional transfer between nodes, so scaling down instances would not be an option. The cloud is cheap, right? Not exactly. The four core web/DB instances would need to be online 24×7, which means Amazon Reserved instances are the way to go. 2x m1.medium + 2x m1.small instances cost $1,800 to reserve up front. That is without any elastic scaling. Monthly charges in this configuration would be just shy of $250 given January 2013 bandwidth run rates, not accounting for growth or higher priced CDN endpoint servicing. The site mostly serves data rather than consuming it (it is about a 30:1 ratio for those wondering), so the fact that Amazon does not charge for inbound traffic does not help much.
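To see how the up-front reservation and the monthly charges combine, here is a rough sketch. The 12 and 36 month horizons are assumptions for illustration (the reservation term for this configuration is not stated here), and $250 is used as an upper bound for the "just shy of $250" monthly figure.

```python
def ha_total_cost(months: int, upfront: float = 1800.0, monthly: float = 250.0) -> float:
    """Cumulative spend on the HA setup after `months` months, with flat traffic."""
    return upfront + monthly * months


# Assumed horizons: one year and three years.
for months in (12, 36):
    total = ha_total_cost(months)
    print(f"{months} months: ${total:,.0f} total, ${total / months:,.2f}/month effective")
```

Under these assumptions the effective monthly cost lands between $300 and $400, before any traffic growth.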

$80/month colocation is looking good, except for that redundancy issue…

When it comes to hosting, spending $20/month plus $600 for a high-utilization reserved instance gets one 2 EC2 compute units. Wonder what that equals? From Amazon’s description, that puts 1 EC2 compute unit at about the same speed as an Intel Xeon 5110 Woodcrest CPU. Yikes!

One EC2 Compute Unit provides the equivalent CPU capacity of a 1.0-1.2 GHz 2007 Opteron or 2007 Xeon processor. This is also the equivalent to an early-2006 1.7 GHz Xeon processor referenced in our original documentation. Over time, we may add or substitute measures that go into the definition of an EC2 Compute Unit, if we find metrics that will give you a clearer picture of compute capacity.

Interestingly enough, a dual L5520 1U machine costs around $450 today (e.g. these Dell C1100 servers). The Intel Xeon L5520 is a 60W TDP CPU (compared to 65W for the Xeon 5110) and has four cores plus Hyper-Threading. Put that at 8-10x faster. Of course, there is a lot more that goes into it, but it is an interesting comparison nonetheless. For those crying foul, we are going to do a full breakdown of the hardware setup for the site, so don’t worry about the numbers here too much; a full accounting will come shortly.

Why STH is Leaving the Cloud

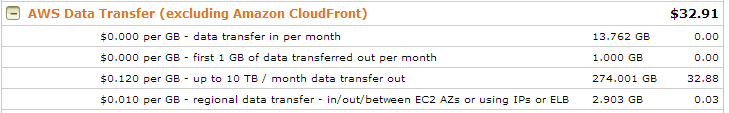

Amazon’s AWS service is very reasonable when one has a small blog. A few visits an hour is not an issue for even their “free” micro instance hosting plan. Just looking at STH’s bandwidth for the first 10 days of 2012, the site has already delivered a few hundred GB of content. The new 2013 site re-design offers a lot more functionality, but does require a lot more bandwidth and more CPU. Here is a quick rundown of why Amazon EC2 hosting is expensive (not exact figures, but this gives a good idea, taken from a few months ago):

- 600GB bandwidth @ $0.12/GB = $70/mo

- Disk I/O @ $0.10/1M I/O = $15/mo

- EBS Storage and Snapshots = $65/mo

So while you can purchase an m1.medium heavy utilization (since it will be on 24×7 as a web server) reserved instance for $600 and have an operating cost of $20 or so a month for three years, that is not the whole picture. The bandwidth and disk I/O costs go up linearly with traffic. It is hard to see exactly where the bandwidth goes because Amazon controls the firewall and network.
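Putting the rough figures above together, here is a minimal sketch of the effective monthly bill. It spreads the $600 reservation evenly over the three-year term, which is a simplification, and the variable names are made up for illustration.

```python
# Approximate monthly usage charges from the rundown above (not exact figures).
MONTHLY_USAGE = {
    "bandwidth": 70.0,          # ~600GB at $0.12/GB
    "disk_io": 15.0,            # at $0.10 per 1M I/O requests
    "ebs_and_snapshots": 65.0,
}

RESERVED_UPFRONT = 600.0        # m1.medium heavy utilization reservation
RESERVED_TERM_MONTHS = 36       # three-year term
INSTANCE_MONTHLY = 20.0         # "$20 or so a month" operating cost


def effective_monthly_cost() -> float:
    """Usage charges plus instance cost, with the reservation amortized evenly."""
    usage = sum(MONTHLY_USAGE.values())
    amortized_reservation = RESERVED_UPFRONT / RESERVED_TERM_MONTHS
    return usage + amortized_reservation + INSTANCE_MONTHLY


print(f"Usage charges: ${sum(MONTHLY_USAGE.values()):.2f}/mo")
print(f"Effective total: ${effective_monthly_cost():.2f}/mo")
```

With these inputs the usage charges come to about $150 a month, several times the amortized cost of the instance itself.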

Storage is something fairly easy to control, but one does need to keep an eye on it. Amazon charges $0.10/GB for storage and $0.095/GB for snapshot data, so having a few development instances around can cause a sizable monthly charge even if they are turned off and the data is just stored for a later date.

Let’s put that into perspective for a second:

- Colocation cost per Mbps (unmetered): ~$2 per 1 Mbps, or $2 for a maximum of ~320GB transferred in a month per Mbps.

- Raw disk cost using SSD: $1.80/GB in RAID 1. That means two full SSDs in RAID 1 would pay for themselves in 18 months… except that disk I/O can cost quite a bit.

- Add in 2x 3TB drives in RAID 1 for backups and that adds another $0.105/GB at current pricing. Note this is not monthly.

- Disk I/O: $1 per 10M I/O requests. A modern SSD can put out well over 10,000 IOPS; at roughly 50,000 IOPS, that is $5 for 17 minutes of SSD disk I/O time.
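The numbers in this list can be checked with a short sketch. It assumes a 30-day month and decimal units (1 GB = 1,000 MB), and the function names are made up for illustration. Note that the $5 for 17 minutes figure corresponds to roughly 50,000 IOPS; at exactly 10,000 IOPS the same 17 minutes would cost about $1.

```python
SECONDS_PER_MONTH = 30 * 24 * 60 * 60  # assumption: 30-day month


def gb_per_month_per_mbps() -> float:
    """Maximum data moved in a month by a saturated 1 Mbps link."""
    megabits = 1 * SECONDS_PER_MONTH
    return megabits / 8 / 1000  # megabits -> megabytes -> gigabytes


def ssd_payback_months(ssd_cost_per_gb: float = 1.80, ebs_per_gb_month: float = 0.10) -> float:
    """Months of EBS storage charges needed to equal the SSD purchase price."""
    return ssd_cost_per_gb / ebs_per_gb_month


def io_cost_dollars(iops: int, minutes: float, price_per_million: float = 0.10) -> float:
    """Cost of sustained disk I/O at $0.10 per 1M requests ($1 per 10M)."""
    requests = iops * minutes * 60
    return requests / 1_000_000 * price_per_million


print(f"{gb_per_month_per_mbps():.0f} GB per Mbps per month")  # 324 GB
print(f"{ssd_payback_months():.0f} months to pay back the SSDs")
print(f"${io_cost_dollars(10_000, 17):.2f} for 17 minutes at 10,000 IOPS")
print(f"${io_cost_dollars(50_000, 17):.2f} for 17 minutes at 50,000 IOPS")
```

The bandwidth figure comes out slightly above the ~320GB quoted because a saturated link over a full 30-day month is the theoretical maximum.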

At this point colocation seems like a no-brainer. It turns out that moving away from AWS and EC2 does start to make sense at some point. After going through the process, this is much later than one may have thought.

Time to Fall… A Quick Recap

Overall, Amazon has an excellent platform; however, for web hosting, costs can quickly add up. There are certainly advantages to going the AWS route. We will have the opportunity to look at the flip side of the equation, colocation, shortly. One of the major points here is that the $1,800 up front + $250/mo costs are only using the January 2013 projections. As the site’s traffic grows, those costs go up in a similar fashion. For many applications, the cloud lets you get scale quickly. For things like hosting a relatively static site, the cloud just doesn’t make sense. This is part 1 in the series documenting our build-out.

This line of articles definitely interests me! Temporary websites, elastic computing needs, these are wonderful for using the cloud to host. Long term, static sites with medium-large amounts of traffic don’t make sense in the cloud world. The costs are skewed against that usage model. A lot of people don’t realize that and just assume the cloud is always cheaper.

http://www.supermicro.com/products/system/2U/2027/SYS-2027TR-H71RF_.cfm

Seems like it might work for you. Only downside is that the backplane to the HDD’s is a SPOF.

This is going to be an epic series. Hopefully vendors help you out.

Can’t wait. Long time reader.

Found same thing, cloud not always cheap for web site hosting.

Very close actually. Using a 2U Twin2 derivative but with 3.5″ trays and not as fancy as that one (we do run on budgets.)

I seen other artikle on this. Good luck. Artikle says 20%-30% util. is payoff for owning hardware.

Come on Patrick. Everybody knows the cloud is always cheaper. I read it every day on the Internet.

And do not try to muddy the water with your numbers, facts and rational discussion.

That is not fair!

/snark

Great article. Love the new look.

Let’s not forget that on the cloud, if you have 10TB of data, and you want to transfer it to a new cloud, that’s at least $1,200 just to get your data OFF of THEIR servers. Plus, if ya use their AMI, it is so custom that you gotta spend lots to change.

More details plz

Have you considered an alternative VPS cloud provider such as http://www.digitalocean.com? Seems cheaper than AWS.

Always suspected for things like WordPress hosting, using AWS didn’t make sense. Looks like you came to the same conclusion.

I have certainly looked at VPS. The issue is that we will be at the $80/mo plan for Digital Ocean in the next three months (so would just start there.) Two instances for HA then add another two instances for the forums. Financials to come. VPS is good, and STH ran on a VPS prior to AWS.

I’m happy that you’re staying very transparent about internal things Patrick :-)

Trying to stay as transparent as possible. The goal is to make this a resource for everyone. Sometimes I have to make decisions like this just to keep the site running. This should improve availability while at the same time providing some level of control in terms of costs which are on a not-sustainable trajectory. Right now, the architecture is scalable, but not too fast and not engineered for up time.

I will say, other than a few minor things here and there, AWS is a great platform.

Wait but I thought The Cloud™ was magical. A magical place that’s always better than the way we used to do it no matter what on account of the high degree of magic involved.

Odditory. Good to see that you visit this site as well! Your media server builds on [H]Forums and on AVSForums have more or less redefined budget mass-storage.

Nice of you to say, I’ve been on STH’s forum a long time actually, stop by some time!