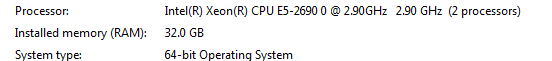

As I mentioned previously, the new Sandy Bridge Xeons are fast, especially with two high-end Xeon E5-2690s in a single system. In the previous dual Intel Xeon E5-2690 piece I noted that I had yet to test the larger Folding@Home work units or get new power consumption figures for the machines. Today I am publishing a short update to that piece.

Test Setup

For this test setup I again used some pre-production parts. Compared to the earlier Xeon E5 CPU samples I have seen, these seem to be very close to retail silicon. (In fact my retail silicon Intel Xeon E5-2690 CPUs are virtually identical to what is presented below.)

- CPUs: 2x Intel Xeon E5-2690 CPUs (these seem to be very close to the shipping parts)

- Motherboard: Tyan dual socket LGA 2011

- Memory: 8x 4GB Kingston unbuffered ECC 1333MHz DIMMs

- SSDs: Corsair Force3 120GB, OCZ Vertex 3 120GB, 2x OCZ Agility 3 120GB

- Power Supply: Corsair AX850 850W 80 Plus Gold

- Chassis: Norco RPC-4220

- Cooling: 2x CoolerMaster Hyper 212 EVOs

- Operating System: Ubuntu 10.10

Overall this ended up being a nice test platform, though I would not call it perfect. The Kingston memory used was only 1333MHz CL9, whereas we will be seeing a lot of 1600MHz CL11 soon. From what I can tell, the increased bandwidth will more than offset the increased latency.

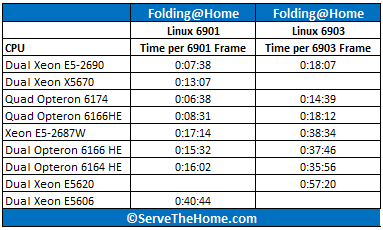

Folding@Home 6903 Work Unit Update

I am working to make Folding@Home a standardized benchmark, as it is a mature distributed computing client that runs very well in Linux. There is quite a bit of variability here, but the very interesting thing is that you can watch speeds scale nearly linearly with clock speed and core count (assuming the same architecture). This scaling occurs not just with Intel CPUs, but also with AMD Opteron CPUs in single to quad socket configurations. It should be noted that on the Opteron side, Folding@Home is one of those applications that favors the Magny-Cours architecture over AMD’s Interlagos/Bulldozer architecture when it comes to performance per watt. It turns out the newer 16 core Opterons are not as efficient as the older 12 core units, even with the jump from 45nm to 32nm.

With F@H, times are generally measured in TPF, which stands for Time Per Frame: the amount of time it takes to complete each frame, or 1% of the work unit. Some tweaking is needed for each setup, as things like NUMA settings must be adjusted for the client to run optimally.
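Since TPF covers 1% of a work unit, it converts directly into a full work unit time, which makes it easier to compare systems. The sketch below shows that arithmetic. The function names and the `"10:00"` and `"20:00"` TPF values are made up for illustration and are not measurements from any of the systems tested here.

```python
FRAMES_PER_WORK_UNIT = 100  # each frame is 1% of the work unit


def parse_tpf(tpf: str) -> int:
    """Convert a TPF string such as 'MM:SS' or 'HH:MM:SS' to seconds."""
    seconds = 0
    for part in tpf.split(":"):
        seconds = seconds * 60 + int(part)
    return seconds


def work_unit_hours(tpf: str) -> float:
    """Estimate hours to finish a whole work unit at a steady TPF."""
    return parse_tpf(tpf) * FRAMES_PER_WORK_UNIT / 3600


def speedup(baseline_tpf: str, candidate_tpf: str) -> float:
    """How many times faster the candidate is than the baseline."""
    return parse_tpf(baseline_tpf) / parse_tpf(candidate_tpf)


if __name__ == "__main__":
    # Hypothetical TPF values, used only to show the conversion.
    print(f"{work_unit_hours('10:00'):.1f} hours per work unit")
    print(f"{speedup('20:00', '10:00'):.1f}x faster")
```

A lower TPF is better, so halving TPF halves the time to complete the work unit.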

Overall, the dual Intel Xeon E5-2690 system really shows off Intel’s new flagship in a positive light. I did not have both 6901 and 6903 units for all setups, but I did have them for the majority. I think there are a few really interesting data points in the table. First, the dual Intel Xeon E5-2690 system was able to complete the work units in times similar to the quad Opteron 6166 HE system. The bigger 6903 Folding@Home work units were not out when I had the dual Xeon X5670s, but it is interesting to note that the Xeon E5-2690s are a lot faster.

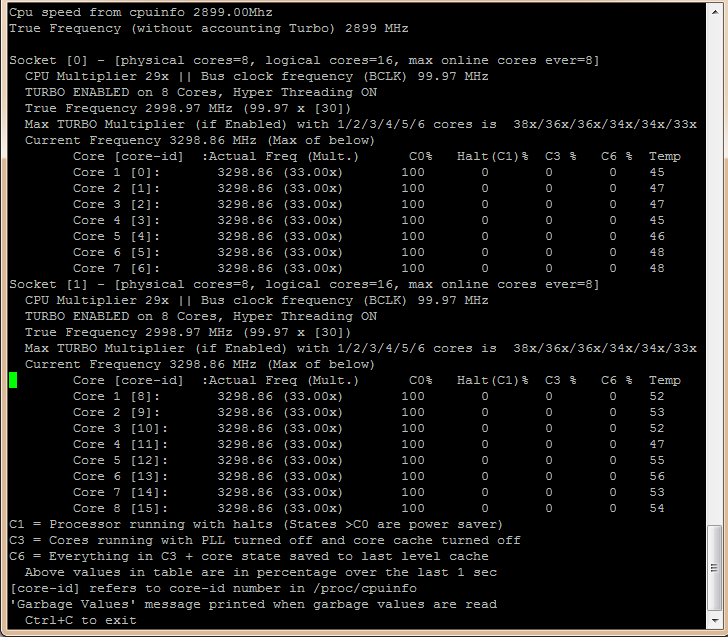

One other very interesting note is that the chips run at 3.3GHz across all eight physical cores, per processor, under the stressful workload. For those wondering, in Linux you can use i7z to see the clock speed of these CPUs. I did (of course) try to get the CPUs to hold a 38x multiplier in turbo mode, which is the single core max turbo, but trying that led to all cores operating at 2.9GHz until a power cycle of the machine. These observations come after 12 hours of the CPUs working on the 6903 work units, so components are thoroughly heat soaked. Note that the 2x CoolerMaster Hyper 212 EVOs are doing a great job here.
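For readers less familiar with multipliers, the clock speeds above are just the multiplier times the base clock, which is 100MHz on Sandy Bridge. The small sketch below maps the multipliers mentioned in this section to clock speeds. The function name and labels are illustrative only, and this does not read anything from the hardware; a tool like i7z is still needed to see what the cores are actually doing.

```python
BCLK_MHZ = 100  # Sandy Bridge base clock


def core_clock_ghz(multiplier: int, bclk_mhz: float = BCLK_MHZ) -> float:
    """Core clock in GHz for a given multiplier and base clock."""
    return multiplier * bclk_mhz / 1000


if __name__ == "__main__":
    # Multipliers taken from the clock speeds discussed in the text.
    for label, multiplier in (
        ("single core max turbo", 38),
        ("all-core turbo seen under load", 33),
        ("base clock", 29),
    ):
        print(f"{label}: {multiplier}x -> {core_clock_ghz(multiplier):.1f} GHz")
```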

Power Consumption

As I stated in the original piece, I purchased an Extech 380803 True RMS power analyzer, which is a really nice unit that even records usage over time. The test system mentioned above uses five 120mm fans, which consume a lot of power themselves, and a motherboard with IPMI 2.0 enabled. With that configuration I was consistently seeing idle power consumption in the 120W range and maximum power consumption just shy of 400W. I need to get a few more systems on the Extech, but I did want to say that, unless one needs the horsepower of these CPUs, there is a really good case to be made for using lower-power parts. My Sandy Bridge based Intel Xeon E3-1230 test system idles around 37W and maxes out around 107W, which I think is really interesting. I am eagerly awaiting the new Ivy Bridge Xeons because Ivy Bridge promises to reduce power consumption even further than the Sandy Bridge series.

Licensing costs aside, from a power consumption perspective, the sixteen physical core dual Intel Xeon E5-2690 setup used a bit under four times as much power under load as the four physical core Xeon E3-1230 system (just shy of 400W versus about 107W). Very interesting result indeed! If one can split the work across separate systems, going the Xeon E3-1230 route yields about the same total power consumption for the same number of cores, but one can build four complete Xeon E3-1230 servers for the price of just the two Xeon E5-2690 CPUs. There are certainly situations where the Sandy Bridge-EP parts are going to be less optimal than their Sandy Bridge and Ivy Bridge Xeon E3 cousins. Both E3 variants (Sandy Bridge and Ivy Bridge) are meant for lower power physical servers rather than the big iron systems that are the target of the Xeon E5 series. It will be very interesting when the Intel Xeon E5 series migrates to the 22nm tri-gate manufacturing process debuting with Ivy Bridge, as that should lower power consumption by quite a bit.
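The comparison above can be worked through with the measured figures. This is a rough sketch only: the dual E5-2690 load figure is rounded up to 400W from "just shy of 400w", the class and field names are my own, and whole-system wall power divided by core count is a crude metric because it includes fans, drives, and the motherboard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PowerReading:
    name: str
    cores: int
    idle_watts: float
    load_watts: float

    def load_watts_per_core(self) -> float:
        """Whole-system load power divided by physical core count."""
        return self.load_watts / self.cores


# Figures from the Extech readings above; 400W is a rounded-up assumption.
dual_e5 = PowerReading("Dual Xeon E5-2690", cores=16, idle_watts=120, load_watts=400)
e3 = PowerReading("Xeon E3-1230", cores=4, idle_watts=37, load_watts=107)

load_ratio = dual_e5.load_watts / e3.load_watts
four_e3_load = 4 * e3.load_watts  # four E3 boxes match the E5 core count

if __name__ == "__main__":
    print(f"Load power ratio (E5 system / E3 system): {load_ratio:.2f}x")
    print(f"Four E3-1230 systems under load: {four_e3_load:.0f} W")
    for system in (dual_e5, e3):
        print(f"{system.name}: {system.load_watts_per_core():.2f} W per core under load")
```

On these numbers the two approaches land within a couple of watts per core of each other under load, which is why price and the ability to split the workload end up being the deciding factors.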

Please suggest the best PSU for:

- 2x Intel Xeon E5-2690

- X79 ATX motherboard

- 2x Cooler Master Hyper X CPU coolers

- 2x NVIDIA Quadro M2000

- 8x 16GB DDR3 server memory

- 500GB M.2 NVMe SSD

- 2TB SATA 7200RPM HDD

- DVD writer

- 5x 120mm RGB cooling fans

- Fan controller

- Wireless keyboard and mouse

- 27″ IPS monitor