This article will walk you through the process of building and flashing custom firmware to a re-labeled Mellanox Infiniband card from Dell, Sun, or HP. With the custom firmware, you will finally have RDMA in Windows Server 2012, increasing your file sharing throughput to 3,280MB/Second and nearly 250,000 IOPS. A common issue these days is that users of OEM Mellanox Infiniband cards do not have access to firmware revisions enabling RDMA. The performance benefit is significant.

What, no RDMA from my Infiniband Card in Windows Server 2012?

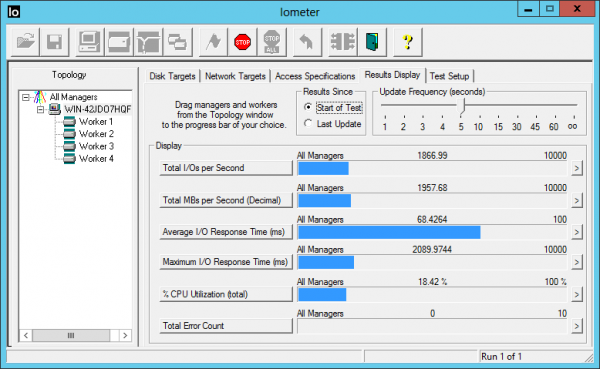

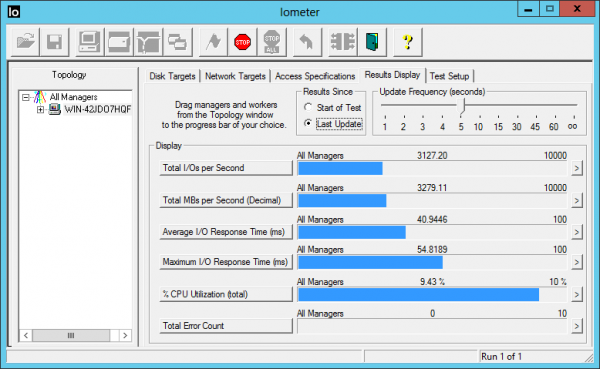

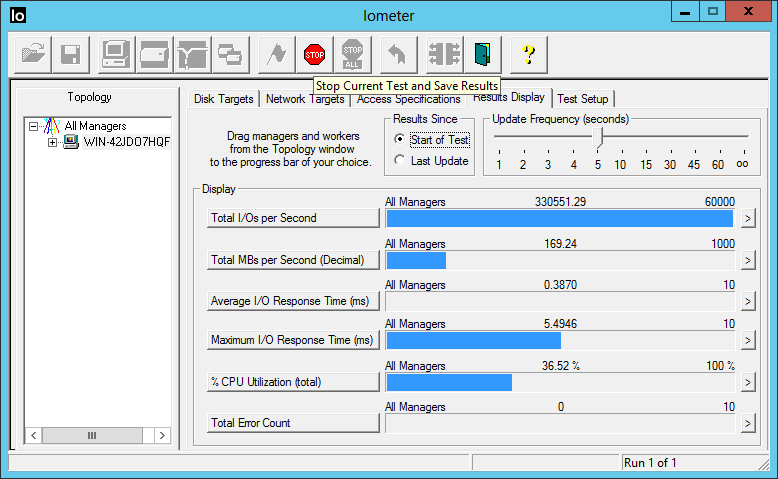

Seeking better file sharing performance, you install a Dell, Sun, or HP branded 40 Gigabit InfiniBand card in your Windows Server 2012 machine, one of the cards based on the Mellanox ConnectX-2 hardware. The latest Microsoft OS includes a built-in IPoIB driver for these cards, so it looks like you are ready to go with just a reboot. You assign an IP address, configure your shares, and then test throughput. You run an IOMeter benchmark from a client machine to your file server and see results like the screenshot below – fast but not fast enough.

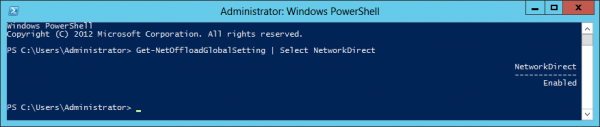

The test result of 1,958MB/s means that your 40 Gigabit card is delivering only around 15 Gigabits. So what is happening to all of that bandwidth you bought? To diagnose, you break out a PowerShell window. A quick Get-NetOffloadGlobalSetting shows that NetworkDirect is enabled, which means that you can use RDMA – if your card supports it.

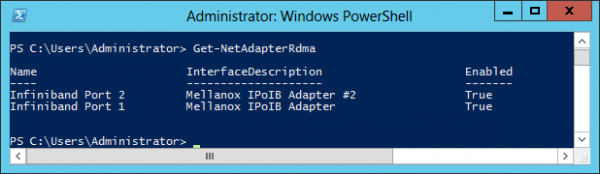

Running Get-NetAdapterRdma shows that the card itself is configured to use RDMA. So why isn’t it working?

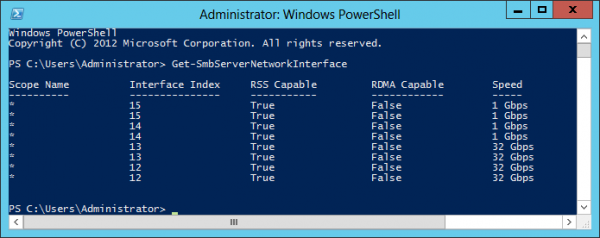

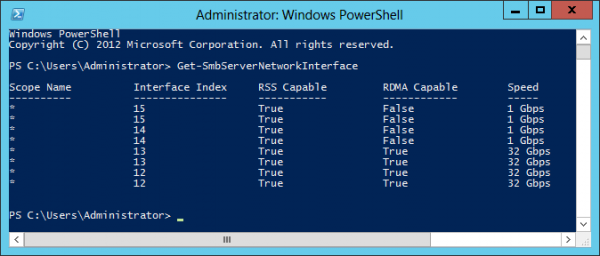

Even with a properly configured system – and Windows Server 2012 is properly configured by default – you won’t actually get RDMA if the firmware on your card doesn’t play well with the OS. To complete your diagnosis, the critical PowerShell query is Get-SmbServerNetworkInterface, which (see below) clearly shows that our InfiniBand card is not capable of IPoIB RDMA with Windows Server 2012. There is more detail in the Windows logs, but we don’t need it; we already know what’s going on.
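If you have more than a couple of interfaces, it can help to check the RDMA flag in a script instead of by eye. The following is a minimal sketch, assuming you export the query with `Get-SmbServerNetworkInterface | ConvertTo-Json` and that the objects expose `InterfaceIndex`, `IpAddress`, and `RdmaCapable` properties. The property names and the sample data are assumptions for illustration, so compare them with your own output before relying on this.

```python
import json


def non_rdma_interfaces(json_text):
    """Return the interface entries whose RdmaCapable flag is false or missing."""
    data = json.loads(json_text)
    # ConvertTo-Json emits a bare object (not a list) when there is one interface.
    if isinstance(data, dict):
        data = [data]
    return [nic for nic in data if not nic.get("RdmaCapable", False)]


# Hypothetical sample data, not captured from a real server.
sample = """[
  {"InterfaceIndex": 12, "RdmaCapable": false, "IpAddress": "10.0.0.1"},
  {"InterfaceIndex": 15, "RdmaCapable": true, "IpAddress": "10.0.1.1"}
]"""

for nic in non_rdma_interfaces(sample):
    print(f"Interface {nic['InterfaceIndex']} ({nic['IpAddress']}) is not RDMA capable")
```

An empty result would mean every listed interface reports RDMA capability.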

Stale Firmware is the Problem

While our card promises RDMA on the spec sheet, it turns out that you need Mellanox firmware version 2.9.8350 (or greater) in order to use it on Windows Server 2012. Jose Barreto has the details right here. You can verify the firmware version for your card in a number of ways. Perhaps the easiest is the Windows device manager, as shown in the screenshot below, which shows a card with firmware version 2.9.1000 that does not support RDMA.

Can’t we just download a Firmware updater?

Mellanox provides (as of this writing) firmware version 2.10.720 in its Windows 2012 installer, but that installer will not update third-party re-labeled cards. The latest firmware version available from Dell and HP is 2.9.1000, which does not support RDMA. I have a few Sun cards as well, and they arrived running version 2.7.8130. Without updated firmware, we cannot use RDMA, but the vendors have not (so far) updated their installers.
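Comparing these version numbers by eye is error-prone, because 2.10.720 is newer than 2.9.8350 even though it sorts lower as plain text. Here is a small sketch that compares versions field by field against the 2.9.8350 minimum mentioned above. The function names are my own, and the check only looks at the version number, not at anything else that might affect RDMA support.

```python
def parse_fw_version(text):
    """Turn a dotted firmware version such as '2.10.720' into a tuple of ints."""
    return tuple(int(part) for part in text.strip().split("."))


# Minimum firmware version for RDMA on Windows Server 2012, per the article.
MIN_RDMA_FW = parse_fw_version("2.9.8350")


def supports_rdma(fw_version):
    """True when the version is at or above the minimum, compared numerically."""
    return parse_fw_version(fw_version) >= MIN_RDMA_FW


for version in ("2.7.8130", "2.9.1000", "2.10.720"):
    verdict = "new enough for RDMA" if supports_rdma(version) else "too old"
    print(version, "->", verdict)
```

Parsing to integers also means that 2.10.0720, as some tools print it, is treated the same as 2.10.720.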

Custom Firmware is the Solution

Fortunately, there is a solution: create and burn your own firmware. It is actually much easier than it sounds. Your first time may take 30 minutes. After that, it’s a two-minute procedure at most. We will begin with the Infiniband card installed, using the built-in Microsoft driver, and with IP address information configured, as above.

Steps to Create and Burn firmware version 2.10.720:

- Install Mellanox WinMFT

- Retrieve card Device ID

- Retrieve card Board ID

- Download .mlx file

- Download the .ini file

- Create and Burn new firmware using mlxburn

Firmware Burning Steps in Detail:

1) Install the Mellanox WinMFT software package. This gives us the tools we need in order to create and flash the firmware. At the time of writing, the latest version is 2.7.2 and the installer is named WinMFT_x64_2_7_2.msi.

2) Now we need to retrieve some information from your card. At a Windows prompt, run the command mst status to retrieve the PCI ID of your card. For my card, the ID is mt26428_pci_cr0, as shown below; yours will likely be the same unless you have multiple cards installed. By the way, the number 26428 is the Device ID (a product identifier) for the Dell mezzanine card. You may notice that it’s the same Device ID as some of the Sun and HP cards and a Mellanox ConnectX-2 dual-port QDR card, an indication that our Dell card is indeed a standard Mellanox product, albeit with Dell specific firmware.
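If you are scripting this for several machines, the Device ID can be pulled out of the device name. This is a minimal sketch that assumes names follow the `mt<DeviceID>_pci_cr<N>` pattern seen here; other naming forms are not handled, and the function name is my own.

```python
import re

# Assumed naming pattern: "mt", the Device ID, then "_pci_cr" and an index.
_MST_NAME = re.compile(r"^mt(\d+)_pci_cr(\d+)$")


def device_id_from_mst_name(name):
    """Extract the numeric Device ID from a name like 'mt26428_pci_cr0'."""
    match = _MST_NAME.match(name.strip())
    if match is None:
        raise ValueError(f"unrecognized mst device name: {name!r}")
    return int(match.group(1))


print(device_id_from_mst_name("mt26428_pci_cr0"))
```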

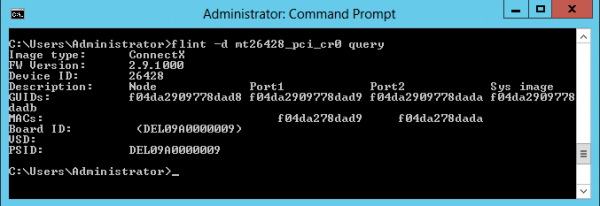

3) Now that you know your card’s PCI ID, we need to verify a few other card attributes. At the same command prompt, run the command flint -d <PCI ID> query (in most cases that will be flint -d mt26428_pci_cr0 query) and note your card’s Board ID. In the screenshot below, we see a Dell card which has a board ID of DEL09A0000009 (ignore the parentheses). Some of the Sun cards have Board ID SUN0170000009.

Flint Query Results
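The query output is a list of "Key: value" lines, so it is easy to pick out the Board ID and firmware version with a script. The sketch below is a rough parser, not a complete one. The sample text follows the output a reader posted in the comments of this article, and the handling of a "Board ID" line with parentheses is my assumption about how some tool versions print it.

```python
import re


def parse_flint_query(output):
    """Split 'Key: value' lines from flint query output into a dict."""
    fields = {}
    for line in output.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return fields


def board_id(fields):
    """Return the Board ID (PSID), dropping any surrounding parentheses."""
    raw = fields.get("PSID") or fields.get("Board ID") or ""
    in_parens = re.search(r"\(([^)]+)\)", raw)
    return in_parens.group(1) if in_parens else (raw or None)


sample = """\
Image type: FS2
FW Version: 2.9.1000
Device ID: 26428
PSID: MT_0D90110009
"""

fields = parse_flint_query(sample)
print(fields["FW Version"], board_id(fields))
```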

4) Download the raw firmware file to a folder on your Infiniband server. The raw firmware file is a large text file with a .mlx extension. I used a version 2.10.720 firmware file named fw-ConnectX2-rel.mlx that I retrieved from the Mellanox 4.2 driver installer for Windows 2012. You can download my firmware copy from right here. If you don’t want to download my version, you can extract your own firmware file from the Mellanox installer. Start the installer, leave it running, and look in the folder c:\users\<username>\appdata\local\temp for a file with the .mlx extension (thanks to ServeTheHome member seang86s for the tip).
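Hunting through the temp folder by hand gets tedious, since the installer unpacks into subfolders with generated names. As a rough sketch, the search can be automated with a recursive scan for .mlx files. The function name is my own, and it assumes the installer is still running so that its unpacked files are present.

```python
import tempfile
from pathlib import Path


def find_mlx_files(root=None):
    """Recursively list .mlx files under root (default: the user's temp folder)."""
    base = Path(root) if root is not None else Path(tempfile.gettempdir())
    return sorted(
        path
        for path in base.rglob("*")
        if path.is_file() and path.suffix.lower() == ".mlx"
    )
```

Calling `find_mlx_files()` with no argument scans the current user's temp folder, which on Windows is normally the appdata\local\temp location described above.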

5) Download the .ini file as well, and place it in the same folder as the .mlx firmware file. My version for the Dell PowerEdge C6100 mezzanine card is right here. The .ini file must match the Board ID, so you’ll need to name it DEL09A0000009.ini. Skip to step six if you downloaded my version. If you don’t want to use my .ini file, you can create your own by editing and renaming the .ini file for the equivalent non-relabeled Mellanox board. With the Mellanox installer still running, find the file named MHQH29C_A1-A2.ini in one of the c:\users\<username>\appdata\local\temp folders. Edit the contents so that (for a Dell card) the attribute Name = DEL09A0000009 and PSID = DEL09A0000009 and then change the file name to DEL09A0000009.ini. If you have a Sun ConnectX-2 based card, just replace the Dell Board ID with the Sun Board ID in both the file attributes and the file name.
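The edit described in this step can also be scripted. The sketch below rewrites every `Name =` and `PSID =` line to the target Board ID and saves the result under the matching file name. It assumes those two attributes each sit on their own line in the template; the helper names are hypothetical, and the sample values in the comments are placeholders rather than real file contents.

```python
import re
from pathlib import Path

# Matches a whole "Name = ..." or "PSID = ..." line and captures the part up to the value.
_ATTR_LINE = re.compile(r"^([ \t]*(?:Name|PSID)[ \t]*=[ \t]*)[^\r\n]*", re.MULTILINE)


def retarget_ini(ini_text, board_id):
    """Return ini_text with the Name and PSID values replaced by board_id."""
    return _ATTR_LINE.sub(lambda m: m.group(1) + board_id, ini_text)


def write_board_ini(template_path, board_id, dest_dir):
    """Write <board_id>.ini into dest_dir, based on the stock template file."""
    text = Path(template_path).read_text()
    dest = Path(dest_dir) / f"{board_id}.ini"
    dest.write_text(retarget_ini(text, board_id))
    return dest


# Example with placeholder content:
# retarget_ini("Name = EXAMPLE_NAME\nPSID = EXAMPLE_PSID\n", "DEL09A0000009")
```

Open the generated file afterwards and confirm that only the two intended lines changed before you burn anything with it.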

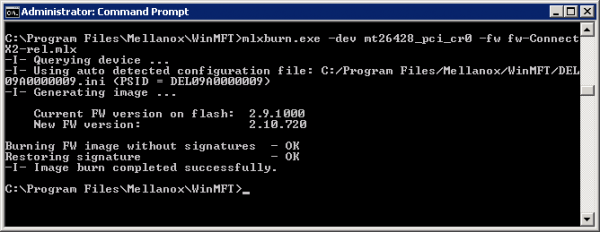

6) You are now ready to create the new firmware image and burn it to your card. These two steps take place with one command, and must be done on the server with your Infiniband card installed. Open a Windows prompt and navigate to the directory containing your downloaded files. From the prompt, enter the command mlxburn.exe -dev <PCI ID> -fw <firmware file path>, which in our case is mlxburn.exe -dev mt26428_pci_cr0 -fw fw-ConnectX2-rel.mlx.

When you run the command, mlxburn queries your card to find the Board ID. It then looks in your folder for a .ini file with that Board ID – and finds it since we created one. Mlxburn then uses both the .mlx firmware file and the .ini file (along with additional information from the card, I suspect) to create a firmware image and then burns the firmware to the card. When complete, reboot the server to make the new firmware take effect.
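Because a failed burn is no fun, I like the idea of checking that both files are in place before running mlxburn. The following sketch builds the command from this step and refuses to continue if the .mlx file or the matching .ini file is missing. The helper names are hypothetical, and the optional `-conf` argument reflects a reader comment further down this page reporting that the .ini file had to be named explicitly. By default the function only returns the command, so nothing is burned unless you pass `dry_run=False`.

```python
import subprocess
from pathlib import Path


def build_mlxburn_command(device, fw_file, conf_file=None):
    """Build the mlxburn command line used in this article as an argument list."""
    cmd = ["mlxburn.exe", "-dev", device, "-fw", str(fw_file)]
    if conf_file is not None:
        # Optional: name the .ini explicitly, as one reader reported needing.
        cmd += ["-conf", str(conf_file)]
    return cmd


def missing_files(fw_file, board_id):
    """Return the paths (firmware file, <board_id>.ini next to it) that do not exist."""
    fw = Path(fw_file)
    ini = fw.parent / f"{board_id}.ini"
    return [str(path) for path in (fw, ini) if not path.is_file()]


def burn(device, fw_file, board_id, dry_run=True):
    """Check the inputs, then return the command (dry run) or execute it."""
    missing = missing_files(fw_file, board_id)
    if missing:
        raise FileNotFoundError("missing: " + ", ".join(missing))
    cmd = build_mlxburn_command(device, fw_file)
    if not dry_run:
        subprocess.run(cmd, check=True)  # burns the card; needs WinMFT installed
    return cmd
```

For the Dell card in this article, `burn("mt26428_pci_cr0", "fw-ConnectX2-rel.mlx", "DEL09A0000009")` would return the same command shown in step six.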

Check Your Work

To verify the new firmware version, open a Windows command prompt and run the command flint -d <PCI ID> query (flint -d mt26428_pci_cr0 query in our example). Look at the firmware version to verify that it is 2.10.720, or whatever version of the .mlx file you used.

Now verify that your card is RDMA capable. Open a PowerShell window and enter Get-SmbServerNetworkInterface. As in the screenshot below, your Infiniband ports should now show as RDMA capable.

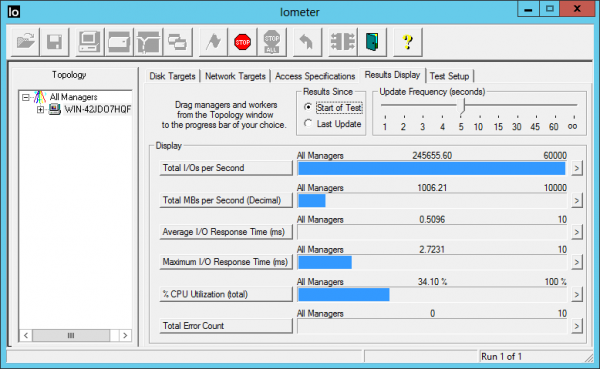

Finally, run another throughput test. This time we see a far more satisfying 3,279MB/s – 25.6 Gigabits of actual file sharing throughput.
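For anyone checking the math, here is a short sketch of the conversion behind the Gigabit figures in this article. They appear to come from multiplying MB/s by 8 and dividing by 1024, which is my inference from the numbers rather than something stated. For context, a QDR link signals at 40 Gbit/s but uses 8b/10b encoding, so the usable data rate is 32 Gbit/s.

```python
def mb_per_s_to_gbit(mb_per_s, mb_per_gb=1024):
    """Convert MB/s to Gbit/s; mb_per_gb=1024 reproduces the article's figures."""
    return mb_per_s * 8 / mb_per_gb


QDR_SIGNAL_GBIT = 40                        # raw QDR 4x signaling rate
QDR_DATA_GBIT = QDR_SIGNAL_GBIT * 8 / 10    # 8b/10b encoding leaves 32 Gbit/s

before = mb_per_s_to_gbit(1958)   # without RDMA
after = mb_per_s_to_gbit(3279)    # with RDMA

print(f"before: {before:.1f} Gbit/s, after: {after:.1f} Gbit/s")
print(f"share of the {QDR_DATA_GBIT:.0f} Gbit/s data rate: {after / QDR_DATA_GBIT:.0%}")
```

Passing `mb_per_gb=1000` gives the decimal convention instead, which yields slightly higher numbers for the same MB/s readings.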

Even more impressive is the incredibly low latency that IPoIB with RDMA gives us. An IOMeter test with 4KB random transfers shows just 0.51ms average latency and nearly 250,000 4KB random IOPS – from a circa 2009 Windows file server!

Additional Details

The file server for this article was a Dell PowerEdge C6100 XS23-TY3 node with dual Intel Xeon L5520 CPUs and a Dell QDR Infiniband mezzanine card (under $200 here.) I used a fresh Windows 2012 server installation with all default settings. For testing, I needed a file system faster than an Infiniband card – which isn’t easy. To achieve this, I ran the free StarWind RAM disk software on the file server and configured four 8GB RAM disk volumes. When tested locally with IOMeter, these RAM disks were capable of over 9GB/Second of throughput, which is more than enough to keep up with a single Infiniband card. These ultra-fast disk volumes were then configured as standard Windows shares.

The test client was another identical Dell C6100 node, connected to the server with a Mellanox Grid Director 4036 Infiniband switch. After mounting the four shared volumes across the IPoIB network, I ran the throughput and IOPS tests using IOMeter running on the client node. IOMeter was configured with four workers. The Access Specification for the throughput test was: 1MB transfers, 100% random and 100% reads, with all other settings left at their default values. For the IOPS test, the transfer size was 4KB. I used a test file size of 16,000,000 sectors on each of the four disks and a queue depth of 32. In IOMeter, you can test across multiple volumes by control-clicking on them to multi-select. All other settings and configurations were left at their defaults. For example, the Windows firewall was left running and large pages were not enabled.
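As a sanity check on these settings, the numbers can be worked through in a few lines. This sketch rests on three assumptions that are not stated in the article: IOMeter sectors are 512 bytes, each 8GB RAM disk is 8 GiB, and the four workers each keep 32 requests outstanding for 128 in total. Under those assumptions the test file fits on each RAM disk, and Little's law (outstanding requests divided by latency) lands very close to the measured IOPS.

```python
SECTOR_BYTES = 512                       # assumed IOMeter sector size
test_file_bytes = 16_000_000 * SECTOR_BYTES
ram_disk_bytes = 8 * 1024**3             # assumes "8GB" means 8 GiB

outstanding_ios = 4 * 32                 # assumes 4 workers x queue depth 32
latency_s = 0.51e-3                      # 0.51 ms average latency
predicted_iops = outstanding_ios / latency_s

iops_bandwidth_mib_s = 250_000 * 4096 / 1024**2   # data moved at 250,000 4KB IOPS

print(f"test file: {test_file_bytes / 1024**3:.2f} GiB per disk")
print(f"fits on RAM disk: {test_file_bytes < ram_disk_bytes}")
print(f"predicted IOPS: {predicted_iops:,.0f}")
print(f"bandwidth at 250,000 IOPS: {iops_bandwidth_mib_s:.0f} MiB/s")
```

If the workers were instead each driving all four disks, the outstanding request count would be higher and the prediction would no longer match, so the 128 figure is the reading that fits the reported results.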

What protocol were you using on top of the IPoIB? ISCSI?

Thanks

All tests were run using standard Windows file sharing, aka SMB3.

I am having some issues trying to get this to work. I am using the Mellanox VPI package and the latest firmware .mlx and .ini for the Dell C6100 on Windows Server 2012. It seems I am somehow limited to 10Gbps. I am using two C6100 Windows Server 2012 nodes with a direct connection and OpenSM (no switch) and the Dell mezzanine dual-port 40G Mellanox cards.

I see a warning in the eventlog:” The firmware version burned on the Mellanox ConnectX-2 IPoIB Adapter device is not up-to-date. CQ to EQ mapping feature is missing Hence, RSS feature will not function properly and will affect the NIC performance. Please burn a newer firmware and restart the Mellanox ConnectX device. For additional information on firmware burning process, please refer to Support information on http://mellanox.com”

Mst status and vstat show firmware 2.10.0720 and activespeed 10 Gbps. all the RDMA related settings are ok

Any ideas

nico,

If your connection is showing up as 10Gbit instead of 32Gbit, try this first:

In the Windows device manager, look in the “system devices” subfolder for an entry for your Mellanox card. Open this and find the “port protocol” tab. “HW Defaults” works perfectly for me on Windows, but you could also try forcing the card to IB mode with RoCE and Active ND both enabled.

nico,

If this doesn’t fix your problem, I suggest opening up a thread in the forums and/or visiting the Mellanox community site at http://community.mellanox.com/

Jeff, thanks for your help. I did a full power down reboot on both systems and now it is working spectacularly.. I have 2*2 ports connecting the nodes using fiber optic cables and I am getting 6300 MB/sec (!!!!) IOtest using 1MB block reads across 4 Ram Disks similar to the tests in the article – without any tweaks or changes in network settings…All this spending less than the price of a single 10GigE card and an evening of hacking :-)

I have an MHRH2A-XSR card that has firmware 2.9.1200 but there is no board ID when running the following command “flint -d mt26418_pci_cr0 query”. Is that normal and if so, how can I update the firmware without the board ID?

Hi Kevin,

That is not normal. Every board has a Board ID.

http://www.mellanox.com/page/firmware_table_ConnectX2IB

Maybe this is the PSID of the MHRH2A? “MT_0F90120008”

Great “how-to” dba!

on the burn command – it requires you to specify the configuration file with a “-conf” switch. So for your example it would look like this:

mlxburn.exe -dev mt26428_pci_cr0 -fw fw-ConnectX2-rel.mlx -conf DEL09A0000009.ini

Thanks again for sharing!

I purchased my MHQH19B-XTR cards on eBay and found they are IBM OEM cards. Initially I had problems updating the firmware; however, I found a command that lets you change the PSID as you burn. Console window output below:

C:\Program Files\Mellanox\WinMFT>flint -d mt26428_pci_cr0 --allow_psid_change -i fw-ConnectX2-rel-2_9_1000-MHQH19B-XTR_A1-A3.bin burn

Current FW version on flash: 2.8.600

New FW version: 2.9.1000

You are about to replace current PSID on flash – “IBM0D90000009” with a different PSID – “MT_0D90110009”.

Note: It is highly recommended not to change the PSID.

Do you want to continue ? (y/n) [n] : y

Burning FS2 FW image without signatures – OK

Restoring signature – OK

C:\Program Files\Mellanox\WinMFT>flint -d mt26428_pci_cr0 query

Image type: FS2

FW Version: 2.9.1000

Device ID: 26428

Description: Node Port1 Port2 Sys image

GUIDs: 0002c903004ae06a 0002c903004ae06b 0002c903004ae06c 0002c903004ae06d

MACs: 0002c94ae06a 0002c94ae06b

VSD:

PSID: MT_0D90110009

I then used the techniques described in this forum to bring cards up to FW 2.10.0720

They seem to function correctly now. I am still working on getting my IB switch working.

audigo

Hey guys,

The initial how-to wasn’t helpful to me, but audigo’s addition helped me a bit.

I have a couple of MNPA19s and they had HP firmware 2.9.1000 installed.

The firmware from the how-to is different and not suitable.

So I grabbed the Mellanox firmware from the website, but this is only version 2.9.1200.

I could burn the new firmware properly with

C:\Program Files\Mellanox\WinMFT>flint -d mt26448_pci_cr0 -allow_psid_change -i fw-ConnectX2-rel-2_9_1200-MNPA19_A1-A3-FlexBoot-3.3.400.bin burn

and change the HP PSID to a Mellanox one:

Note: Both the image file and the flash contain a ROM image.

Select “yes” to use the ROM from the given image file.

Select “no” to keep the existing ROM in the flash

Current ROM info on flash: type=PXE version=3.3.500 devid=26448 proto=VPI

ROM info from image file : type=PXE version=3.3.400 devid=26448 proto=VPI

Use the ROM from the image file ? (y/n) [n] : y

Current FW version on flash: 2.9.1000

New FW version: 2.9.1200

You are about to replace current PSID on flash – “HP_0F60000010” with a different PSID – “MT_0F60110010”.

Note: It is highly recommended not to change the PSID.

Do you want to continue ? (y/n) [n] : y

Burning FS2 FW image without signatures – OK

Restoring signature – OK

C:\Program Files\Mellanox\WinMFT>

But in the end it still leaves me with an older firmware.

Installing the latest driver package from Mellanox doesn’t help at all.

So what is it I’m missing here?

Hello, I have some MHQH19B-XTR cards. I would like to know if firmware version 2.10.720 is the latest, or if there is a newer version.

A question for Jeff: in your great article you wrote “firmware file named fw-ConnectX2-rel.mlx that I retrieved from the Mellanox 4.2 driver installer for Windows 2012”.

Could you explain where that file is inside the installer? Which folder?

Thanks

When you open the installer (MLNX_VPI_win8_x64_4_2.exe), the contents are expanded into a folder under %temp% (usually C:\Users\[user_name]\AppData\Local\Temp).

I’m trying to get the latest firmware from MLNX_VPI_WinOF-4_95_All_win2012R2_x64.exe. It does not seem to exist anywhere in C:\Users\[user_name]\AppData\Local\Temp when I run the installer. I also tried extracting all the contents from the installer using uniextract, but the firmware does not appear in the extracted files. Does the installer download the firmware when it detects the card? If so, do I have to use a non-OEM card to get the installer to download the right firmware to use with my OEM card? All I have are OEM cards. Any chance you could extract and post the latest firmware?

The FW link doesn’t work and I have issues extracting it from the OFED installers (can’t find the .mlx file!). Can you please update the download link @Patrick ?

Did you get this going? I have the exact same cards. HP firmware is non-existent so I also flashed and changed the PSID to go up to 2.9.1200 but that is still very old and long before RDMA support. I’d love to hear if you found a way. I used the custom firmware approach and my system was very unhappy,

I can no longer find the version 2.10.720 firmware file named fw-ConnectX2-rel.mlx. Can you please update the download link, @Patrick?

Only known location for the OFED 4.2 installation that I’m aware of: http://h20564.www2.hpe.com/hpsc/swd/public/detail?swItemId=MTX_7dff755cf65c45f3a4f639a4e1

I realize this is an old article, but maybe somebody still reads it.

I cannot get my Hands on the Firmware Version

firmware 2.10.0720

the link in the article is dead.

I have a connectx-2 , PSID: MT_0D90110009

running in Windows 2016

(IB mode does not work, but ETH does)

I tried to extract the .mlx file from the older Drivers packages at the mellanox site, but no luck. the .exe contains a .msi

and the MSI does not contain the mlx file

can anyone post the Firmware 2.10.0720 ? or a method on how to get it from mellanox?

thanks

Hi Chris, I found it on the ASUS website under the ESC4000_G2 Server. Do a search for “MLNX_IB_CX3_Windows_VER20140305.zip”

I’ve got two MNPH29D or ConnectX-2 EN cards and while I have 2.10.270 FW on them, I can’t get Windows SMB Direct to perform as expected. On dual 10GbE direct-attached connections I struggle to get more than 650MB/s to a RAM drive. The native IP gives better performance at 800MB/s. Disappointing really.

Here is a link for anyone that still needs the firmware https://mega.nz/#F!Y7gUATha!tyTecwHH036c15-tfQedwA

lookin for help. I have HP firmware 2.8 and cant find anything newer and im unable to figure this part out on making my own…

followed @Kaytro but I go to the mega link and I don’t know what im looking for as I don’t see an mlx extension…

I have a connectx not a 2 or 3 or anything…

you can see all my fun here…

https://hardforum.com/threads/starting-to-play-with-10gb-mellanox-and-need-help.1973101

appreciate any help…
